这段代码整理自:《动手深度学习》

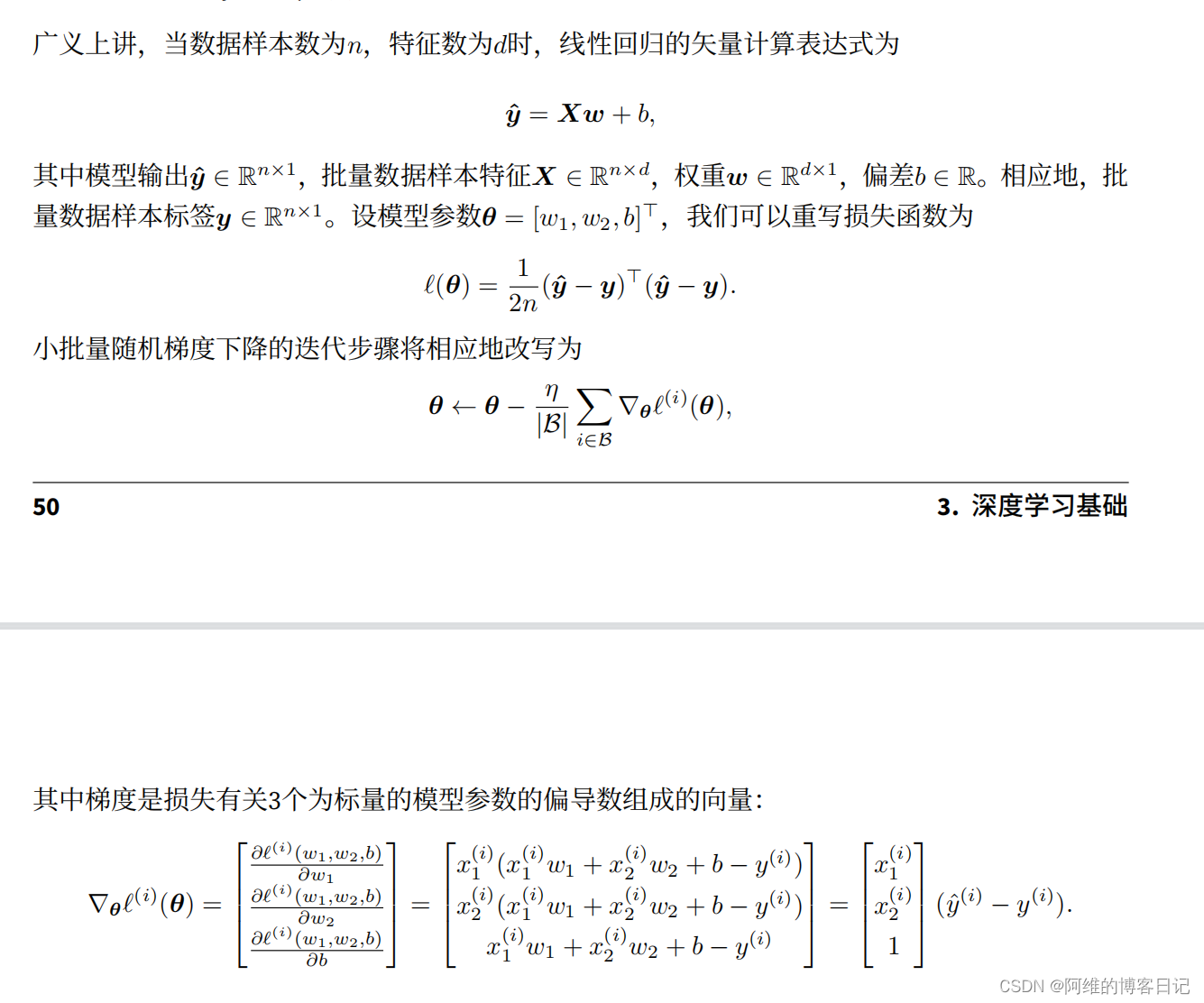

一,理论部分:

代码部分至今有一点不理解的是backward函数是怎么进行梯度累加的

# 随机梯度下降函数

def sgd(params, lr, batch_size):

for param in params:

param.data -= lr * param.grad / batch_size

图片其中β与上面sgd函数的batch_size是一个意思,代表同一个含义

lr表示η

二,代码

使用pycharm或者datashell来运行ipynb文件

%matplotlib inline

import torch

from IPython import display

from matplotlib import pyplot as plt

import numpy as np

import random

num_inputs = 2

num_examples = 1000

true_w = [2, -3.4]

true_b = 4.2

# num_examples行,num_inputs列的numpy数组,特征值

features = torch.from_numpy(np.random.normal(0, 1, (num_examples, num_inputs)))

labels = true_w[0] * features[:, 0] + true_w[1] * features[:, 1] + true_b

labels += torch.from_numpy(np.random.normal(0, 0.01, size=labels.size()))

print(features[0], labels[0])

def use_svg_display():

display.set_matplotlib_formats('svg')

def set_figsize(figsize=(3.5, 2.5)):

use_svg_display()

plt.rcParams['figure.figsize'] = figsize

set_figsize()

# 0.5是代表一个点的大小

# features[:, 1].numpy()是所有的特征x1,x2

# labels.numpy()是所有的预测结果

plt.scatter(features[:, 1].numpy(), labels.numpy(), 0.5)

def data_iter(batch_size, features, labels):

num_examples = len(features)

indices = list(range(num_examples))

# 把indices列表彻底的打乱顺序,改变indices列表

random.shuffle(indices)

for i in range(0, num_examples, batch_size):

j = torch.LongTensor(indices[i:min(i + batch_size, num_examples)])

yield features.index_select(0, j), labels.index_select(0, j)

batch_size = 10

for x, y in data_iter(batch_size, features, labels):

print(x, y)

break

# 随机产生的符合正态分布的权重w数组

w = torch.tensor(np.random.normal(0, 0.01, (num_inputs, 1)), dtype=torch.double)

b = torch.zeros(1, dtype=torch.double)

w.requires_grad_(requires_grad=True)

b.requires_grad_(requires_grad=True)

# 矩阵相乘

def linreg(X, w, b):

return torch.mm(X, w) + b

# 平方误差函数

def squared_loss(y_hat, y):

#view与reshape函数基本等价

return (y_hat - y.view(y_hat.size())) ** 2 / 2

# 随机梯度下降函数

def sgd(params, lr, batch_size):

for param in params:

param.data -= lr * param.grad / batch_size

lr = 0.03

num_epochs = 3

net = linreg

loss = squared_loss

# 训练模型需要num_epochs个迭代周期

for epoch in range(num_epochs):

for X, y in data_iter(batch_size, features, labels):

l = loss(net(X, w, b), y).sum()

l.backward()

sgd([w, b], lr, batch_size)

#w,b都进行梯度归零,防止梯度累加

w.grad.data.zero_()

b.grad.data.zero_()

train_l = loss(net(features, w, b), labels)

print('epoch %d ,loss %f' % (epoch + 1, train_l.mean().item()))

print('true_w = ', true_w)

print('w = ', w)

print('true_b = ', true_b)

print('b = ', b)

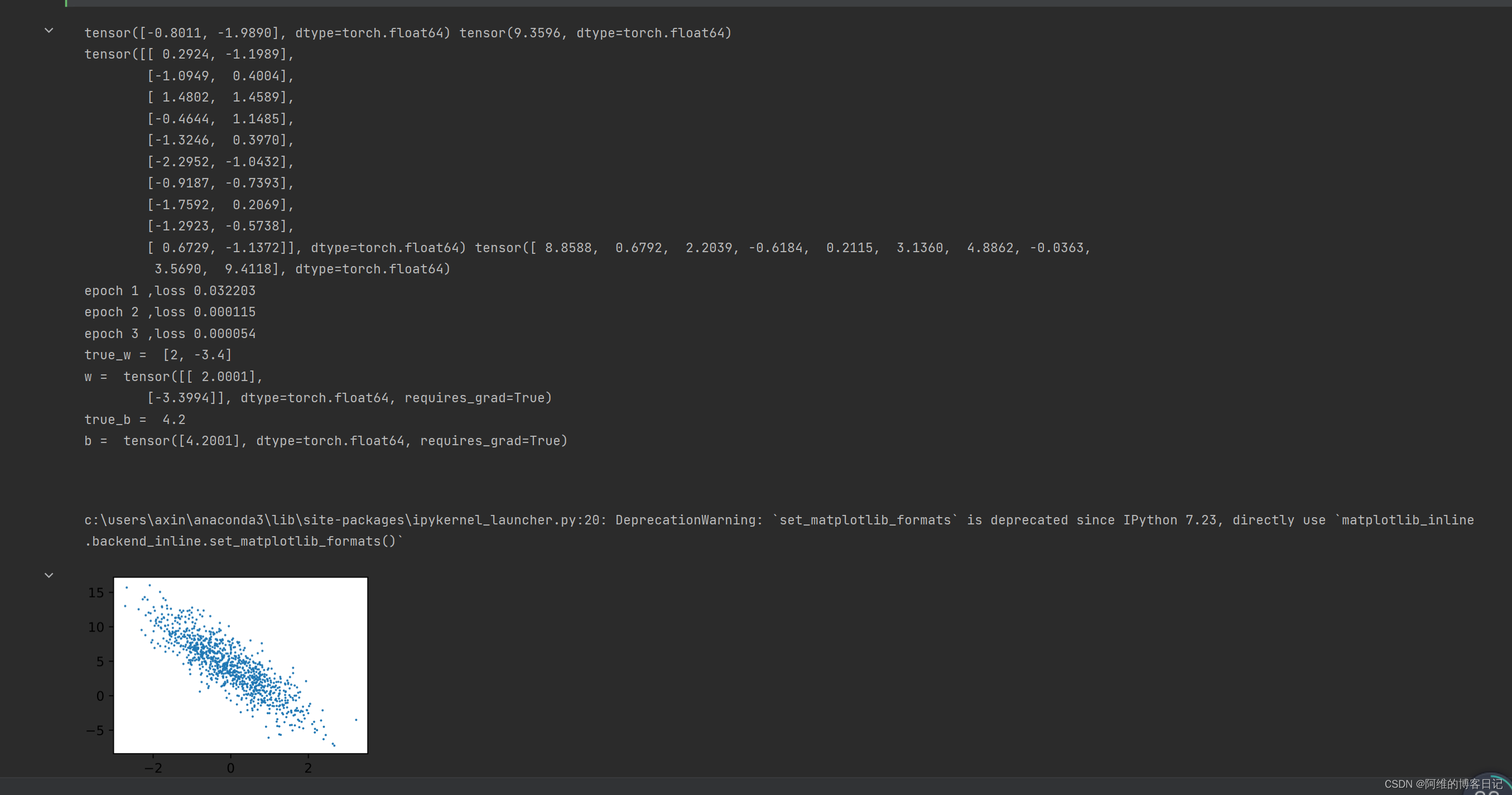

三,运行结果

如图所示:

8638

8638

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?