概述

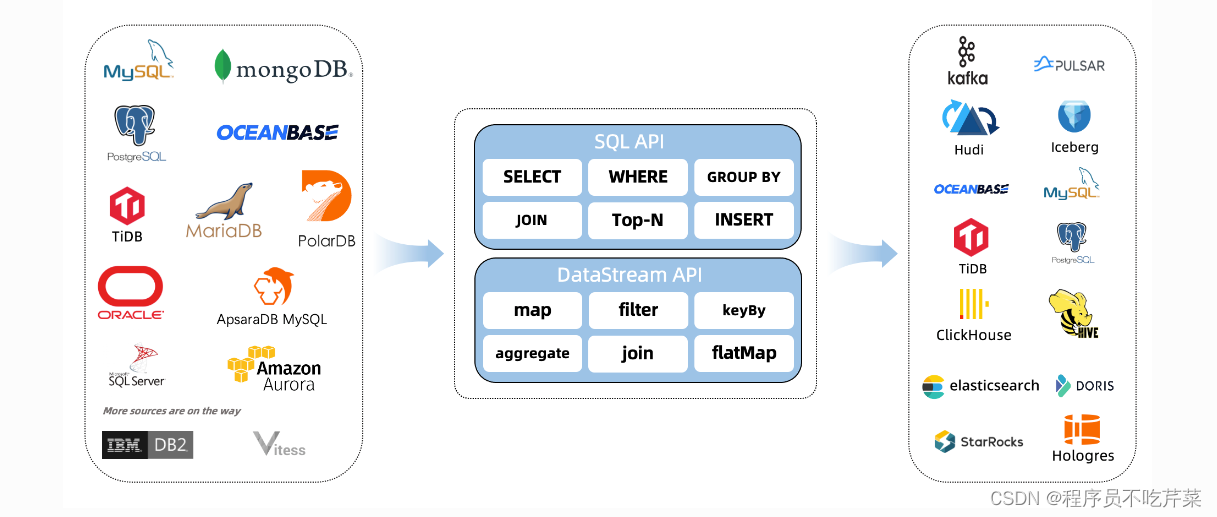

CDC Connectors for Apache Flink 是一组用于Apache Flink 的源连接器,使用变更数据捕获 (CDC) 从不同数据库获取变更。用于 Apache Flink 的 CDC 连接器将 Debezium 集成为捕获数据更改的引擎。所以它可以充分发挥 Debezium 的能力。

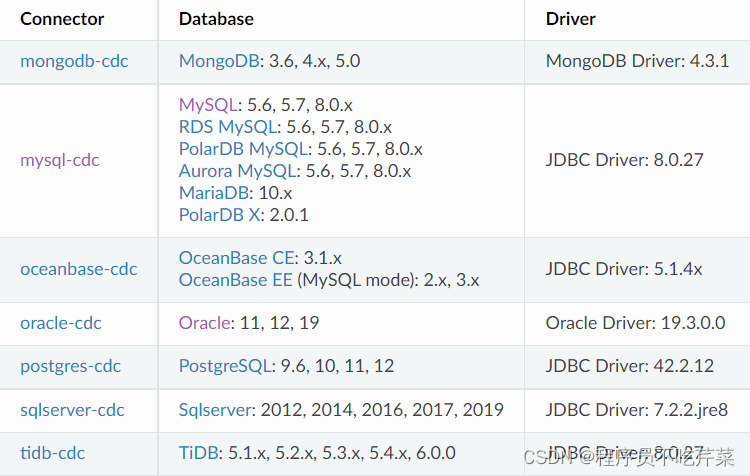

支持的连接器

支持的 flink

| flink cdc version | flink version |

|---|---|

| 1.0.0 | 1.11.* |

| 1.1.0 | 1.11.* |

| 1.2.0 | 1.12.* |

| 1.3.0 | 1.12.* |

| 1.4.0 | 1.13.* |

| 2.0.* | 1.13.* |

| 2.1.* | 1.13.* |

| 2.2.* | 1.13.*, 1.14.* |

特色

支持读取数据库快照,即使发生故障也能继续读取binlog,一次处理。

DataStream API 的 CDC 连接器,用户可以在单个作业中使用多个数据库和表的更改,而无需部署 Debezium 和 Kafka。

Table/SQL API 的 CDC 连接器,用户可以使用 SQL DDL 创建 CDC 源来监控单个表的更改。

Table SQL API

-- creates a mysql cdc table source

CREATE TABLE mysql_binlog (

id INT NOT NULL,

name STRING,

description STRING,

weight DECIMAL(10,3),

PRIMARY KEY(id) NOT ENFORCED

) WITH (

'connector' = 'mysql-cdc',

'hostname' = 'localhost',

'port' = '3306',

'username' = 'flinkuser',

'password' = 'flinkpw',

'database-name' = 'inventory',

'table-name' = 'products'

);

-- read snapshot and binlog data from mysql, and do some transformation, and show on the client

SELECT id, UPPER(name), description, weight FROM mysql_binlog;

DataStream API

<dependency>

<groupId>com.ververica</groupId>

<!-- add the dependency matching your database -->

<artifactId>flink-connector-mysql-cdc</artifactId>

<!-- The dependency is available only for stable releases, SNAPSHOT dependency need build by yourself. -->

<version>2.3-SNAPSHOT</version>

</dependency>

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import com.ververica.cdc.debezium.JsonDebeziumDeserializationSchema;

import com.ververica.cdc.connectors.mysql.source.MySqlSource;

public class MySqlBinlogSourceExample {

public static void main(String[] args) throws Exception {

MySqlSource<String> mySqlSource = MySqlSource.<String>builder()

.hostname("yourHostname")

.port(yourPort)

.databaseList("yourDatabaseName") // set captured database

.tableList("yourDatabaseName.yourTableName") // set captured table

.username("yourUsername")

.password("yourPassword")

.deserializer(new JsonDebeziumDeserializationSchema()) // converts SourceRecord to JSON String

.build();

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// enable checkpoint

env.enableCheckpointing(3000);

env

.fromSource(mySqlSource, WatermarkStrategy.noWatermarks(), "MySQL Source")

// set 4 parallel source tasks

.setParallelism(4)

.print().setParallelism(1); // use parallelism 1 for sink to keep message ordering

env.execute("Print MySQL Snapshot + Binlog");

}

}

数据

{

"before": {

"id": 111,

"name": "scooter",

"description": "Big 2-wheel scooter",

"weight": 5.18

},

"after": {

"id": 111,

"name": "scooter",

"description": "Big 2-wheel scooter",

"weight": 5.15

},

"source": {...},

"op": "u", // the operation type, "u" means this this is an update event

"ts_ms": 1589362330904, // the time at which the connector processed the event

"transaction": null

}

CDC Connectors for Apache Flink 是用于 Apache Flink 的源连接器,利用变更数据捕获从不同数据库获取变更,集成 Debezium 发挥其能力。它支持读取数据库快照,有 DataStream API 和 Table/SQL API 两种方式,可满足不同使用场景。

CDC Connectors for Apache Flink 是用于 Apache Flink 的源连接器,利用变更数据捕获从不同数据库获取变更,集成 Debezium 发挥其能力。它支持读取数据库快照,有 DataStream API 和 Table/SQL API 两种方式,可满足不同使用场景。

2208

2208

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?