Notes on installing Kubernetes with the sealos script

References:

https://kuboard.cn/install/install-dashboard.html

https://github.com/fanux/sealos

Prerequisites: every node must have a unique hostname, and the clocks on all nodes must be synchronized.

hostnamectl set-hostname xxx

yum install -y chrony

systemctl enable --now chronyd

timedatectl set-timezone Asia/Shanghai
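The hostname requirement is easy to check before running the installer. The sketch below is only an illustration: `find_duplicate_hostnames` is a hypothetical helper and the sample names are made up. In practice the list could be collected by running `hostname` on each node.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads one hostname per line on stdin and prints
# every name that occurs more than once (empty output means all are unique).
find_duplicate_hostnames() {
    sort | uniq -d
}

# Example with made-up names; replace them with the real `hostname` output of each node.
printf '%s\n' master1 master2 node1 master2 | find_duplicate_hostnames
```

For the clock requirement, running `chronyc tracking` on each node reports the current offset from the time source.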

Once the servers are ready, run the following commands on any one of them.

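# Download and install sealos. sealos is a single Go binary, so downloading it and moving it into a bin directory is enough; it can also be downloaded from the release page.
$ wget -c https://sealyun.oss-cn-beijing.aliyuncs.com/latest/sealos && \
    chmod +x sealos && mv sealos /usr/bin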

# Download the offline resource package

$ wget -c https://sealyun.oss-cn-beijing.aliyuncs.com/7b6af025d4884fdd5cd51a674994359c-1.18.0/kube1.18.0.tar.gz

# Install a Kubernetes cluster with three masters
$ sealos init --passwd '123456' \
    --master 192.168.0.2 --master 192.168.0.3 --master 192.168.0.4 \
    --node 192.168.0.5 \
    --pkg-url /root/kube1.18.0.tar.gz \
    --version v1.18.0

# Single-master variant (one master, one node, v1.18.1 package)
sealos init --passwd '*963..' \
    --master 192.168.9.161 \
    --node 192.168.9.162 \
    --pkg-url /root/kube1.18.1.tar.gz \
    --version v1.18.1

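Parameters

| Parameter | Meaning | Example |
|---|---|---|
| passwd | Server password | 123456 |
| master | IP address of a k8s master node | 192.168.0.2 |
| node | IP address of a k8s worker node | 192.168.0.5 |
| pkg-url | Location of the offline resource package: a local path or a remote URL | /root/kube1.18.0.tar.gz |
| version | Version matching the resource package | v1.18.0 |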
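Because `--master` and `--node` are repeated once per IP address, the command grows with the cluster. The sketch below assembles it from lists; `build_sealos_init` is a hypothetical helper that only prints the command and uses only the flags shown in these notes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints (does not run) a sealos init command.
# Usage: build_sealos_init PASSWD PKG_URL VERSION "MASTER_IPS" "NODE_IPS"
# The two IP lists are space-separated strings.
build_sealos_init() {
    local passwd="$1"
    local pkg_url="$2"
    local version="$3"
    local masters="$4"
    local nodes="$5"
    local cmd="sealos init --passwd '${passwd}'"
    local ip
    # Word splitting on the unquoted lists is intentional here.
    for ip in $masters; do cmd="$cmd --master $ip"; done
    for ip in $nodes; do cmd="$cmd --node $ip"; done
    cmd="$cmd --pkg-url $pkg_url --version $version"
    printf '%s\n' "$cmd"
}

# Reproduces the three-master example from these notes.
build_sealos_init 123456 /root/kube1.18.0.tar.gz v1.18.0 \
    "192.168.0.2 192.168.0.3 192.168.0.4" "192.168.0.5"
```

Printing the command instead of running it leaves room to review it first, which is useful because the password appears on the command line.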

Command auto-completion

yum install -y bash-completion

[admin@minikube k8s]$ find / -name bash_completion

/usr/share/bash-completion

/usr/share/bash-completion/bash_completion

/usr/share/bash-completion/completions

/usr/share/bash-completion/helpers

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

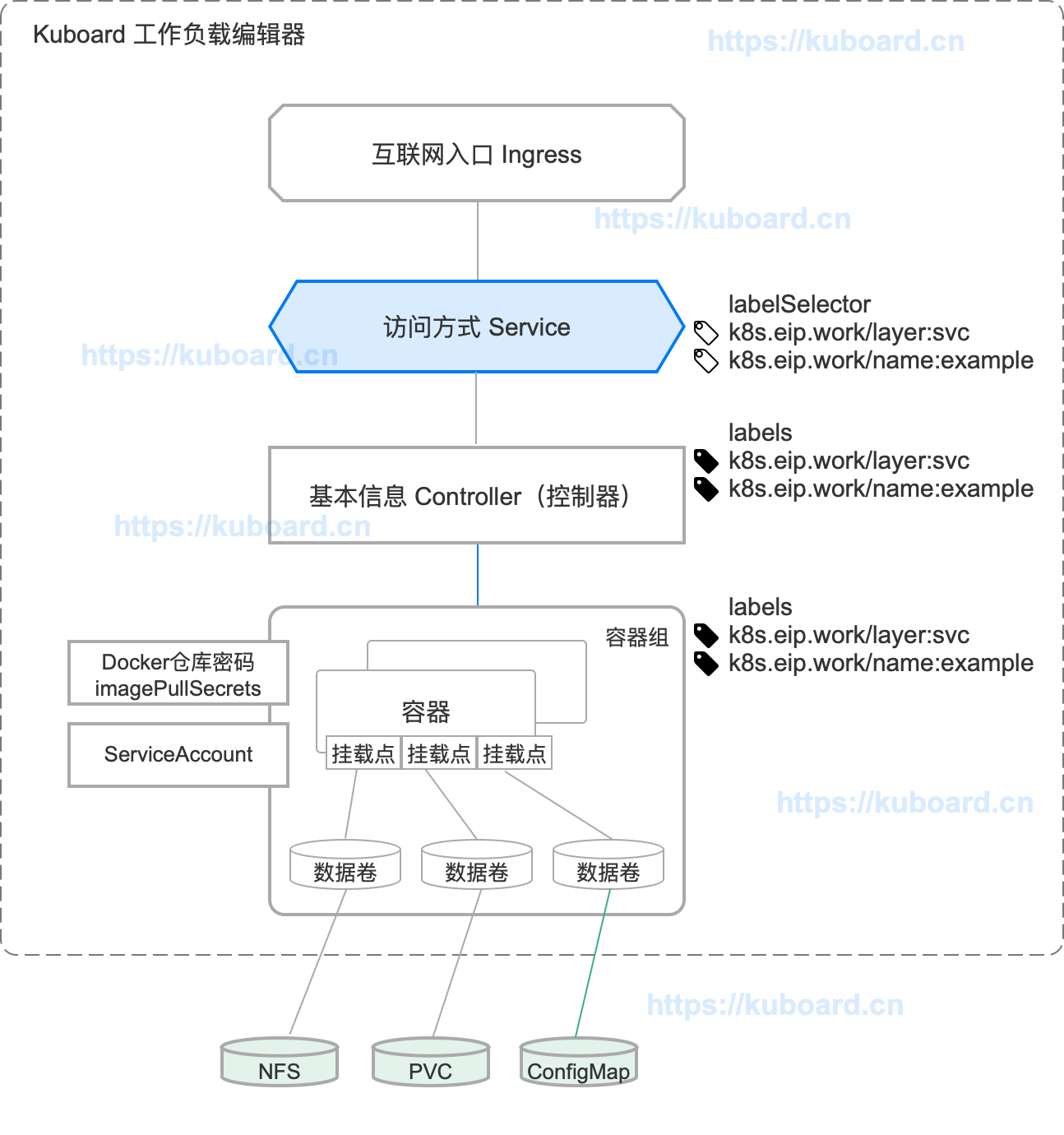

Installing Kuboard

Pull the Kuboard image

docker pull eipwork/kuboard:latest

Create kuboard-offline.yaml

#vim kuboard-offline.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kuboard
  namespace: kube-system
  annotations:
    k8s.kuboard.cn/displayName: kuboard
    k8s.kuboard.cn/ingress: "true"
    k8s.kuboard.cn/service: NodePort
    k8s.kuboard.cn/workload: kuboard
  labels:
    k8s.kuboard.cn/layer: monitor
    k8s.kuboard.cn/name: kuboard
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s.kuboard.cn/layer: monitor
      k8s.kuboard.cn/name: kuboard
  template:
    metadata:
      labels:
        k8s.kuboard.cn/layer: monitor
        k8s.kuboard.cn/name: kuboard
    spec:
      nodeName: your-node-name #node name
      containers:
      - name: kuboard
        image: eipwork/kuboard:latest
        imagePullPolicy: IfNotPresent
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
---
apiVersion: v1
kind: Service
metadata:
  name: kuboard
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    targetPort: 80
    nodePort: 32567
  selector:
    k8s.kuboard.cn/layer: monitor
    k8s.kuboard.cn/name: kuboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: kuboard-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kuboard-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: kuboard-user
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: kuboard-viewer
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kuboard-viewer
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: view
subjects:
- kind: ServiceAccount
  name: kuboard-viewer
  namespace: kube-system
# ---
# apiVersion: extensions/v1beta1
# kind: Ingress
# metadata:
#   name: kuboard
#   namespace: kube-system
#   annotations:
#     k8s.kuboard.cn/displayName: kuboard
#     k8s.kuboard.cn/workload: kuboard
#     nginx.org/websocket-services: "kuboard"
#     nginx.com/sticky-cookie-services: "serviceName=kuboard srv_id expires=1h path=/"
# spec:
#   rules:
#   - host: kuboard.yourdomain.com
#     http:
#       paths:
#       - path: /
#         backend:
#           serviceName: kuboard
#           servicePort: http

Run the following command to create Kuboard

kubectl apply -f kuboard-offline.yaml

Log in to the console with a token

For administrator access, run the following command; it prints the token.

echo $(kubectl -n kube-system get secret $(kubectl -n kube-system get secret | grep kuboard-user | awk '{print $1}') -o go-template='{{.data.token}}' | base64 -d)
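The token is stored base64-encoded in the Secret, which is why the command above pipes it through `base64 -d`. The sketch below illustrates only that decoding step with a made-up value; `decode_secret_field` is a hypothetical helper, not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: decodes one base64-encoded Secret field read from stdin.
decode_secret_field() {
    base64 -d
}

# Made-up sample standing in for the {{.data.token}} value; a real token is much longer.
printf '%s' 'ZXhhbXBsZS10b2tlbg==' | decode_secret_field
echo   # add the trailing newline that the decoded value lacks
```

Pasting the still-encoded value into the login form is an easy mistake to make, so it helps to confirm that the decode step actually ran.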

Open the worker node address in a browser, for example http://192.168.9.162:32567/

To look up the NodePort:

[root@master k8s]# kubectl get svc -n kube-system
NAME       TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                  AGE
kube-dns   ClusterIP   10.96.0.10     <none>        53/UDP,53/TCP,9153/TCP   5h26m
kuboard    NodePort    10.97.75.218   <none>        80:32567/TCP             46m

NFS, PV, and PVC

1. Install the NFS service on one server

# Install NFS on the host
yum install nfs-utils

# Edit /etc/exports
[root@192-136 ~]# cat /etc/exports
/home/k8s 192.168.9.0/24(sync,rw,no_root_squash)

# Start the services
systemctl start rpcbind
systemctl start nfs

# Verify
rpcinfo -p
showmount -e 192.168.9.136

# Note: turn off the firewall

# Install on every worker node
yum install nfs-utils

2. PV

[root@master k8s]# cat mypv.yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv001
spec:
  capacity:
    storage: 10Gi
  accessModes:
  - ReadWriteMany #can be mounted read-write by many nodes
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /home/k8s/
    server: 192.168.9.136
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: php-pv
  labels:
    type: php-pv
spec:
  capacity:
    storage: 10Gi
  accessModes:
  - ReadWriteMany #can be mounted read-write by many nodes
  persistentVolumeReclaimPolicy: Recycle
  nfs:
    path: /home/k8s/
    server: 192.168.9.136
    readOnly: false #false mounts the export read-write; true would make it read-only

# Create the PVs
kubectl create -f mypv.yaml

# Update
kubectl apply -f mypv.yaml

3. PVC

[root@master k8s]# cat mypvc.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: myclaim
spec:
  accessModes:
  - ReadWriteMany
  resources: #resources
    requests: #requested amount
      storage: 3Gi

# Check
[root@master k8s]# kubectl get pvc
NAME      STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
myclaim   Bound    pv001    5Gi        RWX                           5m35s

4. Create a Deployment that uses the PVC

[root@master k8s]# cat pvcpod.yaml
apiVersion: apps/v1 #depends on the cluster version; run kubectl api-versions to list what the cluster supports
kind: Deployment #type of this resource; here a Deployment
metadata: #basic attributes and information about the Deployment
  name: nginx-deployment #name of the Deployment
  labels: #labels locate one or more resources; keys and values are free-form and several can be set (no need to understand this yet)
    app: nginx #give the Deployment the label app=nginx
spec: #desired state of the Deployment
  replicas: 2 #run two replicas of the application
  selector: #label selector, works together with the Pod labels below (no need to understand this yet)
    matchLabels: #select resources labelled app: nginx
      app: nginx
  template: #template for the Pods this Deployment creates
    metadata: #Pod metadata
      labels: #Pod labels; the selector above matches Pods labelled app: nginx
        app: nginx
    spec: #what the Pod should run
      containers: #containers to create, the same concept as a Docker container
      - name: nginx #container name
        image: nginx:1.7.9 #create the container from nginx:1.7.9, which serves on port 80 by default
        imagePullPolicy: Always
        volumeMounts:
        - mountPath: "/usr/share/nginx/html/"
          name: html
        ports:
        - containerPort: 80
      volumes:
      - name: html
        persistentVolumeClaim:
          claimName: myclaim #must match the PVC name

5. Create the Service

[root@master k8s]# cat nginx-service.yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-service #name of the Service
  labels: #labels of the Service itself
    app: nginx #give the Service the label app=nginx
spec: #defines how the Service selects Pods and how it is reached
  selector: #label selector
    app: nginx #select Pods labelled app: nginx
  ports:
  - name: nginx-port #port name
    protocol: TCP #protocol, TCP or UDP
    port: 80 #other Pods in the cluster reach the Service on port 80
    nodePort: 32600 #the Service is reachable on port 32600 of any node
    targetPort: 80 #forward requests to port 80 of the matching Pods
  type: NodePort #Service type: ClusterIP, NodePort, or LoadBalancer
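The nodePort value has to fall inside the cluster's NodePort range, which is 30000-32767 unless the API server was started with a different `--service-node-port-range`. A minimal sketch of that check, assuming the default range; `is_valid_node_port` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the port lies in the default NodePort
# range 30000-32767 (assumes --service-node-port-range was not changed).
is_valid_node_port() {
    port="$1"
    case "$port" in
        ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
    esac
    [ "$port" -ge 30000 ] && [ "$port" -le 32767 ]
}

# 32600 and 32567 are the nodePort values used in these notes; 80 is a counterexample.
for p in 32600 32567 80; do
    if is_valid_node_port "$p"; then
        echo "$p: inside the default NodePort range"
    else
        echo "$p: outside the default NodePort range"
    fi
done
```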

6. Verify

On the NFS server, create a file, for example echo data > /home/k8s/1.html, then open http://192.168.9.161:32600/1.html in a browser to verify.

![image 2](https://img-blog.csdnimg.cn/20201119112348485.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3dlaXhpbl80OTEzODY3MQ==,size_16,color_FFFFFF,t_70#pic_center)

7. Open question: after running delete pod --all, the Service could no longer match the Pods.

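8. Canary (gray) release

Create a second Deployment under a different name, starting with a single replica (one ReplicaSet); once it runs without problems, delete the old Deployment.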
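If the new Deployment's Pods carry the same label that the Service selects on (app: nginx in the manifests above), the Service spreads requests across old and new Pods, so the canary's share of traffic is roughly its share of the replicas. A back-of-the-envelope sketch; `canary_share_percent` is a hypothetical helper, and real traffic is only approximately evenly distributed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: approximate percentage of requests that reach the canary,
# assuming the Service balances evenly over all matching Pods.
# Usage: canary_share_percent STABLE_REPLICAS CANARY_REPLICAS
canary_share_percent() {
    stable="$1"
    canary="$2"
    echo $(( canary * 100 / (stable + canary) ))   # integer division, rounds down
}

# Two old replicas (as in nginx-deployment) plus one canary replica.
canary_share_percent 2 1   # prints 33
```

Scaling the old Deployment down while scaling the new one up shifts this share gradually instead of switching all traffic at once.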

9. Service
