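Chunked File Upload

Project repository

Result

Backend

The backend uses the open-source component x-file-storage (source on Gitee; see also the component's official website).

Code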

@Autowired
private FileStorageService fileStorageService;

/**
 * Initializes the multipart upload.
 *
 * @param fileUploadInfo upload parameters sent by the front end
 * @return the FileInfo describing the newly created multipart upload
 */
@GetMapping(value = "/uploader/chunk")
public FileInfo init(FileUploadInfo fileUploadInfo) {
    return fileStorageService.initiateMultipartUpload()
            .setSize(fileUploadInfo.getTotalSize())
            .setOriginalFilename(fileUploadInfo.getFilename())
            .init();
}

/**
 * Uploads one part (chunk) of the file.
 *
 * @param fileUploadInfo chunk metadata together with the chunk content
 */
@PostMapping(value = "/uploader/chunk")
public void bigFile(FileUploadInfo fileUploadInfo) {
    // Look up the multipart upload by the url returned from init
    final FileInfo fileInfo = fileStorageService.getFileInfoByUrl(fileUploadInfo.getUrl());
    final MultipartFileWrapper fileWrapper = new MultipartFileWrapper(
            fileUploadInfo.getUpfile(),
            fileUploadInfo.getUpfile().getOriginalFilename(),
            fileUploadInfo.getUpfile().getContentType(),
            fileUploadInfo.getUpfile().getSize());
    fileStorageService.uploadPart(fileInfo, fileUploadInfo.getChunkNumber(),
            fileWrapper, fileUploadInfo.getCurrentChunkSize().longValue()).upload();
}

/**
 * Merges the uploaded parts into the final file.
 *
 * @param url the url that identifies the multipart upload
 * @return the FileInfo of the completed file
 */
@PostMapping(value = "/uploader/merge")
public FileInfo merge(@RequestParam String url) {
    final FileInfo fileInfo = fileStorageService.getFileInfoByUrl(url);
    return fileStorageService.completeMultipartUpload(fileInfo).complete();
}
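Read together, the three endpoints above suggest the following request order for one file: a GET to `/uploader/chunk` that creates the multipart upload and returns a `FileInfo` whose `url` identifies it, one POST to `/uploader/chunk` per part, and a final POST to `/uploader/merge`. The sketch below only builds that plan as data and sends nothing. `buildUploadPlan` and the shape of its result are illustrative names, not part of x-file-storage or of this project, and `url` stands for whatever value the init request returned.

```javascript
// Illustrative only: lists the requests a client would send to the
// controller above. It performs no network I/O.
function buildUploadPlan(url, totalChunks) {
  if (!Number.isInteger(totalChunks) || totalChunks < 1) {
    throw new RangeError('totalChunks must be a positive integer');
  }
  // Step 1: initialize the multipart upload (the response carries the url).
  const plan = [{ method: 'GET', path: '/uploader/chunk', purpose: 'init' }];
  // Step 2: one POST per part; part numbers are 1-based.
  for (let chunkNumber = 1; chunkNumber <= totalChunks; chunkNumber++) {
    plan.push({
      method: 'POST',
      path: '/uploader/chunk',
      purpose: 'part',
      params: { url, chunkNumber },
    });
  }
  // Step 3: ask the server to merge the parts.
  plan.push({ method: 'POST', path: '/uploader/merge', purpose: 'merge', params: { url } });
  return plan;
}
```

For a file split into three parts, the plan contains five requests: one init, three parts, one merge.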

@Data
public class FileUploadInfo {

    /**
     * Key that identifies the file being operated on
     */
    private String url;

    private Integer chunkNumber;

    private Integer chunkSize;

    private Integer currentChunkSize;

    private Long totalSize;

    private String identifier;

    private String filename;

    private String relativePath;

    private Integer totalChunks;

    private MultipartFile upfile;
}
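The chunk-related fields of `FileUploadInfo` (`chunkNumber`, `chunkSize`, `currentChunkSize`, `totalSize`, `totalChunks`) are filled in by the uploader on the front end. The sketch below shows how they relate, assuming the uploader behaves the way simple-uploader is commonly described with `forceChunkSize: false`: the chunk count is rounded down and the last chunk absorbs the remainder, so it can be larger than `chunkSize`. `planChunks` is a hypothetical helper written for this illustration.

```javascript
// Sketch of how the chunk fields of FileUploadInfo relate to each other.
// Assumption: with forceChunkSize = false the chunk count is rounded down,
// so the last chunk carries the remainder and may exceed chunkSize.
function planChunks(totalSize, chunkSize, forceChunkSize = false) {
  if (!Number.isFinite(totalSize) || totalSize < 0) {
    throw new RangeError('totalSize must be a non-negative number');
  }
  if (!Number.isFinite(chunkSize) || chunkSize <= 0) {
    throw new RangeError('chunkSize must be a positive number');
  }
  const round = forceChunkSize ? Math.ceil : Math.floor;
  const totalChunks = Math.max(round(totalSize / chunkSize), 1);
  const chunks = [];
  for (let i = 0; i < totalChunks; i++) {
    const start = i * chunkSize;
    // The last chunk always ends at the end of the file.
    const end = i === totalChunks - 1 ? totalSize : start + chunkSize;
    chunks.push({
      chunkNumber: i + 1, // 1-based, as sent to the backend
      chunkSize,
      currentChunkSize: end - start,
      totalSize,
      totalChunks,
    });
  }
  return chunks;
}
```

With a 12 MiB file and a 5 MiB `chunkSize`, this yields two chunks of 5 MiB and 7 MiB, or three chunks of 5, 5 and 2 MiB when `forceChunkSize` is `true`. Rounding down keeps every part at or above `chunkSize`, which matters because S3-compatible stores generally require each part except the last to be at least 5 MiB.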

Configuration

docker pull minio/minio

mkdir -p /opt/minio/{data,config}

docker run \
  -p 9000:9000 \
  -p 9090:9090 \
  --name minio \
  -e "MINIO_ROOT_USER=minio" \
  -e "MINIO_ROOT_PASSWORD=minio123456" \
  -v /opt/minio/data:/data \
  -v /opt/minio/config:/root/.minio \
  minio/minio server /data --console-address ":9090" --address ":9000"

server:
  port: 8000
spring:
  datasource:
    url: jdbc:h2:file:./data/test_file/file;AUTO_SERVER=TRUE;MODE=MYSQL;DB_CLOSE_DELAY=-1;AUTO_RECONNECT=TRUE
    username: root
    password: 123456
    driver-class-name: org.h2.Driver
  servlet:
    multipart:
      max-file-size: 100MB
      max-request-size: 201MB
dromara:
  x-file-storage:
    default-platform: minio
    minio:
      - platform: minio
        enable-storage: true
        access-key: zygoJJAKsIZHyXkdKfa4
        secret-key: RBd3Coi6LJnJPEhv6IEUEEnhm0Mums4VMi1bS6SK
        end-point: http://192.168.64.129:9000
        bucket-name: test
        domain:
        base-path:

Frontend

Code

<template>
  <div>
    <!-- Uploader -->
    <uploader
      ref="uploaderRef"
      :options="options"
      :autoStart="false"
      :file-status-text="fileStatusText"
      class="uploader-ui"
      @file-added="onFileAdded"
      @file-success="onFileSuccess"
      @file-progress="onFileProgress"
      @file-error="onFileError"
    >
      <uploader-unsupport></uploader-unsupport>
      <uploader-drop>
        <div>
          <uploader-btn id="global-uploader-btn" ref="uploadBtn" :attrs="attrs">
            Select file
            <el-icon><Upload /></el-icon>
          </uploader-btn>
        </div>
      </uploader-drop>
      <uploader-list></uploader-list>
    </uploader>
  </div>
</template>

<script setup>
import { ACCEPT_CONFIG } from '@/config/accept.ts';
import { onMounted, reactive, ref } from 'vue';
import SparkMD5 from 'spark-md5';
import { mergeFile } from '@/api/fileUpload/index';
import { ElMessage } from 'element-plus';

const options = reactive({
  // Target upload URL (POST by default); import.meta.env.VITE_API_URL = api
  // target ==> http://localhost:6666/api/uploader/chunk
  target: import.meta.env.VITE_API_URL + '/uploader/chunk',
  query: {},
  headers: {
    // Carry the token here; adjust to each project's needs
    token: "your_token",
  },
  // Chunk size in bytes (5 MiB)
  chunkSize: 5 * 1024 * 1024,
  url: '',
  // Name of the request parameter that carries the file content; it must match
  // the MultipartFile field on the backend (the default name is "file")
  fileParameterName: 'upfile',
  // Maximum number of automatic retries after a chunk fails
  maxChunkRetries: 3,
  // Whether to check chunks with the server first, via a GET request to the same target URL
  testChunks: true,
  // Decides from the server response whether a chunk has already been uploaded
  checkChunkUploadedByResponse: function (chunk, response_msg) {
    let objMessage = JSON.parse(response_msg);
    // Remember the url returned by the init request for later chunk and merge calls
    if (!options.url) {
      options.url = objMessage?.url;
    }
    if (objMessage?.attr?.skipUpload) {
      return true;
    }
    return (objMessage.uploadedChunks || []).indexOf(chunk.offset + 1) >= 0;
  },
  // Attach the url to every chunk request
  processParams: function (params, file) {
    if (!params.url) {
      params.url = options.url;
    }
    return params;
  },
});

const attrs = reactive({
  accept: ACCEPT_CONFIG.getAll(),
});

const fileStatusText = reactive({
  success: 'Upload succeeded',
  error: 'Upload failed',
  uploading: 'Uploading',
  paused: 'Paused',
  waiting: 'Waiting',
});

onMounted(() => {});

function onFileAdded(file) {
  computeMD5(file);
}

function onFileSuccess(rootFile, file, response, chunk) {
  // refProjectId is a reserved field that can link the attachment to its owner,
  // for example an archive or a project
  file.refProjectId = '';
  mergeFile(options.url)
    .then((responseData) => {
      if (responseData.data.code === 415) {
        console.log('Merge did not succeed, result code: ' + responseData.data.code);
      } else {
        ElMessage.success('File merged successfully');
      }
      options.url = '';
    })
    .catch(function (error) {
      console.log('Unexpected error after merge: ' + error);
    });
}

function onFileError(rootFile, file, response, chunk) {
  console.log('Error reported after upload: ' + response);
}

function onFileProgress(rootFile, file, chunk) {
  // File progress callback
  // console.log('on-file-progress', rootFile, file, chunk)
}

/**
 * Computes the MD5 used as the file identifier, which enables resumable
 * and instant uploads.
 * @param file the file object added to the uploader
 */
function computeMD5(file) {
  file.pause();
  // Size limit for a single file: 2 GiB
  let fileSizeLimit = 2 * 1024 * 1024 * 1024;
  console.log('File size: ' + file.size);
  console.log('Size limit: ' + fileSizeLimit);
  if (file.size > fileSizeLimit) {
    file.cancel();
    // Do not hash a file that has just been rejected
    return;
  }
  let fileReader = new FileReader();
  let time = new Date().getTime();
  let blobSlice =
    File.prototype.slice ||
    File.prototype.mozSlice ||
    File.prototype.webkitSlice;
  let currentChunk = 0;
  // Block size used for hashing only (10 MiB); independent of the upload chunkSize
  const chunkSize = 10 * 1024 * 1024;
  let chunks = Math.ceil(file.size / chunkSize);
  let spark = new SparkMD5.ArrayBuffer();
  // Hashing the whole file is too slow, so only the first block is hashed
  let chunkNumberMD5 = 1;

  fileReader.onload = (e) => {
    spark.append(e.target.result);
    if (currentChunk < chunkNumberMD5) {
      loadNext();
    } else {
      let md5 = spark.end();
      file.uniqueIdentifier = md5;
      file.resume();
      console.log(
        `MD5 computed: ${file.name} \nMD5: ${md5} \nBlocks: ${chunks} Size: ${
          file.size
        } Time: ${new Date().getTime() - time} ms`
      );
    }
  };

  fileReader.onerror = function () {
    error(`Failed to read file ${file.name}; please check the file`);
    file.cancel();
  };

  // Start reading only after the handlers are registered
  loadNext();

  function loadNext() {
    let start = currentChunk * chunkSize;
    let end = start + chunkSize >= file.size ? file.size : start + chunkSize;
    fileReader.readAsArrayBuffer(blobSlice.call(file.file, start, end));
    currentChunk++;
    console.log('Hashing block ' + currentChunk);
  }
}

const uploaderRef = ref();

function close() {
  uploaderRef.value.cancel();
}

function error(msg) {
  console.log(msg, 'msg');
}
</script>

<style scoped>
.uploader-ui {
  padding: 15px;
  margin: 40px auto 0;
  font-size: 12px;
  font-family: Microsoft YaHei;
  box-shadow: 0 0 10px rgba(0, 0, 0, 0.4);
}

.uploader-ui .uploader-btn {
  margin-right: 4px;
  font-size: 12px;
  border-radius: 3px;
  color: #fff;
  background-color: #409eff;
  border-color: #409eff;
  display: inline-block;
  line-height: 1;
  white-space: nowrap;
}

.uploader-ui .uploader-list {
  max-height: 440px;
  overflow: auto;
  overflow-x: hidden;
  overflow-y: auto;
}
</style>
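The `checkChunkUploadedByResponse` option in the component decides, from the body of the GET test request, whether a chunk can be skipped. The same decision is sketched below as a standalone function so that it can be exercised without the uploader. `isChunkAlreadyUploaded` is a hypothetical name; the `attr.skipUpload` and `uploadedChunks` fields are the ones the component reads, and `uploadedChunks` is assumed to hold 1-based part numbers. Unlike the component, this version treats an unparsable body as "not uploaded" instead of throwing.

```javascript
// Standalone version of the skip decision made in checkChunkUploadedByResponse.
// chunkOffset is zero-based; uploadedChunks is assumed to list 1-based part numbers.
function isChunkAlreadyUploaded(chunkOffset, responseText) {
  let body;
  try {
    body = JSON.parse(responseText);
  } catch {
    // An unparsable response gives no evidence that the chunk exists.
    return false;
  }
  // The whole file is already on the server: skip every chunk.
  if (body?.attr?.skipUpload) {
    return true;
  }
  const uploaded = Array.isArray(body?.uploadedChunks) ? body.uploadedChunks : [];
  return uploaded.includes(chunkOffset + 1);
}
```

As far as the code in this post shows, the `init` endpoint returns a plain `FileInfo` and sets neither field, so with that backend every chunk is uploaded. Resuming or skipping would require the server to report which parts it already holds.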

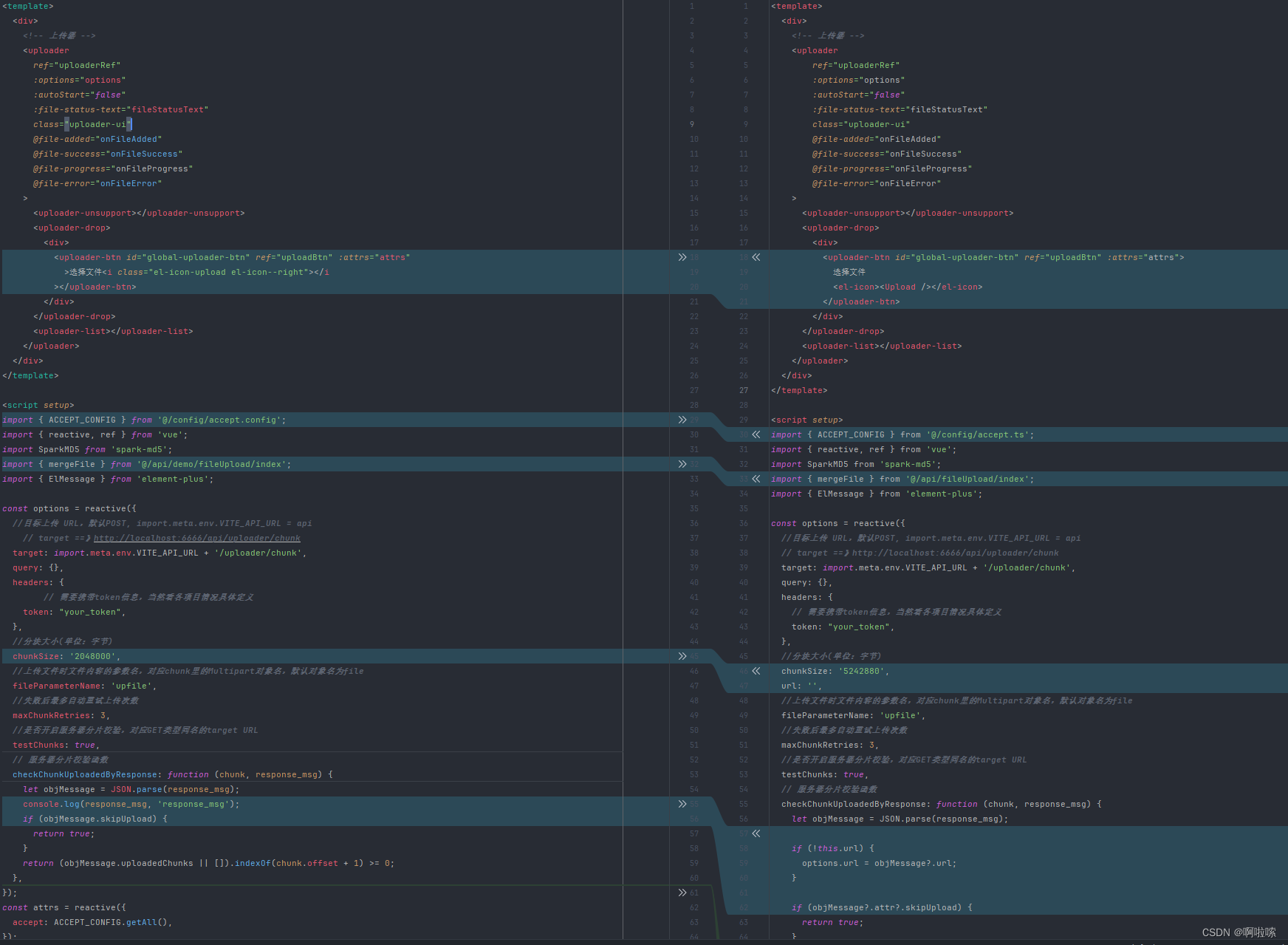

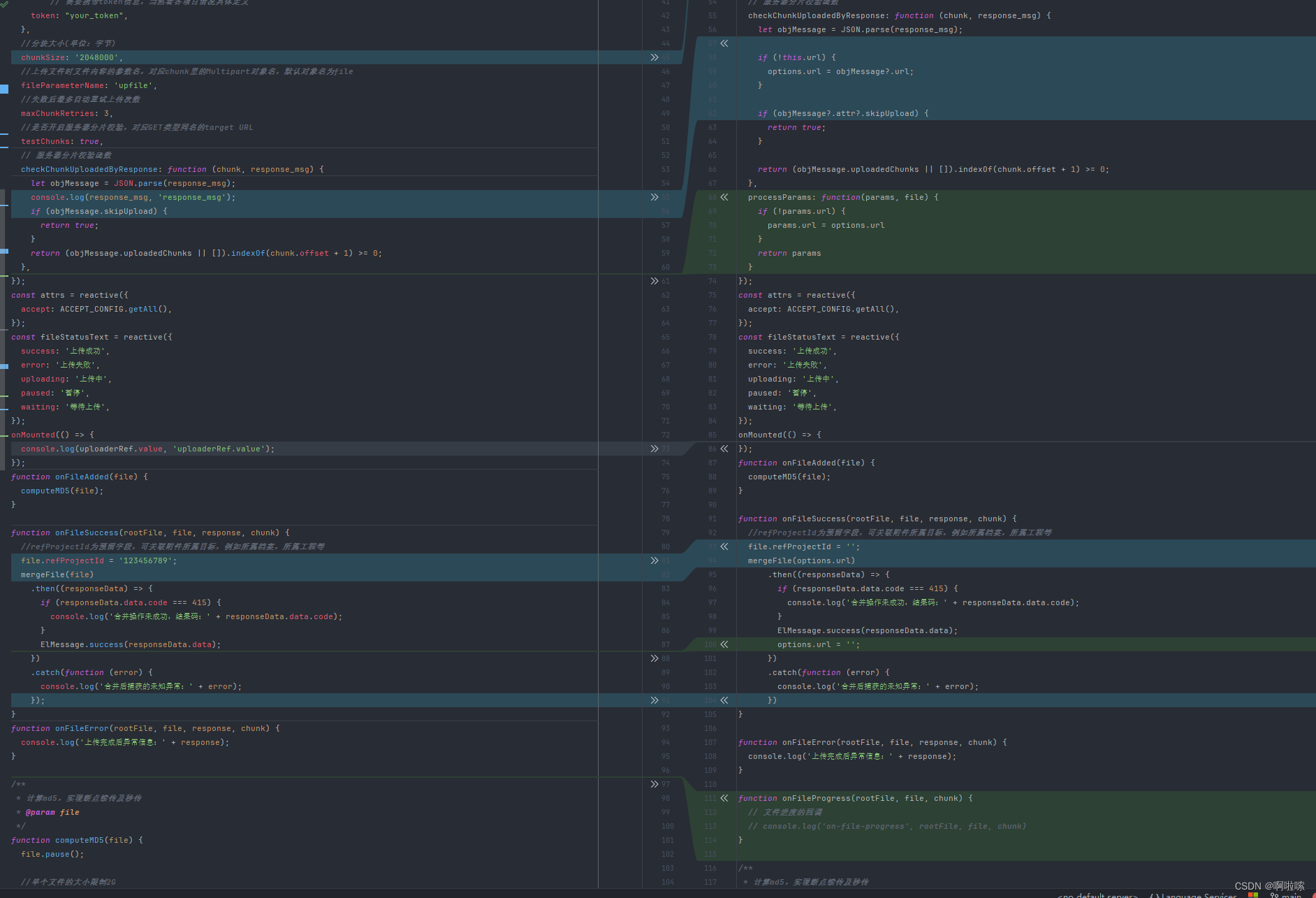
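One consequence of `computeMD5` hashing only the first block is worth spelling out: two different files that share their first 10 MiB get the same identifier. The sketch below reproduces the idea outside the browser, using Node's built-in `crypto` module in place of SparkMD5, and shows one possible mitigation, appending the file size. Both function names and the `hash-size` identifier format are assumptions made for this illustration, not something the component does.

```javascript
const { createHash } = require('node:crypto');

// Hash only the first `blockSize` bytes, mirroring what computeMD5 does in the browser.
function firstBlockMd5(buffer, blockSize = 10 * 1024 * 1024) {
  return createHash('md5').update(buffer.subarray(0, blockSize)).digest('hex');
}

// Hypothetical stricter identifier: first-block hash plus total size.
// This narrows collisions but does not eliminate them.
function firstBlockIdentifier(buffer, blockSize = 10 * 1024 * 1024) {
  return `${firstBlockMd5(buffer, blockSize)}-${buffer.length}`;
}

// Two buffers with the same first 3 bytes but different tails.
const fileA = Buffer.from('abcdef');
const fileB = Buffer.from('abcXYZ12');
console.log(firstBlockMd5(fileA, 3) === firstBlockMd5(fileB, 3)); // same hash
console.log(firstBlockIdentifier(fileA, 3) === firstBlockIdentifier(fileB, 3)); // different identifier
```

This only matters if a backend uses the identifier to decide that a file already exists. The controller shown earlier looks files up by `url`, so it is unaffected as written.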

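This is largely the same as the reference code above, with some adjustments made for compatibility with the backend. The differences are listed below.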
