Install the FastChat environment:

https://github.com/lm-sys/FastChat

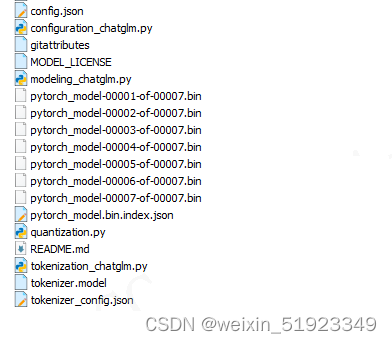

Download the ChatGLM2-6B model. The link to the model files is:

https://huggingface.co/THUDM/chatglm2-6b/tree/main

Run the following commands to start inference:

# Load the Ascend toolkit environment variables

source /usr/local/Ascend/ascend-toolkit/set_env.sh

# Run inference with FastChat

llm_path=/PATH/TO/YOUR/GLM

python3 -m fastchat.serve.cli --model-path ${llm_path} --device npu
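Before launching, it can help to confirm that the model directory is complete. The following is a minimal sketch, not part of FastChat: the helper names `build_fastchat_cli_command` and `missing_model_files` are hypothetical, and the list of required files (`config.json`, `modeling_chatglm.py`) is an assumption based on the ChatGLM2-6B repository layout.

```python
from pathlib import Path


def build_fastchat_cli_command(model_path, device="npu"):
    """Return the argv list for the FastChat CLI call used in this post."""
    return [
        "python3", "-m", "fastchat.serve.cli",
        "--model-path", str(model_path),
        "--device", device,
    ]


def missing_model_files(model_path, required=("config.json", "modeling_chatglm.py")):
    """List the files from `required` that are absent from the model directory.

    The default `required` tuple is an assumption, not an official checklist.
    """
    root = Path(model_path)
    return [name for name in required if not (root / name).is_file()]
```

If `missing_model_files(llm_path)` returns an empty list, the argv list from `build_fastchat_cli_command(llm_path)` can be passed to `subprocess.run`.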

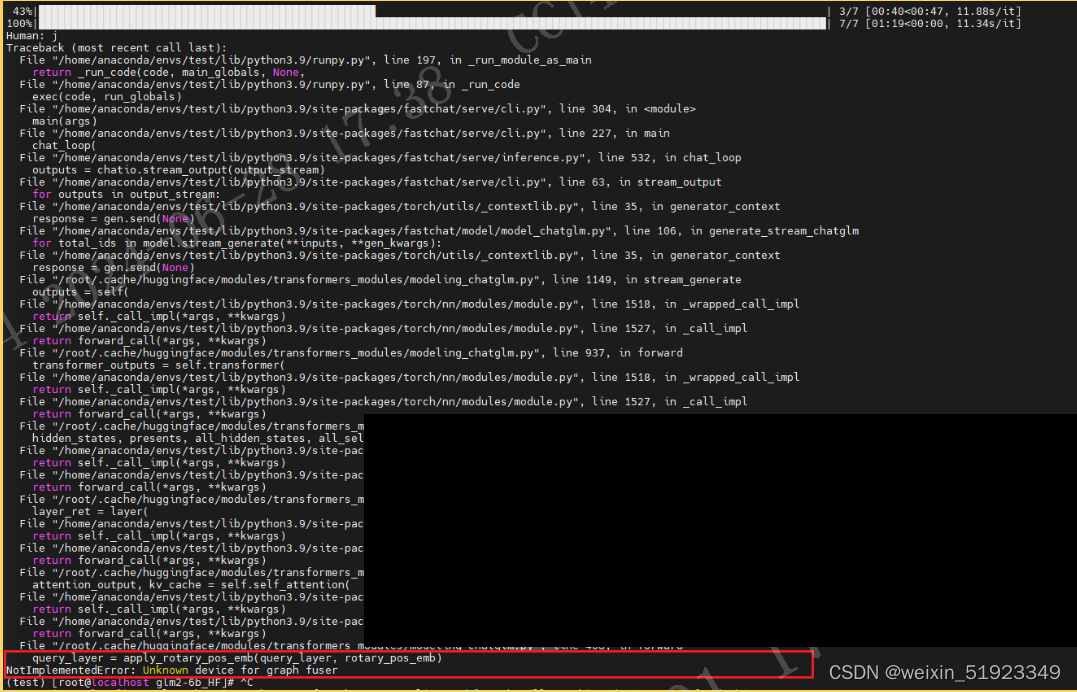

Screenshot of the inference error:

Full error output:

Traceback (most recent call last):
  File "/home/anaconda/envs/test/lib/python3.9/runpy.py", line 197, in _run_module_as_main
    return _run_code(code, main_globals, None,
  File "/home/anaconda/envs/test/lib/python3.9/runpy.py", line 87, in _run_code
    exec(code, run_globals)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/fastchat/serve/cli.py", line 304, in <module>
    main(args)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/fastchat/serve/cli.py", line 227, in main
    chat_loop(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/fastchat/serve/inference.py", line 532, in chat_loop
    outputs = chatio.stream_output(output_stream)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/fastchat/serve/cli.py", line 63, in stream_output
    for outputs in output_stream:
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/utils/_contextlib.py", line 35, in generator_context
    response = gen.send(None)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/fastchat/model/model_chatglm.py", line 106, in generate_stream_chatglm
    for total_ids in model.stream_generate(**inputs, **gen_kwargs):
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/utils/_contextlib.py", line 35, in generator_context
    response = gen.send(None)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 1149, in stream_generate
    outputs = self(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
    return forward_call(*args, **kwargs)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 937, in forward
    transformer_outputs = self.transformer(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
    return forward_call(*args, **kwargs)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 830, in forward
    hidden_states, presents, all_hidden_states, all_self_attentions = self.encoder(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
    return forward_call(*args, **kwargs)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 640, in forward
    layer_ret = layer(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
    return forward_call(*args, **kwargs)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 544, in forward
    attention_output, kv_cache = self.self_attention(
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/anaconda/envs/test/lib/python3.9/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl
    return forward_call(*args, **kwargs)
  File "/root/.cache/huggingface/modules/transformers_modules/modeling_chatglm.py", line 408, in forward
    query_layer = apply_rotary_pos_emb(query_layer, rotary_pos_emb)
NotImplementedError: Unknown device for graph fuser
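The last frame fails inside `apply_rotary_pos_emb`, and the message comes from the TorchScript graph fuser. A likely cause is that this function is decorated with `@torch.jit.script` in the upstream `modeling_chatglm.py`, and the fuser does not recognize the NPU device. To check which functions in a local copy are scripted, a small sketch like the one below can be used. The helper name `find_scripted_functions` is hypothetical, and the regex only covers the simple case where the decorator sits directly above the `def` line.

```python
import re

# Matches a "@torch.jit.script" decorator line followed directly by a "def" line,
# and captures the function name.
_SCRIPTED_DEF = re.compile(
    r"^[ \t]*@torch\.jit\.script[ \t]*\r?\n[ \t]*def[ \t]+(\w+)",
    re.MULTILINE,
)


def find_scripted_functions(source):
    """Return the names of functions decorated with @torch.jit.script in `source`."""
    return _SCRIPTED_DEF.findall(source)
```

For example, `find_scripted_functions(open("modeling_chatglm.py", encoding="utf-8").read())` lists the candidates to inspect in the model directory.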

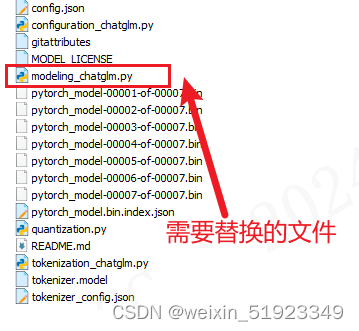

Solution:

Replace one file:

Replace the model's original modeling_chatglm.py with the Ascend-adapted version at https://gitee.com/ascend/ModelZoo-PyTorch/blob/master/PyTorch/built-in/foundation/ChatGLM2-6B/model/modeling_chatglm.py
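The replacement can be done by hand, but keeping a backup of the original file makes it easy to roll back. The sketch below assumes the Ascend version has already been downloaded to a local path; the helper name `replace_modeling_file` and the `.bak` suffix are illustrative choices, not part of any tool mentioned here.

```python
import shutil
from pathlib import Path


def replace_modeling_file(model_dir, replacement, filename="modeling_chatglm.py"):
    """Back up the model's original file, then copy the replacement over it.

    Returns the path of the backup file. Raises FileNotFoundError if the
    original file is not present in `model_dir`.
    """
    target = Path(model_dir) / filename
    backup = target.with_name(target.name + ".bak")
    if not backup.exists():  # keep the first backup, never overwrite it
        shutil.copy2(target, backup)
    shutil.copy2(replacement, target)
    return backup
```

Calling `replace_modeling_file(llm_path, "/path/to/downloaded/modeling_chatglm.py")` leaves the original as `modeling_chatglm.py.bak` next to the new file. The traceback above shows the module being loaded from a cached copy under `/root/.cache/huggingface/modules/transformers_modules/`, so if the error persists after the swap, that cached copy may be worth checking as well.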

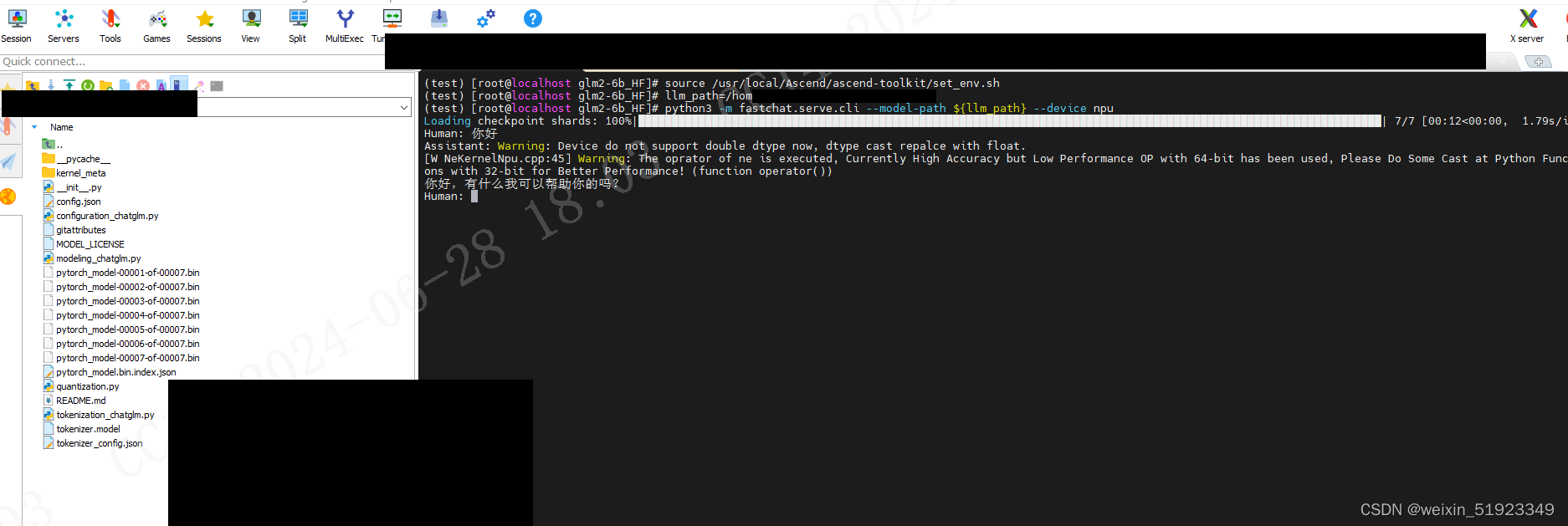

Screenshot of successful inference:
