Environment

CNI plugin: Calico in VXLAN mode

root@k8s-control-plane:/# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-control-plane Ready control-plane,master 12m v1.23.17 172.18.0.4 <none> Debian GNU/Linux 12 (bookworm) 6.5.0-35-generic containerd://1.7.13

k8s-worker Ready <none> 11m v1.23.17 172.18.0.3 <none> Debian GNU/Linux 12 (bookworm) 6.5.0-35-generic containerd://1.7.13

k8s-worker2 Ready <none> 11m v1.23.17 172.18.0.2 <none> Debian GNU/Linux 12 (bookworm) 6.5.0-35-generic containerd://1.7.13

ClusterIP

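A ClusterIP Service provides an access point inside the cluster. Its IP address is a virtual IP that is routable only within the cluster and maps to all backend Pods in the Ready state. kube-proxy maintains the network rules on each node so that traffic sent to the ClusterIP is routed to an available backend Pod.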

Create the test workloads: a Pod named client and a DaemonSet named server

apiVersion: v1
kind: Pod
metadata:
  name: client
  labels:
    app: client
spec:
  containers:
  - name: client
    image: nginx:alpine
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: server
spec:
  selector:
    matchLabels:
      app: server
  template:
    metadata:
      labels:
        app: server
    spec:
      containers:
      - name: server
        image: nginx:alpine
        ports:
        - containerPort: 80

Apply the manifest and check the Pods that were created

root@k8s-control-plane:/# kubectl apply -f test.yaml

pod/client created

daemonset.apps/server created

root@k8s-control-plane:/# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

client 1/1 Running 0 36s 10.244.254.130 k8s-worker <none> <none>

server-p4lmn 1/1 Running 0 36s 10.244.254.129 k8s-worker <none> <none>

server-smp9k 1/1 Running 0 36s 10.244.126.5 k8s-worker2 <none> <none>

Create a ClusterIP Service

apiVersion: v1
kind: Service
metadata:
  name: server
spec:
  selector:
    app: server
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
  type: ClusterIP

Apply the manifest and check the Service that was created

root@k8s-control-plane:/# kubectl apply -f services-clusterip.yaml

service/server created

root@k8s-control-plane:/# kubectl get svc server

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

server ClusterIP 10.96.203.213 <none> 80/TCP 5s
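The mapping from a Service to its backends can be pictured as a label match filtered by readiness. The following is only a minimal sketch of that idea, not how the endpoints controller is implemented: the `Pod` class and `select_endpoints` function are hypothetical names, and the Pod data is copied from the `kubectl get pod -o wide` output above.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict = field(default_factory=dict)
    ready: bool = True


def select_endpoints(pods, selector):
    """Return the IPs of ready Pods whose labels contain every selector pair."""
    return [
        pod.ip
        for pod in pods
        if pod.ready and all(pod.labels.get(k) == v for k, v in selector.items())
    ]


# Pods from the test environment (names and IPs taken from the output above).
pods = [
    Pod("client", "10.244.254.130", {"app": "client"}),
    Pod("server-p4lmn", "10.244.254.129", {"app": "server"}),
    Pod("server-smp9k", "10.244.126.5", {"app": "server"}),
]

# The Service selector is `app: server`, so only the two server Pods qualify.
endpoints = select_endpoints(pods, {"app": "server"})
print(endpoints)
```

If one of the server Pods were marked `ready=False`, it would drop out of the list, which mirrors the statement that a ClusterIP only maps to Pods in the Ready state.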

Test access from the client Pod by Service name, by ClusterIP, and by Pod IP

root@k8s-control-plane:/# kubectl exec -it client -- sh

/ # curl -s -w "%{http_code}\n" server -o /dev/null

200

/ # curl -s -w "%{http_code}\n" 10.96.203.213 -o /dev/null

200

/ # curl -s -w "%{http_code}\n" 10.244.254.129 -o /dev/null

200

Inspect the iptables rules that correspond to this Service

root@k8s-worker:/# iptables -t nat -L | grep 10.96.203.213

KUBE-SVC-Z5ZTHZWXI2PR3CSY tcp -- anywhere 10.96.203.213 /* default/server cluster IP */ tcp dpt:http

KUBE-MARK-MASQ tcp -- !10.244.0.0/16 10.96.203.213 /* default/server cluster IP */ tcp dpt:http

List all rules in the KUBE-SVC-Z5ZTHZWXI2PR3CSY chain

root@k8s-worker:/# iptables -t nat -L KUBE-SVC-Z5ZTHZWXI2PR3CSY

Chain KUBE-SVC-Z5ZTHZWXI2PR3CSY (1 references)

target prot opt source destination

KUBE-MARK-MASQ tcp -- !10.244.0.0/16 10.96.203.213 /* default/server cluster IP */ tcp dpt:http

KUBE-SEP-ISVQMGFN2WES56JK all -- anywhere anywhere /* default/server */ statistic mode random probability 0.50000000000

KUBE-SEP-I6KQB5ZRXBO2YH32 all -- anywhere anywhere /* default/server */
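The `statistic mode random probability 0.5` match on the first KUBE-SEP rule, followed by an unconditional second rule, gives each of the two endpoints an equal chance. For n endpoints the same pattern generalizes to rule i (0-based) matching with probability 1/(n-i). The sketch below simulates that rule walk; `rule_probabilities` and `pick_endpoint` are hypothetical helper names, and the simulation is only a model of the rule evaluation, not kube-proxy code.

```python
import random
from collections import Counter


def rule_probabilities(n):
    """Per-rule match probability so that n endpoints are chosen uniformly.

    Rule i is only reached if rules 0..i-1 did not match, so it must match
    with probability 1 / (n - i). The last rule always matches.
    """
    return [1.0 / (n - i) for i in range(n)]


def pick_endpoint(endpoints, rng):
    """Walk the rules in order, as iptables does, and return the first match."""
    for endpoint, p in zip(endpoints, rule_probabilities(len(endpoints))):
        if rng.random() < p:
            return endpoint
    raise AssertionError("unreachable: the last rule always matches")


# Endpoints in the order they appear in the KUBE-SVC chain above.
endpoints = ["10.244.126.5:80", "10.244.254.129:80"]

rng = random.Random(0)  # fixed seed so the run is repeatable
counts = Counter(pick_endpoint(endpoints, rng) for _ in range(10_000))

print(rule_probabilities(2))  # [0.5, 1.0], matching the chain above
print(counts)
```

With three endpoints the per-rule probabilities would be 1/3, 1/2 and 1, which still yields a uniform choice overall.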

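Looking one level deeper, each KUBE-SEP chain contains a DNAT target, which means the destination IP address of the TCP packet is rewritten to a Pod IP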

root@k8s-worker:/# iptables -t nat -L KUBE-SEP-ISVQMGFN2WES56JK

Chain KUBE-SEP-ISVQMGFN2WES56JK (1 references)

target prot opt source destination

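# Marks packets whose source is the endpoint Pod itself (hairpin traffic) for masquerading (SNAT), so that the replies can be translated back to the original addresses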

KUBE-MARK-MASQ all -- 10.244.126.5 anywhere /* default/server */

DNAT tcp -- anywhere anywhere /* default/server */ tcp to:10.244.126.5:80

root@k8s-worker:/# iptables -t nat -L KUBE-SEP-I6KQB5ZRXBO2YH32

Chain KUBE-SEP-I6KQB5ZRXBO2YH32 (1 references)

target prot opt source destination

KUBE-MARK-MASQ all -- 10.244.254.129 anywhere /* default/server */

DNAT tcp -- anywhere anywhere /* default/server */ tcp to:10.244.254.129:80
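The DNAT step and the reverse translation of the reply can be modeled with a small connection-tracking table. This is a simplified sketch under stated assumptions: the `Packet` and `Conntrack` classes are hypothetical, the client source port 40000 is made up for illustration, and real conntrack tracks far more state than this.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src: str
    sport: int
    dst: str
    dport: int


class Conntrack:
    """Toy connection tracker: remembers DNAT decisions so replies can be un-NATed."""

    def __init__(self):
        self._table = {}

    def dnat(self, pkt, new_dst, new_dport):
        """Rewrite the destination and remember the original one."""
        out = replace(pkt, dst=new_dst, dport=new_dport)
        # Key is the tuple the reply will carry: (reply src, reply sport, reply dst, reply dport).
        self._table[(out.dst, out.dport, out.src, out.sport)] = (pkt.dst, pkt.dport)
        return out

    def reverse(self, reply):
        """Restore the original destination as the source of the reply."""
        original = self._table.get((reply.src, reply.sport, reply.dst, reply.dport))
        if original is None:
            return reply
        return replace(reply, src=original[0], sport=original[1])


ct = Conntrack()

# client Pod -> ClusterIP (source port 40000 is an assumption for illustration)
request = Packet("10.244.254.130", 40000, "10.96.203.213", 80)
translated = ct.dnat(request, "10.244.126.5", 80)

# server Pod answers the client directly; conntrack rewrites the source back
reply = Packet("10.244.126.5", 80, "10.244.254.130", 40000)
restored = ct.reverse(reply)

print(translated)
print(restored)
```

From the client's point of view the reply appears to come from 10.96.203.213:80, which is why the connection to the virtual IP works even though no interface owns that address.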

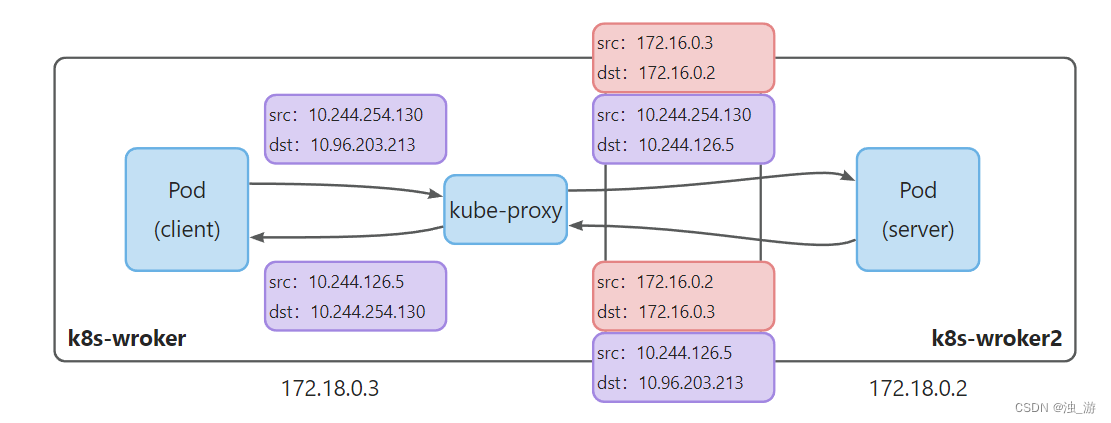

数据包地址转换过程如下:

NodePort

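A NodePort Service exposes a fixed port on every node (by default taken from the range 30000-32767). External traffic can reach Pods inside the cluster through this port.

The externalTrafficPolicy field controls how the Service handles external traffic:

- Cluster: the default. Traffic can be forwarded to a backend Pod on any node, so a node without a local backend Pod forwards it to another node.

- Local: preserves the client source IP address. Traffic is only delivered to backend Pods on the node that received it; if that node has no backend Pod, the packet is dropped.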
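The difference between the two policies comes down to which endpoints a node is allowed to pick from. The following sketch models that choice; `candidate_endpoints` is a hypothetical function, and the node-to-Pod placement is taken from the `kubectl get pod -o wide` output earlier (the control-plane node runs no server Pod in that output).

```python
def candidate_endpoints(policy, local_node, endpoints_by_node):
    """Return the endpoints a node may forward external traffic to.

    An empty list means the packet is dropped on that node.
    """
    if policy == "Cluster":
        # Any endpoint in the cluster is eligible, local or remote.
        return sorted(ip for ips in endpoints_by_node.values() for ip in ips)
    if policy == "Local":
        # Only endpoints on the node that received the packet are eligible.
        return sorted(endpoints_by_node.get(local_node, []))
    raise ValueError(f"unknown externalTrafficPolicy: {policy!r}")


# Server Pod placement from the test environment.
endpoints_by_node = {
    "k8s-worker": ["10.244.254.129"],
    "k8s-worker2": ["10.244.126.5"],
}

for policy in ("Cluster", "Local"):
    for node in ("k8s-control-plane", "k8s-worker"):
        print(policy, node, candidate_endpoints(policy, node, endpoints_by_node))
```

In this model, a request that arrives on k8s-control-plane is served under Cluster but finds no candidate under Local, which corresponds to the dropped-packet case described above.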

Create a NodePort Service

apiVersion: v1
kind: Service
metadata:
  name: server-nodeport
spec:
  type: NodePort
  selector:
    app: server
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
    nodePort: 32000

Apply the manifest and check the Service that was created

root@k8s-control-plane:/# kubectl apply -f services-nodeport.yaml

service/server-nodeport created

root@k8s-control-plane:/# kubectl get svc server-nodeport

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

server-nodeport NodePort 10.96.230.121 <none> 80:32000/TCP 38s

Access port 32000 on any node

$ curl -s -w "%{http_code}\n" 172.18.0.3:32000 -o /dev/null

200

$ curl -s -w "%{http_code}\n" 172.18.0.2:32000 -o /dev/null

200

Inspect the iptables rules that correspond to this Service

root@k8s-worker:/# iptables -t nat -L | grep 32000

KUBE-SVC-3V3RLJNFHWQZHLRS tcp -- anywhere anywhere /* default/server-nodeport */ tcp dpt:32000

KUBE-MARK-MASQ tcp -- anywhere anywhere /* default/server-nodeport */ tcp dpt:32000

List all rules in the KUBE-SVC-3V3RLJNFHWQZHLRS chain

root@k8s-worker:/# iptables -t nat -L KUBE-SVC-3V3RLJNFHWQZHLRS

Chain KUBE-SVC-3V3RLJNFHWQZHLRS (2 references)

target prot opt source destination

KUBE-MARK-MASQ tcp -- !10.244.0.0/16 10.96.230.121 /* default/server-nodeport cluster IP */ tcp dpt:http

KUBE-MARK-MASQ tcp -- anywhere anywhere /* default/server-nodeport */ tcp dpt:32000

KUBE-SEP-OHUMVRELWAOZJIQ6 all -- anywhere anywhere /* default/server-nodeport */ statistic mode random probability 0.50000000000

KUBE-SEP-BGDVP23KI4VS7M6S all -- anywhere anywhere /* default/server-nodeport */

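Looking one level deeper, each KUBE-SEP chain again contains a DNAT target, which means the destination IP address of the TCP packet is rewritten to a Pod IP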

root@k8s-worker:/# iptables -t nat -L KUBE-SEP-OHUMVRELWAOZJIQ6

Chain KUBE-SEP-OHUMVRELWAOZJIQ6 (1 references)

target prot opt source destination

KUBE-MARK-MASQ all -- 10.244.126.5 anywhere /* default/server-nodeport */

DNAT tcp -- anywhere anywhere /* default/server-nodeport */ tcp to:10.244.126.5:80

root@k8s-worker:/# iptables -t nat -L KUBE-SEP-BGDVP23KI4VS7M6S

Chain KUBE-SEP-BGDVP23KI4VS7M6S (1 references)

target prot opt source destination

KUBE-MARK-MASQ all -- 10.244.254.129 anywhere /* default/server-nodeport */

DNAT tcp -- anywhere anywhere /* default/server-nodeport */ tcp to:10.244.254.129:80

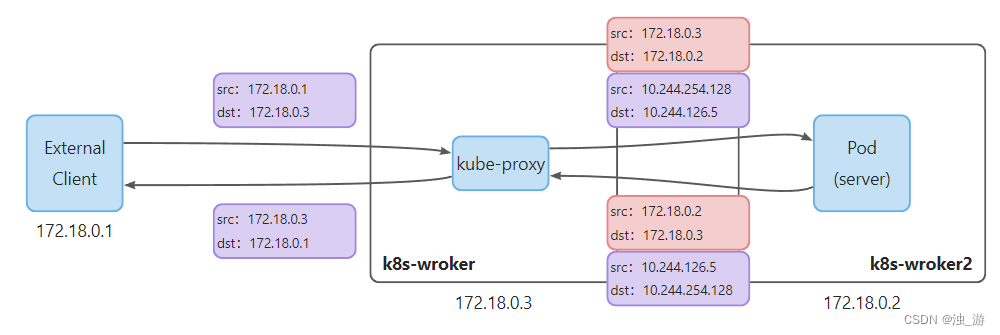

数据包地址转换过程如下:

- 10.244.254.128 是 VxLAN 接口的 IP 地址

- 外部流量策略设置为

Cluster时,转发到其它节点的数据包同时进行了原地址转换(SNAT)和目的地址转换(DNAT)
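The combined SNAT and DNAT for a NodePort request that is forwarded to a Pod on another node can be sketched as two rewrites on the same packet. This is an illustrative model only: `Packet` and `forward_to_remote_endpoint` are hypothetical names, the external client address 172.18.0.1:50000 is an assumption, and the sketch keeps the source port unchanged although masquerading may remap it.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src: str
    sport: int
    dst: str
    dport: int


def forward_to_remote_endpoint(pkt, endpoint_ip, endpoint_port, vxlan_ip):
    """Apply DNAT (node:nodePort -> Pod) and then SNAT (client -> VXLAN interface IP)."""
    after_dnat = replace(pkt, dst=endpoint_ip, dport=endpoint_port)
    after_snat = replace(after_dnat, src=vxlan_ip)
    return after_snat


# External client hits k8s-worker (172.18.0.3) on the NodePort.
# The client address and source port are made up for illustration.
incoming = Packet("172.18.0.1", 50000, "172.18.0.3", 32000)

# The chosen endpoint lives on k8s-worker2, so the packet leaves through the
# VXLAN interface of k8s-worker, whose IP is 10.244.254.128.
forwarded = forward_to_remote_endpoint(
    incoming, endpoint_ip="10.244.126.5", endpoint_port=80, vxlan_ip="10.244.254.128"
)

print(incoming)
print(forwarded)
```

Because the source becomes 10.244.254.128, the server Pod replies to k8s-worker rather than to the client directly, which lets that node reverse both translations. It also means the Pod never sees the real client IP under the Cluster policy.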

LoadBalancer

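A LoadBalancer Service exposes the service outside the cluster. It combines the behavior of a NodePort Service with an external load balancer. The load balancer handles Layer 4 traffic, so it works for any TCP or UDP service.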

Create a LoadBalancer Service

apiVersion: v1
kind: Service
metadata:
  name: server-loadbalancer
  labels:
    app: app
spec:
  selector:
    app: server
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
  type: LoadBalancer

Check the Service that was created

root@k8s-control-plane:/# kubectl get svc server-loadbalancer

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

server-loadbalancer LoadBalancer 10.96.131.121 172.18.0.100 80:31184/TCP 7s

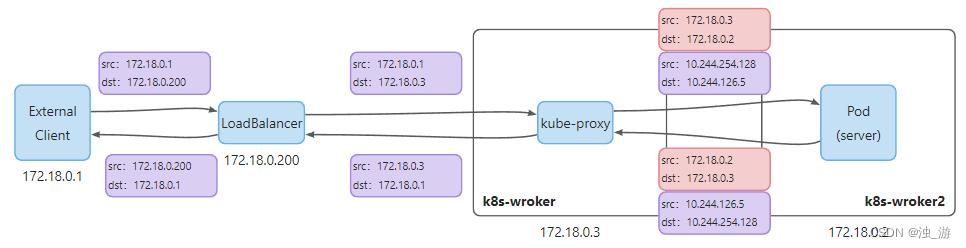

数据包地址转换过程如下:

4 层负载均衡器会直接转发流量,而不会终止与客户端的连接并重新与后端建立连接
