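High-Concurrency Memory Pool

What is a memory pool

Pooling

Pooling means that a program requests more of a resource from the system than it immediately needs and then manages the surplus itself. Requesting extra up front pays off because each individual request carries significant overhead; with the resource already in hand, later use is fast, which improves the program's overall efficiency. Pooling appears in many places in computing: besides memory pools, there are connection pools, thread pools, object pools, and so on.

Take a server-side thread pool as an example. The idea is to start a number of threads in advance and leave them sleeping. When a client request arrives, one sleeping thread is woken to handle it, and once the request is finished the thread goes back to sleep.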

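Memory pool

A memory pool is a large block of memory that the program requests from the operating system in advance. From then on, when the program needs memory it takes it from the pool rather than asking the operating system directly; likewise, when the program frees memory it returns it to the pool rather than to the operating system. Only when the program exits (or at some chosen point) does the pool actually release the memory it obtained.

Problems a memory pool mainly solves

The main problem a memory pool solves is efficiency. Beyond that, viewed as the system's memory allocator, it also has to deal with memory fragmentation.

Memory fragmentation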

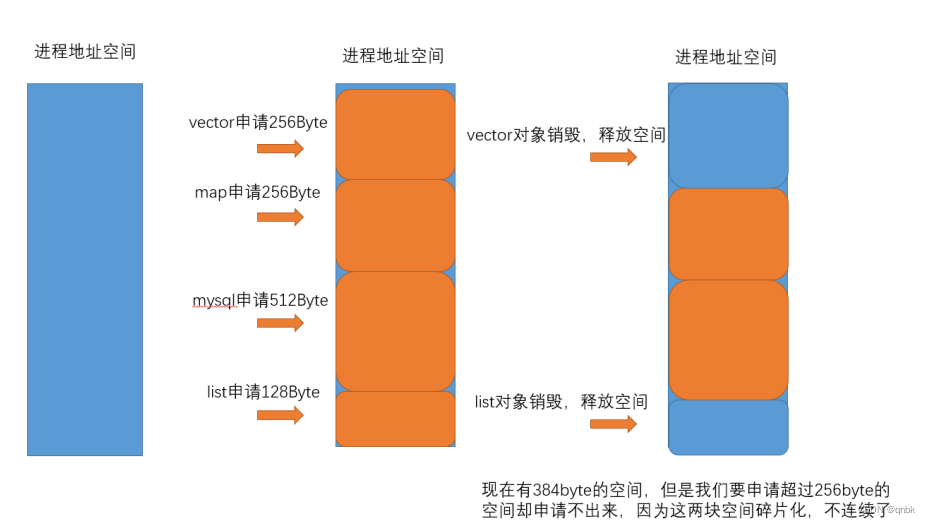

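Memory fragmentation comes in two kinds: external and internal.

- External fragmentation: free memory is scattered across small, non-contiguous regions, so even though the total free memory is sufficient, some allocation requests cannot be satisfied.

- Internal fragmentation: because of alignment requirements, part of the space handed out in an allocation cannot be used.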
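To make internal fragmentation concrete, here is a minimal sketch that rounds a request up to an alignment boundary and reports the bytes lost to padding. The helper names `AlignUp` and `InternalWaste` are illustrative and do not appear in the original code.

```cpp
#include <cassert>
#include <cstddef>

// Round `bytes` up to the next multiple of `align` (align must be a power of two).
inline std::size_t AlignUp(std::size_t bytes, std::size_t align)
{
    return (bytes + align - 1) & ~(align - 1);
}

// Bytes handed out but never usable by the caller: the internal fragment.
inline std::size_t InternalWaste(std::size_t bytes, std::size_t align)
{
    assert(bytes > 0);
    return AlignUp(bytes, align) - bytes;
}
```

For instance, a 5-byte request under 8-byte alignment occupies 8 bytes, so 3 bytes are internal fragmentation, while a 16-byte request wastes nothing.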

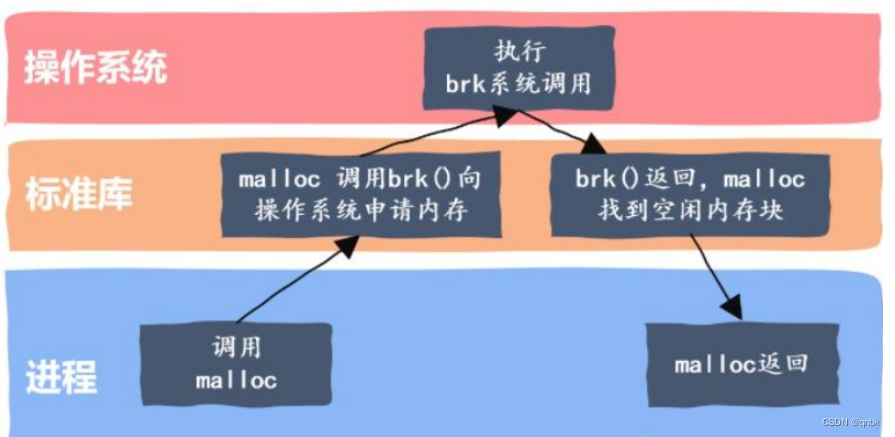

malloc

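In C/C++, dynamic memory is requested through malloc, but malloc does not go straight to the heap for every request.

malloc is itself a memory pool: it obtains a fairly large block of memory from the operating system and serves requests from it. When that block is used up, or the program needs a large amount of memory, it goes back to the operating system for more according to actual demand.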

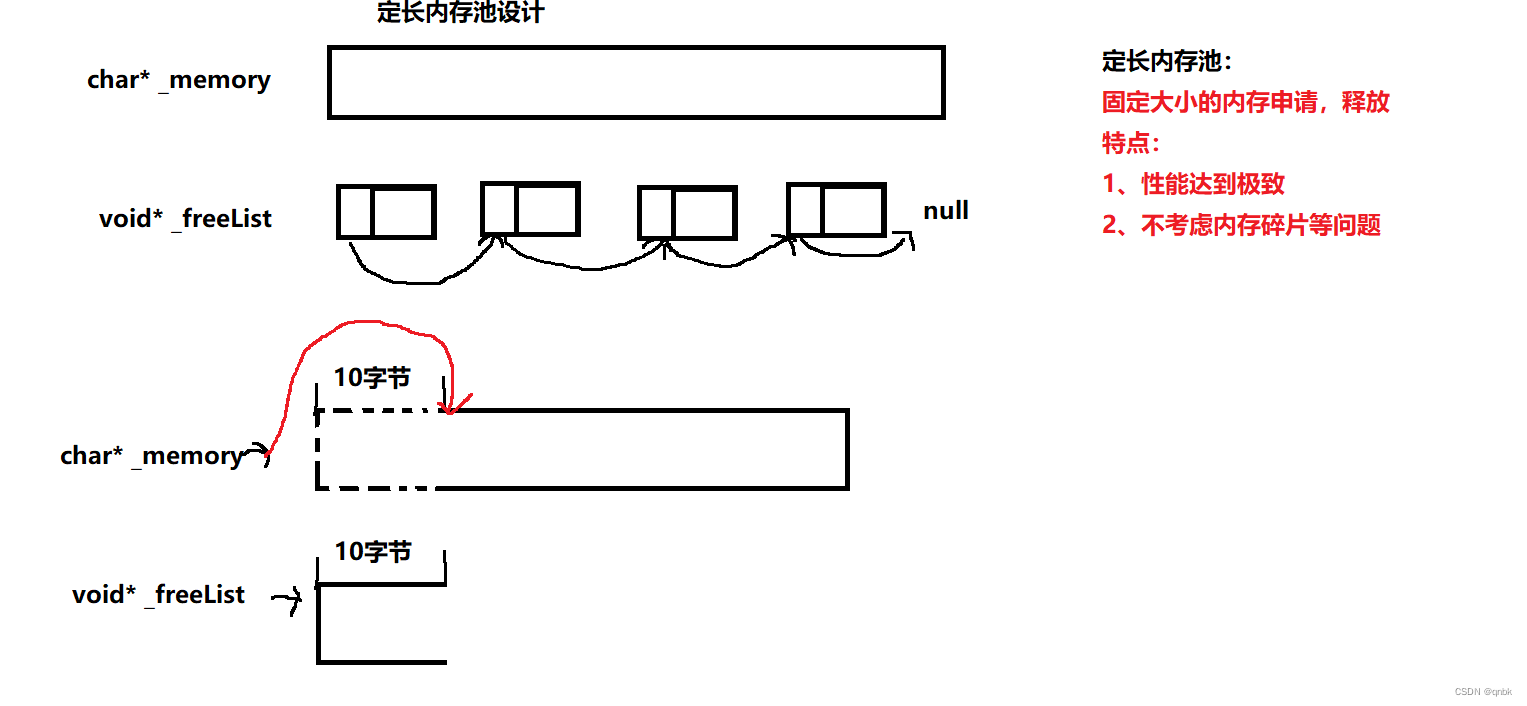

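Fixed-size memory pool

malloc works in every scenario, which also means it is not especially fast in any particular one. So we start by designing a fixed-size memory pool.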

ObjectPool.h

#pragma once

#include <iostream>

#include <vector>

#include <time.h>

using std::cout;

using std::endl;

//定长内存池

//template <size_t N>

//class ObjectPool

//{};

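#include <new>

#ifdef _WIN32

#include <windows.h>

#else

#include <sys/mman.h>

#endif

// Request kpage pages (8 KB each) directly from the operating system
inline static void* SystemAlloc(size_t kpage)

{

#ifdef _WIN32

void* ptr = VirtualAlloc(0, kpage << 13, MEM_COMMIT | MEM_RESERVE,

PAGE_READWRITE);

#else

// Linux: anonymous private mapping via mmap

void* ptr = mmap(nullptr, kpage << 13, PROT_READ | PROT_WRITE,

MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);

if (ptr == MAP_FAILED)

ptr = nullptr;

#endif

if (ptr == nullptr)

throw std::bad_alloc();

return ptr;

}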

template <class T>

class ObjectPool

{

public:

T* New()

{

T* obj = nullptr;

if (_freeList)

{

// Reuse a returned block first

void* next = (*(void**)_freeList);

obj = (T*)_freeList;

_freeList = next;

}

else

{

// Each slot must be able to hold a pointer for the free list

size_t objSize = sizeof(T) < sizeof(void*) ? sizeof(void*) : sizeof(T);

// When the remaining memory cannot hold one more slot, get a new large block

if (remainBytes < objSize)

{

remainBytes = 128 * 1024;

//_memory = (char*)malloc(remainBytes);

_memory = (char*)SystemAlloc(remainBytes >> 13);

if (_memory == nullptr)

{

throw std::bad_alloc();

}

}

obj = (T*)_memory;

_memory += objSize;

remainBytes -= objSize;

}

// Placement new: explicitly call T's constructor on the existing memory

new(obj)T;

return obj;

}

void Delete(T* obj)

{

// Explicitly call the destructor to clean up the object

obj->~T();

// Head insertion: store the old head in the first pointer-sized bytes of the block.

// *(void**) is used rather than *(int*) so the store is pointer-sized on both 32-bit and 64-bit targets.

// This also covers the empty list, where the old head is nullptr.

*(void**)obj = _freeList;

_freeList = obj;

}

private:

char* _memory = nullptr; // Points to the large block; char is one byte, which makes slicing easy

size_t remainBytes = 0; // Bytes remaining in the large block

void* _freeList = nullptr; // Head of the linked list of returned blocks

};

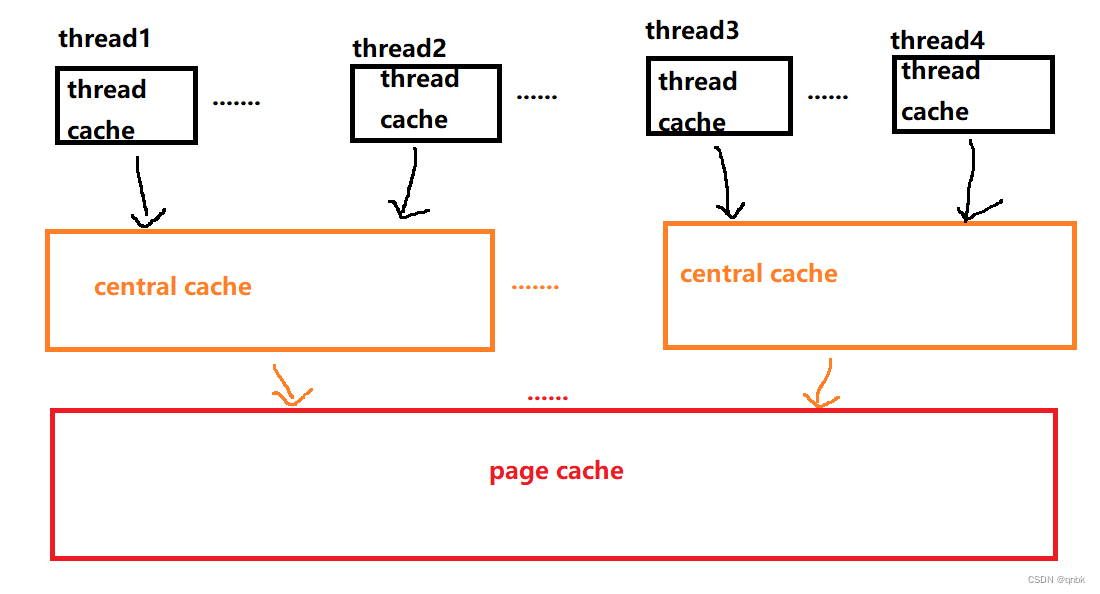
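The following is a simplified sketch of the same idea, meant to show that a freed block is the next one handed out. It is not the author's class: `SimplePool` is a hypothetical name, `std::malloc` stands in for `SystemAlloc`, and the slot size is rounded up to a multiple of the pointer size (an assumption added here so the stored next-pointer stays aligned).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <new>

// Simplified fixed-size pool. Memory is never returned to the system,
// and T is assumed to be much smaller than one 128 KB chunk.
template <class T>
class SimplePool
{
public:
    T* New()
    {
        void* mem = nullptr;
        if (_freeList != nullptr)
        {
            // Reuse the most recently returned slot.
            mem = _freeList;
            _freeList = *static_cast<void**>(_freeList);
        }
        else
        {
            if (_remain < kSlot)
            {
                // Carve slots out of a fresh chunk.
                _remain = kChunk;
                _memory = static_cast<char*>(std::malloc(_remain));
                if (_memory == nullptr) throw std::bad_alloc();
            }
            mem = _memory;
            _memory += kSlot;
            _remain -= kSlot;
        }
        return new (mem) T; // placement new constructs T in the slot
    }

    void Delete(T* obj)
    {
        obj->~T();
        // Head insertion: the slot's first bytes store the old list head.
        *reinterpret_cast<void**>(obj) = _freeList;
        _freeList = obj;
    }

private:
    // Slot size: sizeof(T) rounded up to a multiple of sizeof(void*).
    static constexpr std::size_t kSlot =
        (sizeof(T) + sizeof(void*) - 1) / sizeof(void*) * sizeof(void*);
    static constexpr std::size_t kChunk = 128 * 1024;

    char* _memory = nullptr;   // next unused byte of the current chunk
    std::size_t _remain = 0;   // bytes left in the current chunk
    void* _freeList = nullptr; // returned slots, most recent first
};
```

Because returned slots are pushed at the head of the list, calling `New()` right after `Delete(p)` yields `p` again, with no call into the system allocator.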

高并发内存池整体框架

现代很多的开发环境都是多核多线程,在申请内存的场景下,必然存在激烈的锁竞争问题。

内存池需要考虑以下几方面的问题。

- 性能问题。

- 多线程环境下,锁竞争问题。

- 内存碎片问题。

concurrent memory pool:

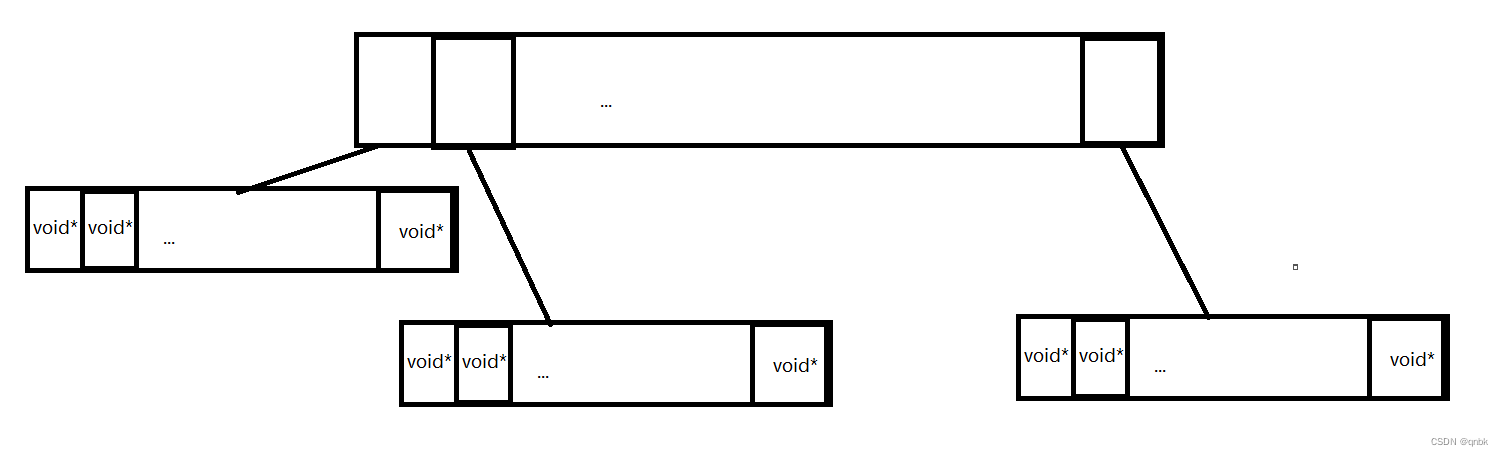

- thread cache:线程缓存是每个线程独有的,用于小于256KB的内存的分配,线程从这里申请内存不需要加锁,每个线程独享一个cache,这也就是这个并发线程池高效的地方。

- central cache:中心缓存是所有线程所共享,thread cache是按需从central cache中获取的对象。central cache合适的时机回收thread cache中的对象,避免一个线程占用了太多的内存,而其他线程的内存吃紧,达到内存分配在多个线程中更均衡的按需调度的目的。central cache是存在竞争的,所以从这里取内存对象是需要加锁,首先这里用的是桶锁,其次只有thread cache的没有内存对象时才会找central cache,所以这里竞争不会很激烈。

- page cache:页缓存是在central cache缓存上面的一层缓存,存储的内存是以页为单位存储及分配的,central cache没有内存对象时,从page cache分配出一定数量的page,并切割成定长大小的小块内存,分配给central cache。当一个span的几个跨度页的对象都回收以后,page cache会回收central cache满足条件的span对象,并且合并相邻的页,组成更大的页,缓解内存碎片的问题。

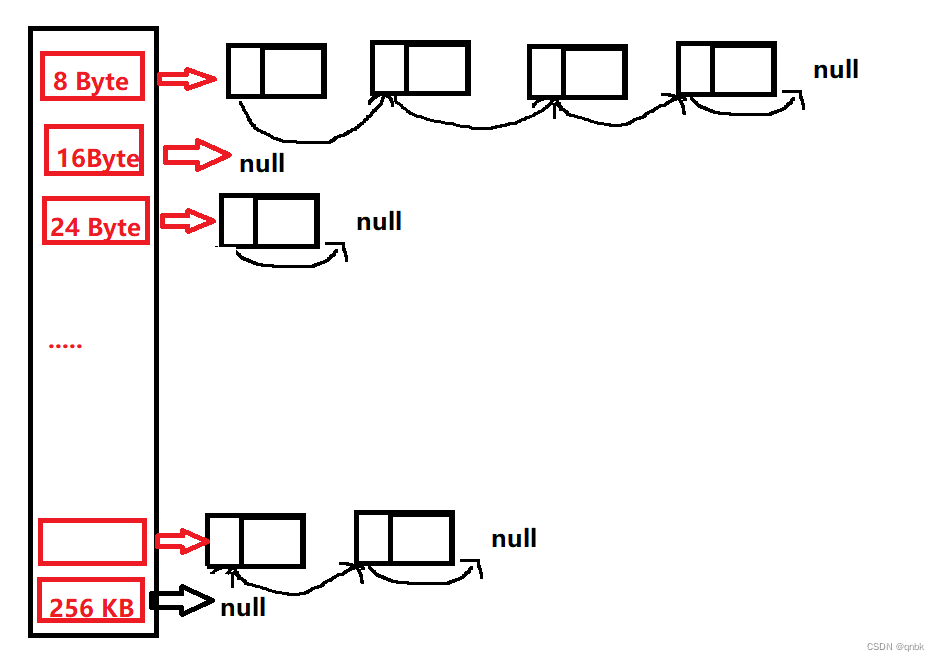

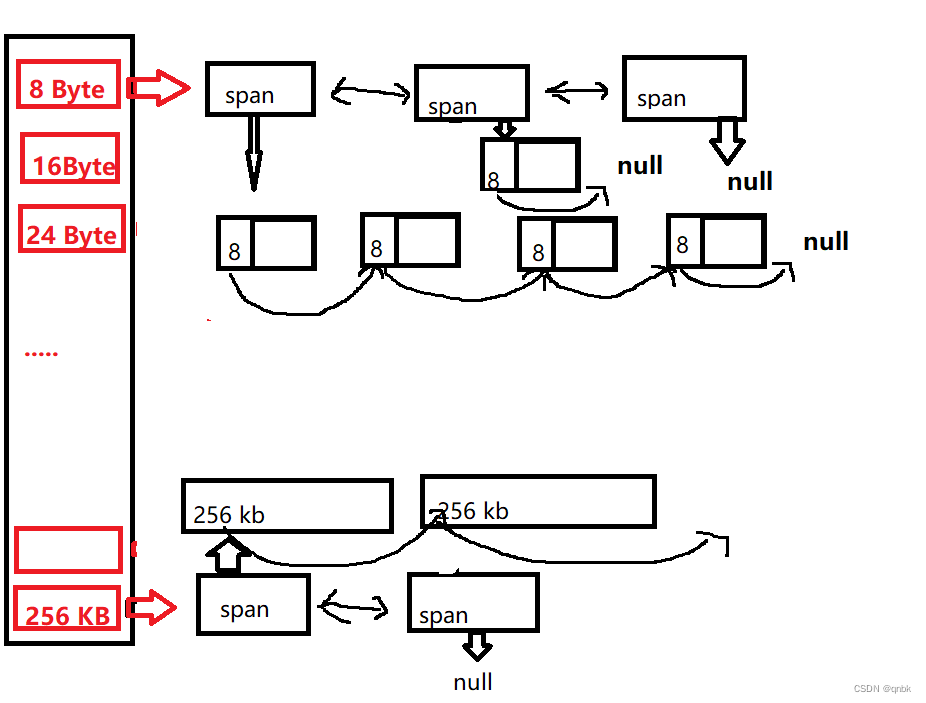

thread cache

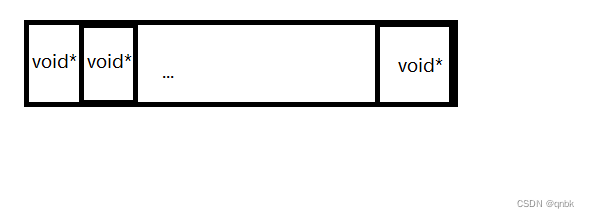

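The thread cache is a hash-bucket structure: each bucket is a free list of memory blocks whose size is determined by the bucket's position. Every thread has its own thread cache object, so acquiring and releasing objects here requires no lock.

Mapping between free-list buckets and object sizes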

class SizeClass // Alignment and bucket-mapping rules for object sizes

{

public:

// Keeps internal-fragmentation waste to roughly 10% at most

// [1,128] 8-byte alignment freelist[0,16)

// [128+1,1024] 16-byte alignment freelist[16,72)

// [1024+1,8*1024] 128-byte alignment freelist[72,128)

// [8*1024+1,64*1024] 1024-byte alignment freelist[128,184)

// [64*1024+1,256*1024] 8*1024-byte alignment freelist[184,208)

static inline size_t _RoundUp(size_t bytes, size_t alignNum) // Round bytes up to a multiple of alignNum (a power of two)

{

//size_t alignSize ;//对齐

//if (size % alignNum != 0)

//{

// alignSize = (size / alignNum + 1) * alignNum;

//}

//else

//{

// alignSize = size;

//}

//return alignSize;

return ((bytes + alignNum - 1) & ~(alignNum - 1));

}

static inline size_t RoundUp(size_t size)

{

if (size <= 128)

{

return _RoundUp(size, 8);

}

else if (size <= 1024)

{

return _RoundUp(size, 16);

}

else if (size <= 8 * 1024)

{

return _RoundUp(size, 128);

}

else if (size <= 64 * 1024)

{

return _RoundUp(size, 1024);

}

else if (size <= 256 * 1024)

{

return _RoundUp(size, 8 * 1024);

}

else

{

return _RoundUp(size, 1 << PAGE_SHIFT);

}

}

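// Bucket index within one alignment range; align_shift is log2 of the alignment

static inline size_t _Index(size_t bytes, size_t align_shift)

{

/* Equivalent form when given the alignment itself (alignNum) instead of its shift:

if (bytes % alignNum == 0)

{

return bytes / alignNum - 1;

}

else

{

return bytes / alignNum;

}*/

return ((bytes + (1 << align_shift) - 1) >> align_shift) - 1;

}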

// Compute which free-list bucket a size maps to

static inline size_t Index(size_t bytes)

{

assert(bytes <= MAX_BYTES);

// Number of buckets in each of the first four ranges

static int group_array[4] = { 16, 56, 56, 56 };

if (bytes <= 128) {

return _Index(bytes, 3); // 8 = 2^3

}

else if (bytes <= 1024) {

return _Index(bytes - 128, 4) + group_array[0]; // Subtract the 128 bytes covered by the previous range, then add that range's bucket count

}

else if (bytes <= 8 * 1024) {

return _Index(bytes - 1024, 7) + group_array[1] + group_array[0];

}

else if (bytes <= 64 * 1024) {

return _Index(bytes - 8 * 1024, 10) + group_array[2] + group_array[1] + group_array[0];

}

else if (bytes <= 256 * 1024) {

return _Index(bytes - 64 * 1024, 13) + group_array[3] + group_array[2] + group_array[1] + group_array[0];

}

else {

assert(false);

}

return -1;

}

};
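As a worked check of the mapping above, the sketch below restates the rounding and indexing rules in compact form so that individual sizes can be traced by hand. The function names (`AlignTo`, `RoundUpSketch`, `IndexInRange`, `IndexSketch`) are illustrative, and the constants 16, 72, 128 and 184 are the running bucket totals taken from the comment table in `SizeClass`.

```cpp
#include <cassert>
#include <cstddef>

// Round `bytes` up to a multiple of `align` (a power of two).
inline std::size_t AlignTo(std::size_t bytes, std::size_t align)
{
    return (bytes + align - 1) & ~(align - 1);
}

// Aligned size for requests of at most 256 KB, using the five ranges.
inline std::size_t RoundUpSketch(std::size_t size)
{
    if (size <= 128) return AlignTo(size, 8);
    if (size <= 1024) return AlignTo(size, 16);
    if (size <= 8 * 1024) return AlignTo(size, 128);
    if (size <= 64 * 1024) return AlignTo(size, 1024);
    return AlignTo(size, 8 * 1024);
}

// Index within one range: alignment units needed, minus one.
inline std::size_t IndexInRange(std::size_t bytes, std::size_t shift)
{
    return ((bytes + (std::size_t(1) << shift) - 1) >> shift) - 1;
}

// Global bucket index: offset of the range plus the index inside it.
inline std::size_t IndexSketch(std::size_t bytes)
{
    assert(bytes >= 1 && bytes <= 256 * 1024);
    if (bytes <= 128) return IndexInRange(bytes, 3);
    if (bytes <= 1024) return 16 + IndexInRange(bytes - 128, 4);
    if (bytes <= 8 * 1024) return 72 + IndexInRange(bytes - 1024, 7);
    if (bytes <= 64 * 1024) return 128 + IndexInRange(bytes - 8 * 1024, 10);
    return 184 + IndexInRange(bytes - 64 * 1024, 13);
}
```

A 129-byte request is rounded to 144 bytes and lands in bucket 16, the first bucket of the 16-byte range; the 15 wasted bytes are about 10% of the 144 handed out, which is where the "roughly 10%" bound comes from. The largest request, 256 KB, maps to bucket 207, the last of the 208 buckets.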

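Allocating memory:

- When the requested size is at most 256 KB, first get the thread cache object from thread-local storage, then compute the index i of the free-list bucket that size maps to.

- If _freeLists[i] contains objects, pop one and return it.

- If _freeLists[i] is empty, fetch a batch of objects from the central cache, insert them into the free list, and return one of them.

Freeing memory:

- When the freed block is at most 256 KB, it goes back to the thread cache: compute the bucket index i for its size and push the object onto _freeLists[i].

- When the list grows too long, return some of its objects to the central cache.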
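The Pop and Push steps above rely on an intrusive free list: a free block stores the pointer to the next free block in its own first bytes, so the list needs no extra nodes. A minimal sketch under that assumption follows; `FreeListSketch` is an illustrative name, and every block is assumed to be at least pointer-sized and pointer-aligned.

```cpp
#include <cassert>
#include <cstddef>

// Minimal intrusive free list: the first sizeof(void*) bytes of each
// free block hold the address of the next free block.
class FreeListSketch
{
public:
    // Head insertion: the block remembers the old head.
    void Push(void* obj)
    {
        assert(obj != nullptr);
        *static_cast<void**>(obj) = _head;
        _head = obj;
        ++_size;
    }

    // Head removal: the next pointer is read back out of the block.
    void* Pop()
    {
        assert(_head != nullptr);
        void* obj = _head;
        _head = *static_cast<void**>(obj);
        --_size;
        return obj;
    }

    bool Empty() const { return _head == nullptr; }
    std::size_t Size() const { return _size; }

private:
    void* _head = nullptr;
    std::size_t _size = 0;
};
```

Both operations are a couple of pointer writes, which is why allocation from a non-empty thread cache bucket is so cheap.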

thread cache design

#pragma once

#include "Common.h"

class ThreadCache

{

public:

// Allocate and release objects

void* Allocate(size_t size);

void Deallocate(void* ptr, size_t size);

// Fetch objects from the central cache

void* FetchFromCentralCache(size_t index, size_t size);

void ListTooLong(FreeList& list, size_t size); // Called on release when the list is too long: returns memory to the central cache

private:

FreeList _freeLists[NFREELISTS]; // Hash table; each slot holds one free list

};

// TLS: globally accessible within a thread but invisible to other threads, so the data stays independent and needs no locking

static _declspec(thread) ThreadCache * pTLSThreadCache = nullptr;
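`_declspec(thread)` is specific to the Microsoft compiler. As a portable illustration of the same idea, the sketch below uses the standard C++11 `thread_local` keyword; `CacheSketch` is a hypothetical stand-in for `ThreadCache` that only counts allocations, and the helper names are likewise illustrative.

```cpp
#include <cassert>
#include <thread>

// Hypothetical stand-in for ThreadCache: it only counts allocations.
struct CacheSketch
{
    int allocations = 0;
};

// Each thread gets its own instance, constructed on first use in that thread.
inline CacheSketch& LocalCache()
{
    thread_local CacheSketch cache;
    return cache;
}

// Record one allocation on the calling thread's cache and return its running total.
inline int RecordAllocation()
{
    return ++LocalCache().allocations;
}

// Record two allocations on a brand-new thread and return that thread's total.
inline int CountOnNewThread()
{
    int total = 0;
    std::thread worker([&total] {
        RecordAllocation();
        total = RecordAllocation();
    });
    worker.join();
    return total;
}
```

The new thread starts counting from zero regardless of how many allocations the calling thread has recorded, and no lock is involved because the two threads never touch the same `CacheSketch`.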

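central cache

The central cache is also a hash-bucket structure (with one lock per bucket), and its bucket mapping is the same as the thread cache's. The difference is that each bucket holds a SpanList, and the large block managed by each span in a bucket has been cut, according to the mapping, into small objects that hang on the span's free list.

Allocating memory:

- When the thread cache has no memory left, it requests a batch of objects from the central cache. The batch size follows a slow-start scheme similar to TCP congestion control. The central cache has a hash-mapped SpanList whose spans supply the objects given to the thread cache; this step requires a lock, and a per-bucket lock is used to keep it as efficient as possible.

- Once every span in the mapped SpanList has run out of memory, the central cache requests a new span from the page cache. It cuts the memory managed by that span into blocks of the required size, links them into a free list, and then hands objects from the span to the thread cache.

- Each span held by the central cache records in use_count how many objects it has handed out; every object given to a thread cache increments use_count.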
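The slow-start batch sizing mentioned above can be traced with a small sketch. It mirrors the logic of `NumMoveSize` and `FetchFromCentralCache` from the full listing; the names `BatchLimit` and `NextBatch` are illustrative, and `maxSize` plays the role of the per-bucket `MaxSize()` counter, which starts at 1.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Upper bound on one batch: many small objects, few large ones, clamped to [2, 512].
inline std::size_t BatchLimit(std::size_t size)
{
    assert(size > 0);
    const std::size_t maxBytes = 256 * 1024;
    return std::min<std::size_t>(512, std::max<std::size_t>(2, maxBytes / size));
}

// Returns the batch size for this fetch and grows `maxSize` for the next one.
inline std::size_t NextBatch(std::size_t& maxSize, std::size_t size)
{
    const std::size_t batch = std::min(maxSize, BatchLimit(size));
    if (batch == maxSize)
    {
        maxSize += 1; // still below the limit: ask for one more next time
    }
    return batch;
}
```

For 8-byte objects the successive fetches ask for 1, 2, 3, ... objects until the limit of 512 is reached. For 256 KB objects the limit is 2, so the batch size settles at 2 after the first fetch.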

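Freeing memory:

- When a thread cache free list grows too long, or the thread is destroyed, memory is released back to the central cache, and use_count is decremented for each object returned. When use_count reaches 0, every object has come back to the span, so the span is released to the page cache, which merges it with adjacent free pages.

Definitions of Span and SpanList, which manage large memory in units of pages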

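struct Span // Manages a run of contiguous pages

{

PAGE_ID _pageId = 0; // Page number of the first page

size_t n = 0; // Number of pages

Span* _next = nullptr; // Doubly linked list

Span* _prev = nullptr; // Doubly linked list

size_t _objSize = 0; // Size of the small objects cut from this span

size_t _useCount = 0; // Number of small blocks currently handed out to thread caches

void* _freeList = nullptr; // Free list of the small blocks cut from this span

bool _isUse = false; // Whether the span is in use

};

class SpanList // Doubly linked list with a sentinel head node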

{

public:

SpanList()

{

_head = new Span;

_head->_next = _head;

_head->_prev = _head;

}

Span* Begin()

{

return _head->_next;

}

Span* End()

{

return _head;

}

bool Empty()

{

//cout << "heool spanlist empty" << endl;

return _head->_next == _head;

}

void PushFront(Span* span)

{

//cout << "hello common pushfront" << endl;

Insert(Begin(), span);

}

Span* PopFront()

{

//cout << "hello commom popfront" << endl;

Span* front = _head->_next;

Erase(front);

return front;

}

void Insert(Span* pos, Span* newSpan)

{

//cout << "hello commom insert" << endl;

assert(pos);

assert(newSpan);

Span* prev = pos->_prev;

prev->_next = newSpan;

newSpan->_prev = prev;

newSpan->_next = pos;

pos->_prev = newSpan;

}

void Erase(Span* pos)

{

assert(pos);

assert(pos != _head);

Span* prev = pos->_prev;

Span* next = pos->_next;

prev->_next = next;

next->_prev = prev;

}

private:

Span* _head;

public:

std::mutex _mtx; // Per-bucket lock

};

Overall design of the central cache

#pragma once

#include "Common.h"

// Singleton

class CentralCache

{

public:

static CentralCache* GetInstance()

{

return &_sInst;

}

// Get a non-empty span

Span* GetOneSpan(SpanList& list, size_t byte_size);

// Fetch a number of objects from the central cache for a thread cache

size_t FetchRangeObj(void*& start, void*& end, size_t batchNum, size_t size);

// Release a list of objects back to their spans

void ReleaseListToSpans(void* start, size_t byte_size);

private:

SpanList _spanLists[NFREELISTS]; // Same bucket index as in ThreadCache

private:

CentralCache() // Private constructor: no one else can create an instance

{}

CentralCache(const CentralCache&) = delete;

static CentralCache _sInst;

};

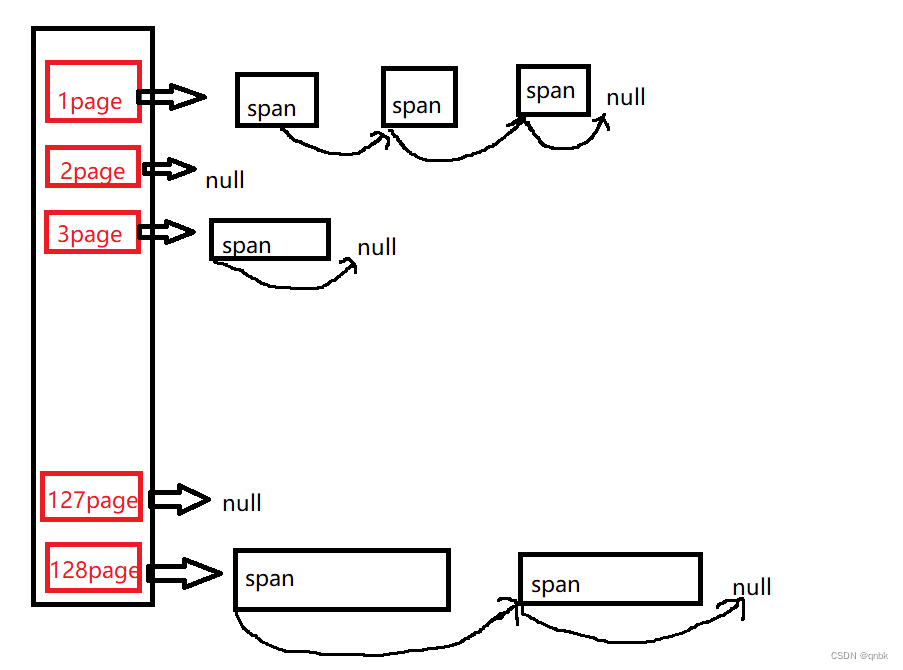

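page cache

Allocating memory:

- When the central cache requests memory from the page cache, the page cache first checks whether the bucket for that page count holds a span. If not, it searches the buckets for larger page counts, and if it finds a span there, it splits that span in two. For example, for a 4-page request where the 4-page bucket is empty, the search moves on to larger buckets; if a span is found in the 10-page bucket, it is split into a 4-page span and a 6-page span.

- If no suitable span is found even in _spanList[128], the page cache requests a 128-page span from the system with mmap, brk, VirtualAlloc or similar, hangs it on the corresponding list, and then repeats the process in the first step.

- Note that although the central cache and the page cache are both built on hash buckets of SpanLists, they differ fundamentally. The central cache's buckets use the same size-alignment mapping as the thread cache, and the memory in each of its spans has been cut into small blocks linked into a free list. The page cache's buckets are indexed by page count: every span in bucket i is i pages long.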
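The 10-page example above comes down to a little page-number arithmetic. A minimal sketch, assuming a stripped-down span that records only its first page number and its page count (`SpanSketch` and `SplitFront` are illustrative names, not part of the original code):

```cpp
#include <cassert>
#include <cstddef>

// Stripped-down span: just the page range it covers.
struct SpanSketch
{
    std::size_t pageId = 0; // first page number
    std::size_t n = 0;      // number of pages
};

// Cut the first k pages off `big`; `big` keeps the remaining pages.
inline SpanSketch SplitFront(SpanSketch& big, std::size_t k)
{
    assert(k > 0 && k < big.n);
    SpanSketch head;
    head.pageId = big.pageId;
    head.n = k;
    big.pageId += k; // the remainder now starts k pages later
    big.n -= k;
    return head;
}
```

Splitting a 10-page span that starts at page 100 with k = 4 yields a 4-page span at page 100, which is returned to the caller, and leaves a 6-page span at page 104, which would be hung on bucket 6.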

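Freeing memory:

- When the central cache releases a span back, the page cache looks up the spans at the adjacent page ids before and after it and checks whether they are free and can be merged. After a merge it keeps searching in the same direction. In this way small spans are coalesced into larger ones, which reduces memory fragmentation.

- When a span's _useCount in the central cache reaches 0, all the small blocks cut from it for thread caches have come back. The central cache then returns the span to the page cache, which uses page numbers to check whether the adjacent pages are free and, if so, merges them into a larger span.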
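The merge step can be sketched with free spans kept in an ordered map keyed by first page number. This is a simplification chosen for illustration, not the structure used in the full listing (which maps page ids to `Span*` and checks `_isUse`); it also leaves out the 128-page cap on merged spans. `FreeSpans` and `ReleaseAndMerge` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <iterator>
#include <map>

// Free spans keyed by first page number; the value is the page count.
using FreeSpans = std::map<std::size_t, std::size_t>;

// Insert the span [pageId, pageId + n) and merge it with free neighbours.
// Returns the first page of the merged span.
inline std::size_t ReleaseAndMerge(FreeSpans& freeSpans, std::size_t pageId, std::size_t n)
{
    // Merge backward: a free span that ends exactly where this one begins.
    auto next = freeSpans.lower_bound(pageId);
    if (next != freeSpans.begin())
    {
        auto prev = std::prev(next);
        if (prev->first + prev->second == pageId)
        {
            pageId = prev->first;
            n += prev->second;
            freeSpans.erase(prev);
        }
    }
    // Merge forward: a free span that begins exactly where this one ends.
    auto after = freeSpans.find(pageId + n);
    if (after != freeSpans.end())
    {
        n += after->second;
        freeSpans.erase(after);
    }
    freeSpans[pageId] = n;
    return pageId;
}
```

One step in each direction is enough here because every span already in the map has itself been merged with its neighbours on insertion. Releasing pages [12, 14) between free spans [10, 12) and [14, 16) leaves a single 6-page span starting at page 10.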

Overall design

#pragma once

#include "Common.h"

#include "ObjectPool.h"

class PageCache

{

public:

static PageCache* GetInstance()

{

//cout << "hello Page cache getinstance" << endl;

return &_sInst;

}

Span* MapObjectToSpan(void* obj); // Map an object to the span that contains it

Span* NewSpan(size_t k); // Get a span of k pages

void ReleaseSpanToPageCache(Span* span); // Release a free span and merge it with adjacent spans

std::mutex _pageMtx;

private:

SpanList _spanList[NPAGES];

ObjectPool<Span> spanPool;

std::unordered_map<PAGE_ID,Span*> _idSpanMap; // Mapping from page number to span

PageCache() {}

PageCache(const PageCache&) = delete;

static PageCache _sInst;

};

Full implementation

ObjectPool.h

#pragma once


#include <iostream>

#include <vector>

#include <time.h>

#include "Common.h"

using std::cout;

using std::endl;

//定长内存池

//template <size_t N>

//class ObjectPool

//{};

/*

#ifdef _WIN32

#include <windows.h>

#else

#endif

inline static void* SystemAlloc(size_t kpage)//直接去堆上按页申请内存

{

#ifdef _WIN32

void* ptr = VirtualAlloc(0, kpage << 13, MEM_COMMIT | MEM_RESERVE,

PAGE_READWRITE);

#else

// linux下brk mmap等

#endif

if (ptr == nullptr)

throw std::bad_alloc();

return ptr;

}

*/

template <class T>

class ObjectPool

{

public:

T* New()

{

T* obj = nullptr;

if (_freeList)

{

//优先把还回来的内存块再次重复利用

void* next = (*(void**)_freeList);

obj = (T*)_freeList;

_freeList = next;

}

else

{

//剩余内存不够一个对象大小时,重新开大块空间

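if (remainBytes < (sizeof(T) < sizeof(void*) ? sizeof(void*) : sizeof(T))) // Compare against the slot size, which is at least one pointer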

{

remainBytes = 128 * 1024;

//_memory = (char*)malloc(remainBytes);

_memory = (char*)SystemAlloc(remainBytes >> 13);

if (_memory == nullptr)

{

throw std::bad_alloc();

}

}

obj = (T*)_memory;

size_t objSize = sizeof(T) < sizeof(void*) ? sizeof(void*) : sizeof(T);

_memory += objSize;

remainBytes -= objSize;

}

//定位new,显示调用T的构造函数初始化,对已有的空间初始化

new(obj)T;

return obj;

}

void Delete(T* obj)

{

//还回来

//显示调用析构函数清理对象

obj->~T();

if (_freeList == nullptr)

{

_freeList = obj;

//*(int*)obj = nullptr;//前四个字节用来保存下一个内存的地址 把obj强转成int* 再解引用->int 获得此地址 64位下跑不了

*(void**)obj = nullptr;//64位下解引用是void *,*(int**)也可以

}

else

{

//头插

*(void**)obj = _freeList;

_freeList = obj;

}

}

private:

char* _memory = nullptr;//指向大块内存,char是一个字节,好切分内存

size_t remainBytes = 0;//大块内存中剩余数

void* _freeList = nullptr;//管理换回来的内存(链表)的头指针

};

Common.h

#pragma once

//公共文件

#include <iostream>

#include <vector>

#include <time.h>

#include <assert.h>

#include <thread>

#include <mutex>

#include <algorithm>

#ifdef _WIN32

#include <windows.h>

#endif

#include <unordered_map>

#include <map>

using std::cout;

using std::endl;

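static const size_t MAX_BYTES = 256 * 1024; // 256 KB: largest size served by the thread cache

static const size_t NFREELISTS = 208; // Total number of buckets

static const size_t NPAGES = 129; // Number of page-cache buckets (indices 1 to 128 are used)

static const size_t PAGE_SHIFT = 13; // One page is 2^13 bytes = 8 KB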

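#ifdef _WIN64

typedef unsigned long long PAGE_ID;

#elif _WIN32

typedef size_t PAGE_ID;

#else

// Linux and other POSIX systems

#include <stdint.h>

#include <sys/mman.h>

typedef uintptr_t PAGE_ID;

#endif

// Request kpage pages directly from the operating system
inline static void* SystemAlloc(size_t kpage)

{

#ifdef _WIN32

void* ptr = VirtualAlloc(0, kpage << 13, MEM_COMMIT | MEM_RESERVE, PAGE_READWRITE);

#else

// Anonymous private mapping via mmap

void* ptr = mmap(nullptr, kpage << 13, PROT_READ | PROT_WRITE, MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);

if (ptr == MAP_FAILED)

ptr = nullptr;

#endif

if (ptr == nullptr)

throw std::bad_alloc();

return ptr;

}

inline static void SystemFree(void* ptr)

{

#ifdef _WIN32

VirtualFree(ptr, 0, MEM_RELEASE);

#else

// Not implemented: munmap needs the mapping length, which this signature does not carry

#endif

}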

static void*& NextObj(void* obj)//取对象的头4/8字节

{

return *(void**)obj;

}

class FreeList //管理切分好的小对象的自由链表

{

public:

void Push(void* obj)//插入

{

//头插

//*(void**)obj = _freeList;

NextObj(obj) = _freeList;

_freeList = obj;

_size++;

}

void PushRange(void* start, void* end, size_t n)

{

//cout << "hello common pushrange" << endl;

NextObj(end) = _freeList;

_freeList = start;

/*

//测试验证+条件断点

int i = 0;

void* cur = start;

while (cur)

{

i++;

cur = NextObj(cur);

}

if (n != i)

{

//int x = 0;

cout << "不匹配" << endl;

}

*/

_size += n;

}

void PopRange(void*& start, void*& end, size_t n)

{

assert(n <= _size); // Cannot pop more objects than the list holds

start = _freeList;

end = start;

for (size_t i = 0; i < n - 1; i++)

{

end = NextObj(end);

}

_freeList = NextObj(end);

NextObj(end) = nullptr;

_size -= n;

}

void* Pop()//弹出对象

{

//头删

assert(_freeList);

void* obj = _freeList;

_freeList = NextObj(obj);

_size--;

return obj;

}

bool Empty()

{

return _freeList == nullptr;

}

size_t& MaxSize()

{

return _maxSize;

}

size_t Size()

{

return _size;

}

private:

void* _freeList = nullptr;

size_t _maxSize = 1;//

size_t _size = 0;//个数

};

class SizeClass//计算对象大小的对齐映射规则

{

public:

// 整体控制在最多10%左右的内碎片浪费

// [1,128] 8byte对齐 freelist[0,16)

// [128+1,1024] 16byte对齐 freelist[16,72)

// [1024+1,8*1024] 128byte对齐 freelist[72,128)

// [8*1024+1,64*1024] 1024byte对齐 freelist[128,184)

// [64*1024+1,256*1024] 8*1024byte对齐 freelist[184,208)

static inline size_t _RoundUp(size_t bytes, size_t alignNum)//计算对齐数

{

//size_t alignSize ;//对齐

//if (size % 8 != 0)

//{

// alignSize = (size / alignNum + 1) * alignNum;

//}

//else

//{

// alignSize = size;

//}

//return alignSize;

return ((bytes + alignNum - 1) & ~(alignNum - 1));

}

static inline size_t RoundUp(size_t size)//计算对齐数

{

if (size <= 128)

{

return _RoundUp(size, 8);

}

else if (size <= 1024)

{

return _RoundUp(size, 16);

}

else if (size <= 8 * 1024)

{

return _RoundUp(size, 128);

}

else if (size <= 64 * 1024)

{

return _RoundUp(size, 1024);

}

else if (size <= 256 * 1024)

{

return _RoundUp(size, 8 * 1024);

}

else

{

return _RoundUp(size, 1 << PAGE_SHIFT);

//assert(false);

//return -1;

}

}

static inline size_t _Index(size_t bytes, size_t alignNum)

{

/*if (bytes % alignNum == 0)

{

return bytes / alignNum - 1;

}

else

{

return bytes / alignNum;

}*/

return ((bytes + (1 << alignNum) - 1) >> alignNum) - 1;

}

// 计算映射的哪一个自由链表桶

static inline size_t Index(size_t bytes)

{

assert(bytes <= MAX_BYTES);

// 每个区间有多少个链

static int group_array[4] = { 16, 56, 56, 56 };

if (bytes <= 128) {

return _Index(bytes, 3);//8 2^3

}

else if (bytes <= 1024) {

return _Index(bytes - 128, 4) + group_array[0];//把前面128减掉,再加上前一个桶的数量

}

else if (bytes <= 8 * 1024) {

return _Index(bytes - 1024, 7) + group_array[1] + group_array[0];

}

else if (bytes <= 64 * 1024) {

return _Index(bytes - 8 * 1024, 10) + group_array[2] + group_array[1] + group_array[0];

}

else if (bytes <= 256 * 1024) {

return _Index(bytes - 64 * 1024, 13) + group_array[3] + group_array[2] + group_array[1] + group_array[0];

}

else {

assert(false);

}

return -1;

}

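static size_t NumMoveSize(size_t size) // How many objects a thread cache fetches from the central cache at a time

{

assert(size > 0);

// [2, 512]: upper bound (for slow start) on the number of objects moved in one batch

// Small objects get a high batch limit

// Large objects get a low batch limit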

int num = MAX_BYTES / size;

if (num < 2)

num = 2;

if (num > 512)

num = 512;

return num;

}

static size_t NumMovePage(size_t size)// 计算一次向系统获取几个页

{

// 单个对象 8byte

// ...

// 单个对象 256KB

size_t num = NumMoveSize(size);

size_t npage = num * size;

npage >>= PAGE_SHIFT;

if (npage == 0)

npage = 1;

return npage;

}

};

struct Span//管理多个连续大块内存跨度结构

{

PAGE_ID _pageId = 0;//大块内存起始页号

size_t n = 0;//页的数量

Span* _next = nullptr;//双向链表

Span* _prev = nullptr;//双向链表

size_t _objSize = 0;//切好的小对象的大小

size_t _useCount = 0;//切好的小块内存,被分给thread cache计数

void* _freeList = nullptr;//切好的小块内存自由链表

bool _isUse = false;//是否被使用

};

class SpanList//带头双向链表

{

public:

SpanList()

{

_head = new Span;

_head->_next = _head;

_head->_prev = _head;

}

Span* Begin()

{

return _head->_next;

}

Span* End()

{

return _head;

}

bool Empty()

{

//cout << "heool spanlist empty" << endl;

return _head->_next == _head;

}

void PushFront(Span* span)

{

//cout << "hello common pushfront" << endl;

Insert(Begin(), span);

}

Span* PopFront()

{

//cout << "hello commom popfront" << endl;

Span* front = _head->_next;

Erase(front);

return front;

}

void Insert(Span* pos, Span* newSpan)

{

//cout << "hello commom insert" << endl;

assert(pos);

assert(newSpan);

Span* prev = pos->_prev;

prev->_next = newSpan;

newSpan->_prev = prev;

newSpan->_next = pos;

pos->_prev = newSpan;

}

void Erase(Span* pos)

{

assert(pos);

//assert(pos != _head);

//1、条件断点

//2、查看栈帧

/*

if (pos = _head)

{

int x = 0;

}

*/

Span* prev = pos->_prev;

Span* next = pos->_next;

prev->_next = next;

next->_prev = prev;

}

private:

Span* _head;

public:

std::mutex _mtx;//桶锁

};

ConcurrentAlloc.h

#pragma once

#include "Common.h"

#include "ThreadCache.h"

#include "PageCache.h"

#include "ObjectPool.h"

static void* ConcurrentAlloc(size_t size)//线程调用申请内存

{

//通过TLS 每个线程无锁的获取自己的专属ThreadCache对象

if (size > MAX_BYTES)

{

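size_t alignSize = SizeClass::RoundUp(size); // Round up to a whole number of pages

size_t kpage = alignSize >> PAGE_SHIFT; // Number of pages

PageCache::GetInstance()->_pageMtx.lock();

Span* span = PageCache::GetInstance()->NewSpan(kpage);

span->_objSize = size; // Recorded so that ConcurrentFree can recognise a large allocation

span->_isUse = true; // Keeps the page cache from merging this span while it is in use

PageCache::GetInstance()->_pageMtx.unlock();

void* ptr = (void*)(span->_pageId << PAGE_SHIFT);

return ptr;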

}

else

{

if (pTLSThreadCache == nullptr)

{

//pTLSThreadCache = new ThreadCache;

// tcPool is shared by all threads, so creating a ThreadCache from it is guarded by a lock

static ObjectPool<ThreadCache> tcPool;

static std::mutex tcMtx;

std::lock_guard<std::mutex> lock(tcMtx);

pTLSThreadCache = tcPool.New();

}

return pTLSThreadCache->Allocate(size);

}

}

static void ConcurrentFree(void* ptr)

{

//size:不给大小不知道要还给桶的哪个位置

Span* span = PageCache::GetInstance()->MapObjectToSpan(ptr);

size_t size = span->_objSize;//对齐以后的大小

if (size > MAX_BYTES)

{

PageCache::GetInstance()->_pageMtx.lock();

PageCache::GetInstance()->ReleaseSpanToPageCache(span);

PageCache::GetInstance()->_pageMtx.unlock();

}

else

{

assert(pTLSThreadCache);

pTLSThreadCache->Deallocate(ptr, size);

}

}

ThreadCache.h

#pragma once

#include "Common.h"

class ThreadCache

{

public:

//申请和释放对象

void* Allocate(size_t size);

void Deallocate(void* ptr, size_t size);

//从中心缓存获取对象

void* FetchFromCentralCache(size_t index, size_t size);

void ListTooLong(FreeList& list, size_t size);//释放对象时,链表过长 ,回收内存到centrral cache

private:

FreeList _freeLists[NFREELISTS];//哈希表,每个位置挂的都是_freeList

};

// TLS:在线程内全局可访问,但不能被其他线程访问到->保持数据的独立性,不需要锁控制,减少成本

static _declspec(thread) ThreadCache * pTLSThreadCache = nullptr;

ThreadCache.cpp

#include "ThreadCache.h"

#include "CentralCache.h"

#include "Common.h"

void* ThreadCache::FetchFromCentralCache(size_t index, size_t size)

{

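// Slow-start feedback algorithm

// Start by asking the central cache for only a few objects, since they may go unused; as long as objects of this size keep being requested, batchNum grows until it reaches the limit

// The larger size is, the fewer objects are fetched at a time; the smaller size is, the more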

size_t batchNum = min(_freeLists[index].MaxSize(), SizeClass::NumMoveSize(size));

if (_freeLists[index].MaxSize() == batchNum)

{

_freeLists[index].MaxSize() += 1;

}

void* start = nullptr;

void* end = nullptr;

size_t actualNum = CentralCache::GetInstance()->FetchRangeObj(start, end, batchNum,size);

assert(actualNum > 0);

if (actualNum == 1)

{

assert(start == end);

return start;

}

else

{

_freeLists[index].PushRange(NextObj(start), end, actualNum - 1);

return start;

}

return nullptr;

}

void* ThreadCache::Allocate(size_t size)//申请对象

{

//

assert(size <= MAX_BYTES);

size_t alignSize = SizeClass::RoundUp(size);//获取对其数

size_t index = SizeClass::Index(size);//在哪一个桶-》获取桶的位置

if (!_freeLists[index].Empty())

{

return _freeLists[index].Pop();

}

else

{

return FetchFromCentralCache(index,alignSize);//从中心缓存获取对象

}

}

void ThreadCache::Deallocate(void* ptr, size_t size)//释放对象

{

assert(size <= MAX_BYTES);

assert(ptr);

//找出自由链表映射的桶,对象插入

size_t index = SizeClass::Index(size);//属于哪个桶

_freeLists[index].Push(ptr);

// Once the list is at least as long as one batch, return a batch to the central cache

if (_freeLists[index].Size() >= _freeLists[index].MaxSize())

{

ListTooLong(_freeLists[index], size);

}

}

void ThreadCache::ListTooLong(FreeList& list, size_t size)

{

void* start = nullptr;

void* end = nullptr;

list.PopRange(start,end,list.MaxSize());//取出内存

//把内存还给下一层:central cache

CentralCache::GetInstance()->ReleaseListToSpans(start,size);

}

CentralCache.cpp

#include "CentralCache.h"

#include "PageCache.h"

CentralCache CentralCache::_sInst;

Span* CentralCache::GetOneSpan(SpanList& list, size_t size)

{

//cout << "hello central getonspan" << endl;

// 从SpanLists或者Page cache 获取一个非空的span

//查看当前spanlist中是否还有非空的/还未分配对象的

Span* it = list.Begin();

while (it != list.End())

{

if (it->_freeList != nullptr)

{

//挂着对象

return it;

}

else

{

it = it->_next;

}

}

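// Release the central cache bucket lock first, so that other threads returning objects to this bucket are not blocked

list._mtx.unlock();

// No span with free objects: ask the page cache

PageCache::GetInstance()->_pageMtx.lock();

Span* span = PageCache::GetInstance()->NewSpan(SizeClass::NumMovePage(size));

span->_isUse = true;

span->_objSize = size;

PageCache::GetInstance()->_pageMtx.unlock();

// Cut the new span into blocks; no lock is needed because no other thread can reach this span yet

// Start address from page number: pageId << PAGE_SHIFT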

char* start = (char*)(span->_pageId << PAGE_SHIFT);

size_t bytes = span->n << PAGE_SHIFT;//计算span的大块起始地址和大块内存的大小(字节数)

char* end = start + bytes;

//把大块内存切成自由链表连接起来

//先切一块下来去做头,方便尾插

span->_freeList = start;

start += size;

int i = 1;

void* tail = span->_freeList;

while (start < end)

{

i++;

NextObj(tail) = start;

tail = NextObj(tail);//tail = start

start += size;

}

NextObj(tail) = nullptr;//尾插最后一位需要置空

list._mtx.lock();//切好span以后需要把span挂到桶里去再加锁

list.PushFront(span);

return span;

}

size_t CentralCache::FetchRangeObj(void*& start, void*& end, size_t batchNum, size_t size)// 从中心缓存获取一定数量的对象给thread cache

{

//cout << "hello hello central getonspan" << endl;

size_t index = SizeClass::Index(size);//先查看是哪个桶的

_spanLists[index]._mtx.lock();

Span* span = GetOneSpan(_spanLists[index], size);//先去找一个非空的span

assert(span);

assert(span->_freeList);

//从span中获取batchNum个对象

//如果不够batchNum,有多少拿多少

start = span->_freeList;

end = start;

size_t i = 0;

size_t actualNum = 1;

while (i < batchNum - 1 && NextObj(end) != nullptr)//end 往后走batchNum -1个

{

end = NextObj(end);

++i;

++actualNum;

}

span->_freeList = NextObj(end);

NextObj(end) = nullptr;

span->_useCount += actualNum;//被使用的个数

_spanLists[index]._mtx.unlock();

return actualNum;

}

void CentralCache::ReleaseListToSpans(void* start, size_t size)

{

size_t index = SizeClass::Index(size);//属于哪一个桶

_spanLists[index]._mtx.lock();

while (start)

{

void* next = NextObj(start);

Span* span = PageCache::GetInstance()->MapObjectToSpan(start);//找出对应的span

NextObj(start) = span->_freeList;

span->_freeList = start;

span->_useCount--;

if (span->_useCount == 0)//说明span切分出去的的所有小内存都回来了,

{//该span可以归还给page cache,page cache再可以去做前后页的合并

_spanLists[index].Erase(span);

span->_freeList = nullptr;

span->_next = nullptr;

span->_prev = nullptr;

//span还给下一层

_spanLists[index]._mtx.unlock();

//释放span给Page cache时,使用page cache锁

//这时把桶锁解掉

PageCache::GetInstance()->_pageMtx.lock();

PageCache::GetInstance()->ReleaseSpanToPageCache(span);

PageCache::GetInstance()->_pageMtx.unlock();

_spanLists[index]._mtx.lock();

}

start = next;

}

_spanLists[index]._mtx.unlock();

}

PageCache.h

#pragma once

#include "Common.h"

#include "ObjectPool.h"

class PageCache

{

public:

static PageCache* GetInstance()

{

//cout << "hello Page cache getinstance" << endl;

return &_sInst;

}

Span* MapObjectToSpan(void* obj);//获取对象到span的映射

Span* NewSpan(size_t k);//获取一个k页的span

void ReleaseSpanToPageCache(Span* span);//释放空闲span,合并相邻的span

std::mutex _pageMtx;

private:

SpanList _spanList[NPAGES];

ObjectPool<Span> spanPool;

std::map<PAGE_ID, Span*> _idSpanMap;//页号跟span的映射

//std::unordered_map<PAGE_ID,Span*> _idSpanMap;//页号跟span的映射

PageCache() {}

PageCache(const PageCache&) = delete;

static PageCache _sInst;

};

PageCache.cpp

#include "PageCache.h"

PageCache PageCache::_sInst;

Span* PageCache::NewSpan(size_t k)//获取k页的span

{

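// e.g. only one 128-page span exists and 2 pages are needed: split it into a 2-page span and a 126-page span, return the 2-page span to the central cache, and hang the 126-page span on its bucket

// When a span's _useCount in the central cache reaches 0, all the small blocks cut for thread caches have been returned,

// so the central cache gives the span back to the page cache, which checks by page number whether adjacent pages are free and merges them into larger spans to reduce fragmentation

assert(k > 0); // Requests above NPAGES - 1 pages are handled by the branch below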

if (k > NPAGES - 1)

{

void* ptr = SystemAlloc(k);//大于最大页数直接找堆要

//Span* span = new Span;

Span* span = spanPool.New();

span->_pageId = (PAGE_ID)ptr >> PAGE_SHIFT;

span->n = k;

_idSpanMap[span->_pageId] = span;

return span;

}

if (!_spanList[k].Empty())//第k个桶里面有没有span

{

Span* KSpan = _spanList[k].PopFront();

//建立id和span的映射关系方便centralcache回收小块内存时查找对应的span

for (PAGE_ID i = 0; i < KSpan->n; i++)

{

_idSpanMap[KSpan->_pageId + i] = KSpan;

}

return KSpan;

}

//第k个桶里是空的,检测后面的桶里有没有span,如果有进行切分

//切分成一个k页的span和一个 n-k 页的span

//k页的span返回给central cache,n-k 页的span挂到第 n-k 号桶中去

for (size_t i = k ; i < NPAGES; i++)

{

if (!_spanList[i].Empty())

{

Span* nSpan = _spanList[i].PopFront();

//Span* KSpan = new Span;

Span* KSpan = spanPool.New();

//在nSpan的头部切下K页

//k页span返回,nSpan再挂到对应映射

KSpan->_pageId = nSpan->_pageId;

KSpan->n = k;//kSpan页数变为k

nSpan->_pageId += k;//nSpan 页号变为 += k

nSpan->n -= k;

_spanList[nSpan->n].PushFront(nSpan);//把剩余的页挂到对应的位置

//存储nSpan的首尾页号跟span映射,方便page cache回收内存时进行合并查找

_idSpanMap[nSpan->_pageId] = nSpan;

_idSpanMap[nSpan->_pageId + nSpan->n - 1] = nSpan;

//建立id和span的映射,方便central cache回收查找对应的span

for (PAGE_ID i = 0; i < KSpan->n; i++)

{

_idSpanMap[KSpan->_pageId + i] = KSpan;

}

return KSpan;

}

}

//没有大页span

//找堆要128页的span

Span* bigSpan = spanPool.New();

//Span* bigSpan = new Span;

void* ptr = SystemAlloc(NPAGES - 1);

bigSpan->_pageId = (PAGE_ID)ptr >> PAGE_SHIFT;

bigSpan->n = NPAGES - 1;

_spanList[bigSpan->n].PushFront(bigSpan);

return NewSpan(k);

}

Span* PageCache::MapObjectToSpan(void* obj)

{

PAGE_ID id = ((PAGE_ID)obj >> PAGE_SHIFT);//找出页号

std::unique_lock<std::mutex> lock(_pageMtx);

auto ret = _idSpanMap.find(id);

if (ret != _idSpanMap.end())

{

return ret->second;//返回span的指针

}

else

{

assert(false);

return nullptr;

}

}

void PageCache::ReleaseSpanToPageCache(Span* span)

{

//对span前后的页进行合并,解决内存碎片问题

if (span->n > NPAGES - 1)

{

//大于128页的直接还给堆

void* ptr = (void*)(span->_pageId << PAGE_SHIFT);

SystemFree(ptr);

//delete span;

spanPool.Delete(span);

return;

}

//向前合并

while (1)

{

PAGE_ID prevId = span->_pageId - 1;

auto ret = _idSpanMap.find(prevId);

if (ret == _idSpanMap.end())

{//前面的页号没有,不合并

break;

}

Span* prevspan = ret->second;

if (prevspan->_isUse == true)

{//前面相邻页的span在使用

break;

}

if (prevspan->n + span->n > NPAGES - 1)

{

//合并数超过128,没办法管理

break;

}

//合并

span->_pageId = prevspan->_pageId;

span->n += prevspan->n;

_spanList[prevspan->n].Erase(prevspan);

//delete prevspan;

spanPool.Delete(prevspan);

}

//向后合并

while (1)

{

PAGE_ID nextId = span->_pageId + span->n;

auto ret = _idSpanMap.find(nextId);

if (ret == _idSpanMap.end())

{

break;

}

Span* nextspan = ret->second;

if (nextspan->_isUse == true)

{

break;

}

if (nextspan->n + span->n > NPAGES - 1)

{

break;

}

span->n += nextspan->n;

_spanList[nextspan->n].Erase(nextspan);

//delete(nextspan);

spanPool.Delete(nextspan);

}

//前后都合并过了

_spanList[span->n].PushFront(span);

span->_isUse = false;

//方便其他把此span合并

_idSpanMap[span->_pageId] = span;

_idSpanMap[span->_pageId + span->n - 1] = span;

}

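Debugging techniques for complex problems

- Conditional breakpoints: usually you can just run the program and use the failure to locate the problem. For an assertion failure, go to the failing location, turn the assertion into an if check, and set a breakpoint inside the if body (a breakpoint cannot be set on an empty statement, so add any line of code inside the if). Alternatively, set an ordinary breakpoint and attach a condition to it; the program stops when the condition holds.

- Viewing the call stack: the call stack window shows the current chain of stack frames (the yellow arrow marks the frame currently executing). Double-clicking another function in the call stack jumps to its frame (the light gray arrow marks the frame you jumped to).

- Breaking into an infinite loop: Debug → Break All stops the program wherever it is currently running.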

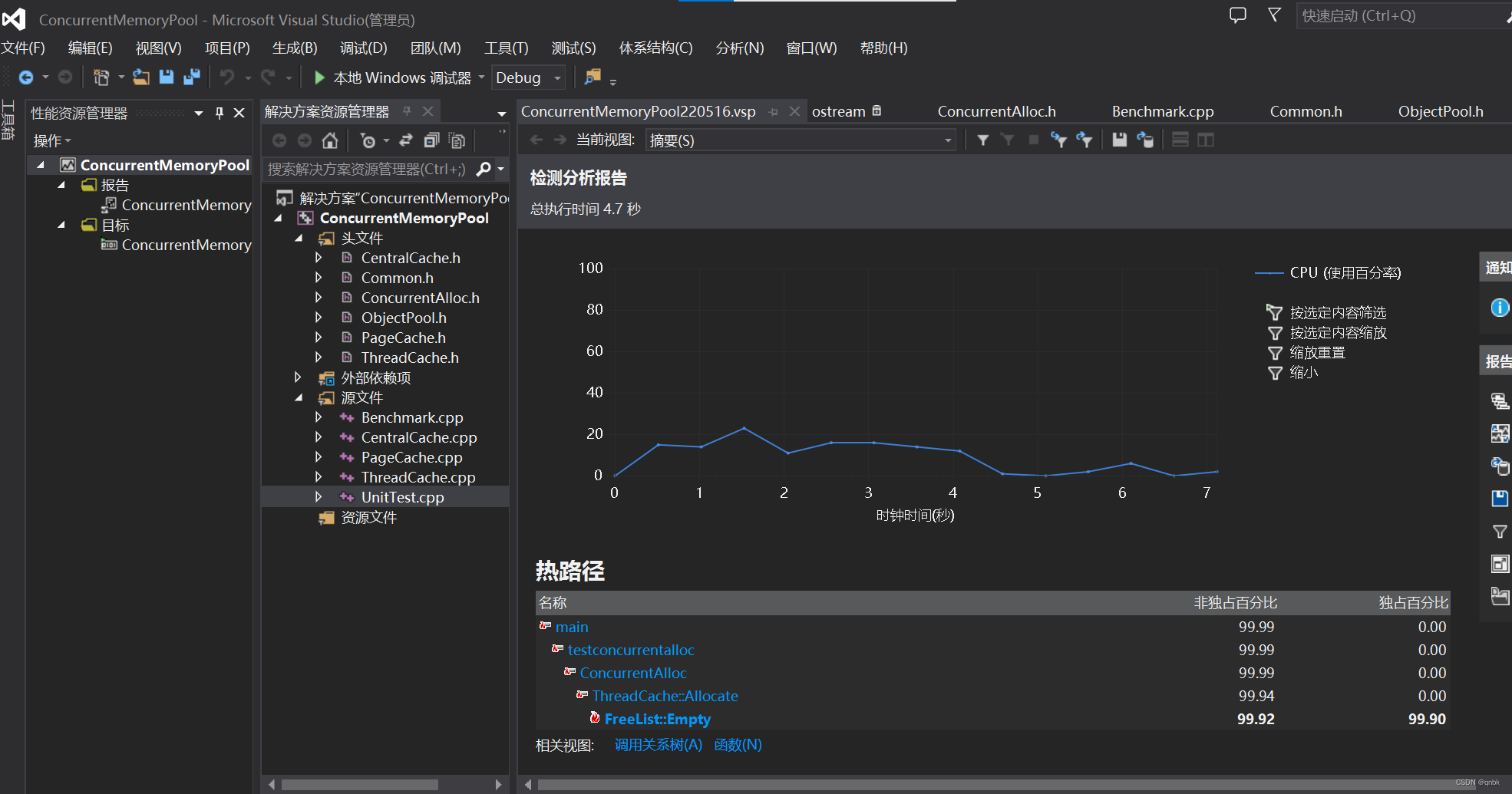

Performance profiling in VS2013

Debug → Performance and Diagnostics → Start → Instrumentation

实现基数树进行优化

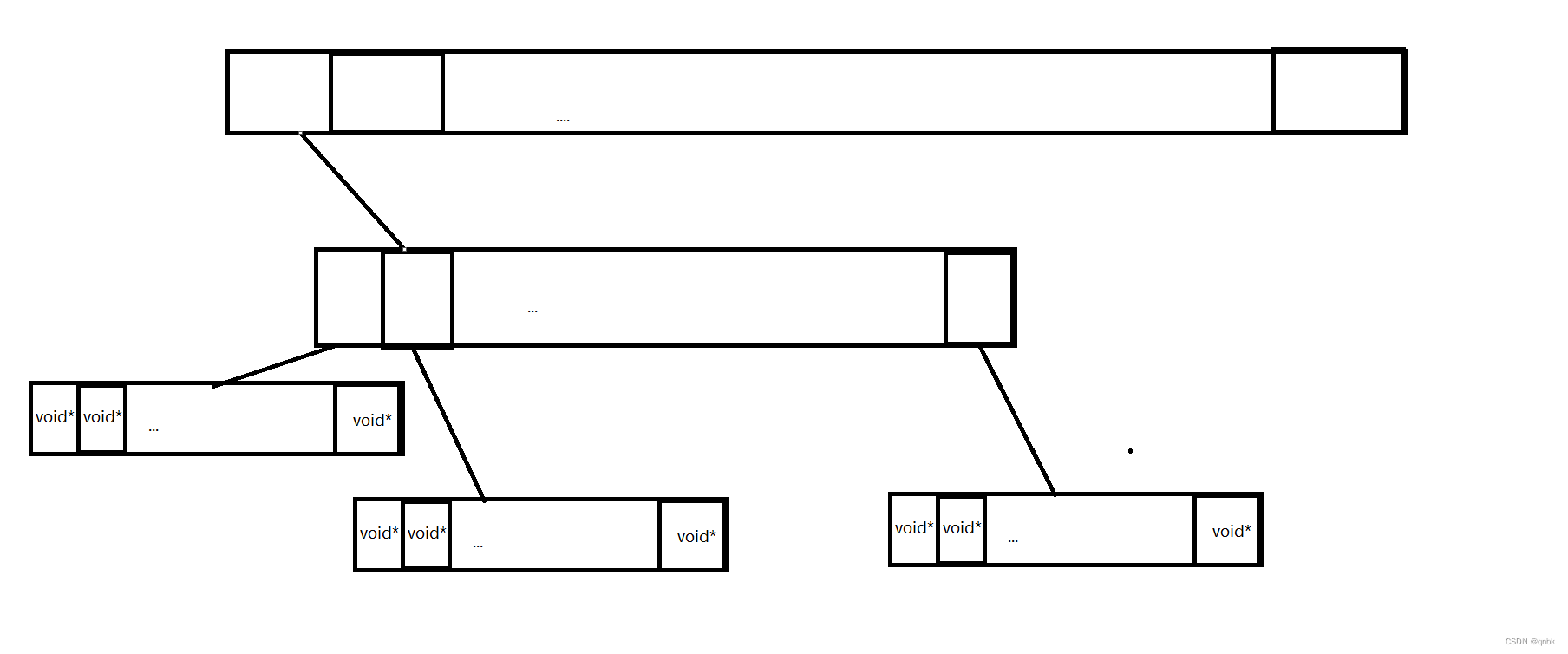

单层基数树

实际采用的就是直接定址法,每一个页号对应span的地址就存储数组中在以该页号为下标的位置
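A quick calculation shows how large such a directly addressed array would be. The sketch assumes 8 KB pages (a shift of 13, as in `PAGE_SHIFT`) and one pointer per possible page number; the helper names are illustrative.

```cpp
#include <cassert>
#include <cstdint>

// Bits needed for a page number when addresses have `addrBits` bits
// and a page is 2^pageShift bytes.
constexpr int PageNumberBits(int addrBits, int pageShift)
{
    return addrBits - pageShift;
}

// Bytes occupied by a flat table with one pointer slot per possible page number.
constexpr std::uint64_t FlatTableBytes(int pageBits, std::uint64_t pointerBytes)
{
    return (std::uint64_t(1) << pageBits) * pointerBytes;
}
```

On a 32-bit platform the page number has 19 bits, so the table holds 2^19 four-byte pointers, which is 2 MB and quite affordable. On a 64-bit platform the same layout would need 2^51 eight-byte slots, far beyond any real memory, which is why a flat table only makes sense for 32-bit address spaces.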

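Two-level radix tree

Again taking a 32-bit platform with 8 KB pages as the example, a page number needs at most 19 bits. A two-level radix tree simply splits those 19 bits into two parts and maps them in two steps.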
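The two-step mapping can be sketched as a pair of index computations. The 5/14 split below matches `ROOT_BITS = 5` in `TCMalloc_PageMap2` with `BITS = 19`; the constant and function names in the sketch are illustrative.

```cpp
#include <cassert>
#include <cstdint>

constexpr int kBits = 19;                    // page-number bits: 32 - 13
constexpr int kRootBits = 5;                 // top bits select one of 32 leaves
constexpr int kLeafBits = kBits - kRootBits; // low 14 bits index inside a leaf

// First step: which leaf the page number falls into.
inline std::uint32_t RootIndex(std::uint32_t pageId)
{
    return pageId >> kLeafBits;
}

// Second step: which slot inside that leaf.
inline std::uint32_t LeafIndex(std::uint32_t pageId)
{
    return pageId & ((1u << kLeafBits) - 1);
}
```

Page number 74565, for example, maps to leaf 4, slot 9029, since 4 × 16384 + 9029 = 74565. The benefit over the flat table is that a leaf only has to be allocated once some page number in its range is actually used.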

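Three-level radix tree

The single-level and two-level radix trees above are both suited to 32-bit platforms; a 64-bit platform needs a three-level radix tree. It works like the two-level tree, except that the bits of the page number are split into three parts and mapped in three steps.

Code implementation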

PageMap.h

#pragma once

#include "Common.h"

#include "ObjectPool.h"

template <int BITS>

class TCMalloc_PageMap1 {

private:

static const int LENGTH = 1 << BITS;

void** array_;

public:

typedef uintptr_t Number;

explicit TCMalloc_PageMap1(void* (*allocator)(size_t)) {

//array_ = reinterpret_cast<void**>((*allocator)(sizeof(void*) << BITS));

size_t size = sizeof(void*) << BITS;

size_t alignSize = SizeClass::_RoundUp(size,1 << PAGE_SHIFT);

array_ = (void**)SystemAlloc(alignSize >> PAGE_SHIFT); // SystemAlloc returns void*, so an explicit cast is required

memset(array_, 0, sizeof(void*) << BITS);

}

// Return the current value for KEY. Returns NULL if not yet set,

// or if k is out of range.

void* get(Number k) const {

if ((k >> BITS) > 0) {

return NULL;

}

return array_[k];

}

// REQUIRES "k" is in range "[0,2^BITS-1]".

// REQUIRES "k" has been ensured before.

//

// Sets the value 'v' for key 'k'.

void set(Number k, void* v) {

array_[k] = v;

}

};

// Two-level radix tree

template <int BITS>

class TCMalloc_PageMap2 {

private:

// Put 32 entries in the root and (2^BITS)/32 entries in each leaf.

static const int ROOT_BITS = 5;

static const int ROOT_LENGTH = 1 << ROOT_BITS;

static const int LEAF_BITS = BITS - ROOT_BITS;

static const int LEAF_LENGTH = 1 << LEAF_BITS;

// Leaf node

struct Leaf {

void* values[LEAF_LENGTH];

};

Leaf* root_[ROOT_LENGTH]; // Pointers to 32 child nodes

void* (*allocator_)(size_t); // Memory allocator

public:

typedef uintptr_t Number;

//explicit TCMalloc_PageMap2(void* (*allocator)(size_t))

explicit TCMalloc_PageMap2() {

//allocator_ = allocator;

memset(root_, 0, sizeof(root_));

}

void* get(Number k) const {

const Number i1 = k >> LEAF_BITS;

const Number i2 = k & (LEAF_LENGTH - 1);

if ((k >> BITS) > 0 || root_[i1] == NULL) {

return NULL;

}

return root_[i1]->values[i2];

}

void set(Number k, void* v) {

const Number i1 = k >> LEAF_BITS;

const Number i2 = k & (LEAF_LENGTH - 1);

assert(i1 < ROOT_LENGTH);

root_[i1]->values[i2] = v;

}

bool Ensure(Number start, size_t n) {

for (Number key = start; key <= start + n - 1;) {

const Number i1 = key >> LEAF_BITS;

// Check for overflow

if (i1 >= ROOT_LENGTH)

return false;

// Make 2nd level node if necessary

if (root_[i1] == NULL) {

//Leaf* leaf = reinterpret_cast<Leaf*>((*allocator_)(sizeof(Leaf)));

//if (leaf == NULL) return false;

static ObjectPool<Leaf> leafpool;

//Leaf* leaf = reinterpret_cast<Leaf*>((*allocator_)

Leaf* leaf = (Leaf*)leafpool.New();

memset(leaf, 0, sizeof(*leaf));

root_[i1] = leaf;

}

// Advance key past whatever is covered by this leaf node

key = ((key >> LEAF_BITS) + 1) << LEAF_BITS;

}

return true;

}

void PreallocateMoreMemory() {

// Allocate enough to keep track of all possible pages

Ensure(0, 1 << BITS);

}

};

// Three-level radix tree

template <int BITS>

class TCMalloc_PageMap3 {

private:

// How many bits should we consume at each interior level

static const int INTERIOR_BITS = (BITS + 2) / 3; // Round-up

static const int INTERIOR_LENGTH = 1 << INTERIOR_BITS;

// How many bits should we consume at leaf level

static const int LEAF_BITS = BITS - 2 * INTERIOR_BITS;

static const int LEAF_LENGTH = 1 << LEAF_BITS;

// Interior node

struct Node {

Node* ptrs[INTERIOR_LENGTH];

};

// Leaf node

struct Leaf {

void* values[LEAF_LENGTH];

};

Node* root_; // Root of radix tree

void* (*allocator_)(size_t); // Memory allocator

Node* NewNode() {

Node* result = reinterpret_cast<Node*>((*allocator_)(sizeof(Node)));

if (result != NULL) {

memset(result, 0, sizeof(*result));

}

return result;

}

public:

typedef uintptr_t Number;

explicit TCMalloc_PageMap3(void* (*allocator)(size_t)) {

allocator_ = allocator;

root_ = NewNode();

}

void* get(Number k) const {

const Number i1 = k >> (LEAF_BITS + INTERIOR_BITS);

const Number i2 = (k >> LEAF_BITS) & (INTERIOR_LENGTH - 1);

const Number i3 = k & (LEAF_LENGTH - 1);

if ((k >> BITS) > 0 ||

root_->ptrs[i1] == NULL || root_->ptrs[i1]->ptrs[i2] == NULL) {

return NULL;

}

return reinterpret_cast<Leaf*>(root_->ptrs[i1]->ptrs[i2])->values[i3];

}

void set(Number k, void* v) {

assert((k >> BITS) == 0);

const Number i1 = k >> (LEAF_BITS + INTERIOR_BITS);

const Number i2 = (k >> LEAF_BITS) & (INTERIOR_LENGTH - 1);

const Number i3 = k & (LEAF_LENGTH - 1);

reinterpret_cast<Leaf*>(root_->ptrs[i1]->ptrs[i2])->values[i3] = v;

}

bool Ensure(Number start, size_t n) {

for (Number key = start; key <= start + n - 1;) {

const Number i1 = key >> (LEAF_BITS + INTERIOR_BITS);

const Number i2 = (key >> LEAF_BITS) & (INTERIOR_LENGTH - 1);

// Check for overflow

if (i1 >= INTERIOR_LENGTH || i2 >= INTERIOR_LENGTH)

return false;

// Make 2nd level node if necessary

if (root_->ptrs[i1] == NULL) {

Node* n = NewNode();

if (n == NULL) return false;

root_->ptrs[i1] = n;

}

// Make leaf node if necessary

if (root_->ptrs[i1]->ptrs[i2] == NULL) {

Leaf* leaf = reinterpret_cast<Leaf*>((*allocator_)

(sizeof(Leaf)));

if (leaf == NULL) return false;

memset(leaf, 0, sizeof(*leaf));

root_->ptrs[i1]->ptrs[i2] = reinterpret_cast<Node*>(leaf);

}

// Advance key past whatever is covered by this leaf node

key = ((key >> LEAF_BITS) + 1) << LEAF_BITS;

}

return true;

}

void PreallocateMoreMemory() {

}

};
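The index arithmetic of the two-level map is easier to follow in isolation. The following is a minimal sketch, not the TCMalloc class itself: `TinyPageMap2`, `RootIndex` and `LeafIndex` are hypothetical names, and leaves are created on demand with `std::make_unique` rather than through `Ensure` and an `ObjectPool`. It only illustrates how the upper bits of a key select a leaf and the lower bits select a slot inside it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <memory>

// Hypothetical, simplified two-level page map (illustration only).
template <int BITS>
class TinyPageMap2 {
    static constexpr int kRootBits = 5;                // 32 root entries, as above
    static constexpr int kLeafBits = BITS - kRootBits; // remaining bits index a leaf
    static constexpr std::size_t kRootLength = std::size_t{1} << kRootBits;
    static constexpr std::size_t kLeafLength = std::size_t{1} << kLeafBits;

    struct Leaf {
        void* values[kLeafLength] = {}; // all slots start as nullptr
    };
    std::unique_ptr<Leaf> root_[kRootLength];

public:
    // Upper bits choose the leaf, lower bits choose the slot inside it.
    static std::size_t RootIndex(std::uintptr_t k) { return k >> kLeafBits; }
    static std::size_t LeafIndex(std::uintptr_t k) { return k & (kLeafLength - 1); }

    // Returns nullptr for out-of-range keys and for keys never set.
    void* get(std::uintptr_t k) const {
        if ((k >> BITS) > 0 || !root_[RootIndex(k)]) return nullptr;
        return root_[RootIndex(k)]->values[LeafIndex(k)];
    }

    // Creates the leaf if needed, then stores v. Returns false if k is out of range.
    bool set(std::uintptr_t k, void* v) {
        if ((k >> BITS) > 0) return false;
        auto& leaf = root_[RootIndex(k)];
        if (!leaf) leaf = std::make_unique<Leaf>();
        leaf->values[LeafIndex(k)] = v;
        return true;
    }
};
```

With `BITS = 10`, key 71 (binary `10 00111`) lands in root entry 2, slot 7. Unlike this sketch, the classes above expect `Ensure` to have created the leaf before `set` is called, so that `set` never allocates.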

PageCache.h

#pragma once

#include "Common.h"

#include "ObjectPool.h"

template <int BITS>

class TCMalloc_PageMap1 {

private:

static const int LENGTH = 1 << BITS;

void** array_;

public:

typedef uintptr_t Number;

explicit TCMalloc_PageMap1(void* (*allocator)(size_t)) {

//array_ = reinterpret_cast<void**>((*allocator)(sizeof(void*) << BITS));

size_t size = sizeof(void*) << BITS;

size_t alignSize = SizeClass::_RoundUp(size,1 << PAGE_SHIFT);

array_ = (void**)SystemAlloc(alignSize >> PAGE_SHIFT);

memset(array_, 0, sizeof(void*) << BITS);

}

// Return the current value for KEY. Returns NULL if not yet set,

// or if k is out of range.

void* get(Number k) const {

if ((k >> BITS) > 0) {

return NULL;

}

return array_[k];

}

// REQUIRES "k" is in range "[0,2^BITS-1]".

// REQUIRES "k" has been ensured before.

//

// Sets the value 'v' for key 'k'.

void set(Number k, void* v) {

array_[k] = v;

}

};


PageCache.cpp

#include "PageCache.h"

PageCache PageCache::_sInst;

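Span* PageCache::NewSpan(size_t k) // Get a span of k pages

{

// Example: if only a 128-page span is available and 2 pages are requested, split it into a 2-page span

// and a 126-page span; return the 2-page span to the central cache and hang the 126-page span on its bucket.

// When a span in the central cache has usecount == 0, every small block cut from it for the thread caches has come back,

// so the central cache returns the span to the page cache. The page cache then uses page numbers to check whether the adjacent pages are free and, if so, merges them into a larger span to reduce fragmentation.

// Requests larger than NPAGES - 1 pages are handled by the branch below, so only k > 0 is asserted here.

assert(k > 0);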

if (k > NPAGES - 1)

{

void* ptr = SystemAlloc(k); // More pages than the largest bucket holds: ask the heap directly

//Span* span = new Span;

Span* span = spanPool.New();

span->_pageId = (PAGE_ID)ptr >> PAGE_SHIFT;

span->n = k;

//_idSpanMap[span->_pageId] = span;

_idSpanMap.set(span->_pageId,span);

return span;

}

if (!_spanList[k].Empty()) // Does bucket k already hold a span?

{

Span* KSpan = _spanList[k].PopFront();

// Map every page id to the span so the central cache can find the owning span when small blocks are returned

for (PAGE_ID i = 0; i < KSpan->n; i++)

{

//_idSpanMap[KSpan->_pageId + i] = KSpan;

_idSpanMap.set(KSpan->_pageId + i, KSpan);

}

return KSpan;

}

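// Bucket k is empty, so look for a span in the larger buckets and split it

// into one span of k pages and one span of n-k pages.

// The k-page span goes back to the central cache; the (n-k)-page span is hung on bucket n-k.

for (size_t i = k + 1; i < NPAGES; i++)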

{

if (!_spanList[i].Empty())

{

Span* nSpan = _spanList[i].PopFront();

//Span* KSpan = new Span;

Span* KSpan = spanPool.New();

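// Cut k pages off the front of nSpan.

// The k-page span is returned; nSpan is re-hung on the bucket matching its new size.

KSpan->_pageId = nSpan->_pageId;

KSpan->n = k; // KSpan now holds k pages

nSpan->_pageId += k; // nSpan starts k pages later

nSpan->n -= k;

_spanList[nSpan->n].PushFront(nSpan); // Hang the remaining pages on the matching bucket

// Map nSpan's first and last page numbers to nSpan so the page cache can find it when merging on release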

//_idSpanMap[nSpan->_pageId] = nSpan;

//_idSpanMap[nSpan->_pageId + nSpan->n - 1] = nSpan

_idSpanMap.set(nSpan->_pageId, nSpan);

_idSpanMap.set(nSpan->_pageId + nSpan->n - 1, nSpan);

// Map every page id to KSpan so the central cache can find the owning span on release

for (PAGE_ID j = 0; j < KSpan->n; j++)

{

//_idSpanMap[KSpan->_pageId + j] = KSpan;

_idSpanMap.set(KSpan->_pageId + j, KSpan);

}

return KSpan;

}

}

// No larger span is available either,

// so request a span of NPAGES - 1 pages (128 in this project) from the heap

Span* bigSpan = spanPool.New();

//Span* bigSpan = new Span;

void* ptr = SystemAlloc(NPAGES - 1);

bigSpan->_pageId = (PAGE_ID)ptr >> PAGE_SHIFT;

bigSpan->n = NPAGES - 1;

_spanList[bigSpan->n].PushFront(bigSpan);

return NewSpan(k);

}
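The split step inside NewSpan can be looked at on its own. Below is a minimal sketch under simplifying assumptions: `MiniSpan` and `SplitFront` are hypothetical names, the struct carries only the two fields the split touches, and the bucket lists and the id-to-span map are left out.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical stand-in for Span, holding only the fields used by the split.
struct MiniSpan {
    std::uintptr_t pageId; // number of the first page
    std::size_t n;         // number of pages
};

// Cuts k pages off the front of `big` and returns them as a new span.
// `big` keeps the remaining pages. Requires 0 < k < big.n.
inline MiniSpan SplitFront(MiniSpan& big, std::size_t k) {
    assert(k > 0 && k < big.n);
    MiniSpan head{big.pageId, k}; // the head starts where `big` used to start
    big.pageId += k;              // the remainder starts k pages later
    big.n -= k;                   // and is k pages shorter
    return head;
}
```

Splitting a 128-page span that starts at page 1000 with `k = 2` yields a head covering pages 1000 to 1001 and leaves a 126-page remainder starting at page 1002, which is the case described in the comment at the top of NewSpan.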

Span* PageCache::MapObjectToSpan(void* obj)

{

PAGE_ID id = ((PAGE_ID)obj >> PAGE_SHIFT); // Page number that contains obj

auto ret = (Span*)_idSpanMap.get(id);

assert(ret != nullptr);

return ret;

}
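MapObjectToSpan relies on the fact that shifting an address right by PAGE_SHIFT gives the number of the page that contains it. The sketch below shows that conversion in both directions. `kPageShift`, `PageIdOf` and `PageStart` are hypothetical names, and the shift of 13 (8 KiB pages) is an assumption taken from the `kpage << 13` in SystemAlloc.

```cpp
#include <cassert>
#include <cstdint>

// Assumed page size of 8 KiB (shift of 13), matching kpage << 13 in SystemAlloc.
constexpr int kPageShift = 13;

// Number of the page that contains address p.
inline std::uintptr_t PageIdOf(const void* p) {
    return reinterpret_cast<std::uintptr_t>(p) >> kPageShift;
}

// First address of page `id`.
inline void* PageStart(std::uintptr_t id) {
    return reinterpret_cast<void*>(id << kPageShift);
}
```

Every address inside one page maps to the same page number, which is why a single map lookup is enough to find the span that owns any object in that page.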

void PageCache::ReleaseSpanToPageCache(Span* span)

{

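// Merge the span with the free pages before and after it to reduce fragmentation

if (span->n > NPAGES - 1)

{

// Spans larger than 128 pages go straight back to the heap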

void* ptr = (void*)(span->_pageId << PAGE_SHIFT);

SystemFree(ptr);

//delete span;

spanPool.Delete(span);

return;

}

// Merge with the preceding spans

while (1)

{

PAGE_ID prevId = span->_pageId - 1;

auto ret = (Span*)_idSpanMap.get(prevId);

if (ret == nullptr)

{

break;

}

Span* prevspan = ret;

if (prevspan->_isUse == true)

{ // The span on the preceding page is still in use

break;

}

if (prevspan->n + span->n > NPAGES - 1)

{

// The merged span would exceed 128 pages and could not be managed

break;

}

// Merge

span->_pageId = prevspan->_pageId;

span->n += prevspan->n;

_spanList[prevspan->n].Erase(prevspan);

//delete prevspan;

spanPool.Delete(prevspan);

}

// Merge with the following spans

while (1)

{

PAGE_ID nextId = span->_pageId + span->n;

auto ret = (Span*)_idSpanMap.get(nextId);

if (ret == nullptr)

{

break;

}

Span* nextspan = ret;

if (nextspan->_isUse == true)

{

break;

}

if (nextspan->n + span->n > NPAGES - 1)

{

break;

}

span->n += nextspan->n;

_spanList[nextspan->n].Erase(nextspan);

//delete(nextspan);

spanPool.Delete(nextspan);

}

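// Merging in both directions is done

_spanList[span->n].PushFront(span);

span->_isUse = false;

// Record the first and last pages so that a neighbouring span can merge with this one later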

//_idSpanMap[span->_pageId] = span;

//_idSpanMap[span->_pageId + span->n - 1] = span;

_idSpanMap.set(span->_pageId, span);

_idSpanMap.set(span->_pageId + span->n - 1, span);

}

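// Only NewSpan(size_t k) and ReleaseSpanToPageCache(Span* span)

// establish the id-to-span mapping, that is, write to the map.

// The radix tree allocates its space before any write, so writing a value never changes the structure of the tree.

// Reads and writes are separated: while thread 1 is reading or writing a slot, thread 2 cannot be reading or writing that same slot.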
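The merging done by ReleaseSpanToPageCache can be pictured with a much smaller model. The following is a sketch under stated assumptions, not the project's code: `FreeSpan` and `FreePages` are hypothetical names, only free spans are recorded (so no `_isUse` check is needed), the 128-page cap is omitted, and a `std::unordered_map` stands in for the radix tree. Like the code above, it indexes only the first and last page of each free span, which is all a neighbour needs in order to find it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <unordered_map>

// Hypothetical free span: first page number and page count.
struct FreeSpan {
    std::uintptr_t pageId;
    std::size_t n;
};

class FreePages {
    // The first and the last page of every free span map to that span.
    std::unordered_map<std::uintptr_t, FreeSpan> edges_;

    void Index(const FreeSpan& s) {
        edges_[s.pageId] = s;
        edges_[s.pageId + s.n - 1] = s;
    }
    void Unindex(const FreeSpan& s) {
        edges_.erase(s.pageId);
        edges_.erase(s.pageId + s.n - 1);
    }

public:
    // Frees `s`, merging it with free neighbours; returns the merged span.
    // Without a size cap, free neighbours are always merged at once, so at
    // most one free span can sit on each side and one step per side suffices.
    FreeSpan Release(FreeSpan s) {
        auto prev = edges_.find(s.pageId - 1); // a free span ending just before s?
        if (prev != edges_.end()) {
            FreeSpan p = prev->second;         // copy before erasing map entries
            Unindex(p);
            s.pageId = p.pageId;
            s.n += p.n;
        }
        auto next = edges_.find(s.pageId + s.n); // a free span starting just after s?
        if (next != edges_.end()) {
            FreeSpan q = next->second;
            Unindex(q);
            s.n += q.n;
        }
        Index(s);
        return s;
    }
};
```

Releasing pages 10 to 11, then 14 to 16, then 12 to 13 ends with one free span of 7 pages starting at page 10, because the last release bridges the two earlier spans.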
