1. Introduction

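This crawler uses the following libraries. requests and lxml are third-party packages; random and time ship with the Python standard library: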

import random
import time

import requests
from lxml import etree

2. Opening the web page

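Open the Lianjia website and go to the second-hand housing (ershoufang) page. It shows the total number of listings for the selected city along with the listing data.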
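As a minimal sketch of how the listing pages can be addressed, the snippet below builds the URLs for the first few result pages. It assumes the `pg{}` URL pattern that appears in the source code of this post; `build_page_urls` is a hypothetical helper name, not part of any library.

```python
# Assumption: listing pages follow the pattern used later in this post,
# where the page number is appended as "pg<N>/".
BASE_URL = 'https://bj.lianjia.com/ershoufang/pg{}/'


def build_page_urls(first_page, last_page, template=BASE_URL):
    """Return the listing URLs for pages first_page..last_page (inclusive)."""
    if first_page < 1 or last_page < first_page:
        raise ValueError('expected 1 <= first_page <= last_page')
    return [template.format(pg) for pg in range(first_page, last_page + 1)]


if __name__ == '__main__':
    for url in build_page_urls(1, 4):
        print(url)
```

Generating the URLs up front keeps the page range in one place, which makes it easier to change how many pages are crawled.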

3. Full source code

import random
import time

import requests
from lxml import etree


class LianJia(object):
    def __init__(self):
        # Listing URL template for Beijing; {} is filled with the page number
        self.url = 'https://bj.lianjia.com/ershoufang/pg{}/'
        self.headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.5005.63 Safari/537.36 Edg/102.0.1245.30'
        }

    # Fetch the response
    def get_page(self, url):
        req = requests.get(url, headers=self.headers)
        html = req.text
        # Call the parsing function
        self.parse_page(html)

    # Parse the page and extract the data
    def parse_page(self, html):
        parse_html = etree.HTML(html)
        li_list = parse_html.xpath('//*[@id="content"]/div[1]/ul/li')
        for li in li_list:
            # Build a fresh dictionary for each listing
            house_dict = {}
            # Name (名称)
            house_dict['名称'] = li.xpath('.//div[@class="positionInfo"]/a[1]/text()')
            # Total price in units of 10,000 yuan (总价-万). The path is relative
            # to the current li; an absolute path ending in .../ul/li[6]/...
            # would return the sixth listing's value on every iteration.
            house_dict['总价-万'] = li.xpath('./div[1]/div[6]/div[1]/span/text()')
            # Unit price (单价), also relative to the current li
            house_dict['单价'] = li.xpath('./div/div/div[2]/span/text()')
            print(house_dict)

    # Save the data (not implemented yet)
    def write_page(self):
        pass

    # Main entry point
    def main(self):
        # Crawl pages 1 to 4
        for pg in range(1, 5):
            url = self.url.format(pg)
            self.get_page(url)
            # Sleep 0-2 seconds between requests
            time.sleep(random.randint(0, 2))


if __name__ == '__main__':
    start = time.time()
    spider = LianJia()
    spider.main()
    end = time.time()
    # Prints "执行时间" (elapsed time) in seconds
    print('执行时间:%.2f' % (end - start))
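The `write_page` method above is left as a stub. One possible way to fill it in, sketched here as a standalone function, is to append each record to a CSV file with the standard `csv` module. The function name `save_rows` and the default file name are illustrative assumptions, not part of the original script, and the sketch assumes each value has already been reduced to a plain string.

```python
import csv
import os

# Column names match the dictionary keys used by the crawler above.
FIELDNAMES = ['名称', '总价-万', '单价']


def save_rows(rows, path='lianjia.csv'):
    """Append a list of dicts to a CSV file, writing the header only once."""
    # Write the header only when the file is new or still empty.
    write_header = not os.path.exists(path) or os.path.getsize(path) == 0
    # newline='' is what the csv module expects for files it writes to.
    with open(path, 'a', newline='', encoding='utf-8') as f:
        writer = csv.DictWriter(f, fieldnames=FIELDNAMES)
        if write_header:
            writer.writeheader()
        writer.writerows(rows)
```

Calling `save_rows([house_dict])` once per listing, or once per page with a list of dictionaries, would keep appending to the same file across pages.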

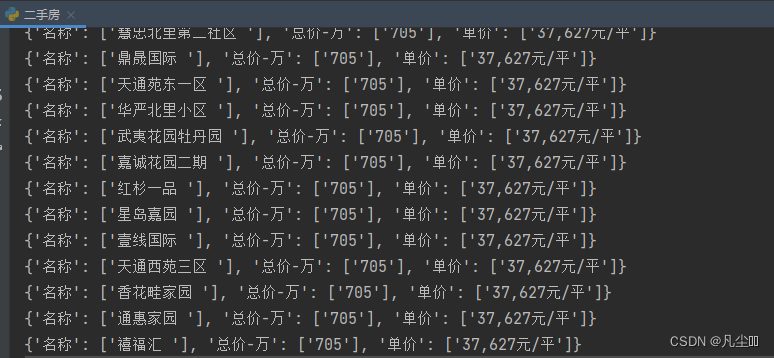

Sample of the scraped output
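In the script above, each `xpath()` call that ends in `text()` returns a list of strings, so every dictionary value is a list rather than a single value. The following is a minimal sketch of two helpers that flatten and convert such values. The names `first_text` and `to_number` are hypothetical, and the sample strings (including the `50,000元/平` price format) are made-up assumptions about what the page returns.

```python
import re


def first_text(values, default=None):
    """Return the first non-empty string in an XPath result list, stripped."""
    for value in values:
        text = value.strip()
        if text:
            return text
    return default


def to_number(text):
    """Extract the first number from text such as '50,000元/平'; None if absent."""
    if text is None:
        return None
    # Drop thousands separators before searching for digits.
    match = re.search(r'\d+(?:\.\d+)?', text.replace(',', ''))
    return float(match.group()) if match else None


if __name__ == '__main__':
    # Made-up values shaped like XPath results, for illustration only.
    print(first_text([' 示例小区 ']))
    print(to_number(first_text(['50,000元/平'])))
    print(first_text([]))
```

Returning `None` for an empty result keeps missing fields distinguishable from empty strings when the data is saved or analysed later.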
