Note: the map phase reads the input one line at a time and processes each line.

1. Create the project as follows:

2. Import the required JAR files:

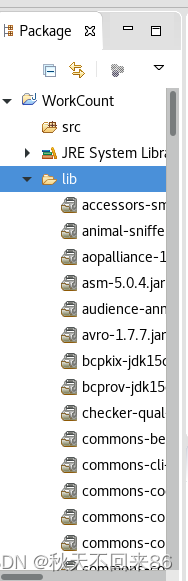

The imported JAR files are as follows:

3. Select all JAR files in the lib folder, right-click, and choose Build Path > Add to Build Path.

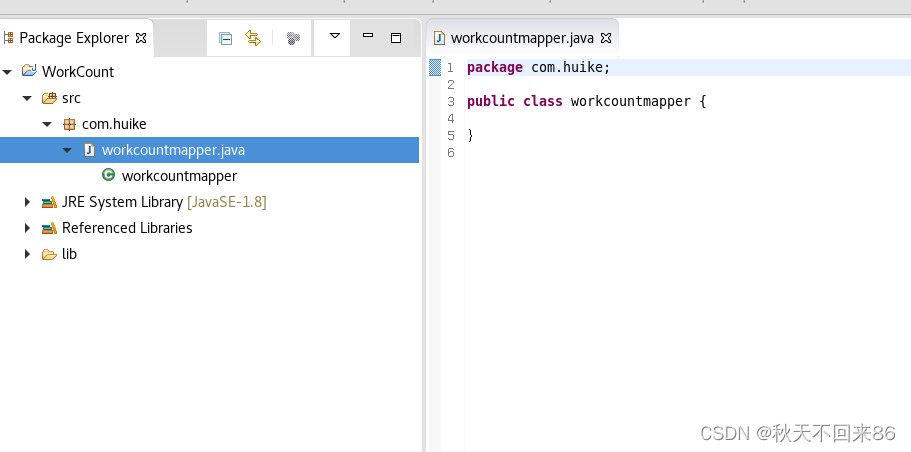

4. Development

Right-click src and choose New > Package.

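Map phase:

The map phase works on <key, value> pairs.

Map input: key (the byte offset at which the line starts in the file), value (the text of one line).

Map output: the key and value types are chosen by the programmer.

In this example: key (the word), value (the occurrence count).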
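The map input described above can be illustrated without a cluster. The following plain-JDK sketch (no Hadoop classes; `LineRecords` and `Rec` are hypothetical names) mimics how Hadoop's default `TextInputFormat` turns a file into `<key, value>` records: the key is the byte offset at which a line starts, and the value is the line without its newline. It assumes `\n` line endings and a single split covering the whole file; the real `LineRecordReader` also handles `\r\n` and split boundaries.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Plain-JDK sketch (no Hadoop classes) of how TextInputFormat turns a file into
// <key, value> records for map(): key = byte offset of the line, value = line text.
// Simplification: '\n' line endings only, and the whole file is one split.
final class LineRecords {

    // One input record as the mapper would receive it (LongWritable key, Text value).
    static final class Rec {
        final long offset;
        final String line;

        Rec(long offset, String line) {
            this.offset = offset;
            this.line = line;
        }
    }

    static List<Rec> read(String content) {
        List<Rec> records = new ArrayList<>();
        long offset = 0;
        int start = 0;
        while (start < content.length()) {
            int newline = content.indexOf('\n', start);
            int end = (newline == -1) ? content.length() : newline;
            String line = content.substring(start, end);
            records.add(new Rec(offset, line));
            // The next key is this line's UTF-8 byte length plus 1 for the '\n' (if any).
            offset += line.getBytes(StandardCharsets.UTF_8).length + (newline == -1 ? 0 : 1);
            start = end + 1;
        }
        return records;
    }
}
```

For `"hello world\nhello hadoop\n"` this yields `(0, "hello world")` and `(12, "hello hadoop")`: the first line plus its newline occupies 12 bytes, so the key is a byte offset, not a line number.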

The mapper's type parameters, from left to right, are: LongWritable (input key), Text (input value), Text (output key), IntWritable (output value).
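The mapper source itself is not shown in this post, although the driver below references `wordcounthighmapper`. In Hadoop such a class would extend `Mapper<LongWritable, Text, Text, IntWritable>` and override `map(LongWritable key, Text value, Context context)`, calling `context.write(...)` once per word. The sketch below reproduces only the per-line logic in plain JDK Java so it runs without Hadoop JARs; `WordMapSim` is a hypothetical name, and splitting words on whitespace is an assumption.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Plain-JDK sketch of the logic a word-count map() plausibly runs for one line.
// Assumption: words are separated by whitespace (the original mapper is not shown).
final class WordMapSim {

    // offset = the LongWritable input key (unused by word count),
    // line   = the Text input value.
    // Returns the <word, 1> pairs the mapper would pass to context.write(...).
    static List<Map.Entry<String, Integer>> map(long offset, String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String word : line.trim().split("\\s+")) {
            if (!word.isEmpty()) {              // a blank line splits into [""]
                out.add(new SimpleEntry<>(word, 1));
            }
        }
        return out;
    }
}
```

Emitting `1` per occurrence keeps the mapper stateless; adding up the ones is left to the reducer.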

5. Reduce phase:

The reducer's type parameters, from left to right, are:

input key, input value, output key, output value

```java
package hzy.com.wordcounthight;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

// Reducer<input key, input value, output key, output value>
public class wordcounthightreduce extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values,
            Reducer<Text, IntWritable, Text, IntWritable>.Context context)
            throws IOException, InterruptedException {
        // Add up all the counts emitted by the mappers for this word
        int sum = 0;
        for (IntWritable value : values) {
            sum += value.get();
        }
        // Emit <word, total count>
        context.write(key, new IntWritable(sum));
    }
}
```
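Between map and reduce, the framework groups all values that share a key and hands each group to `reduce()` in key order. The hypothetical `ShuffleReduceSim` class below is a plain-JDK sketch of that grouping plus the same summing loop as `wordcounthightreduce`. A `TreeMap` stands in for the framework's sort; this is an approximation, since Hadoop compares `Text` keys by their bytes, which matches `String` order only for ASCII words.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-JDK sketch of shuffle/sort followed by the word-count reduce step.
final class ShuffleReduceSim {

    // Shuffle/sort: collect every value for the same key; TreeMap keeps keys sorted.
    static TreeMap<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> mapOutput) {
        TreeMap<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> kv : mapOutput) {
            groups.computeIfAbsent(kv.getKey(), k -> new ArrayList<>()).add(kv.getValue());
        }
        return groups;
    }

    // Same loop as wordcounthightreduce.reduce(): sum the values for one key.
    static int reduce(String key, Iterable<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Runs reduce once per key group, in sorted key order.
    static TreeMap<String, Integer> run(List<Map.Entry<String, Integer>> mapOutput) {
        TreeMap<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(mapOutput).entrySet()) {
            result.put(group.getKey(), reduce(group.getKey(), group.getValue()));
        }
        return result;
    }
}
```

Given the map output `<hello,1> <world,1> <hello,1>`, the reducer is called once with `hello -> [1, 1]` and once with `world -> [1]`, producing `hello 2` and `world 1`.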

6. The main function (job driver)

```java
package hzy.com.wordcounthight;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class wordcounthighapp {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // 1. Connect to Hadoop: use the HDFS instance at hadoop0:9000 as the default file system
        Configuration configuration = new Configuration();
        configuration.set("fs.defaultFS", "hdfs://hadoop0:9000/");

        // 2. Create the job and set the class used to locate the job JAR (the entry point)
        Job job = Job.getInstance(configuration);
        job.setJarByClass(wordcounthighapp.class);

        // Input file on HDFS
        FileInputFormat.setInputPaths(job, new Path("/data/source.txt"));

        // Map phase: mapper class and its output <key, value> types
        job.setMapperClass(wordcounthighmapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // Reduce phase: reducer class and the job's final output <key, value> types
        job.setReducerClass(wordcounthightreduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Write the results to HDFS; this directory must not exist yet, or the job fails
        FileOutputFormat.setOutputPath(job, new Path("/data/xuting"));

        // Submit the job, wait for it to finish, and exit with 0 on success
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```
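To see what this job should write, here is a single-process, plain-JDK sketch of the whole pipeline. `LocalWordCount` is a hypothetical name, and whitespace tokenization is an assumption because the mapper is not shown. The result is formatted the way the default `TextOutputFormat` writes it: one `key<TAB>value` line per word, in sorted key order. With the default of one reduce task, the job writes these lines to a file named `part-r-00000` under `/data/xuting`.

```java
import java.util.Map;
import java.util.TreeMap;

// Single-process sketch of the word-count job:
// map (split each line into words) -> shuffle/sort (TreeMap) -> reduce (sum),
// then format like TextOutputFormat's default "key<TAB>value" lines.
final class LocalWordCount {

    static String run(String fileContent) {
        TreeMap<String, Integer> counts = new TreeMap<>();
        for (String line : fileContent.split("\n")) {         // one map() call per line
            for (String word : line.trim().split("\\s+")) {  // emit <word, 1>
                if (!word.isEmpty()) {
                    counts.merge(word, 1, Integer::sum);      // group by word and sum
                }
            }
        }
        StringBuilder out = new StringBuilder();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            out.append(e.getKey()).append('\t').append(e.getValue()).append('\n');
        }
        return out.toString();
    }
}
```

For the input `"hello world\nhello hadoop\n"` the sketch returns `hadoop\t1\nhello\t2\nworld\t1\n`, the same shape you would expect when reading the job's output file with `hdfs dfs -cat`.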
