Web Crawlers: The Scrapy Crawler Framework

First, install Scrapy, e.g. with `pip install scrapy` (the download needs a working network connection):
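Before going further it helps to confirm that the install worked. The following is a small stdlib-only sketch (the function name `scrapy_version` is just for illustration) that reports the installed Scrapy version, or `None` if Python cannot find the package:

```python
import importlib.util
from importlib import metadata


def scrapy_version():
    """Return the installed Scrapy version string, or None if Scrapy is missing."""
    # find_spec looks the package up without importing it
    if importlib.util.find_spec("scrapy") is None:
        return None
    try:
        # Read the version from the installed distribution's metadata
        return metadata.version("scrapy")
    except metadata.PackageNotFoundError:
        # Importable but without distribution metadata (unusual setups)
        return "unknown"
```

Calling `print(scrapy_version())` should print a version string after a successful `pip install scrapy`.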

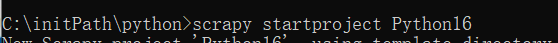

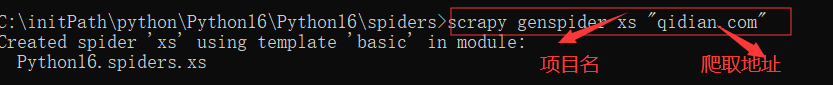

Next, create the project with `scrapy startproject <project name>`.
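`scrapy startproject` generates a fixed skeleton, and the files edited later in this post (items.py, pipelines.py, settings.py and the spiders/ package) all live inside it. The sketch below lists the files created by Scrapy's default project template and reports which are missing. `missing_project_files` is a hypothetical helper, and `root` is assumed to be the outer folder the command creates:

```python
from pathlib import Path

# Files created by Scrapy's default `startproject` template;
# "{name}" is the project name passed on the command line.
TEMPLATE_FILES = [
    "scrapy.cfg",
    "{name}/__init__.py",
    "{name}/items.py",
    "{name}/middlewares.py",
    "{name}/pipelines.py",
    "{name}/settings.py",
    "{name}/spiders/__init__.py",
]


def missing_project_files(root, name):
    """Return the template files (relative paths) that do not exist under `root`."""
    base = Path(root)
    expected = (entry.format(name=name) for entry in TEMPLATE_FILES)
    return [rel for rel in expected if not (base / rel).is_file()]
```

For example, after `scrapy startproject python16` (the project name here is an assumption), `missing_project_files("python16", "python16")` should return an empty list.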

The novel titles to be scraped:

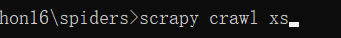

The spider is run from the project directory with `scrapy crawl xs`, where `xs` is the spider's `name`.

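The spider itself: its name, the allowed domain and the start URL (the first page of Qidian's book listing):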

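    import scrapy

    from ..items import Python16Item  # the Item class from the project's items.py


    class XsSpider(scrapy.Spider):
        name = 'xs'                        # the name used by "scrapy crawl xs"
        allowed_domains = ['qidian.com']   # requests to other domains are filtered out
        start_urls = ['https://www.qidian.com/all?orderId=&style=1&pageSize=20&siteid=1&pubflag=0&hiddenField=0&page=1']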

Extracting the data from one page (the spider's `parse` method):

        def parse(self, response):
            # The raw HTML returned by the site (uncomment to inspect it)
            # print(response.body.decode("UTF-8"))

            # Parse the page with XPath: one <li> per book
            li_list = response.xpath("//ul[contains(@class,'all-img-list')]/li")

            for li in li_list:
                # ".//" searches below the current <li> only, not the whole page
                item = Python16Item()
                name = li.xpath(".//div[@class='book-mid-info']/h4/a/text()").extract_first()
                author = li.xpath(".//div[@class='book-mid-info']/p[@class='author']/a/text()").extract_first()
                # default="" keeps a missing intro empty instead of turning it into the text "None"
                content = li.xpath(".//div[@class='book-mid-info']/p[@class='intro']/text()").extract_first(default="").strip()

                item['name'] = name
                item['author'] = author
                item['content'] = content

                # Hand the filled item to the pipeline, which saves it
                yield item
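The key point in `parse` is that every XPath starting with `.//` is evaluated relative to the current `<li>`, so title, author and intro always come from the same book. Scrapy's selectors need a real response, so here is a stdlib-only sketch of the same idea using `xml.etree.ElementTree`, which supports only a subset of XPath (no `contains()` or `text()`). The markup and the helper names `text_of` and `parse_books` are hypothetical stand-ins, not Qidian's actual HTML:

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical markup shaped like the XPaths used in parse()
SAMPLE = """
<ul class="all-img-list">
  <li><div class="book-mid-info"><h4><a>Book A</a></h4>
    <p class="author"><a>Author A</a></p><p class="intro"> Intro A </p></div></li>
  <li><div class="book-mid-info"><h4><a>Book B</a></h4>
    <p class="author"><a>Author B</a></p></div></li>
</ul>
"""


def text_of(node, path, default=None):
    """Text of the first element matching `path` below `node`, else `default`."""
    found = node.find(path)
    return found.text if found is not None else default


def parse_books(markup):
    root = ET.fromstring(markup.strip())   # the <ul> element
    books = []
    for li in root.findall("./li"):
        # Paths starting with ".//" are searched below this <li> only,
        # so the three fields always belong to the same book
        books.append({
            "name": text_of(li, ".//div[@class='book-mid-info']/h4/a"),
            "author": text_of(li, ".//div[@class='book-mid-info']/p[@class='author']/a"),
            "content": (text_of(li, ".//div[@class='book-mid-info']/p[@class='intro']", "") or "").strip(),
        })
    return books
```

The second book has no intro paragraph, so its `content` comes back as an empty string rather than a value taken from a neighbouring book.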

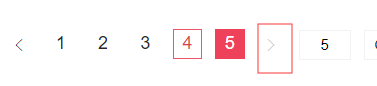

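To crawl more than one page, read the link behind the right arrow (the "next page" button) and request it with the same `parse` callback. This code goes at the end of `parse`, after the `for` loop: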

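            # Get the next-page link (the right arrow)
            nextUrl = response.xpath("//a[contains(@class,'lbf-pagination-next')]/@href").extract_first()

            # Stop when the link is missing or is the last page's "javascript:;"
            if nextUrl and nextUrl != "javascript:;":
                # urljoin resolves relative and protocol-relative ("//...") links against the current page URL
                yield scrapy.Request(url=response.urljoin(nextUrl), callback=self.parse)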
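The pagination check can be tried out without Scrapy. `response.urljoin` resolves a link against the page URL much like the standard library's `urllib.parse.urljoin`. The sketch below (the helper name `next_page_url` and the sample URLs are assumptions) shows why that is safer than prefixing `"http:"`: a protocol-relative `//...` href keeps the page's own scheme, and a missing link returns `None` instead of raising `TypeError` on `"http:" + None`.

```python
from urllib.parse import urljoin


def next_page_url(page_url, href):
    """Return the absolute URL of the next page, or None on the last page."""
    # No link at all, or the disabled arrow ("javascript:;"), means stop
    if not href or href.startswith("javascript:"):
        return None
    # Resolves relative and protocol-relative ("//host/path") links
    return urljoin(page_url, href)
```

For instance, `next_page_url("https://www.qidian.com/all?page=1", "//www.qidian.com/all?page=2")` gives `"https://www.qidian.com/all?page=2"`.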

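To cut down the log output, add this line to settings.py. Scrapy then only shows messages at WARNING level and above, hiding the DEBUG and INFO lines it prints by default (warnings themselves are still shown):

    LOG_LEVEL = "WARNING"

With this setting a run prints far less: mostly your own print output plus any warnings or errors.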
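Scrapy logs through Python's standard `logging` module, and `LOG_LEVEL` acts as the minimum level that gets through (the default is `"DEBUG"`). The effect can be reproduced with the standard library alone; `shown_levels` and the recording handler below are hypothetical demo helpers, not Scrapy code:

```python
import logging


class _RecordingHandler(logging.Handler):
    """Collects the level names of the records that get through."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def emit(self, record):
        self.levels.append(record.levelname)


def shown_levels(log_level):
    """Log one message per level and return the levels that were actually output."""
    logger = logging.getLogger("log_level_demo")
    logger.propagate = False          # keep the demo away from the root logger
    handler = _RecordingHandler()
    logger.handlers = [handler]
    logger.setLevel(log_level)        # the role LOG_LEVEL plays in Scrapy
    logger.debug("debug message")
    logger.info("info message")
    logger.warning("warning message")
    logger.error("error message")
    return handler.levels
```

With `"WARNING"` only the warning and error records are emitted, which is why the setting hides Scrapy's routine request logging but still reports problems.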

`.extract_first()` returns the first matching result (or `None` if nothing matches), for example:

    name = li.xpath(".//div[@class='book-mid-info']/h4/a/text()").extract_first()
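Because `extract_first()` returns `None` when nothing matches, wrapping the result in `str()` before `.strip()` produces the literal text `"None"` for a book without an intro; passing `default=""` avoids that. The stdlib sketch below imitates this behaviour with a plain list standing in for the selector results (`first_or_default` is a hypothetical helper, not part of Scrapy):

```python
def first_or_default(matches, default=None):
    """Mimic SelectorList.extract_first(default=...): first match, else default."""
    return matches[0] if matches else default


no_intro = []                    # an <li> whose intro paragraph is missing
with_intro = ["  A short intro "]

# str(None) is "None", so the missing intro would be saved as the word "None"
buggy = str(first_or_default(no_intro)).strip()
# An empty-string default keeps the field empty instead
fixed = first_or_default(no_intro, default="").strip()
present = first_or_default(with_intro, default="").strip()
```

Newer Scrapy versions also offer `.get()` and `.getall()` as the preferred names for `.extract_first()` and `.extract()`; `.get(default="")` behaves the same way.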

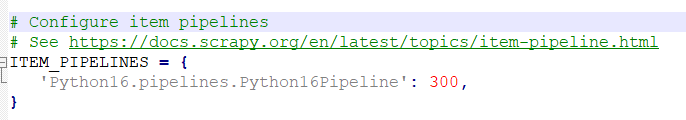

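Priority: in the ITEM_PIPELINES setting, the smaller the number assigned to a pipeline, the higher its priority, i.e. the earlier it processes each item (values are conventionally in the 0-1000 range).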
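Pipelines only run once they are enabled in settings.py. Here is a sketch of that setting, assuming the project package is called `python16` (inferred from the `Python16Item` name, not stated in the original) and adding a hypothetical `CleanupPipeline` purely to show the ordering rule. The `run_order` helper is an illustration only and does not belong in settings.py; it sorts by the number, which is the order in which Scrapy calls the pipelines:

```python
# settings.py (fragment); "python16" is an assumed project package name
ITEM_PIPELINES = {
    "python16.pipelines.Python16Pipeline": 300,
    # Hypothetical second pipeline, included only to illustrate ordering
    "python16.pipelines.CleanupPipeline": 100,
}


def run_order(pipelines):
    """Return pipeline paths in the order they run: lowest number first."""
    return sorted(pipelines, key=pipelines.get)
```

Here `CleanupPipeline` (100) would see each item before `Python16Pipeline` (300).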

items.py: an Item plays roughly the role of an entity class in Java.

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://docs.scrapy.org/en/latest/topics/items.html

    import scrapy


    class Python16Item(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        name = scrapy.Field()
        author = scrapy.Field()
        content = scrapy.Field()
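Like a Java entity class, the Item fixes which fields exist: assigning an undeclared key such as `item['price']` raises a `KeyError`. Scrapy 2.2 and later also accept `dataclasses.dataclass` instances as items, which expresses the same fixed-field idea in plain Python. The sketch below uses a hypothetical `NovelItem`; pipelines that read fields with `item["name"]` would then need to go through `itemadapter.ItemAdapter(item)`:

```python
from dataclasses import asdict, dataclass


@dataclass
class NovelItem:
    """Hypothetical dataclass equivalent of Python16Item."""

    name: str = ""     # book title
    author: str = ""   # author name
    content: str = ""  # short synopsis


book = NovelItem(name="Book A", author="Author A")
row = asdict(book)     # plain dict, handy for CSV or JSON output
```

Passing an unknown field, e.g. `NovelItem(price=1)`, fails with a `TypeError`, much as an undeclared key fails on a `scrapy.Item`.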

Saving to a CSV file (pipelines.py):

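    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

    import csv


    class Python16Pipeline(object):

        def open_spider(self, spider):
            # Open the file once when the crawl starts;
            # utf-8-sig writes a BOM so Excel reads the Chinese text correctly
            self.f = open("起点中文网.csv", "w", newline="", encoding="utf-8-sig")
            self.writer = csv.writer(self.f)
            self.writer.writerow(["Title", "Author", "Intro"])

        def process_item(self, item, spider):
            # Save each item as one CSV row
            name = item["name"]
            author = item["author"]
            content = item["content"]
            self.writer.writerow([name, author, content])
            return item

        def close_spider(self, spider):
            # Close the file when the crawl ends so all rows are flushed to disk
            self.f.close()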
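A variant of the same pipeline can use `csv.DictWriter`, which matches item fields to columns by name, so the header and each row cannot drift out of order. This is a sketch: the class name `DictCsvPipeline` and the default file name are assumptions. Because pipeline hooks are ordinary methods, it can also be exercised without running a crawl:

```python
import csv


class DictCsvPipeline:
    """Hypothetical CSV pipeline that maps item fields to columns by name."""

    fields = ["name", "author", "content"]
    header = {"name": "Title", "author": "Author", "content": "Intro"}

    def __init__(self, path="qidian_books.csv"):
        # A default path keeps the no-argument constructor that Scrapy
        # falls back to when a pipeline defines no from_crawler() hook
        self.path = path
        self.f = None
        self.writer = None

    def open_spider(self, spider):
        # utf-8-sig writes a BOM so Excel recognises the file as UTF-8
        self.f = open(self.path, "w", newline="", encoding="utf-8-sig")
        self.writer = csv.DictWriter(self.f, fieldnames=self.fields)
        self.writer.writerow(self.header)

    def process_item(self, item, spider):
        # dict(item) works for both scrapy.Item objects and plain dicts
        row = dict(item)
        # Missing fields become empty cells; fields not listed are ignored
        self.writer.writerow({key: row.get(key, "") for key in self.fields})
        return item

    def close_spider(self, spider):
        # Closing flushes the buffered rows to disk
        self.f.close()
```

For a quick check outside Scrapy: create `p = DictCsvPipeline("test.csv")`, then call `p.open_spider(None)`, `p.process_item({"name": "A", "author": "B", "content": "C"}, None)` and `p.close_spider(None)`. In a project it would be registered in ITEM_PIPELINES like any other pipeline.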

pipelines.py is where the data gets saved. The simplest version just prints each item it receives:

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html


    class Python16Pipeline(object):
        def process_item(self, item, spider):
            print("Saving data here")
            print(item)
            # Return the item so any later pipeline receives it too
            return item

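`yield` sends items to the pipeline one at a time: yield each item separately, not a whole list of items as a single value.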
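Why one item per `yield`: Scrapy iterates over whatever the callback produces and passes each item to the pipelines individually. The toy model below is a heavy simplification, not Scrapy's real engine (`toy_engine`, the callbacks and `add_source` are hypothetical), but it shows the contract: each yielded value must be a single item, and a list yielded as one value is rejected. Scrapy itself logs an error for such output rather than raising, but either way the list never reaches the pipeline.

```python
def toy_engine(callback, pipelines):
    """Drain a parse-style generator and run every item through the pipelines."""
    saved = []
    for output in callback():
        if not isinstance(output, dict):
            # Real Scrapy logs an error here; this toy version raises instead
            raise TypeError(f"expected one item per yield, got {type(output).__name__}")
        for process_item in pipelines:
            output = process_item(output)
        saved.append(output)
    return saved


def good_parse():
    for title in ["Book A", "Book B"]:
        yield {"name": title}        # one item per yield


def bad_parse():
    yield [{"name": "Book A"}, {"name": "Book B"}]   # a whole list at once


def add_source(item):
    # A stand-in for a pipeline's process_item()
    item["source"] = "qidian"
    return item
```

`toy_engine(good_parse, [add_source])` returns two processed items, while `toy_engine(bad_parse, [add_source])` raises `TypeError`.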

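To run the `scrapy crawl xs` command automatically from a Python script instead of typing it in a terminal (run the script from inside the project directory so Scrapy can find scrapy.cfg):

    from scrapy import cmdline

    cmdline.execute("scrapy crawl xs".split())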
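`cmdline.execute()` ends by calling `sys.exit()`, so any code placed after it in the same script never runs. If the script needs to do more work afterwards, one alternative (a sketch, not the original approach) is to start the crawl in a child process. `crawl_command` and `run_crawl` are hypothetical helpers, and the script is assumed to run from the project directory:

```python
import subprocess
import sys


def crawl_command(spider="xs", output=None):
    """Build the argument list for `scrapy crawl`, optionally with an output file."""
    # `python -m scrapy` uses the same interpreter (and virtualenv) as this script
    cmd = [sys.executable, "-m", "scrapy", "crawl", spider]
    if output:
        cmd += ["-o", output]  # e.g. "books.csv" writes the items via a feed export
    return cmd


def run_crawl(spider="xs", output=None):
    """Run the crawl in a child process and return its exit code."""
    return subprocess.run(crawl_command(spider, output)).returncode
```

After `code = run_crawl()` the script keeps running, so it can, for example, open and inspect the CSV file the pipeline wrote.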
