References

https://www.cnblogs.com/wen123456/p/14373713.html

Packet transfer: application layer, kernel, hardware (reading gadget data from the application layer), CSDN blog

USB device controller: low-level implementation of UVC data transfer (USB UVC request commands), CSDN blog

ioctl -> /drivers/usb/gadget/function/uvc_v4l2.c: v4l2_file_operations uvc_v4l2_fops -> video_ioctl2 (defined in /drivers/media/v4l2-core/v4l2-ioctl.c) -> video_usercopy -> __video_do_ioctl -> v4l2_ioctls (an array; the entry matching cmd is looked up and its ioctl handler is run) -> v4l_qbuf (the enqueue ioctl) -> ops->vidioc_qbuf(file, fh, p) -> uvc_v4l2_qbuf -> uvcg_video_pump -> uvcg_video_ep_queue -> usb_ep_queue -> ep->ops->queue(ep, req, gfp_flags) -> dwc3_gadget_ep_queue -> __dwc3_gadget_ep_queue (after the DMA-related data handling, it calls the next function to start sending) -> __dwc3_gadget_kick_transfer

Original link: https://blog.csdn.net/qq_18804879/article/details/118333486
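
The v4l2_ioctls step in the chain above is a table lookup: the command number selects a handler, and the handler is then called. The following is a minimal user-space sketch of that idea, not the kernel's table. The command numbers, the handler names, and the -1 error value (standing in for an errno such as -ENOTTY) are all hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical command numbers, for illustration only. */
enum demo_cmd { DEMO_QUERYCAP = 0, DEMO_QBUF = 1, DEMO_DQBUF = 2, DEMO_CMD_MAX };

typedef int (*demo_handler)(void *arg);

/* Hypothetical handlers; each returns a distinct value so calls can be told apart. */
static int demo_querycap(void *arg) { (void)arg; return 10; }
static int demo_qbuf(void *arg)     { (void)arg; return 20; }
static int demo_dqbuf(void *arg)    { (void)arg; return 30; }

/* Table indexed by command number, in the spirit of v4l2_ioctls[]. */
static const demo_handler demo_ioctls[DEMO_CMD_MAX] = {
    [DEMO_QUERYCAP] = demo_querycap,
    [DEMO_QBUF]     = demo_qbuf,
    [DEMO_DQBUF]    = demo_dqbuf,
};

/* Look up the handler for cmd and call it; -1 is returned for unknown commands. */
static int demo_do_ioctl(unsigned int cmd, void *arg)
{
    if (cmd >= DEMO_CMD_MAX || demo_ioctls[cmd] == NULL)
        return -1;
    return demo_ioctls[cmd](arg);
}
```

Calling demo_do_ioctl(DEMO_QBUF, NULL) reaches demo_qbuf, in the same way that the QBUF command reaches v4l_qbuf and then the driver's vidioc_qbuf callback.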

static int

uvc_v4l2_qbuf(struct file *file, void *fh, struct v4l2_buffer *b)

{

struct video_device *vdev = video_devdata(file);

struct uvc_device *uvc = video_get_drvdata(vdev);

struct uvc_video *video = &uvc->video;

int ret;

ret = uvcg_queue_buffer(&video->queue, b);

if (ret < 0)

return ret;

return uvcg_video_pump(video);

}

/*

* uvcg_video_pump - Pump video data into the USB requests

*

* This function fills the available USB requests (listed in req_free) with

* video data from the queued buffers.

*/

int uvcg_video_pump(struct uvc_video *video)

{

struct uvc_video_queue *queue = &video->queue;

struct usb_request *req;

struct uvc_buffer *buf;

unsigned long flags;

int ret;

/* FIXME TODO Race between uvcg_video_pump and requests completion

* handler ???

*/

while (1) {

/* Retrieve the first available USB request, protected by the

* request lock.

*/

spin_lock_irqsave(&video->req_lock, flags);

if (list_empty(&video->req_free)) {

spin_unlock_irqrestore(&video->req_lock, flags);

return 0;

}

req = list_first_entry(&video->req_free, struct usb_request,

list);

list_del(&req->list);

spin_unlock_irqrestore(&video->req_lock, flags);

/* Retrieve the first available video buffer and fill the

* request, protected by the video queue irqlock.

*/

spin_lock_irqsave(&queue->irqlock, flags);

buf = uvcg_queue_head(queue);

if (buf == NULL) {

spin_unlock_irqrestore(&queue->irqlock, flags);

break;

}

video->encode(req, video, buf);

/* Queue the USB request */

ret = uvcg_video_ep_queue(video, req);

spin_unlock_irqrestore(&queue->irqlock, flags);

if (ret < 0) {

uvcg_queue_cancel(queue, 0);

break;

}

}

spin_lock_irqsave(&video->req_lock, flags);

list_add_tail(&req->list, &video->req_free);

spin_unlock_irqrestore(&video->req_lock, flags);

return 0;

}

int usb_ep_queue(struct usb_ep *ep,

struct usb_request *req, gfp_t gfp_flags)

{

int ret = 0;

if (WARN_ON_ONCE(!ep->enabled && ep->address)) {

ret = -ESHUTDOWN;

goto out;

}

// calls dwc3_gadget_ep_queue

ret = ep->ops->queue(ep, req, gfp_flags);

out:

trace_usb_ep_queue(ep, req, ret);

return ret;

}

EXPORT_SYMBOL_GPL(usb_ep_queue);

static int dwc3_gadget_ep_queue(struct usb_ep *ep, struct usb_request *request,

gfp_t gfp_flags)

{

struct dwc3_request *req = to_dwc3_request(request);

struct dwc3_ep *dep = to_dwc3_ep(ep);

struct dwc3 *dwc = dep->dwc;

unsigned long flags;

int ret;

if (dwc3_gadget_is_suspended(dwc))

return -EAGAIN;

spin_lock_irqsave(&dwc->lock, flags);

ret = __dwc3_gadget_ep_queue(dep, req);

spin_unlock_irqrestore(&dwc->lock, flags);

return ret;

}

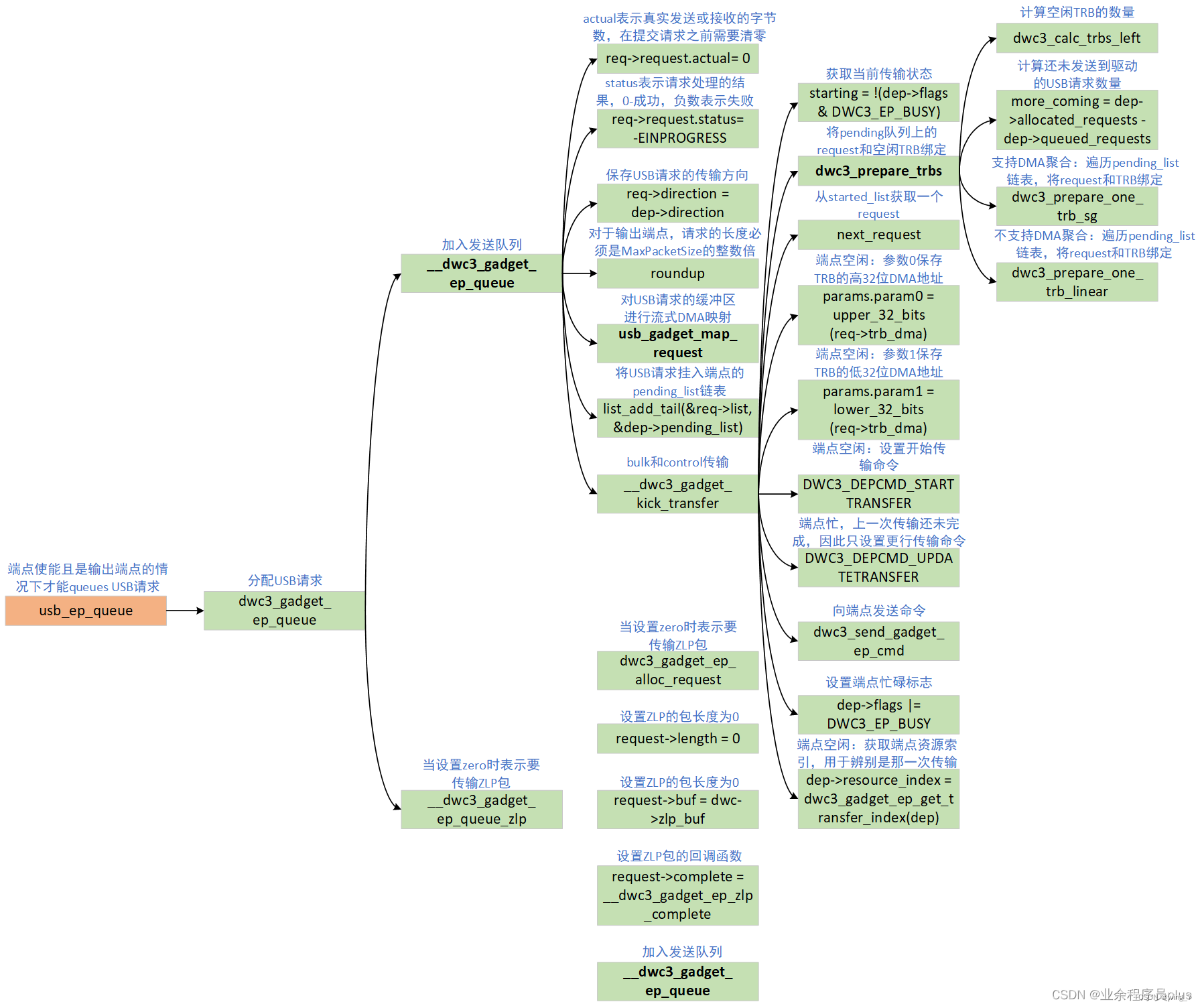

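2.6.1.2. Submitting a USB request to a non-zero endpoint

The figure shows how usb_ep_queue submits a USB request to an endpoint other than endpoint 0; the request is ultimately submitted by __dwc3_gadget_ep_queue. The main steps are:

Set the actual, status, direction, epnum, and length fields of the USB request. For an OUT endpoint, length must be an integer multiple of MaxPacketSize.

Create a streaming DMA mapping for the request buffer. The buffer is allocated by the function driver with kmalloc or a similar allocator, so the DMA engine cannot use it directly and it must be mapped first. Section 2.7 of the source article describes the mapping in detail.

Add the submitted USB request to the pending_list list.

Call __dwc3_gadget_kick_transfer to make the USB device controller send the request. A few special cases are not drawn in the figure and are summarized here.

For bulk and control transfers, __dwc3_gadget_kick_transfer is called directly to send the request.

For interrupt and isochronous transfers, the XferNotReady, XferInProgress, and Stream Capable Bulk Endpoints cases must be handled.

Call dwc3_prepare_trbs to walk pending_list and bind a TRB to each request. Once it has run, the requests that were on pending_list have been moved to started_list. The function depends on how the DMA buffer is laid out: if the request uses scatter-gather, it calls dwc3_prepare_one_trb_sg; otherwise it calls dwc3_prepare_one_trb_linear. Both end up in dwc3_prepare_one_trb, which binds the TRB to the request.

Take one USB request from started_list. If the endpoint is idle, the DMA address of the TRB bound to the request is placed in the param0 and param1 registers, the command is set to DWC3_DEPCMD_STARTTRANSFER, and the transfer starts. If the endpoint is busy, the command is set to DWC3_DEPCMD_UPDATETRANSFER, which only updates the ongoing transfer and does not start a new one for this request. Whether the endpoint is busy is determined by the DWC3_EP_BUSY flag.

If the endpoint was idle, obtain the endpoint's resource index, which identifies which transfer this is.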

Original link: https://blog.csdn.net/u011037593/article/details/123467147
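
The steps above can be sketched as a small user-space model: requests move from a pending count to a started count while TRBs remain, and the command is Start Transfer on the first kick and Update Transfer afterwards. This is a simplified sketch under stated assumptions, not the driver's code. The struct, the enum, and the choice to set transfer_started inside the kick function are hypothetical; the listing later in these notes uses the DWC3_EP_TRANSFER_STARTED flag for the idle/busy decision.

```c
#include <assert.h>
#include <stddef.h>

enum demo_depcmd { DEMO_NONE, DEMO_STARTTRANSFER, DEMO_UPDATETRANSFER };

/* Hypothetical endpoint model: lists are reduced to counters. */
struct demo_ep {
    int pending;           /* requests on pending_list */
    int started;           /* requests on started_list */
    int trbs_left;         /* free TRBs in the ring */
    int transfer_started;  /* nonzero once a transfer has been started (endpoint busy) */
};

/* Bind one TRB per pending request and move the request to the started list. */
static void demo_prepare_trbs(struct demo_ep *ep)
{
    while (ep->pending > 0 && ep->trbs_left > 0) {
        ep->pending--;
        ep->started++;
        ep->trbs_left--;
    }
}

/* Return the command that this kick would issue to the controller. */
static enum demo_depcmd demo_kick_transfer(struct demo_ep *ep)
{
    int starting;

    assert(ep != NULL);
    if (ep->trbs_left == 0)
        return DEMO_NONE;            /* no free TRB: nothing can be queued now */

    starting = !ep->transfer_started;
    demo_prepare_trbs(ep);
    if (ep->started == 0)
        return DEMO_NONE;            /* nothing to send */

    if (starting) {
        ep->transfer_started = 1;    /* endpoint was idle: start a new transfer */
        return DEMO_STARTTRANSFER;
    }
    return DEMO_UPDATETRANSFER;      /* endpoint busy: only update the transfer */
}
```

With two pending requests and eight free TRBs, the first call returns DEMO_STARTTRANSFER and leaves six TRBs free; queuing one more request and kicking again returns DEMO_UPDATETRANSFER.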

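The one thing to remember: the USB device controller is handed a pointer to a TRB structure, and the controller itself parses the TRB contents to start the transfer.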
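
To make the TRB idea concrete, here is a minimal sketch of filling a four-word descriptor from a 64-bit DMA address and a length. The field names bpl, bph, size, and ctrl follow the driver code quoted in these notes. The struct itself, the 24-bit length mask, and the position of the HWO bit are assumptions made for illustration and should be checked against the kernel headers.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Simplified stand-in for struct dwc3_trb: four 32-bit words. */
struct demo_trb {
    uint32_t bpl;   /* buffer pointer, low 32 bits */
    uint32_t bph;   /* buffer pointer, high 32 bits */
    uint32_t size;  /* transfer length */
    uint32_t ctrl;  /* control bits */
};

#define DEMO_TRB_SIZE_MASK 0x00ffffffu  /* assumed width of the length field */
#define DEMO_TRB_CTRL_HWO  (1u << 0)    /* assumed position of the "hardware owns" bit */

/* Fill one descriptor: split the DMA address, store the length, then hand it to hardware. */
static void demo_fill_trb(struct demo_trb *trb, uint64_t dma, uint32_t len)
{
    assert(trb != NULL);
    trb->bpl  = (uint32_t)(dma & 0xffffffffu);
    trb->bph  = (uint32_t)(dma >> 32);
    trb->size = len & DEMO_TRB_SIZE_MASK;
    /* In the driver a write barrier precedes this store so HWO becomes visible last. */
    trb->ctrl = DEMO_TRB_CTRL_HWO;
}
```

The controller is then given only the DMA address of the descriptor; it reads bpl/bph and size from memory on its own to find the data.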

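During the QBUF ioctl, the call path reaches __dwc3_gadget_ep_queue -> usb_gadget_map_request. In the code listed here, the mapping helper usb_gadget_map_request_by_dev is called from dwc3_prepare_trbs.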

int usb_gadget_map_request_by_dev(struct device *dev,

struct usb_request *req, int is_in)

{

if (req->length == 0)

return 0;

if (req->num_sgs) {

int mapped;

mapped = dma_map_sg(dev, req->sg, req->num_sgs,

is_in ? DMA_TO_DEVICE : DMA_FROM_DEVICE);

if (mapped == 0) {

dev_err(dev, "failed to map SGs\n");

return -EFAULT;

}

req->num_mapped_sgs = mapped;

} else {

req->dma = dma_map_single(dev, req->buf, req->length,

is_in ? DMA_TO_DEVICE : DMA_FROM_DEVICE); /* the buffer is mapped for DMA here */

if (dma_mapping_error(dev, req->dma)) {

dev_err(dev, "failed to map buffer\n");

return -EFAULT;

}

}

return 0;

}

__dwc3_gadget_ep_queue -> __dwc3_gadget_kick_transfer -> dwc3_prepare_trbs -> dwc3_prepare_one_trb_linear -> dwc3_prepare_one_trb

Starting the transfer

static int __dwc3_gadget_kick_transfer(struct dwc3_ep *dep)

{

struct dwc3_gadget_ep_cmd_params params;

struct dwc3_request *req;

struct dwc3 *dwc = dep->dwc;

int starting;

int ret;

u32 cmd;

if (!dwc3_calc_trbs_left(dep))

return 0;

starting = !(dep->flags & DWC3_EP_TRANSFER_STARTED);

// DMA-related preparation

// store the DMA address of the data in the address fields of the TRB that the controller will fetch

dwc3_prepare_trbs(dep);

req = next_request(&dep->started_list);

if (!req) {

dep->flags |= DWC3_EP_PENDING_REQUEST;

dbg_event(dep->number, "NO REQ", 0);

return 0;

}

memset(&params, 0, sizeof(params));

if (starting) {

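// the DMA address of the TRB structure is stored in the parameters here; they are later written to two registers

// the controller follows this address to get the DMA address and length of the actual data; this runs once per packet of data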

params.param0 = upper_32_bits(req->trb_dma);

params.param1 = lower_32_bits(req->trb_dma);

cmd = DWC3_DEPCMD_STARTTRANSFER;

if (dep->stream_capable)

cmd |= DWC3_DEPCMD_PARAM(req->request.stream_id);

if (usb_endpoint_xfer_isoc(dep->endpoint.desc))

cmd |= DWC3_DEPCMD_PARAM(dep->frame_number);

} else {

cmd = DWC3_DEPCMD_UPDATETRANSFER |

DWC3_DEPCMD_PARAM(dep->resource_index);

}

// write the DMA address to the registers

ret = dwc3_send_gadget_ep_cmd(dep, cmd, &params);

if (ret < 0) {

struct dwc3_request *tmp;

if (ret == -EAGAIN)

return ret;

dbg_log_string("dep:%s cmd failed ret:%d", dep->name, ret);

dwc3_stop_active_transfer(dep, true, true);

list_for_each_entry_safe(req, tmp, &dep->started_list, list)

dwc3_gadget_move_cancelled_request(req);

/* If ep isn't started, then there's no end transfer pending */

if (!(dep->flags & DWC3_EP_END_TRANSFER_PENDING))

dwc3_gadget_ep_cleanup_cancelled_requests(dep);

return ret;

}

return 0;

}

Transfer functions: preparation

dwc3_prepare_trbs

/**

* dwc3_prepare_trbs - setup TRBs from requests

* @dep: endpoint for which requests are being prepared

*

* The function goes through the requests list and sets up TRBs for the

* transfers. The function returns once there are no more TRBs available or

* it runs out of requests.

*/

static void dwc3_prepare_trbs(struct dwc3_ep *dep)

{

struct dwc3_request *req, *n;

BUILD_BUG_ON_NOT_POWER_OF_2(DWC3_TRB_NUM);

/*

* We can get in a situation where there's a request in the started list

* but there weren't enough TRBs to fully kick it in the first time

* around, so it has been waiting for more TRBs to be freed up.

*

* In that case, we should check if we have a request with pending_sgs

* in the started list and prepare TRBs for that request first,

* otherwise we will prepare TRBs completely out of order and that will

* break things.

*/

list_for_each_entry(req, &dep->started_list, list) {

if (req->num_pending_sgs > 0)

dwc3_prepare_one_trb_sg(dep, req);

if (!dwc3_calc_trbs_left(dep))

return;

}

list_for_each_entry_safe(req, n, &dep->pending_list, list) {

struct dwc3 *dwc = dep->dwc;

int ret;

ret = usb_gadget_map_request_by_dev(dwc->sysdev, &req->request,

dep->direction);

if (ret)

return;

req->sg = req->request.sg;

req->start_sg = req->sg;

req->num_queued_sgs = 0;

req->num_pending_sgs = req->request.num_mapped_sgs;

if (req->num_pending_sgs > 0)

dwc3_prepare_one_trb_sg(dep, req);

else

dwc3_prepare_one_trb_linear(dep, req);

dbg_ep_map(dep->number, req);

if (!dwc3_calc_trbs_left(dep))

return;

}

}

dwc3_prepare_one_trb_sg

static void dwc3_prepare_one_trb_sg(struct dwc3_ep *dep,

struct dwc3_request *req)

{

struct scatterlist *sg = req->start_sg;

struct scatterlist *s;

int i;

unsigned int length = req->request.length;

unsigned int maxp = usb_endpoint_maxp(dep->endpoint.desc);

unsigned int rem = length % maxp;

unsigned int remaining = req->request.num_mapped_sgs

- req->num_queued_sgs;

/*

* If we resume preparing the request, then get the remaining length of

* the request and resume where we left off.

*/

for_each_sg(req->request.sg, s, req->num_queued_sgs, i)

length -= sg_dma_len(s);

for_each_sg(sg, s, remaining, i) {

unsigned int trb_length;

unsigned chain = true;

trb_length = min_t(unsigned int, length, sg_dma_len(s));

length -= trb_length;

/*

* IOMMU driver is coalescing the list of sgs which shares a

* page boundary into one and giving it to USB driver. With

* this the number of sgs mapped is not equal to the number of

* sgs passed. So mark the chain bit to false if it isthe last

* mapped sg.

*/

if ((i == remaining - 1) || !length)

chain = false;

if (rem && usb_endpoint_dir_out(dep->endpoint.desc) && !chain) {

struct dwc3 *dwc = dep->dwc;

struct dwc3_trb *trb;

req->needs_extra_trb = true;

/* prepare normal TRB */

dwc3_prepare_one_trb(dep, req, trb_length, true, i);

/* Now prepare one extra TRB to align transfer size */

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr,

maxp - rem, false, 1,

req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

} else if (req->request.zero && req->request.length &&

!usb_endpoint_xfer_isoc(dep->endpoint.desc) &&

!rem && !chain) {

struct dwc3 *dwc = dep->dwc;

struct dwc3_trb *trb;

req->needs_extra_trb = true;

/* Prepare normal TRB */

dwc3_prepare_one_trb(dep, req, trb_length, true, i);

/* Prepare one extra TRB to handle ZLP */

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr, 0,

!req->direction, 1,

req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

/* Prepare one more TRB to handle MPS alignment */

if (!req->direction) {

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr, maxp,

false, 1, req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

}

} else {

dwc3_prepare_one_trb(dep, req, trb_length, chain, i);

}

/*

* There can be a situation where all sgs in sglist are not

* queued because of insufficient trb number. To handle this

* case, update start_sg to next sg to be queued, so that

* we have free trbs we can continue queuing from where we

* previously stopped

*/

if (chain)

req->start_sg = sg_next(s);

req->num_queued_sgs++;

req->num_pending_sgs--;

/*

* The number of pending SG entries may not correspond to the

* number of mapped SG entries. If all the data are queued, then

* don't include unused SG entries.

*/

if (length == 0) {

req->num_pending_sgs = 0;

break;

}

if (!dwc3_calc_trbs_left(dep))

break;

}

}

dwc3_prepare_one_trb_linear

static void dwc3_prepare_one_trb_linear(struct dwc3_ep *dep,

struct dwc3_request *req)

{

unsigned int length = req->request.length;

unsigned int maxp = usb_endpoint_maxp(dep->endpoint.desc);

unsigned int rem = length % maxp;

if ((!length || rem) && usb_endpoint_dir_out(dep->endpoint.desc)) {

struct dwc3 *dwc = dep->dwc;

struct dwc3_trb *trb;

req->needs_extra_trb = true;

/* prepare normal TRB */

dwc3_prepare_one_trb(dep, req, length, true, 0);

/* Now prepare one extra TRB to align transfer size */

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr, maxp - rem,

false, 1, req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

} else if (req->request.zero && req->request.length &&

!usb_endpoint_xfer_isoc(dep->endpoint.desc) &&

(IS_ALIGNED(req->request.length, maxp))) {

struct dwc3 *dwc = dep->dwc;

struct dwc3_trb *trb;

req->needs_extra_trb = true;

/* prepare normal TRB */

dwc3_prepare_one_trb(dep, req, length, true, 0);

/* Prepare one extra TRB to handle ZLP */

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr, 0,

!req->direction, 1, req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

/* Prepare one more TRB to handle MPS alignment for OUT */

if (!req->direction) {

trb = &dep->trb_pool[dep->trb_enqueue];

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dwc->bounce_addr, maxp,

false, 1, req->request.stream_id,

req->request.short_not_ok,

req->request.no_interrupt);

}

} else {

dwc3_prepare_one_trb(dep, req, length, false, 0);

}

}

dwc3_prepare_one_trb

/**

* dwc3_prepare_one_trb - setup one TRB from one request

* @dep: endpoint for which this request is prepared

* @req: dwc3_request pointer

* @trb_length: buffer size of the TRB

* @chain: should this TRB be chained to the next?

* @node: only for isochronous endpoints. First TRB needs different type.

*/

static void dwc3_prepare_one_trb(struct dwc3_ep *dep,

struct dwc3_request *req, unsigned int trb_length,

unsigned chain, unsigned node)

{

struct dwc3_trb *trb;

dma_addr_t dma;

unsigned stream_id = req->request.stream_id;

unsigned short_not_ok = req->request.short_not_ok;

unsigned no_interrupt = req->request.no_interrupt;

if (req->request.num_sgs > 0)

dma = sg_dma_address(req->start_sg);

else

dma = req->request.dma;

trb = &dep->trb_pool[dep->trb_enqueue];

if (!req->trb) {

dwc3_gadget_move_started_request(req);

req->trb = trb; // virtual address of the TRB

req->trb_dma = dwc3_trb_dma_offset(dep, trb);

// trb_dma is the DMA address of that same TRB structure

}

req->num_trbs++;

__dwc3_prepare_one_trb(dep, trb, dma, trb_length, chain, node,

stream_id, short_not_ok, no_interrupt);

}

__dwc3_prepare_one_trb

static void __dwc3_prepare_one_trb(struct dwc3_ep *dep, struct dwc3_trb *trb,

dma_addr_t dma, unsigned length, unsigned chain, unsigned node,

unsigned stream_id, unsigned short_not_ok, unsigned no_interrupt)

{

struct dwc3 *dwc = dep->dwc;

struct usb_gadget *gadget = &dwc->gadget;

enum usb_device_speed speed = gadget->speed;

trb->size = DWC3_TRB_SIZE_LENGTH(length);

// store the DMA-mapped address of the data to transfer in the TRB (low 32 bits in bpl, high 32 bits in bph)

trb->bpl = lower_32_bits(dma);

trb->bph = upper_32_bits(dma);

switch (usb_endpoint_type(dep->endpoint.desc)) {

case USB_ENDPOINT_XFER_CONTROL:

trb->ctrl = DWC3_TRBCTL_CONTROL_SETUP;

break;

case USB_ENDPOINT_XFER_ISOC:

if (!node) {

trb->ctrl = DWC3_TRBCTL_ISOCHRONOUS_FIRST;

/*

* USB Specification 2.0 Section 5.9.2 states that: "If

* there is only a single transaction in the microframe,

* only a DATA0 data packet PID is used. If there are

* two transactions per microframe, DATA1 is used for

* the first transaction data packet and DATA0 is used

* for the second transaction data packet. If there are

* three transactions per microframe, DATA2 is used for

* the first transaction data packet, DATA1 is used for

* the second, and DATA0 is used for the third."

*

* IOW, we should satisfy the following cases:

*

* 1) length <= maxpacket

* - DATA0

*

* 2) maxpacket < length <= (2 * maxpacket)

* - DATA1, DATA0

*

* 3) (2 * maxpacket) < length <= (3 * maxpacket)

* - DATA2, DATA1, DATA0

*/

if (speed == USB_SPEED_HIGH) {

struct usb_ep *ep = &dep->endpoint;

unsigned int mult = 2;

unsigned int maxp = usb_endpoint_maxp(ep->desc);

if (length <= (2 * maxp))

mult--;

if (length <= maxp)

mult--;

trb->size |= DWC3_TRB_SIZE_PCM1(mult);

}

} else {

trb->ctrl = DWC3_TRBCTL_ISOCHRONOUS;

}

/* always enable Interrupt on Missed ISOC */

trb->ctrl |= DWC3_TRB_CTRL_ISP_IMI;

break;

case USB_ENDPOINT_XFER_BULK:

case USB_ENDPOINT_XFER_INT:

trb->ctrl = DWC3_TRBCTL_NORMAL;

break;

default:

/*

* This is only possible with faulty memory because we

* checked it already :)

*/

dev_WARN(dwc->dev, "Unknown endpoint type %d\n",

usb_endpoint_type(dep->endpoint.desc));

}

/*

* Enable Continue on Short Packet

* when endpoint is not a stream capable

*/

if (usb_endpoint_dir_out(dep->endpoint.desc)) {

if (!dep->stream_capable)

trb->ctrl |= DWC3_TRB_CTRL_CSP;

if (short_not_ok)

trb->ctrl |= DWC3_TRB_CTRL_ISP_IMI;

}

if ((!no_interrupt && !chain) ||

(dwc3_calc_trbs_left(dep) == 1))

trb->ctrl |= DWC3_TRB_CTRL_IOC;

if (chain)

trb->ctrl |= DWC3_TRB_CTRL_CHN;

if (usb_endpoint_xfer_bulk(dep->endpoint.desc) && dep->stream_capable)

trb->ctrl |= DWC3_TRB_CTRL_SID_SOFN(stream_id);

/*

* As per data book 4.2.3.2TRB Control Bit Rules section

*

* The controller autonomously checks the HWO field of a TRB to determine if the

* entire TRB is valid. Therefore, software must ensure that the rest of the TRB

* is valid before setting the HWO field to '1'. In most systems, this means that

* software must update the fourth DWORD of a TRB last.

*

* However there is a possibility of CPU re-ordering here which can cause

* controller to observe the HWO bit set prematurely.

* Add a write memory barrier to prevent CPU re-ordering.

*/

wmb();

trb->ctrl |= DWC3_TRB_CTRL_HWO;

dwc3_ep_inc_enq(dep);

trace_dwc3_prepare_trb(dep, trb);

}
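
The high-speed isochronous branch above derives a small value, mult, from the transfer length and the endpoint's maximum packet size, following the three cases in the quoted comment. The arithmetic is easy to check in isolation. The helper below is a hypothetical extraction of just that calculation; the name demo_isoc_mult is not a kernel function.

```c
#include <assert.h>

/*
 * Return the PCM1 "mult" value used for a high-speed isochronous TRB:
 *   length <= maxp          -> 0 (one packet per microframe)
 *   length <= 2 * maxp      -> 1 (two packets)
 *   otherwise               -> 2 (three packets)
 */
static unsigned int demo_isoc_mult(unsigned int length, unsigned int maxp)
{
    unsigned int mult = 2;

    assert(maxp > 0);
    if (length <= 2 * maxp)
        mult--;
    if (length <= maxp)
        mult--;
    return mult;
}
```

With maxp of 1024, a 1024-byte transfer gives 0, a 1025-byte transfer gives 1, and a 2049-byte transfer gives 2, so mult + 1 is the number of packets in the microframe.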

Issuing the command that starts sending data

int dwc3_send_gadget_ep_cmd(struct dwc3_ep *dep, unsigned cmd,

struct dwc3_gadget_ep_cmd_params *params)

{

struct dwc3 *dwc = dep->dwc;

u32 timeout = 5000;

u32 reg;

int cmd_status = 0;

int susphy = false;

int ret = -EINVAL;

/*

* Synopsys Databook 2.60a states, on section 6.3.2.5.[1-8], that if

* we're issuing an endpoint command, we must check if

* GUSB2PHYCFG.SUSPHY bit is set. If it is, then we need to clear it.

*

* We will also set SUSPHY bit to what it was before returning as stated

* by the same section on Synopsys databook.

*/

if (dwc->gadget.speed <= USB_SPEED_HIGH) {

reg = dwc3_readl(dwc->regs, DWC3_GUSB2PHYCFG(0));

if (unlikely(reg & DWC3_GUSB2PHYCFG_SUSPHY)) {

susphy = true;

reg &= ~DWC3_GUSB2PHYCFG_SUSPHY;

dwc3_writel(dwc->regs, DWC3_GUSB2PHYCFG(0), reg);

}

}

if (cmd == DWC3_DEPCMD_STARTTRANSFER) {

int needs_wakeup;

needs_wakeup = (dwc->link_state == DWC3_LINK_STATE_U1 ||

dwc->link_state == DWC3_LINK_STATE_U2 ||

dwc->link_state == DWC3_LINK_STATE_U3);

if (unlikely(needs_wakeup)) {

ret = __dwc3_gadget_wakeup(dwc);

dev_WARN_ONCE(dwc->dev, ret, "wakeup failed --> %d\n",

ret);

}

}

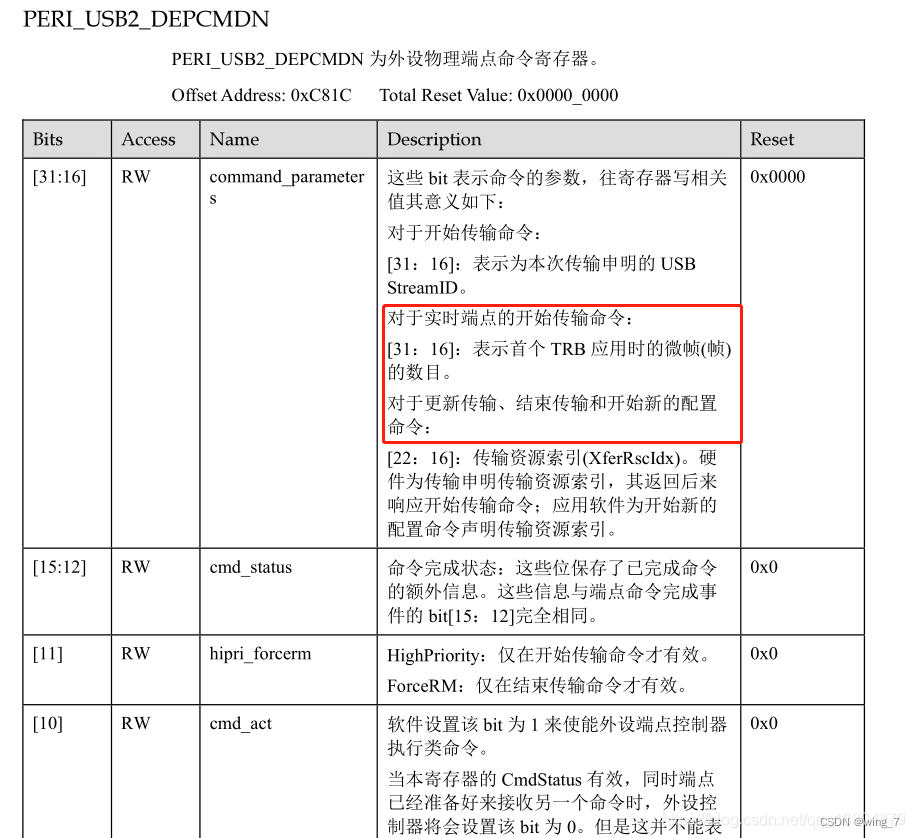

/* The DMA mapping is done; write the address held in params->param0 to the register. */

dwc3_writel(dep->regs, DWC3_DEPCMDPAR0, params->param0); /* write the DMA address to the register */

dwc3_writel(dep->regs, DWC3_DEPCMDPAR1, params->param1);

dwc3_writel(dep->regs, DWC3_DEPCMDPAR2, params->param2);

dwc3_writel(dep->regs, DWC3_DEPCMD, cmd | DWC3_DEPCMD_CMDACT); /* trigger the USB transfer */

/* ... remaining code omitted ... */

}

Original link: https://blog.csdn.net/qq_18804879/article/details/118387147

irqreturn_t dwc3_interrupt(int irq, void *_dwc)

{

struct dwc3 *dwc = _dwc;

irqreturn_t ret = IRQ_NONE;

irqreturn_t status;

status = dwc3_check_event_buf(dwc->ev_buf);

if (status == IRQ_WAKE_THREAD)

ret = status;

if (ret == IRQ_WAKE_THREAD)

// schedule the bottom half, which processes the interrupt events

queue_work(dwc->dwc_wq, &dwc->bh_work);

return IRQ_HANDLED;

}

dwc3_bh_work

void dwc3_bh_work(struct work_struct *w)

{

// w points to dwc->bh_work

struct dwc3 *dwc = container_of(w, struct dwc3, bh_work);

pm_runtime_get_sync(dwc->dev);

dwc3_thread_interrupt(dwc->irq, dwc->ev_buf);

pm_runtime_put(dwc->dev);

}

dwc3_thread_interrupt

static irqreturn_t dwc3_thread_interrupt(int irq, void *_evt)

{

struct dwc3_event_buffer *evt = _evt;

struct dwc3 *dwc = evt->dwc;

unsigned long flags;

irqreturn_t ret = IRQ_NONE;

ktime_t start_time;

start_time = ktime_get();

local_bh_disable();

spin_lock_irqsave(&dwc->lock, flags);

dwc->bh_handled_evt_cnt[dwc->irq_dbg_index] = 0;

ret = dwc3_process_event_buf(evt);

spin_unlock_irqrestore(&dwc->lock, flags);

local_bh_enable();

dwc->bh_start_time[dwc->bh_dbg_index] = start_time;

dwc->bh_completion_time[dwc->bh_dbg_index] =

ktime_to_us(ktime_sub(ktime_get(), start_time));

dwc->bh_dbg_index = (dwc->bh_dbg_index + 1) % MAX_INTR_STATS;

return ret;

}

dwc3_process_event_buf

static irqreturn_t dwc3_process_event_buf(struct dwc3_event_buffer *evt)

{

struct dwc3 *dwc = evt->dwc;

irqreturn_t ret = IRQ_NONE;

int left;

u32 reg;

left = evt->count;

if (!(evt->flags & DWC3_EVENT_PENDING))

return IRQ_NONE;

while (left > 0) {

union dwc3_event event;

event.raw = *(u32 *) (evt->cache + evt->lpos);

dwc3_process_event_entry(dwc, &event);

if (dwc->err_evt_seen) {

/*

* if erratic error, skip remaining events

* while controller undergoes reset

*/

evt->lpos = (evt->lpos + left) %

DWC3_EVENT_BUFFERS_SIZE;

if (dwc3_notify_event(dwc,

DWC3_CONTROLLER_ERROR_EVENT, 0))

dwc->err_evt_seen = 0;

dwc->retries_on_error++;

break;

}

/*

* FIXME we wrap around correctly to the next entry as

* almost all entries are 4 bytes in size. There is one

* entry which has 12 bytes which is a regular entry

* followed by 8 bytes data. ATM I don't know how

* things are organized if we get next to the a

* boundary so I worry about that once we try to handle

* that.

*/

evt->lpos = (evt->lpos + 4) % evt->length;

left -= 4;

}

dwc->bh_handled_evt_cnt[dwc->bh_dbg_index] += (evt->count / 4);

evt->count = 0;

ret = IRQ_HANDLED;

/* Unmask interrupt */

reg = dwc3_readl(dwc->regs, DWC3_GEVNTSIZ(0));

reg &= ~DWC3_GEVNTSIZ_INTMASK;

dwc3_writel(dwc->regs, DWC3_GEVNTSIZ(0), reg);

if (dwc->imod_interval) {

dwc3_writel(dwc->regs, DWC3_GEVNTCOUNT(0), DWC3_GEVNTCOUNT_EHB);

dwc3_writel(dwc->regs, DWC3_DEV_IMOD(0), dwc->imod_interval);

}

/* Keep the clearing of DWC3_EVENT_PENDING at the end */

evt->flags &= ~DWC3_EVENT_PENDING;

return ret;

}
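
The loop in dwc3_process_event_buf treats the event cache as a ring of 4-byte entries: it reads one entry at lpos, advances lpos by 4 modulo the ring length, and repeats until the pending byte count is used up. A minimal user-space sketch of that wrap-around read follows. The struct, the 16-byte ring size, and the output array are hypothetical; the real function dispatches each event instead of copying it out.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical, reduced event buffer: a 16-byte ring of 4-byte events. */
struct demo_evt_buf {
    uint8_t      cache[16];  /* copy of the hardware event ring */
    unsigned int length;     /* ring size in bytes */
    unsigned int lpos;       /* current read offset in bytes */
    unsigned int count;      /* unread bytes reported by the hardware */
};

/* Copy all pending events to out[] in order and return how many were read. */
static unsigned int demo_process_events(struct demo_evt_buf *evt,
                                        uint32_t *out, unsigned int max)
{
    unsigned int n = 0;
    int left = (int)evt->count;

    assert(evt->length > 0 && evt->count / 4 <= max);
    while (left > 0) {
        uint32_t raw;

        memcpy(&raw, evt->cache + evt->lpos, sizeof(raw));
        out[n++] = raw;
        evt->lpos = (evt->lpos + 4) % evt->length;  /* wrap at the end of the ring */
        left -= 4;
    }
    evt->count = 0;
    return n;
}
```

Starting at lpos 12 in a 16-byte ring with 8 unread bytes, the first event is read from offset 12 and the second from offset 0, which leaves lpos at 4.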

dwc3_process_event_entry

static void dwc3_process_event_entry(struct dwc3 *dwc,

const union dwc3_event *event)

{

trace_dwc3_event(event->raw, dwc);

if (!event->type.is_devspec)

dwc3_endpoint_interrupt(dwc, &event->depevt);

else if (event->type.type == DWC3_EVENT_TYPE_DEV)

dwc3_gadget_interrupt(dwc, &event->devt);

else

dev_err(dwc->dev, "UNKNOWN IRQ type %d\n", event->raw);

}

[drivers/usb/dwc3/core.h]

/* dwc3 USB device endpoint event definitions */

#define DWC3_DEPEVT_XFERCOMPLETE 0x01 // transfer complete

#define DWC3_DEPEVT_XFERINPROGRESS 0x02 // transfer in progress

#define DWC3_DEPEVT_XFERNOTREADY 0x03 // transfer not ready

// RX/TX FIFO event (IN -> underrun, OUT -> overrun)

#define DWC3_DEPEVT_RXTXFIFOEVT 0x04

#define DWC3_DEPEVT_STREAMEVT 0x06 // stream event

#define DWC3_DEPEVT_EPCMDCMPLT 0x07 // endpoint command complete

struct dwc3_event_depevt { /* USB device endpoint event */

u32 one_bit:1; // not used

u32 endpoint_number:5; // endpoint number

u32 endpoint_event:4; // endpoint event

u32 reserved11_10:2;

u32 status:4; // endpoint event status

u32 parameters:16;

} __packed;

/* dwc3 USB device controller event types */

#define DWC3_DEVICE_EVENT_DISCONNECT 0

#define DWC3_DEVICE_EVENT_RESET 1

#define DWC3_DEVICE_EVENT_CONNECT_DONE 2

#define DWC3_DEVICE_EVENT_LINK_STATUS_CHANGE 3

#define DWC3_DEVICE_EVENT_WAKEUP 4

#define DWC3_DEVICE_EVENT_HIBER_REQ 5

#define DWC3_DEVICE_EVENT_EOPF 6

#define DWC3_DEVICE_EVENT_SOF 7

#define DWC3_DEVICE_EVENT_ERRATIC_ERROR 9

#define DWC3_DEVICE_EVENT_CMD_CMPL 10

#define DWC3_DEVICE_EVENT_OVERFLOW 11

// VndrDevTstRcved 12

struct dwc3_event_devt { /* USB device event */

u32 one_bit:1; // not used

u32 device_event:7; // device event

u32 type:4; // device event type

u32 reserved15_12:4;

u32 event_info:9; // event information

u32 reserved31_25:7;

} __packed;

/* Other Core Events */

struct dwc3_event_gevt {

u32 one_bit:1; // not used

u32 device_event:7; // (0x03) Carkit or (0x04) I2C event

u32 phy_port_number:4; // self-explanatory

u32 reserved31_12:20;

} __packed;
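
The bit-field widths in struct dwc3_event_depevt describe how one raw 32-bit event word is split up. The same split can be written with shifts and masks, which avoids relying on the compiler's bit-field ordering. This sketch assumes the fields are allocated from the least significant bit upward, as on the little-endian targets where this layout is normally used. The struct and function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Decoded endpoint event; widths follow struct dwc3_event_depevt. */
struct demo_depevt {
    unsigned int is_devspec;  /* bit 0: 0 for an endpoint event */
    unsigned int epnum;       /* bits 1-5: endpoint number */
    unsigned int event;       /* bits 6-9: endpoint event, e.g. 0x02 XferInProgress */
    unsigned int status;      /* bits 12-15: event status */
    unsigned int parameters;  /* bits 16-31: event parameters */
};

/* Split a raw event word into its fields, assuming LSB-first field allocation. */
static struct demo_depevt demo_decode_depevt(uint32_t raw)
{
    struct demo_depevt d;

    d.is_devspec = raw & 0x1u;
    d.epnum      = (raw >> 1) & 0x1fu;
    d.event      = (raw >> 6) & 0xfu;
    d.status     = (raw >> 12) & 0xfu;
    d.parameters = (raw >> 16) & 0xffffu;
    return d;
}
```

A word built from endpoint 2, event 0x02, status 0x8, and parameters 0x1234 decodes back to exactly those values; dwc3_process_event_entry makes the same first decision by testing the lowest bit before choosing the endpoint or device handler.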

dwc3_endpoint_interrupt

static void dwc3_endpoint_interrupt(struct dwc3 *dwc,

const struct dwc3_event_depevt *event)

{

struct dwc3_ep *dep;

u8 epnum = event->endpoint_number;

u8 cmd;

dep = dwc->eps[epnum];

if (!(dep->flags & DWC3_EP_ENABLED)) {

if (!(dep->flags & DWC3_EP_TRANSFER_STARTED))

return;

/* Handle only EPCMDCMPLT when EP disabled */

if (event->endpoint_event != DWC3_DEPEVT_EPCMDCMPLT)

return;

}

if (epnum == 0 || epnum == 1) {

dwc3_ep0_interrupt(dwc, event);

return;

}

dep->dbg_ep_events.total++;

switch (event->endpoint_event) {

case DWC3_DEPEVT_XFERINPROGRESS:

dep->dbg_ep_events.xferinprogress++;

dwc3_gadget_endpoint_transfer_in_progress(dep, event);

break;

case DWC3_DEPEVT_XFERNOTREADY:

dep->dbg_ep_events.xfernotready++;

dwc3_gadget_endpoint_transfer_not_ready(dep, event);

break;

case DWC3_DEPEVT_EPCMDCMPLT:

dep->dbg_ep_events.epcmdcomplete++;

cmd = DEPEVT_PARAMETER_CMD(event->parameters);

if (cmd == DWC3_DEPCMD_ENDTRANSFER &&

(dep->flags & DWC3_EP_END_TRANSFER_PENDING)) {

dep->flags &= ~DWC3_EP_END_TRANSFER_PENDING;

dep->flags &= ~DWC3_EP_TRANSFER_STARTED;

dwc3_gadget_ep_cleanup_cancelled_requests(dep);

dbg_log_string("DWC3_DEPEVT_EPCMDCMPLT (%d)",

dep->number);

if (dep->flags & DWC3_EP_PENDING_CLEAR_STALL) {

struct dwc3 *dwc = dep->dwc;

dep->flags &= ~DWC3_EP_PENDING_CLEAR_STALL;

if (dwc3_send_clear_stall_ep_cmd(dep)) {

struct usb_ep *ep0 = &dwc->eps[0]->endpoint;

dev_err(dwc->dev, "failed to clear STALL on %s\n",

dep->name);

if (dwc->delayed_status)

__dwc3_gadget_ep0_set_halt(ep0, 1);

return;

}

dep->flags &= ~(DWC3_EP_STALL | DWC3_EP_WEDGE);

if (dwc->clear_stall_protocol == dep->number)

dwc3_ep0_send_delayed_status(dwc);

}

if ((dep->flags & DWC3_EP_DELAY_START) &&

!usb_endpoint_xfer_isoc(dep->endpoint.desc))

__dwc3_gadget_kick_transfer(dep);

dep->flags &= ~DWC3_EP_DELAY_START;

}

break;

case DWC3_DEPEVT_STREAMEVT:

dep->dbg_ep_events.streamevent++;

break;

case DWC3_DEPEVT_XFERCOMPLETE:

dep->dbg_ep_events.xfercomplete++;

break;

case DWC3_DEPEVT_RXTXFIFOEVT:

dep->dbg_ep_events.rxtxfifoevent++;

break;

}

}

dwc3_gadget_endpoint_transfer_in_progress

static void dwc3_gadget_endpoint_transfer_in_progress(struct dwc3_ep *dep,

const struct dwc3_event_depevt *event)

{

struct dwc3 *dwc = dep->dwc;

unsigned status = 0;

bool stop = false;

dwc3_gadget_endpoint_frame_from_event(dep, event);

if (event->status & DEPEVT_STATUS_BUSERR)

status = -ECONNRESET;

dwc3_gadget_ep_cleanup_completed_requests(dep, event, status);

if (event->status & DEPEVT_STATUS_MISSED_ISOC) {

status = -EXDEV;

dep->missed_isoc_packets++;

dbg_event(dep->number, "MISSEDISOC", dep->missed_isoc_packets);

}

if (usb_endpoint_xfer_isoc(dep->endpoint.desc) &&

(list_empty(&dep->started_list))) {

stop = true;

dbg_event(dep->number, "STOPXFER", dep->frame_number);

}

if (dep->flags & DWC3_EP_END_TRANSFER_PENDING)

goto out;

if (stop)

dwc3_stop_active_transfer(dep, true, true);

else if (dwc3_gadget_ep_should_continue(dep))

__dwc3_gadget_kick_transfer(dep);

out:

/*

* WORKAROUND: This is the 2nd half of U1/U2 -> U0 workaround.

* See dwc3_gadget_linksts_change_interrupt() for 1st half.

*/

if (dwc->revision < DWC3_REVISION_183A) {

u32 reg;

int i;

for (i = 0; i < DWC3_ENDPOINTS_NUM; i++) {

dep = dwc->eps[i];

if (!(dep->flags & DWC3_EP_ENABLED))

continue;

if (!list_empty(&dep->started_list))

return;

}

reg = dwc3_readl(dwc->regs, DWC3_DCTL);

reg |= dwc->u1u2;

dwc3_writel(dwc->regs, DWC3_DCTL, reg);

dwc->u1u2 = 0;

}

}

dwc3_gadget_ep_cleanup_completed_requests

static void dwc3_gadget_ep_cleanup_completed_requests(struct dwc3_ep *dep,

const struct dwc3_event_depevt *event, int status)

{

struct dwc3_request *req;

while (!list_empty(&dep->started_list)) {

int ret;

req = next_request(&dep->started_list);

ret = dwc3_gadget_ep_cleanup_completed_request(dep, event,

req, status);

if (ret)

break;

}

}

dwc3_gadget_ep_cleanup_completed_request

static int dwc3_gadget_ep_cleanup_completed_request(struct dwc3_ep *dep,

const struct dwc3_event_depevt *event,

struct dwc3_request *req, int status)

{

struct dwc3 *dwc = dep->dwc;

int request_status;

int ret;

/*

* If the HWO is set, it implies the TRB is still being

* processed by the core. Hence do not reclaim it until

* it is processed by the core.

*/

if (req->trb->ctrl & DWC3_TRB_CTRL_HWO) {

dbg_event(0xFF, "PEND TRB", dep->number);

return 1;

}

if (req->request.num_mapped_sgs)

ret = dwc3_gadget_ep_reclaim_trb_sg(dep, req, event,

status);

else

ret = dwc3_gadget_ep_reclaim_trb_linear(dep, req, event,

status);

req->request.actual = req->request.length - req->remaining;

if (!dwc3_gadget_ep_request_completed(req))

goto out;

if (req->needs_extra_trb) {

unsigned int maxp = usb_endpoint_maxp(dep->endpoint.desc);

ret = dwc3_gadget_ep_reclaim_trb_linear(dep, req, event,

status);

/* Reclaim MPS padding TRB for ZLP */

if (!req->direction && req->request.zero && req->request.length &&

!usb_endpoint_xfer_isoc(dep->endpoint.desc) &&

(IS_ALIGNED(req->request.length, maxp)))

ret = dwc3_gadget_ep_reclaim_trb_linear(dep, req, event, status);

req->needs_extra_trb = false;

}

/*

* The event status only reflects the status of the TRB with IOC set.

* For the requests that don't set interrupt on completion, the driver

* needs to check and return the status of the completed TRBs associated

* with the request. Use the status of the last TRB of the request.

*/

if (req->request.no_interrupt) {

struct dwc3_trb *trb;

trb = dwc3_ep_prev_trb(dep, dep->trb_dequeue);

switch (DWC3_TRB_SIZE_TRBSTS(trb->size)) {

case DWC3_TRBSTS_MISSED_ISOC:

/* Isoc endpoint only */

request_status = -EXDEV;

break;

case DWC3_TRB_STS_XFER_IN_PROG:

/* Applicable when End Transfer with ForceRM=0 */

case DWC3_TRBSTS_SETUP_PENDING:

/* Control endpoint only */

case DWC3_TRBSTS_OK:

default:

request_status = 0;

break;

}

} else {

request_status = status;

}

dwc3_gadget_giveback(dep, req, request_status);

out:

return ret;

}

dwc3_gadget_giveback

/**

* dwc3_gadget_giveback - call struct usb_request's ->complete callback

* @dep: The endpoint to whom the request belongs to

* @req: The request we're giving back

* @status: completion code for the request

*

* Must be called with controller's lock held and interrupts disabled. This

* function will unmap @req and call its ->complete() callback to notify upper

* layers that it has completed.

*/

void dwc3_gadget_giveback(struct dwc3_ep *dep, struct dwc3_request *req,

int status)

{

struct dwc3 *dwc = dep->dwc;

dwc3_gadget_del_and_unmap_request(dep, req, status);

req->status = DWC3_REQUEST_STATUS_COMPLETED;

if (dep->endpoint.desc && usb_endpoint_xfer_isoc(dep->endpoint.desc) &&

(list_empty(&dep->started_list))) {

dep->flags |= DWC3_EP_PENDING_REQUEST;

dbg_event(dep->number, "STARTEDLISTEMPTY", 0);

}

spin_unlock(&dwc->lock);

usb_gadget_giveback_request(&dep->endpoint, &req->request);

spin_lock(&dwc->lock);

}

usb_gadget_giveback_request

/**

* usb_gadget_giveback_request - give the request back to the gadget layer

* Context: in_interrupt()

*

* This is called by device controller drivers in order to return the

* completed request back to the gadget layer.

*/

void usb_gadget_giveback_request(struct usb_ep *ep,

struct usb_request *req)

{

if (likely(req->status == 0))

usb_led_activity(USB_LED_EVENT_GADGET);

trace_usb_gadget_giveback_request(ep, req, 0);

req->complete(ep, req); // calls the function driver's completion handler, here uvc_video_complete

}

EXPORT_SYMBOL_GPL(usb_gadget_giveback_request);

uvc_video_complete: keeps buffers cycling through enqueue and dequeue

/*

* The USB request completion handler will get the buffer at the irqqueue head

* under protection of the queue spinlock. If the queue is empty, the streaming

* paused flag will be set. Right after releasing the spinlock a userspace

* application can queue a buffer. The flag will then cleared, and the ioctl

* handler will restart the video stream.

*/

static void

uvc_video_complete(struct usb_ep *ep, struct usb_request *req)

{

struct uvc_video *video = req->context;

struct uvc_video_queue *queue = &video->queue;

struct uvc_buffer *buf;

unsigned long flags;

int ret;

switch (req->status) {

case 0:

break;

case -ESHUTDOWN: /* disconnect from host. */

uvcg_dbg(&video->uvc->func, "VS request cancelled.\n");

uvcg_queue_cancel(queue, 1);

goto requeue;

default:

uvcg_warn(&video->uvc->func,

"VS request completed with status %d.\n",

req->status);

uvcg_queue_cancel(queue, 0);

goto requeue;

}

spin_lock_irqsave(&video->queue.irqlock, flags);

buf = uvcg_queue_head(&video->queue);

if (buf == NULL) {

spin_unlock_irqrestore(&video->queue.irqlock, flags);

goto requeue;

}

video->encode(req, video, buf);

ret = uvcg_video_ep_queue(video, req);

spin_unlock_irqrestore(&video->queue.irqlock, flags);

if (ret < 0) {

uvcg_queue_cancel(queue, 0);

goto requeue;

}

return;

requeue:

spin_lock_irqsave(&video->req_lock, flags);

list_add_tail(&req->list, &video->req_free);

spin_unlock_irqrestore(&video->req_lock, flags);

}
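
Taken together, uvcg_video_pump and uvc_video_complete form a loop: the pump pairs free requests with queued video data until one side runs out, and each completion either refills the same request with more data or returns it to the free list. The sketch below models that loop with plain counters. It is a simplified illustration under stated assumptions, not the driver's logic: locking, error paths, and the encode callback are left out, and every name in it is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical model: lists and buffers are reduced to counters. */
struct demo_video {
    int          req_free;      /* requests on the req_free list */
    int          in_flight;     /* requests queued on the endpoint */
    unsigned int bytes_queued;  /* video payload waiting in queued buffers */
    unsigned int req_size;      /* payload bytes one request can carry */
};

/* Move up to one request's worth of payload out of the queued buffers. */
static void demo_encode(struct demo_video *v)
{
    unsigned int chunk = v->bytes_queued < v->req_size ? v->bytes_queued
                                                       : v->req_size;
    v->bytes_queued -= chunk;
}

/* Pump: fill free requests with queued data; returns the number submitted. */
static int demo_video_pump(struct demo_video *v)
{
    int submitted = 0;

    assert(v != NULL && v->req_size > 0);
    while (v->req_free > 0 && v->bytes_queued > 0) {
        v->req_free--;
        demo_encode(v);
        v->in_flight++;
        submitted++;
    }
    return submitted;
}

/* Completion: reuse the request if data remains, else put it back on req_free. */
static void demo_video_complete(struct demo_video *v)
{
    assert(v != NULL && v->in_flight > 0);
    if (v->bytes_queued > 0) {
        demo_encode(v);      /* request is re-queued and stays in flight */
    } else {
        v->in_flight--;      /* nothing to send: request becomes free again */
        v->req_free++;
    }
}
```

With 4 free requests of 3072 bytes and 20000 bytes queued, the pump submits all 4 requests and leaves 7712 bytes; three completions drain the remaining data, and the fourth returns a request to the free list.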
