
1: Requests: fetching HTML and submitting requests automatically

2: The Robots Exclusion Standard
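As a minimal sketch of how a crawler can honor the Robots Exclusion Standard, the standard library's `urllib.robotparser` can answer whether a URL may be fetched. The robots.txt text, the `MyCrawler` user agent, and the `example.com` URLs below are made up for illustration, and the rules are parsed from a string so the example runs offline.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so that no network access is needed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_text):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
allowed_public = parser.can_fetch("MyCrawler", "https://example.com/index.html")
allowed_private = parser.can_fetch("MyCrawler", "https://example.com/private/a.html")
print(allowed_public, allowed_private)
```

For a live site, `set_url()` followed by `read()` would load the real robots.txt instead of the inline text.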

3: Hands-on crawler projects

3.1 Fetching a JD.com product page

import requests

def getHTMLText(url, headers):
    try:
        # Give up after 30 seconds
        r = requests.get(url, headers=headers, timeout=30)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text[:500]
    except requests.RequestException:
        return "Request failed"

if __name__ == "__main__":
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.100 Safari/537.36'
    }
    url = "https://item.jd.com/100013255294.html"
    print(getHTMLText(url, headers))

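3.2 Amazon and Baidu search (the anti-crawler workaround no longer works)

These sites require getting around their anti-crawler measures...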

3.3 360 Search
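A search request of this kind boils down to a base URL plus a query string. The sketch below uses the standard library's `urlencode` to show roughly what URL results when a dict such as `{'q': 'python'}` is passed as query parameters; `build_search_url` is a hypothetical helper, not part of requests.

```python
from urllib.parse import urlencode


def build_search_url(base, params):
    """Return base with params appended as a percent-encoded query string."""
    return base + "?" + urlencode(params)


plain_url = build_search_url("http://www.so.com/s", {"q": "python"})
encoded_url = build_search_url("http://www.so.com/s", {"q": "python 爬虫"})
print(plain_url)
print(encoded_url)  # the space and the non-ASCII characters are escaped
```

Building the URL by hand like this is mostly useful for checking what will be sent; letting the HTTP library encode the parameters avoids escaping mistakes.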

import requests

keyword = "python"

try:
    kv = {'q': keyword}
    r = requests.get("http://www.so.com/s", params=kv, timeout=30)
    r.raise_for_status()
    print(r.text[:1000])
except requests.RequestException:
    print("Error")

3.4 Saving an image to a specified path

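import os

import requests

url = "https://attach.52pojie.cn/forum/201908/18/020300rqz63o54npqxwq7x.png"
root = "D:/"
# Use the last segment of the URL as the file name
path = root + url.split('/')[-1]

try:
    if not os.path.exists(root):
        os.mkdir(root)
    if not os.path.exists(path):
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        # The with block closes the file, so no explicit close() is needed
        with open(path, 'wb') as f:
            f.write(r.content)
        print("Image saved")
    else:
        print("Image already exists")
except (requests.RequestException, OSError):
    print("Error")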
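The path handling can be tried without downloading anything. The following sketch derives the file name from the URL path and writes placeholder bytes into a temporary directory; `local_path_for` and `save_bytes` are hypothetical helper names, and the temporary directory stands in for a root such as the `D:` drive.

```python
import os
import tempfile
from urllib.parse import urlsplit


def local_path_for(url, root):
    """Build a local file path from the last segment of the URL path."""
    filename = os.path.basename(urlsplit(url).path)
    return os.path.join(root, filename)


def save_bytes(path, data):
    """Create the parent directory if needed, then write data in binary mode."""
    os.makedirs(os.path.dirname(path), exist_ok=True)
    with open(path, "wb") as f:
        f.write(data)


url = "https://attach.52pojie.cn/forum/201908/18/020300rqz63o54npqxwq7x.png"
root = os.path.join(tempfile.mkdtemp(), "images")  # throwaway directory for the demo
path = local_path_for(url, root)
save_bytes(path, b"placeholder bytes, not a real image")
print(os.path.basename(path), os.path.exists(path))
```

Using `urlsplit` rather than splitting the whole URL on `/` keeps a query string such as `?x=1` out of the file name.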

3.5 Looking up the location of an IP address

import re
import json

import requests

def getSource(ip):
    url = 'https://www.ip138.com/iplookup.asp?ip=' + ip + '&action=2'
    print("URL to query: " + url)
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36 SE 2.X MetaSr 1.0"}
    r = requests.get(url, headers=headers, timeout=30)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    response = r.text
    # print(response)
    # Find the "ip_result = {...}" assignment embedded in the page
    match = re.search(r"ip_result?\s\W\s\W\W.+}", response)
    if match is None:
        print("ip_result not found in the page")
        return
    res = match.group()
    # res = res.replace(']}', '')
    res = res.replace('ip_result = ', '')
    new_res = json.loads(res)
    for k, v in new_res.items():
        print(k, v)

if __name__ == "__main__":
    ip = '220.196.241.222'  # any IP address to look up
    getSource(ip)
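The extraction step (a regular expression followed by `json.loads`) can be exercised offline. This is a sketch under assumptions: `SAMPLE_PAGE` is a made-up fragment with invented keys and a documentation-range IP address, not the real page, and the pattern is a slightly stricter variant that captures only the braces.

```python
import json
import re

# Made-up page fragment for illustration only; the real page layout may differ.
SAMPLE_PAGE = """
<script>
var ip_result = {"ip": "203.0.113.7", "location": "Example City"};
</script>
"""


def extract_ip_result(page_text):
    """Return the dict assigned to ip_result, or None if it is not found."""
    match = re.search(r"ip_result\s*=\s*(\{.*?\})\s*;", page_text, re.S)
    if match is None:
        return None
    return json.loads(match.group(1))


result = extract_ip_result(SAMPLE_PAGE)
print(result)
```

Capturing the object in a group removes the need for a separate `replace()` call to strip the variable name.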

3: Getting started with Beautiful Soup (bs4)

Beautiful Soup is a library for parsing, traversing, and maintaining the "tag tree" of a document.

import requests
from bs4 import BeautifulSoup

r = requests.get("https://python123.io/ws/demo.html")
demo = r.text
soup = BeautifulSoup(demo, "html.parser")

# print(soup)
# print(soup.title)  # the title tag
# print(soup.a.name)  # every <tag> has a name, available as <tag>.name (a string)
# print(soup.a.parent.name)  # name of the a tag's parent
# print(soup.a.parent.parent.name)  # name of the a tag's grandparent

print("Full a tag: ")
print(soup.a)
print("All attributes of the a tag: ")
print(soup.a.attrs)  # dict holding every attribute of the a tag
print("Single attribute 'href' of the a tag: ")
print(soup.a.attrs["href"])  # the href attribute of the a tag
print("String content of the a tag: ")
print(soup.a.string)  # the string inside the a tag
print("======== Pretty-printed ========")
print(soup.prettify())

4: Information markup and extraction methods

Three markup formats: XML, JSON, and YAML.
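To make the comparison concrete, the sketch below writes one made-up record in each of the three formats. JSON and XML come from the standard library; the YAML text is assembled by hand because the standard library has no YAML module, so it only illustrates the `key: value` layout.

```python
import json
import xml.etree.ElementTree as ET

record = {"name": "Example University", "city": "Beijing"}  # made-up record

# JSON: key-value pairs inside braces.
json_text = json.dumps(record)

# XML: nested tags, similar in spirit to HTML.
root = ET.Element("university")
for key, value in record.items():
    ET.SubElement(root, key).text = value
xml_text = ET.tostring(root, encoding="unicode")

# YAML: one "key: value" pair per line (hand-built for illustration).
yaml_text = "\n".join(f"{key}: {value}" for key, value in record.items())

print(json_text)
print(xml_text)
print(yaml_text)
```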

import requests

from bs4 import BeautifulSoup

r = requests.get("https://python123.io/ws/demo.html")

demo = r.text

soup = BeautifulSoup(demo, "html.parser")

for link in soup.find_all('a'):

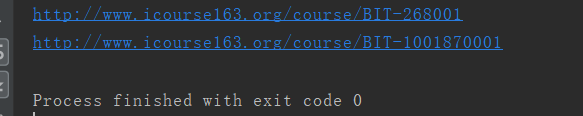

print(link.get('href'))运行结果:

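5: Searching HTML content with bs4

recursive (a find_all parameter): whether to search all descendants rather than only direct children; the default is True.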

1. Search by tag name

soup.find_all('a')

2. Search by attribute value

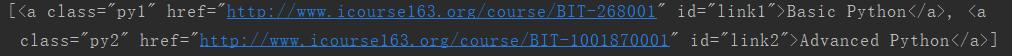

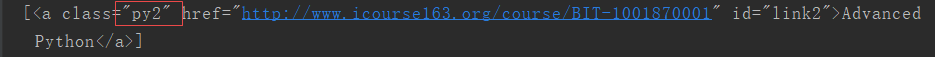

print(soup.find_all('a', 'py2'))  # a tags whose class is py2

3. Search for content containing a given string

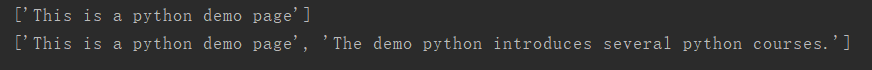

import re

print(soup.find_all(string='This is a python demo page'))  # exact match only; returns an empty list otherwise (useful when the text is known in advance)
print(soup.find_all(string=re.compile('python')))  # every string that contains "python"
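The three lookups, plus the `recursive` switch, can be compared side by side on a small inline document. The HTML below is made up (the `example.com` links, ids, and text are assumptions) so the sketch runs without a network request; it assumes a bs4 version that accepts the `string` argument.

```python
import re

from bs4 import BeautifulSoup

# Small inline document with made-up content.
HTML = """
<html><body>
<p class="title"><b>A python demo page</b></p>
<p>Courses:
<a href="http://example.com/basic" class="py1" id="link1">Basic Python</a> and
<a href="http://example.com/advanced" class="py2" id="link2">Advanced Python</a>.</p>
</body></html>
"""

soup = BeautifulSoup(HTML, "html.parser")

by_name = soup.find_all("a")                          # every <a> tag
by_class = soup.find_all("a", "py2")                  # <a> tags whose class is py2
by_id = soup.find_all(id="link1")                     # any tag with id="link1"
by_text = soup.find_all(string=re.compile("python"))  # case-sensitive substring match
direct = soup.find_all("a", recursive=False)          # direct children of the root only

print([a["href"] for a in by_name])
print(by_class[0].string)
print(by_id[0]["href"])
print(by_text)
print(direct)
```

With `recursive=False` the search stops at the top level, where there is no `a` tag, so the last result is empty.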

6: Focused crawler for Chinese university rankings (crawls only the given URL and does not follow links)

import requests
import bs4

def getHTMLText(url):
    # Download the page; return an empty string if the request fails
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text
    except requests.RequestException:
        return ""

def fillUnivList(uList, html):
    # Collect rank, name, and total score from each table row
    soup = bs4.BeautifulSoup(html, "html.parser")
    tbody = soup.find('tbody')
    if tbody is None:  # e.g. the download failed and html is empty
        return
    for tr in tbody.children:
        # Skip the whitespace strings between rows
        if isinstance(tr, bs4.element.Tag):
            tds = tr('td')
            uList.append([tds[0].string, tds[1].string, tds[3].string])

def printUnivList(uList, num):
    print("{:^10}\t{:^6}\t{:^10}".format("Rank", "University", "Score"))
    # Slicing avoids an IndexError when fewer than num rows were parsed
    for u in uList[:num]:
        print("{:^10}\t{:^6}\t{:^10}".format(u[0], u[1], u[2]))

def main():
    uinfo = []
    url = 'http://www.zuihaodaxue.cn/zuihaodaxuepaiming2016.html'
    html = getHTMLText(url)
    fillUnivList(uinfo, html)
    printUnivList(uinfo, 20)

if __name__ == '__main__':
    main()
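The row-parsing step can be checked against a small inline table instead of the live page. This is a sketch, not the original site's markup: the table content is made up, and it assumes the same column positions as the code above (rank in column 0, name in column 1, total score in column 3). `parse_rows` is a hypothetical name.

```python
import bs4

# Made-up table; the third column is present but unused, as in the code above.
HTML = """
<table><tbody>
<tr><td>1</td><td>University A</td><td>Province X</td><td>95.0</td></tr>
<tr><td>2</td><td>University B</td><td>Province Y</td><td>90.5</td></tr>
</tbody></table>
"""


def parse_rows(html):
    """Return [rank, name, score] for every table row in html."""
    soup = bs4.BeautifulSoup(html, "html.parser")
    tbody = soup.find("tbody")
    if tbody is None:  # nothing to parse, e.g. an empty download
        return []
    rows = []
    for tr in tbody.children:
        if isinstance(tr, bs4.element.Tag):  # skip whitespace strings between rows
            tds = tr("td")                   # shorthand for tr.find_all("td")
            if len(tds) >= 4:
                rows.append([tds[0].string, tds[1].string, tds[3].string])
    return rows


rows = parse_rows(HTML)
print(rows)
```

Returning a list rather than filling one passed in makes the function easier to test on its own.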

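def printUnivList(uList, num):
    # chr(12288) is the full-width (CJK) space; using it as the fill character
    # for the name column keeps Chinese university names aligned
    tplt = "{0:^10}\t{1:{3}^10}\t{2:^10}"
    print(tplt.format("Rank", "University", "Score", chr(12288)))
    for u in uList[:num]:
        print(tplt.format(u[0], u[1], u[2], chr(12288)))

Output: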

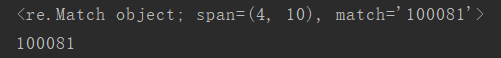
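The reason `chr(12288)` helps is that `str.format` pads by character count, while a Chinese character usually occupies two columns in a monospaced terminal. Padding with the full-width space, which is as wide as a Chinese character, keeps the column width consistent. A small sketch, with `pad_center` as a hypothetical helper:

```python
FULL_WIDTH_SPACE = chr(12288)  # U+3000 IDEOGRAPHIC SPACE


def pad_center(text, width, fill=" "):
    """Center text in a field of width characters using the given fill character."""
    return "{0:{1}^{2}}".format(text, fill, width)


ascii_padded = pad_center("清华大学", 10)
wide_padded = pad_center("清华大学", 10, FULL_WIDTH_SPACE)

# Both strings have 10 characters, but only the second one is padded with
# characters as wide as the text itself.
print("[" + ascii_padded + "]")
print("[" + wide_padded + "]")
```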

7: Getting started with the re (regular expression) library: extracting key information from a page

import re

# Search for a six-digit postal code whose first digit is not 0
match = re.search(r'[1-9]\d{5}', 'BIT 100081')
print(match)
if match:
    print(match.group(0))
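Besides `re.search`, a few other functions in the `re` module are commonly used for extraction. The sketch below applies the same postal-code pattern to a made-up string containing two codes.

```python
import re

text = "BIT 100081 TSU 100084"  # made-up sample string

first = re.search(r"[1-9]\d{5}", text)       # first match anywhere in the string
at_start = re.match(r"[1-9]\d{5}", text)     # matches only at the start, so None here
all_codes = re.findall(r"[1-9]\d{5}", text)  # every non-overlapping match, as strings

pattern = re.compile(r"[1-9]\d{5}")          # precompiled for repeated use
spans = [m.span() for m in pattern.finditer(text)]

print(first.group(0))
print(at_start)
print(all_codes)
print(spans)
```

Checking the result of `search` or `match` for `None` before calling `group()` avoids an `AttributeError` when nothing matches.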

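8: Project 1: focused crawler for comparing Taobao prices

Taobao's URL rules have changed and the search now requires logging in, so this section will be filled in later.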
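The sample URLs in this section differ mainly in the `s` parameter, which steps through 44, 88, and 132. Assuming `s` is an item offset with 44 items per page (an inference from those values, not something confirmed by the site), page URLs could be generated as in this sketch. `page_url` is a hypothetical helper that sets only `q` and `s` and omits the other query parameters.

```python
from urllib.parse import urlencode

ITEMS_PER_PAGE = 44  # assumption inferred from the s=44, 88, 132 steps


def page_url(keyword, page):
    """Build a search URL for the given keyword and page index (offset = 44 * page)."""
    query = urlencode({"q": keyword, "s": ITEMS_PER_PAGE * page})
    return "https://s.taobao.com/search?" + query


urls = [page_url("书包", page) for page in range(1, 4)]
for u in urls:
    print(u)
```

Since the search requires a login, these URLs are unlikely to return results from a plain request; the sketch only covers how the addresses are formed.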

https://s.taobao.com/search?q=书包&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20200623&ie=utf8&bcoffset=3&ntoffset=3&p4ppushleft=1%2C48&s=44

https://s.taobao.com/search?q=书包&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20200623&ie=utf8&bcoffset=0&ntoffset=6&p4ppushleft=1%2C48&s=88

https://s.taobao.com/search?q=书包&imgfile=&js=1&stats_click=search_radio_all%3A1&initiative_id=staobaoz_20200623&ie=utf8&bcoffset=-3&ntoffset=-3&p4ppushleft=1%2C48&s=132

9: Project 2: focused crawler for stock data

Target site: https://www.eastmoney.com/

To be filled in later.

The Scrapy crawler framework
