Prerequisites (the full configuration files are pasted at the end):

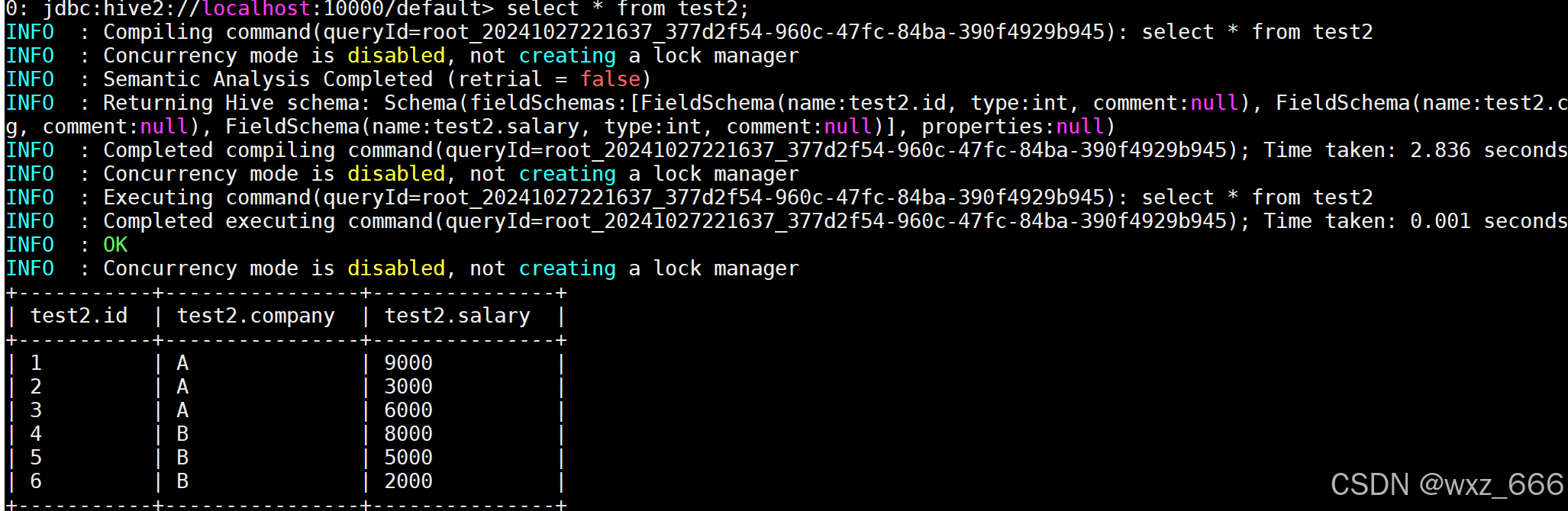

First, query the data of the Hive test table on the Linux host:

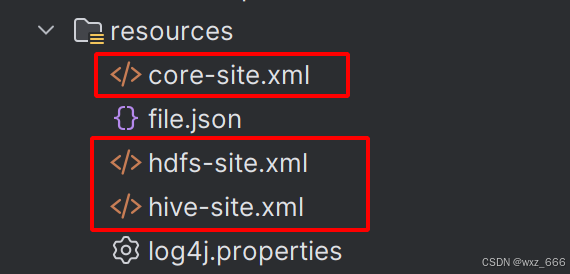

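Step 1: Configuration files

Copy the configuration files needed for the connection (hive-site.xml, core-site.xml and hdfs-site.xml) into the resources directory of the IDEA project.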
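To confirm that the files really ended up on the classpath before running Spark, a small check along these lines may help. This is a minimal sketch and not part of the original project: the class name `ClasspathConfigCheck` and the helper `missing` are hypothetical, and the file names assume the standard Hadoop/Hive names.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: reports which configuration files are not visible on the classpath.
public class ClasspathConfigCheck {

    // Returns every name from `names` that the given class loader cannot find.
    static List<String> missing(ClassLoader loader, List<String> names) {
        List<String> result = new ArrayList<>();
        for (String name : names) {
            if (loader.getResource(name) == null) {
                result.add(name);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // Assumed file names: the three site files copied into the resources directory.
        List<String> expected = Arrays.asList("hive-site.xml", "core-site.xml", "hdfs-site.xml");
        List<String> notFound = missing(ClasspathConfigCheck.class.getClassLoader(), expected);
        if (notFound.isEmpty()) {
            System.out.println("All configuration files are on the classpath.");
        } else {
            System.out.println("Missing from the classpath: " + notFound);
        }
    }
}
```

If a file is reported as missing, check that the resources directory is marked as a resources root in IDEA and rebuild the project.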

Step 2: Test the connection (Java and Scala)

Java code:

package org.hive;

import org.apache.spark.SparkConf;
import org.apache.spark.sql.SparkSession;

public class SparkJavaReadHIveData {
    public static void main(String[] args) {
        // Run locally, using all available cores
        SparkConf sc = new SparkConf();
        sc.setAppName("SparkJavaReadHIveData").setMaster("local[*]");

        // enableHiveSupport() connects the session to the Hive metastore
        SparkSession ssc = SparkSession.builder().config(sc).enableHiveSupport().getOrCreate();

        // Query the Hive test table and print the rows
        ssc.sql("select * from default.test2").show();

        ssc.stop();
    }
}

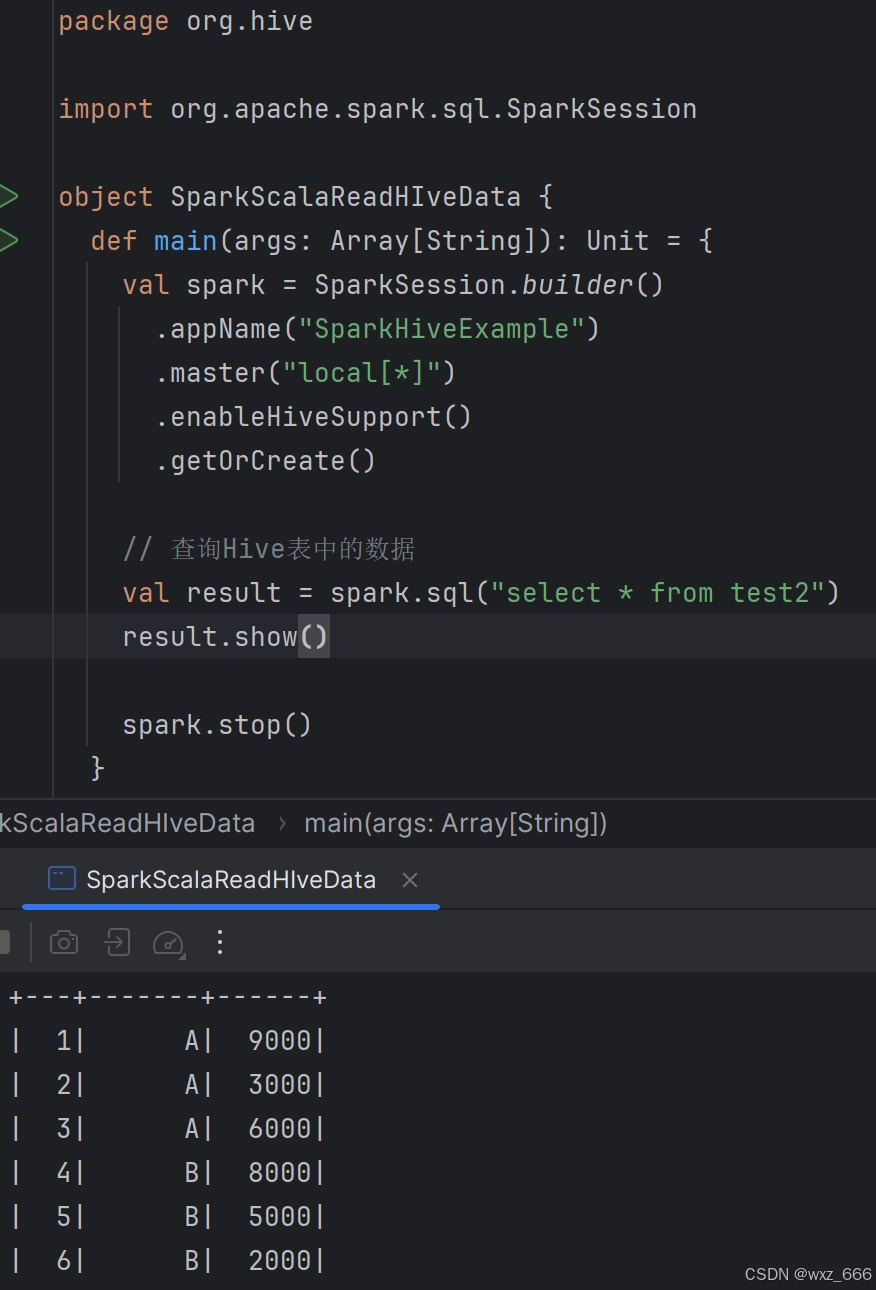

Scala code:

package org.hive

import org.apache.spark.sql.SparkSession

object SparkScalaReadHIveData {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("SparkHiveExample")
      .master("local[*]")
      .enableHiveSupport()
      .getOrCreate()

    // Query data from the Hive table
    val result = spark.sql("select * from test2")
    result.show()

    spark.stop()
  }
}

Step 3: Test results

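Both programs produce the same result (only one is shown here):

Note: if the job fails with an error, the Hive metastore service probably needs to be started. Run the following command, then rerun the Spark job: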

nohup hive --service metastore 2>&1 &
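Before rerunning the Spark job, it can be useful to check from the development machine whether anything is listening on the metastore port. The following is a minimal sketch under assumptions: the class name `MetastorePortCheck` and its helpers are hypothetical, the default URI `thrift://master:9083` is taken from the `hive.metastore.uris` property in the configuration at the end of this post, and the 3-second timeout is an arbitrary choice.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.net.URI;

// Hypothetical helper: checks whether a TCP connection to the metastore port can be opened.
public class MetastorePortCheck {

    // Extracts the host part of a URI such as thrift://master:9083.
    static String host(String thriftUri) {
        return URI.create(thriftUri).getHost();
    }

    // Extracts the port part of the URI (-1 if the URI has no port).
    static int port(String thriftUri) {
        return URI.create(thriftUri).getPort();
    }

    // Returns true if a TCP connection to the URI's host and port succeeds within the timeout.
    static boolean isReachable(String thriftUri, int timeoutMillis) {
        String hostName = host(thriftUri);
        int portNumber = port(thriftUri);
        if (hostName == null || portNumber < 0) {
            throw new IllegalArgumentException("Expected thrift://host:port, got: " + thriftUri);
        }
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(hostName, portNumber), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts and unresolvable host names.
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed default: the value of hive.metastore.uris from the configuration.
        String uri = args.length > 0 ? args[0] : "thrift://master:9083";
        System.out.println(uri + " reachable: " + isReachable(uri, 3000));
    }
}
```

A successful connection only shows that some process is listening on that port; it does not prove that the process is the Hive metastore.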

Configuration files:

pom.xml

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>org.example</groupId>
    <artifactId>SparkTestScala</artifactId>
    <version>1.0-SNAPSHOT</version>
    <packaging>jar</packaging>

    <name>SparkTestScala</name>
    <url>http://maven.apache.org</url>

    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <hadoop.version>3.3.1</hadoop.version>
        <scala.version>2.12.10</scala.version>
        <scala.binary.version>2.12</scala.binary.version>
        <spark.version>3.1.1</spark.version>
        <mysql.version>8.0.23</mysql.version>
        <hive.version>3.1.3</hive.version>
        <!--provided/compile-->
        <scope.change>provided</scope.change>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-hive_2.12</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>${mysql.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.12</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-sql_2.12</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-streaming_2.12</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-auth</artifactId>
            <version>${hadoop.version}</version>
        </dependency>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-library</artifactId>
            <version>${scala.version}</version>
        </dependency>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-compiler</artifactId>
            <version>${scala.version}</version>
        </dependency>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-reflect</artifactId>
            <version>${scala.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-streaming-kafka-0-10_${scala.binary.version}</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>log4j</groupId>
            <artifactId>log4j</artifactId>
            <version>1.2.17</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hive</groupId>
            <artifactId>hive-exec</artifactId>
            <version>${hive.version}</version>
            <exclusions>
                <exclusion>
                    <groupId>org.pentaho</groupId>
                    <artifactId>pentaho-aggdesigner-algorithm</artifactId>
                </exclusion>
            </exclusions>
        </dependency>
    </dependencies>
</project>

hive-site.xml

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
    <!-- JDBC connection URL of the metastore database -->
    <property>
        <name>javax.jdo.option.ConnectionURL</name>
        <value>jdbc:mysql://master:3306/hive?useSSL=false</value>
    </property>

    <!-- JDBC driver class; some setups use the driver name with "cj", which makes no difference here -->
    <property>
        <name>javax.jdo.option.ConnectionDriverName</name>
        <value>com.mysql.jdbc.Driver</value>
    </property>

    <!-- JDBC connection username -->
    <property>
        <name>javax.jdo.option.ConnectionUserName</name>
        <value>root</value>
    </property>

    <!-- JDBC connection password -->
    <property>
        <name>javax.jdo.option.ConnectionPassword</name>
        <value>123456</value>
    </property>

    <!-- Hive's default working directory on HDFS -->
    <property>
        <name>hive.metastore.warehouse.dir</name>
        <value>/user/hive/warehouse</value>
    </property>

    <!-- Metastore authorization for the event notification API -->
    <property>
        <name>hive.metastore.event.db.notification.api.auth</name>
        <value>false</value>
    </property>

    <!-- Address of the metastore service -->
    <property>
        <name>hive.metastore.uris</name>
        <value>thrift://master:9083</value>
    </property>
</configuration>
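To see which values a client would pick up from a file in this `<property>`/`<name>`/`<value>` layout, the file can be parsed with the JDK's built-in XML parser. This is a minimal sketch, not how Spark or Hive load their configuration: the class name `SiteXmlReader` and the method `parse` are hypothetical, the file name hive-site.xml is assumed to be on the classpath, and in this sketch a later entry with the same name simply overwrites an earlier one.

```java
import java.io.ByteArrayInputStream;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper: reads name/value pairs from a Hadoop-style site XML file.
public class SiteXmlReader {

    // Parses every <property> element into a map, keeping the order of first appearance.
    static Map<String, String> parse(InputStream in) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(in);
            NodeList properties = doc.getElementsByTagName("property");
            Map<String, String> result = new LinkedHashMap<>();
            for (int i = 0; i < properties.getLength(); i++) {
                Element property = (Element) properties.item(i);
                NodeList names = property.getElementsByTagName("name");
                NodeList values = property.getElementsByTagName("value");
                if (names.getLength() == 0 || values.getLength() == 0) {
                    continue; // skip incomplete entries
                }
                result.put(names.item(0).getTextContent().trim(),
                           values.item(0).getTextContent().trim());
            }
            return result;
        } catch (Exception e) {
            throw new IllegalStateException("Could not parse configuration XML", e);
        }
    }

    // Convenience overload for XML held in a string.
    static Map<String, String> parse(String xml) {
        return parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
    }

    public static void main(String[] args) throws Exception {
        // Assumed file name; it must be on the classpath (for example in the resources directory).
        try (InputStream in = SiteXmlReader.class.getClassLoader().getResourceAsStream("hive-site.xml")) {
            if (in == null) {
                System.out.println("hive-site.xml not found on the classpath");
                return;
            }
            Map<String, String> props = parse(in);
            System.out.println("hive.metastore.uris = " + props.get("hive.metastore.uris"));
        }
    }
}
```

Printing `hive.metastore.uris` this way gives a quick confirmation that the address the Spark job will try to reach is the intended one.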

hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <!-- NameNode web UI address -->
    <property>
        <name>dfs.namenode.http-address</name>
        <value>master:9870</value>
    </property>

    <!-- Secondary NameNode web UI address -->
    <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>master:9868</value>
    </property>

    <property>
        <name>dfs.client.use.datanode.hostname</name>
        <value>true</value>
    </property>
</configuration>

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <!-- NameNode address -->
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://master:8020</value>
    </property>

    <!-- Hadoop data storage directory -->
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/local/hadoop-3.2.3/data</value>
    </property>

    <!-- Static user for the HDFS web UI: hadoop -->
    <property>
        <name>hadoop.http.staticuser.user</name>
        <value>hadoop</value>
    </property>

    <property>
        <name>ha.zookeeper.quorum</name>
        <value>master:2181,server1:2181,server2:2181</value>
    </property>

    <property>
        <name>hadoop.proxyuser.root.hosts</name>
        <value>*</value>
    </property>

    <property>
        <name>hadoop.proxyuser.root.groups</name>
        <value>*</value>
    </property>

    <property>
        <name>hadoop.proxyuser.root.users</name>
        <value>*</value>
    </property>

    <property>
        <name>hadoop.native.lib</name>
        <value>true</value>
    </property>
</configuration>
