Mac version

Pseudo-distributed mode

First, install py4j.
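A quick way to confirm the install worked is to check that the module can be located by the interpreter you plan to use. The sketch below assumes Python 3 and that py4j was installed into the active interpreter (for example with `pip install py4j`); the helper name `is_importable` is hypothetical and not part of py4j or Spark.

```python
import importlib.util


def is_importable(module_name):
    """Return True if the active interpreter can locate a top-level module."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    # Reports whether py4j is visible to this interpreter.
    print("py4j importable:", is_importable("py4j"))
```

If this reports `False` inside PyCharm but `True` in a terminal, the two are probably using different interpreters.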

Add the following to ~/.bash_profile:

#Spark environment configs

export SPARK_HOME=/usr/local/Cellar/apache-spark/1.6.0/libexec

export PATH=$PATH:${SPARK_HOME}/bin

#Python environment configs

export PYSPARK_PYTHON=/Users/xiaocong/Envs/py/bin/python

PYTHONPATH=$SPARK_HOME/python:$SPARK_HOME/python/build:$PYTHONPATH

export PYTHONPATH=$SPARK_HOME/python/lib/py4j-0.9-src.zip:$PYTHONPATH

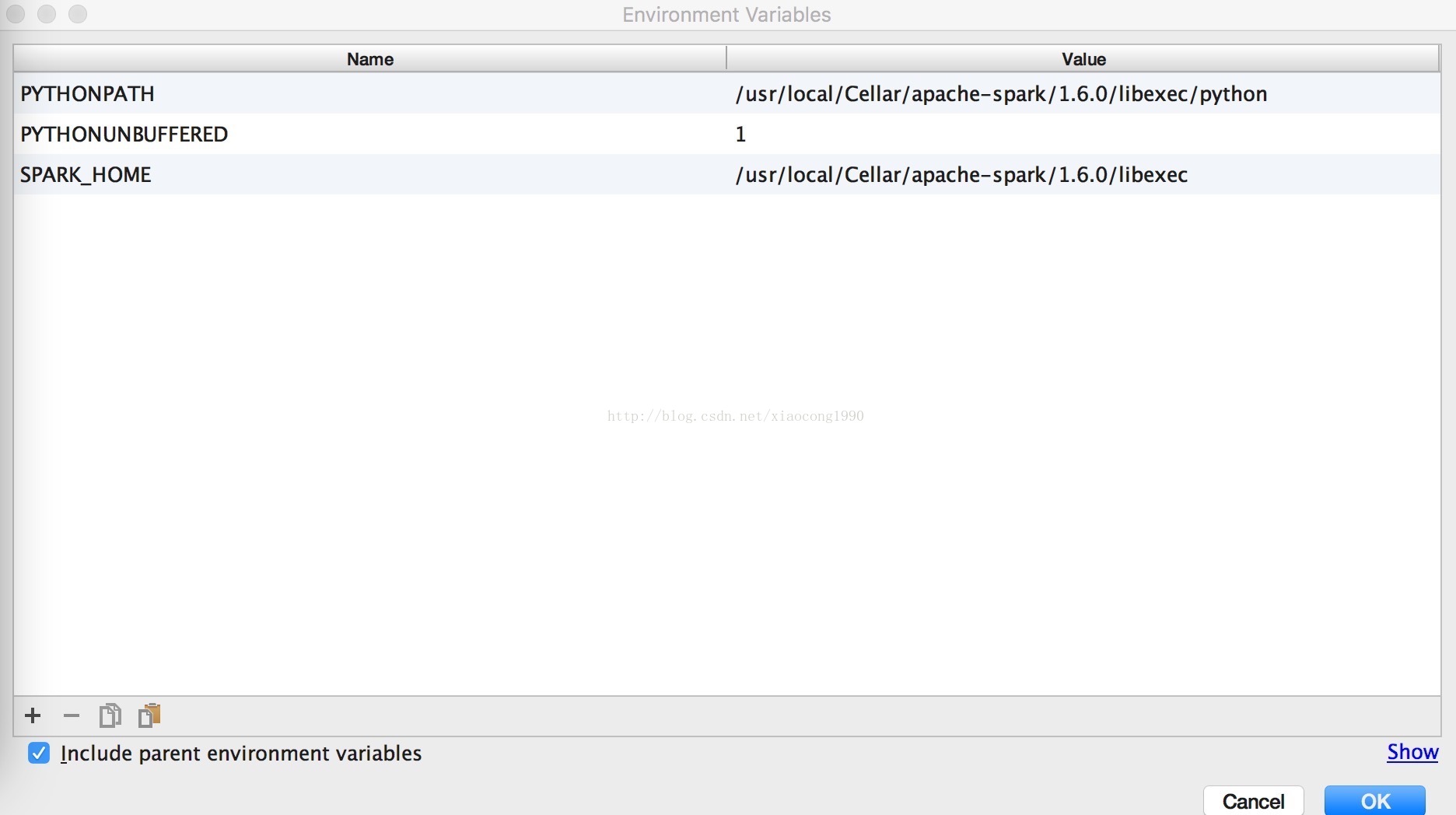

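export PATH="/usr/local/sbin:$PATH"

Open PyCharm and create a Project. Then choose "Run" -> "Edit Configurations" -> "Environment variables" and add the SPARK_HOME and PYTHONPATH entries.

Where:

SPARK_HOME: the Spark installation directory

PYTHONPATH: the python directory under the Spark installation directory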
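If you would rather not depend on the PyCharm run configuration, the same two settings can be applied at the top of a script before importing pyspark. This is only a sketch under assumptions: the helper names `spark_python_paths` and `configure_spark` are hypothetical, and the py4j archive name is assumed to match the `py4j-0.9-src.zip` used in ~/.bash_profile above.

```python
import os
import sys


def spark_python_paths(spark_home, py4j_zip="py4j-0.9-src.zip"):
    """Build the two entries PySpark needs on the module search path."""
    python_dir = os.path.join(spark_home, "python")
    return [python_dir, os.path.join(python_dir, "lib", py4j_zip)]


def configure_spark(spark_home):
    """Set SPARK_HOME and prepend the PySpark paths to sys.path."""
    os.environ["SPARK_HOME"] = spark_home
    # Insert in reverse so the python directory ends up first.
    for path in reversed(spark_python_paths(spark_home)):
        if path not in sys.path:
            sys.path.insert(0, path)


if __name__ == "__main__":
    # Install location taken from the SPARK_HOME line in ~/.bash_profile.
    configure_spark("/usr/local/Cellar/apache-spark/1.6.0/libexec")
    print(sys.path[:2])
```

Call `configure_spark(...)` before `from pyspark import ...`, since the import is resolved against `sys.path` at that moment.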

Test run:

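from pyspark import SparkConf, SparkContext

# Run locally with 2 worker threads; "first" is the application name
sc = SparkContext("local[2]", "first")

# u.user is expected in the working directory, one pipe-delimited record per line
user_data = sc.textFile("u.user")

user_fields = user_data.map(lambda line: line.split("|"))

# Printing the RDD itself only shows its description, so fetch the first parsed record
print(user_fields.first())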
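To see what the `map` step does to each line without starting Spark, the same split can be tried in plain Python. The sample records below are hypothetical stand-ins for the contents of u.user (the real file's fields are not shown in this post), and `parse_user_line` is an illustrative helper name.

```python
def parse_user_line(line):
    """Split one pipe-delimited record into a list of string fields."""
    return line.split("|")


# Hypothetical sample lines standing in for the contents of u.user.
sample_lines = [
    "1|24|M|technician|85711",
    "2|53|F|other|94043",
]

# Plain-Python equivalent of user_data.map(lambda line: line.split("|")).
user_fields = [parse_user_line(line) for line in sample_lines]

print(user_fields[0])  # ['1', '24', 'M', 'technician', '85711']
```

Unlike this list comprehension, an RDD `map` is lazy: Spark only runs it when an action such as `first()` or `count()` is called.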
