本文由@星沉阁冰不语出品,转载请注明作者和出处。

文章链接:http://blog.csdn.net/xingchenbingbuyu/article/details/53674544

微博:http://weibo.com/xingchenbing

闲言少叙,直接开始。

既然是要用C++来实现,那么我们自然而然的想到设计一个神经网络类来表示神经网络,这里我称之为Net类。由于这个类名太过普遍,很有可能跟其他人写的程序冲突,所以我的所有程序都包含在namespace liu中,由此不难想到我姓刘。在之前的博客反向传播算法资源整理中,我列举了几个比较不错的资源。对于理论不熟悉而且学习精神的同学可以出门左转去看看这篇文章的资源。这里假设读者对于神经网络的基本理论有一定的了解。

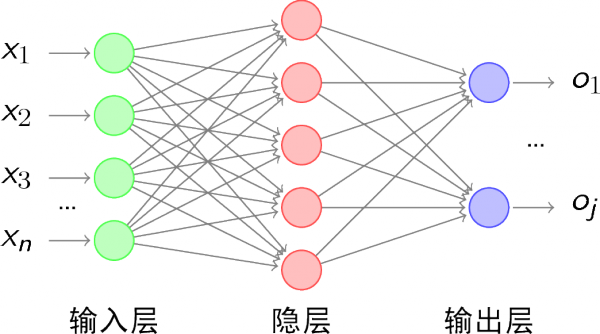

在真正开始coding之前还是有必要交代一下神经网络基础,其实也就是设计类和写程序的思路。

简而言之,神经网络的包含几大要素:神经元节点、层(layer)、权值(weights)和偏置项(bias)。神经网络的两大计算过程分别是前向传播和反向传播过程。每层的前向传播分别包含加权求和(卷积?)的线性运算和激活函数的非线性运算。反向传播主要是用BP算法更新权值。

虽然里面还有很多细节,但是对于作为第一篇的本文来说,以上内容足够了。神经网络中的计算几乎都可以用矩阵计算的形式表示,这也是我用opencv的Mat类的原因之一,另一个原因是我最熟悉的库就是opencv...。有很多比较好的库和框架在实现神经网络的时候会用很多类来表示不同的部分。比如Blob类表示数据,Layer类表示各种层,Optimizer类来表示各种优化算法。但是我这里没那么复杂,主要还是我能力有限,只用一个Net类表示神经网络。

还是直接让程序说话,Net类包含在Net.h中,大致如下:

#ifndef NET_H

#define NET_H

#endif // NET_H

#pragma once

#include <iostream>

#include<opencv2\core\core.hpp>

#include<opencv2\highgui\highgui.hpp>

//#include<iomanip>

#include"Function.h"

namespace liu

{

class Net

{

public:

std::vector<int> layer_neuron_num;

std::vector<cv::Mat> layer;

std::vector<cv::Mat> weights;

std::vector<cv::Mat> bias;

public:

Net() {};

~Net() {};

//Initialize net:genetate weights matrices、layer matrices and bias matrices

// bias default all zero

void initNet(std::vector<int> layer_neuron_num_);

//Initialise the weights matrices.

void initWeights(int type = 0, double a = 0., double b = 0.1);

//Initialise the bias matrices.

void initBias(cv::Scalar& bias);

//Forward

void farward();

//Forward

void backward();

protected:

//initialise the weight matrix.if type =0,Gaussian.else uniform.

void initWeight(cv::Mat &dst, int type, double a, double b);

//Activation function

cv::Mat activationFunction(cv::Mat &x, std::string func_type);

//Compute delta error

void deltaError();

//Update weights

void updateWeights();

};

}这不是完整的形态,只是对应于本文内容的一个简化版,简化之后看起来更加清晰明了。

现在Net类只有四个成员变量,分别是:

每一层神经元数目(layer_neuron_num)

层(layer)

权值矩阵(weights)

偏置项(bias)

权值用矩阵表示就不用说了,需要说明的是,为了计算方便,这里每一层和偏置项也用Mat表示,每一层和偏置都用一个单列矩阵来表示。

Net类的成员函数除了默认的构造函数和析构函数,还有:

initNet():用来初始化神经网络

initWeights():初始化权值矩阵,调用initWeight()函数

initBias():初始化偏置项

forward():执行前向运算,包括线性运算和非线性激活,同时计算误差

backward():执行反向传播,调用updateWeights()函数更新权值。

这些函数已经是神经网络程序核心中的核心。剩下的内容就是慢慢实现了,实现的时候需要什么添加什么,逢山开路,遇河架桥。

先说一下initNet()函数,这个函数只接受一个参数——每一层神经元数目,然后借此初始化神经网络。这里所谓初始化神经网络的含义是:生成每一层的矩阵、每一个权值矩阵和每一个偏置矩阵。听起来很简单,其实也很简单。

实现代码在Net.cpp中:

//Initialize net

void Net::initNet(std::vector<int> layer_neuron_num_)

{

layer_neuron_num = layer_neuron_num_;

//Generate every layer.

layer.resize(layer_neuron_num.size());

for (int i = 0; i < layer.size(); i++)

{

layer[i].create(layer_neuron_num[i], 1, CV_32FC1);

}

std::cout << "Generate layers, successfully!" << std::endl;

//Generate every weights matrix and bias

weights.resize(layer.size() - 1);

bias.resize(layer.size() - 1);

for (int i = 0; i < (layer.size() - 1); ++i)

{

weights[i].create(layer[i + 1].rows, layer[i].rows, CV_32FC1);

//bias[i].create(layer[i + 1].rows, 1, CV_32FC1);

bias[i] = cv::Mat::zeros(layer[i + 1].rows, 1, CV_32FC1);

}

std::cout << "Generate weights matrices and bias, successfully!" << std::endl;

std::cout << "Initialise Net, done!" << std::endl;

}这里生成各种矩阵没啥难点,唯一需要留心的是权值矩阵的行数和列数的确定。值得一提的是这里把圈子默认全设为0。

权值初始化函数initWeights()调用initWeight()函数,其实就是初始化一个和多个的区别。

//initialise the weights matrix.if type =0,Gaussian.else uniform.

void Net::initWeight(cv::Mat &dst, int type, double a, double b)

{

if (type == 0)

{

randn(dst, a, b);

}

else

{

randu(dst, a, b);

}

}

//initialise the weights matrix.

void Net::initWeights(int type, double a, double b)

{

//Initialise weights cv::Matrices and bias

for (int i = 0; i < weights.size(); ++i)

{

initWeight(weights[i], 0, 0., 0.1);

}

}偏置初始化是给所有的偏置赋相同的值。这里用Scalar对象来给矩阵赋值。

//Initialise the bias matrices.

void Net::initBias(cv::Scalar& bias_)

{

for (int i = 0; i < bias.size(); i++)

{

bias[i] = bias_;

}

}至此,神经网络需要初始化的部分已经全部初始化完成了。

我们可以用下面的代码来初始化一个神经网络,虽然没有什么功能,但是至少可以测试下现在的代码是否有BUG:

#include"../include/Net.h"

//<opencv2\opencv.hpp>

using namespace std;

using namespace cv;

using namespace liu;

int main(int argc, char *argv[])

{

//Set neuron number of every layer

vector<int> layer_neuron_num = { 784,100,10 };

// Initialise Net and weights

Net net;

net.initNet(layer_neuron_num);

net.initWeights(0, 0., 0.01);

net.initBias(Scalar(0.05));

getchar();

return 0;

}亲测没有问题。

本文先到这里,前向传播和反向传播放在下一篇内容里面。所有的代码都已经托管在Github上面,感兴趣的可以去下载查看。欢迎提意见。

768

768

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?