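1. Preface

The requirements are as follows.

Data is written to OpenTSDB as soon as either condition 1 or condition 2 is met; item 3 is an additional requirement:

1. Buffer incoming data points and send them as one batch once 100 have accumulated.

2. Start a separate thread that, every 5 seconds, force-sends and clears the current waiting queue.

3. Handle concurrency: several clients may write to OpenTSDB with the same timestamp (strategy: if one timestamp has several values, sum the values that share that timestamp).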
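Conditions 1 and 2 together amount to a buffer that flushes on whichever comes first, a size limit or a timeout. The following is a minimal sketch of that policy only, not the code used in this post: the class name `BatchBuffer` and its parameters are hypothetical, `send` stands in for the HTTP write to OpenTSDB, and the timer is armed when the first point of a batch arrives rather than running on a fixed 5-second schedule.

```python
import threading


class BatchBuffer(object):
    """Hypothetical helper: flush when the batch is full or the timer fires."""

    def __init__(self, send, batch_size=100, interval=5.0):
        self.send = send              # callable that receives a list of points
        self.batch_size = batch_size
        self.interval = interval
        self.items = []
        self.lock = threading.Lock()
        self.timer = None

    def add(self, item):
        with self.lock:
            self.items.append(item)
            full = len(self.items) >= self.batch_size
            if not full and self.timer is None:
                # First point of a new batch: arm the timeout.
                self.timer = threading.Timer(self.interval, self.flush)
                self.timer.daemon = True
                self.timer.start()
        if full:
            self.flush()

    def flush(self):
        # Swap the list out under the lock, then send outside the lock
        # so that slow network calls do not block producers.
        with self.lock:
            batch, self.items = self.items, []
            if self.timer is not None:
                self.timer.cancel()
                self.timer = None
        if batch:
            self.send(batch)
```

Passing `send` in as a callable keeps the flush policy separate from the transport, which also makes it easy to exercise with a plain list, for example `BatchBuffer(collected.append, batch_size=3)`.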

Implementation:

Built on Python's socket and threading modules and the requests package.

2. Code

Start a server that accepts data from multiple clients, communicating over TCP.
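One detail worth keeping in mind with TCP: a single `recv(1024)` call returns at most 1024 bytes and may return fewer than the peer sent. The messages in this post are small, so one call is normally enough, but if each client sends one JSON document and then closes the connection, the server can read until end-of-stream instead. The helper below is a sketch under that assumption; the name `recv_all` is hypothetical and is not part of the post's code.

```python
import socket


def recv_all(conn, chunk_size=1024):
    """Read from conn until the peer closes its side; return all bytes."""
    chunks = []
    while True:
        chunk = conn.recv(chunk_size)
        if not chunk:          # empty bytes means the peer has closed
            break
        chunks.append(chunk)
    return b''.join(chunks)
```

The result is a `bytes` object, which can be decoded and passed to `json.loads` once the connection has been fully read.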

Socket server code:

    # coding=utf-8
    import collections
    import json
    import socket
    import threading

    import requests


    class WriteOpentsdbServer(object):

        def __init__(self):
            self.host = '127.0.0.1'
            self.port = 9994
            # Demo values; the stated requirement is 100 points / 5 seconds.
            self.batch_num = 20
            self.sleep_time = 10
            self.if_timing_flag = True
            self.session = requests.Session()
            self.json_map = collections.OrderedDict()
            self.opentsdb_url = "http://localhost:4242/api/put?details"
            self.mutex = threading.Lock()

        def send_json_data(self):
            # Swap the queue out under the lock so that points added
            # while the POST is in flight are not lost.
            with self.mutex:
                send_tmp_data = list(self.json_map.values())
                self.json_map = collections.OrderedDict()
                self.if_timing_flag = True
            if send_tmp_data:
                print('send_tmp_data', send_tmp_data)
                self.session.post(url=self.opentsdb_url, json=send_tmp_data)

        def start_timing(self):
            # Clear the flag first so only one timer is armed per batch.
            self.if_timing_flag = False
            threading.Timer(self.sleep_time, self.send_json_data).start()

        def add_to_map(self, json_data):
            # Points with the same timestamp, metric and tags share one key.
            key = (str(json_data['timestamp']) + str(json_data['metric'])
                   + json.dumps(json_data['tags'], sort_keys=True))
            with self.mutex:
                if key not in self.json_map:
                    self.json_map[key] = json_data
                else:
                    # Same key seen again: accumulate the value.
                    self.json_map[key]['value'] += json_data['value']

        def run(self):
            s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
            s.bind((self.host, self.port))
            s.listen(5)
            print('Waiting for connection...')
            while True:
                # Accept a new connection:
                sock, addr = s.accept()
                print('Accept new connection from %s:%s...' % addr)
                # Each client sends one small JSON message per connection.
                data = sock.recv(1024)
                sock.close()
                self.add_to_map(json.loads(data.decode('utf-8')))
                if self.if_timing_flag:
                    self.start_timing()
                if len(self.json_map) >= self.batch_num:
                    self.send_json_data()


    if __name__ == '__main__':
        wos = WriteOpentsdbServer()
        wos.run()

Socket client code:

    # coding=utf-8
    import json
    import socket
    import time


    class WriteOpentsdbClient(object):

        def __init__(self):
            self.host = '127.0.0.1'
            self.port = 9994

        @staticmethod
        def build_json_data(metric, value):
            data = {
                "metric": metric,
                "timestamp": int(time.time()),
                "value": value,
                "tags": {
                    "host": "localhost"
                }
            }
            return json.dumps(data)

        def send_by_tcp(self, metric, value):
            s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
            # Open the connection:
            s.connect((self.host, self.port))
            # Send the data:
            send_data = self.build_json_data(metric, value)
            print(send_data)
            s.sendall(send_data.encode('utf-8'))
            s.close()


    if __name__ == '__main__':
        woc = WriteOpentsdbClient()
        # One point per second, so each lands on its own timestamp.
        for value in (0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9):
            woc.send_by_tcp('sys.batch.xyd_4', value)
            time.sleep(1)
        woc.send_by_tcp('sys.batch.xyd_4', 0.95)
        # A rapid burst: many points share a timestamp and are summed by the server.
        for _ in range(1000):
            woc.send_by_tcp('sys.batch.xyd_4', 0.01)

3. Results

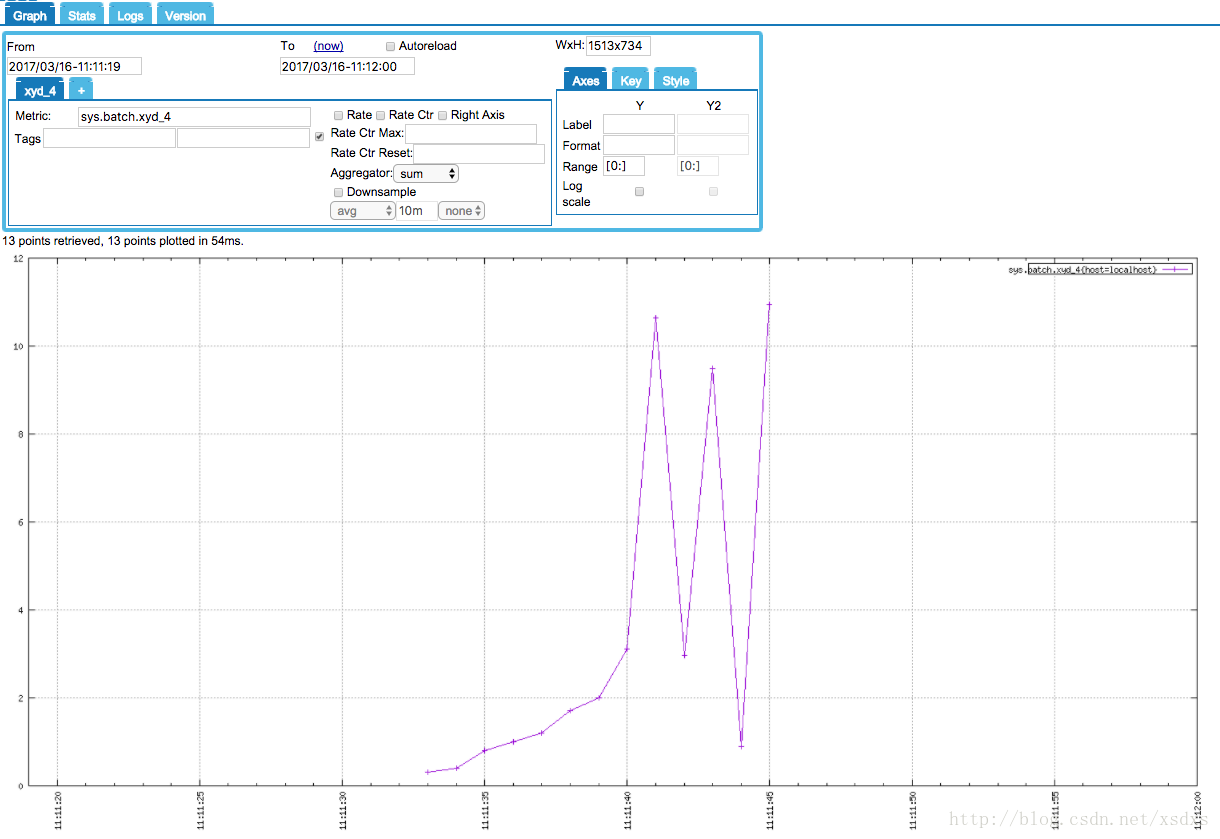

实际运行中,开了三个客户端,同时写入。结果如下图:

需要解释的是,图中数值突变的原因在于:相同时间戳的数据值进行累加了。这是我们想要的结果~
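To make the accumulation concrete, here is a small worked example of the summing strategy on its own. It is a sketch, not the server's code: the function name `merge_points` is hypothetical, and the timestamps and values are made-up sample data. The server in this post does the equivalent inside `add_to_map`.

```python
import collections


def merge_points(points):
    """Sum the values of points that share timestamp, metric and tags."""
    merged = collections.OrderedDict()
    for p in points:
        key = (p['timestamp'], p['metric'], tuple(sorted(p['tags'].items())))
        if key in merged:
            merged[key]['value'] += p['value']
        else:
            merged[key] = dict(p)   # copy so the input is left untouched
    return list(merged.values())


# Illustrative data: three clients report in the same second,
# then a single client reports one second later.
tags = {'host': 'localhost'}
points = [
    {'metric': 'sys.batch.xyd_4', 'timestamp': 1500000000, 'value': 1, 'tags': tags},
    {'metric': 'sys.batch.xyd_4', 'timestamp': 1500000000, 'value': 2, 'tags': tags},
    {'metric': 'sys.batch.xyd_4', 'timestamp': 1500000000, 'value': 3, 'tags': tags},
    {'metric': 'sys.batch.xyd_4', 'timestamp': 1500000001, 'value': 1, 'tags': tags},
]

for point in merge_points(points):
    print(point['timestamp'], point['value'])
```

The first second collapses into a single point with value 6 while the next second stays at 1, which is the kind of jump visible in the plot when several clients write at once.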
