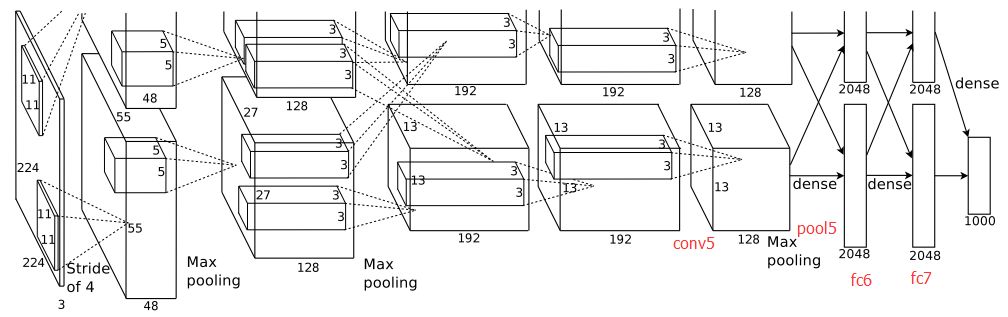

In 2012, AlexNet won the ImageNet competition (ILSVRC 2012).

AlexNet is an 8-layer neural network: 5 convolutional layers followed by 3 fully connected layers.

Its structure is as follows.

Formula for the output size of each layer:

$$\frac{\text{imgsize} - \text{filtersize} + 2 \times \text{padding}}{\text{stride}} + 1 = \text{newfeaturesize}$$
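To check the formula, here is a minimal Python sketch that applies it to every conv and pooling layer listed below. `conv_output_size` is a hypothetical helper name. It uses floor division, which is the usual convention when the division is not exact. Every AlexNet layer happens to divide evenly anyway.

```python
def conv_output_size(in_size, kernel, stride=1, padding=0):
    """Output spatial size of a conv or pooling layer (rounded down)."""
    return (in_size - kernel + 2 * padding) // stride + 1

# (name, kernel, stride, padding) for each spatial layer of AlexNet
steps = [
    ("conv1", 11, 4, 0), ("pool1", 3, 2, 0),
    ("conv2", 5, 1, 2), ("pool2", 3, 2, 0),
    ("conv3", 3, 1, 1), ("conv4", 3, 1, 1),
    ("conv5", 3, 1, 1), ("pool5", 3, 2, 0),
]

size = 227  # input is 227x227
for name, k, s, p in steps:
    size = conv_output_size(size, k, s, p)
    print(name, size)
```

The printed sizes (55, 27, 27, 13, 13, 13, 13, 6) match the per-layer `data` lines that follow.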

Structure of each layer:

Layer 1: conv - relu - pool - LRN

data:227*227*3

conv:output_channel=96,kernel=11,stride=4

data:55*55*96 ($\frac{227-11}{4}+1=55$)

relu

data:55*55*96

pool:kernel=3,stride=2

data:27*27*96 ($\frac{55-3}{2}+1=27$)

norm

data:27*27*96
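The `norm` step is local response normalization (LRN). Each activation is divided by a term built from the squared activations of neighbouring channels at the same spatial position. Below is a minimal pure-Python sketch for a single position. It assumes the formula and constants from the original AlexNet paper (k=2, n=5, alpha=1e-4, beta=0.75). The name `lrn` is illustrative. Some frameworks (Caffe, for example) divide alpha by n, so their constants are not directly interchangeable.

```python
def lrn(activations, k=2.0, n=5, alpha=1e-4, beta=0.75):
    """Local response normalization across channels at one spatial position.

    activations: channel values a^0 .. a^(N-1) at a fixed (x, y).
    Computes b^i = a^i / (k + alpha * sum_j (a^j)^2) ** beta,
    where j runs over the n channels centred on i, clipped at the edges.
    """
    N = len(activations)
    out = []
    for i, a_i in enumerate(activations):
        lo = max(0, i - n // 2)
        hi = min(N - 1, i + n // 2)
        sq_sum = sum(activations[j] ** 2 for j in range(lo, hi + 1))
        out.append(a_i / (k + alpha * sq_sum) ** beta)
    return out

print(lrn([1.0, 2.0, 3.0, 4.0, 5.0, 6.0]))
```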

Layer 2: conv - relu - pool - LRN

data:27*27*96

conv:output_channel=256,kernel=5,stride=1,pad=2,group=2

data:27*27*256 ($\frac{27-5+2\times2}{1}+1=27$)

relu

data:27*27*256

pool:kernel=3,stride=2

data:13*13*256 ($\frac{27-3}{2}+1=13$)

norm

data:13*13*256
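`group=2` splits the 96 input channels into two groups of 48. Each group produces 128 of the 256 output channels. In the original network this let the layer be divided across two GPUs, and it also halves the number of weights. The hypothetical helper `conv_params` below counts weights plus biases to make that concrete.

```python
def conv_params(in_channels, out_channels, kernel, groups=1, bias=True):
    """Learnable parameters of a 2D convolution with a square kernel."""
    assert in_channels % groups == 0 and out_channels % groups == 0
    # Each output channel only sees in_channels // groups input channels.
    weights = kernel * kernel * (in_channels // groups) * out_channels
    return weights + (out_channels if bias else 0)

print(conv_params(96, 256, 5, groups=2))  # 307456
print(conv_params(96, 256, 5, groups=1))  # 614656
```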

Layer 3: conv - relu

data:13*13*256

conv:output_channel=384,kernel=3,stride=1,pad=1

data:13*13*384 ($\frac{13-3+2\times1}{1}+1=13$)

relu

data:13*13*384
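Layers 3 to 5 use a 3×3 kernel with stride 1 and padding 1, which leaves the 13×13 size unchanged. The naive single-channel convolution below illustrates this, assuming zero padding. A real conv layer also sums this computation over all input channels, repeats it for each output channel, and adds a bias each time. `conv2d_single` is an illustrative name, not a library function.

```python
def conv2d_single(image, kernel, stride=1, padding=0):
    """Naive single-channel 2D convolution (cross-correlation, as CNN frameworks compute it)."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    # Zero-pad the input on all four sides.
    padded = [[0.0] * (w + 2 * padding) for _ in range(h + 2 * padding)]
    for i in range(h):
        for j in range(w):
            padded[i + padding][j + padding] = image[i][j]
    out_h = (h - kh + 2 * padding) // stride + 1
    out_w = (w - kw + 2 * padding) // stride + 1
    out = []
    for oi in range(out_h):
        row = []
        for oj in range(out_w):
            r0, c0 = oi * stride, oj * stride
            # Sum of element-wise products between the window and the kernel.
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += padded[r0 + di][c0 + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out

image = [[1.0] * 13 for _ in range(13)]
kernel = [[1.0] * 3 for _ in range(3)]
result = conv2d_single(image, kernel, stride=1, padding=1)
print(len(result), len(result[0]))  # 13 13
```

With an all-ones input and kernel, the corner outputs are 4, edge outputs 6, and interior outputs 9. This shows how zero padding affects the border.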

Layer 4: conv - relu

data:13*13*384

conv:output_channel=384,kernel=3,stride=1,pad=1

data:13*13*384 ($\frac{13-3+2\times1}{1}+1=13$)

relu

data:13*13*384

Layer 5: conv - relu - pool

data:13*13*384

conv:output_channel=256,kernel=3,stride=1,pad=1

data:13*13*256 ($\frac{13-3+2\times1}{1}+1=13$)

relu

data:13*13*256

pool:kernel=3,stride=2

data:6*6*256 ($\frac{13-3}{2}+1=6$)
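Every pooling layer here uses kernel=3 with stride=2, so neighbouring windows overlap by one row or column. The AlexNet paper calls this overlapping pooling. Below is a minimal sketch of max pooling without padding, applied to a 13×13 map to reproduce the 13→6 reduction of layer 5. `max_pool2d` is an illustrative name.

```python
def max_pool2d(image, kernel=3, stride=2):
    """Naive 2D max pooling with no padding (output size rounded down)."""
    h, w = len(image), len(image[0])
    out_h = (h - kernel) // stride + 1
    out_w = (w - kernel) // stride + 1
    return [
        [
            # Maximum over the kernel x kernel window starting at (oi*stride, oj*stride).
            max(image[oi * stride + di][oj * stride + dj]
                for di in range(kernel) for dj in range(kernel))
            for oj in range(out_w)
        ]
        for oi in range(out_h)
    ]

fmap = [[float(r * 13 + c) for c in range(13)] for r in range(13)]
pooled = max_pool2d(fmap)
print(len(pooled), len(pooled[0]))  # 6 6
```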

Layer 6: fc - relu - dropout

data:6*6*256

fc

data:4096

relu

data:4096

dropout

data:4096
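Before the first fully connected layer, the 6*6*256 feature map is flattened into a vector of 9216 values. Each of the 4096 outputs is a weighted sum of all 9216 inputs plus a bias. The sketch below counts the resulting parameters and shows the fc + relu computation on a tiny 3-input, 2-output example. `dense` and `relu` are illustrative helpers written for this example.

```python
def dense(x, weights, bias):
    """Fully connected layer: y_j = sum_i x_i * W[j][i] + b_j."""
    return [sum(xi * wji for xi, wji in zip(x, w_row)) + b
            for w_row, b in zip(weights, bias)]

def relu(v):
    """Element-wise max(0, x)."""
    return [max(0.0, t) for t in v]

flat_size = 6 * 6 * 256                # pool5 output flattened
fc6_params = flat_size * 4096 + 4096   # weight matrix + bias vector
print(flat_size, fc6_params)  # 9216 37752832

# Tiny example: 3 inputs -> 2 outputs instead of 9216 -> 4096.
print(relu(dense([1.0, -2.0, 0.5], [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]], [0.0, 0.5])))  # [2.0, 0.0]
```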

Layer 7: fc - relu - dropout

data:4096

fc

data:4096

relu

data:4096

dropout

data:4096
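Dropout randomly zeroes activations during training, with p=0.5 in both layer 6 and layer 7. The AlexNet paper instead multiplied outputs by 0.5 at test time. The sketch below shows the now-common inverted variant, which MXNet Gluon's `nn.Dropout` also uses. It scales the surviving activations by 1/(1-p) during training and leaves inference untouched, so the expected activation is the same in both modes. The function and its signature are assumptions made for this example.

```python
import random

def dropout(x, p=0.5, training=True, rng=random):
    """Inverted dropout: during training, zero each element with probability p
    and scale the survivors by 1/(1-p); at inference, return the input unchanged."""
    if not training or p == 0.0:
        return list(x)
    if p >= 1.0:
        return [0.0] * len(x)
    scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else v * scale for v in x]

print(dropout([1.0] * 8, p=0.5, rng=random.Random(0)))  # each entry is 0.0 or 2.0
print(dropout([1.0] * 8, p=0.5, training=False))        # unchanged
```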

Layer 8: fc - softmax

data:4096

fc

data:1000
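The final fc layer produces 1000 raw scores, one per ImageNet class, and softmax turns them into a probability distribution. Below is a minimal sketch. Subtracting the largest score before exponentiating prevents overflow without changing the result. In Gluon code, softmax is usually not a separate layer; it is applied inside a loss such as `gluon.loss.SoftmaxCrossEntropyLoss`.

```python
import math

def softmax(logits):
    """Numerically stable softmax: shift by the max logit, exponentiate, normalize."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])
print(probs, sum(probs))
```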

A simple implementation using Gluon:

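```python
import mxnet as mx
from mxnet.gluon import nn

# Simplified AlexNet: LRN and the group=2 split of the original are omitted.
net = nn.Sequential()
with net.name_scope():
    net.add(
        # Layer 1: conv - relu - pool
        nn.Conv2D(channels=96, kernel_size=11, strides=4, activation='relu'),
        nn.MaxPool2D(pool_size=3, strides=2),
        # Layer 2: conv - relu - pool
        nn.Conv2D(channels=256, kernel_size=5, padding=2, activation='relu'),
        nn.MaxPool2D(pool_size=3, strides=2),
        # Layer 3: conv - relu
        nn.Conv2D(channels=384, kernel_size=3, padding=1, activation='relu'),
        # Layer 4: conv - relu
        nn.Conv2D(channels=384, kernel_size=3, padding=1, activation='relu'),
        # Layer 5: conv - relu - pool
        nn.Conv2D(channels=256, kernel_size=3, padding=1, activation='relu'),
        nn.MaxPool2D(pool_size=3, strides=2),
        # Layer 6: fc - relu - dropout
        nn.Flatten(),
        nn.Dense(4096, activation="relu"),
        nn.Dropout(.5),
        # Layer 7: fc - relu - dropout
        nn.Dense(4096, activation="relu"),
        nn.Dropout(.5),
        # Layer 8: fc (softmax is usually applied inside the loss function)
        nn.Dense(1000)
    )

# Quick shape check with one random 227x227 RGB image (NCHW layout).
net.initialize()
x = mx.nd.random.uniform(shape=(1, 3, 227, 227))
print(net(x).shape)  # (1, 1000)
```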
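The sketch below counts the learnable parameters (weights plus biases) of the Gluon model above, layer by layer, to show where the model's size comes from. It assumes the layers exactly as written there, without the group=2 split, so the total is higher than for the grouped original. LRN has no learnable parameters either way.

```python
# (name, kernel, in_channels, out_channels) for the five convolutions
convs = [
    ("conv1", 11, 3, 96),
    ("conv2", 5, 96, 256),
    ("conv3", 3, 256, 384),
    ("conv4", 3, 384, 384),
    ("conv5", 3, 384, 256),
]
# (name, in_features, out_features) for the three dense layers
denses = [
    ("fc6", 6 * 6 * 256, 4096),
    ("fc7", 4096, 4096),
    ("fc8", 4096, 1000),
]

total = 0
for name, k, c_in, c_out in convs:
    n = k * k * c_in * c_out + c_out  # weights + biases
    total += n
    print(f"{name}: {n:,}")
for name, f_in, f_out in denses:
    n = f_in * f_out + f_out          # weights + biases
    total += n
    print(f"{name}: {n:,}")
print(f"total: {total:,}")  # total: 62,378,344
```

The three fully connected layers account for roughly 94% of the total. fc6 alone holds about 37.8 million parameters.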
