The steps below are carried out on Windows.

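1. Install and configure the JDK

① Download the JDK installer jdk-8u201-windows-x64.exe from the Oracle website: https://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

② Configure the JDK environment variables: set JAVA_HOME to the JDK installation directory and add %JAVA_HOME%\bin to Path.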

2. Install Eclipse

Download the Eclipse installer eclipse-inst-win64 from the official site, https://www.eclipse.org/downloads/, and run it.

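3. Install Maven

① On the Maven download page, http://maven.apache.org/download.cgi, pick the nearest mirror and download the archive apache-maven-3.6.0-bin.tar.gz.

② Extract apache-maven-3.6.0-bin.tar.gz and copy the resulting folder \apache-maven-3.6.0 to a location of your choice, for example C:\eclipse\apache-maven-3.6.0.

③ Configure the Maven environment variable: add the path of Maven's \bin folder to Path, then run mvn -v in a cmd window to check that Maven is installed and configured correctly.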
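The check in step ③ can also be done from Java. The sketch below is not part of the original setup: the class name PathCheck and the method findOnPath are hypothetical, and it assumes the Maven launcher in \bin is named mvn.cmd, as it is in the 3.6.0 distribution.

```java
import java.io.File;
import java.nio.file.Files;
import java.nio.file.InvalidPathException;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Optional;

/** Hypothetical helper: looks for a file in the directories of a PATH-style string. */
final class PathCheck {

    /**
     * Returns the first match of fileName inside the directories listed in
     * pathValue, or Optional.empty() if no directory contains it.
     */
    static Optional<Path> findOnPath(String pathValue, String fileName) {
        if (pathValue == null || pathValue.isEmpty()) {
            return Optional.empty();
        }
        // Entries are separated by ';' on Windows and ':' elsewhere
        for (String dir : pathValue.split(File.pathSeparator)) {
            if (dir.isEmpty()) {
                continue;
            }
            try {
                Path candidate = Paths.get(dir, fileName);
                if (Files.isRegularFile(candidate)) {
                    return Optional.of(candidate);
                }
            } catch (InvalidPathException e) {
                // Skip entries that are not valid paths on this platform
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        // Assumption: the Windows launcher shipped in Maven's \bin is mvn.cmd
        Optional<Path> mvn = findOnPath(System.getenv("PATH"), "mvn.cmd");
        System.out.println(mvn.map(p -> "Found Maven launcher: " + p)
                              .orElse("mvn.cmd is not on Path"));
        System.out.println("JAVA_HOME = " + System.getenv("JAVA_HOME"));
    }
}
```

If the program reports that mvn.cmd is not on Path, the \bin entry from step ③ is probably missing or misspelled. Open a new cmd window after changing environment variables so the new values are picked up.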

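4. Configure Maven in Eclipse

① Edit settings.xml

Under the installation folder \apache-maven-3.6.0, create a \repository folder to serve as the local Maven repository. In settings.xml (in the \conf folder of the Maven installation), add a localRepository element with the value C:\eclipse\apache-maven-3.6.0\repository.

② Configure the Maven installation and User Settings

In Eclipse, go to Preferences → Maven → Installations and set the Maven installation path, then go to Preferences → Maven → User Settings and set the path to settings.xml.

③ Add the dependencies to pom.xml

The Hadoop dependencies are listed on Maven Repository at https://mvnrepository.com/tags/hadoop . Find the three dependencies shown below in the version that matches your cluster, copy them into pom.xml inside the project element, and save. Eclipse then generates the Maven Dependencies entry automatically.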

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>2.7.3</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>2.7.3</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>2.7.3</version>
        </dependency>
    </dependencies>

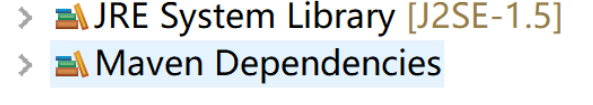

Wait patiently for the download to finish. Once it succeeds, two new entries appear in the project tree.

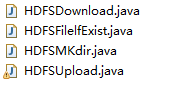

5. Write and run the Java programs

File download:

    import java.io.FileOutputStream;
    import java.io.IOException;
    import java.io.InputStream;
    import java.io.OutputStream;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class HDFSDownload {

        private static InputStream input;
        private static OutputStream output;

        public static void main(String[] args) throws IOException {
            // Run as the HDFS user root
            System.setProperty("HADOOP_USER_NAME", "root");
            // Create the HDFS connection object client
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://izwz97mvztltnke7bj93idz:9000");
            FileSystem client = FileSystem.get(conf);
            // Create the local output stream
            output = new FileOutputStream("c:\\hdfs\\bbout.txt");
            // Create the HDFS input stream
            input = client.open(new Path("/bb.txt"));
            // Copy the file from HDFS to the local disk
            byte[] buffer = new byte[1024];
            int len = 0;
            while ((len = input.read(buffer)) != -1) {
                output.write(buffer, 0, len);
            }
            output.flush();
            input.close();
            output.close();
            client.close();
        }
    }
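The value passed to fs.defaultFS is an ordinary URI whose host and port identify the NameNode. As a rough sketch (the class DefaultFsCheck and its method are hypothetical, and requiring an explicit port is a choice made for this example), the value can be validated with java.net.URI before it is handed to the Hadoop client:

```java
import java.net.InetAddress;
import java.net.URI;
import java.net.UnknownHostException;

/** Hypothetical helper: validates an fs.defaultFS value using only the JDK. */
final class DefaultFsCheck {

    /**
     * Returns "host:port" for an hdfs:// URI. Throws IllegalArgumentException
     * if the scheme is not hdfs or if the host or port is missing.
     */
    static String nameNodeAddress(String defaultFs) {
        URI uri = URI.create(defaultFs);
        if (!"hdfs".equals(uri.getScheme())) {
            throw new IllegalArgumentException("Expected an hdfs:// URI: " + defaultFs);
        }
        if (uri.getHost() == null || uri.getPort() == -1) {
            throw new IllegalArgumentException("Host and port are required: " + defaultFs);
        }
        return uri.getHost() + ":" + uri.getPort();
    }

    public static void main(String[] args) {
        String address = nameNodeAddress("hdfs://izwz97mvztltnke7bj93idz:9000");
        System.out.println("NameNode address: " + address);
        String host = address.substring(0, address.lastIndexOf(':'));
        try {
            // The client machine must be able to resolve the NameNode host name
            System.out.println("Resolves to: " + InetAddress.getByName(host).getHostAddress());
        } catch (UnknownHostException e) {
            System.out.println("Host name cannot be resolved from this machine: " + host);
        }
    }
}
```

If the host name does not resolve on the Windows machine, the FileSystem calls in the programs here cannot reach the cluster, so this is worth checking before debugging the Hadoop code itself.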

File upload:

    import java.io.FileInputStream;
    import java.io.IOException;
    import java.io.InputStream;
    import java.io.OutputStream;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class HDFSUpload {

        private static InputStream input;
        private static OutputStream output;

        public static void main(String[] args) throws IOException {
            // Run as the HDFS user root
            System.setProperty("HADOOP_USER_NAME", "root");
            // Create the HDFS connection object client
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://izwz97mvztltnke7bj93idz:9000");
            conf.set("dfs.client.use.datanode.hostname", "true");
            FileSystem client = FileSystem.get(conf);

            /*
            // Local path of the file to upload
            String src = "C:/Users/Desktop/bcdf.txt";
            // Destination path in HDFS
            String hdfsDst = "/aadir";
            client.copyFromLocalFile(new Path(src), new Path(hdfsDst));
            System.out.println("Success");
            */

            // Create the local input stream
            input = new FileInputStream("D:\\xx编程\\bcdf.txt");
            // Create the HDFS output stream
            output = client.create(new Path("/aadir/about.txt"));
            // Write the file to HDFS
            byte[] buffer = new byte[1024];
            int len = 0;
            while ((len = input.read(buffer)) != -1) {
                output.write(buffer, 0, len);
            }
            // Flush so that no buffered data is lost
            output.flush();
            // The IOUtils utility class can also be used to upload or download
            input.close();
            output.close();
            client.close();
        }
    }
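The download and upload programs share the same read/write loop, and both leave their streams open if an exception is thrown halfway through. One possible way to tidy this up, sketched here with a hypothetical helper class StreamCopy that uses only java.io, is to move the loop into a method that closes both streams with try-with-resources:

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

/** Hypothetical helper: the copy loop from the programs above, with guaranteed cleanup. */
final class StreamCopy {

    /**
     * Copies everything from in to out using a 1024-byte buffer, closes both
     * streams even if an exception is thrown, and returns the bytes copied.
     */
    static long copy(InputStream in, OutputStream out) throws IOException {
        long total = 0;
        // try-with-resources closes dst first, then src, on every exit path
        try (InputStream src = in; OutputStream dst = out) {
            byte[] buffer = new byte[1024];
            int len;
            while ((len = src.read(buffer)) != -1) {
                dst.write(buffer, 0, len);
                total += len;
            }
            dst.flush();
        }
        return total;
    }
}
```

Because the streams returned by client.open(...) and client.create(...) are ordinary InputStream and OutputStream subclasses, the upload could then be written as StreamCopy.copy(new FileInputStream("D:\\xx编程\\bcdf.txt"), client.create(new Path("/aadir/about.txt"))), and the download the other way round.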

Directory creation:

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class HDFSMKdir {

        public static void main(String[] args) throws IOException {
            // Run as the HDFS user root
            System.setProperty("HADOOP_USER_NAME", "root");
            // Create the HDFS connection object client
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://izwz97mvztltnke7bj93idz:9000");
            FileSystem client = FileSystem.get(conf);
            // Create the directory /aadir, including any missing parents
            client.mkdirs(new Path("/aadir"));
            client.close();
            System.out.println("successfully!");
        }
    }

Check whether a file exists:

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class HDFSFilelfExist {

        public static void main(String[] args) throws IOException {
            // Run as the HDFS user root
            System.setProperty("HADOOP_USER_NAME", "root");
            // Create the HDFS connection object client
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://izwz97mvztltnke7bj93idz:9000");
            FileSystem client = FileSystem.get(conf);
            // Path of the file to check
            String fileName = "/bb.txt";
            if (client.exists(new Path(fileName))) {
                System.out.println("File exists");
            } else {
                System.out.println("File does not exist");
            }
            client.close();
        }
    }

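Basic HDFS commands:

hdfs dfs -ls /    # list the files and directories in the HDFS root directory

hdfs dfs -ls -R /    # list everything under the HDFS root directory, including subdirectories

hdfs dfs -mkdir /aa/bb    # create the directory bb under /aa in HDFS

hdfs dfs -rm -r /aa/bb    # delete the directory bb under /aa in HDFS

hdfs dfs -rm /aa/out.txt    # delete the file out.txt under /aa in HDFS

hdfs dfs -put /root/mk.txt /aa    # upload a local file to HDFS

hdfs dfs -copyFromLocal a.txt /    # upload a local file to HDFS

hdfs dfs -get /bb.txt bbcopy.txt    # download a file from HDFS to the local disk

hdfs dfs -copyToLocal /bb.txt bbcopy.txt    # download a file from HDFS to the local disk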
