Study Notes

Model Definition

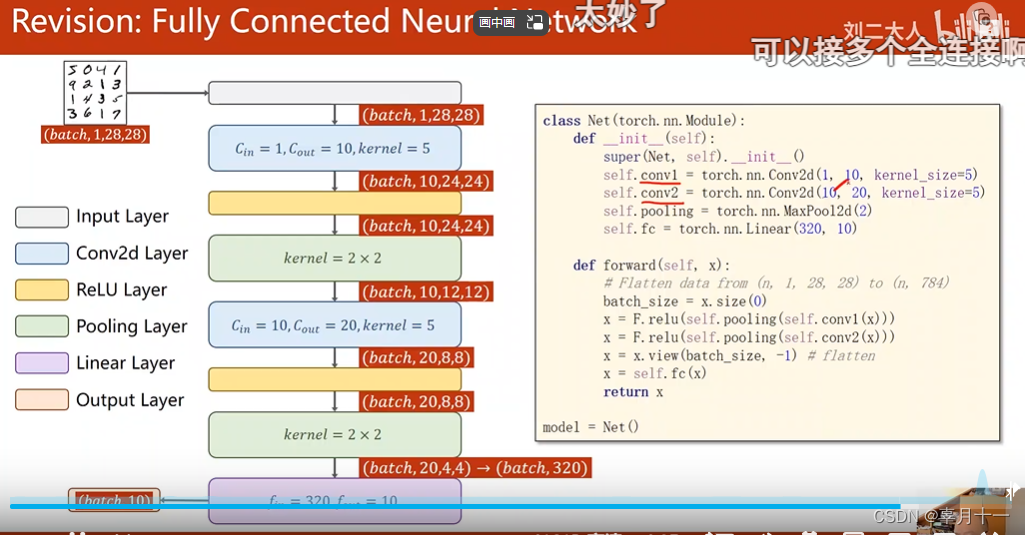

Schematic diagram of the network
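The first fully connected layer in ConvNet takes 320 inputs. That number comes from the convolution output-size formula out = floor((in + 2*padding - kernel) / stride) + 1, applied to a 28x28 MNIST image. Below is a minimal sketch that assumes a single-channel 28x28 input. It traces the shapes by hand and then cross-checks them with a dummy forward pass through the same conv/pool layers.

```python
import torch
import torch.nn as nn


def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial size after a Conv2d or MaxPool2d layer (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


# Trace one 28x28 image through the two conv + pool blocks by hand
s = 28
s = conv_out(s, kernel=5)            # conv1: 28 -> 24
s = conv_out(s, kernel=2, stride=2)  # pool:  24 -> 12
s = conv_out(s, kernel=5)            # conv2: 12 -> 8
s = conv_out(s, kernel=2, stride=2)  # pool:   8 -> 4
flat = 20 * s * s                    # 20 channels * 4 * 4
print(flat)  # 320

# Cross-check with real layers on a dummy batch of two images
features = nn.Sequential(
    nn.Conv2d(1, 10, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
    nn.Conv2d(10, 20, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
)
out = features(torch.zeros(2, 1, 28, 28))
print(out.shape)                        # torch.Size([2, 20, 4, 4])
print(out.view(out.size(0), -1).shape)  # torch.Size([2, 320])
```

Padding the convolutions (e.g. padding=2) would change these sizes, and the 320 in nn.Linear would then have to change with them.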

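```python
import torch.nn as nn


class ConvNet(nn.Module):
    def __init__(self):
        super(ConvNet, self).__init__()
        # Conv block 1: 5x5 convolution (1 -> 10 channels), ReLU, 2x2 max-pooling
        self.conv1 = nn.Sequential(
            nn.Conv2d(1, 10, kernel_size=5, stride=1, padding=0),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        # Conv block 2: 5x5 convolution (10 -> 20 channels), ReLU, 2x2 max-pooling
        self.conv2 = nn.Sequential(
            nn.Conv2d(10, 20, kernel_size=5, stride=1, padding=0),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        # Fully connected layers: 320 flattened features -> 10 class scores
        self.fc = nn.Sequential(
            nn.Linear(320, 128),
            nn.ReLU(),
            nn.Linear(128, 64),
            nn.ReLU(),
            nn.Linear(64, 10),
        )

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        # Flatten to (batch_size, 320) to prepare for the fully connected layers
        x = x.view(x.size(0), -1)
        # x = x.view(-1, 320)  # equivalent flatten with the size hard-coded
        x = self.fc(x)
        return x
```

Model Training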

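In the script below, each batch goes through the usual five steps: zero the gradients, run the forward pass, compute the loss, backpropagate, and update the parameters. The sketch here shows the same cycle. It assumes a hypothetical linear classifier on random data in place of ConvNet and MNIST. It also shows why the loss is read with loss.item() when it is accumulated.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-ins: a linear classifier on random 784-dim inputs
model = nn.Linear(784, 10)
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

running_loss = 0.0
for step in range(3):
    inputs = torch.randn(64, 784)
    target = torch.randint(0, 10, (64,))
    optimizer.zero_grad()                    # clear gradients from the previous step
    loss = criterion(model(inputs), target)  # forward pass + loss
    loss.backward()                          # backpropagation
    optimizer.step()                         # parameter update
    running_loss += loss.item()              # plain Python float, no graph kept

print(type(running_loss).__name__)  # float
print(loss.requires_grad)           # True: the tensor is still tied to its graph
```

Writing `running_loss += loss` instead would make `running_loss` a tensor that references every step's computation graph.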
```python
import torch
from torch.utils.data import DataLoader
from torchvision.datasets import mnist

from data.data_ import transform
from model_mnist.Net import ConvNet

# Load the training set
train_set = mnist.MNIST('./data', train=True, transform=transform, download=False)
train_loader = DataLoader(dataset=train_set, shuffle=True, batch_size=64, drop_last=True)

# Instantiate the model, loss function and optimizer
model = ConvNet()
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


def train(epoch):
    '''Train for one epoch.'''
    model.train()
    for index, data in enumerate(train_loader, 0):
        inputs, target = data              # fetch one batch
        optimizer.zero_grad()              # reset gradients
        outputs = model(inputs)            # forward pass
        loss = criterion(outputs, target)  # compute the loss
        loss.backward()                    # backpropagation
        optimizer.step()                   # update the parameters

        # Read the loss with item(); keeping the tensor itself would keep its computation graph alive
        if index % 300 == 299:
            print("Epoch {}, batch {}, loss: {}".format(epoch + 1, index + 1, loss.item()))

    # Save the model and optimizer state once per epoch, not once per batch
    torch.save(model.state_dict(), "model_mnist/model.pth")
    torch.save(optimizer.state_dict(), "model_mnist/optimizer.pth")


if __name__ == '__main__':
    for epoch in range(20):
        print("-------- Epoch {} training starts --------".format(epoch + 1))
        train(epoch)
```

Model Testing

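The evaluation below turns the output scores of each image into a predicted digit with torch.max(..., dim=1). This returns a (values, indices) tuple taken along each row. The tiny example uses hypothetical scores for three images over four classes (MNIST has ten). It shows what the call returns and how the correct count is formed.

```python
import torch

# Hypothetical logits: 3 images x 4 classes
output = torch.tensor([[0.1, 2.0, -1.0, 0.3],
                       [1.5, 0.2, 0.1, 0.0],
                       [-0.5, 0.0, 0.4, 3.2]])
labels = torch.tensor([1, 2, 3])

values, predict = torch.max(output, dim=1)  # max score and its index in each row
print(values)                               # the largest score per image
print(predict)                              # tensor([1, 0, 3])

correct = (predict == labels).sum().item()  # element-wise match, then count
print(correct, labels.size(0))              # 2 3
```

The values are raw scores, not probabilities. Applying softmax first would not change which index is largest.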
```python
import os

import torch
from torch.utils.data import DataLoader
from torchvision.datasets import mnist

from data.data_ import transform
from model_mnist.Net import ConvNet

# Evaluate every test image: no shuffling, and keep the last partial batch
test_set = mnist.MNIST('./data', train=False, transform=transform, download=False)
test_loader = DataLoader(dataset=test_set, shuffle=False, batch_size=64, drop_last=False)

model = ConvNet()
if os.path.exists('model_mnist/model.pth'):
    print("Loaded saved weights from model_mnist/model.pth")
    model.load_state_dict(torch.load("model_mnist/model.pth"))
else:
    print("No saved weights found; evaluating an untrained model")


def test():
    model.eval()
    correct = 0  # number of correct predictions
    total = 0    # number of samples evaluated
    with torch.no_grad():  # no gradients are needed for evaluation
        for data in test_loader:
            inputs, labels = data
            output = model(inputs)
            # output holds 10 scores per image; the index of the largest is the predicted digit
            # torch.max(..., dim=1) returns (max value, index of max) along each row
            _, predict = torch.max(output, dim=1)
            total += labels.size(0)  # labels has shape (batch_size,)
            # predict and labels both have shape (batch_size,); sum counts the matches
            correct += (predict == labels).sum().item()
    print("Test accuracy: %.6f" % (correct / total))


if __name__ == '__main__':
    test()
```

Accuracy

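The accuracy is correct / total, computed over every sample the test loader yields. With batch_size=64, the 10,000 MNIST test images do not split evenly, so drop_last=True would skip the final partial batch. The quick check below confirms the numbers. The range object is only a stand-in for the dataset, since only its length matters.

```python
from torch.utils.data import DataLoader

n_test = 10_000  # size of the MNIST test split
print(divmod(n_test, 64))  # (156, 16): 156 full batches, 16 images left over

print(len(DataLoader(range(n_test), batch_size=64, drop_last=True)))   # 156
print(len(DataLoader(range(n_test), batch_size=64, drop_last=False)))  # 157

# Fraction of the test set that drop_last=True would never evaluate
print(16 / n_test)  # 0.0016
```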