机器学习建模与评估

知识点:

1.数据集的划分

2.机器学习模型建模的三行代码

3.机器学习模型分类问题的评估

作业:尝试对心脏病数据集采用机器学习模型建模和评估

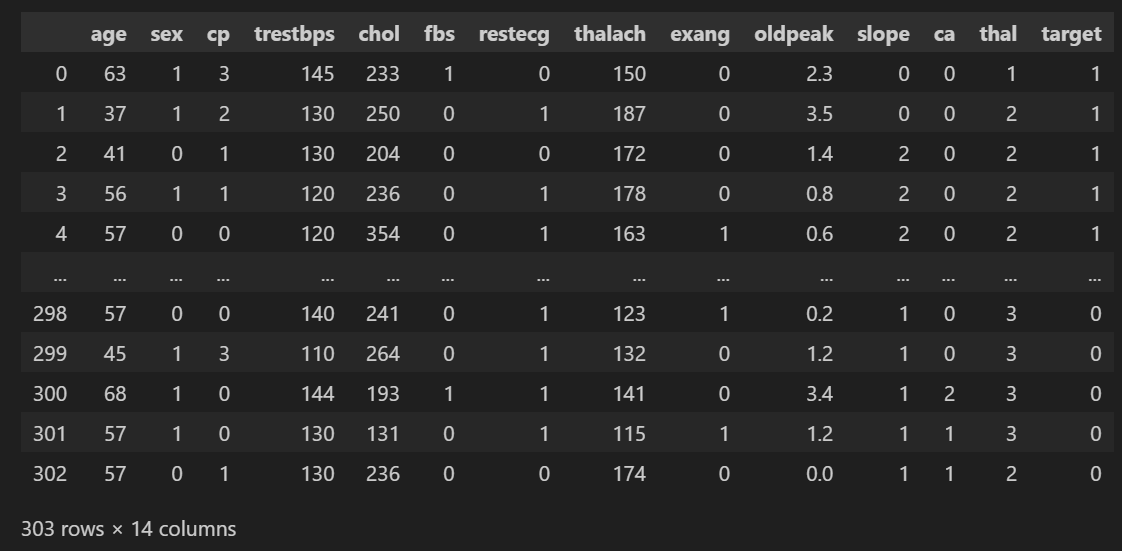

导入数据并查看

import pandas as pd

data = pd.read_csv(r'heart.csv')

data

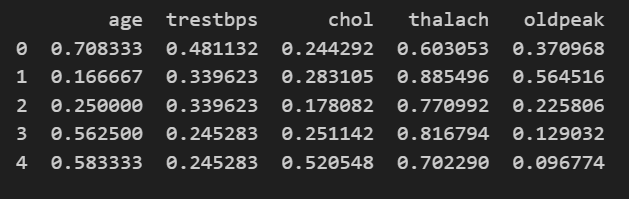

连续特征归一化

from sklearn.preprocessing import MinMaxScaler

# 定义需要归一化的特征列表

features_to_scale = ["age", "trestbps", "chol", "thalach", "oldpeak"]

# 初始化归一化器(范围默认 [0, 1])

scaler = MinMaxScaler()

# 对选定特征进行归一化

data[features_to_scale] = scaler.fit_transform(data[features_to_scale])

# 查看结果

print(data[features_to_scale].head())

数据划分

# 划分训练集和测试集

from sklearn.model_selection import train_test_split

X = data.drop(['target'], axis=1) # 特征,axis=1表示按列删除

y = data['target'] # 标签

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42) # 划分数据集,20%作为测试集,随机种子为42

# 训练集和测试集的形状

print(f"训练集形状: {X_train.shape}, 测试集形状: {X_test.shape}") # 打印训练集和测试集的形状模型训练

# #安装xgboost库

!pip install xgboost -i https://pypi.tuna.tsinghua.edu.cn/simple/

# #安装lightgbm库

!pip install lightgbm -i https://pypi.tuna.tsinghua.edu.cn/simple/

# #安装catboost库

!pip install catboost -i https://pypi.tuna.tsinghua.edu.cn/simple/

from sklearn.svm import SVC #支持向量机分类器

from sklearn.neighbors import KNeighborsClassifier #K近邻分类器

from sklearn.linear_model import LogisticRegression #逻辑回归分类器

import xgboost as xgb #XGBoost分类器

import lightgbm as lgb #LightGBM分类器

from sklearn.ensemble import RandomForestClassifier #随机森林分类器

from catboost import CatBoostClassifier #CatBoost分类器

from sklearn.tree import DecisionTreeClassifier #决策树分类器

from sklearn.naive_bayes import GaussianNB #高斯朴素贝叶斯分类器

from sklearn.metrics import accuracy_score, precision_score, recall_score, f1_score # 用于评估分类器性能的指标

from sklearn.metrics import classification_report, confusion_matrix #用于生成分类报告和混淆矩阵

import warnings #用于忽略警告信息

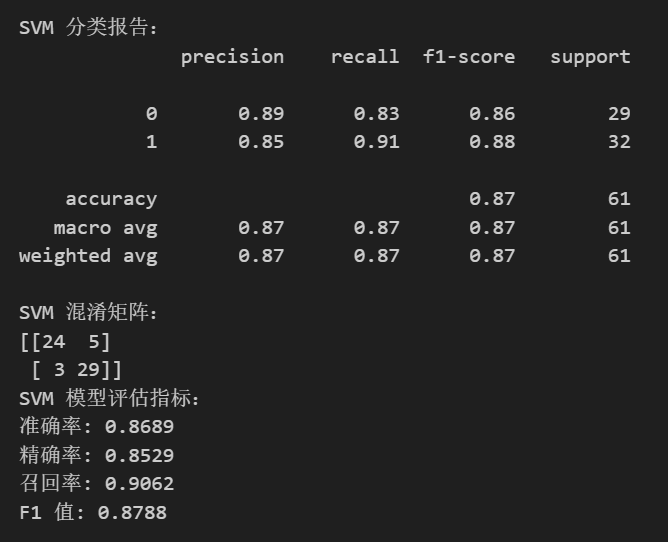

warnings.filterwarnings("ignore") # 忽略所有警告信息SVM

svm_model = SVC(random_state=42)

svm_model.fit(X_train, y_train)

svm_pred = svm_model.predict(X_test)

print("\nSVM 分类报告:")

print(classification_report(y_test, svm_pred)) # 打印分类报告

print("SVM 混淆矩阵:")

print(confusion_matrix(y_test, svm_pred)) # 打印混淆矩阵

# 计算 SVM 评估指标,这些指标默认计算正类的性能

svm_accuracy = accuracy_score(y_test, svm_pred)

svm_precision = precision_score(y_test, svm_pred)

svm_recall = recall_score(y_test, svm_pred)

svm_f1 = f1_score(y_test, svm_pred)

print("SVM 模型评估指标:")

print(f"准确率: {svm_accuracy:.4f}")

print(f"精确率: {svm_precision:.4f}")

print(f"召回率: {svm_recall:.4f}")

print(f"F1 值: {svm_f1:.4f}")

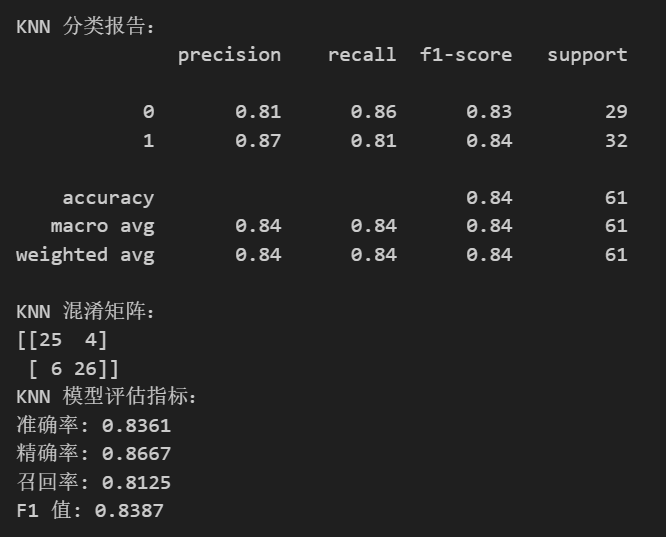

KNN

knn_model = KNeighborsClassifier()

knn_model.fit(X_train, y_train)

knn_pred = knn_model.predict(X_test)

print("\nKNN 分类报告:")

print(classification_report(y_test, knn_pred))

print("KNN 混淆矩阵:")

print(confusion_matrix(y_test, knn_pred))

knn_accuracy = accuracy_score(y_test, knn_pred)

knn_precision = precision_score(y_test, knn_pred)

knn_recall = recall_score(y_test, knn_pred)

knn_f1 = f1_score(y_test, knn_pred)

print("KNN 模型评估指标:")

print(f"准确率: {knn_accuracy:.4f}")

print(f"精确率: {knn_precision:.4f}")

print(f"召回率: {knn_recall:.4f}")

print(f"F1 值: {knn_f1:.4f}")

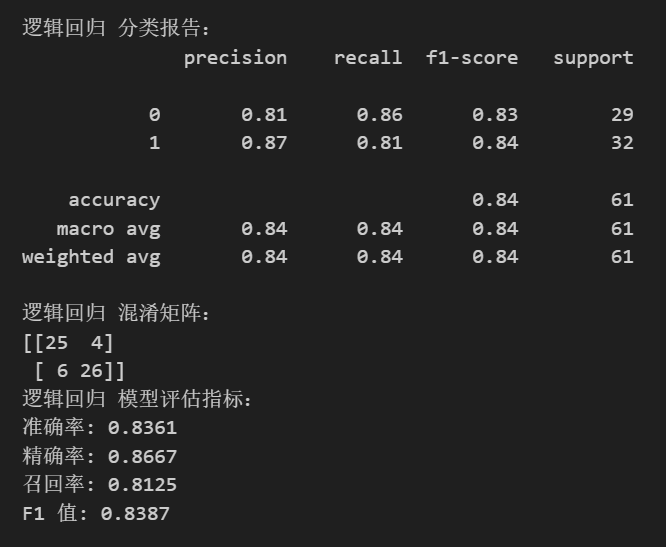

逻辑回归

logreg_model = LogisticRegression(random_state=42)

logreg_model.fit(X_train, y_train)

logreg_pred = logreg_model.predict(X_test)

print("\n逻辑回归 分类报告:")

print(classification_report(y_test, logreg_pred))

print("逻辑回归 混淆矩阵:")

print(confusion_matrix(y_test, logreg_pred))

logreg_accuracy = accuracy_score(y_test, logreg_pred)

logreg_precision = precision_score(y_test, logreg_pred)

logreg_recall = recall_score(y_test, logreg_pred)

logreg_f1 = f1_score(y_test, logreg_pred)

print("逻辑回归 模型评估指标:")

print(f"准确率: {logreg_accuracy:.4f}")

print(f"精确率: {logreg_precision:.4f}")

print(f"召回率: {logreg_recall:.4f}")

print(f"F1 值: {logreg_f1:.4f}")

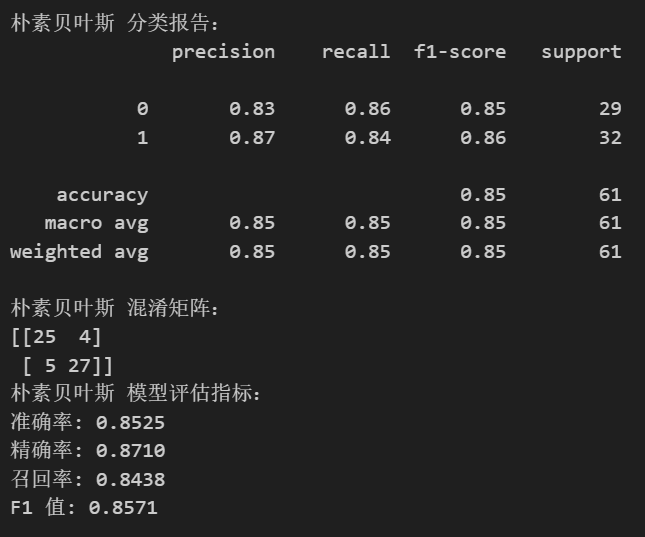

朴素贝叶斯

nb_model = GaussianNB()

nb_model.fit(X_train, y_train)

nb_pred = nb_model.predict(X_test)

print("\n朴素贝叶斯 分类报告:")

print(classification_report(y_test, nb_pred))

print("朴素贝叶斯 混淆矩阵:")

print(confusion_matrix(y_test, nb_pred))

nb_accuracy = accuracy_score(y_test, nb_pred)

nb_precision = precision_score(y_test, nb_pred)

nb_recall = recall_score(y_test, nb_pred)

nb_f1 = f1_score(y_test, nb_pred)

print("朴素贝叶斯 模型评估指标:")

print(f"准确率: {nb_accuracy:.4f}")

print(f"精确率: {nb_precision:.4f}")

print(f"召回率: {nb_recall:.4f}")

print(f"F1 值: {nb_f1:.4f}")

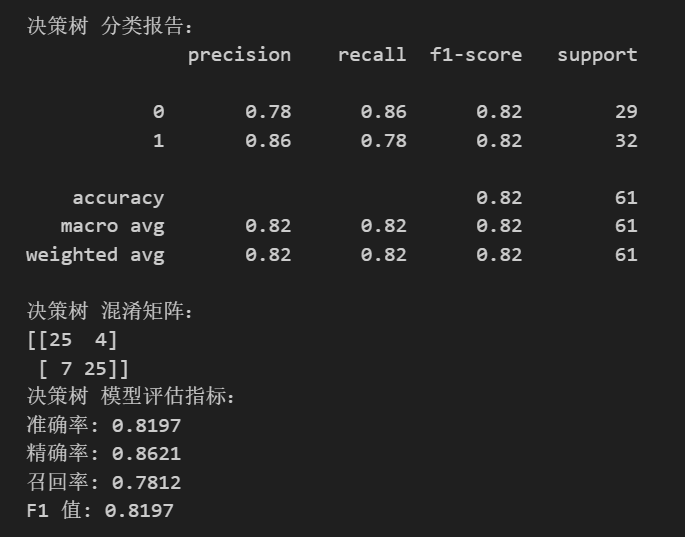

决策树

dt_model = DecisionTreeClassifier(random_state=42)

dt_model.fit(X_train, y_train)

dt_pred = dt_model.predict(X_test)

print("\n决策树 分类报告:")

print(classification_report(y_test, dt_pred))

print("决策树 混淆矩阵:")

print(confusion_matrix(y_test, dt_pred))

dt_accuracy = accuracy_score(y_test, dt_pred)

dt_precision = precision_score(y_test, dt_pred)

dt_recall = recall_score(y_test, dt_pred)

dt_f1 = f1_score(y_test, dt_pred)

print("决策树 模型评估指标:")

print(f"准确率: {dt_accuracy:.4f}")

print(f"精确率: {dt_precision:.4f}")

print(f"召回率: {dt_recall:.4f}")

print(f"F1 值: {dt_f1:.4f}")

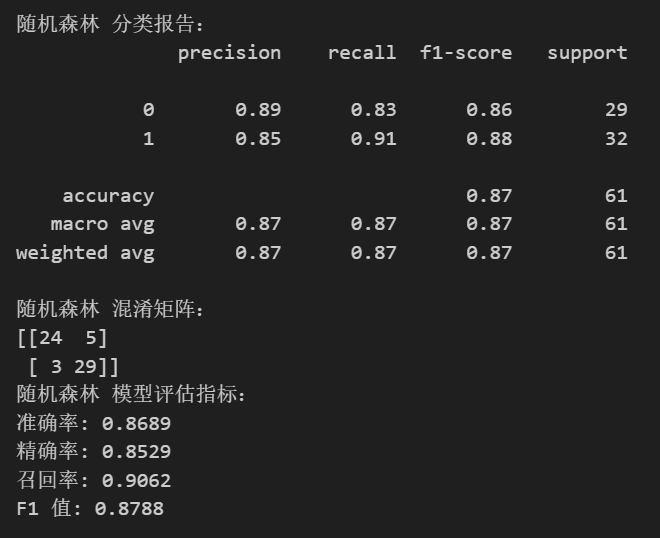

随机森林

rf_model = RandomForestClassifier(random_state=42)

rf_model.fit(X_train, y_train)

rf_pred = rf_model.predict(X_test)

print("\n随机森林 分类报告:")

print(classification_report(y_test, rf_pred))

print("随机森林 混淆矩阵:")

print(confusion_matrix(y_test, rf_pred))

rf_accuracy = accuracy_score(y_test, rf_pred)

rf_precision = precision_score(y_test, rf_pred)

rf_recall = recall_score(y_test, rf_pred)

rf_f1 = f1_score(y_test, rf_pred)

print("随机森林 模型评估指标:")

print(f"准确率: {rf_accuracy:.4f}")

print(f"精确率: {rf_precision:.4f}")

print(f"召回率: {rf_recall:.4f}")

print(f"F1 值: {rf_f1:.4f}")

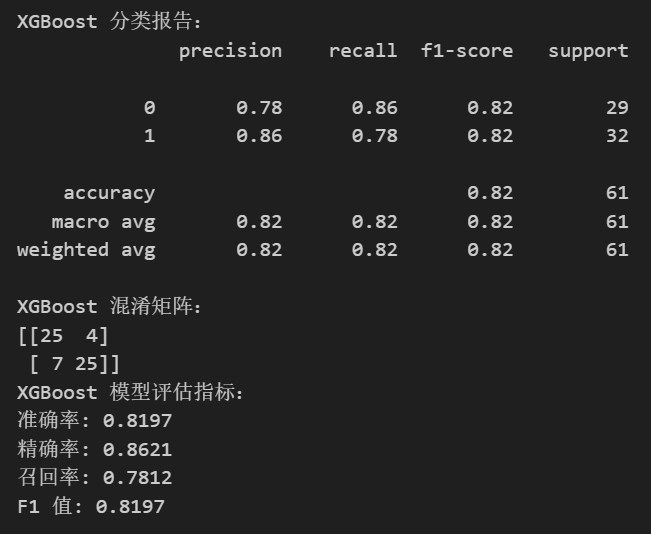

XGBoost

xgb_model = xgb.XGBClassifier(random_state=42)

xgb_model.fit(X_train, y_train)

xgb_pred = xgb_model.predict(X_test)

print("\nXGBoost 分类报告:")

print(classification_report(y_test, xgb_pred))

print("XGBoost 混淆矩阵:")

print(confusion_matrix(y_test, xgb_pred))

xgb_accuracy = accuracy_score(y_test, xgb_pred)

xgb_precision = precision_score(y_test, xgb_pred)

xgb_recall = recall_score(y_test, xgb_pred)

xgb_f1 = f1_score(y_test, xgb_pred)

print("XGBoost 模型评估指标:")

print(f"准确率: {xgb_accuracy:.4f}")

print(f"精确率: {xgb_precision:.4f}")

print(f"召回率: {xgb_recall:.4f}")

print(f"F1 值: {xgb_f1:.4f}")

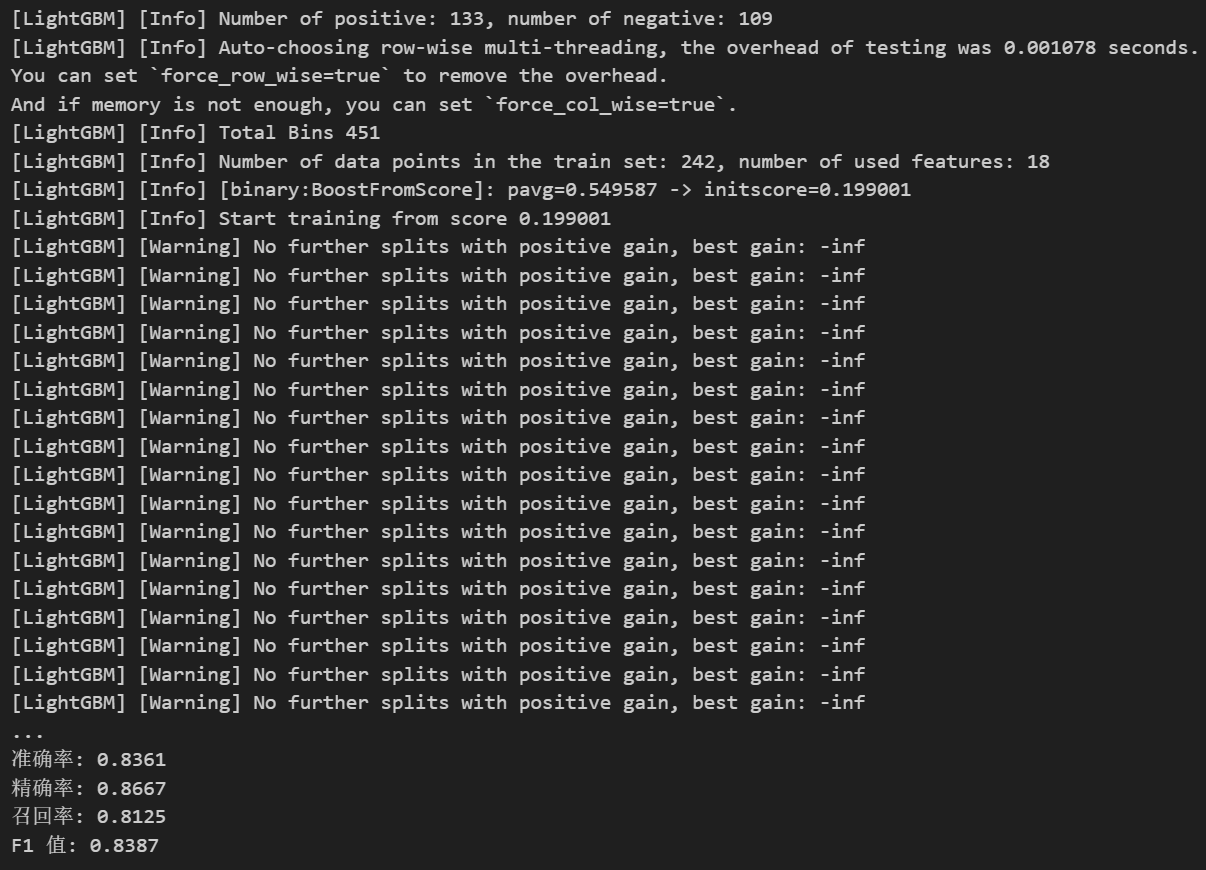

LightGBM

lgb_model = lgb.LGBMClassifier(random_state=42)

lgb_model.fit(X_train, y_train)

lgb_pred = lgb_model.predict(X_test)

print("\nLightGBM 分类报告:")

print(classification_report(y_test, lgb_pred))

print("LightGBM 混淆矩阵:")

print(confusion_matrix(y_test, lgb_pred))

lgb_accuracy = accuracy_score(y_test, lgb_pred)

lgb_precision = precision_score(y_test, lgb_pred)

lgb_recall = recall_score(y_test, lgb_pred)

lgb_f1 = f1_score(y_test, lgb_pred)

print("LightGBM 模型评估指标:")

print(f"准确率: {lgb_accuracy:.4f}")

print(f"精确率: {lgb_precision:.4f}")

print(f"召回率: {lgb_recall:.4f}")

print(f"F1 值: {lgb_f1:.4f}")

没有看到分类报告和混淆矩阵,询问deepseek得知,分类报告和混淆矩阵实际上已经生成,但可能被 LightGBM 的训练信息日志覆盖了。

解决方案:

方法一:抑制 LightGBM 的训练日志

在初始化 LGBMClassifier 时,设置 verbosity=-1 关闭训练日志

方法二:捕获并清理输出(适用于 Notebook)

在 Jupyter Notebook 中,使用 %%capture 魔法命令捕获所有输出

方法三:手动滚动控制台查看完整输出

如果使用终端或 IDE,直接向上滚动查找 LightGBM 分类报告 和 LightGBM 混淆矩阵 的输出

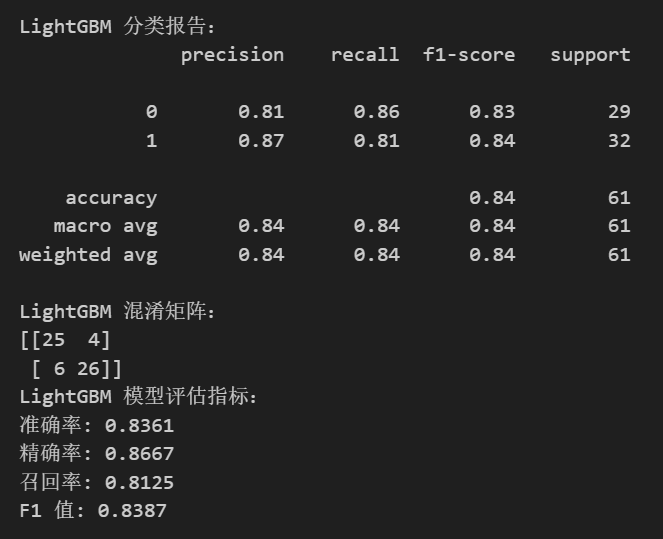

修改后的代码(关闭日志)

lgb_model = lgb.LGBMClassifier(random_state=42, verbosity=-1) # 关闭日志

lgb_model.fit(X_train, y_train)

lgb_pred = lgb_model.predict(X_test)

print("\nLightGBM 分类报告:")

print(classification_report(y_test, lgb_pred))

print("LightGBM 混淆矩阵:")

print(confusion_matrix(y_test, lgb_pred))

lgb_accuracy = accuracy_score(y_test, lgb_pred)

lgb_precision = precision_score(y_test, lgb_pred)

lgb_recall = recall_score(y_test, lgb_pred)

lgb_f1 = f1_score(y_test, lgb_pred)

print("LightGBM 模型评估指标:")

print(f"准确率: {lgb_accuracy:.4f}")

print(f"精确率: {lgb_precision:.4f}")

print(f"召回率: {lgb_recall:.4f}")

print(f"F1 值: {lgb_f1:.4f}")

@浙大疏锦行

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?