Causes of partition rebalancing (Rebalance)

-

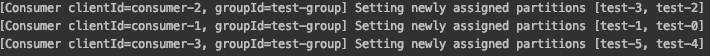

group有新的consumer加入

-

topic分区数变更

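- No heartbeat between the consumer and the broker

Defaults: session.timeout.ms = 10000, heartbeat.interval.ms = 3000 (these are the defaults of older clients; since Kafka 3.0 the default session.timeout.ms is 45000).

session.timeout.ms >= n * heartbeat.interval.ms

The consumer sends a heartbeat every 3 seconds. If no heartbeat arrives for longer than session.timeout.ms, that consumer instance is removed from the group and the remaining consumers rebalance the partitions.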
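The relationship between the two timeouts can be checked with a few lines of plain Java. This is a minimal sketch that only uses `java.util.Properties` and the config keys quoted above; the class name `HeartbeatConfigSketch`, the helper `leavesRoomForHeartbeats`, and the choice n = 3 are assumptions made for illustration (the Kafka documentation suggests keeping heartbeat.interval.ms at no more than one third of session.timeout.ms).

```java
import java.util.Properties;

final class HeartbeatConfigSketch {

    /** Consumer properties carrying the two timeout values quoted in the text. */
    static Properties consumerProps() {
        Properties props = new Properties();
        props.put("session.timeout.ms", "10000");
        props.put("heartbeat.interval.ms", "3000");
        return props;
    }

    /** Returns true when session.timeout.ms >= n * heartbeat.interval.ms. */
    static boolean leavesRoomForHeartbeats(Properties props, int n) {
        long session = Long.parseLong(props.getProperty("session.timeout.ms"));
        long heartbeat = Long.parseLong(props.getProperty("heartbeat.interval.ms"));
        return session >= n * heartbeat;
    }

    public static void main(String[] args) {
        Properties props = consumerProps();
        // n = 3 is an assumed rule of thumb: three missed heartbeats fit in one session timeout.
        System.out.println(leavesRoomForHeartbeats(props, 3)); // true  (10000 >= 9000)
        System.out.println(leavesRoomForHeartbeats(props, 4)); // false (10000 <  12000)
    }
}
```

The same `Properties` object can be passed on to a consumer; only the two timeout keys are set here to keep the sketch focused.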

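- The consumer's business logic takes too long, max.poll.interval.ms is set too small, or max.poll.records is set too large. Any of these three situations can also trigger a Rebalance.

Scenario: a consumer instance calls poll() on its assigned partitions and then processes the messages. If processing takes longer than max.poll.interval.ms, the next poll() does not happen in time, so the group starts a Rebalance and reassigns the partitions. With manual commits (enable.auto.commit = false), the next attempt to commit offsets throws CommitFailedException, because the partition has already been reassigned to another consumer and the commit fails. The problem is that the partition's new consumer knows nothing about the failed commit. It resumes from the last committed offset, which leads to duplicate consumption.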

org.apache.kafka.clients.consumer.CommitFailedException: Commit cannot be completed since the group has already rebalanced and assigned the partitions to another member. This means that the time between subsequent calls to poll() was longer than the configured max.poll.interval.ms, which typically implies that the poll loop is spending too much time message processing. You can address this either by increasing the session timeout or by reducing the maximum size of batches returned in poll() with max.poll.records.
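The exception message points at two knobs: the time allowed between polls and the batch size. A rough way to reason about them is that the worst-case time to process one batch, max.poll.records multiplied by the per-record processing time, has to stay below max.poll.interval.ms. The sketch below does that arithmetic in plain Java. The defaults (300000 ms and 500 records) are Kafka's; the class name `PollBudgetSketch`, its methods, and the 1 second per record figure are hypothetical.

```java
final class PollBudgetSketch {

    /** Worst-case time to process one batch, in milliseconds. */
    static long worstCaseBatchMillis(int maxPollRecords, long perRecordMillis) {
        return (long) maxPollRecords * perRecordMillis;
    }

    /** Largest max.poll.records whose worst-case batch time still fits in the poll interval. */
    static int largestSafeBatch(long maxPollIntervalMs, long perRecordMillis) {
        if (perRecordMillis <= 0) {
            throw new IllegalArgumentException("perRecordMillis must be positive");
        }
        return (int) Math.min(Integer.MAX_VALUE, maxPollIntervalMs / perRecordMillis);
    }

    public static void main(String[] args) {
        long maxPollIntervalMs = 300_000; // Kafka default for max.poll.interval.ms
        int maxPollRecords = 500;         // Kafka default for max.poll.records
        long perRecordMillis = 1_000;     // hypothetical: 1 s of business logic per record

        long worstCase = worstCaseBatchMillis(maxPollRecords, perRecordMillis);
        System.out.println(worstCase > maxPollIntervalMs);                        // true: a full batch would overrun
        System.out.println(largestSafeBatch(maxPollIntervalMs, perRecordMillis)); // 300
    }
}
```

With these assumed numbers, either max.poll.records would have to drop to 300 or below, or max.poll.interval.ms would have to be raised above 500000.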

Partition assignment strategies

Range

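p: number of partitions, c: number of consumers, p / c = n, p % c = m

The first m consumers are each assigned n + 1 partitions; the remaining consumers are assigned n partitions each.

5 partitions

6 partitions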
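The Range rule above can be written out as a short calculation. This is a minimal sketch for a single topic, not Kafka's `RangeAssignor` itself; the class name `RangeAssignmentSketch` and the choice of 3 consumers for the 5-partition and 6-partition cases are assumptions.

```java
import java.util.ArrayList;
import java.util.List;

final class RangeAssignmentSketch {

    /** Returns, for each consumer in order, the partition numbers it owns for one topic. */
    static List<List<Integer>> assign(int partitions, int consumers) {
        int n = partitions / consumers; // base share per consumer
        int m = partitions % consumers; // the first m consumers get one extra partition
        List<List<Integer>> result = new ArrayList<>();
        int next = 0;
        for (int i = 0; i < consumers; i++) {
            int count = n + (i < m ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int j = 0; j < count; j++) {
                owned.add(next++); // ranges are contiguous: 0..count-1, then the next block
            }
            result.add(owned);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(assign(5, 3)); // [[0, 1], [2, 3], [4]]
        System.out.println(assign(6, 3)); // [[0, 1], [2, 3], [4, 5]]
    }
}
```

Because the rule is applied per topic, the first consumers collect the extra partition of every topic whose partition count is not divisible by the number of consumers.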

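RoundRobin (round-robin scheduling)

Collects all consumers and partitions and then hands out the partitions in round-robin order, which usually gives a fairly even assignment. In some scenarios the result is uneven, for example when consumers in the same group subscribe to different topics.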

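Sticky (sticky assignment)

Sticky assignment has two goals. First, it keeps the assignment as balanced as possible. Second, it changes as little as possible during a Rebalance. When a Rebalance occurs:

RoundRobin re-collects all partition and consumer information and reassigns every partition from scratch, so many partitions move and the rebalance is slower.

Sticky preserves as many existing assignments as possible and only reassigns the partitions that have to move, so few partitions move and the rebalance is faster.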
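The difference in partition movement can be illustrated with a toy model. The sketch below is a simplified illustration, not the algorithm of Kafka's `StickyAssignor`: the class `StickyVersusRoundRobinSketch`, the single 6-partition topic, and the consumers c1, c2, c3 are all assumptions. It compares what happens when c2 leaves the group.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class StickyVersusRoundRobinSketch {

    /** Round-robin from scratch: partition p goes to consumer p % consumers.size(). */
    static Map<String, List<Integer>> roundRobin(List<String> consumers, int partitions) {
        Map<String, List<Integer>> out = new TreeMap<>();
        for (String c : consumers) out.put(c, new ArrayList<>());
        for (int p = 0; p < partitions; p++) {
            out.get(consumers.get(p % consumers.size())).add(p);
        }
        return out;
    }

    /** Sticky-style: survivors keep what they own; orphaned partitions go to the least-loaded survivor. */
    static Map<String, List<Integer>> stickyAfterLeave(Map<String, List<Integer>> before, String leaving) {
        Map<String, List<Integer>> out = new TreeMap<>();
        before.forEach((c, owned) -> {
            if (!c.equals(leaving)) out.put(c, new ArrayList<>(owned));
        });
        for (int p : before.getOrDefault(leaving, Collections.emptyList())) {
            String target = out.keySet().stream()
                    .min(Comparator.comparingInt(c -> out.get(c).size()))
                    .orElseThrow(IllegalStateException::new);
            out.get(target).add(p);
        }
        return out;
    }

    /** Counts partitions a surviving consumer owned before but no longer owns afterwards. */
    static int movedBetweenSurvivors(Map<String, List<Integer>> before, Map<String, List<Integer>> after) {
        int moved = 0;
        for (Map.Entry<String, List<Integer>> e : after.entrySet()) {
            for (int p : before.get(e.getKey())) {
                if (!e.getValue().contains(p)) moved++;
            }
        }
        return moved;
    }

    public static void main(String[] args) {
        Map<String, List<Integer>> before = roundRobin(Arrays.asList("c1", "c2", "c3"), 6);
        Map<String, List<Integer>> rrAfter = roundRobin(Arrays.asList("c1", "c3"), 6);
        Map<String, List<Integer>> stickyAfter = stickyAfterLeave(before, "c2");
        System.out.println(movedBetweenSurvivors(before, rrAfter));     // 2
        System.out.println(movedBetweenSurvivors(before, stickyAfter)); // 0
    }
}
```

In this model both results are balanced (3 partitions per survivor), but reassigning from scratch also shuffles two partitions between consumers that never left, while the sticky-style version only hands out the two partitions that c2 gave up.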

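Scenario:

If the consumers in one consumer group subscribe to different topics, round-robin assignment can produce an uneven distribution. A consumer that has not subscribed to a topic is never assigned any of that topic's partitions. For example:

test has 6 partitions, test1 has 3 partitions

c1 subscribes to test and test1

c2 subscribes to test

c3 subscribes to test

RoundRobin produces an uneven assignment here: c1 ends up with 5 partitions (2 from test plus all 3 of test1), while c2 and c3 get 2 each.

Sticky does better under the same conditions: test1 is still assigned entirely to c1, its only subscriber, but the partitions of test can be shifted toward c2 and c3, so the overall assignment is more even.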
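The uneven RoundRobin result for this scenario can be reproduced with a small simulation. This is a sketch in plain Java that mimics the round-robin idea (walk the sorted partitions, skip consumers that are not subscribed to the topic); it is not Kafka's `RoundRobinAssignor` class, and the names `RoundRobinSkewSketch`, `assign`, and `blogScenario` are made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;

final class RoundRobinSkewSketch {

    /** Hands out partitions in round-robin order, skipping consumers not subscribed to the topic. */
    static Map<String, List<String>> assign(Map<String, Integer> partitionsPerTopic,
                                            Map<String, Set<String>> subscriptions) {
        List<String> consumers = new ArrayList<>(new TreeMap<>(subscriptions).keySet()); // sorted ids
        Map<String, List<String>> assignment = new TreeMap<>();
        for (String c : consumers) assignment.put(c, new ArrayList<>());

        int cursor = 0; // position in the circular list of consumers
        for (Map.Entry<String, Integer> topic : new TreeMap<>(partitionsPerTopic).entrySet()) {
            String name = topic.getKey();
            boolean hasSubscriber = subscriptions.values().stream().anyMatch(s -> s.contains(name));
            if (!hasSubscriber) continue; // nobody can own these partitions
            for (int p = 0; p < topic.getValue(); p++) {
                while (!subscriptions.get(consumers.get(cursor % consumers.size())).contains(name)) {
                    cursor++; // skip consumers that did not subscribe to this topic
                }
                assignment.get(consumers.get(cursor % consumers.size())).add(name + "-" + p);
                cursor++;
            }
        }
        return assignment;
    }

    /** The scenario from the text: test (6 partitions), test1 (3 partitions), consumers c1..c3. */
    static Map<String, List<String>> blogScenario() {
        Map<String, Integer> partitions = new TreeMap<>();
        partitions.put("test", 6);
        partitions.put("test1", 3);
        Map<String, Set<String>> subscriptions = new TreeMap<>();
        subscriptions.put("c1", new HashSet<>(Arrays.asList("test", "test1")));
        subscriptions.put("c2", new HashSet<>(Arrays.asList("test")));
        subscriptions.put("c3", new HashSet<>(Arrays.asList("test")));
        return assign(partitions, subscriptions);
    }

    public static void main(String[] args) {
        blogScenario().forEach((consumer, owned) ->
                System.out.println(consumer + " (" + owned.size() + "): " + owned));
    }
}
```

In this simulation c1 receives test-0, test-3 and all three test1 partitions, while c2 and c3 receive two test partitions each. A balanced outcome for the same subscriptions would be three partitions per consumer.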

Custom random partition assignment strategy

    props.put("partition.assignment.strategy", Collections.singletonList(MyAssignor.class));

    package com.springboot.learnning;

    import org.apache.kafka.clients.consumer.internals.AbstractPartitionAssignor;
    import org.apache.kafka.common.TopicPartition;

    import java.util.*;

    /**
     * Assigns every partition to a randomly chosen consumer that subscribes to its topic.
     *
     * @author weizeyuan
     */
    public class MyAssignor extends AbstractPartitionAssignor {

        // One shared generator instead of creating a new Random for every partition
        private final Random random = new Random();

        @Override
        public String name() {
            return "my";
        }

        /** Builds a map from each topic to the ids of the consumers subscribed to it. */
        private Map<String, List<String>> consumersPerTopic(Map<String, Subscription> consumerMetadata) {
            Map<String, List<String>> topicConsumer = new HashMap<>();
            for (Map.Entry<String, Subscription> subscriptionEntry : consumerMetadata.entrySet()) {
                String consumerId = subscriptionEntry.getKey();
                for (String topic : subscriptionEntry.getValue().topics()) {
                    // put(...) is the static helper inherited from AbstractPartitionAssignor
                    put(topicConsumer, topic, consumerId);
                }
            }
            return topicConsumer;
        }

        @Override
        public Map<String, List<TopicPartition>> assign(Map<String, Integer> partitionsPerTopic,
                                                        Map<String, Subscription> subscriptions) {
            // Holds the resulting assignment: consumer id -> partitions
            Map<String, List<TopicPartition>> assignment = new HashMap<>();
            for (String memberId : subscriptions.keySet()) {
                assignment.put(memberId, new ArrayList<>());
            }
            // Subscribers of each topic
            Map<String, List<String>> topicConsumer = consumersPerTopic(subscriptions);
            topicConsumer.forEach((topicStr, consumerList) -> {
                int consumerNum = consumerList.size();
                Integer partitionNum = partitionsPerTopic.get(topicStr);
                if (partitionNum == null) {
                    // No partition metadata for this topic, nothing to assign
                    return;
                }
                // All partitions of the current topic
                List<TopicPartition> partitions = AbstractPartitionAssignor.partitions(topicStr, partitionNum);
                partitions.forEach(partition -> {
                    // Pick a random subscriber for this partition
                    String consumer = consumerList.get(random.nextInt(consumerNum));
                    assignment.get(consumer).add(partition);
                });
            });
            return assignment;
        }
    }
