Kafka Cluster Environment Installation

- Downloads:

JDK 1.8 or later is required.

JDK installation guide: http://blog.csdn.net/yuan_xw/article/details/49948285

Zookeeper installation guide: http://blog.csdn.net/yuan_xw/article/details/47148401

Kafka download: http://mirrors.shu.edu.cn/apache/kafka/1.0.0/kafka_2.11-1.0.0.tgz

Kafka Cluster Plan

| Hostname | IP | Installed software |
|---|---|---|
| Kafka1 | 192.168.1.221 | JDK, Zookeeper, Kafka |
| Kafka2 | 192.168.1.222 | JDK, Zookeeper, Kafka |
| Kafka3 | 192.168.1.223 | JDK, Zookeeper, Kafka |

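1. Configure passwordless SSH login:

Generate a key pair by running ssh-keygen -t rsa and pressing Enter at each prompt to accept the defaults. The key files are stored in the ~/.ssh directory.

On 192.168.1.221, generate a key pair and copy the public key to every node, including the node itself, by running: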

ssh-copy-id 192.168.1.221

ssh-copy-id 192.168.1.222

ssh-copy-id 192.168.1.223

On 192.168.1.222, generate a key pair and copy the public key to every node, including the node itself, by running:

ssh-copy-id 192.168.1.221

ssh-copy-id 192.168.1.222

ssh-copy-id 192.168.1.223

On 192.168.1.223, generate a key pair and copy the public key to every node, including the node itself, by running:

ssh-copy-id 192.168.1.221

ssh-copy-id 192.168.1.222

ssh-copy-id 192.168.1.223

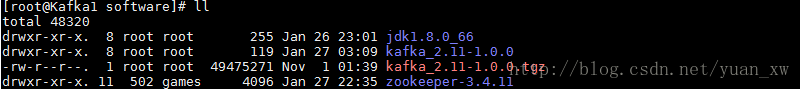

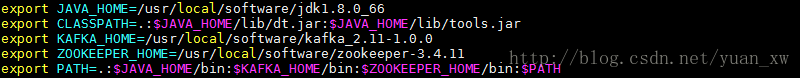
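The key distribution above repeats the same three ssh-copy-id commands on every host, so it can be wrapped in a small loop. The following is only a sketch: the NODES variable, the copy_key_to_all function, and the DRY_RUN switch are hypothetical names introduced here for illustration, not part of the original tutorial. By default it only prints the commands it would run.

```shell
#!/bin/sh
# Node IPs taken from the cluster plan table.
NODES="192.168.1.221 192.168.1.222 192.168.1.223"

# Copy the local public key to every node, including this one.
# With DRY_RUN=1 (the default in this sketch) the commands are only printed.
copy_key_to_all() {
  for node in $NODES; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "ssh-copy-id $node"
    else
      ssh-copy-id "$node"
    fi
  done
}

copy_key_to_all
```

To perform the real copy, run the script with DRY_RUN=0 on each of the three hosts after generating that host's key pair with ssh-keygen. Each ssh-copy-id call will still ask for the target node's password once.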

- Set the environment variables on all servers:

export JAVA_HOME=/usr/local/software/jdk1.8.0_66

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export KAFKA_HOME=/usr/local/software/kafka_2.11-1.0.0

export ZOOKEEPER_HOME=/usr/local/software/zookeeper-3.4.11

export PATH=.:$JAVA_HOME/bin:$KAFKA_HOME/bin:$ZOOKEEPER_HOME/bin:$PATH

Refresh the environment variables: source /etc/profile
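After refreshing the profile, it can be worth confirming that the three home variables are set and point at existing directories before going further. The check below is a minimal sketch. The check_env function is a hypothetical helper introduced here, and it assumes the software was unpacked to the paths used in the exports above.

```shell
#!/bin/sh
# Report whether JAVA_HOME, KAFKA_HOME and ZOOKEEPER_HOME are set
# and point at existing directories. Returns non-zero on any problem.
check_env() {
  status=0
  for name in JAVA_HOME KAFKA_HOME ZOOKEEPER_HOME; do
    # Look up the value of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "$name is not set"
      status=1
    elif [ ! -d "$value" ]; then
      echo "$name=$value (directory missing)"
      status=1
    else
      echo "$name=$value"
    fi
  done
  return $status
}

check_env || echo "environment incomplete, re-check the exports and run: source /etc/profile"
```

Running it on each server gives a quick way to spot a node where the exports were mistyped or the archive was unpacked to a different location.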

- Stop the firewall on all servers:

systemctl stop firewalld.service

system
