[root@~]#vi /etc/sysconfig/network-scripts/ifcfg-ens3

BOOTPROTO=static

IPADDR=192.168.63.101

GATEWAY=192.168.63.2

DNS1=8.8.8.8

ONBOOT=yes

[root@~]#systemctl restart network

[root@~]#hostnamectl set-hostname hadoop101

[root@~]#hostnamectl set-hostname hadoop102

[root@~]#hostnamectl set-hostname hadoop103

[root@~]#vi /etc/hosts

192.168.63.101 hadoop101

192.168.63.102 hadoop102

192.168.63.103 hadoop103
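The three entries above follow one pattern, so they can be generated instead of typed by hand. A minimal sketch, assuming the hosts keep the hadoopNNN naming and that NNN matches the last octet of the address; `gen_hosts` is a hypothetical helper name, not part of any tool:

```shell
#!/bin/bash
# Print one /etc/hosts line per node.
# Assumption: host number == last octet of the IP, as in the entries above.
gen_hosts() {
    local prefix=$1 n=$2 last=$3
    while [ "$n" -le "$last" ]; do
        echo "$prefix.$n hadoop$n"
        n=$((n + 1))
    done
}

# Hypothetical usage: review the output first, then append it yourself.
# gen_hosts 192.168.63 101 103 >> /etc/hosts
```

Printing to stdout instead of writing the file directly keeps the helper safe to run repeatedly while checking the result.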

Start with a single node:

[root@~]#mkdir /opt/module

[root@~]#mkdir /opt/software

Transfer the JDK and Hadoop archives to /opt/software

[root@~]#tar -zxvf /opt/software/jdk-8u241-linux-x64.tar.gz -C /opt/module/

[root@~]#tar -zxvf /opt/software/hadoop-2.7.2.tar.gz -C /opt/module/

[root@~]#vi /etc/profile

#JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_241

export PATH=$PATH:$JAVA_HOME/bin

#HADOOP_HOME

export HADOOP_HOME=/opt/module/hadoop-2.7.2

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin
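Because /etc/profile is sourced more than once in this walkthrough, the plain `PATH=$PATH:...` lines add the same directories again each time. A minimal sketch of an idempotent alternative, assuming the same install paths as above; `path_append` is a hypothetical helper name:

```shell
#!/bin/bash
# Append a directory to a colon-separated list only if it is not there yet.
path_append() {
    case ":$1:" in
        *":$2:"*) echo "$1" ;;      # already present: return the list unchanged
        *)        echo "$1:$2" ;;   # missing: append at the end
    esac
}

# Same variables as in the profile entries above.
JAVA_HOME=/opt/module/jdk1.8.0_241
HADOOP_HOME=/opt/module/hadoop-2.7.2
PATH=$(path_append "$PATH" "$JAVA_HOME/bin")
PATH=$(path_append "$PATH" "$HADOOP_HOME/bin")
PATH=$(path_append "$PATH" "$HADOOP_HOME/sbin")
export JAVA_HOME HADOOP_HOME PATH
```

The duplicates produced by the plain form are harmless, so this is a tidiness choice rather than a required fix.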

[root@~]#source /etc/profile

[root@~]#hadoop version

Install rsync on each of the three nodes:

[root@~]#yum -y install rsync

[root@~]#mkdir bin

[root@~]#cd bin

[root@~]#vi xsync

#!/bin/bash

#1 Get the number of arguments; exit immediately if there are none

pcount=$#

if((pcount==0)); then

echo no args;

exit;

fi

#2 Get the file name

p1=$1

fname=`basename $p1`

echo fname=$fname

#3 Resolve the parent directory to an absolute path

pdir=`cd -P $(dirname $p1); pwd`

echo pdir=$pdir

#4 Get the current user name

user=`whoami`

#5 Loop over the hosts

for((host=101; host<104; host++)); do

echo --------------------- hadoop$host ----------------

rsync -rvl $pdir/$fname $user@hadoop$host:$pdir

done
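To see what xsync will do before it touches the other nodes, the path handling can be tried as a dry run. A minimal sketch, assuming the same basename/dirname logic as the script above; `xsync_plan` is a hypothetical name, and it only prints the rsync commands instead of running them:

```shell
#!/bin/bash
# Resolve the argument the way xsync does and print (not run) the
# rsync command for every host given after the path.
xsync_plan() {
    local target=$1
    shift
    local fname pdir user host
    fname=$(basename "$target")                            # last path component
    pdir=$(cd -P "$(dirname "$target")" && pwd) || return 1  # physical parent dir
    user=$(whoami)
    for host in "$@"; do
        echo "rsync -rvl $pdir/$fname $user@$host:$pdir"
    done
}

# Hypothetical usage:
# xsync_plan /etc/profile hadoop101 hadoop102 hadoop103
```

Passing the host names as arguments, instead of hard-coding the 101 to 103 range, is a design choice of this sketch and not something the original script does.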

[root@~]#chmod 777 xsync

[root@~]#ssh-keygen -t rsa

[root@~]#ssh-copy-id hadoop101

[root@~]#ssh-copy-id hadoop102

[root@~]#ssh-copy-id hadoop103

[root@~]# ./xsync /root/

[root@~]# ./xsync /opt/module/

[root@~]# ./xsync /etc/profile

[root@~]#source /etc/profile

[root@~]#hadoop version

[root@~]#cd /opt/module/hadoop-2.7.2/etc/hadoop/

[root@~]#vi hadoop-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_241

[root@~]#vi yarn-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_241

[root@~]#vi mapred-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_241

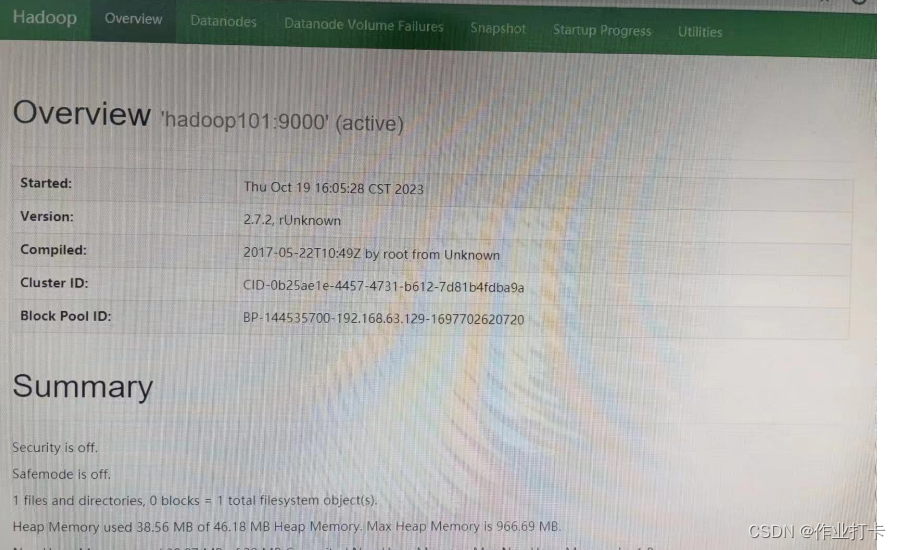

[root@~]#vi core-site.xml

<!-- Address of the HDFS NameNode -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop101:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.7.2/data/tmp</value>

</property>

[root@~]#vi hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop103:50090</value>

</property>

[root@~]#vi yarn-site.xml

<!-- How reducers fetch data -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Host of the YARN ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop102</value>

</property>

[root@~]#cp mapred-site.xml.template mapred-site.xml

[root@~]#vi mapred-site.xml

<!-- Run MapReduce on YARN -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>
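The property blocks shown for the four site files only take effect when they sit inside the file's `<configuration>` element. A minimal sketch that prints a complete file body from name=value pairs, to make that wrapper explicit; `site_xml` is a hypothetical helper, and it does no XML escaping, so it only suits simple values like the ones used here:

```shell
#!/bin/bash
# Print a complete *-site.xml body from name=value arguments.
# Assumption: names and values contain no XML special characters.
site_xml() {
    local pair
    echo '<?xml version="1.0" encoding="UTF-8"?>'
    echo '<configuration>'
    for pair in "$@"; do
        echo '  <property>'
        echo "    <name>${pair%%=*}</name>"    # text before the first '='
        echo "    <value>${pair#*=}</value>"   # text after the first '='
        echo '  </property>'
    done
    echo '</configuration>'
}

# Hypothetical usage: inspect the output before redirecting it to a file.
# site_xml mapreduce.framework.name=yarn
```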

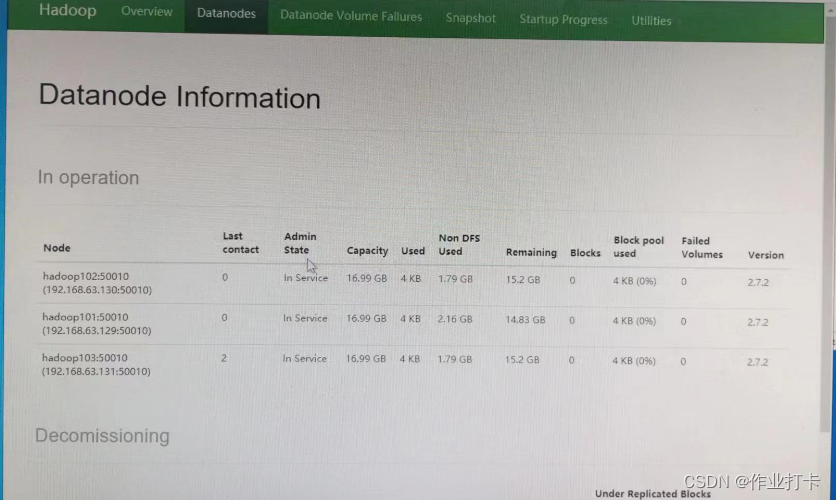

[root@~]#vi slaves

hadoop101

hadoop102

hadoop103

[root@~]#/root/bin/xsync /opt/module/hadoop-2.7.2/

[root@~]#hdfs namenode -format

[root@~]#start-all.sh

[root@~]#jps
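Going by the configuration files above, jps would be expected to show NameNode on hadoop101, ResourceManager on hadoop102, SecondaryNameNode on hadoop103, and DataNode plus NodeManager on every host listed in slaves. A minimal sketch for checking that, assuming jps prints one "PID Name" pair per line; `missing_daemons` is a hypothetical helper name:

```shell
#!/bin/bash
# Print every expected daemon name that is absent from jps-style output.
# $1 is the captured jps output, the remaining arguments are daemon names.
missing_daemons() {
    local jps_output=$1
    shift
    local d
    for d in "$@"; do
        # Exact match on the second column, so "NameNode" does not
        # match a "SecondaryNameNode" line.
        echo "$jps_output" |
            awk -v name="$d" '$2 == name { found = 1 } END { exit !found }' ||
            echo "$d"
    done
}

# Hypothetical usage on hadoop101:
# missing_daemons "$(jps)" NameNode DataNode NodeManager
```

Empty output means every listed daemon was found; any name printed is one to look for in the logs directory.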

[root@~]#systemctl stop firewalld.service && systemctl disable firewalld.service

[root@~]#vi /etc/selinux/config

[root@~]#/root/bin/xsync /etc/selinux/config

About reformatting:

1. Stop the cluster.

2. rm -rf /opt/module/hadoop-2.7.2/data

rm -rf /opt/module/hadoop-2.7.2/logs

rm -rf /tmp/*

3. hdfs namenode -format

4. Start the cluster again.

Shared script:

#!/bin/bash

for host in hadoop101 hadoop102 hadoop103

do

ssh $host rm -rf /opt/module/hadoop-2.7.2/data

ssh $host rm -rf /opt/module/hadoop-2.7.2/logs

# Quoted so that the glob expands on the remote host, not locally

ssh $host "rm -rf /tmp/*"

done

echo "Cleanup complete"
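Since the script deletes data on every node, it can help to print the commands first and run them only after checking the paths. A minimal sketch, assuming the Hadoop install directory is passed in explicitly; `cleanup_plan` is a hypothetical name, and this variant deliberately leaves out the /tmp cleanup:

```shell
#!/bin/bash
# Print (not run) the per-host cleanup commands.
# $1 is the Hadoop install directory, the remaining arguments are hosts.
cleanup_plan() {
    local hadoop_dir=$1
    # Refuse an empty directory so the plan never contains "rm -rf /data".
    [ -n "$hadoop_dir" ] || { echo "usage: cleanup_plan HADOOP_DIR HOST..." >&2; return 1; }
    shift
    local host
    for host in "$@"; do
        echo "ssh $host 'rm -rf $hadoop_dir/data $hadoop_dir/logs'"
    done
}

# Hypothetical usage:
# cleanup_plan /opt/module/hadoop-2.7.2 hadoop101 hadoop102 hadoop103
```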
