OpenCorr是一个开源C++库,用于研究和开发数字图像相关(DIC)技术它提供了2D和3D-DIC以及数字体积相关(DVC)的功能模块。最近正在学习OpenCorr,为了更深层次地理解ICGN,我打算浅浅剖析Opencorr的代码,学习的过程中写下这篇学习笔记,以后忘记了还能回来看看。同时,作为一名在读研究生,希望能得到社区大佬的指点,以及跟同学们一起学习进步。

——————————————————————————————————————————

这一期主要讲解Eipipolar search的原理,并且附上部分代码解析。

在OpenCorr的Epipolar search原文如下:

Path independent stereo digital image correlation with high speed and analysis resolution

主要是利用了对极几何来匹配双目视图, 在极限约束下,点的搜索可以沿一条直线进行,显著提高搜索效率,节省计算时间。本文主要解释匹配的过程,以及部分公式的推导。

一、原理

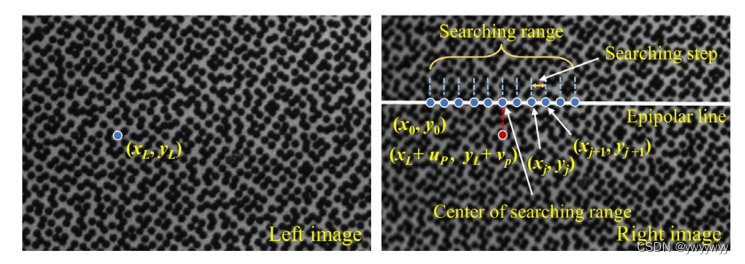

上图是作者原文中关于极线搜索的图,非常清晰地描述了整个搜索过程。其中,点是左图的某点,现在要在右图的极线上寻找其对应点。

首先需要说明的是,在进行极线搜索之前,需要预设起始搜索点、搜索半径和搜索步长。其中起始搜索点是通过定义一个偏移向量来确定的,在官方的示例中称为parallex_guess(u, v),其中u是x的偏移,v是y方向的偏移。

搜索条件都设置好后,由parallex点处向右图对应的极线做一条垂线,如右图,红点就是parallex点,白线是极线。左图对应的极线方程可以用下式进行计算:

其中, 为极线,

为基础矩阵,

为左图点的齐次坐标。

设极线方程为

设parallex坐标为,则通过该点的垂线方程为

设交点坐标为,联立上述两个方程可得

从交点开始向左和向右按照设定的步距来寻找对应点。在找到对应点后,即得到了初始位移,在此基础上使用一阶ICGN计算其亚像素位移,比较所有候选点的ZNCC值,找到最大的作为匹配点,至此就得到了左图的匹配点。

二、代码解析

下面是Epipolar search中compute的代码

void EpipolarSearch::compute(POI2D* poi)

{

//estimate parallax

parallax.x = parallax_x[0] * (poi->x - int(ref_img->width / 2)) + parallax_x[1] * (poi->y - int(ref_img->height / 2)) + parallax_x[2];

parallax.y = parallax_y[0] * (poi->x - int(ref_img->width / 2)) + parallax_y[1] * (poi->y - int(ref_img->height / 2)) + parallax_y[2];

//convert locatoin of left POI to a vector

Eigen::Vector3f view1_vector;

view1_vector << (poi->x + poi->deformation.u), (poi->y + poi->deformation.v), 1;

//get the projection of POI in the primary view on the epipolar line in the secondary view

Eigen::Vector3f view2_epipolar = fundamental_matrix * view1_vector;

float line_slope = -view2_epipolar(0) / view2_epipolar(1);

float line_intercept = -view2_epipolar(2) / view2_epipolar(1);

int x_view2 = (int)((line_slope * (poi->y + poi->deformation.v + parallax.y - line_intercept)

+ poi->x + poi->deformation.u + parallax.x) / (line_slope * line_slope + 1));

int y_view2 = (int)(line_slope * x_view2 + line_intercept);

//get the center of searching region

std::vector<POI2D> poi_candidates;

POI2D current_poi(poi->x, poi->y);

current_poi.deformation.u = x_view2 - poi->x;

current_poi.deformation.v = y_view2 - poi->y;

poi_candidates.push_back(current_poi);

//get the other trial locations in searching region

int x_trial, y_trial;

for (int i = search_step; i < search_radius; i += search_step) {

x_trial = x_view2 + i;

y_trial = (int)(line_slope * x_trial + line_intercept);

current_poi.deformation.u = x_trial - poi->x;

current_poi.deformation.v = y_trial - poi->y;

if (x_trial - icgn1->subset_radius_x > 0 && x_trial + icgn1->subset_radius_x < icgn1->ref_img->width - 1

&& y_trial - icgn1->subset_radius_y > 0 && y_trial + icgn1->subset_radius_y < icgn1->ref_img->height - 1)

{

poi_candidates.push_back(current_poi);

}

x_trial = x_view2 - i;

y_trial = (int)(line_slope * x_trial + line_intercept);

current_poi.deformation.u = x_trial - poi->x;

current_poi.deformation.v = y_trial - poi->y;

if (x_trial - icgn1->subset_radius_x > 0 && x_trial + icgn1->subset_radius_x < icgn1->ref_img->width - 1

&& y_trial - icgn1->subset_radius_y > 0 && y_trial + icgn1->subset_radius_y < icgn1->ref_img->height - 1)

{

poi_candidates.push_back(current_poi);

}

}

//coarse check using ICGN1

int queue_size = (int)poi_candidates.size();

#pragma omp parallel for

for (int i = 0; i < queue_size; i++)

{

icgn1->compute(&poi_candidates[i]);

}

//take the one with the highest ZNCC value

std::sort(poi_candidates.begin(), poi_candidates.end(), sortByZNCC);

poi->deformation = poi_candidates[0].deformation;

poi->deformation = poi_candidates[0].deformation;

poi->result = poi_candidates[0].result;

poi->result = poi_candidates[0].result;

}接下来逐段解析:

//estimate parallax

parallax.x = parallax_x[0] * (poi->x - int(ref_img->width / 2)) + parallax_x[1] * (poi->y - int(ref_img->height / 2)) + parallax_x[2];

parallax.y = parallax_y[0] * (poi->x - int(ref_img->width / 2)) + parallax_y[1] * (poi->y - int(ref_img->height / 2)) + parallax_y[2];如果你没有认为设定,那么这一段可以忽略,相当于parallax.x = parallax.x。

//convert locatoin of left POI to a vector

Eigen::Vector3f view1_vector;

view1_vector << (poi->x + poi->deformation.u), (poi->y + poi->deformation.v), 1;

//get the projection of POI in the primary view on the epipolar line in the secondary view

Eigen::Vector3f view2_epipolar = fundamental_matrix * view1_vector;

float line_slope = -view2_epipolar(0) / view2_epipolar(1);

float line_intercept = -view2_epipolar(2) / view2_epipolar(1);

int x_view2 = (int)((line_slope * (poi->y + poi->deformation.v + parallax.y - line_intercept)

+ poi->x + poi->deformation.u + parallax.x) / (line_slope * line_slope + 1));

int y_view2 = (int)(line_slope * x_view2 + line_intercept);这一段就是求交点的过程,跟原理中推导的过程一致,需要提醒的是,view2_epipolar是一个float数组,保存着极线的三个系数a,b,c。

所以我们就知道了line_slope其实就是极线的斜率 k,而line_intercept就是极线的截距 b。

而仔细看x_view2的计算公式中,poi->y+poi->deformation.v+parallax.y这一长串,其实就是原理中设定的parallax坐标,x_view2和y_view2就是原理中联立后解出的结果

。

想明白了这一部分,后面的就比较容易了。

//get the center of searching region

std::vector<POI2D> poi_candidates;

POI2D current_poi(poi->x, poi->y);

current_poi.deformation.u = x_view2 - poi->x;

current_poi.deformation.v = y_view2 - poi->y;

poi_candidates.push_back(current_poi);

//get the other trial locations in searching region

int x_trial, y_trial;

for (int i = search_step; i < search_radius; i += search_step) {

x_trial = x_view2 + i;

y_trial = (int)(line_slope * x_trial + line_intercept);

current_poi.deformation.u = x_trial - poi->x;

current_poi.deformation.v = y_trial - poi->y;

if (x_trial - icgn1->subset_radius_x > 0 && x_trial + icgn1->subset_radius_x < icgn1->ref_img->width - 1

&& y_trial - icgn1->subset_radius_y > 0 && y_trial + icgn1->subset_radius_y < icgn1->ref_img->height - 1)

{

poi_candidates.push_back(current_poi);

}

x_trial = x_view2 - i;

y_trial = (int)(line_slope * x_trial + line_intercept);

current_poi.deformation.u = x_trial - poi->x;

current_poi.deformation.v = y_trial - poi->y;

if (x_trial - icgn1->subset_radius_x > 0 && x_trial + icgn1->subset_radius_x < icgn1->ref_img->width - 1

&& y_trial - icgn1->subset_radius_y > 0 && y_trial + icgn1->subset_radius_y < icgn1->ref_img->height - 1)

{

poi_candidates.push_back(current_poi);

}

}这一段就是由交点出发,向左右同时搜索,每次步进search_step,直到超过search_radius,循环结束后就会得到一系列的候选点。

//coarse check using ICGN1

int queue_size = (int)poi_candidates.size();

#pragma omp parallel for

for (int i = 0; i < queue_size; i++)

{

icgn1->compute(&poi_candidates[i]);

}

//take the one with the highest ZNCC value

std::sort(poi_candidates.begin(), poi_candidates.end(), sortByZNCC);

poi->deformation = poi_candidates[0].deformation;

poi->deformation = poi_candidates[0].deformation;

poi->result = poi_candidates[0].result;

poi->result = poi_candidates[0].result;对全部候选点使用icgn,计算出亚像素位移,最后取zncc系数最高的点作为匹配点。关于ICGN可以看我之前写的文章。

至此Eipiplor search的全过程都结束了。

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?