- Problem description

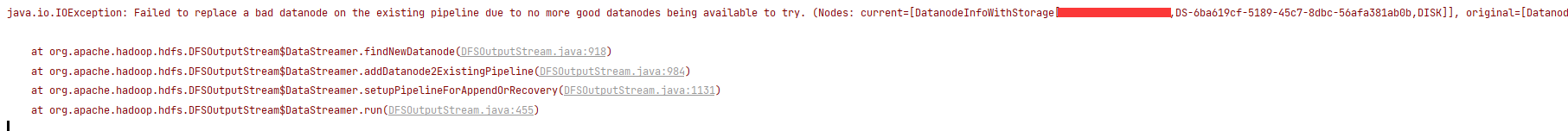

When appending to a file through the HDFS API, with the client running in IDEA on a Windows machine and HDFS running on an Aliyun server, the append failed with the following error (see the screenshot above):

java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[DatanodeInfoWithStorage[172.25.55.228:9866,DS-6ba619cf-5189-45c7-8dbc-56afa381ab0b,DISK]], original=[DatanodeInfoWithStorage[172.25.55.228:9866,DS-6ba619cf-5189-45c7-8dbc-56afa381ab0b,DISK]]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.findNewDatanode(DFSOutputStream.java:918)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.addDatanode2ExistingPipeline(DFSOutputStream.java:984)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.setupPipelineForAppendOrRecovery(DFSOutputStream.java:1131)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:455)

- Solution

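The error lists only one datanode in the pipeline, so the client has no other node to swap in. Setting the policy to NEVER tells the client not to look for a replacement. In the client code in IDEA, add the following line on the Configuration object before the FileSystem is obtained from it: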

configuration.set("dfs.client.block.write.replace-datanode-on-failure.policy", "NEVER");
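To see why NEVER avoids the error, the sketch below paraphrases the decision rule that the Hadoop configuration reference (hdfs-default.xml) describes for this property. It is not Hadoop's actual code: the class name `ReplacePolicySketch` and the method `shouldAddDatanode` are hypothetical, and the replication factor of 3 is an assumption (the HDFS default), since the post does not state it. The single datanode comes from the error message.

```java
// Sketch of the client-side "replace datanode on failure" decision.
// Paraphrases the rule described in hdfs-default.xml; NOT Hadoop's real code.
final class ReplacePolicySketch {

    enum Policy { NEVER, DEFAULT, ALWAYS }

    /**
     * @param policy           value of dfs.client.block.write.replace-datanode-on-failure.policy
     * @param replication      replication factor r of the file being written
     * @param existing         number n of datanodes currently in the write pipeline
     * @param appendOrHflushed true if the block was appended to or hflushed
     * @return true if the client would try to add another datanode to the pipeline
     */
    static boolean shouldAddDatanode(Policy policy, int replication, int existing,
                                     boolean appendOrHflushed) {
        // Assumption: no policy asks for a new node when the pipeline
        // is empty or already has as many nodes as the replication factor.
        if (existing == 0 || existing >= replication) {
            return false;
        }
        switch (policy) {
            case NEVER:
                return false;
            case ALWAYS:
                return true;
            default: // DEFAULT
                if (replication < 3) {
                    return false;
                }
                // Integer division gives floor(r/2); r > n already holds here.
                return replication / 2 >= existing || appendOrHflushed;
        }
    }

    public static void main(String[] args) {
        int replication = 3; // assumed: HDFS default replication factor
        int existing = 1;    // the error message shows a single datanode
        System.out.println("DEFAULT wants a new datanode: "
                + shouldAddDatanode(Policy.DEFAULT, replication, existing, true));
        System.out.println("NEVER wants a new datanode:   "
                + shouldAddDatanode(Policy.NEVER, replication, existing, true));
    }
}
```

Under these assumptions, DEFAULT asks for an extra datanode during an append, and a one-datanode cluster cannot supply one, which matches the exception. With NEVER the client keeps writing to the datanode it already has. This is reasonable for a single-node test cluster; on a multi-node cluster it gives up the extra safety of rebuilding the pipeline.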
