Connecting to a Hadoop Cluster from Windows + Eclipse

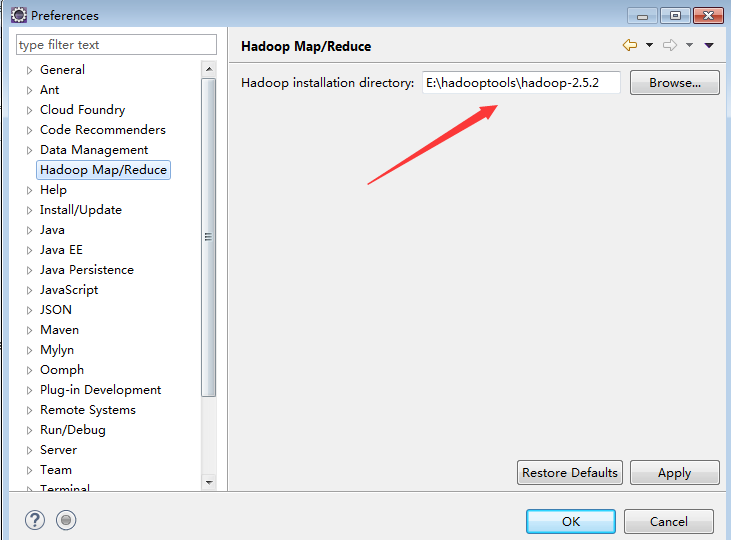

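1. Configure the Eclipse Hadoop plugin

Copy the compiled hadoop-eclipse-plugin-2.5.2.jar into Eclipse's plugins directory and restart Eclipse. Then open Window -> Preferences -> Hadoop Map/Reduce from the menu bar and set the location of the local Hadoop directory. This is the Hadoop directory that is already configured on the cluster; simply copy it to the local machine.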

2. Configure winutils.exe and hadoop.dll on Windows

Copy the winutils.exe that matches your Hadoop version into the bin folder of the Hadoop directory.

Copy hadoop.dll into the Windows\System32 directory.

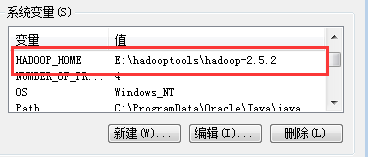

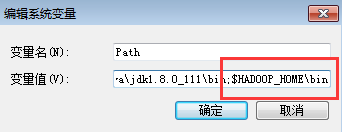

Configure the system environment variables:

1. HADOOP_HOME: the local Hadoop directory

2. Path: %HADOOP_HOME%\bin
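To confirm that these settings are visible to a Java process, a minimal sketch along the following lines may help. It uses only the JDK; the class name HadoopEnvCheck and its helper methods are hypothetical names chosen for illustration and are not part of Hadoop or the Eclipse plugin.

```java
import java.io.File;
import java.util.regex.Pattern;

// Hypothetical helper (not part of Hadoop): sanity-checks the Windows setup described above.
final class HadoopEnvCheck {

    // True if any entry of a PATH-style string equals <hadoopHome>/bin.
    // The comparison ignores case and slash direction, as Windows paths do.
    static boolean pathContainsBin(String pathValue, String hadoopHome, String separator) {
        if (pathValue == null || hadoopHome == null) {
            return false;
        }
        String expected = normalize(hadoopHome + "/bin");
        for (String entry : pathValue.split(Pattern.quote(separator))) {
            if (normalize(entry).equals(expected)) {
                return true;
            }
        }
        return false;
    }

    // Unifies slashes, drops trailing slashes and lower-cases so paths compare loosely.
    static String normalize(String path) {
        String p = path.trim().replace('\\', '/').toLowerCase();
        while (p.endsWith("/") && p.length() > 1) {
            p = p.substring(0, p.length() - 1);
        }
        return p;
    }

    public static void main(String[] args) {
        String home = System.getenv("HADOOP_HOME");
        if (home == null) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        File winutils = new File(new File(home, "bin"), "winutils.exe");
        System.out.println("winutils.exe present: " + winutils.isFile());
        System.out.println("Path contains bin:    "
                + pathContainsBin(System.getenv("PATH"), home, File.pathSeparator));
    }
}
```

Running it from inside Eclipse is the more useful check, because Eclipse only sees environment variables that existed when it was started.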

3. Configure the Hadoop parameters in Eclipse

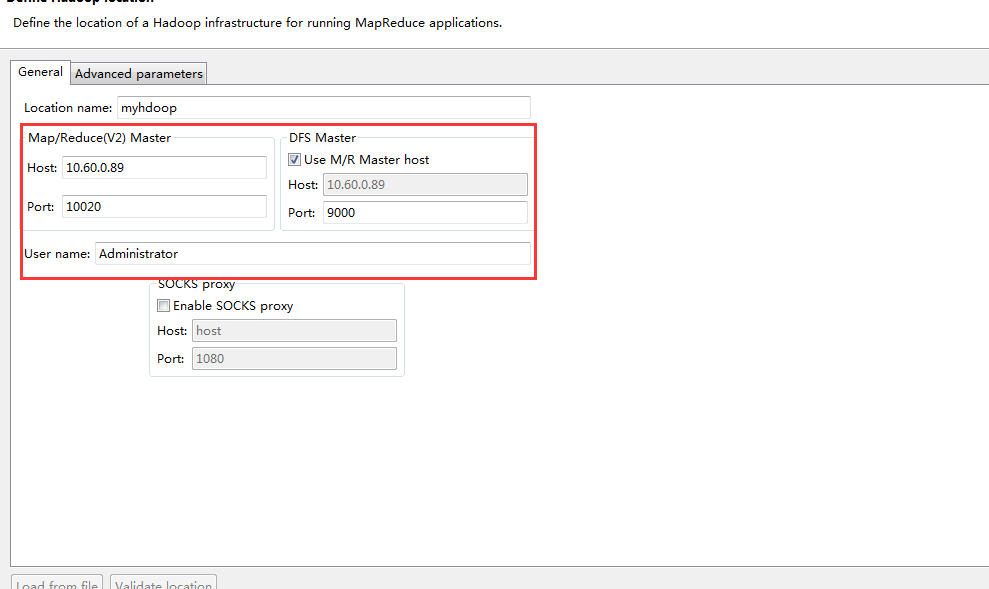

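From the Eclipse menu bar open Window -> Show View -> Other… and select Map/Reduce Locations. In the Map/Reduce Locations view, right-click and choose New Map/Reduce location, as shown in the figure.

On the General tab, fill in the basic information:

location name: (any name you like)

hosts: IP address of the ResourceManager host in the cluster

port: 10020 by default (can be customized in mapred-site.xml)

DFS hosts: IP address of the NameNode host

port: 9000 by default (can be configured in hdfs-site.xml); it should match the port in the cluster's fs.defaultFS setting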
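Before filling in the dialog, it can save time to check that the two ports are reachable from the Windows machine at all. The following is a minimal sketch using only the JDK; PortProbe is a hypothetical helper name, and the host and ports in main are placeholders taken from the defaults mentioned above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper (not part of Hadoop): checks that a host/port pair accepts TCP connections.
final class PortProbe {

    // Returns true if a TCP connection to host:port succeeds within timeoutMillis.
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts and unknown hosts alike.
            return false;
        }
    }

    public static void main(String[] args) {
        // Placeholder address; pass the real cluster host as the first argument.
        String host = args.length > 0 ? args[0] : "127.0.0.1";
        System.out.println("DFS port 9000 reachable:         " + isReachable(host, 9000, 2000));
        System.out.println("Map/Reduce port 10020 reachable: " + isReachable(host, 10020, 2000));
    }
}
```

A successful connection only shows that something is listening on that port, not that it is the expected Hadoop service, so treat a positive result as a first filter for firewall or address mistakes.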

!!! Restart the system !!!

Open Eclipse and connect to DFS Locations.

Set the program arguments under Run Configurations, then run WordCount. Make sure the arguments are actually set!

**Common exceptions:**

Failed to locate the winutils binary in the hadoop binary path java.io.IOException: Could not locate executable null \bin\winutils.exe in the Hadoop binaries.
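The null in that path is the telltale sign: the Hadoop home directory could not be resolved, so a null value was concatenated with \bin\winutils.exe. The lookup can be approximated with the JDK alone, as in the simplified sketch below. HadoopHomeResolver and its methods are hypothetical names for illustration; the real logic lives in Hadoop's Shell class.

```java
import java.io.File;

// Hypothetical helper (not Hadoop code): mirrors the order in which Hadoop looks up its home directory.
final class HadoopHomeResolver {

    // Prefers the hadoop.home.dir system property, then the HADOOP_HOME environment value.
    static String resolveHome(String systemProperty, String envValue) {
        String home = systemProperty != null ? systemProperty : envValue;
        if (home == null) {
            return null;
        }
        // Strip one pair of surrounding double quotes, if present.
        if (home.length() >= 2 && home.startsWith("\"") && home.endsWith("\"")) {
            home = home.substring(1, home.length() - 1);
        }
        return home;
    }

    // Builds the location where winutils.exe is expected; a null home yields "null\bin\winutils.exe".
    static String winutilsPath(String home) {
        return home + "\\bin\\winutils.exe";
    }

    public static void main(String[] args) {
        String home = resolveHome(System.getProperty("hadoop.home.dir"),
                                  System.getenv("HADOOP_HOME"));
        String path = winutilsPath(home);
        System.out.println("Expected winutils location: " + path);
        if (home != null) {
            System.out.println("File exists: " + new File(path).isFile());
        }
    }
}
```

Because the system property is consulted first, passing -Dhadoop.home.dir=... as a VM argument in the run configuration should work as an alternative when changing HADOOP_HOME is inconvenient.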

Looking at the source of Shell.class:

    // Call checkHadoopHome() to get the path of the local Hadoop home directory
    private static String HADOOP_HOME_DIR = checkHadoopHome();

    // Call getWinUtilsPath() to get the path of winutils.exe
    public static final String WINUTILS = getWinUtilsPath();

    private static String checkHadoopHome() {
      // First try the hadoop.home.dir system property
      String home = System.getProperty("hadoop.home.dir");

      // Fall back to the HADOOP_HOME environment variable
      // (this is why HADOOP_HOME has to be configured)
      if (home == null) {
        home = System.getenv("HADOOP_HOME");
      }

      try {
        // couldn't find either setting for hadoop's home directory
        if (home == null) { // exception thrown when neither is set
          throw new IOException("HADOOP_HOME or hadoop.home.dir are not set.");
        }

        if (home.startsWith("\"") && home.endsWith("\"")) {
          home = home.substring(1, home.length()-1);
        }

        // check that the home setting is actually a directory that exists
        File homedir = new File(home);
        if (!homedir.isAbsolute() || !homedir.exists() || !homedir.isDirectory()) {
          throw new IOException("Hadoop home directory " + homedir
            + " does not exist, is not a directory, or is not an absolute path.");
        }

        home = homedir.getCanonicalPath();

      } catch (IOException ioe) {
        if (LOG.isDebugEnabled()) {
          LOG.debug("Failed to detect a valid hadoop home directory", ioe);
        }
        home = null;
      }

      return home;
    }

    // Get the winutils path; internally calls getQualifiedBinPath()
    public static final String getWinUtilsPath() {
      String winUtilsPath = null;

      try {
        if (WINDOWS) {
          // getQualifiedBinPath() is called here
          winUtilsPath = getQualifiedBinPath("winutils.exe");
        }
      } catch (IOException ioe) {
        LOG.error("Failed to locate the winutils binary in the hadoop binary path",
          ioe);
      }

      return winUtilsPath;
    }

- File permission problem

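This problem comes mainly from a permission mismatch between the Windows user and the Hadoop cluster user: on HDFS, operations issued from Windows fall under the "other" class of the file permissions and are therefore restricted. The source code has to be modified.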

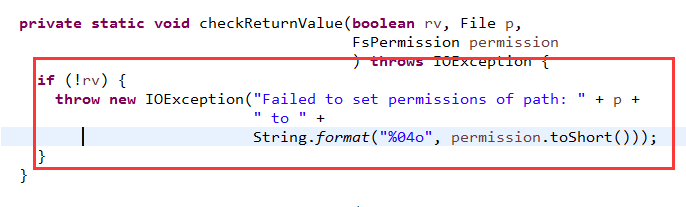

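1. One approach is to modify the Hadoop source (hadoop-src), specifically FileUtil.java: as shown in the figure below, comment out the code inside the checkReturnValue() method and recompile the source with Maven.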

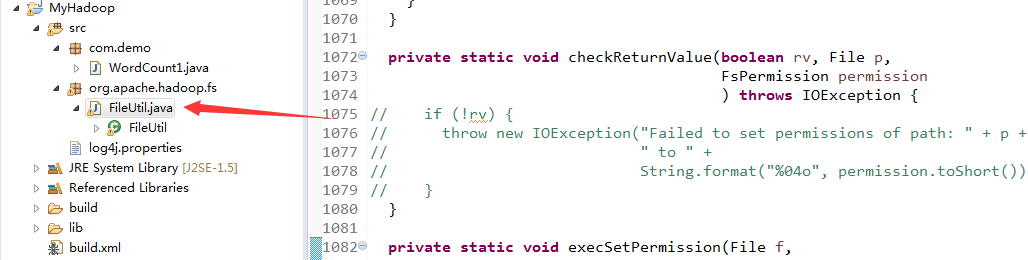

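2. The other approach is to create, inside your own project, a package and file identical to those of FileUtil.java, so that the project uses this copy instead, as shown in the figure.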
