Client configuration (Flume Client)

# clientAgent

clientAgent.channels = c1

clientAgent.sources = s1

clientAgent.sinks = k1 k2

# clientAgent sinks group

clientAgent.sinkgroups = g1

# clientAgent Spooling Directory Source

clientAgent.sources.s1.type = spooldir

clientAgent.sources.s1.spoolDir = /home/lkm/logdata/

clientAgent.sources.s1.fileHeader = true

clientAgent.sources.s1.deletePolicy = immediate

clientAgent.sources.s1.batchSize = 1000

clientAgent.sources.s1.channels = c1

clientAgent.sources.s1.deserializer.maxLineLength = 1048576

# clientAgent FileChannel

clientAgent.channels.c1.type = file

clientAgent.channels.c1.checkpointDir = /flume/spool/checkpoint

clientAgent.channels.c1.dataDirs = /flume/spool/data

clientAgent.channels.c1.capacity = 200000000

clientAgent.channels.c1.keep-alive = 30

clientAgent.channels.c1.write-timeout = 30

clientAgent.channels.c1.checkpoint-timeout = 600

# clientAgent Sinks

# k1 sink

clientAgent.sinks.k1.channel = c1

clientAgent.sinks.k1.type = avro

# connect to serverAgent

clientAgent.sinks.k1.hostname = r09n10

clientAgent.sinks.k1.port = 41415

# k2 sink

clientAgent.sinks.k2.channel = c1

clientAgent.sinks.k2.type = avro

# connect to CollectorBackupAgent

clientAgent.sinks.k2.hostname = r09n09

clientAgent.sinks.k2.port = 41415

# clientAgent sinks group

clientAgent.sinkgroups.g1.sinks = k1 k2

# load_balance type

clientAgent.sinkgroups.g1.processor.type = load_balance

clientAgent.sinkgroups.g1.processor.backoff = true

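# selector may be round_robin (the default) or random
#clientAgent.sinkgroups.g1.processor.selector = random

# note: processor.priority.* is only read by the failover processor; load_balance ignores it
clientAgent.sinkgroups.g1.processor.priority.k1 = 10

clientAgent.sinkgroups.g1.processor.priority.k2 = 9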

Run the command:

flume-ng agent -n clientAgent -f client.conf -Dflume.root.logger=INFO,console

Server configuration (Flume Server)

# serverAgent

serverAgent.channels = c2

serverAgent.sources = s2

serverAgent.sinks = k1 k2

# serverAgent AvroSource

serverAgent.sources.s2.type = avro

serverAgent.sources.s2.bind = r09n10

serverAgent.sources.s2.port = 41415

serverAgent.sources.s2.channels = c2

# serverAgent FileChannel

serverAgent.channels.c2.type = file

serverAgent.channels.c2.checkpointDir = /flume/spool/checkpoint

serverAgent.channels.c2.dataDirs = /flume/spool/data,/flume/work/spool/data

serverAgent.channels.c2.capacity = 200000000

serverAgent.channels.c2.transactionCapacity = 6000

serverAgent.channels.c2.checkpointInterval = 60000

# serverAgent hdfsSink

serverAgent.sinks.k2.type = hdfs

serverAgent.sinks.k2.channel = c2

serverAgent.sinks.k2.hdfs.path = hdfs://nameservice1/flume/flume1

serverAgent.sinks.k2.hdfs.filePrefix = k2_%{file}

serverAgent.sinks.k2.hdfs.inUsePrefix = _

serverAgent.sinks.k2.hdfs.inUseSuffix = .tmp

serverAgent.sinks.k2.hdfs.rollSize = 0

serverAgent.sinks.k2.hdfs.rollCount = 0

serverAgent.sinks.k2.hdfs.rollInterval = 240

serverAgent.sinks.k2.hdfs.writeFormat = Text

serverAgent.sinks.k2.hdfs.fileType = DataStream

serverAgent.sinks.k2.hdfs.batchSize = 6000

serverAgent.sinks.k2.hdfs.callTimeout = 60000

serverAgent.sinks.k1.type = hdfs

serverAgent.sinks.k1.channel = c2

serverAgent.sinks.k1.hdfs.path = hdfs://nameservice1/flume/flume2

serverAgent.sinks.k1.hdfs.filePrefix = k1_%{file}

serverAgent.sinks.k1.hdfs.inUsePrefix = _

serverAgent.sinks.k1.hdfs.inUseSuffix = .tmp

serverAgent.sinks.k1.hdfs.rollSize = 0

serverAgent.sinks.k1.hdfs.rollCount = 0

serverAgent.sinks.k1.hdfs.rollInterval = 240

serverAgent.sinks.k1.hdfs.writeFormat = Text

serverAgent.sinks.k1.hdfs.fileType = DataStream

serverAgent.sinks.k1.hdfs.batchSize = 6000

serverAgent.sinks.k1.hdfs.callTimeout = 60000
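
Because the client sets `fileHeader = true`, the `file` header carries the absolute path of the spooled file, so `%{file}` in `hdfs.filePrefix` expands to a string that contains `/`. If plain file names are preferred in HDFS, one option is the spooling directory source's `basenameHeader` setting. The fragment below is only a sketch of that alternative and is not part of the original configuration; it assumes the default header key `basename`.

```properties
# On the client: also attach the file's base name as the "basename" header.
clientAgent.sources.s1.basenameHeader = true

# On the server: build the HDFS file prefix from the base name instead of the full path.
serverAgent.sinks.k1.hdfs.filePrefix = k1_%{basename}
serverAgent.sinks.k2.hdfs.filePrefix = k2_%{basename}
```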

Run the command:

flume-ng agent -n serverAgent -f server.conf -Dflume.root.logger=INFO,console

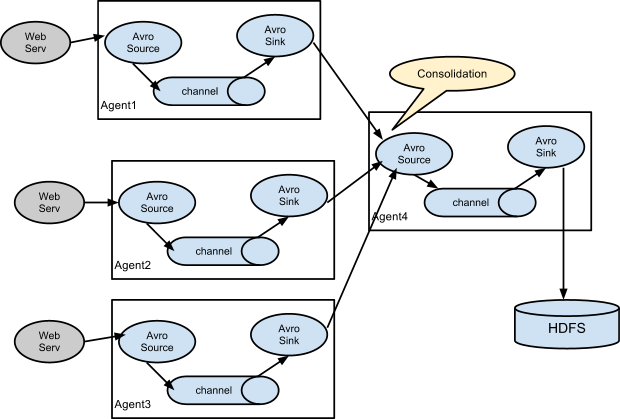

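The setup above follows a client/server-style architecture: each Flume agent node first forwards the logs from its own machine to the Consolidation nodes, and those nodes then write everything to HDFS. The clients spread events across the Consolidation nodes with load balancing; a high-availability (failover) mode, among other options, can be configured instead.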
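
The high-availability mode mentioned above could be sketched with Flume's failover sink processor in place of `load_balance`. This minimal fragment reuses the client's sink group `g1` and sinks `k1` and `k2`; the `maxpenalty` value is an illustrative assumption, not a setting taken from the original configuration.

```properties
# Sketch: failover instead of load_balance for clientAgent's sink group g1.
clientAgent.sinkgroups = g1
clientAgent.sinkgroups.g1.sinks = k1 k2
clientAgent.sinkgroups.g1.processor.type = failover

# The sink with the higher priority is used first: k1 (r09n10), then k2 (r09n09).
clientAgent.sinkgroups.g1.processor.priority.k1 = 10
clientAgent.sinkgroups.g1.processor.priority.k2 = 9

# Upper bound on the backoff period for a failed sink, in milliseconds (assumed value).
clientAgent.sinkgroups.g1.processor.maxpenalty = 10000
```

With this processor only the highest-priority available sink receives events, so `k2` acts as a standby rather than sharing the load.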
