Versions:

hbase-0.98.21-hadoop2-bin.tar.gz

phoenix-4.8.0-HBase-0.98-bin.tar.gz

apache-hive-1.2.1-bin.tar.gz

--------------------------------------------------

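First, Phoenix must be integrated with HBase.

Hive must also be integrated with HBase; see my earlier notes for that step.

Copy the Phoenix jars phoenix{core,queryserver,4.8.0-HBase-0.98,hive} into $hive/lib/.

Modify the configuration files as the official site requires: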

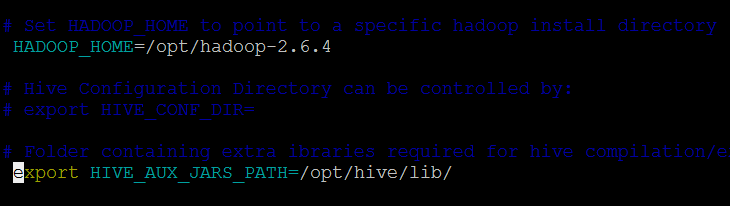

> vim conf/hive-env.sh

> vim conf/hive-site.xml
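The screenshot presumably shows the two steps from the official Phoenix Storage Handler page: set HIVE_AUX_JARS_PATH in hive-env.sh, and add a hive.aux.jars.path property to hive-site.xml whose value is a file:// URL for the same jar. A minimal sketch of the hive-env.sh line follows; the directory /opt/hive/lib and the exact jar file name are assumptions, so adjust both to your installation.

```shell
# conf/hive-env.sh
# Hypothetical location: point this at the Phoenix Hive jar copied into Hive's lib directory.
export HIVE_AUX_JARS_PATH=/opt/hive/lib/phoenix-4.8.0-HBase-0.98-hive.jar
```

The hive-site.xml property should reference the same file so that MapReduce jobs launched by Hive can load the storage handler.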

Start Hive:

> hive -hiveconf phoenix.zookeeper.quorum=hadoop01:2181

Creating a managed (internal) table

create table phoenix_table (

s1 string,

i1 int,

f1 float,

d1 double

)

STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler'

TBLPROPERTIES (

"phoenix.table.name" = "phoenix_table",

"phoenix.zookeeper.quorum" = "hadoop01",

"phoenix.zookeeper.znode.parent" = "/hbase",

"phoenix.zookeeper.client.port" = "2181",

"phoenix.rowkeys" = "s1, i1",

"phoenix.column.mapping" = "s1:s1, i1:i1, f1:f1, d1:d1",

"phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'"

);

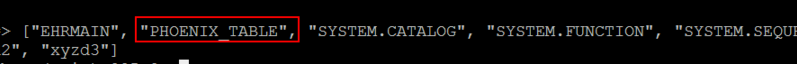

The table is created successfully, and the corresponding table now appears in both Phoenix and HBase. Phoenix:

HBase:

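Properties

| Parameter | Default | Description |
| --- | --- | --- |
| phoenix.table.name | Same as the Hive table name | Name of the Phoenix table |
| phoenix.zookeeper.quorum | localhost | ZooKeeper quorum address |
| phoenix.zookeeper.znode.parent | /hbase | HBase parent znode in ZooKeeper |
| phoenix.zookeeper.client.port | 2181 | ZooKeeper client port |
| phoenix.rowkeys | (required) | Columns that form the Phoenix row key, which is also the HBase row key |
| phoenix.column.mapping | | Column mapping between Hive and Phoenix |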

Inserting data

Load data from the Hive test table pokes:

> insert into table phoenix_table select bar,foo,12.3 as fl,22.2 as dl from pokes;

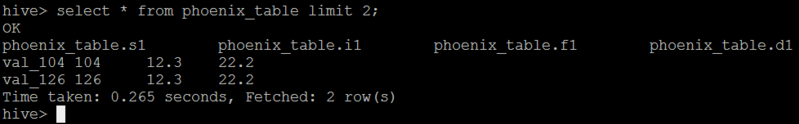

The insert succeeds. Query the table:

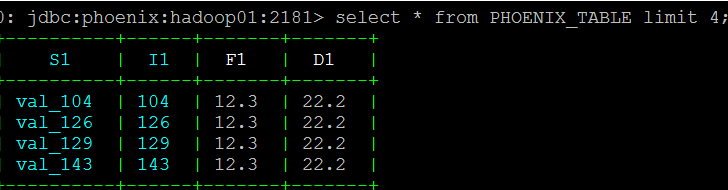

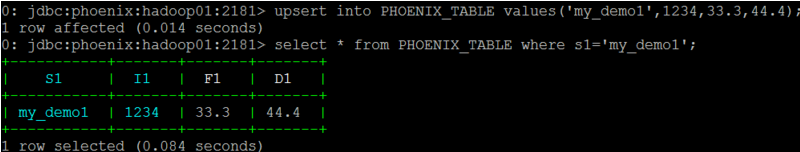

Querying in Phoenix

Data can also be loaded through Phoenix; see the explanation on the official site:

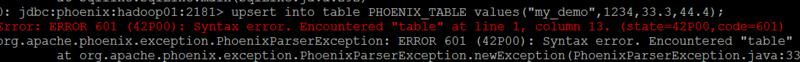

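Note: Phoenix 4.8 treats the TABLE keyword after UPSERT INTO as a syntax error, so leave it out. I have not tried other versions, and I do not know why the official site does not mention this.

Creating an external table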

For external tables, Hive works with an existing Phoenix table and manages only the Hive metadata. Dropping an external table from Hive deletes only the Hive metadata and keeps the Phoenix table.

First create the table in Phoenix:

phoenix> create table PHOENIX_TABLE_EXT(aa varchar not null primary key,bb varchar);

Then create the external table in Hive:

create external table phoenix_table_ext_1 ( aa string, bb string ) STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler' TBLPROPERTIES ( "phoenix.table.name" = "phoenix_table_ext", "phoenix.zookeeper.quorum" = "hadoop01", "phoenix.zookeeper.znode.parent" = "/hbase", "phoenix.zookeeper.client.port" = "2181", "phoenix.rowkeys" = "aa", "phoenix.column.mapping" = "aa:aa, bb:bb" );

Both table creation and inserts succeed.

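Performance tuning

The following options can be set in the Hive CLI.

| Parameter | Default | Description |
| --- | --- | --- |
| phoenix.upsert.batch.size | 1000 | Batch size for upserts. |
| [phoenix-table-name].disable.wal | false | Temporarily sets the table attribute DISABLE_WAL = true. Can be used to improve performance. |
| [phoenix-table-name].auto.flush | false | When the WAL is disabled and this value is true, the MemStore is flushed to an HFile. |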
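As an illustration, the insert-related settings could be collected in an init file that the Hive CLI runs at startup through its -i option. This is only a sketch: the file name phoenix-insert.hql and the batch size 2000 are arbitrary assumptions, and phoenix_table stands in for [phoenix-table-name].

```shell
# Write the insert-tuning settings to an init file (hypothetical file name).
# 2000 is an arbitrary example batch size; phoenix_table replaces [phoenix-table-name].
cat > phoenix-insert.hql <<'EOF'
set phoenix.upsert.batch.size=2000;
set phoenix_table.disable.wal=true;
set phoenix_table.auto.flush=true;
EOF

# Start the CLI with the init file (needs a working Hive installation):
# hive -i phoenix-insert.hql -hiveconf phoenix.zookeeper.quorum=hadoop01:2181
```

Disabling the WAL trades durability for speed, so it is best limited to bulk loads that can be rerun.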

Querying data

You can query Phoenix tables with HiveQL. With hive.fetch.task.conversion=more and hive.exec.parallel=true, a simple single-table query can be as fast as in the Phoenix CLI.

| Parameter | Default | Description |
| --- | --- | --- |
| hbase.scan.cache | 100 | Number of rows read per request. |
| hbase.scan.cacheblock | false | Whether to cache blocks. |
| split.by.stats | false | If true, mappers use table statistics: one mapper per guide post. |
| [hive-table-name].reducer.count | 1 | Number of reducers. In Tez mode this affects only single-table queries. See Limitations in the official documentation. |
| [phoenix-table-name].query.hint | | Hint for the Phoenix query (for example, NO_INDEX). |
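The two Hive settings named in the paragraph above can be applied per session with set statements. A minimal sketch, assuming a hypothetical init file called phoenix-query.hql that is passed to the Hive CLI with -i:

```shell
# Write the query-side settings to an init file (hypothetical file name).
cat > phoenix-query.hql <<'EOF'
set hive.fetch.task.conversion=more;
set hive.exec.parallel=true;
EOF

# Start the CLI with the init file (needs a working Hive installation):
# hive -i phoenix-query.hql -hiveconf phoenix.zookeeper.quorum=hadoop01:2181
```

The same statements can also be typed directly at the hive> prompt before running the query.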

Problems encountered:

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. org.apache.hadoop.hbase.client.Scan.isReversed()Z

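At first I used hbase-0.96.2-hadoop2, which could not be integrated: this error means hbase-client-0.98.21-hadoop2.jar is required. Swapping in that jar fixed this error, but the following one still appeared: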

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. MetaException(message:ERROR 103 (08004): Unable to establish connection.

So I switched HBase itself to version 0.98.21, and everything worked.

---------

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. java.lang.StringIndexOutOfBoundsException: String index out of range: -1

Cause: the Hive column names differ from the Phoenix column names in the mapping, as in this statement:

create table phoenix_table_3 (a string,b int) STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler' TBLPROPERTIES ("phoenix.table.name" = "phoenix_table_3","phoenix.zookeeper.quorum" = "hadoop01","phoenix.zookeeper.znode.parent" = "/hbase","phoenix.zookeeper.client.port" = "2181","phoenix.rowkeys" = "a1","phoenix.column.mapping" = "a:a1, b:b1","phoenix.table.options" = "SALT_BUCKETS=10, DATA_BLOCK_ENCODING='DIFF'");

Making the Hive column names identical to the Phoenix column names fixes it.

----------

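With the statement below, table creation and inserts succeed, but a Hive query fails because column a1 cannot be found; the Phoenix column is named aa.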

Failed with exception java.io.IOException:java.lang.RuntimeException: org.apache.phoenix.schema.ColumnNotFoundException: ERROR 504 (42703): Undefined column. columnName=A1

create external table phoenix_table_ext (a1 string,b1 string)STORED BY 'org.apache.phoenix.hive.PhoenixStorageHandler' TBLPROPERTIES ("phoenix.table.name" = "phoenix_table_ext","phoenix.zookeeper.quorum" = "hadoop01","phoenix.zookeeper.znode.parent" = "/hbase","phoenix.zookeeper.client.port" = "2181","phoenix.rowkeys" = "aa","phoenix.column.mapping" = "a1:aa, b1:bb");

Solution: same as above. Make the Hive column names identical to the Phoenix column names.
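Both mapping problems above come down to pairs in phoenix.column.mapping whose two sides differ. A small check like the following can catch that before running the DDL. It is a sketch only: check_mapping is a hypothetical helper, not part of Hive or Phoenix, and it compares names exactly (case-sensitively) after stripping spaces.

```shell
# Hypothetical helper: print every pair in a phoenix.column.mapping value
# whose Hive column name differs from its Phoenix column name.
# Returns 0 when all pairs match and 1 otherwise.
check_mapping() {
  status=0
  old_ifs=$IFS
  IFS=','                                   # split the value on commas
  for pair in $1; do
    pair=$(printf '%s' "$pair" | tr -d ' ') # drop spaces around the pair
    hive_col=${pair%%:*}                    # text before the first colon
    phoenix_col=${pair#*:}                  # text after the first colon
    if [ "$hive_col" != "$phoenix_col" ]; then
      echo "mismatch: $hive_col -> $phoenix_col"
      status=1
    fi
  done
  IFS=$old_ifs
  return $status
}

# The mapping from the failing external table above reports both pairs.
check_mapping "a1:aa, b1:bb" || true
```

The mapping from the working managed table, "s1:s1, i1:i1, f1:f1, d1:d1", passes silently.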
