1: K-fold cross-validation

from sklearn.model_selection import cross_val_score

from sklearn.datasets import load_iris

from sklearn.linear_model import LogisticRegression

iris = load_iris()

logreg = LogisticRegression()

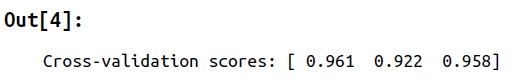

scores = cross_val_score(logreg, iris.data, iris.target, cv=3)

print("Cross-validation scores: {}".format(scores))

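Note that there is another splitter type, StratifiedKFold, which keeps the class proportions roughly equal in every fold. When cv is an integer and the estimator is a classifier, cross_val_score uses StratifiedKFold by default.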

# Add randomization: shuffle the samples before splitting them into folds

from sklearn.model_selection import KFold

kfold = KFold(n_splits=3, shuffle=True, random_state=0)

cross_val_score(logreg, iris.data, iris.target, cv=kfold)
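As a quick check of what shuffle=True changes, the following sketch inspects the test folds produced by the same KFold settings used above. The variable names `classes_per_fold` and `fold_sizes` are illustrative only.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import KFold

iris = load_iris()
kfold = KFold(n_splits=3, shuffle=True, random_state=0)

# Materialize the splits once so both summaries describe the same folds
test_folds = [test_idx for _, test_idx in kfold.split(iris.data)]

# Size of each test fold and number of distinct classes it contains
fold_sizes = [len(test_idx) for test_idx in test_folds]
classes_per_fold = [len(np.unique(iris.target[test_idx]))
                    for test_idx in test_folds]

print("Fold sizes:", fold_sizes)
print("Distinct classes per test fold:", classes_per_fold)
```

After shuffling, the test folds mix the classes, but the proportions are not guaranteed to match the full dataset the way they are with StratifiedKFold.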

2: Leave-one-out cross-validation

# Suitable for small datasets; very time-consuming on large ones, because the model is refit once per sample

from sklearn.model_selection import LeaveOneOut

loo = LeaveOneOut()

scores = cross_val_score(logreg, iris.data, iris.target, cv=loo)

print("Number of cv iterations: ", len(scores))

print("Mean accuracy: {:.2f}".format(scores.mean()))
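To make the cost concrete: leave-one-out produces one split per sample, and each test set holds exactly one sample. A toy sketch with five made-up samples (`X_small` is illustrative and not from the original post):

```python
import numpy as np
from sklearn.model_selection import LeaveOneOut

# Five toy samples with two features each (values are arbitrary)
X_small = np.arange(10).reshape(5, 2)

loo = LeaveOneOut()
splits = [(train_idx.tolist(), test_idx.tolist())
          for train_idx, test_idx in loo.split(X_small)]

for train_idx, test_idx in splits:
    print("train:", train_idx, "test:", test_idx)

print("Number of splits:", loo.get_n_splits(X_small))
```

On iris this means 150 model fits, which is fine; on a dataset with millions of rows the same scheme would require millions of fits.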

3: Shuffle-split cross-validation

from sklearn.model_selection import ShuffleSplit

shuffle_split = ShuffleSplit(test_size=.5, train_size=.5, n_splits=10)

scores = cross_val_score(logreg, iris.data, iris.target, cv=shuffle_split)
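Unlike K-fold, ShuffleSplit draws each train/test partition independently, so the number of iterations is decoupled from the split sizes and a sample may appear in several test sets. The sketch below checks the sizes for the settings used above; `random_state=0` is an addition for reproducibility and is not in the original call.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import ShuffleSplit

iris = load_iris()

# Same proportions as above; random_state is assumed here for repeatable output
shuffle_split = ShuffleSplit(test_size=.5, train_size=.5, n_splits=10,
                             random_state=0)

split_sizes = []
disjoint = []
for train_idx, test_idx in shuffle_split.split(iris.data):
    split_sizes.append((len(train_idx), len(test_idx)))
    # Within one iteration, train and test never share a sample
    disjoint.append(len(set(train_idx) & set(test_idx)) == 0)

print("Iterations:", len(split_sizes))
print("(train, test) sizes:", split_sizes[0])
```

Setting train_size and test_size so that they sum to less than 1 uses only part of the data in each iteration, which can help when experimenting on large datasets.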

4: Cross-validation with groups

from sklearn.model_selection import GroupKFold

from sklearn.datasets import make_blobs

# create synthetic dataset

X, y = make_blobs(n_samples=12, random_state=0)

# assume the first three samples belong to the same group,

# then the next four, etc.

groups = [0, 0, 0, 1, 1, 1, 1, 2, 2, 3, 3, 3]

scores = cross_val_score(logreg, X, y, groups=groups, cv=GroupKFold(n_split
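Since the line above is cut off in the source, here is a self-contained sketch of grouped cross-validation on the same synthetic data. The value `n_splits=3` and the `max_iter=1000` setting are assumptions made for this example, not values confirmed by the original text.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GroupKFold, cross_val_score

# Synthetic dataset with 12 samples
X, y = make_blobs(n_samples=12, random_state=0)
# The first three samples form group 0, the next four group 1, and so on
groups = np.array([0, 0, 0, 1, 1, 1, 1, 2, 2, 3, 3, 3])

group_kfold = GroupKFold(n_splits=3)  # n_splits=3 is an assumption

# Check that no group is split between the training and test sides
leaks = [bool(set(groups[train_idx]) & set(groups[test_idx]))
         for train_idx, test_idx in group_kfold.split(X, y, groups=groups)]

# groups must be passed by keyword in current scikit-learn versions
logreg = LogisticRegression(max_iter=1000)  # max_iter is an assumption
scores = cross_val_score(logreg, X, y, groups=groups, cv=group_kfold)

print("Any group on both sides of a split:", any(leaks))
print("Cross-validation scores: {}".format(scores))
```

Keeping each group entirely in either the training or the test set is what makes the score reflect generalization to unseen groups (for example, new patients or new speakers) rather than to unseen samples from known groups.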
