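The 米骑 app is a mid- to high-end bike-sharing platform. Bike shops at tourist attractions across the country can list their bikes on it, and users can book rentals and return bikes online.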

Download: http://www.misbike.com

Or search for “米骑” in an app store.

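Below, we compute KPI metrics from the nginx log file that the 米骑 test server generated over three months. The log file is 58 MB and holds about 240,000 records (test data).

KPI metrics usually refer to:

1. PV (PageView): number of page views

2. IP: number of unique IPs that visited a page

3. Time: PV per hour

4. Source: visits grouped by referring domain

5. Browser: visits grouped by client device

This article covers only PV counts for specific endpoints.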

(1) Build a Java project with Maven, then download the dependencies that Hadoop needs.

See:

http://blog.csdn.net/cafebar123/article/details/73649588

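(2) Write a class that models a request log record, including the client IP address, the access time and time zone, the response status, and so on.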

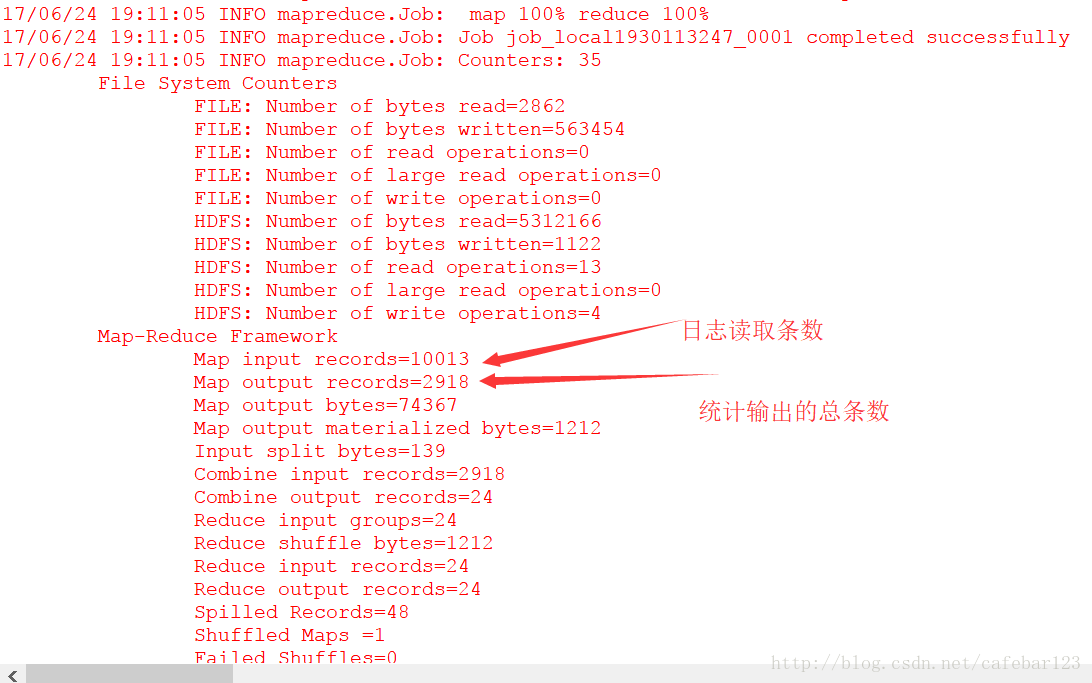


Code:

package org.myhadoop.miqizuchecount;

import java.text.ParseException;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.HashSet;

import java.util.Locale;

import java.util.Set;

/** Request log record class

* @ClassName: Kpi

* @Description: Holds the fields of one nginx access-log record

* @author zhouyangzyi@163.com

* @date 2017-06-24

*

*/

public class Kpi {

private String remote_addr;// client IP address

private String remote_user;// client user name; the placeholder "-" is ignored

private String time_local;// access time and time zone

private String request;// requested URL and HTTP protocol

private String status;// response status; 200 means success

private String body_bytes_sent;// size of the response body sent to the client

private String http_referer;// page the request was linked from

private String http_user_agent;// information about the client browser

private boolean valid = true;// whether the record is valid

@Override

public String toString() {

StringBuilder sb = new StringBuilder();

sb.append("valid:" + this.valid);

sb.append("\nremote_addr:" + this.remote_addr);

sb.append("\nremote_user:" + this.remote_user);

sb.append("\ntime_local:" + this.time_local);

sb.append("\nrequest:" + this.request);

sb.append("\nstatus:" + this.status);

sb.append("\nbody_bytes_sent:" + this.body_bytes_sent);

sb.append("\nhttp_referer:" + this.http_referer);

sb.append("\nhttp_user_agent:" + this.http_user_agent);

return sb.toString();

}

public String getRemote_addr() {

return remote_addr;

}

public void setRemote_addr(String remote_addr) {

this.remote_addr = remote_addr;

}

public String getRemote_user() {

return remote_user;

}

public void setRemote_user(String remote_user) {

this.remote_user = remote_user;

}

public String getTime_local() {

return time_local;

}

public Date getTime_local_Date() throws ParseException {

SimpleDateFormat df = new SimpleDateFormat("dd/MMM/yyyy:HH:mm:ss", Locale.US);

return df.parse(this.time_local);

}

public String getTime_local_Date_hour() throws ParseException{

SimpleDateFormat df = new SimpleDateFormat("yyyyMMddHH");

return df.format(this.getTime_local_Date());

}

public void setTime_local(String time_local) {

this.time_local = time_local;

}

public String getRequest() {

return request;

}

public void setRequest(String request) {

this.request = request;

}

public String getStatus() {

return status;

}

public void setStatus(String status) {

this.status = status;

}

public String getBody_bytes_sent() {

return body_bytes_sent;

}

public void setBody_bytes_sent(String body_bytes_sent) {

this.body_bytes_sent = body_bytes_sent;

}

public String getHttp_referer() {

return http_referer;

}

public String getHttp_referer_domain(){

if(http_referer.length()<8){

return http_referer;

}

String str=this.http_referer.replace("\"", "").replace("http://", "").replace("https://", "");

return str.indexOf("/")>0?str.substring(0, str.indexOf("/")):str;

}

public void setHttp_referer(String http_referer) {

this.http_referer = http_referer;

}

public String getHttp_user_agent() {

return http_user_agent;

}

public void setHttp_user_agent(String http_user_agent) {

this.http_user_agent = http_user_agent;

}

public boolean isValid() {

return valid;

}

public void setValid(boolean valid) {

this.valid = valid;

}

public static void main(String args[]) {

//String line = "222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] \"GET /images/my.jpg HTTP/1.1\" 200 19939 \"http://www.angularjs.cn/A00n\" \"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36\"";

String line = "183.136.190.40 - - [18/Mar/2017:03:56:58 +0800] \"GET / HTTP/1.1\" 502 574 \"-\" \"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/35.0.1916.153 Safari/537.36\"";

System.out.println(line);

Kpi kpi = new Kpi();

String[] arr = line.split(" ");

kpi.setRemote_addr(arr[0]);

kpi.setRemote_user(arr[1]);

kpi.setTime_local(arr[3].substring(1));

kpi.setRequest(arr[6]);

kpi.setStatus(arr[8]);

kpi.setBody_bytes_sent(arr[9]);

kpi.setHttp_referer(arr[10]);

kpi.setHttp_user_agent(arr[11] + " " + arr[12]);

System.out.println(kpi);

try {

SimpleDateFormat df = new SimpleDateFormat("yyyy.MM.dd:HH:mm:ss", Locale.US);

System.out.println(df.format(kpi.getTime_local_Date()));

System.out.println(kpi.getTime_local_Date_hour());

System.out.println(kpi.getHttp_referer_domain());

} catch (ParseException e) {

e.printStackTrace();

}

}

private static Kpi parser(String line) {

System.out.println(line);

Kpi kpi = new Kpi();

String[] arr = line.split(" ");

if (arr.length > 11) {

kpi.setRemote_addr(arr[0]);

kpi.setRemote_user(arr[1]);

kpi.setTime_local(arr[3].substring(1));

kpi.setRequest(arr[6]);

kpi.setStatus(arr[8]);

kpi.setBody_bytes_sent(arr[9]);

kpi.setHttp_referer(arr[10]);

if (arr.length > 12) {

kpi.setHttp_user_agent(arr[11] + " " + arr[12]);

} else {

kpi.setHttp_user_agent(arr[11]);

}

if (Integer.parseInt(kpi.getStatus()) >= 400) {// status 400 or above is an HTTP error

kpi.setValid(false);

}

} else {

kpi.setValid(false);

}

return kpi;

}

/**

* Classify PV by page: only requests to the pages listed below stay valid

*/

public static Kpi filterPVs(String line) {

Kpi kpi = parser(line);

Set<String> pages = new HashSet<String>();

pages.add("/api/handler.shtml");

pages.add("/cyclingTravelHtml");

pages.add("/campaignpage");

boolean flag = false;

for(String str : pages){

if(kpi.getRequest().indexOf(str)>-1)

flag = true;

}

if(!flag)

kpi.setValid(false);

return kpi;

}

}

(3) Next, count the PV of the different endpoints, then run the job as a Java application.
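The counting job itself (`Kpipv2`, which appears only in the stack trace) is not listed in this post. As a rough illustration of what such a job computes, here is a plain-Java stand-in with no Hadoop dependency: a "map" step that turns each log line into a request key, and a "reduce" step that sums one count per key. The class name `PvCountSketch` and its methods are hypothetical, and for brevity the sketch skips the status check that `Kpi.parser` performs.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Plain-Java stand-in for the map and reduce steps of a PV count (hypothetical, not Kpipv2). */
final class PvCountSketch {

    // The same three page prefixes that Kpi.filterPVs checks for.
    private static final List<String> PAGES =
            Arrays.asList("/api/handler.shtml", "/cyclingTravelHtml", "/campaignpage");

    /** "Map" step: return the request path if the line should be counted, otherwise null. */
    static String mapLine(String line) {
        String[] arr = line.split(" ");
        if (arr.length <= 11) {
            return null; // too few fields to be a complete access-log record
        }
        String request = arr[6];
        for (String page : PAGES) {
            if (request.contains(page)) {
                return request;
            }
        }
        return null;
    }

    /** "Reduce" step: add 1 to the count of every key the map step produced. */
    static Map<String, Integer> countPv(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // TreeMap keeps keys sorted
        for (String line : lines) {
            String key = mapLine(line);
            if (key != null) {
                counts.merge(key, 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample lines in the same layout as the log line used in Kpi.main.
        String hit = "1.2.3.4 - - [18/Mar/2017:03:56:58 +0800] "
                + "\"GET /api/handler.shtml HTTP/1.1\" 200 574 \"-\" \"Mozilla/5.0 (X11)\"";
        String miss = "1.2.3.4 - - [18/Mar/2017:03:56:59 +0800] "
                + "\"GET /index.html HTTP/1.1\" 200 574 \"-\" \"Mozilla/5.0 (X11)\"";
        System.out.println(countPv(Arrays.asList(hit, hit, miss)));
    }
}
```

In a real MapReduce job the map step would emit `(request, 1)` pairs and the framework would group them by key before the reducer sums them; the loop above collapses both phases into one pass.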

The run fails with the following error:

17/06/24 17:53:09 INFO mapred.MapTask: Starting flush of map output

17/06/24 17:53:09 INFO mapred.LocalJobRunner: map task executor complete.

17/06/24 17:53:09 WARN mapred.LocalJobRunner: job_local1293020776_0001

java.lang.Exception: java.lang.NumberFormatException: For input string: "jing"

at org.apache.hadoop.mapred.LocalJobRunner$Job.runTasks(LocalJobRunner.java:462)

at org.apache.hadoop.mapred.LocalJobRunner$Job.run(LocalJobRunner.java:522)

Caused by: java.lang.NumberFormatException: For input string: "jing"

at java.lang.NumberFormatException.forInputString(Unknown Source)

at java.lang.Integer.parseInt(Unknown Source)

at java.lang.Integer.parseInt(Unknown Source)

at org.myhadoop.miqizuchecount.Kpi.parser(Kpi.java:175)

at org.myhadoop.miqizuchecount.Kpi.filterPVs(Kpi.java:188)

at org.myhadoop.miqizuchecount.Kpipv2$TokenizerMapper.map(Kpipv2.java:28)

at org.myhadoop.miqizuchecount.Kpipv2$TokenizerMapper.map(Kpipv2.java:1)

at org.apache.hadoop.mapreduce.Mapper.run(Mapper.java:146)

at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:787)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)

at org.apache.hadoop.mapred.LocalJobRunner$Job$MapTaskRunnable.run(LocalJobRunner.java:243)

at java.util.concurrent.Executors$RunnableAdapter.call(Unknown Source)

at java.util.concurrent.FutureTask.run(Unknown Source)

at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source)

at java.lang.Thread.run(Unknown Source)

17/06/24 17:53:09 INFO mapreduce.Job: Job job_local1293020776_0001 failed with state FAILED due to: NA

17/06/24 17:53:09 INFO mapreduce.Job: Counters: 23

File System Counters

FILE: Number of bytes read=194

FILE: Number of bytes written=279848

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=7405568

HDFS: Number of bytes written=0

HDFS: Number of read operations=4

HDFS: Number of large read operations=0

HDFS: Number of write operations=1

Map-Reduce Framework

Map input records=30306

Map output records=0

Map output bytes=0

Map output materialized bytes=0

Input split bytes=129

Combine input records=0

Combine output records=0

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=25

Total committed heap usage (bytes)=264765440

File Input Format Counters

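Bytes Read=7405568

The cause turned out to be a request record in the log that was very long and garbled. For that record, the value that `Kpi.parser` passed to `Integer.parseInt` as the status was "jing" rather than a number, so the job stopped. The log contains quite a few similar records, so the program could not get through the full file as written. I therefore cut out a small part of the log file, 10,000 records and 2.5 MB in total, and used it as the statistical sample.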
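An alternative to trimming the input is to make the parser reject lines it cannot understand. The sketch below is one possible approach, not the code used in this post: it assumes the log uses nginx's standard `combined` format (the sample line in `Kpi.main` matches it) and parses the whole line with a regular expression, so spaces inside the quoted request cannot shift the status field. The class `SafeLogLine` and the sample line with extra spaces are hypothetical; the actual offending record is not shown here.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Regex-based reader for one access-log line (hypothetical helper, assumes "combined" format). */
final class SafeLogLine {

    // addr - user [time] "request" status bytes "referer" "agent"
    private static final Pattern COMBINED = Pattern.compile(
            "^(\\S+) - (\\S+) \\[([^\\]]+)\\] \"([^\"]*)\" (\\d{3}) (\\d+) \"([^\"]*)\" \"([^\"]*)\"$");

    /** Returns the HTTP status, or -1 when the line does not match the expected layout. */
    static int statusOf(String line) {
        Matcher m = COMBINED.matcher(line);
        // Group 5 is guaranteed to be three digits, so parseInt cannot throw here.
        return m.matches() ? Integer.parseInt(m.group(5)) : -1;
    }

    /** Returns the request path (second token of the request), or null for unusable lines. */
    static String pathOf(String line) {
        Matcher m = COMBINED.matcher(line);
        if (!m.matches()) {
            return null;
        }
        String[] parts = m.group(4).split(" ");
        return parts.length >= 2 ? parts[1] : null;
    }

    public static void main(String[] args) {
        // Hypothetical record whose quoted request contains extra spaces.
        String spaced = "1.2.3.4 - - [18/Mar/2017:03:56:58 +0800] "
                + "\"GET /a b jing c HTTP/1.1\" 400 0 \"-\" \"-\"";
        System.out.println(spaced.split(" ")[8]); // prints jing: the field Kpi.parser treats as status
        System.out.println(statusOf(spaced));     // the regex still finds the real status
        System.out.println(statusOf("garbled"));  // unparseable lines yield -1 instead of an exception
    }
}
```

A mapper could call `statusOf` first and skip any line that returns -1 or a value of 400 or above, which would let the full log run without a `NumberFormatException`.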

Run output:

Results (rough; the filtering logic still needs refinement):

/api/handler.shtml 2469

/campaignpage/img/348837100136587818_meitu_1.png 9

/campaignpage/js/mbk.395bf4e.js 308

/campaignpage/js/mbk.395bf4e.js?333&x9wj=0i 1

/campaignpage/js/mbk.395bf4e.js?_fp362=0.3624106306044319 1

/campaignpage/js/mbk.395bf4e.js?cHVzaA=101231 1

/campaignpage/js/mbk.395bf4e.js?cHVzaA=101233 2

/campaignpage/js/mbk.395bf4e.js?visitDstTime=1 1

/campaignpage/js/sharebicycleDetailjs1.js 23

/campaignpage/js/sharebicycleDetailjs2.js 22

/cyclingTravelHtml/css/activity_detail.css 13

/cyclingTravelHtml/css/header.css 13

/cyclingTravelHtml/html/201703201120215876666.html 1

/cyclingTravelHtml/html/201703211519406467873.html 2

/cyclingTravelHtml/html/201703211528147768737.html 1

/cyclingTravelHtml/html/201703211626464629498.html 7

/cyclingTravelHtml/html/201703221435207189347.html 1

/cyclingTravelHtml/html/201703221457239432616.html 1

/cyclingTravelHtml/html/201703221507417198177.html 1

/cyclingTravelHtml/html/201703221522425389318.html 1

/cyclingTravelHtml/img/miqiLogo.png 1

/cyclingTravelHtml/js/activity_detail.js 13

/cyclingTravelHtml/js/header.js 13

/cyclingTravelHtml/js/jquery-1.11.1.min.js 13

At this point we have a rough PV count for some of the endpoints.
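One refinement the results suggest: the same script appears under several keys because each distinct query string is counted separately, and static assets are mixed in with pages and API calls. The following sketch shows one way to post-process the counts. The class `RequestNormalizer`, its methods, and the list of static extensions are assumptions made for illustration, not part of the original program.

```java
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;

/** Merges PV counts that differ only by query string (hypothetical helper). */
final class RequestNormalizer {

    // Assumed list of extensions to treat as static assets.
    private static final String[] STATIC_EXTENSIONS = {".js", ".css", ".png", ".jpg", ".gif"};

    /** Drops everything from the first '?' onward. */
    static String stripQuery(String request) {
        int q = request.indexOf('?');
        return q >= 0 ? request.substring(0, q) : request;
    }

    /** True when the path ends with one of the assumed static-asset extensions. */
    static boolean isStaticAsset(String path) {
        String lower = path.toLowerCase(Locale.ROOT);
        for (String ext : STATIC_EXTENSIONS) {
            if (lower.endsWith(ext)) {
                return true;
            }
        }
        return false;
    }

    /** Sums counts per query-free path; optionally leaves static assets out. */
    static Map<String, Integer> mergeByPath(Map<String, Integer> raw, boolean dropStatic) {
        Map<String, Integer> merged = new TreeMap<>();
        for (Map.Entry<String, Integer> e : raw.entrySet()) {
            String path = stripQuery(e.getKey());
            if (dropStatic && isStaticAsset(path)) {
                continue;
            }
            merged.merge(path, e.getValue(), Integer::sum);
        }
        return merged;
    }

    public static void main(String[] args) {
        // A few rows copied from the results above.
        Map<String, Integer> raw = new LinkedHashMap<>();
        raw.put("/api/handler.shtml", 2469);
        raw.put("/campaignpage/js/mbk.395bf4e.js", 308);
        raw.put("/campaignpage/js/mbk.395bf4e.js?333&x9wj=0i", 1);
        raw.put("/campaignpage/js/mbk.395bf4e.js?cHVzaA=101233", 2);
        System.out.println(mergeByPath(raw, false)); // query variants folded into one key
        System.out.println(mergeByPath(raw, true));  // static assets removed as well
    }
}
```

The same normalization could instead run inside the mapper before the key is emitted, which avoids a second pass over the output.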
