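Original work by 林炳文 (Evankaka). Please credit the source when reposting: http://blog.csdn.net/evankaka

Summary: This article uses Hadoop to count the number of searches per second, then saves the results to MySQL for storage and display.

Project source code: https://github.com/appleappleapple/BigDataLearning/tree/master/Hadoop-Demo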

I. Environment and Data

1. Local development environment: Windows 7 + Eclipse Luna

Hadoop version: 2.6.2

JDK version: 1.8

2. Data source:

Sogou Labs

http://www.sogou.com/labs/resource/q.php

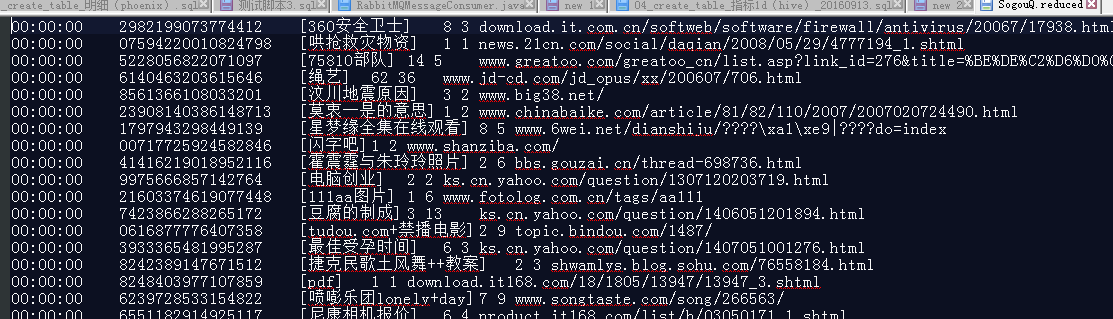

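3. Data format

Access time\tUser ID\t[Query]\tRank of the URL in the returned results\tSequence number of the user's click\tURL clicked by the user

The user ID is assigned automatically from the Cookie information when the user accesses the search engine through a browser, so different queries entered during the same browser session share the same user ID.

Sample: (note that, compared with "Hadoop in Practice: Search Data Analysis ---- Data Deduplication (1)", the data has an extra first column holding the search time)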
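To make the record layout concrete, here is a minimal plain-Java sketch that splits one line into the six tab-separated fields listed above. The class name `SogouRecordParser`, the method `parse`, and the sample line are hypothetical and invented for illustration; they do not come from the dataset or the project source.

```java
class SogouRecordParser {

    /** Number of tab-separated fields described in the data format above. */
    public static final int FIELD_COUNT = 6;

    /**
     * Splits one log line into its fields.
     * Returns null when the line does not have exactly six tab-separated fields.
     */
    public static String[] parse(String line) {
        if (line == null) {
            return null;
        }
        // The -1 limit keeps trailing empty fields instead of silently dropping them.
        String[] fields = line.split("\t", -1);
        return fields.length == FIELD_COUNT ? fields : null;
    }

    public static void main(String[] args) {
        // Hypothetical record, made up for this example only.
        String line = "00:00:01\tuser-0001\t[hadoop]\t1\t1\thttp://example.com/";
        String[] f = parse(line);
        System.out.println("time=" + f[0] + ", userId=" + f[1] + ", query=" + f[2]);
    }
}
```

Only the first field (the access time) matters for the per-second count in this article; the remaining fields are parsed here just to show the layout.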

4. Statistics

This article counts the number of searches (log records) in each second and stores the result in MySQL.
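Before moving to MapReduce, the computation itself can be sketched in a few lines of plain Java: take the first whitespace-delimited token of each line (the access time) and count how often each value occurs. This is only a local illustration of the goal, not the Hadoop job; the class name `PerSecondCountSketch` and the sample lines are hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class PerSecondCountSketch {

    /** Counts how many lines share the same first token (the access time). */
    public static Map<String, Integer> countPerSecond(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // keeps the times sorted
        for (String line : lines) {
            String trimmed = line.trim();
            if (trimmed.isEmpty()) {
                continue; // ignore blank lines
            }
            String time = trimmed.split("\\s+", 2)[0];
            counts.merge(time, 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Hypothetical records, made up for this example only.
        List<String> lines = Arrays.asList(
                "00:00:00\tu1\t[a]\t1\t1\thttp://example.com/a",
                "00:00:00\tu2\t[b]\t2\t1\thttp://example.com/b",
                "00:00:01\tu1\t[c]\t1\t1\thttp://example.com/c");
        countPerSecond(lines).forEach((time, n) -> System.out.println(time + "\t" + n));
    }
}
```

The MapReduce version distributes the same idea: the mapper emits `(time, 1)` for every record and the reducer adds up the ones for each time value.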

II. Implementation

1. Counting

package com.lin.counttime;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

/**
 * Overview: counts the number of searches in each second.
 *
 * @author linbingwen
 * @since 2016-08-01
 */
public class CountTime {

    public static class Map extends Mapper<Object, Text, Text, IntWritable> {

        private final static IntWritable one = new IntWritable(1);

        // Implement the map function
        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            // Convert the input plain-text data to a String
            String line = value.toString();
            // First split the input data by line
            StringTokenizer tokenizerArticle = new StringTokenizer(line, "\n");
            // Process each line in turn
            while (tokenizerArticle.hasMoreElements()) {
                // Split the line on whitespace (the default delimiters include space and tab)
                StringTokenizer tokenizerLine = new StringTokenizer(tokenizerArticle.nextToken());
                // Skip lines that contain only whitespace
                if (!tokenizerLine.hasMoreTokens()) {
                    continue;
                }
                // The first column is the access time
                String c1 = tokenizerLine.nextToken();
                Text newline = new Text(c1);
                context.write(newline, one);
            }
        }
    }

    public static class Reduce extends Reducer<Text, IntWritable, Text, IntWritable> {

        private IntWritable result = new IntWritable();

        // Implement the reduce function
        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // Add up the occurrences of this access time
            int count = 0;
            for (IntWritable val : values) {
                count += val.get();
            }
            result.set(count);
            context.write(key, result);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // Set the Hadoop host and port
        conf.set("mapred.job.tracker", "10.75.201.125:9000");
        // Set the input and output directories

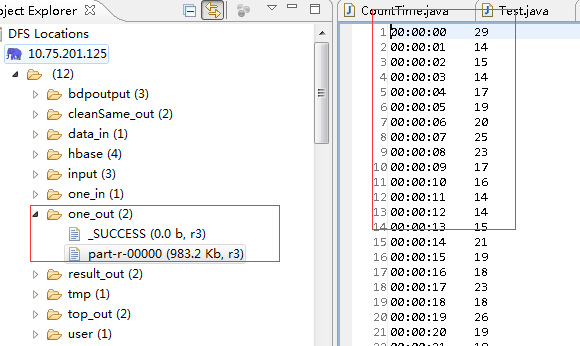

        String[] ioArgs = new String[] { "hdfs://hmaster:9000/one_in", "hdfs://hmaster:9000/one_out" };
        String[] otherArgs = new GenericOptionsParser(conf, ioArgs).getRemainingArgs();
        if (otherArgs.length != 2) {
            System.err.println("Usage: <in> <out>");
            System.exit(2);
        }
        // Create the job
        Job job = Job.getInstance(conf, "key Word count");
        job.setJarByClass(CountTime.class);
        // Set the Map, Combine and Reduce classes
        job.setMapperClass(CountTime.Map.class);
        job.setCombinerClass(CountTime.Reduce.class);
        job.setReducerClass(CountTime.Reduce.class);
        // Set the output types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // Splits the input data set into smaller chunks (splits) and provides a RecordReader implementation
        job.setInputFormatClass(TextInputFormat.class);
        // Provides a RecordWriter implementation that writes the output
        job.setOutputFormatClass(TextOutputFormat.class);
        // Set the input and output paths
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
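CountTime registers the same `Reduce` class as both combiner and reducer. That works here because addition is associative and commutative: summing locally pre-aggregated partial sums gives the same total as summing all the ones directly. The following plain-Java sketch illustrates this without any Hadoop APIs; the class `CombinerSketch` and the two "map task" lists are hypothetical.

```java
import java.util.Arrays;
import java.util.List;

class CombinerSketch {

    /** Same logic as the Reduce class above: add up all values for one key. */
    public static int sum(List<Integer> values) {
        int total = 0;
        for (int v : values) {
            total += v;
        }
        return total;
    }

    public static void main(String[] args) {
        // Values emitted for one key by two hypothetical map tasks.
        List<Integer> mapTask1 = Arrays.asList(1, 1, 1);
        List<Integer> mapTask2 = Arrays.asList(1, 1);

        // Without a combiner, the reducer receives all five ones.
        int direct = sum(Arrays.asList(1, 1, 1, 1, 1));

        // With a combiner, each map task pre-aggregates before the shuffle.
        int combined = sum(Arrays.asList(sum(mapTask1), sum(mapTask2)));

        System.out.println(direct + " == " + combined); // prints "5 == 5"
    }
}
```

An operation such as averaging would not have this property, so a reducer that computes an average could not be reused as a combiner unchanged.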

2. Writing the results to MySQL:

package com.lin.counttime;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.io.Writable;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.db.DBConfiguration;
import org.apache.hadoop.mapreduce.lib.db.DBOutputFormat;
import org.apache.hadoop.mapreduce.lib.db.DBWritable;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

/**
 * Overview: saves the per-second search counts to the database.
 *
 * @author linbingwen
 */
public class SaveCountTimeResult {

    /**
     * Implements DBWritable.
     *
     * TblsWritable carries the data that is written to MySQL.
     */
    public static class TblsWritable implements Writable, DBWritable {

        // Holds the access time (written to the "time" column)
        String tbl_name;
        // Holds the search count (written to the "total" column)
        int tbl_age;

        public TblsWritable() {
        }

        public TblsWritable(String name, int age) {
            this.tbl_name = name;
            this.tbl_age = age;
        }

        @Override
        public void write(PreparedStatement statement) throws SQLException {
            statement.setString(1, this.tbl_name);
            statement.setInt(2, this.tbl_age);
        }

        @Override
        public void readFields(ResultSet resultSet) throws SQLException {
            this.tbl_name = resultSet.getString(1);
            this.tbl_age = resultSet.getInt(2);
        }

        @Override
        public void write(DataOutput out) throws IOException {
            out.writeUTF(this.tbl_name);
            out.writeInt(this.tbl_age);
        }

        @Override
        public void readFields(DataInput in) throws IOException {
            this.tbl_name = in.readUTF();
            this.tbl_age = in.readInt();
        }

        @Override
        public String toString() {
            return this.tbl_name + " " + this.tbl_age;
        }
    }

    public static class StudentMapper extends Mapper<LongWritable, Text, LongWritable, Text> {

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // Pass each line through unchanged, keyed by its byte offset
            context.write(key, value);
        }
    }

    public static class StudentReducer extends Reducer<LongWritable, Text, TblsWritable, TblsWritable> {

        @Override
        protected void reduce(LongWritable key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            // The key is the line's byte offset, so there is normally one value per key.
            // Offsets can repeat across input files, so each line is handled separately.
            for (Text text : values) {
                String[] studentArr = text.toString().split("\t");
                // Skip lines that are not in "time<TAB>count" form
                if (studentArr.length < 2) {
                    continue;
                }
                String name = studentArr[0].trim();
                int age = 0;
                try {
                    age = Integer.parseInt(studentArr[1].trim());
                } catch (NumberFormatException e) {
                    // Keep the default of 0 when the count is not a valid integer
                }
                context.write(new TblsWritable(name, age), null);
            }
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        DBConfiguration.configureDB(conf, "com.mysql.cj.jdbc.Driver", "jdbc:mysql://localhost:3306/learning?serverTimezone=UTC", "root", "linlin");
        // Set the Hadoop host and port
        conf.set("mapred.job.tracker", "10.75.201.125:9000");
        // Set the input directory (the output of the CountTime job)
        String[] ioArgs = new String[] { "hdfs://hmaster:9000/one_out" };
        String[] otherArgs = new GenericOptionsParser(conf, ioArgs).getRemainingArgs();
        if (otherArgs.length != 1) {
            System.err.println("Usage: <in>");
            System.exit(2);
        }
        // Create the job
        Job job = Job.getInstance(conf, "SaveResult");
        job.setJarByClass(SaveCountTimeResult.class);
        // Input path
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        // Mapper
        job.setMapperClass(StudentMapper.class);
        // Reducer
        job.setReducerClass(StudentReducer.class);
        // Mapper output types (these setters also apply to the map output
        // when setMapOutputKeyClass/setMapOutputValueClass are not called)
        job.setOutputKeyClass(LongWritable.class);
        job.setOutputValueClass(Text.class);
        // The input format defaults to TextInputFormat; the output is written to the database through DBOutputFormat
        job.setOutputFormatClass(DBOutputFormat.class);
        // Target table and columns
        DBOutputFormat.setOutput(job, "count_time", "time", "total");
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

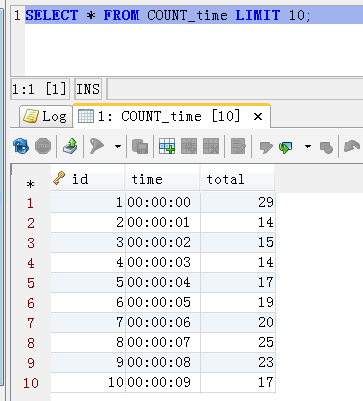

The results were saved successfully.
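The reducer above relies on each input line having the form `time<TAB>count`, which is how the text output of the first job separates key and value. As a hedged illustration of that parsing step in isolation, here is a plain-Java sketch that rejects malformed lines instead of storing a default. The class `OutputLineParser` and its nested `Row` type are hypothetical and are not part of the project.

```java
import java.util.Optional;

class OutputLineParser {

    /** One row destined for the count_time table. */
    public static final class Row {
        public final String time;
        public final int total;

        public Row(String time, int total) {
            this.time = time;
            this.total = total;
        }
    }

    /** Parses "time<TAB>count"; returns an empty Optional for malformed lines. */
    public static Optional<Row> parse(String line) {
        String[] parts = line.split("\t");
        if (parts.length < 2) {
            return Optional.empty();
        }
        try {
            int total = Integer.parseInt(parts[1].trim());
            return Optional.of(new Row(parts[0].trim(), total));
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        // Hypothetical line in the shape produced by the counting job.
        parse("00:00:01\t3").ifPresent(r -> System.out.println(r.time + " -> " + r.total));
    }
}
```

Whether to skip a bad line or store it with a count of 0, as the reducer does, is a design choice; skipping keeps the table free of rows that never existed in the job output.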

Table creation SQL:

CREATE TABLE `count_time` (
  `id` bigint(20) unsigned NOT NULL AUTO_INCREMENT,
  `time` varchar(255) DEFAULT NULL,
  `total` bigint(20) DEFAULT NULL,
  PRIMARY KEY (`id`)
) ENGINE=InnoDB DEFAULT CHARSET=utf8;

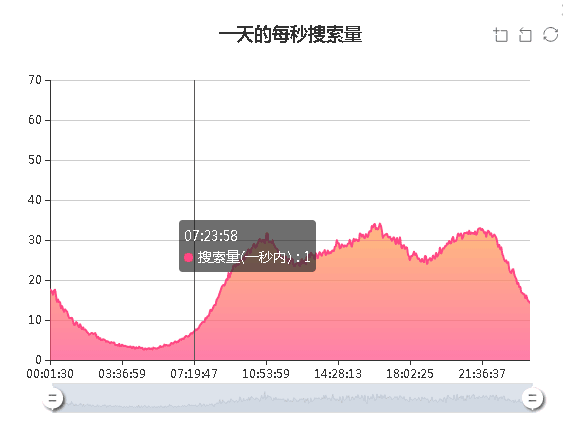

3. Displaying the results

The author built a page with Baidu ECharts to display the results.
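The page itself is not reproduced here. As a rough sketch of how rows from `count_time` could be turned into chart input, the following plain-Java example builds a JSON string in the shape of a basic ECharts line-chart option (`xAxis`, `yAxis`, `series`). This is an assumption about one possible approach, not the author's page: the class `EChartsOptionSketch`, the method `buildOption`, and the sample values are hypothetical, and how the rows are fetched from MySQL is left out.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

class EChartsOptionSketch {

    /** Builds a minimal line-chart option from (time, total) pairs in iteration order. */
    public static String buildOption(Map<String, Long> totalsByTime) {
        StringJoiner times = new StringJoiner(",", "[", "]");
        StringJoiner totals = new StringJoiner(",", "[", "]");
        for (Map.Entry<String, Long> e : totalsByTime.entrySet()) {
            // Assumes time strings such as "00:00:01" that need no JSON escaping.
            times.add("\"" + e.getKey() + "\"");
            totals.add(String.valueOf(e.getValue()));
        }
        return "{\"xAxis\":{\"type\":\"category\",\"data\":" + times + "},"
                + "\"yAxis\":{\"type\":\"value\"},"
                + "\"series\":[{\"type\":\"line\",\"data\":" + totals + "}]}";
    }

    public static void main(String[] args) {
        // Hypothetical rows, as they might be read from the count_time table.
        Map<String, Long> rows = new LinkedHashMap<>();
        rows.put("00:00:00", 2L);
        rows.put("00:00:01", 1L);
        System.out.println(buildOption(rows));
    }
}
```

On the page, a string like this would be parsed as JSON and passed to the chart instance; for real data a JSON library is preferable to manual string building.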
