For the derivation and implementation of logistic regression, see: http://blog.csdn.net/fengbingchun/article/details/79346691

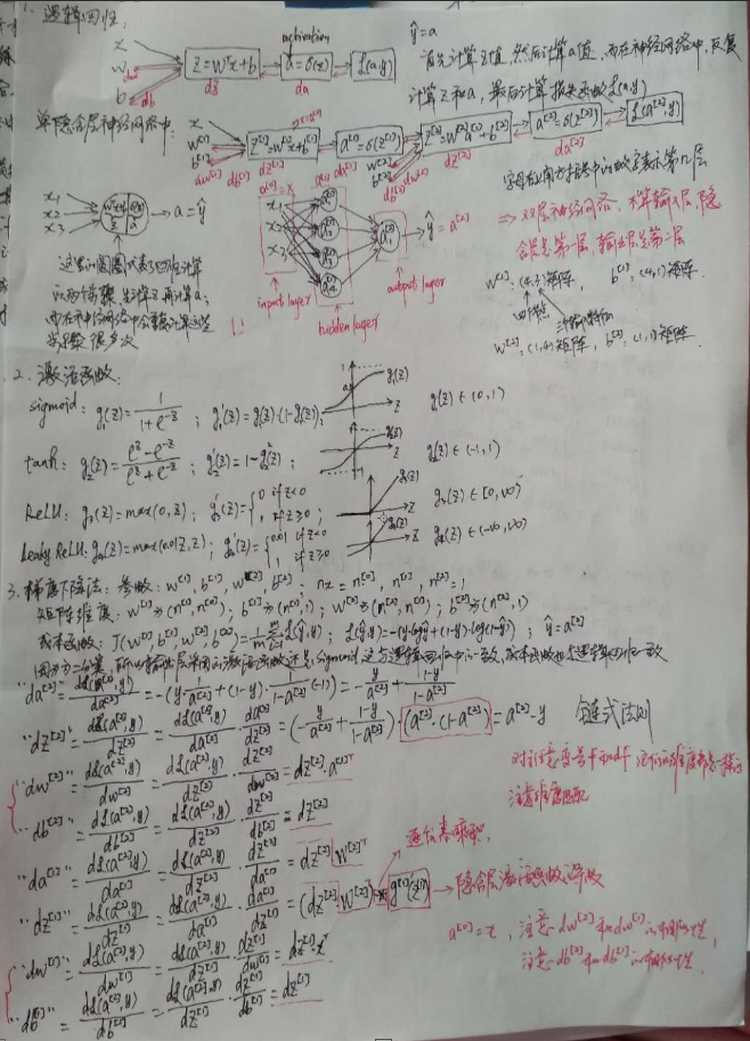

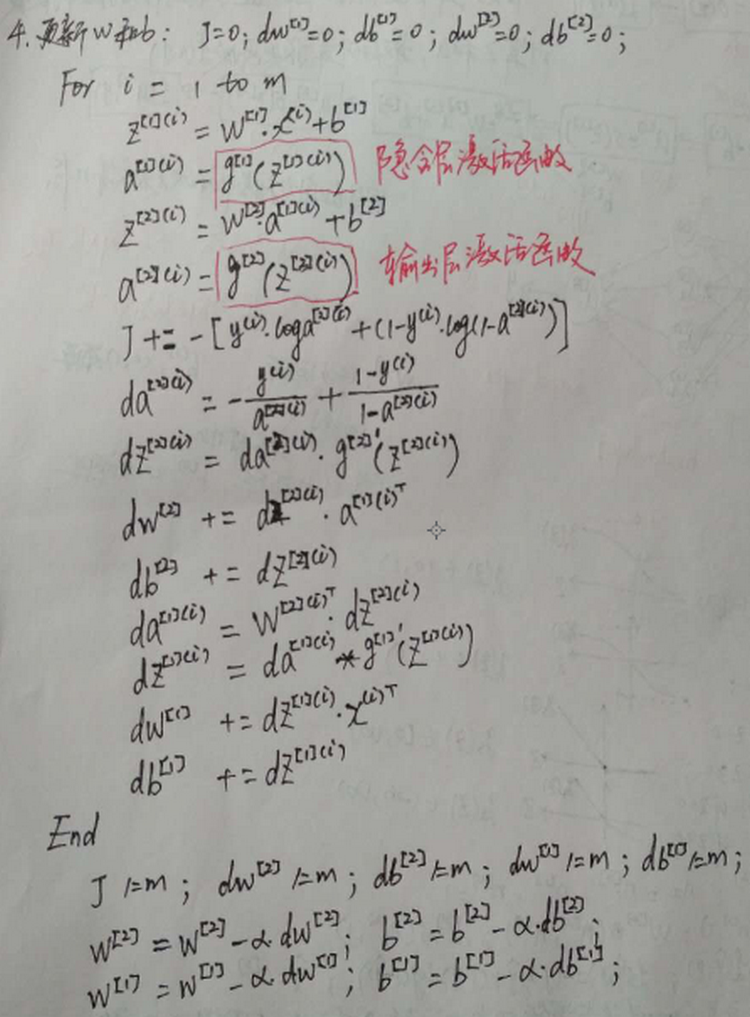

Building on logistic regression, the formulas for a neural network with a single hidden layer are derived below:

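Some rules of thumb for choosing activation functions: different layers may use different activation functions. If the output is 0 or 1, i.e. binary classification, sigmoid is a suitable activation function for the output layer; for the other layers, ReLU is a reasonable default when in doubt. With gradient descent, ReLU usually learns much faster than sigmoid or tanh. Deep learning generally requires nonlinear activation functions, because a stack of layers with linear activations is equivalent to a single linear layer. The output layer is usually the only place where a linear activation function makes sense.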

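In deep learning, the weights w must not be initialized to 0, otherwise all hidden units compute the same function and receive the same gradient updates; initialize them to small random values instead. The bias b may be initialized to 0.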

The derivatives in backpropagation are obtained with the chain rule from calculus.
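As a worked instance of the chain rule, the per-sample backward pass can be written out as follows. This is a sketch in the notation of the code comments further down, assuming the cross-entropy loss $L(a^{[2]}, y) = -\left[y \log a^{[2]} + (1-y)\log(1-a^{[2]})\right]$; the symbol $\odot$ (element-wise product) is introduced here for readability.

$$
\begin{aligned}
da^{[2]} &= \frac{\partial L}{\partial a^{[2]}} = -\frac{y}{a^{[2]}} + \frac{1-y}{1-a^{[2]}} \\
dz^{[2]} &= da^{[2]} \cdot g^{[2]\prime}(z^{[2]}) \\
dW^{[2]} &= dz^{[2]} \, a^{[1]T}, \qquad db^{[2]} = dz^{[2]} \\
da^{[1]} &= W^{[2]T} \, dz^{[2]} \\
dz^{[1]} &= da^{[1]} \odot g^{[1]\prime}(z^{[1]}) \\
dW^{[1]} &= dz^{[1]} \, x^{T}, \qquad db^{[1]} = dz^{[1]}
\end{aligned}
$$

When the output activation is sigmoid, $g^{[2]\prime}(z^{[2]}) = a^{[2]}(1-a^{[2]})$, and the second line simplifies to $dz^{[2]} = a^{[2]} - y$.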

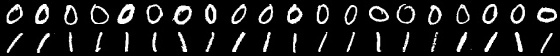

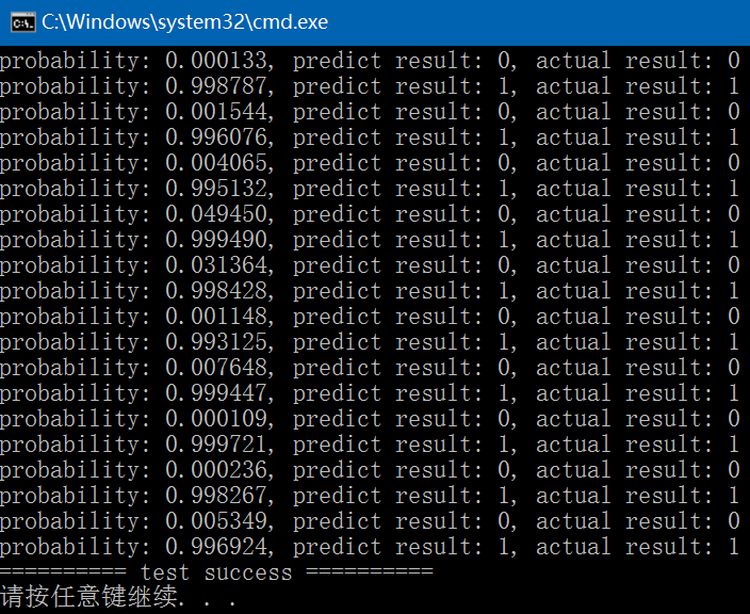

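The code below follows the formulas derived above and performs binary classification of the digits 0 and 1. The training set consists of 10 images each of 0 and 1, randomly selected from the MNIST train set; the test set consists of 10 images each of 0 and 1, randomly selected from the MNIST test set. In the sample image, the first 10 zeros in the first row are used for training and the last 10 zeros for testing; the first 10 ones in the second row are used for training and the last 10 ones for testing.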

For an introduction to the MNIST dataset, see: http://blog.csdn.net/fengbingchun/article/details/49611549

single_hidden_layer.hpp:

#ifndef FBC_SRC_NN_SINGLE_HIDDEN_LAYER_HPP_

#define FBC_SRC_NN_SINGLE_HIDDEN_LAYER_HPP_

#include <string>

#include <vector>

namespace ANN {

template<typename T>

class SingleHiddenLayer { // two categories

public:

typedef enum ActivationFunctionType {

Sigmoid = 0,

TanH = 1,

ReLU = 2,

Leaky_ReLU = 3

} ActivationFunctionType;

SingleHiddenLayer() = default;

int init(const T* data, const T* labels, int train_num, int feature_length,

int hidden_layer_node_num = 20, T learning_rate = 0.00001, int iterations = 10000, int hidden_layer_activation_type = 2, int output_layer_activation_type = 0);

int train(const std::string& model);

int load_model(const std::string& model);

T predict(const T* data, int feature_length) const;

private:

T calculate_activation_function(T value, ActivationFunctionType type) const;

T calcuate_activation_function_derivative(T value, ActivationFunctionType type) const;

int store_model(const std::string& model) const;

void init_train_variable();

void init_w_and_b();

ActivationFunctionType hidden_layer_activation_type = ReLU;

ActivationFunctionType output_layer_activation_type = Sigmoid;

std::vector<std::vector<T>> x; // training set

std::vector<T> y; // ground truth labels

int iterations = 10000;

int m = 0; // train samples num

int feature_length = 0;

T alpha = (T)0.00001; // learning rate

std::vector<std::vector<T>> w1, w2; // weights

std::vector<T> b1, b2; // biases

int hidden_layer_node_num = 10;

int output_layer_node_num = 1;

T J = (T)0.;

std::vector<std::vector<T>> dw1, dw2;

std::vector<T> db1, db2;

std::vector<std::vector<T>> z1, a1, z2, a2, da2, dz2, da1, dz1;

}; // class SingleHiddenLayer

} // namespace ANN

#endif // FBC_SRC_NN_SINGLE_HIDDEN_LAYER_HPP_

#include "single_hidden_layer.hpp"

#include <algorithm>

#include <cmath>

#include <cstdio>

#include <fstream>

#include <random>

#include <memory>

#include "common.hpp"

namespace ANN {

template<typename T>

int SingleHiddenLayer<T>::init(const T* data, const T* labels, int train_num, int feature_length,

int hidden_layer_node_num, T learning_rate, int iterations, int hidden_layer_activation_type, int output_layer_activation_type)

{

CHECK(train_num > 2 && feature_length > 0 && hidden_layer_node_num > 0 && learning_rate > 0 && iterations > 0);

CHECK(hidden_layer_activation_type >= 0 && hidden_layer_activation_type < 4);

CHECK(output_layer_activation_type >= 0 && output_layer_activation_type < 4);

this->hidden_layer_node_num = hidden_layer_node_num;

this->alpha = learning_rate;

this->iterations = iterations;

this->hidden_layer_activation_type = static_cast<ActivationFunctionType>(hidden_layer_activation_type);

this->output_layer_activation_type = static_cast<ActivationFunctionType>(output_layer_activation_type);

this->m = train_num;

this->feature_length = feature_length;

this->x.resize(train_num);

this->y.resize(train_num);

for (int i = 0; i < train_num; ++i) {

const T* p = data + i * feature_length;

this->x[i].resize(feature_length);

for (int j = 0; j < feature_length; ++j) {

this->x[i][j] = p[j];

}

this->y[i] = labels[i];

}

return 0;

}

template<typename T>

void SingleHiddenLayer<T>::init_train_variable()

{

J = (T)0.;

dw1.resize(this->hidden_layer_node_num);

db1.resize(this->hidden_layer_node_num);

for (int i = 0; i < this->hidden_layer_node_num; ++i) {

dw1[i].resize(this->feature_length);

for (int j = 0; j < this->feature_length; ++j) {

dw1[i][j] = (T)0.;

}

db1[i] = (T)0.;

}

dw2.resize(this->output_layer_node_num);

db2.resize(this->output_layer_node_num);

for (int i = 0; i < this->output_layer_node_num; ++i) {

dw2[i].resize(this->hidden_layer_node_num);

for (int j = 0; j < this->hidden_layer_node_num; ++j) {

dw2[i][j] = (T)0.;

}

db2[i] = (T)0.;

}

z1.resize(this->m); a1.resize(this->m); da1.resize(this->m); dz1.resize(this->m);

for (int i = 0; i < this->m; ++i) {

z1[i].resize(this->hidden_layer_node_num);

a1[i].resize(this->hidden_layer_node_num);

dz1[i].resize(this->hidden_layer_node_num);

da1[i].resize(this->hidden_layer_node_num);

for (int j = 0; j < this->hidden_layer_node_num; ++j) {

z1[i][j] = (T)0.;

a1[i][j] = (T)0.;

dz1[i][j] = (T)0.;

da1[i][j] = (T)0.;

}

}

z2.resize(this->m); a2.resize(this->m); da2.resize(this->m); dz2.resize(this->m);

for (int i = 0; i < this->m; ++i) {

z2[i].resize(this->output_layer_node_num);

a2[i].resize(this->output_layer_node_num);

dz2[i].resize(this->output_layer_node_num);

da2[i].resize(this->output_layer_node_num);

for (int j = 0; j < this->output_layer_node_num; ++j) {

z2[i][j] = (T)0.;

a2[i][j] = (T)0.;

dz2[i][j] = (T)0.;

da2[i][j] = (T)0.;

}

}

}

template<typename T>

void SingleHiddenLayer<T>::init_w_and_b()

{

w1.resize(this->hidden_layer_node_num); // (hidden_layer_node_num, feature_length)

b1.resize(this->hidden_layer_node_num); // (hidden_layer_node_num, 1)

w2.resize(this->output_layer_node_num); // (output_layer_node_num, hidden_layer_node_num)

b2.resize(this->output_layer_node_num); // (output_layer_node_num, 1)

std::random_device rd;

std::mt19937 generator(rd());

std::uniform_real_distribution<T> distribution(-0.01, 0.01);

for (int i = 0; i < this->hidden_layer_node_num; ++i) {

w1[i].resize(this->feature_length);

for (int j = 0; j < this->feature_length; ++j) {

w1[i][j] = distribution(generator);

}

b1[i] = distribution(generator);

}

for (int i = 0; i < this->output_layer_node_num; ++i) {

w2[i].resize(this->hidden_layer_node_num);

for (int j = 0; j < this->hidden_layer_node_num; ++j) {

w2[i][j] = distribution(generator);

}

b2[i] = distribution(generator);

}

}

template<typename T>

int SingleHiddenLayer<T>::train(const std::string& model)

{

CHECK(x.size() == y.size());

CHECK(output_layer_node_num == 1);

init_w_and_b();

for (int iter = 0; iter < this->iterations; ++iter) {

init_train_variable();

for (int i = 0; i < this->m; ++i) {

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->feature_length; ++q) {

z1[i][p] += w1[p][q] * x[i][q];

}

z1[i][p] += b1[p]; // z[1](i)=w[1]*x(i)+b[1]

a1[i][p] = calculate_activation_function(z1[i][p], this->hidden_layer_activation_type); // a[1](i)=g[1](z[1](i))

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

for (int q = 0; q < this->hidden_layer_node_num; ++q) {

z2[i][p] += w2[p][q] * a1[i][q];

}

z2[i][p] += b2[p]; // z[2](i)=w[2]*a[1](i)+b[2]

a2[i][p] = calculate_activation_function(z2[i][p], this->output_layer_activation_type); // a[2](i)=g[2](z[2](i))

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

J += -(y[i] * std::log(a2[i][p]) + (1 - y[i]) * std::log(1 - a2[i][p])); // J+=-[y(i)*loga[2](i)+(1-y(i))*log(1-a[2](i))]

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

da2[i][p] = -(y[i] / a2[i][p]) + ((1. - y[i]) / (1. - a2[i][p])); // da[2](i)=-(y(i)/a[2](i))+((1-y(i))/(1.-a[2](i)))

dz2[i][p] = da2[i][p] * calcuate_activation_function_derivative(z2[i][p], this->output_layer_activation_type); // dz[2](i)=da[2](i)*g[2]'(z[2](i))

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

for (int q = 0; q < this->hidden_layer_node_num; ++q) {

dw2[p][q] += dz2[i][p] * a1[i][q]; // dw[2]+=dz[2](i)*(a[1](i)^T)

}

db2[p] += dz2[i][p]; // db[2]+=dz[2](i)

}

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->output_layer_node_num; ++q) {

da1[i][p] += w2[q][p] * dz2[i][q]; // da[1](i)=(w[2]^T)*dz[2](i), accumulated over the output nodes

dz1[i][p] = da1[i][p] * calcuate_activation_function_derivative(z1[i][p], this->hidden_layer_activation_type); // dz[1](i)=da[1](i)*(g[1]'(z[1](i)))

}

}

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->feature_length; ++q) {

dw1[p][q] += dz1[i][p] * x[i][q]; // dw[1]+=dz[1](i)*(x(i)^T)

}

db1[p] += dz1[i][p]; // db[1]+=dz[1](i)

}

}

J /= m;

for (int p = 0; p < this->output_layer_node_num; ++p) {

for (int q = 0; q < this->hidden_layer_node_num; ++q) {

dw2[p][q] = dw2[p][q] / m; // dw[2] /=m

}

db2[p] = db2[p] / m; // db[2] /=m

}

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->feature_length; ++q) {

dw1[p][q] = dw1[p][q] / m; // dw[1] /= m

}

db1[p] = db1[p] / m; // db[1] /= m

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

for (int q = 0; q < this->hidden_layer_node_num; ++q) {

w2[p][q] = w2[p][q] - this->alpha * dw2[p][q]; // w[2]=w[2]-alpha*dw[2]

}

b2[p] = b2[p] - this->alpha * db2[p]; // b[2]=b[2]-alpha*db[2]

}

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->feature_length; ++q) {

w1[p][q] = w1[p][q] - this->alpha * dw1[p][q]; // w[1]=w[1]-alpha*dw[1]

}

b1[p] = b1[p] - this->alpha * db1[p]; // b[1]=b[1]-alpha*db[1]

}

}

CHECK(store_model(model) == 0);

return 0;

}

template<typename T>

int SingleHiddenLayer<T>::load_model(const std::string& model)

{

std::ifstream file;

file.open(model.c_str(), std::ios::binary);

if (!file.is_open()) {

fprintf(stderr, "open file fail: %s\n", model.c_str());

return -1;

}

file.read((char*)&this->hidden_layer_node_num, sizeof(int));

file.read((char*)&this->output_layer_node_num, sizeof(int));

int type{ -1 };

file.read((char*)&type, sizeof(int));

this->hidden_layer_activation_type = static_cast<ActivationFunctionType>(type);

file.read((char*)&type, sizeof(int));

this->output_layer_activation_type = static_cast<ActivationFunctionType>(type);

file.read((char*)&this->feature_length, sizeof(int));

this->w1.resize(this->hidden_layer_node_num);

for (int i = 0; i < this->hidden_layer_node_num; ++i) {

this->w1[i].resize(this->feature_length);

}

this->b1.resize(this->hidden_layer_node_num);

this->w2.resize(this->output_layer_node_num);

for (int i = 0; i < this->output_layer_node_num; ++i) {

this->w2[i].resize(this->hidden_layer_node_num);

}

this->b2.resize(this->output_layer_node_num);

int length = w1.size() * w1[0].size();

std::unique_ptr<T[]> data1(new T[length]);

T* p = data1.get();

file.read((char*)p, sizeof(T)* length);

file.read((char*)this->b1.data(), sizeof(T)* b1.size());

int count{ 0 };

for (int i = 0; i < this->w1.size(); ++i) {

for (int j = 0; j < this->w1[0].size(); ++j) {

w1[i][j] = p[count++];

}

}

length = w2.size() * w2[0].size();

std::unique_ptr<T[]> data2(new T[length]);

p = data2.get();

file.read((char*)p, sizeof(T)* length);

file.read((char*)this->b2.data(), sizeof(T)* b2.size());

count = 0;

for (int i = 0; i < this->w2.size(); ++i) {

for (int j = 0; j < this->w2[0].size(); ++j) {

w2[i][j] = p[count++];

}

}

file.close();

return 0;

}

template<typename T>

T SingleHiddenLayer<T>::predict(const T* data, int feature_length) const

{

CHECK(feature_length == this->feature_length);

CHECK(this->output_layer_node_num == 1);

CHECK(this->hidden_layer_activation_type >= 0 && this->hidden_layer_activation_type < 4);

CHECK(this->output_layer_activation_type >= 0 && this->output_layer_activation_type < 4);

std::vector<T> z1(this->hidden_layer_node_num, (T)0.), a1(this->hidden_layer_node_num, (T)0.),

z2(this->output_layer_node_num, (T)0.), a2(this->output_layer_node_num, (T)0.);

for (int p = 0; p < this->hidden_layer_node_num; ++p) {

for (int q = 0; q < this->feature_length; ++q) {

z1[p] += w1[p][q] * data[q];

}

z1[p] += b1[p];

a1[p] = calculate_activation_function(z1[p], this->hidden_layer_activation_type);

}

for (int p = 0; p < this->output_layer_node_num; ++p) {

for (int q = 0; q < this->hidden_layer_node_num; ++q) {

z2[p] += w2[p][q] * a1[q];

}

z2[p] += b2[p];

a2[p] = calculate_activation_function(z2[p], this->output_layer_activation_type);

}

return a2[0];

}

template<typename T>

T SingleHiddenLayer<T>::calculate_activation_function(T value, ActivationFunctionType type) const

{

T result{ 0 };

switch (type) {

case Sigmoid:

result = (T)1. / ((T)1. + std::exp(-value));

break;

case TanH:

result = std::tanh(value); // std::tanh avoids the overflow of exp() for large |value|

break;

case ReLU:

result = std::max((T)0., value);

break;

case Leaky_ReLU:

result = std::max((T)0.01*value, value);

break;

default:

CHECK(0);

break;

}

return result;

}

template<typename T>

T SingleHiddenLayer<T>::calcuate_activation_function_derivative(T value, ActivationFunctionType type) const

{

T result{ 0 };

switch (type) {

case Sigmoid: {

T tmp = calculate_activation_function(value, Sigmoid);

result = tmp * (1. - tmp);

}

break;

case TanH: {

T tmp = calculate_activation_function(value, TanH);

result = 1 - tmp * tmp;

}

break;

case ReLU:

result = value < 0. ? 0. : 1.;

break;

case Leaky_ReLU:

result = value < 0. ? 0.01 : 1.;

break;

default:

CHECK(0);

break;

}

return result;

}

template<typename T>

int SingleHiddenLayer<T>::store_model(const std::string& model) const

{

std::ofstream file;

file.open(model.c_str(), std::ios::binary);

if (!file.is_open()) {

fprintf(stderr, "open file fail: %s\n", model.c_str());

return -1;

}

file.write((char*)&this->hidden_layer_node_num, sizeof(int));

file.write((char*)&this->output_layer_node_num, sizeof(int));

int type = this->hidden_layer_activation_type;

file.write((char*)&type, sizeof(int));

type = this->output_layer_activation_type;

file.write((char*)&type, sizeof(int));

file.write((char*)&this->feature_length, sizeof(int));

int length = w1.size() * w1[0].size();

std::unique_ptr<T[]> data1(new T[length]);

T* p = data1.get();

for (int i = 0; i < w1.size(); ++i) {

for (int j = 0; j < w1[0].size(); ++j) {

p[i * w1[0].size() + j] = w1[i][j];

}

}

file.write((char*)p, sizeof(T)* length);

file.write((char*)this->b1.data(), sizeof(T)* this->b1.size());

length = w2.size() * w2[0].size();

std::unique_ptr<T[]> data2(new T[length]);

p = data2.get();

for (int i = 0; i < w2.size(); ++i) {

for (int j = 0; j < w2[0].size(); ++j) {

p[i * w2[0].size() + j] = w2[i][j];

}

}

file.write((char*)p, sizeof(T)* length);

file.write((char*)this->b2.data(), sizeof(T)* this->b2.size());

file.close();

return 0;

}

template class SingleHiddenLayer<float>;

template class SingleHiddenLayer<double>;

} // namespace ANN

#include "funset.hpp"

#include <cmath>

#include <cstdio>

#include <iostream>

#include <memory>

#include <string>

#include <vector>

#include "perceptron.hpp"

#include "BP.hpp"

#include "CNN.hpp"

#include "linear_regression.hpp"

#include "naive_bayes_classifier.hpp"

#include "logistic_regression.hpp"

#include "common.hpp"

#include "knn.hpp"

#include "decision_tree.hpp"

#include "pca.hpp"

#include <opencv2/opencv.hpp>

#include "logistic_regression2.hpp"

#include "single_hidden_layer.hpp"

// ====================== single hidden layer(two categories) ===============

int test_single_hidden_layer_train()

{

const std::string image_path{ "E:/GitCode/NN_Test/data/images/digit/handwriting_0_and_1/" };

cv::Mat data, labels;

for (int i = 1; i < 11; ++i) {

const std::vector<std::string> label{ "0_", "1_" };

for (const auto& value : label) {

std::string name = std::to_string(i);

name = image_path + value + name + ".jpg";

cv::Mat image = cv::imread(name, 0);

if (image.empty()) {

fprintf(stderr, "read image fail: %s\n", name.c_str());

return -1;

}

data.push_back(image.reshape(0, 1));

}

}

data.convertTo(data, CV_32F);

std::unique_ptr<float[]> tmp(new float[20]);

for (int i = 0; i < 20; ++i) {

if (i % 2 == 0) tmp[i] = 0.f;

else tmp[i] = 1.f;

}

labels = cv::Mat(20, 1, CV_32FC1, tmp.get());

ANN::SingleHiddenLayer<float> shl;

const float learning_rate{ 0.00001f };

const int iterations{ 10000 };

const int hidden_layer_node_num{ static_cast<int>(std::log2(data.cols)) };

const int hidden_layer_activation_type{ ANN::SingleHiddenLayer<float>::ReLU };

const int output_layer_activation_type{ ANN::SingleHiddenLayer<float>::Sigmoid };

int ret = shl.init((float*)data.data, (float*)labels.data, data.rows, data.cols,

hidden_layer_node_num, learning_rate, iterations, hidden_layer_activation_type, output_layer_activation_type);

if (ret != 0) {

fprintf(stderr, "single_hidden_layer(two categories) init fail: %d\n", ret);

return -1;

}

const std::string model{ "E:/GitCode/NN_Test/data/single_hidden_layer.model" };

ret = shl.train(model);

if (ret != 0) {

fprintf(stderr, "single_hidden_layer(two categories) train fail: %d\n", ret);

return -1;

}

return 0;

}

int test_single_hidden_layer_predict()

{

const std::string image_path{ "E:/GitCode/NN_Test/data/images/digit/handwriting_0_and_1/" };

cv::Mat data, labels, result;

for (int i = 11; i < 21; ++i) {

const std::vector<std::string> label{ "0_", "1_" };

for (const auto& value : label) {

std::string name = std::to_string(i);

name = image_path + value + name + ".jpg";

cv::Mat image = cv::imread(name, 0);

if (image.empty()) {

fprintf(stderr, "read image fail: %s\n", name.c_str());

return -1;

}

data.push_back(image.reshape(0, 1));

}

}

data.convertTo(data, CV_32F);

std::unique_ptr<int[]> tmp(new int[20]);

for (int i = 0; i < 20; ++i) {

if (i % 2 == 0) tmp[i] = 0;

else tmp[i] = 1;

}

labels = cv::Mat(20, 1, CV_32SC1, tmp.get());

CHECK(data.rows == labels.rows);

const std::string model{ "E:/GitCode/NN_Test/data/single_hidden_layer.model" };

ANN::SingleHiddenLayer<float> shl;

int ret = shl.load_model(model);

if (ret != 0) {

fprintf(stderr, "load single_hidden_layer(two categories) model fail: %d\n", ret);

return -1;

}

for (int i = 0; i < data.rows; ++i) {

float probability = shl.predict((float*)(data.row(i).data), data.cols);

fprintf(stdout, "probability: %.6f, ", probability);

if (probability > 0.5) fprintf(stdout, "predict result: 1, ");

else fprintf(stdout, "predict result: 0, ");

fprintf(stdout, "actual result: %d\n", ((int*)(labels.row(i).data))[0]);

}

return 0;

}

GitHub: https://github.com/fengbingchun/NN_Test
