题目:压缩感知重构算法之迭代硬阈值(Iterative Hard Thresholding,IHT)

本篇来介绍IHT重构算法。一般在压缩感知参考文献中,提到IHT时一般引用的都是文献【1】,但IHT实际上是在文献【2】中提出的。IHT并不是一种凸优化算法,它类似于OMP,是一种迭代算法,但它是由一个优化问题推导得到的。文献【1】和文献【2】的作者相同,署名单位为英国爱丁堡大学(University ofEdinburgh),第一作者的个人主页见参考文献【3】,从个人主页来看,作者现在已到英国南安普敦大学(University of Southampton),作者发表的论文均可以从其个人主页中下载。

文献【1】的贡献是当把IHT应用于压缩感知重构问题时进行了一个理论分析:

1、迭代硬阈值(IHT)的提出

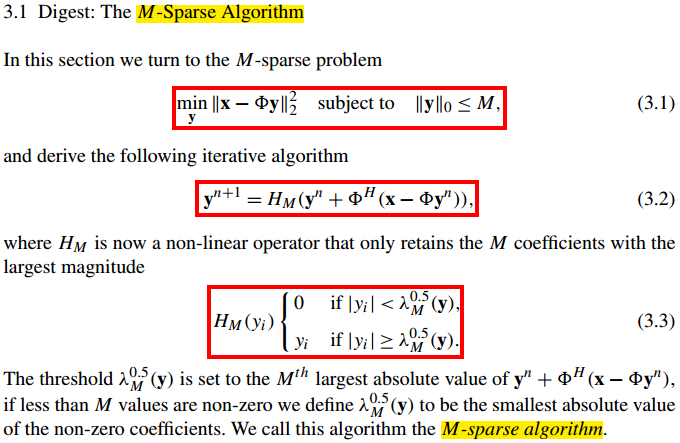

值得一提的是,IHT在文献【2】中提出时并不叫Iterative Hard Thresholding,而是M-Sparse Algorithm,如下图所示:

该算法是为了求解M-稀疏问题(M-sparse problem)式(3.1)而提出的,经过一番推导得到了迭代公式式(3.2),其中HM(·)的含义参见式(3.3)。

这里面最关键的问题是:式(3.2)这个迭代公式是如何推导得到的呢?

以下Step1~Step4推导过程可以参见本文的补充说明:迭代硬阈值(IHT)的补充说明,若要透彻地理解IHT,需要知道Majorization-Minimization优化框架和硬阈值(Hard Thresholding)函数。

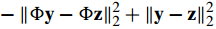

2、Step1:替代目标函数

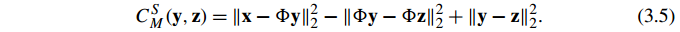

首先,将式(3.1)的目标函数用替代目标函数(surrogate objective fucntion)式(3.5)替换:

这里中

为什么式目标函数式(3.1)可以用式(3.5) 替代呢?这得往回看一下了……

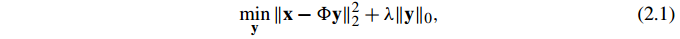

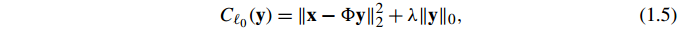

实际上,文献【2】分别针对两个优化问题进行了讨论,本篇主要是文献中的第二个优化问题,由于两个问题有一定的相似性,所以文中在推导第二个问题时进行了一些简化,下面简单回顾一些必要的有关第一个问题的内容,第一个优化问题是:

将目标函数定义为:

为了推导迭代公式(详见式(2.2)和式(2.3))式(1.5)用如下替代目标函数代替:

这里注意波浪下划线中提到的“[29]”(参见文献【4】),surrogate objective function的思想来自这篇文件。然后注意对Φ的约束(第一个红框),之后以会有这个约束,个人认为是为了使式(2.5)后半部分大于等于零,即为了使

大于等于零(当y=z时这部分等于零)。由此自然就有了式(2.5)与式(1.5)两个目标函数的关系(第二个红框),这也很容易理解,将y=z代入式(2.5)自然可得这个关系。

到此应该明白式(2.5)为什么可以替代式(1.5)了吧……

而我们用式(3.5)替代目标函数

的道理是一模一样的。

补充一点:有关对||Φ||2<1的约束文献【2】中有一处提到了如下描述:

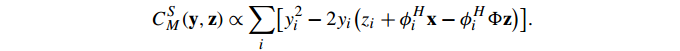

3、Step2:替代目标函数变形

接下来,式(3.5)进行了变形:

这个式子是怎么来的呢?我们对式(3.5)进行一下推导:

这里,后面三项2范数的平方是与y无关的项,因此可视为常量,若对参数y求最优化时这三项并不影响优化结果,可略去,因此就有了变形的结果,符号“∝”表示成正比例。

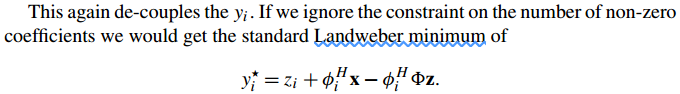

4、Step3:极值点的获得

接下来文献【2】直接给出了极值点:

注意文中提到了“landweder”,搜索一下可知经常出现的是“landweder迭代”,这个暂且不提。那么极值点是如何推导得到的呢?其实就是一个简单的配方,中学生就会的:

令

当

5、Step4:迭代公式的获得

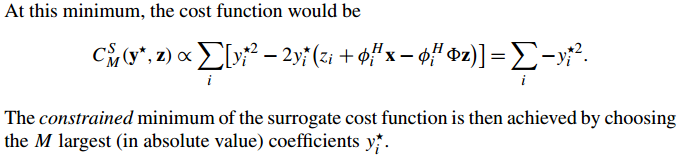

极值点得到了,替代目标函数的极小值也得到了:

那么如何得到迭代公式式(3.2)呢?这时要注意,推导过程中有一个约束条件一直没管,即式(3.1)中的约束条件:

也就是向量y的稀疏度不大于M。综合起来说,替代函数的最小值是

那么怎么使这个最小值在向量y的稀疏度不大于M的约束下最小呢,显然是保留最大的M项(因为是平方,所以要取绝对值absolute value),剩余的置零(注意这里有个负号,所以要保留最大的M项)。

至此,我们就得到了迭代公式式(3.2)。

6、IHT算法的MATLAB代码

这里一共给出三个版本的IHT实现:

第一个版本:

在作者的主页有官方版IHT算法MATLAB代码,但有些复杂,这里给出一个简化版的IHT代码,方便理解:

function [ y ] = IHT_Basic( x,Phi,M,mu,epsilon,loopmax )

%IHT_Basic Summary of this function goes here

%Version: 1.0 written by jbb0523 @2016-07-30

%Reference:Blumensath T, Davies M E. Iterative Thresholding for Sparse Approximations[J].

%Journal of Fourier Analysis & Applications, 2008, 14(5):629-654.

%(Available at: http://link.springer.com/article/10.1007%2Fs00041-008-9035-z)

% Detailed explanation goes here

if nargin < 6

loopmax = 3000;

end

if nargin < 5

epsilon = 1e-3;

end

if nargin < 4

mu = 1;

end

[x_rows,x_columns] = size(x);

if x_rows<x_columns

x = x';%x should be a column vector

end

n = size(Phi,2);

y = zeros(n,1);%Initialize y=0

loop = 0;

while(norm(x-Phi*y)>epsilon && loop < loopmax)

y = y + Phi'*(x-Phi*y)*mu;%update y

%the following two lines of code realize functionality of H_M(.)

%1st: permute absolute value of y in descending order

[ysorted inds] = sort(abs(y), 'descend');

%2nd: set all but M largest coordinates to zeros

y(inds(M+1:n)) = 0;

loop = loop + 1;

end

end 第二个版本:(作者给出的官方版本)

文件:hard_l0_Mterm.m(\sparsify_0_5\HardLab)

链接:http://www.personal.soton.ac.uk/tb1m08/sparsify/sparsify_0_5.zip

function [s, err_mse, iter_time]=hard_l0_Mterm(x,A,m,M,varargin)

% hard_l0_Mterm: Hard thresholding algorithm that keeps exactly M elements

% in each iteration.

%

% This algorithm has certain performance guarantees as described in [1],

% [2] and [3].

%

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Usage

%

% [s, err_mse, iter_time]=hard_l0_Mterm(x,P,m,M,'option_name','option_value')

%

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

%

% Input

%

% Mandatory:

% x Observation vector to be decomposed

% P Either:

% 1) An nxm matrix (n must be dimension of x)

% 2) A function handle (type "help function_format"

% for more information)

% Also requires specification of P_trans option.

% 3) An object handle (type "help object_format" for

% more information)

% m length of s

% M non-zero elements to keep in each iteration

%

% Possible additional options:

% (specify as many as you want using 'option_name','option_value' pairs)

% See below for explanation of options:

%__________________________________________________________________________

% option_name | available option_values | default

%--------------------------------------------------------------------------

% stopTol | number (see below) | 1e-16

% P_trans | function_handle (see below) |

% maxIter | positive integer (see below) | n^2

% verbose | true, false | false

% start_val | vector of length m | zeros

% step_size | number | 0 (auto)

%

% stopping criteria used : (OldRMS-NewRMS)/RMS(x) < stopTol

%

% stopTol: Value for stopping criterion.

%

% P_trans: If P is a function handle, then P_trans has to be specified and

% must be a function handle.

%

% maxIter: Maximum number of allowed iterations.

%

% verbose: Logical value to allow algorithm progress to be displayed.

%

% start_val: Allows algorithms to start from partial solution.

%

%

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

%

% Outputs

%

% s Solution vector

% err_mse Vector containing mse of approximation error for each

% iteration

% iter_time Vector containing computation times for each iteration

%

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

%

% Description

%

% Implements the M-sparse algorithm described in [1], [2] and [3].

% This algorithm takes a gradient step and then thresholds to only retain

% M non-zero elements. It allows the step-size to be calculated

% automatically as described in [3] and is therefore now independent from

% a rescaling of P.

%

%

% References

% [1] T. Blumensath and M.E. Davies, "Iterative Thresholding for Sparse

% Approximations", submitted, 2007

% [2] T. Blumensath and M. Davies; "Iterative Hard Thresholding for

% Compressed Sensing" to appear Applied and Computational Harmonic

% Analysis

% [3] T. Blumensath and M. Davies; "A modified Iterative Hard

% Thresholding algorithm with guaranteed performance and stability"

% in preparation (title may change)

% See Also

% hard_l0_reg

%

% Copyright (c) 2007 Thomas Blumensath

%

% The University of Edinburgh

% Email: thomas.blumensath@ed.ac.uk

% Comments and bug reports welcome

%

% This file is part of sparsity Version 0.4

% Created: April 2007

% Modified January 2009

%

% Part of this toolbox was developed with the support of EPSRC Grant

% D000246/1

%

% Please read COPYRIGHT.m for terms and conditions.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Default values and initialisation

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

[n1 n2]=size(x);

if n2 == 1

n=n1;

elseif n1 == 1

x=x';

n=n2;

else

error('x must be a vector.');

end

sigsize = x'*x/n;

oldERR = sigsize;

err_mse = [];

iter_time = [];

STOPTOL = 1e-16;

MAXITER = n^2;

verbose = false;

initial_given=0;

s_initial = zeros(m,1);

MU = 0;

if verbose

display('Initialising...')

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Output variables

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

switch nargout

case 3

comp_err=true;

comp_time=true;

case 2

comp_err=true;

comp_time=false;

case 1

comp_err=false;

comp_time=false;

case 0

error('Please assign output variable.')

otherwise

error('Too many output arguments specified')

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Look through options

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Put option into nice format

Options={};

OS=nargin-4;

c=1;

for i=1:OS

if isa(varargin{i},'cell')

CellSize=length(varargin{i});

ThisCell=varargin{i};

for j=1:CellSize

Options{c}=ThisCell{j};

c=c+1;

end

else

Options{c}=varargin{i};

c=c+1;

end

end

OS=length(Options);

if rem(OS,2)

error('Something is wrong with argument name and argument value pairs.')

end

for i=1:2:OS

switch Options{i}

case {'stopTol'}

if isa(Options{i+1},'numeric') ; STOPTOL = Options{i+1};

else error('stopTol must be number. Exiting.'); end

case {'P_trans'}

if isa(Options{i+1},'function_handle'); Pt = Options{i+1};

else error('P_trans must be function _handle. Exiting.'); end

case {'maxIter'}

if isa(Options{i+1},'numeric'); MAXITER = Options{i+1};

else error('maxIter must be a number. Exiting.'); end

case {'verbose'}

if isa(Options{i+1},'logical'); verbose = Options{i+1};

else error('verbose must be a logical. Exiting.'); end

case {'start_val'}

if isa(Options{i+1},'numeric') && length(Options{i+1}) == m ;

s_initial = Options{i+1};

initial_given=1;

else error('start_val must be a vector of length m. Exiting.'); end

case {'step_size'}

if isa(Options{i+1},'numeric') && (Options{i+1}) > 0 ;

MU = Options{i+1};

else error('Stepsize must be between a positive number. Exiting.'); end

otherwise

error('Unrecognised option. Exiting.')

end

end

if nargout >=2

err_mse = zeros(MAXITER,1);

end

if nargout ==3

iter_time = zeros(MAXITER,1);

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Make P and Pt functions

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if isa(A,'float') P =@(z) A*z; Pt =@(z) A'*z;

elseif isobject(A) P =@(z) A*z; Pt =@(z) A'*z;

elseif isa(A,'function_handle')

try

if isa(Pt,'function_handle'); P=A;

else error('If P is a function handle, Pt also needs to be a function handle. Exiting.'); end

catch error('If P is a function handle, Pt needs to be specified. Exiting.'); end

else error('P is of unsupported type. Use matrix, function_handle or object. Exiting.'); end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Do we start from zero or not?

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if initial_given ==1;

if length(find(s_initial)) > M

display('Initial vector has more than M non-zero elements. Keeping only M largest.')

end

s = s_initial;

[ssort sortind] = sort(abs(s),'descend');

s(sortind(M+1:end)) = 0;

Ps = P(s);

Residual = x-Ps;

oldERR = Residual'*Residual/n;

else

s_initial = zeros(m,1);

Residual = x;

s = s_initial;

Ps = zeros(n,1);

oldERR = sigsize;

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Random Check to see if dictionary norm is below 1

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

x_test=randn(m,1);

x_test=x_test/norm(x_test);

nP=norm(P(x_test));

if abs(MU*nP)>1;

display('WARNING! Algorithm likely to become unstable.')

display('Use smaller step-size or || P ||_2 < 1.')

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Main algorithm

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if verbose

display('Main iterations...')

end

tic

t=0;

done = 0;

iter=1;

while ~done

if MU == 0

%Calculate optimal step size and do line search

olds = s;

oldPs = Ps;

IND = s~=0;

d = Pt(Residual);

% If the current vector is zero, we take the largest elements in d

if sum(IND)==0

[dsort sortdind] = sort(abs(d),'descend');

IND(sortdind(1:M)) = 1;

end

id = (IND.*d);

Pd = P(id);

mu = id'*id/(Pd'*Pd);

s = olds + mu * d;

[ssort sortind] = sort(abs(s),'descend');

s(sortind(M+1:end)) = 0;

Ps = P(s);

% Calculate step-size requirement

omega = (norm(s-olds)/norm(Ps-oldPs))^2;

% As long as the support changes and mu > omega, we decrease mu

while mu > (0.99)*omega && sum(xor(IND,s~=0))~=0 && sum(IND)~=0

% display(['decreasing mu'])

% We use a simple line search, halving mu in each step

mu = mu/2;

s = olds + mu * d;

[ssort sortind] = sort(abs(s),'descend');

s(sortind(M+1:end)) = 0;

Ps = P(s);

% Calculate step-size requirement

omega = (norm(s-olds)/norm(Ps-oldPs))^2;

end

else

% Use fixed step size

s = s + MU * Pt(Residual);

[ssort sortind] = sort(abs(s),'descend');

s(sortind(M+1:end)) = 0;

Ps = P(s);

end

Residual = x-Ps;

ERR=Residual'*Residual/n;

if comp_err

err_mse(iter)=ERR;

end

if comp_time

iter_time(iter)=toc;

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Are we done yet?

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if comp_err && iter >=2

if ((err_mse(iter-1)-err_mse(iter))/sigsize<STOPTOL);

if verbose

display(['Stopping. Approximation error changed less than ' num2str(STOPTOL)])

end

done = 1;

elseif verbose && toc-t>10

display(sprintf('Iteration %i. --- %i mse change',iter ,(err_mse(iter-1)-err_mse(iter))/sigsize))

t=toc;

end

else

if ((oldERR - ERR)/sigsize < STOPTOL) && iter >=2;

if verbose

display(['Stopping. Approximation error changed less than ' num2str(STOPTOL)])

end

done = 1;

elseif verbose && toc-t>10

display(sprintf('Iteration %i. --- %i mse change',iter ,(oldERR - ERR)/sigsize))

t=toc;

end

end

% Also stop if residual gets too small or maxIter reached

if comp_err

if err_mse(iter)<1e-16

display('Stopping. Exact signal representation found!')

done=1;

end

elseif iter>1

if ERR<1e-16

display('Stopping. Exact signal representation found!')

done=1;

end

end

if iter >= MAXITER

display('Stopping. Maximum number of iterations reached!')

done = 1;

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% If not done, take another round

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if ~done

iter=iter+1;

oldERR=ERR;

end

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Only return as many elements as iterations

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

if nargout >=2

err_mse = err_mse(1:iter);

end

if nargout ==3

iter_time = iter_time(1:iter);

end

if verbose

display('Done')

end第三个版本:

文件:Demo_CS_IHT.m(部分)

链接:http://www.pudn.com/downloads518/sourcecode/math/detail2151378.html

function hat_x=cs_iht(y,T_Mat,m)

% y=T_Mat*x, T_Mat is n-by-m

% y - measurements

% T_Mat - combination of random matrix and sparse representation basis

% m - size of the original signal

% the sparsity is length(y)/4

hat_x_tp=zeros(m,1); % initialization with the size of original

s=floor(length(y)/4); % sparsity

u=0.5; % impact factor

% T_Mat=T_Mat/sqrt(sum(sum(T_Mat.^2))); % normalizae the whole matrix

for times=1:s

x_increase=T_Mat'*(y-T_Mat*hat_x_tp);

hat_x=hat_x_tp+u*x_increase;

[val,pos]=sort((hat_x),'descend'); % why? worse performance with abs()

hat_x(pos(s+1:end))=0; % thresholding, keeping the larges s elements

hat_x_tp=hat_x; % update

end7、单次重构代码

%压缩感知重构算法测试

clear all;close all;clc;

M = 64;%观测值个数

N = 256;%信号x的长度

K = 10;%信号x的稀疏度

Index_K = randperm(N);

x = zeros(N,1);

x(Index_K(1:K)) = 5*randn(K,1);%x为K稀疏的,且位置是随机的

Psi = eye(N);%x本身是稀疏的,定义稀疏矩阵为单位阵x=Psi*theta

Phi = randn(M,N);%测量矩阵为高斯矩阵

Phi = orth(Phi')';

A = Phi * Psi;%传感矩阵

% sigma = 0.005;

% e = sigma*randn(M,1);

% y = Phi * x + e;%得到观测向量y

y = Phi * x;%得到观测向量y

%% 恢复重构信号x

tic

theta = IHT_Basic(y,A,K);

% theta = cs_iht(y,A,size(A,2));

% theta = hard_l0_Mterm(y,A,size(A,2),round(1.5*K),'verbose',true);

x_r = Psi * theta;% x=Psi * theta

toc

%% 绘图

figure;

plot(x_r,'k.-');%绘出x的恢复信号

hold on;

plot(x,'r');%绘出原信号x

hold off;

legend('Recovery','Original')

fprintf('\n恢复残差:');

norm(x_r-x)%恢复残差 这里就不给出重构结果了,给出仿真结论:本人编的IHT基本版能够正常工作,但偶尔会重构失败;第二个版本hard_l0_Mterm.m重构效果很好;第三个版本Demo_CS_IHT.m重构效果很差,估计是作者疑问(why? worse performance with abs()),没有加abs取绝对值的原因吧……

8、结束语

8.1有关算法的名字

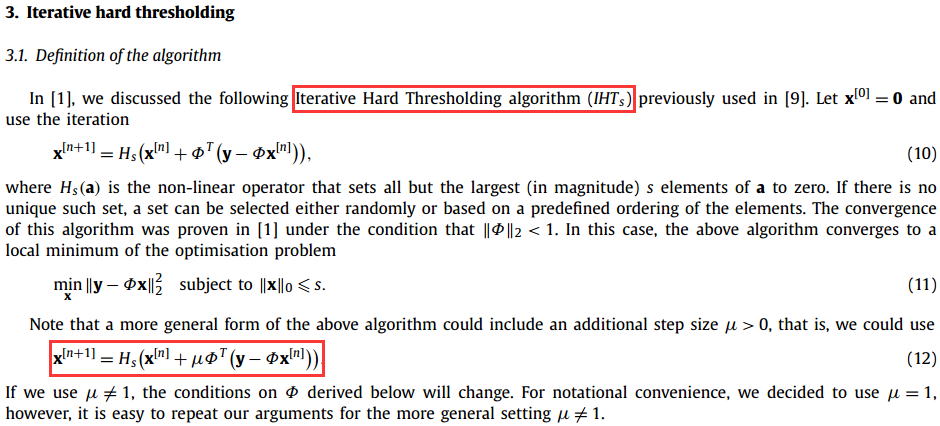

值得注意的是,在文献【2】中将式(2.2)称为iterative hard-thresholding algorithm,而将式(3.2)称为M-sparse algorithm,在文献【1】中又将式(3.2)称为Iterative Hard Thresholding algorithm (IHTs),一般简称IHT的较多,多余的s指的是s稀疏。可见算法的名称是也是一不断完善的过程啊……

8.2与GraDeS算法的关系

如果你学习过GraDeS算法(参见http://blog.csdn.net/jbb0523/article/details/52059296),然后再学习本算法,是不是有一种似曾相似的感觉?

没错,这两个算法的迭代公式几乎是一样的,尤其是文献【1】中的式(12)(如上图第二个红框)进一步拓展了该算法的定义。这个就跟CoSaMP与SP两个算法一样,在GraDeS的提出文献【5】中开始部分还提到了IHT,但后面就没提了,不知道作者是怎么看待这个问题的。如果非说二者有区别,那就是GraDeS的参数γ=1+δ2s,且δ2s<1/3。

所以,有想法得赶紧写成论文发表出来,否则被抢了先机那就……

8.3重构效果问题

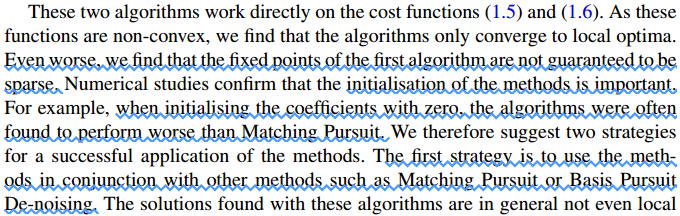

另外,在GraDeS算法中提到该算法的重构效果不好,这里注意文献【2】中的一段话:

也就是说,IHT作者也意识到了该种算法的问题,并提出了两种应用策略(two strategies for asuccessful application of the methods)。

8.4Landweber迭代

在网上搜索“Landweber迭代”时找到了一段程序[6]:

function [x,k]=Landweber(A,b,x0)

alfa=1/sum(diag(A*A'));

k=1;

L=200;

x=x0;

while k<L

x1=x;

x=x+alfa*A'*(b-A*x);

if norm(b-A*x)/norm(b)<0.005

break;

elseif norm(x1-x)/norm(x)<0.001

break;

end

k=k+1;

end注意该程序的迭代部分“x=x+alfa*A'*(b-A*x);”,除了多了一些alfa系数外,这跟IHT不是基本一样么?或者说与GraDeS有什么区别?

有关LandWeber迭代可参见文献:“Landweber L. An iteration formula for Fredholm integral equations of the first kind[J]. American journal of mathematics, 1951, 73(3): 615-624.”,此处不再多述。

8.5改进算法

作者后来又提出了两个关于IHT的改进算法,分别是RIHT(Normalized IHT)[7]和AIHT(Accelerated IHT)[8]。

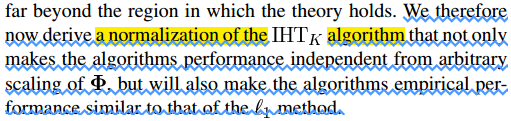

提出RIHT主要是由于IHT有一些缺点[7]:

新算法RIHT将会有如下优点:

之所以作者提供的软件包(第二个版本IHT)重构效果更好是由于最新版的hard_l0_Mterm.m (\sparsify_0_5\HardLab)程序中已经更新成了RIHT。

RIHT的算法流程如下:

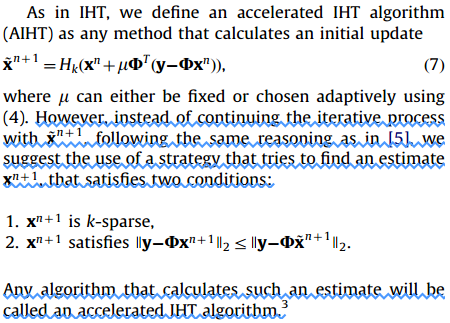

将IHT改进为AIHT后会有如下优点[8]:

值得注意的是,AIHT应该是一类算法的总称(虽然作者只阐述了两种实现策略),这个类似于FFT是所有DFT快速算法的总称:

8.6稀疏度对IHT的影响

自己可以试一下,IHT输入参数中的稀疏度并不是很关键,若实际稀疏度为K,则稀疏度这个输入参数只要不小于K就可以了,重构效果都挺不错的,比如第三个版本的IHT程序,作者直接将稀疏度定义为信号y长度的四分之一。

8.7作者去向?

细心的人会发现,文献【8】的暑名单位为剑桥大学(University of Oxford),并不是作者主页所在的南安普敦大学(University of Southampton),在文献【8】的最后南提到:

Previous position?难道作者跳到Oxford了?

9、参考文献

【1】Blumensath T, Davies M E.Iterative hard thresholding for compressed sensing[J]. Applied & Computational HarmonicAnalysis, 2008, 27(3):265-274. (Available at:http://www.sciencedirect.com/science/article/pii/S1063520309000384)

【2】Blumensath T, Davies M E.Iterative Thresholding for Sparse Approximations[J]. Journal of Fourier Analysis & Applications,2008, 14(5):629-654. (Available at:http://link.springer.com/article/10.1007%2Fs00041-008-9035-z)

【3】Homepageof Blumensath T :http://www.personal.soton.ac.uk/tb1m08/index.html

【4】Lange, K., Hunter, D.R., Yang, I.. OptimizationTransfer Using Surrogate Objective Functions[J]. Journal of Computational &Graphical Statistics, 2000, 9(1):1-20. (Available at: http://sites.stat.psu.edu/~dhunter/papers/ot.pdf)

【5】GargR, Khandekar R. Gradient descent with sparsification: an iterative algorithmfor sparse recovery with restricted isometry property[C]//Proceedings of the26th Annual InternationalConference on Machine Learning. ACM, 2009: 337-344

【6】shasying2. landweber迭代方法.http://download.csdn.net/detail/shasying2/5092828

【7】Blumensath T, Davies M E.Normalized Iterative Hard Thresholding: Guaranteed Stability and Performance[J]. IEEE Journal of Selected Topics in Signal Processing, 2010,4(2):298-309.

【8】Blumensath T. Accelerated iterative hard thresholding[J]. Signal Processing, 2012, 92(3):752-756.

576

576

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?