Source: http://blog.csdn.net/myarrow/article/details/7066007

Android StagefrightPlayer

1. Curiosity about StagefrightPlayer

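The previous analysis covered how StagefrightPlayer is created: Android::createPlayer creates a different player depending on the type of the URL. StagefrightPlayer is a fairly classic player shipped with Android. Personally I don't think much of it, because it supports fewer codecs and parsers than ffmpeg. Then there is OpenCORE, which is extraordinarily complex. It uses a datapath approach similar to the familiar GStreamer. I don't understand why people make simple things so complicated.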
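Choosing a player "by URL type" amounts to a small dispatch on the URL string. The following is a minimal sketch, not the MediaPlayerService code. The PlayerType values and choosePlayerType are hypothetical names. The only rule shown, sending HTTP(S) URLs that mention `.m3u8` to a different player and everything else to Stagefright, is a simplifying assumption made for illustration.

```cpp
#include <cassert>
#include <cctype>
#include <string>

// Hypothetical player kinds; the real enum in MediaPlayerService differs.
enum class PlayerType { kStagefright, kNuPlayer };

// Returns true if `s` starts with `prefix`, ignoring ASCII case.
static bool startsWithNoCase(const std::string& s, const std::string& prefix) {
    if (s.size() < prefix.size()) return false;
    for (size_t i = 0; i < prefix.size(); ++i) {
        if (std::tolower(static_cast<unsigned char>(s[i])) !=
            std::tolower(static_cast<unsigned char>(prefix[i]))) {
            return false;
        }
    }
    return true;
}

// Assumed, simplified rule: decide the player from the URL string alone.
PlayerType choosePlayerType(const std::string& url) {
    bool isHttp = startsWithNoCase(url, "http://") || startsWithNoCase(url, "https://");
    if (isHttp && url.find(".m3u8") != std::string::npos) {
        return PlayerType::kNuPlayer;   // assumed: HTTP Live Streaming playlists
    }
    return PlayerType::kStagefright;    // default: local files, plain HTTP, ...
}
```

For example, `choosePlayerType("/sdcard/a.ts")` yields `PlayerType::kStagefright`. Note that the file contents play no part in this decision.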

2. What exactly is StagefrightPlayer?

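A closer look at the code shows that it is a shell company with a single employee doing all the work: AwesomePlayer *mPlayer, which is created together with the StagefrightPlayer. Every StagefrightPlayer interface is implemented by simply calling the corresponding AwesomePlayer interface. StagefrightPlayer is therefore only a front man and nothing more. AwesomePlayer is what we really need to study.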
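The "front man" relationship is plain delegation. Here is a minimal sketch in which hypothetical Engine and FacadePlayer classes stand in for AwesomePlayer and StagefrightPlayer. The wrapper creates its worker in its own constructor and forwards each call unchanged. Using std::unique_ptr for ownership is a choice made here; the Android class holds a raw `AwesomePlayer *`.

```cpp
#include <cassert>
#include <memory>

// Hypothetical stand-in for AwesomePlayer: the class that does the real work.
class Engine {
public:
    int play()  { playing_ = true;  return 0; }
    int pause() { playing_ = false; return 0; }
    bool isPlaying() const { return playing_; }
private:
    bool playing_ = false;
};

// Hypothetical stand-in for StagefrightPlayer: owns one Engine, created
// together with the wrapper, and forwards every call to it.
class FacadePlayer {
public:
    FacadePlayer() : mPlayer(std::make_unique<Engine>()) {}
    int start() { return mPlayer->play(); }
    int pause() { return mPlayer->pause(); }
    bool isPlaying() const { return mPlayer->isPlaying(); }
private:
    std::unique_ptr<Engine> mPlayer;
};
```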

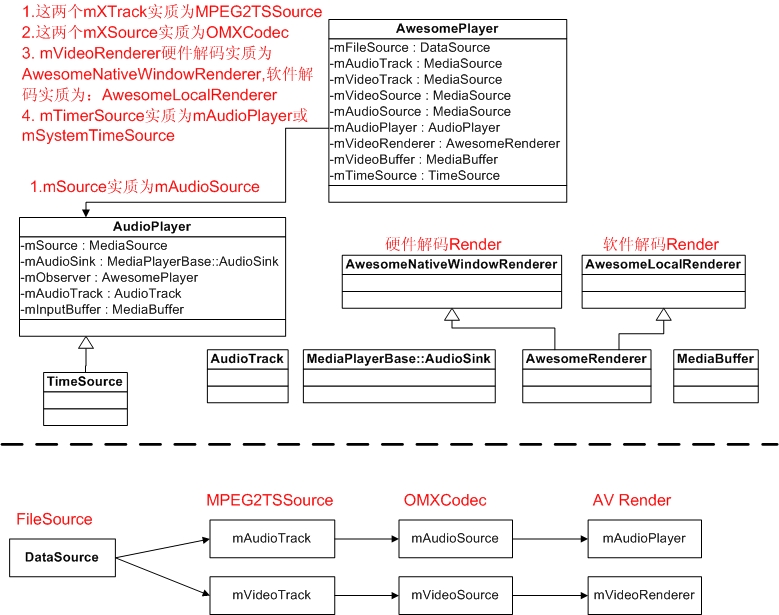

3. What does AwesomePlayer contain?

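However magical it looks, isn't it just doing A/V playback? My own MyPlayer, built directly on the driver, handles TS playback nicely in a little over 1000 lines of code. For the sake of openness, though, Google made it hard to grasp at first glance. Since I want to make a living with Android, I have no choice but to read through this large pile of code with almost no documentation or comments.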

An A/V player certainly has the following modules:

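1) Data source (e.g. TS stream, MP4...)

2) Demux (separating audio and video)

3) Audio and video decoding

4) Audio playback and video playback

5) A/V synchronization

6) The overall workflow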

AwesomePlayer is the boss that organizes these six employees. The sections below analyze each of them in turn.

First, let's look at its key employees:

```cpp
// Events
sp<TimedEventQueue::Event> mVideoEvent;
sp<TimedEventQueue::Event> mStreamDoneEvent;
sp<TimedEventQueue::Event> mBufferingEvent;
sp<TimedEventQueue::Event> mCheckAudioStatusEvent;
sp<TimedEventQueue::Event> mVideoLagEvent;

// Audio Source and Decoder
void setAudioSource(sp<MediaSource> source);
status_t initAudioDecoder();

// Video Source and Decoder
void setVideoSource(sp<MediaSource> source);
status_t initVideoDecoder(uint32_t flags = 0);

// Data source
sp<DataSource> mFileSource;

// Video render
sp<MediaSource> mVideoTrack;
sp<MediaSource> mVideoSource;
sp<AwesomeRenderer> mVideoRenderer;

// Audio render
sp<MediaSource> mAudioTrack;
sp<MediaSource> mAudioSource;
AudioPlayer *mAudioPlayer;
```

Starting AwesomePlayer

This follows the previous article on AwesomePlayer's preparation work. It describes what AwesomePlayer does when Java calls mp.start();...

1. AwesomePlayer::play_l

The call flow is as follows:

StagefrightPlayer::start->

AwesomePlayer::play->

AwesomePlayer::play_l

The main code of AwesomePlayer::play_l is as follows:


```cpp
status_t AwesomePlayer::play_l() {
    modifyFlags(SEEK_PREVIEW, CLEAR);
    modifyFlags(PLAYING, SET);
    modifyFlags(FIRST_FRAME, SET);

    // Create the AudioPlayer
    if (mAudioSource != NULL) {
        if (mAudioPlayer == NULL) {
            if (mAudioSink != NULL) {
                mAudioPlayer = new AudioPlayer(mAudioSink, this);
                mAudioPlayer->setSource(mAudioSource);
                mTimeSource = mAudioPlayer;

                // If there was a seek request before we ever started,
                // honor the request now.
                // Make sure to do this before starting the audio player
                // to avoid a race condition.
                seekAudioIfNecessary_l();
            }
        }

        CHECK(!(mFlags & AUDIO_RUNNING));

        // If only audio is being played, start the AudioPlayer
        if (mVideoSource == NULL) {
            // We don't want to post an error notification at this point,
            // the error returned from MediaPlayer::start() will suffice.
            status_t err = startAudioPlayer_l(
                    false /* sendErrorNotification */);

            if (err != OK) {
                delete mAudioPlayer;
                mAudioPlayer = NULL;
                modifyFlags((PLAYING | FIRST_FRAME), CLEAR);

                if (mDecryptHandle != NULL) {
                    mDrmManagerClient->setPlaybackStatus(
                            mDecryptHandle, Playback::STOP, 0);
                }

                return err;
            }
        }
    }

    if (mTimeSource == NULL && mAudioPlayer == NULL) {
        mTimeSource = &mSystemTimeSource;
    }

    // Start video playback
    if (mVideoSource != NULL) {
        // Kick off video playback
        postVideoEvent_l();

        if (mAudioSource != NULL && mVideoSource != NULL) {
            postVideoLagEvent_l();
        }
    }

    ...

    return OK;
}
```
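play_l() settles the clock once. When an AudioSink is available, the AudioPlayer becomes mTimeSource; otherwise mSystemTimeSource is used. Below is a minimal sketch of just that decision. Clock, AudioClock, SystemClock and selectTimeSource are hypothetical names, not Stagefright's TimeSource classes. Deriving the audio clock from a frame count at an assumed 44.1 kHz is a simplification.

```cpp
#include <cassert>
#include <chrono>
#include <cstdint>

// Minimal clock interface, loosely modelled on the idea of a time source.
struct Clock {
    virtual ~Clock() = default;
    virtual int64_t realTimeUs() = 0;
};

// Assumed system clock: microseconds elapsed since this object was created.
struct SystemClock : Clock {
    std::chrono::steady_clock::time_point start = std::chrono::steady_clock::now();
    int64_t realTimeUs() override {
        return std::chrono::duration_cast<std::chrono::microseconds>(
                   std::chrono::steady_clock::now() - start).count();
    }
};

// Assumed audio clock: playback position derived from frames already played.
struct AudioClock : Clock {
    int64_t framesPlayed = 0;
    int64_t sampleRate = 44100;
    int64_t realTimeUs() override { return framesPlayed * 1000000 / sampleRate; }
};

// Same rule as play_l(): prefer the audio clock, else fall back to system time.
Clock* selectTimeSource(AudioClock* audio, SystemClock* system) {
    return audio != nullptr ? static_cast<Clock*>(audio) : static_cast<Clock*>(system);
}
```

Driving video from the audio clock means video frames chase the audio position rather than the reverse. That matches the way onVideoEvent later checks timestamps against mTimeSource.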

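1.1 Creating the AudioPlayer

After the AudioPlayer is created, if only audio is being played, AwesomePlayer::startAudioPlayer_l is called to start audio playback. Starting audio playback mainly performs the following steps: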

AudioPlayer::start->

mSource->start

mSource->read

mAudioSink->open

mAudioSink->start

1.2 Starting video playback

AwesomePlayer::postVideoEvent_l is called to start video playback. Its code is as follows:


```cpp
void AwesomePlayer::postVideoEvent_l(int64_t delayUs) {
    if (mVideoEventPending) {
        return;
    }

    mVideoEventPending = true;
    mQueue.postEventWithDelay(mVideoEvent, delayUs < 0 ? 10000 : delayUs);
}
```

As explained earlier, mQueue.postEventWithDelay posts an event to the queue, and the event's fire function is eventually executed. These events are initialized in AwesomePlayer::AwesomePlayer:


```cpp
AwesomePlayer::AwesomePlayer()
    : mQueueStarted(false),
      mUIDValid(false),
      mTimeSource(NULL),
      mVideoRendererIsPreview(false),
      mAudioPlayer(NULL),
      mDisplayWidth(0),
      mDisplayHeight(0),
      mFlags(0),
      mExtractorFlags(0),
      mVideoBuffer(NULL),
      mDecryptHandle(NULL),
      mLastVideoTimeUs(-1),
      mTextPlayer(NULL) {
    CHECK_EQ(mClient.connect(), (status_t)OK);

    DataSource::RegisterDefaultSniffers();

    mVideoEvent = new AwesomeEvent(this, &AwesomePlayer::onVideoEvent);
    mVideoEventPending = false;
    mStreamDoneEvent = new AwesomeEvent(this, &AwesomePlayer::onStreamDone);
    mStreamDoneEventPending = false;
    mBufferingEvent = new AwesomeEvent(this, &AwesomePlayer::onBufferingUpdate);
    mBufferingEventPending = false;
    mVideoLagEvent = new AwesomeEvent(this, &AwesomePlayer::onVideoLagUpdate);
    mVideoEventPending = false;

    mCheckAudioStatusEvent = new AwesomeEvent(
            this, &AwesomePlayer::onCheckAudioStatus);

    mAudioStatusEventPending = false;

    reset();
}
```

Now it is clear: for mVideoEvent, AwesomePlayer::onVideoEvent is what ultimately runs. It is layer upon layer, so let's keep digging...
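An AwesomeEvent binds the player object to one of its member functions, so calling fire() on mVideoEvent lands in onVideoEvent. The pattern can be sketched as follows. Event, MemberEvent and DemoPlayer are hypothetical names, and the real TimedEventQueue::Event::fire() has a different signature (extra parameters are omitted here).

```cpp
#include <cassert>

// Abstract event: the queue only knows how to call fire().
struct Event {
    virtual ~Event() = default;
    virtual void fire() = 0;
};

// Binds an object and one of its member functions, in the spirit of AwesomeEvent.
template <typename T>
struct MemberEvent : Event {
    MemberEvent(T* target, void (T::*method)()) : target(target), method(method) {}
    void fire() override { (target->*method)(); }  // dispatch to the bound handler
    T* target;
    void (T::*method)();
};

// Hypothetical player with an onVideoEvent handler.
struct DemoPlayer {
    int videoEvents = 0;
    void onVideoEvent() { ++videoEvents; }
};
```

With this pattern, the queue stays generic: it holds base-class Event pointers, and each player decides which method a given event should run.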

1.2.1 AwesomePlayer::onVideoEvent

The simplified code is as follows:


```cpp
void AwesomePlayer::postVideoEvent_l(int64_t delayUs)
{
    mQueue.postEventWithDelay(mVideoEvent, delayUs);
}

void AwesomePlayer::onVideoEvent()
{
    mVideoSource->read(&mVideoBuffer, &options);  // get the decoded YUV data
    // [Check Timestamp]                           // A/V synchronization
    mVideoRenderer->render(mVideoBuffer);         // display the decoded YUV data
    postVideoEvent_l();                           // schedule the next frame
}
```
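The [Check Timestamp] step compares each decoded frame's timestamp with the current playback clock. The sketch below shows one plausible decision rule. It assumes a 40 ms lateness limit (drop the frame) and a 10 ms earliness limit (re-post the event and check again later). These thresholds and the checkTimestamp/FrameAction names are illustrative assumptions, not code quoted from AwesomePlayer.

```cpp
#include <cassert>
#include <cstdint>

enum class FrameAction { kRender, kDrop, kWait };

// Decide what to do with a frame whose presentation time is frameTimeUs,
// given the current playback clock nowUs (both in microseconds).
FrameAction checkTimestamp(int64_t frameTimeUs, int64_t nowUs) {
    const int64_t kMaxLateUs = 40000;   // assumed: more than 40 ms late -> drop
    const int64_t kMaxEarlyUs = 10000;  // assumed: more than 10 ms early -> wait
    int64_t latenessUs = nowUs - frameTimeUs;
    if (latenessUs > kMaxLateUs) {
        return FrameAction::kDrop;      // too late: skip rendering this frame
    }
    if (latenessUs < -kMaxEarlyUs) {
        return FrameAction::kWait;      // too early: re-post the video event
    }
    return FrameAction::kRender;        // close enough: render now
}
```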

1) Call OMXCodec::read to obtain mVideoBuffer

2) Call AwesomePlayer::initRenderer_l to initialize mVideoRenderer


```cpp
if (USE_SURFACE_ALLOC  // hardware decoder
        && !strncmp(component, "OMX.", 4)
        && strncmp(component, "OMX.google.", 11)) {
    // Hardware decoders avoid the CPU color conversion by decoding
    // directly to ANativeBuffers, so we must use a renderer that
    // just pushes those buffers to the ANativeWindow.
    mVideoRenderer =
        new AwesomeNativeWindowRenderer(mNativeWindow, rotationDegrees);
} else {  // software decoder
    // Other decoders are instantiated locally and as a consequence
    // allocate their buffers in local address space. This renderer
    // then performs a color conversion and copy to get the data
    // into the ANativeBuffer.
    mVideoRenderer = new AwesomeLocalRenderer(mNativeWindow, meta);
}
```

3) Call AwesomePlayer::startAudioPlayer_l to start audio playback

4) Then call postVideoEvent_l again to re-post mVideoEvent, so the work repeats in a loop.
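The renderer choice in step 2 depends only on the OMX component name. Any "OMX." component that is not a Google software component is treated as a hardware decoder. Isolated as a predicate, the test looks like this. The function name is hypothetical, the bool parameter stands in for the USE_SURFACE_ALLOC build switch, and the component names in the checks are examples only.

```cpp
#include <cassert>
#include <cstring>

// Same condition as in initRenderer_l(): true means "use the native window
// renderer" (hardware decoder), false means "use the local renderer" with
// a CPU color conversion (software decoder).
bool useNativeWindowRenderer(bool useSurfaceAlloc, const char* component) {
    return useSurfaceAlloc
        && std::strncmp(component, "OMX.", 4) == 0
        && std::strncmp(component, "OMX.google.", 11) != 0;
}
```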

The main objects and their relationships are shown in the figure below:

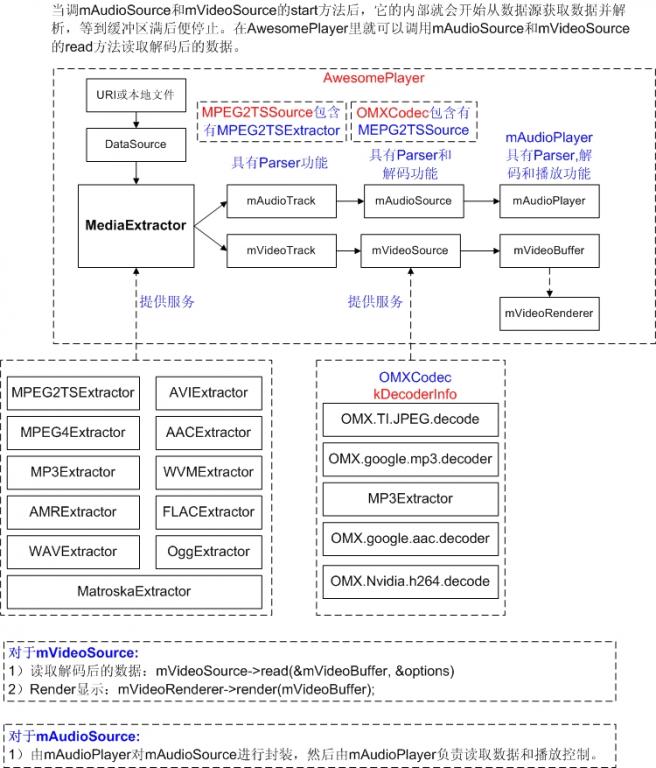

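2. AwesomePlayer data flow

AwesomePlayer preparation

1. Prerequisites

This article uses playback of a local file as its example, with the file's URL passed to setDataSource.

In Java, the code to play a local file is as follows: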

```java
MediaPlayer mp = new MediaPlayer();
mp.setDataSource(PATH_TO_FILE);  // (1)
mp.prepareAsync();               // (2), (3)
// Once OnPreparedListener reports that the video is prepared:
mp.start();                      // (4)
```

The corresponding handling for each of these steps can be found in AwesomePlayer:

2. AwesomePlayer::setDataSource

To play a local file, AwesomePlayer::setDataSource tells AwesomePlayer which URL to play. AwesomePlayer simply stores this URL in mUri and mStats.mURI for use during prepare.

3. AwesomePlayer::prepareAsync or AwesomePlayer::prepare

3.1 mQueue.start();

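On the surface this starts a queue. In fact it creates a thread whose entry function is TimedEventQueue::ThreadWrapper. The real thread routine is TimedEventQueue::threadEntry, which reads events from the TimedEventQueue::mQueue queue and calls event->fire to handle each one. Every event in TimedEventQueue carries the absolute time at which it should be triggered, and its fire runs only once that time has arrived.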

The fire method of TimedEventQueue::Event is pure virtual, and derived classes implement it. For example, AwesomePlayer::prepareAsync_l creates an AwesomeEvent and posts it into mQueue via mQueue.postEvent. Its fire function is AwesomePlayer::onPrepareAsyncEvent.
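The mechanism described above can be sketched with the standard library. A worker thread waits on a condition variable until the earliest event's absolute due time, then runs its callback without holding the lock. This is a minimal sketch, not Android's TimedEventQueue. MiniTimedQueue is a hypothetical name, a std::multimap keyed by due time stands in for the real queue, and callbacks are plain std::function objects rather than sp<Event>.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <functional>
#include <map>
#include <mutex>
#include <thread>
#include <utility>

class MiniTimedQueue {
public:
    using Clock = std::chrono::steady_clock;

    MiniTimedQueue() : worker_([this] { threadEntry(); }) {}
    ~MiniTimedQueue() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            stopping_ = true;
        }
        cond_.notify_one();
        worker_.join();  // pending events that are not yet due are discarded
    }

    // Store the callback with an absolute due time (now + delay).
    void postEventWithDelay(std::function<void()> fire, std::chrono::microseconds delay) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            events_.emplace(Clock::now() + delay, std::move(fire));
        }
        cond_.notify_one();  // the new event may be due before the current head
    }

private:
    void threadEntry() {
        std::unique_lock<std::mutex> lock(mutex_);
        while (!stopping_) {
            if (events_.empty()) {
                cond_.wait(lock);
                continue;
            }
            auto due = events_.begin()->first;
            if (Clock::now() < due) {
                cond_.wait_until(lock, due);  // may wake early on post/stop; re-check
                continue;
            }
            auto fire = std::move(events_.begin()->second);
            events_.erase(events_.begin());
            lock.unlock();
            fire();  // run the handler without holding the lock
            lock.lock();
        }
    }

    std::mutex mutex_;
    std::condition_variable cond_;
    std::multimap<Clock::time_point, std::function<void()>> events_;
    bool stopping_ = false;
    std::thread worker_;  // declared last so it starts after the members above exist
};
```

Running callbacks outside the lock lets a handler post its successor, as onVideoEvent does through postVideoEvent_l, without deadlocking.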

3.2 AwesomePlayer::onPrepareAsyncEvent is executed

As described above, once an event is posted, the queue thread reads it and executes its fire. The fire function of the event from 3.1 is AwesomePlayer::onPrepareAsyncEvent, whose code is:


```cpp
void AwesomePlayer::onPrepareAsyncEvent() {
    Mutex::Autolock autoLock(mLock);
    ....
    if (mUri.size() > 0) {  // obtain mAudioTrack and mVideoTrack
        status_t err = finishSetDataSource_l();  // 3.2.1
        ...
    }

    if (mVideoTrack != NULL && mVideoSource == NULL) {  // obtain mVideoSource
        status_t err = initVideoDecoder();  // 3.2.2
        ...
    }

    if (mAudioTrack != NULL && mAudioSource == NULL) {  // obtain mAudioSource
        status_t err = initAudioDecoder();  // 3.2.3
        ...
    }

    modifyFlags(PREPARING_CONNECTED, SET);

    if (isStreamingHTTP() || mRTSPController != NULL) {
        postBufferingEvent_l();
    } else {
        finishAsyncPrepare_l();
    }
}
```

3.2.1 finishSetDataSource_l


```cpp
{
    dataSource = DataSource::CreateFromURI(mUri.string(), ...);  // (3.2.1.1)
    sp<MediaExtractor> extractor =
        MediaExtractor::Create(dataSource);                        // (3.2.1.2)
    return setDataSource_l(extractor);                            // (3.2.1.3)
}
```

3.2.1.1 Creating dataSource

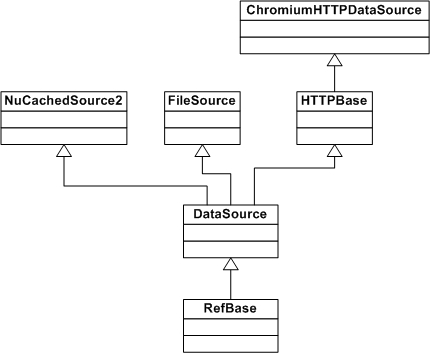

a. For a local file (http://, https:// and rtsp:// are implemented differently), it works as follows:

dataSource = DataSource::CreateFromURI(mUri.string(), &mUriHeaders);

The dataSource is created from the URL; in practice this news a FileSource. When the FileSource is constructed, it opens the file:

mFd = open(filename, O_LARGEFILE | O_RDONLY);

b. For http:// and https://, a ChromiumHTTPDataSource is created.
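The scheme-based choice in CreateFromURI can be sketched as follows. Source, LocalFileSource, HttpSource and createFromUri are hypothetical stand-ins for FileSource and ChromiumHTTPDataSource. Stripping a `file://` prefix, treating a string without `://` as a plain path, and returning nullptr for any other scheme are simplifying assumptions.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

// Hypothetical data-source hierarchy with a minimal interface.
struct Source {
    virtual ~Source() = default;
    virtual std::string kind() const = 0;
};
struct LocalFileSource : Source {
    explicit LocalFileSource(std::string path) : path(std::move(path)) {}
    std::string kind() const override { return "file"; }
    std::string path;
};
struct HttpSource : Source {
    explicit HttpSource(std::string url) : url(std::move(url)) {}
    std::string kind() const override { return "http"; }
    std::string url;
};

// Pick a concrete source from the URI scheme.
std::unique_ptr<Source> createFromUri(const std::string& uri) {
    if (uri.rfind("http://", 0) == 0 || uri.rfind("https://", 0) == 0) {
        return std::make_unique<HttpSource>(uri);
    }
    if (uri.rfind("file://", 0) == 0) {
        return std::make_unique<LocalFileSource>(uri.substr(7));  // drop "file://"
    }
    if (uri.find("://") == std::string::npos) {
        return std::make_unique<LocalFileSource>(uri);  // plain file path
    }
    return nullptr;  // assumed: other schemes are handled elsewhere
}
```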

The inheritance relationships among these classes are shown in the figure below:

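3.2.1.2 Creating a MediaExtractor

MediaExtractor::Create creates the actual MediaExtractor. Take MPEG2TSExtractor, which parses TS streams, as an example. It too is an empty shell: it reads data through the mDataSource passed to it and creates an mParser (ATSParser) to do the real parsing. The objects created in this process, and their ownership, are: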

MPEG2TSExtractor->ATSParser->ATSParser::Program->ATSParser::Stream->AnotherPacketSource

extractor = MediaExtractor::Create(dataSource); examines the file that source refers to and chooses an extractor (parser) based on its header. The code is as follows:


```cpp
sp<MediaExtractor> MediaExtractor::Create(
        const sp<DataSource> &source, const char *mime) {
    sp<AMessage> meta;

    String8 tmp;
    if (mime == NULL) {
        float confidence;
        if (!source->sniff(&tmp, &confidence, &meta)) {
            return NULL;
        }

        mime = tmp.string();
    }
    ...
    MediaExtractor *ret = NULL;
    if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MPEG4)
            || !strcasecmp(mime, "audio/mp4")) {
        ret = new MPEG4Extractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_MPEG)) {
        ret = new MP3Extractor(source, meta);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AMR_NB)
            || !strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AMR_WB)) {
        ret = new AMRExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_FLAC)) {
        ret = new FLACExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_WAV)) {
        ret = new WAVExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_OGG)) {
        ret = new OggExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MATROSKA)) {
        ret = new MatroskaExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MPEG2TS)) {
        ret = new MPEG2TSExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_AVI)) {
        ret = new AVIExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_WVM)) {
        ret = new WVMExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AAC_ADTS)) {
        ret = new AACExtractor(source);
    }

    if (ret != NULL) {
        if (isDrm) {
            ret->setDrmFlag(true);
        } else {
            ret->setDrmFlag(false);
        }
    }
    ...
    return ret;
}
```

For a TS stream, it naturally creates and returns an MPEG2TSExtractor.

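When new MPEG2TSExtractor(source) is executed:

1) The FileSource object passed in is stored in the mDataSource member variable of MPEG2TSExtractor.

2) An ATSParser is created and stored in mParser; it is responsible for parsing the TS file.

3) In feedMore, data is read from the file through mDataSource->readAt and passed to mParser->feedTSPacket. This parses the PAT (ATSParser::parseProgramAssociationTable) to find and create the corresponding Programs, which are stored in ATSParser::mPrograms. Each Program has a unique program_number and programMapPID.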

A quick primer: the PAT contains the PIDs of all PMTs. Each Program has its own PMT, and the PMT contains the audio PID and the video PID.
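To make the PAT step concrete, the sketch below decodes a PAT section into a program_number to PMT PID map. It follows the standard MPEG-2 systems section layout: an 8-byte header, then 4-byte program entries, then a 4-byte CRC_32. It is not ATSParser::parseProgramAssociationTable itself. parsePat is a hypothetical name, the input is assumed to start at table_id (pointer_field already skipped), and the CRC is not verified.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>

// Fill `programs` with program_number -> program_map_PID.
// Returns false if the buffer is too short or table_id is not 0x00 (PAT).
bool parsePat(const uint8_t* data, size_t size, std::map<uint16_t, uint16_t>* programs) {
    if (size < 8 || data[0] != 0x00) return false;
    size_t sectionLength = ((data[1] & 0x0f) << 8) | data[2];   // 12 bits
    if (sectionLength < 9 || 3 + sectionLength > size) return false;
    size_t loopEnd = 3 + sectionLength - 4;                      // stop before CRC_32
    for (size_t i = 8; i + 4 <= loopEnd; i += 4) {
        uint16_t programNumber = static_cast<uint16_t>((data[i] << 8) | data[i + 1]);
        uint16_t pid = static_cast<uint16_t>(((data[i + 2] & 0x1f) << 8) | data[i + 3]);
        if (programNumber != 0) {   // program_number 0 points to the NIT, not a PMT
            (*programs)[programNumber] = pid;
        }
    }
    return true;
}
```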

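ATSParser::Program::parseProgramMap parses the PMT and creates a Stream for each of the audio and video PIDs. The newly created Streams are stored in the mStreams member variable of ATSParser::Program.

The ATSParser::Stream::Stream constructor creates an ElementaryStreamQueue object, mQueue, according to the media type. It also creates an ABuffer object, mBuffer (mBuffer = new ABuffer(192 * 1024);), to hold the data.

Note: ATSParser::Stream::mSource<AnotherPacketSource> is created along this path: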

MediaExtractor::Create->

MPEG2TSExtractor::MPEG2TSExtractor->

MPEG2TSExtractor::init->

MPEG2TSExtractor::feedMore->

ATSParser::feedTSPacket->

ATSParser::parseTS->

ATSParser::parsePID->

ATSParser::parseProgramAssociationTable

ATSParser::Program::parsePID->

ATSParser::Program::parseProgramMap

ATSParser::Stream::parse->

ATSParser::Stream::flush->

ATSParser::Stream::parsePES->

ATSParser::Stream::onPayloadData
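Everything in this chain starts from the 4-byte header at the front of each 188-byte TS packet. Its 13-bit PID tells the parser where the payload belongs (PID 0 carries the PAT). The sketch below decodes that header according to the standard MPEG-2 systems layout. TsHeader and parseTsHeader are hypothetical names, not ATSParser code.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

constexpr size_t kTSPacketSize = 188;

struct TsHeader {
    bool payloadUnitStart;           // a PES packet or PSI section starts here
    uint16_t pid;                    // 13-bit packet identifier
    uint8_t adaptationFieldControl;  // 1 = payload only, 2 = adaptation only, 3 = both
    uint8_t continuityCounter;       // 4-bit counter per PID
};

// Decode the fixed 4-byte header of one TS packet.
bool parseTsHeader(const uint8_t* packet, size_t size, TsHeader* out) {
    if (size < kTSPacketSize || packet[0] != 0x47) return false;  // sync byte
    out->payloadUnitStart = (packet[1] & 0x40) != 0;
    out->pid = static_cast<uint16_t>(((packet[1] & 0x1f) << 8) | packet[2]);
    out->adaptationFieldControl = static_cast<uint8_t>((packet[3] >> 4) & 0x03);
    out->continuityCounter = static_cast<uint8_t>(packet[3] & 0x0f);
    return true;
}
```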

The source->sniff call above is implemented in DataSource::sniff. Sniff functions are registered through DataSource::RegisterSniffer. For MPEG2TS, for example, the sniff function is SniffMPEG2TS, whose code is:


```cpp
bool SniffMPEG2TS(
        const sp<DataSource> &source, String8 *mimeType, float *confidence,
        sp<AMessage> *) {
    for (int i = 0; i < 5; ++i) {
        char header;
        if (source->readAt(kTSPacketSize * i, &header, 1) != 1
                || header != 0x47) {
            return false;
        }
    }

    *confidence = 0.1f;
    mimeType->setTo(MEDIA_MIMETYPE_CONTAINER_MPEG2TS);

    return true;
}
```

As this shows, the sniff functions identify the file container from the content at the start of the file. A WAV file, for instance, is recognized by the RIFF or WAVE strings in its header. Note: when the player is created, the decision is based on information in the URL, not on the file's contents.
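DataSource::sniff runs every registered sniffer and keeps the match with the highest confidence. The sketch below shows that selection over an in-memory file header. Sniffer, sniffTs, sniffWav and sniff are hypothetical names. The TS rule copies SniffMPEG2TS, and the MIME strings are assumed values. The WAV confidence of 0.3 is an arbitrary assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// A sniffer inspects the first bytes of a file and, on a match, reports a
// MIME type together with a confidence score.
using Sniffer = bool (*)(const std::vector<uint8_t>& head, std::string* mime, float* confidence);

constexpr size_t kTSPacketSize = 188;

// Same rule as SniffMPEG2TS: sync byte 0x47 at the start of five consecutive packets.
bool sniffTs(const std::vector<uint8_t>& head, std::string* mime, float* confidence) {
    for (size_t i = 0; i < 5; ++i) {
        size_t offset = kTSPacketSize * i;
        if (offset >= head.size() || head[offset] != 0x47) return false;
    }
    *mime = "video/mp2ts";
    *confidence = 0.1f;
    return true;
}

// Hypothetical WAV sniffer: "RIFF" at offset 0 and "WAVE" at offset 8.
bool sniffWav(const std::vector<uint8_t>& head, std::string* mime, float* confidence) {
    if (head.size() < 12) return false;
    std::string riff(head.begin(), head.begin() + 4);
    std::string wave(head.begin() + 8, head.begin() + 12);
    if (riff != "RIFF" || wave != "WAVE") return false;
    *mime = "audio/x-wav";
    *confidence = 0.3f;
    return true;
}

// Run every sniffer and keep the most confident match (confidence > 0).
bool sniff(const std::vector<Sniffer>& sniffers, const std::vector<uint8_t>& head,
           std::string* mime, float* confidence) {
    *confidence = 0.0f;
    bool found = false;
    for (Sniffer s : sniffers) {
        std::string m;
        float c = 0.0f;
        if (s(head, &m, &c) && c > *confidence) {
            *mime = m;
            *confidence = c;
            found = true;
        }
    }
    return found;
}
```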

3.2.1.3 AwesomePlayer::setDataSource_l(extractor)

The main logic is as follows (here the extractor is actually an MPEG2TSExtractor object):


```cpp
status_t AwesomePlayer::setDataSource_l(const sp<MediaExtractor> &extractor)
{
    for (size_t i = 0; i < extractor->countTracks(); ++i) {
        ...
        if (!haveVideo && !strncasecmp(mime, "video/", 6))
            setVideoSource(extractor->getTrack(i));
        ...
        if (!haveAudio && !strncasecmp(mime, "audio/", 6))
            setAudioSource(extractor->getTrack(i));
        ...
    }
}
```

Let's first see what extractor->getTrack does.

It creates an MPEG2TSSource object from the MPEG2TSExtractor and an AnotherPacketSource and returns it. AwesomePlayer then stores it in mVideoTrack or mAudioTrack.
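The track loop in setDataSource_l keeps only the first video track and the first audio track, matched by MIME prefix. Here is a minimal sketch of that selection over a list of MIME strings. TrackChoice and pickTracks are hypothetical names, and strncasecmp comes from the POSIX `<strings.h>` header.

```cpp
#include <cassert>
#include <string>
#include <strings.h>  // strncasecmp (POSIX)
#include <vector>

struct TrackChoice {
    int videoIndex = -1;  // -1 means "no track of this kind"
    int audioIndex = -1;
};

// Keep the first "video/..." and the first "audio/..." track.
TrackChoice pickTracks(const std::vector<std::string>& trackMimes) {
    TrackChoice choice;
    for (size_t i = 0; i < trackMimes.size(); ++i) {
        const char* mime = trackMimes[i].c_str();
        if (choice.videoIndex < 0 && !strncasecmp(mime, "video/", 6)) {
            choice.videoIndex = static_cast<int>(i);
        } else if (choice.audioIndex < 0 && !strncasecmp(mime, "audio/", 6)) {
            choice.audioIndex = static_cast<int>(i);
        }
    }
    return choice;
}
```

Any further audio or video tracks, such as a second language track, are simply ignored by this rule.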

3.2.2 initVideoDecoder

The main code is as follows:


```cpp
status_t AwesomePlayer::initVideoDecoder(uint32_t flags) {
    if (mDecryptHandle != NULL) {
        flags |= OMXCodec::kEnableGrallocUsageProtected;
    }

    mVideoSource = OMXCodec::Create(  // 3.2.2.1
            mClient.interface(), mVideoTrack->getFormat(),
            false,  // createEncoder
            mVideoTrack,
            NULL, flags, USE_SURFACE_ALLOC ? mNativeWindow : NULL);

    if (mVideoSource != NULL) {
        int64_t durationUs;
        if (mVideoTrack->getFormat()->findInt64(kKeyDuration, &durationUs)) {
            Mutex::Autolock autoLock(mMiscStateLock);
            if (mDurationUs < 0 || durationUs > mDurationUs) {
                mDurationUs = durationUs;
            }
        }

        status_t err = mVideoSource->start();  // 3.2.2.2

        if (err != OK) {
            mVideoSource.clear();
            return err;
        }
    }

    return mVideoSource != NULL ? OK : UNKNOWN_ERROR;
}
```

It does two main things: 1) it creates an OMXCodec object, and 2) it calls the OMXCodec's start method. Note that mClient.interface() returns an OMX object, which is obtained as follows:

AwesomePlayer::AwesomePlayer->

mClient.connect->

OMXClient::connect (obtains the OMX object and stores it in mOMX)->

BpMediaPlayerService::getOMX->

BnMediaPlayerService::onTransact(GET_OMX)->

MediaPlayerService::getOMX

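3.2.2.1 Creating the OMXCodec object

As the code above shows, the mVideoTrack argument is an MPEG2TSSource object.

1) Get the MIME type from the MPEG2TSSource's metadata.

2) Call OMXCodec::findMatchingCodecs to search kDecoderInfo for the names of codecs that can decode this MIME type, and put them in the matchingCodecs variable.

3) Create an OMXCodecObserver object.

4) Call OMX::allocateNode with the codec name and the OMXCodecObserver object as arguments. This creates an OMXNodeInstance object, and the return value of its makeNodeID is stored in node (a node_id).

5) Create an OMXCodec object from the node, the codec name, the MIME type and the MPEG2TSSource object, and store this OMXCodec object in OMXCodecObserver::mTarget: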


```cpp
OMXCodec::OMXCodec(
        const sp<IOMX> &omx, IOMX::node_id node,
        uint32_t quirks, uint32_t flags,
        bool isEncoder,
        const char *mime,
        const char *componentName,
        const sp<MediaSource> &source,
        const sp<ANativeWindow> &nativeWindow)
```

6) Call OMXCodec::configureCodec with the MPEG2TSSource's MetaData as the argument to configure this codec.
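Step 2 is essentially a table scan: walk a static {mime, codec} table and collect every codec registered for the requested MIME type, in table order. Table order would matter if candidates are then tried one after another, which is an assumption here. In the sketch below, CodecInfo, kDemoDecoderInfo and matchCodecsForMime are hypothetical, and the table entries are examples rather than the real contents of kDecoderInfo. strcasecmp comes from the POSIX `<strings.h>` header.

```cpp
#include <cassert>
#include <string>
#include <strings.h>  // strcasecmp (POSIX)
#include <vector>

struct CodecInfo {
    const char* mime;
    const char* codec;
};

// Hypothetical decoder table in the spirit of kDecoderInfo.
static const CodecInfo kDemoDecoderInfo[] = {
    {"video/avc", "OMX.vendor.video.decoder.avc"},
    {"video/avc", "OMX.google.h264.decoder"},
    {"audio/mp4a-latm", "OMX.google.aac.decoder"},
};

// Collect, in table order, every codec registered for the given MIME type.
std::vector<std::string> matchCodecsForMime(const char* mime) {
    std::vector<std::string> matching;
    for (const CodecInfo& info : kDemoDecoderInfo) {
        if (!strcasecmp(info.mime, mime)) {
            matching.push_back(info.codec);
        }
    }
    return matching;
}
```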

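3.2.2.2 Calling OMXCodec::start

1) It calls mSource->start, i.e. MPEG2TSSource::start.

2) That in turn calls Impl->start, i.e. AnotherPacketSource::start, which disappointingly does nothing at all: it just returns OK.

3.2.3 initAudioDecoder

The flow is basically the same as initVideoDecoder: an OMXCodec is created and stored in mAudioSource.

At this point AwesomePlayer's preparation is complete. Its architecture is shown in the figure below: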
