
}

}

CarDriver:

package com.hadoop.Car2;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CarDriver extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration configuration = new Configuration();

Job JB = Job.getInstance(configuration, "CarDriver");

FileSystem fileSystem = FileSystem.get(configuration);

JB.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(JB,new Path("E:\\出租车数据\\2012001"));

JB.setMapperClass(CarMapper.class);

JB.setMapOutputKeyClass(Text.class);

JB.setMapOutputValueClass(Text.class);

JB.setReducerClass(CarReduce.class);

JB.setOutputKeyClass(Text.class);

JB.setOutputValueClass(Text.class);

boolean exists = fileSystem.exists(new Path("E:\\出租车数据\\Out"));

if (exists){

fileSystem.delete(new Path("E:\\出租车数据\\Out"),true);

}

JB.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(JB,new Path("E:\\出租车数据\\Out"));

return JB.waitForCompletion(true)?0:1;

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new CarDriver(),args);

}

}

## Find the vehicles that picked up passengers more than 10 times on October 1, with their total pickup counts and detailed pickup times

CarMapper:

package com.hadoop.Car3;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class CarMapper extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

if (split[3].substring(4,6).equals("10")&&split[3].substring(6,8).equals("01")){

context.write(new Text(split[0]),value);

}

}

}

}
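The mapper above keeps only records whose timestamp falls on October 1. The filter can be tried outside Hadoop with a minimal sketch like the one below. It assumes, based only on the `substring` calls in the mapper, that the fourth comma-separated field is a timestamp in `yyyyMMddHHmmss` form; the class name `DateFilterSketch`, the method `isOctoberFirst`, and the sample record are hypothetical.

```java
class DateFilterSketch {
    // Returns true when characters 4-8 of the timestamp are "1001",
    // i.e. month 10 and day 01 (assumed layout: yyyyMMddHHmmss).
    static boolean isOctoberFirst(String timestamp) {
        return timestamp.length() >= 8 && timestamp.startsWith("1001", 4);
    }

    public static void main(String[] args) {
        // Hypothetical record: vehicle id, code, status, timestamp
        String line = "A001,4,1,20121001083000";
        String[] fields = line.split(",");
        System.out.println(fields[0] + " " + isOctoberFirst(fields[3]));
    }
}
```

The length check guards against short or malformed timestamps, which would make `substring(4,6)` in the mapper throw a `StringIndexOutOfBoundsException`.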

CarReduce:

package com.hadoop.Car3;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class CarReduce extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

int count=0;

StringBuffer sb = new StringBuffer();

for (Text value : values) {

String[] split = value.toString().split(",");

if (split[2].equals("1")){

count++;

String s=split[3];

sb.append(s).append("_");

}

}

if (count>10){

context.write(new Text(key),new Text("Total pickups: "+count+"------>Pickup times: "+sb));

}

}

}
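The reducer's counting logic can be checked without a cluster. The sketch below is a plain-Java approximation, not the Hadoop reducer itself: it assumes that status `"1"` in the third field marks a passenger pickup (inferred from the reducer) and uses a hypothetical `summarize` helper and made-up records.

```java
import java.util.ArrayList;
import java.util.List;

class PickupCountSketch {
    // Summarises one vehicle's records. Returns "count:time_time_..." or
    // null when the vehicle has `threshold` or fewer pickups.
    static String summarize(List<String> records, int threshold) {
        int count = 0;
        StringBuilder times = new StringBuilder();
        for (String record : records) {
            String[] fields = record.split(",");
            if (fields[2].equals("1")) { // assumed: "1" marks a pickup
                count++;
                times.append(fields[3]).append('_');
            }
        }
        return count > threshold ? count + ":" + times : null;
    }

    public static void main(String[] args) {
        List<String> records = new ArrayList<>();
        records.add("A001,4,1,20121001080000");
        records.add("A001,4,0,20121001081500");
        records.add("A001,4,1,20121001090000");
        // A threshold of 1 is used here only so the tiny sample produces output
        System.out.println(summarize(records, 1));
    }
}
```

String contents are compared with `equals`; comparing with `==` tests object identity and is not reliable for strings produced by `split`.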

CarDriver:

package com.hadoop.Car3;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CarDriver extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration configuration = new Configuration();

Job JB = Job.getInstance(configuration, "CarDriver");

FileSystem fileSystem = FileSystem.get(configuration);

JB.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(JB,new Path("E:\\出租车数据\\2012001"));

JB.setMapperClass(CarMapper.class);

JB.setMapOutputKeyClass(Text.class);

JB.setMapOutputValueClass(Text.class);

JB.setReducerClass(CarReduce.class);

JB.setOutputKeyClass(Text.class);

JB.setOutputValueClass(Text.class);

boolean exists = fileSystem.exists(new Path("E:\\出租车数据\\Out"));

if (exists){

fileSystem.delete(new Path("E:\\出租车数据\\Out"),true);

}

JB.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(JB,new Path("E:\\出租车数据\\Out"));

return JB.waitForCompletion(true)?0:1;

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new CarDriver(),args);

}

}

## Find the vehicles that ran continuously for 12 or more hours on October 1

CarMapper:

package com.hadoop.Car4;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class CarMapper extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

if (split[3].substring(4,6).equals("10")&&split[3].substring(6,8).equals("01")){

context.write(new Text(split[0]),value);

}

}

}

}

CarReduce:

package com.hadoop.Car4;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

import java.util.HashSet;

public class CarReduce extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

StringBuffer sb = new StringBuffer();

// Hours of the day (0-23) in which this vehicle has at least one record

HashSet<Integer> list12 = new HashSet<>();

for (Text value : values) {

String[] split = value.toString().split(",");

// The hour is characters 8-10 of the timestamp field

list12.add(Integer.parseInt(split[3].substring(8,10)));

}

for (int i = 0; i < 24; i++) {

if (list12.contains(i)){

sb.append("1");

}else{

sb.append("0");

}

}

if (sb.toString().contains("111111111111")) {

context.write(key,new Text("-----> this vehicle ran for 12 or more consecutive hours"));

}

}

}
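The reducer above marks each hour of the day as `1` or `0` and then looks for twelve `1`s in a row. The same idea can be expressed as a longest-run calculation, shown in the sketch below. It is an alternative formulation rather than the code from the post, and the names `ConsecutiveHoursSketch`, `activeHours`, and `longestRun` are hypothetical. As in the reducer, the hour is assumed to sit at characters 8-10 of a `yyyyMMddHHmmss` timestamp.

```java
class ConsecutiveHoursSketch {
    // Marks every hour (0-23) that appears in at least one timestamp.
    static boolean[] activeHours(String[] timestamps) {
        boolean[] active = new boolean[24];
        for (String ts : timestamps) {
            active[Integer.parseInt(ts.substring(8, 10))] = true;
        }
        return active;
    }

    // Length of the longest run of consecutive active hours.
    static int longestRun(boolean[] active) {
        int best = 0;
        int current = 0;
        for (boolean hourActive : active) {
            current = hourActive ? current + 1 : 0;
            best = Math.max(best, current);
        }
        return best;
    }

    public static void main(String[] args) {
        // Hypothetical timestamps covering hours 08, 09 and 11
        String[] timestamps = {"20121001083000", "20121001091500", "20121001110000"};
        System.out.println(longestRun(activeHours(timestamps)) >= 12);
    }
}
```

Both versions treat an hour as "running" if it contains any record at all, so a single record per hour is enough to count; whether that matches the intended meaning of continuous operation depends on how the data was sampled.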

CarDriver:

package com.hadoop.Car4;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CarDriver extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration configuration = new Configuration();

Job JB = Job.getInstance(configuration, "CarDriver");

FileSystem fileSystem = FileSystem.get(configuration);

JB.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(JB,new Path("E:\\出租车数据\\2012001"));

JB.setMapperClass(CarMapper.class);

JB.setMapOutputKeyClass(Text.class);

JB.setMapOutputValueClass(Text.class);

JB.setReducerClass(CarReduce.class);

JB.setOutputKeyClass(Text.class);

JB.setOutputValueClass(Text.class);

boolean exists = fileSystem.exists(new Path("E:\\出租车数据\\Out"));

if (exists){

fileSystem.delete(new Path("E:\\出租车数据\\Out"),true);

}

JB.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(JB,new Path("E:\\出租车数据\\Out"));

return JB.waitForCompletion(true)?0:1;

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new CarDriver(),args);

}

}

## Find the vehicles whose pickup count on October 1 is greater than on October 2

CarMapper:

package com.hadoop.Car5;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class CarMapper extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

FileSplit sp = (FileSplit) context.getInputSplit();

String name = sp.getPath().getName();

if (name.contains("20121001")){

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]),value);

}

}else {

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]),value);

}

}

}

}

CarReduce:

package com.hadoop.Car5;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class CarReduce extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

int One=0;

int Two=0;

for (Text value : values) {

if (value.toString().contains("20121001")){

String[] split = value.toString().split(",");

if (split[1].equals("4")&&split[2].equals("1")){

One++;

}

}else{

String[] split = value.toString().split(",");

if (split[1].equals("4")&&split[2].equals("1")) {

Two++;

}

}

}

if (One>Two){

context.write(key,new Text("October 1 pickups: "+One+"---- greater than ---"+"October 2 pickups: "+Two));

}

}

}
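The per-day comparison can be illustrated in plain Java. The sketch below deviates from the reducer in one labeled way: the reducer treats every record that does not contain `20121001` as belonging to October 2, whereas this version checks both dates explicitly on the timestamp field. The field meanings (second field `"4"`, third field `"1"` for a pickup) are taken from the reducer's conditions; the class and method names and the sample records are hypothetical.

```java
class DayComparisonSketch {
    // Returns {pickups on Oct 1, pickups on Oct 2} for one vehicle's records.
    static int[] countPickupsByDay(String[] records) {
        int[] counts = new int[2];
        for (String record : records) {
            String[] f = record.split(",");
            // Same condition as the reducer: field 1 is "4" and field 2 is "1"
            if (!(f[1].equals("4") && f[2].equals("1"))) {
                continue;
            }
            if (f[3].startsWith("20121001")) {
                counts[0]++;
            } else if (f[3].startsWith("20121002")) {
                counts[1]++;
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        String[] records = {
            "A001,4,1,20121001080000",
            "A001,4,1,20121001100000",
            "A001,4,1,20121002090000",
            "A001,4,0,20121002093000"
        };
        int[] counts = countPickupsByDay(records);
        System.out.println(counts[0] + " vs " + counts[1]);
    }
}
```

Matching the date against the timestamp field alone avoids false positives when the digits `20121001` happen to appear in another field of the record.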

CarDriver:

package com.hadoop.Car5;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CarDriver extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration configuration = new Configuration();

Job JB = Job.getInstance(configuration, "CarDriver");

FileSystem fileSystem = FileSystem.get(configuration);

JB.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(JB,new Path("E:\\出租车数据\\201210011002"));

JB.setMapperClass(CarMapper.class);

JB.setMapOutputKeyClass(Text.class);

JB.setMapOutputValueClass(Text.class);

JB.setReducerClass(CarReduce.class);

JB.setOutputKeyClass(Text.class);

JB.setOutputValueClass(Text.class);

boolean exists = fileSystem.exists(new Path("E:\\出租车数据\\Out"));

if (exists){

fileSystem.delete(new Path("E:\\出租车数据\\Out"),true);

}

JB.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(JB,new Path("E:\\出租车数据\\Out"));

return JB.waitForCompletion(true)?0:1;

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new CarDriver(),args);

}

}

## Find the vehicles that worked on October 1 but did not work on October 2

CarMapper:

package com.hadoop.Car6;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class CarMapper extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

FileSplit sp = (FileSplit) context.getInputSplit();

String name = sp.getPath().getName();

if (name.contains("20121001")){

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]),value);

}

}else {

if (value!=null&&value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]),value);

}

}

}

}

CarReduce:

package com.hadoop.Car6;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class CarReduce extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

boolean falg1=true;// falg1 == false means the vehicle did not work on October 1

boolean falg2=true;// falg2 == false means the vehicle did not work on October 2

for (Text value : values) {

if (value.toString().contains("20121001")){

String[] split = value.toString().split(",");

if (split[2].equals("3")){

falg1=false;

}

}else{

String[] split = value.toString().split(",");

if (split[2].equals("3")) {

falg2=false;

}

}

}

if (falg1==true&&falg2==false){

context.write(key,new Text("October 1: "+falg1+"-----"+"October 2: "+falg2));

}

}

}
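The reducer's condition can be reproduced on a handful of records as follows. This is a sketch under assumptions: status `"3"` in the third field is taken to mean the vehicle was not working (inferred from the reducer's comments), the second day is matched explicitly as `20121002` rather than "anything that is not October 1", and the helper names are hypothetical.

```java
class DutyCheckSketch {
    // True when any record on the given day (yyyyMMdd prefix of the
    // timestamp field) carries the given status.
    static boolean hasStatusOnDay(String[] records, String day, String status) {
        for (String record : records) {
            String[] fields = record.split(",");
            if (fields[3].startsWith(day) && fields[2].equals(status)) {
                return true;
            }
        }
        return false;
    }

    // Mirrors the reducer: falg1 stays true and falg2 becomes false.
    static boolean workedFirstDayOnly(String[] records) {
        boolean falg1 = !hasStatusOnDay(records, "20121001", "3");
        boolean falg2 = !hasStatusOnDay(records, "20121002", "3");
        return falg1 && !falg2;
    }

    public static void main(String[] args) {
        String[] records = {
            "A001,4,1,20121001080000",
            "A001,4,3,20121002080000"
        };
        System.out.println(workedFirstDayOnly(records));
    }
}
```

Note that under this logic a vehicle with no October 2 records at all keeps `falg2` set to `true` and is therefore not reported; whether that is the intended behaviour depends on what the status codes mean in the dataset.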

CarDriver:

package com.hadoop.Car6;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CarDriver extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Configuration configuration = new Configuration();

Job JB = Job.getInstance(configuration, "CarDriver");

FileSystem fileSystem = FileSystem.get(configuration);

JB.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(JB,new Path("E:\\出租车数据\\201210011002"));

JB.setMapperClass(CarMapper.class);

JB.setMapOutputKeyClass(Text.class);

JB.setMapOutputValueClass(Text.class);

JB.setReducerClass(CarReduce.class);

JB.setOutputKeyClass(Text.class);

JB.setOutputValueClass(Text.class);

boolean exists = fileSystem.exists(new Path("E:\\出租车数据\\Out"));

if (exists){

fileSystem.delete(new Path("E:\\出租车数据\\Out"),true);

}

JB.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(JB,new Path("E:\\出租车数据\\Out"));

return JB.waitForCompletion(true)?0:1;

}

public static void main(String[] args) throws Exception {

ToolRunner.run(new CarDriver(),args);

}

}

## Find the vehicles that operated continuously for 48 hours

CarMapper:

package com.hadoop.Taxi08;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class TaxiMap08 extends Mapper<LongWritable,Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

FileSplit fileSplit = (FileSplit) context.getInputSplit();

String name = fileSplit.getPath().getName();

if (name.contains("20121001")){

if (value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]), value);

}

}else{

if (value.toString().length()>0){

String[] split = value.toString().split(",");

context.write(new Text(split[0]), value);

}

}

}

}

CarReduce:

package com.hadoop.Taxi08;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

import java.util.HashSet;

public class TaxiReduce08 extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

HashSet One = new HashSet<>();

HashSet Two = new HashSet<>();

StringBuffer sbOne = new StringBuffer();

StringBuffer sbTwo = new StringBuffer();
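The listing breaks off here, so the rest of the reducer is not available. One way the check might be completed, assuming it follows the same hour-set idea as the 12-hour task, is sketched below in plain Java: collect the active hours of each day into its own set and require all 24 hours on both days. The class name, method name, and timestamp layout (`yyyyMMddHHmmss`) are assumptions, not the original code.

```java
import java.util.HashSet;
import java.util.Set;

class FortyEightHourSketch {
    // True when the timestamps cover every hour of Oct 1 and every hour of Oct 2.
    static boolean ranAllFortyEightHours(String[] timestamps) {
        Set<Integer> dayOne = new HashSet<>();
        Set<Integer> dayTwo = new HashSet<>();
        for (String ts : timestamps) {
            int hour = Integer.parseInt(ts.substring(8, 10));
            if (ts.startsWith("20121001")) {
                dayOne.add(hour);
            } else if (ts.startsWith("20121002")) {
                dayTwo.add(hour);
            }
        }
        // 24 distinct hours per day means no hour is missing
        return dayOne.size() == 24 && dayTwo.size() == 24;
    }

    public static void main(String[] args) {
        // Build one hypothetical timestamp per hour for both days
        String[] timestamps = new String[48];
        for (int day = 1; day <= 2; day++) {
            for (int hour = 0; hour < 24; hour++) {
                timestamps[(day - 1) * 24 + hour] =
                        String.format("2012100%d%02d0000", day, hour);
            }
        }
        System.out.println(ranAllFortyEightHours(timestamps));
    }
}
```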

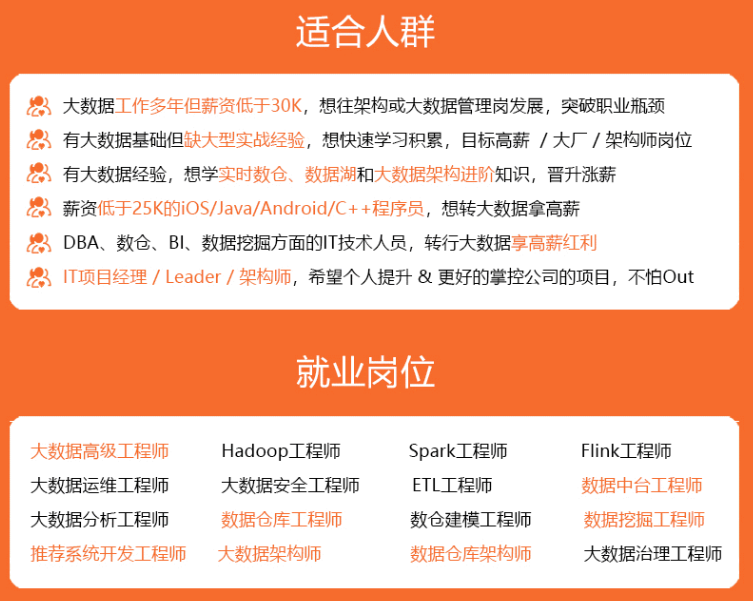

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

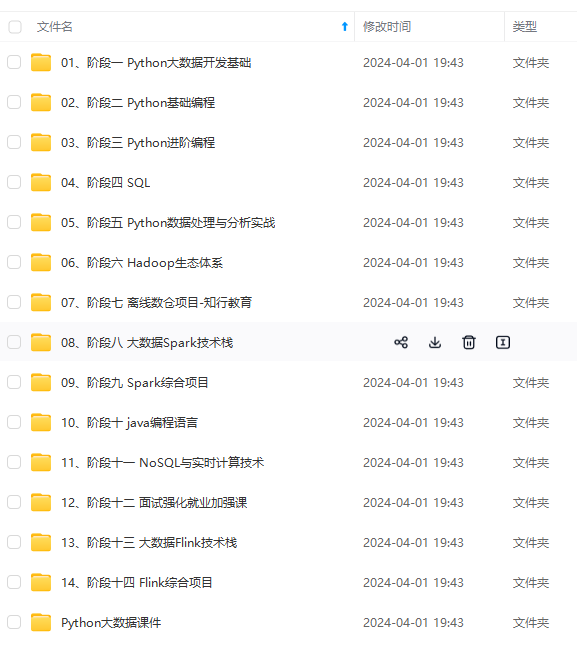

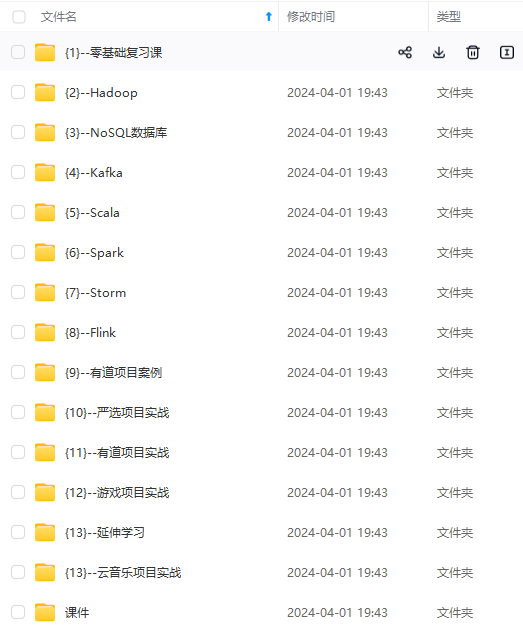

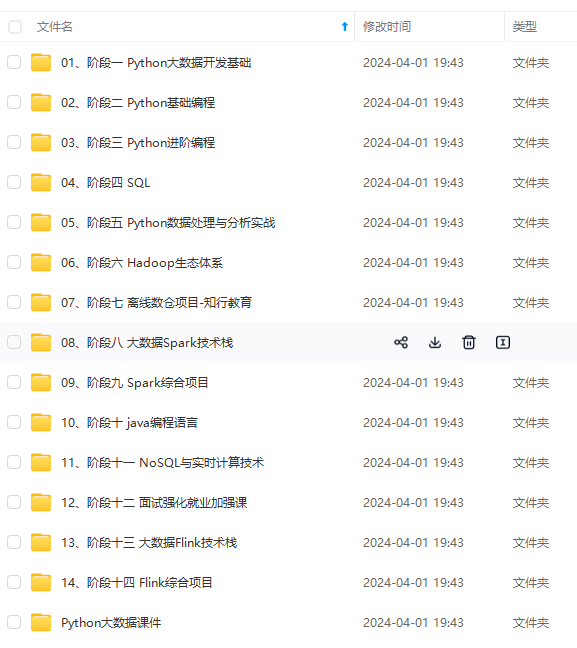

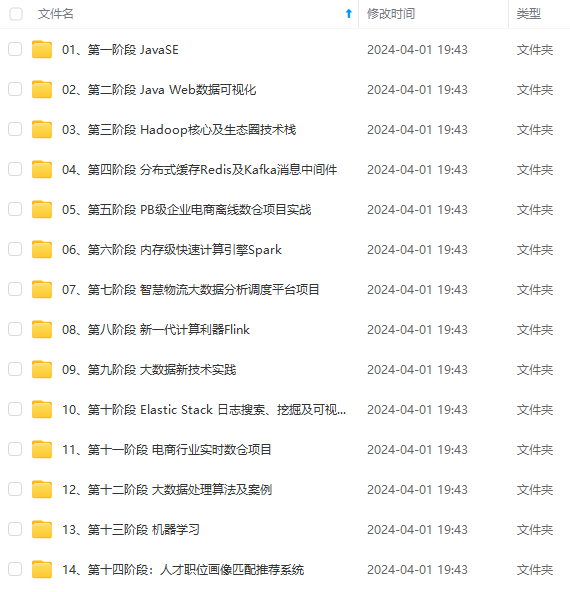

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable

HashSet One = new HashSet<>();

HashSet Two = new HashSet<>();

StringBuffer sbOne = new StringBuffer();

StringBuffer sbTwo = new StringBuffer();

[外链图片转存中…(img-U5qsPzZB-1715796242838)]

[外链图片转存中…(img-qNytrpTN-1715796242838)]

[外链图片转存中…(img-DmOGUWNY-1715796242838)]

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

7130

7130

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?