Kafka 0-10 Direct Mode

Reading data from Kafka with Spark Streaming

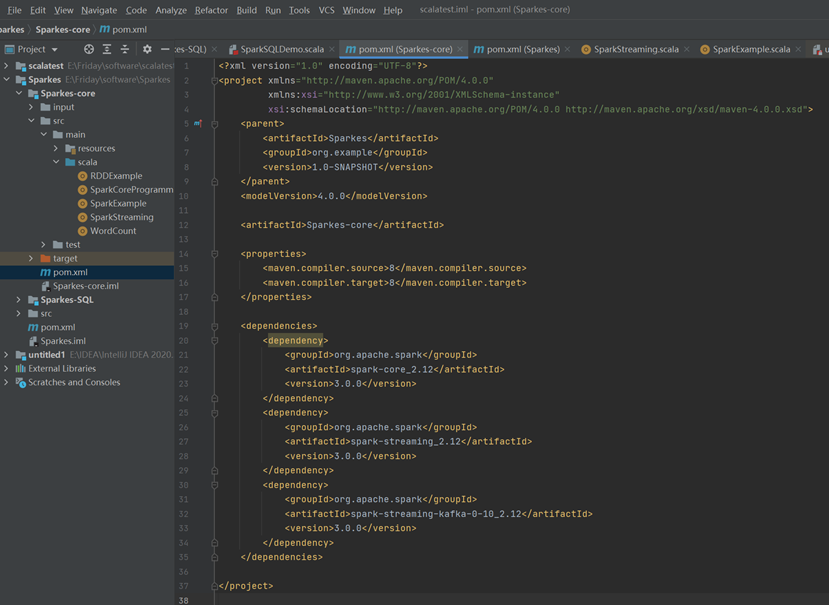

Add the dependency

<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-streaming-kafka-0-10_2.12</artifactId>
    <version>3.0.0</version>
</dependency>

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <parent>
        <artifactId>Sparkes</artifactId>
        <groupId>org.example</groupId>
        <version>1.0-SNAPSHOT</version>
    </parent>
    <modelVersion>4.0.0</modelVersion>

    <artifactId>Sparkes-core</artifactId>

    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.12</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-streaming_2.12</artifactId>
            <version>3.0.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-streaming-kafka-0-10_2.12</artifactId>
            <version>3.0.0</version>
        </dependency>
    </dependencies>
</project>

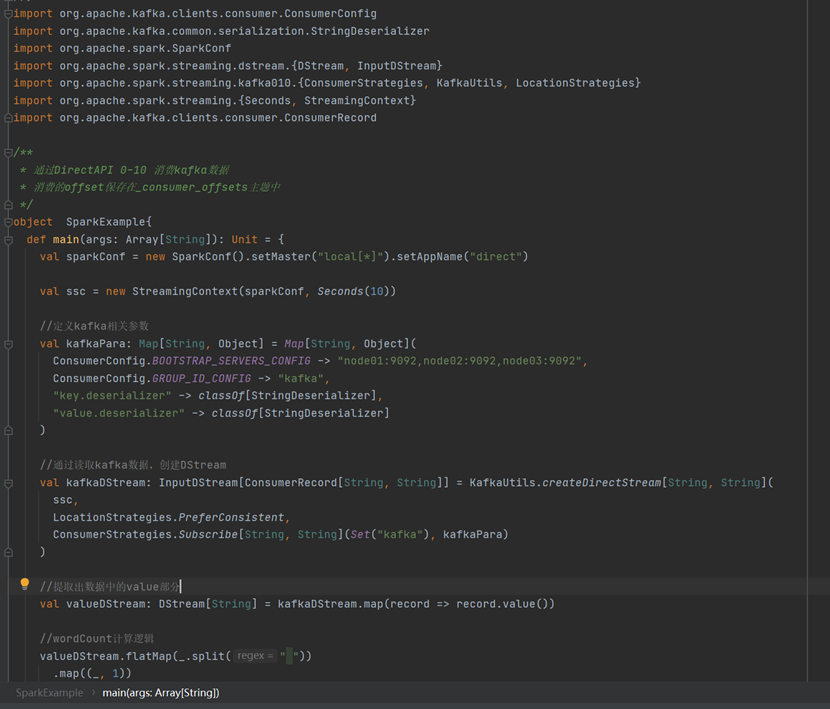

Write the code

import org.apache.kafka.clients.consumer.ConsumerConfig

import org.apache.kafka.common.serialization.StringDeserializer

import org.apache.spark.SparkConf

import org.apache.spark.streaming.dstream.{DStream, InputDStream}

import org.apache.spark.streaming.kafka010.{ConsumerStrategies, KafkaUtils, LocationStrategies}

import org.apache.spark.streaming.{Seconds, StreamingContext}

import org.apache.kafka.clients.consumer.ConsumerRecord

/**

* 通过DirectAPI 0-10 消费kafka数据

* 消费的offset保存在_consumer_offsets主题中

*/

object SparkExample{

def main(args: Array[String]): Unit = {

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("direct")

val ssc = new StreamingContext(sparkConf, Seconds(10))

//定义kafka相关参数

val kafkaPara: Map[String, Object] = Map[String, Object](

ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG -> "node01:9092,node02:9092,node03:9092",

ConsumerConfig.GROUP_ID_CONFIG -> "kafka",

"key.deserializer" -> classOf[StringDeserializer],

"value.deserializer" -> classOf[StringDeserializer]

)

//通过读取kafka数据,创建DStream

val kafkaDStream: InputDStream[ConsumerRecord[String, String]] = KafkaUtils.createDirectStream[String, String](

ssc,

LocationStrategies.PreferConsistent,

ConsumerStrategies.Subscribe[String, String](Set("kafka"), kafkaPara)

)

//提取出数据中的value部分

val valueDStream: DStream[String] = kafkaDStream.map(record => record.value())

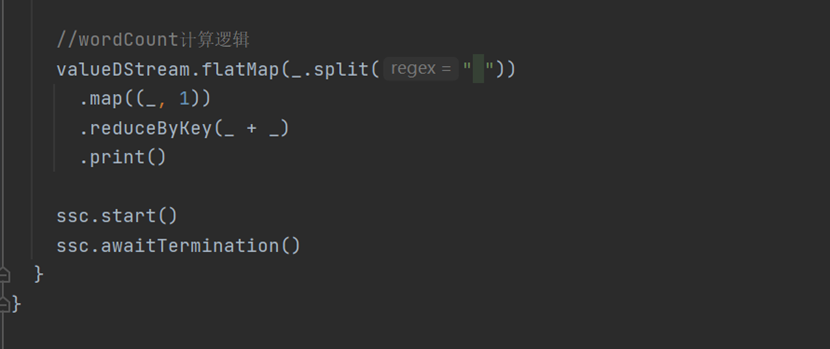

//wordCount计算逻辑

valueDStream.flatMap(_.split(" "))

.map((_, 1))

.reduceByKey(_ + _)

.print()

ssc.start()

ssc.awaitTermination()

}

}
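The word-count chain in the program above can be tried without a Kafka or Spark cluster. The following is a minimal sketch, assuming one micro-batch is represented as a plain Scala `Seq[String]`. `WordCountSketch` and `countWords` are hypothetical names used only for illustration, and `groupBy` followed by a sum stands in for Spark's `reduceByKey`; it is not the Spark API itself.

```scala
object WordCountSketch {
  // Count words in a single batch of lines, mirroring the
  // flatMap -> map -> reduceByKey chain of the streaming job.
  // Assumption: plain collections replace the DStream for illustration.
  def countWords(batch: Seq[String]): Map[String, Int] =
    batch
      .flatMap(_.split(" "))   // split each line into words
      .map((_, 1))             // pair every word with a count of 1
      .groupBy(_._1)           // group the pairs by word
      .map { case (word, pairs) => word -> pairs.map(_._2).sum } // sum counts per word

  def main(args: Array[String]): Unit = {
    // Hypothetical input: lines as they might be typed into the console producer
    val batch = Seq("hello spark", "hello kafka")
    countWords(batch).toSeq.sortBy(_._1).foreach(println)
  }
}
```

In the streaming job the same computation is applied independently to each 10-second batch, so counts are not carried over from one batch to the next.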

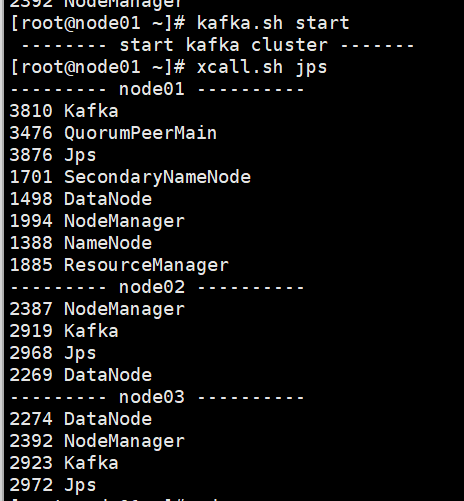

Start the Kafka cluster

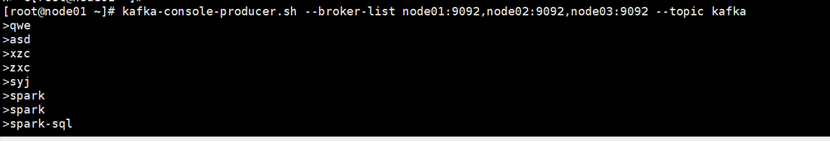

Start a Kafka producer and produce data

kafka-console-producer.sh --broker-list node01:9092,node02:9092,node03:9092 --topic kafka

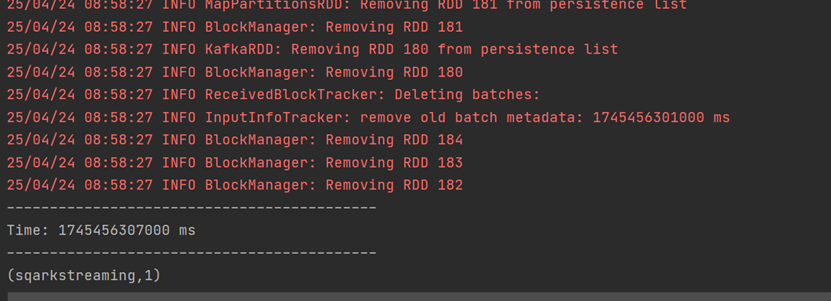

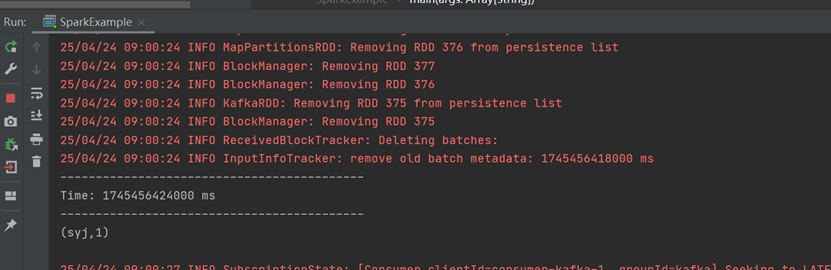

Run the program to receive the data produced to Kafka and process it

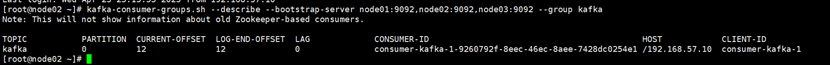

Check the consumption progress

kafka-consumer-groups.sh --describe --bootstrap-server node01:9092,node02:9092,node03:9092 --group kafka
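For each partition, the `--describe` output includes the columns CURRENT-OFFSET, LOG-END-OFFSET, and LAG. As a rough sketch of how these relate, the lag is the log-end offset minus the group's committed offset. The `LagSketch` object, the `PartitionProgress` case class, and the offset values below are hypothetical and serve only to illustrate the arithmetic; they are not read from a real cluster.

```scala
object LagSketch {
  // One row of the describe output, reduced to the offset columns.
  // Assumption: field names are illustrative, not part of any Kafka API.
  final case class PartitionProgress(
      topic: String,
      partition: Int,
      currentOffset: Long, // CURRENT-OFFSET: last offset committed by the group
      logEndOffset: Long   // LOG-END-OFFSET: latest offset in the partition
  ) {
    // LAG: number of messages not yet consumed by the group
    def lag: Long = logEndOffset - currentOffset
  }

  // Total lag of the group across all listed partitions
  def totalLag(rows: Seq[PartitionProgress]): Long = rows.map(_.lag).sum

  def main(args: Array[String]): Unit = {
    // Hypothetical values for the topic "kafka"
    val rows = Seq(
      PartitionProgress("kafka", 0, 120L, 125L),
      PartitionProgress("kafka", 1, 98L, 98L)
    )
    rows.foreach(r => println(s"${r.topic}-${r.partition} lag=${r.lag}"))
    println(s"total lag=${totalLag(rows)}")
  }
}
```

A lag of 0 on every partition means the consumer group has caught up with everything the producer has written so far.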
