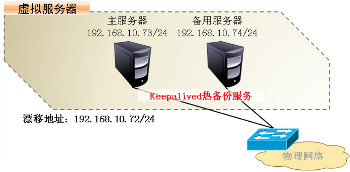

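I. How Keepalived Works

- Keepalived is a high-availability solution for LVS services built on the VRRP protocol; it removes the single point of failure that static routing creates.

- An LVS cluster usually has two server roles, a master (MASTER) and a backup (BACKUP), but it presents a single virtual IP (VIP) to clients. The master sends VRRP advertisements to the backup. When the backup stops receiving them, which it takes to mean the master has failed, it takes over the VIP and keeps serving traffic, preserving availability.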
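How long the backup waits before it declares the master dead follows from the VRRP timers. Here is a minimal sketch, assuming VRRPv2 as specified in RFC 3768, where the master-down interval is 3 × advert_int + (256 − priority) / 256 seconds. The function name `master_down_interval` and the backup priority of 90 are illustrative assumptions, not taken from this post.

```shell
# master_down_interval ADVERT_INT PRIORITY
# Prints the VRRPv2 (RFC 3768) master-down interval in seconds:
#   3 * advert_int + skew_time, where skew_time = (256 - priority) / 256
# PRIORITY is the priority of the backup doing the waiting.
master_down_interval() {
    awk -v a="$1" -v p="$2" 'BEGIN { printf "%.3f\n", 3 * a + (256 - p) / 256 }'
}

# Example: advert_int 1 and a hypothetical backup priority of 90
master_down_interval 1 90
```

With `advert_int 1`, the backup would take over after roughly 3.6 seconds of silence from the master.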

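1. Main Keepalived modules and their roles:

The Keepalived architecture has three main modules: core, check, and vrrp.

- core module: the heart of Keepalived; it starts and maintains the main process, and loads and parses the global configuration file.

- vrrp module: implements the VRRP protocol (health checking and master/backup switchover between the load balancers).

- check module: performs health checks, most commonly port checks and URL checks (health checking of the node servers).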

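2. What a well-built cluster should provide:

- Load balancing: improves cluster performance. Examples: LVS, Nginx, HAProxy, SLB, F5.

- Health checks (probes): cover both the load balancers and the node servers. Examples: Keepalived, Heartbeat.

- Failover: master/backup switchover by moving the VIP. Mechanisms: VRRP, scripts.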

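3. Common health check (probe) methods:

- Heartbeat messages: VRRP advertisements, ping/pong.

- TCP port check: open a TCP connection to the target host's IP:PORT. If the three-way handshake succeeds, the check passes; otherwise it fails.

- HTTP URL check: send an HTTP GET request to a URL on the target host (for example http://IP:PORT/URI). If the response status code is 2XX or 3XX, the check passes; if it is 4XX or 5XX, the check fails.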
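The TCP and HTTP checks above can be sketched as small shell helpers. This is an illustration under stated assumptions, not how Keepalived implements its checkers: the function names are hypothetical, the 3-second timeouts are arbitrary, and `tcp_check` relies on bash's `/dev/tcp` feature plus the coreutils `timeout` command.

```shell
# TCP port check: passes if the three-way handshake completes within 3 seconds.
tcp_check() {
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# Classify an HTTP status code: 2XX and 3XX pass, everything else fails.
status_is_healthy() {
    case "$1" in
        2[0-9][0-9] | 3[0-9][0-9]) return 0 ;;
        *) return 1 ;;
    esac
}

# HTTP URL check: send a GET request and classify the status code.
http_check() {
    code=$(curl -s -o /dev/null --max-time 3 -w '%{http_code}' "$1") || return 1
    status_is_healthy "$code"
}
```

For example, `tcp_check 20.0.0.132 80 && echo up` would probe one of the node servers used later in this post.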

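II. Keepalived Case Study

- Keepalived supports multi-node hot standby; each standby group can contain several servers.

- In a two-node hot standby, failover is implemented by moving the virtual IP address, which works for all kinds of application servers.

- This example builds a two-node hot standby for a web service.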

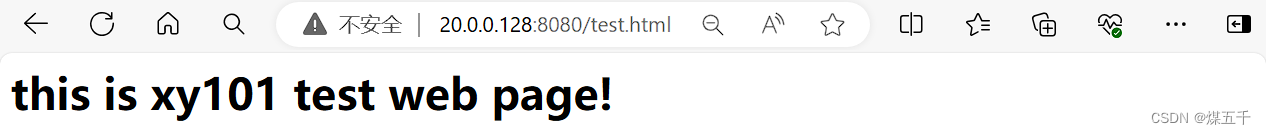

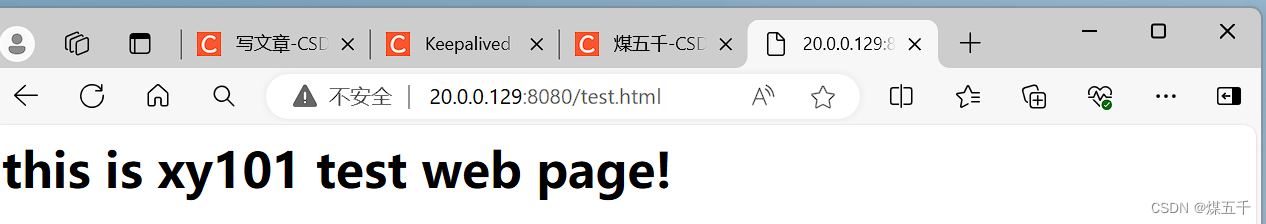

- Master server: 20.0.0.128

- Backup server: 20.0.0.129

- VIP address: 20.0.0.188

III. Installing and Starting Keepalived

- In an LVS cluster environment, the ipvsadm management tool is also required.

- Install Keepalived with YUM.

- Enable the Keepalived service.

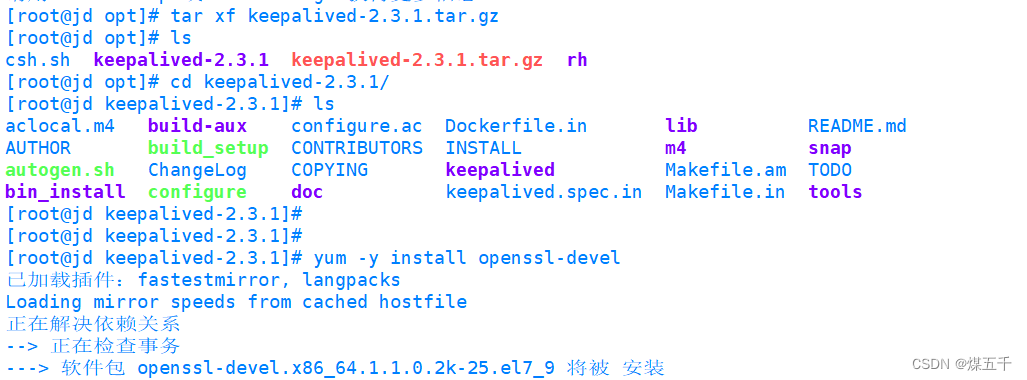

1. Deploy Keepalived

Master server

[root@jd opt]# bash csh.sh

[root@jd opt]# tar xf keepalived-2.3.1.tar.gz

[root@jd opt]# ls

csh.sh keepalived-2.3.1 keepalived-2.3.1.tar.gz rh

[root@jd opt]# cd keepalived-2.3.1/

[root@jd keepalived-2.3.1]# ls

aclocal.m4 build-aux configure.ac Dockerfile.in lib README.md

AUTHOR build_setup CONTRIBUTORS INSTALL m4 snap

autogen.sh ChangeLog COPYING keepalived Makefile.am TODO

bin_install configure doc keepalived.spec.in Makefile.in tools

[root@jd keepalived-2.3.1]# yum -y install openssl-devel

[root@jd keepalived-2.3.1]# yum install libnl-devel

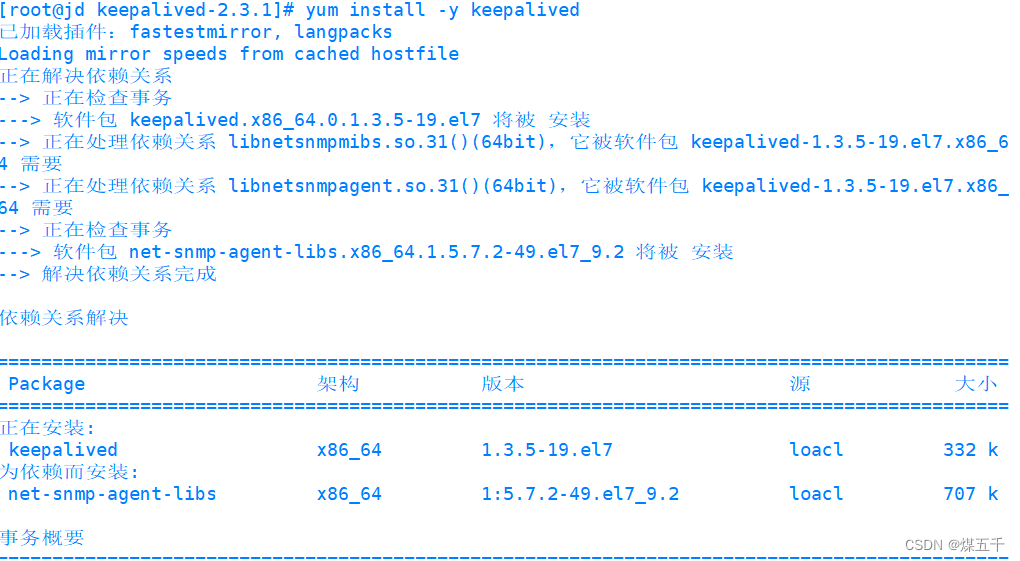

[root@jd keepalived-2.3.1]# yum install -y keepalived

[root@jd etc]# cd /etc/keepalived

[root@jd keepalived]# ls

keepalived.bak keepalived.conf

[root@jd keepalived]# vim keepalived.bak

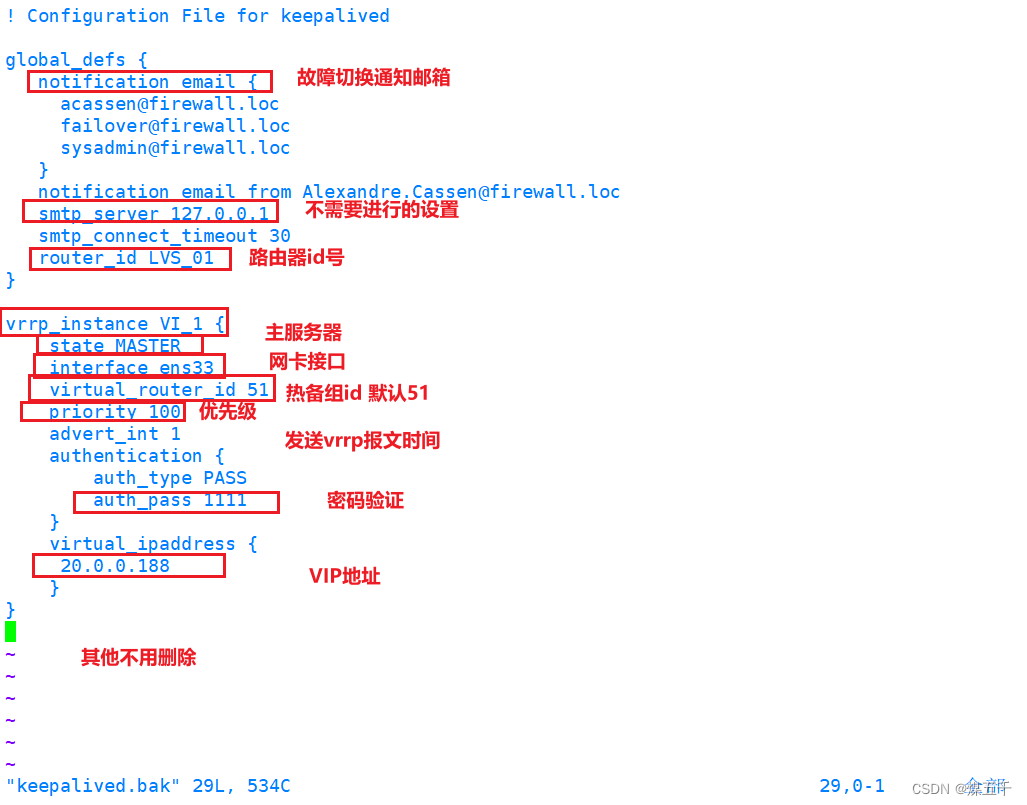

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_01

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

20.0.0.188

}

}

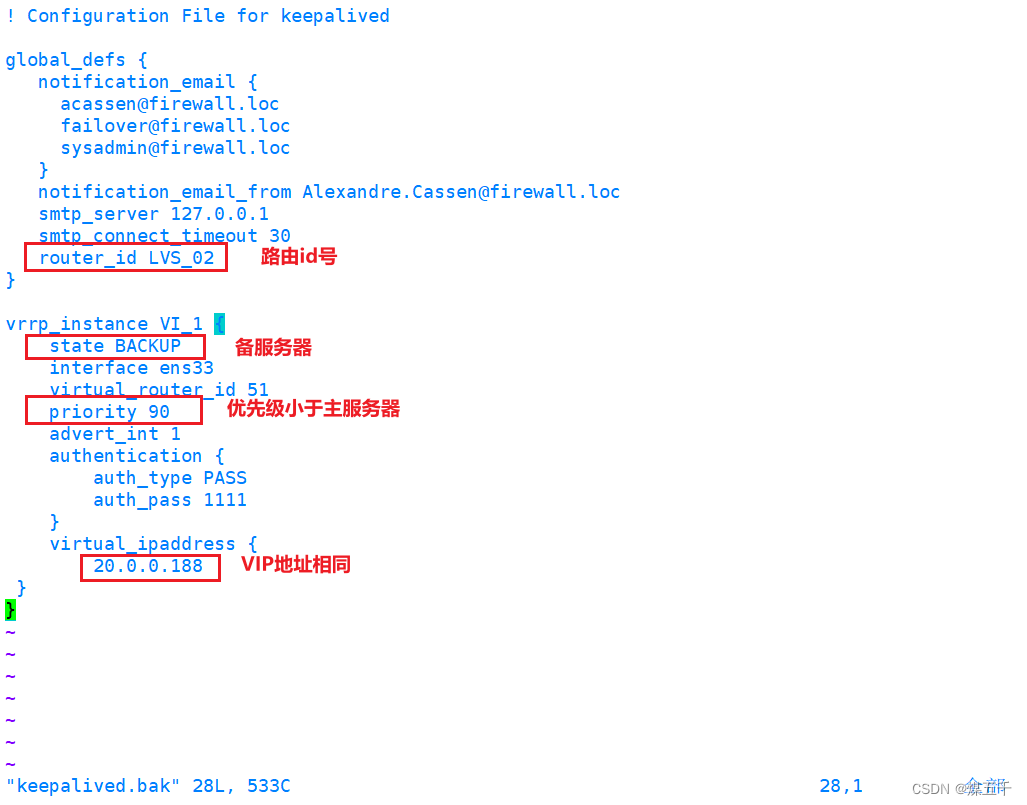

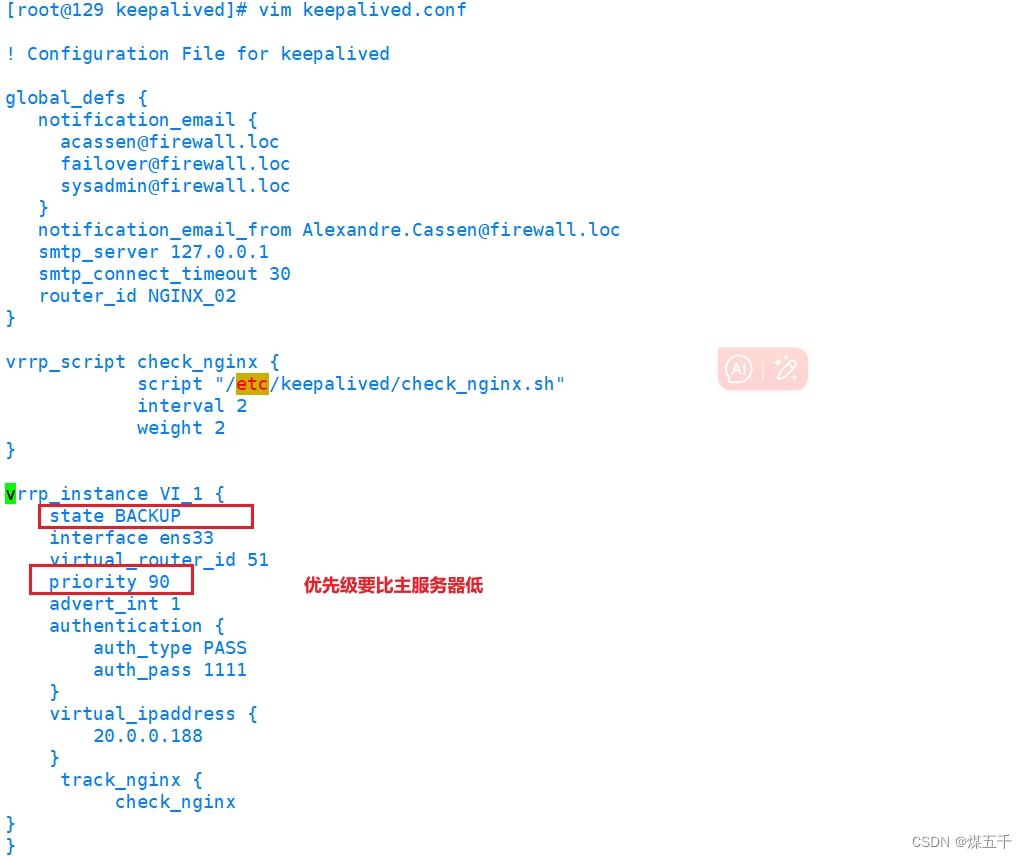

Backup server

The procedure is the same; only the configuration file needs minor changes.
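The post does not show the backup's configuration as text. A common convention, assumed here rather than read from the screenshots, is that the backup uses `state BACKUP`, a lower `priority`, and its own `router_id`, while `virtual_router_id`, the authentication block, and the VIP stay identical. The sketch below derives such a file from the master's; the function name `make_backup_conf` and the default values `90` and `LVS_02` are hypothetical.

```shell
# make_backup_conf MASTER_CONF [PRIORITY] [ROUTER_ID]
# Prints a backup variant of the master's keepalived.conf to stdout.
make_backup_conf() {
    src=$1
    prio=${2:-90}        # assumed: any value below the master's 100
    rid=${3:-LVS_02}     # assumed: any name distinct from the master's
    sed -E \
        -e "s/^([[:space:]]*state[[:space:]]+)MASTER/\\1BACKUP/" \
        -e "s/^([[:space:]]*priority[[:space:]]+)[0-9]+/\\1${prio}/" \
        -e "s/^([[:space:]]*router_id[[:space:]]+).*/\\1${rid}/" \
        "$src"
}
```

A possible use is `make_backup_conf keepalived.conf > keepalived.backup.conf` on the master, then copying the result to /etc/keepalived/keepalived.conf on the backup.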

[root@jd keepalived]# systemctl start keepalived.service

[root@jd keepalived]# systemctl enable keepalived.service

Created symlink from /etc/systemd/system/multi-user.target.wants/keepalived.service to /usr/lib/systemd/system/keepalived.service.

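2. Prepare five servers

For the NFS server and node server configuration, see the post "部署LVS—DR群集" (Deploying an LVS-DR Cluster) on the CSDN blog.

- Load balancers (Keepalived is already installed on both; see the deployment steps above)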

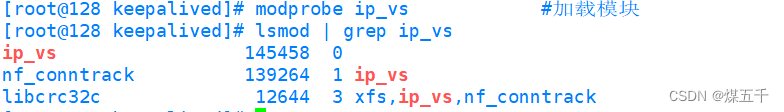

[root@128 keepalived]# modprobe ip_vs # load the ip_vs kernel module

[root@128 keepalived]# lsmod | grep ip_vs

ip_vs 145458 0

nf_conntrack 139264 1 ip_vs

libcrc32c 12644 3 xfs,ip_vs,nf_conntrack

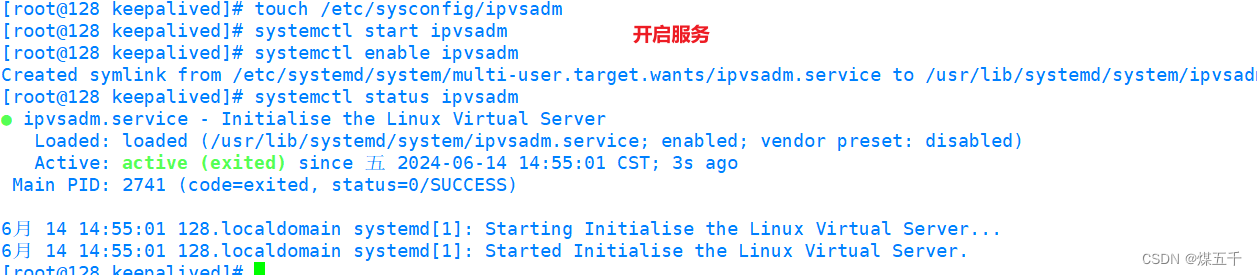

[root@128 keepalived]# touch /etc/sysconfig/ipvsadm

[root@128 keepalived]# systemctl start ipvsadm

[root@128 keepalived]# systemctl enable ipvsadm

Created symlink from /etc/systemd/system/multi-user.target.wants/ipvsadm.service to /usr/lib/systemd/system/ipvsadm.service.

[root@128 keepalived]# systemctl status ipvsadm

● ipvsadm.service - Initialise the Linux Virtual Server

Loaded: loaded (/usr/lib/systemd/system/ipvsadm.service; enabled; vendor preset: disabled)

Active: active (exited) since 五 2024-06-14 14:55:01 CST; 3s ago

Main PID: 2741 (code=exited, status=0/SUCCESS)

6月 14 14:55:01 128.localdomain systemd[1]: Starting Initialise the Linux Virtual Server...

6月 14 14:55:01 128.localdomain systemd[1]: Started Initialise the Linux Virtual Server.

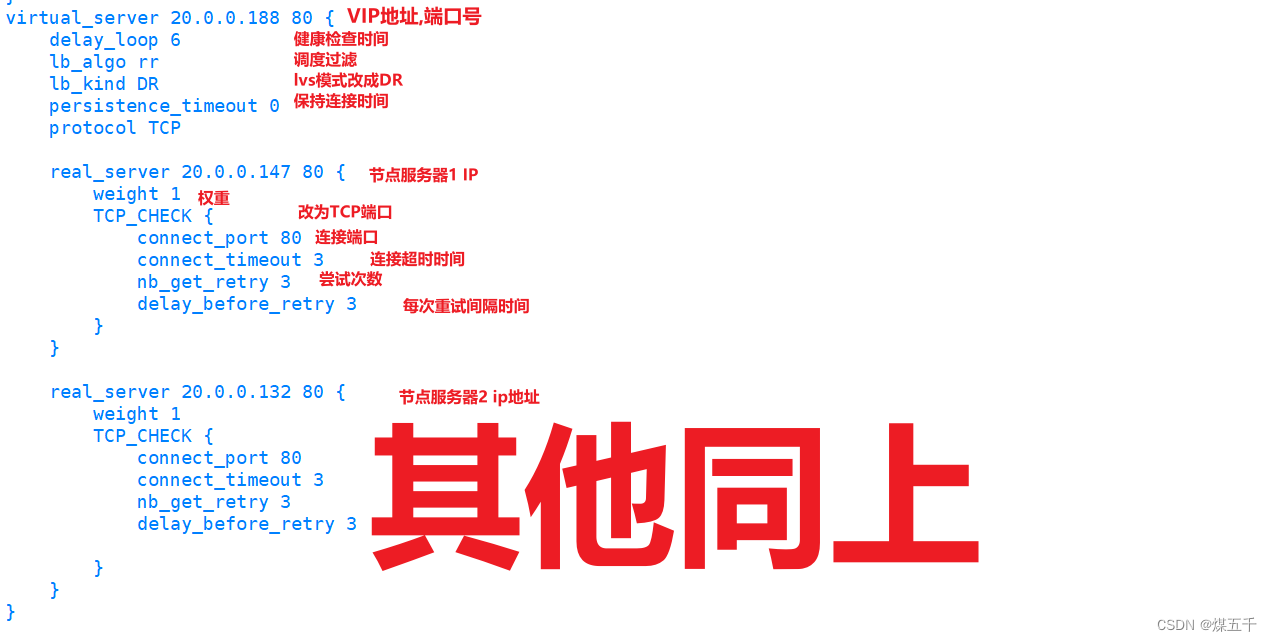

3. TCP port check mode

vim /etc/keepalived/keepalived.conf

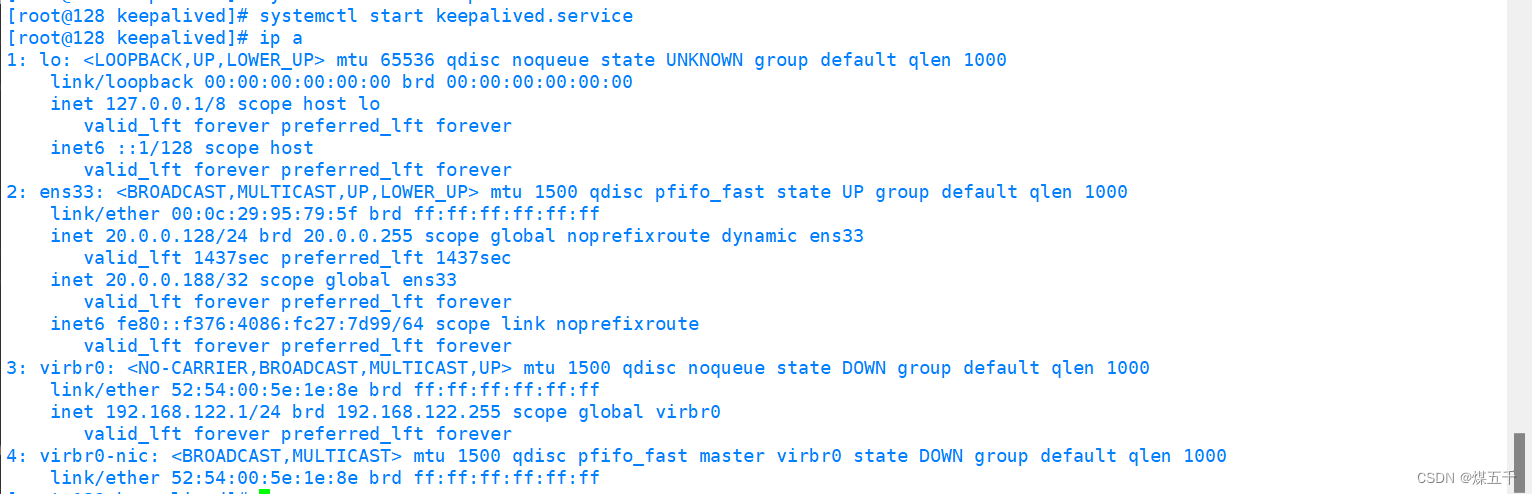

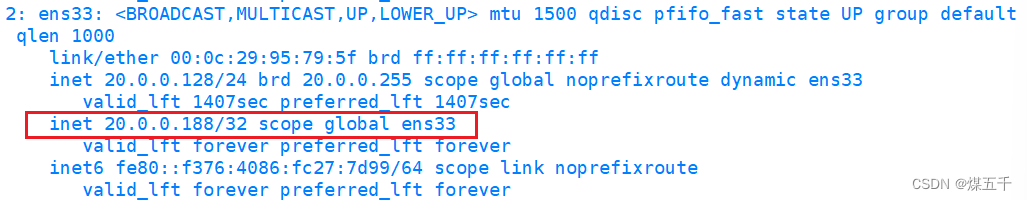
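The screenshots carry the actual configuration. As a text reference, here is a sketch of a `virtual_server` block that uses `TCP_CHECK`, assembled from values that appear elsewhere in this post: the VIP 20.0.0.188, the `rr` scheduler, DR forwarding (shown as `Route` by ipvsadm), and the two node servers. The `delay_loop` and `connect_timeout` values are assumptions, and the helper `print_virtual_server` is hypothetical; it only prints the block so it can be reviewed before being added to keepalived.conf.

```shell
# Prints a sample virtual_server block for /etc/keepalived/keepalived.conf.
print_virtual_server() {
    cat <<'EOF'
virtual_server 20.0.0.188 80 {
    delay_loop 6            # seconds between health checks (assumed value)
    lb_algo rr              # round robin, as shown by ipvsadm -ln
    lb_kind DR              # direct routing
    protocol TCP

    real_server 20.0.0.132 80 {
        weight 1
        TCP_CHECK {
            connect_port 80
            connect_timeout 3   # assumed value
        }
    }

    real_server 20.0.0.147 80 {
        weight 1
        TCP_CHECK {
            connect_port 80
            connect_timeout 3   # assumed value
        }
    }
}
EOF
}
```

If a node's port 80 stops accepting connections, Keepalived would drop that `real_server` from the IPVS table until the check passes again.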

Master server

[root@128 keepalived]# systemctl start keepalived.service

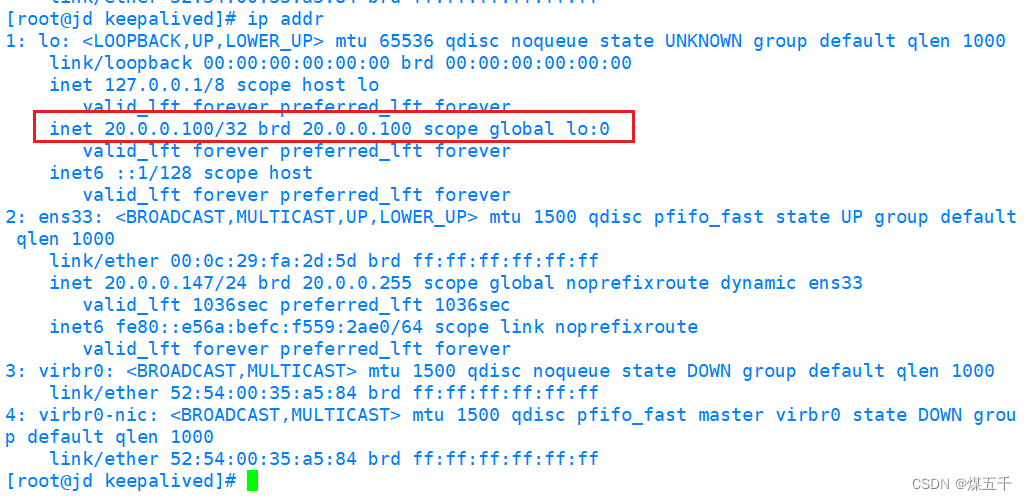

[root@128 keepalived]# ip a

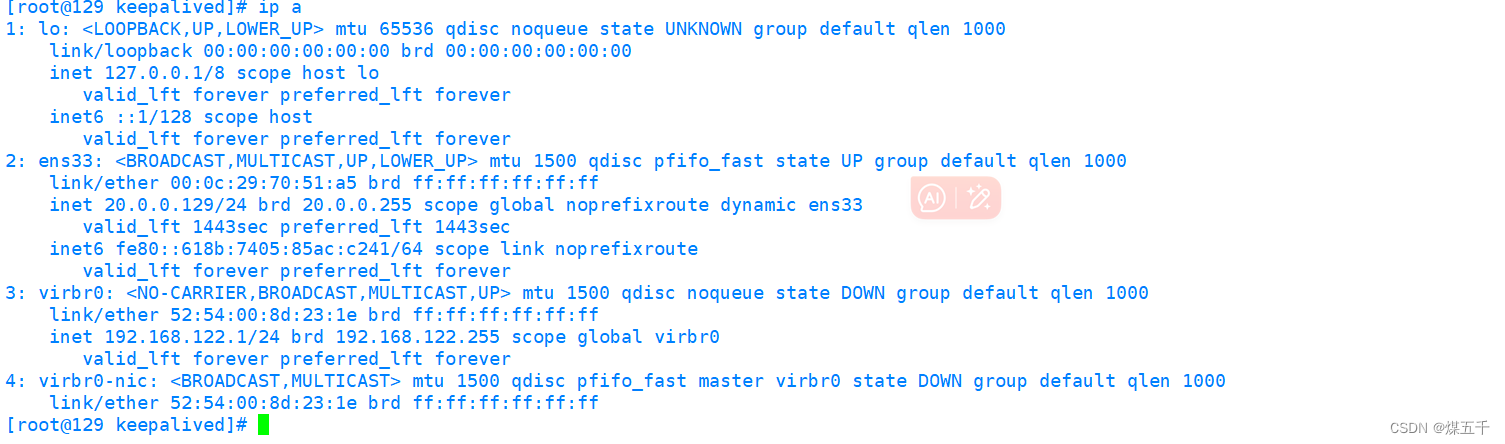

Backup server

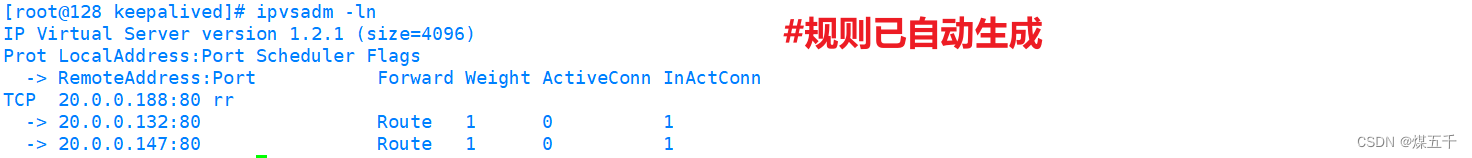

[root@128 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 20.0.0.188:80 rr

-> 20.0.0.132:80 Route 1 0 1

-> 20.0.0.147:80 Route 1 0 1

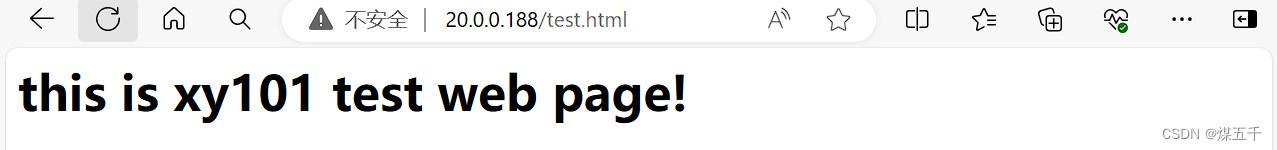

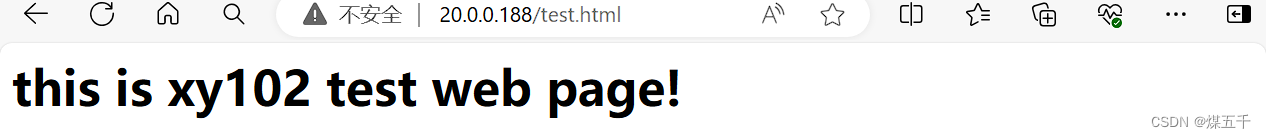

4. Verification

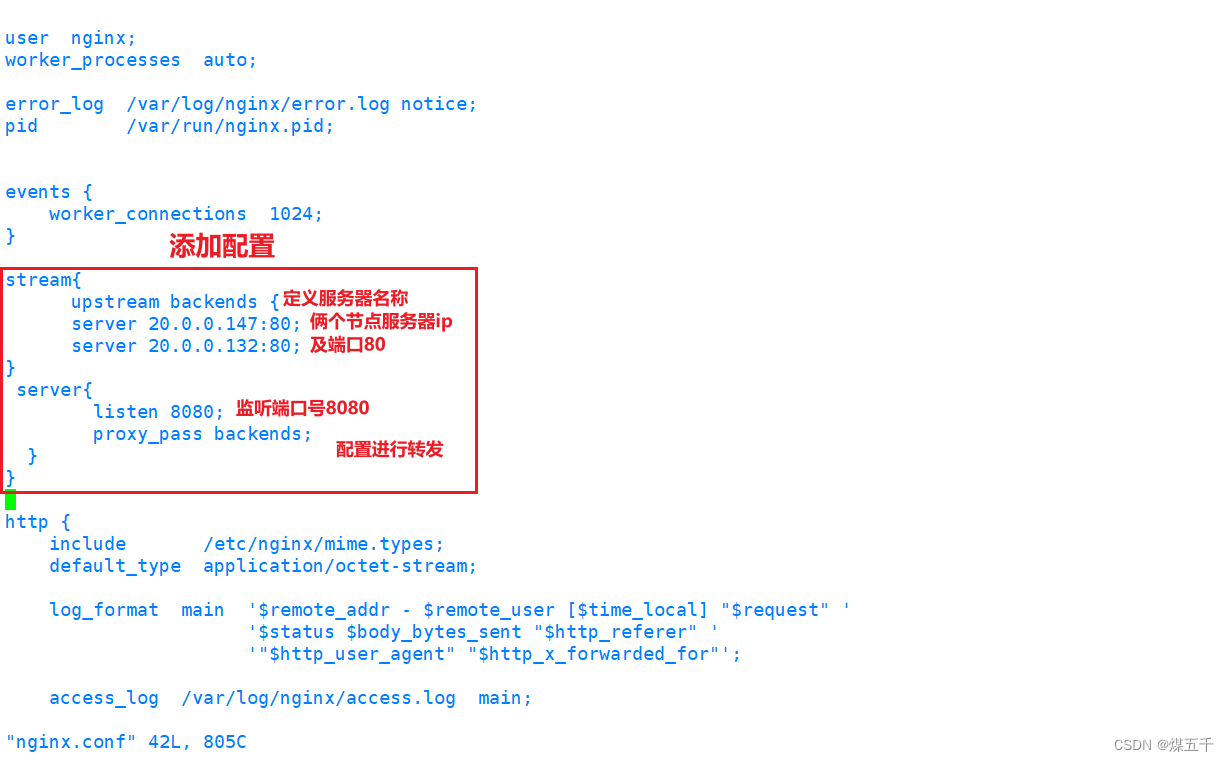

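IV. High Availability with Nginx

The lab environment is the same as in the LVS + Keepalived high-availability load-balancing setup; only the load balancer configuration differs.

1. Install nginx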

[root@128 opt]# yum -y localinstall nginx-1.24.0-1.el7.ngx.x86_64.rpm

[root@128 opt]# cd /etc/nginx/

[root@128 nginx]# vim nginx.conf

[root@128 nginx]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

[root@128 nginx]# vim nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log notice;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

stream{

upstream backends {

server 20.0.0.147:80;

server 20.0.0.132:80;

}

server{

listen 8080;

proxy_pass backends;

}

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

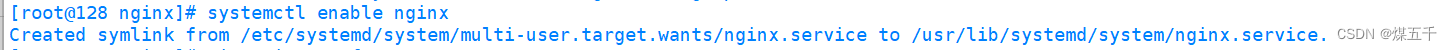

[root@128 nginx]# systemctl enable nginx

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

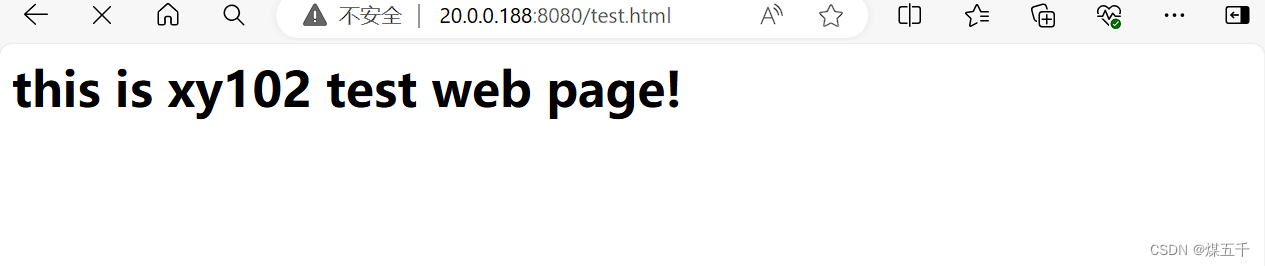

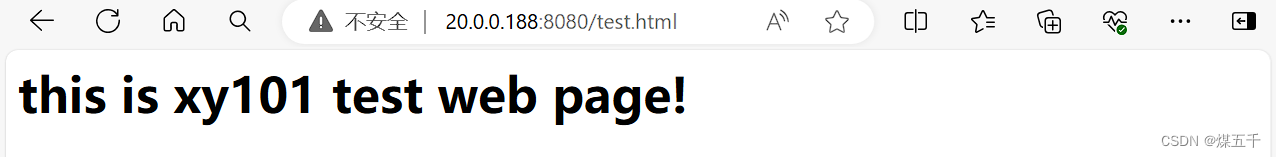

2. Verification

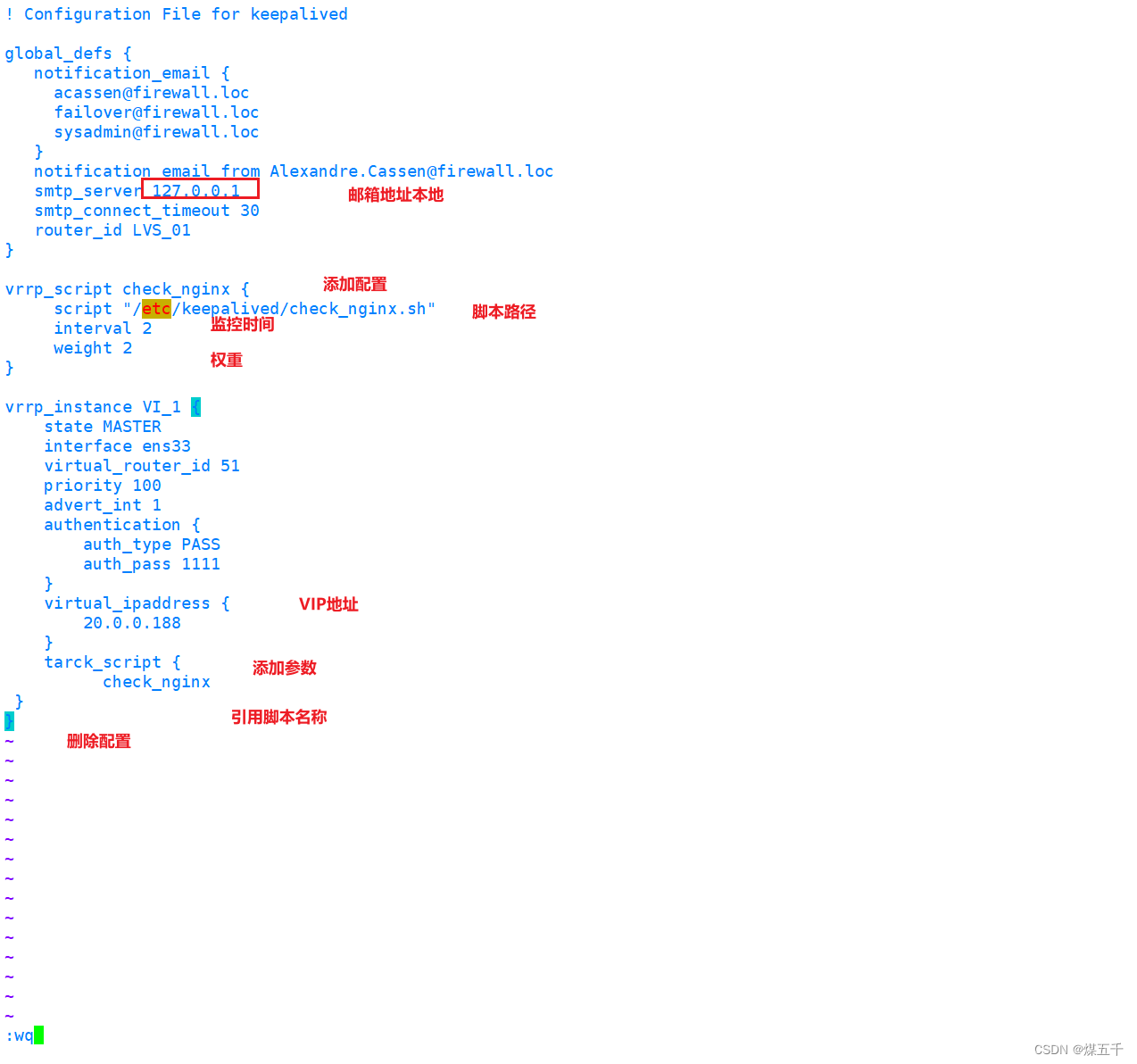

3. High-availability setup

[root@128 opt]# yum install -y keepalived

[root@128 opt]# cd /etc/keepalived

[root@128 keepalived]# touch check_nginx.sh

[root@128 keepalived]# vim check_nginx.sh

[root@128 keepalived]# chmod +x check_nginx.sh
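The contents of check_nginx.sh are not shown in the post. Judging from the failover test later on, where stopping nginx also stops keepalived, the script probably looks something like the sketch below. Treat it as an assumption: `PROBE_CMD` and `STOP_CMD` are hypothetical overrides added here so the logic can be dry-run without touching real services.

```shell
#!/bin/bash
# check_nginx.sh (sketch): stop keepalived when nginx is no longer running,
# so that the VIP moves to the backup server.
PROBE_CMD=${PROBE_CMD:-"pgrep -x nginx"}
STOP_CMD=${STOP_CMD:-"systemctl stop keepalived"}

check_nginx() {
    # nginx is alive: report success and leave keepalived alone
    if $PROBE_CMD >/dev/null 2>&1; then
        return 0
    fi
    # nginx is gone: stop keepalived and report failure
    $STOP_CMD
    return 1
}

# A real script would end by calling: check_nginx
```

Keepalived would typically run such a script through a `vrrp_script` block that the VRRP instance references from `track_script`.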

Master server

[root@128 keepalived]# systemctl start keepalived

[root@128 keepalived]# systemctl enable keepalived

[root@128 keepalived]# systemctl restart keepalived

[root@128 keepalived]# ip a

Backup server

4. Verification

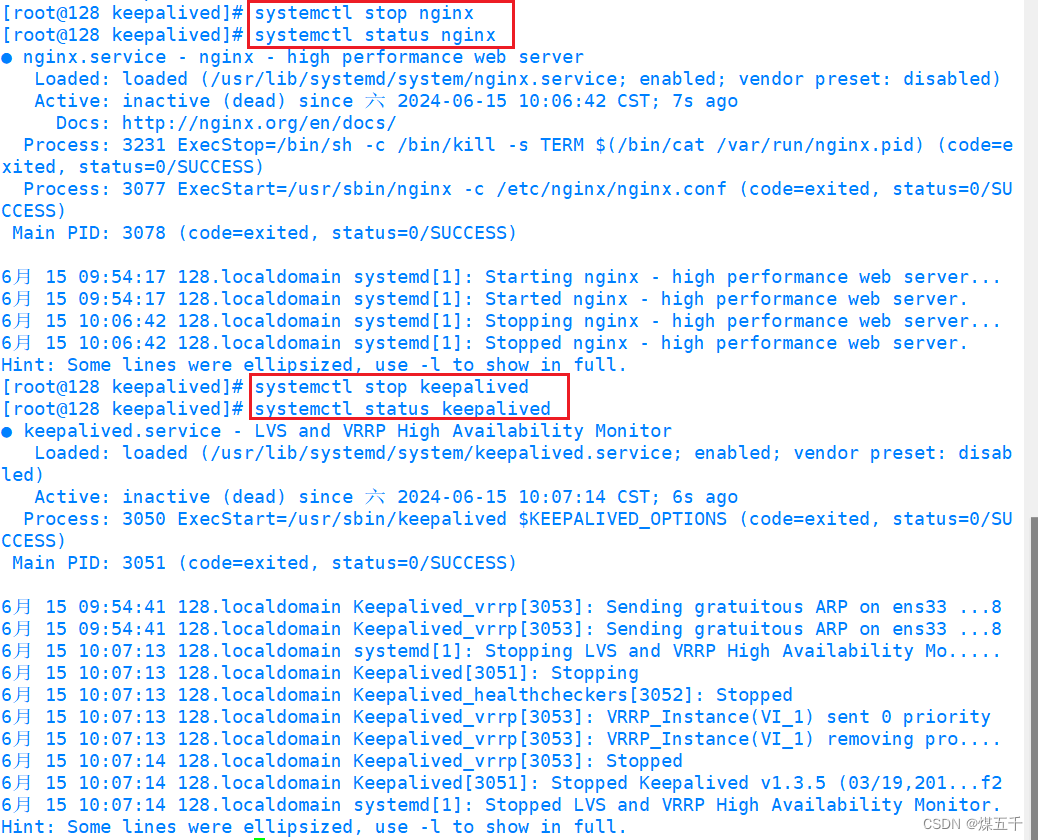

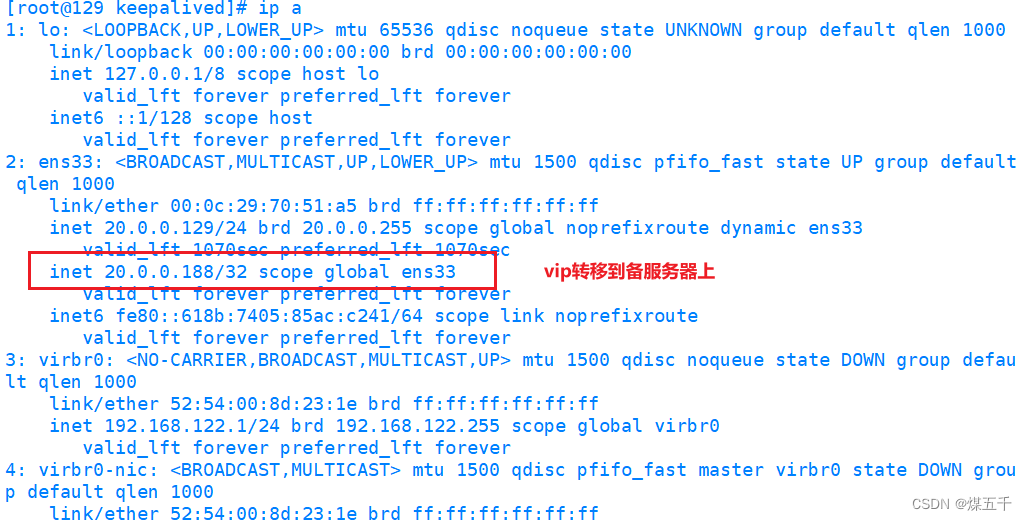

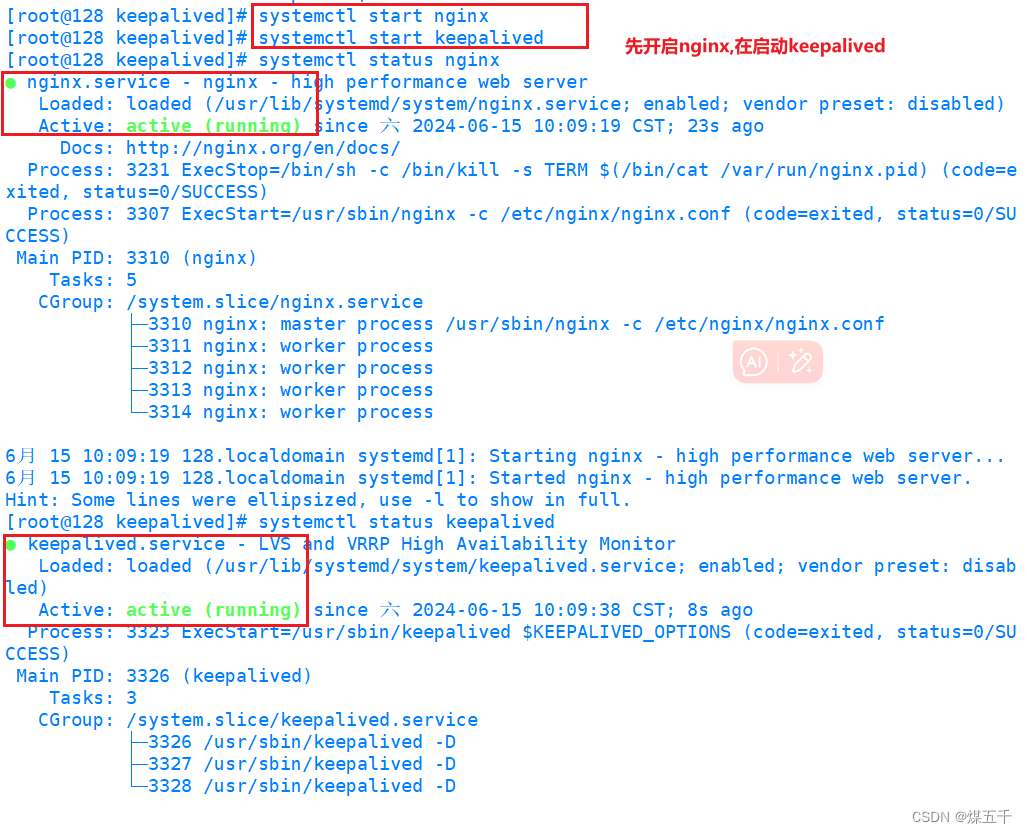

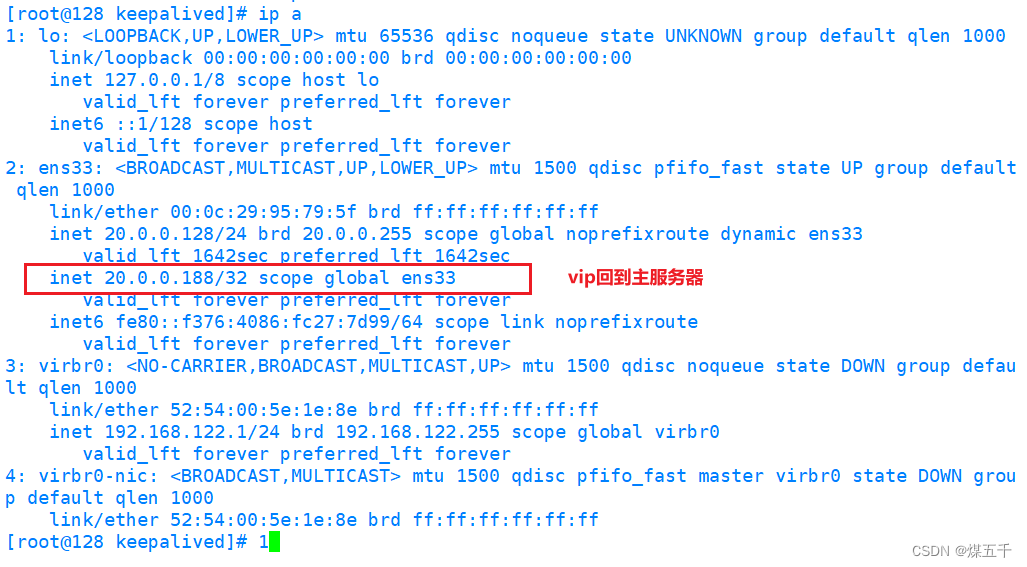

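5. Failover test (after nginx is stopped on the master, keepalived stops as well)

To restore the original state, start nginx on the master first and then start keepalived; otherwise the check script stops keepalived again.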

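V. Keepalived Split-Brain Failure

Symptom:

- The master and the backup both hold the same VIP at the same time.

Cause:

- Communication between the master and the backup is interrupted, so the backup no longer receives the master's VRRP advertisements. It wrongly concludes that the master has failed and brings up the VIP itself with the ip command.

Resolution:

- Stop the keepalived service on either the master or the backup.

Prevention:

- (1) If the system firewall is the cause, disable it or add a rule that allows traffic to the VRRP multicast address (224.0.0.18).

- (2) If the link between the master and the backup is broken, add a second, redundant link between them.

- (3) Run a script on the master that periodically checks the link to the backup; if the link is down, the script stops keepalived on the master.

- (4) Use a third-party tool or monitoring system to detect split-brain; once it is confirmed, have that system stop keepalived on either the master or the backup.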
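The external detection described in item (4) can be sketched as follows: collect the `ip -o -4 addr show` output from each load balancer and count how many of them list the VIP. More than one holder means split-brain. The function names are hypothetical, and how the output is gathered (for example over SSH from a monitoring host) is an assumption.

```shell
VIP=${VIP:-20.0.0.188}

# has_vip IP_OUTPUT: succeeds if the given `ip -o -4 addr show` output lists the VIP.
has_vip() {
    printf '%s\n' "$1" | grep -qF "inet ${VIP}/"
}

# count_vip_holders OUTPUT...: prints how many of the outputs list the VIP.
count_vip_holders() {
    n=0
    for out in "$@"; do
        if has_vip "$out"; then n=$((n + 1)); fi
    done
    echo "$n"
}

# is_split_brain OUTPUT...: succeeds when more than one node holds the VIP.
is_split_brain() {
    [ "$(count_vip_holders "$@")" -gt 1 ]
}

# Possible use from a monitoring host (assumes SSH access to both nodes):
#   is_split_brain "$(ssh 20.0.0.128 ip -o -4 addr show)" \
#                  "$(ssh 20.0.0.129 ip -o -4 addr show)" && echo "split-brain detected"
```

What to do on detection, such as stopping keepalived on one node, is left to the monitoring system, as the list above suggests.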
