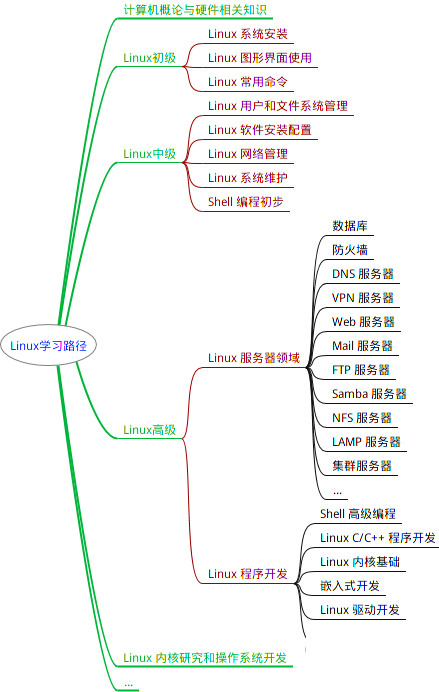

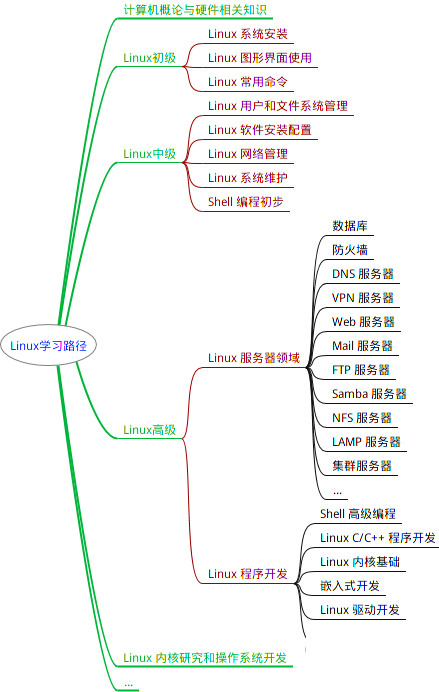

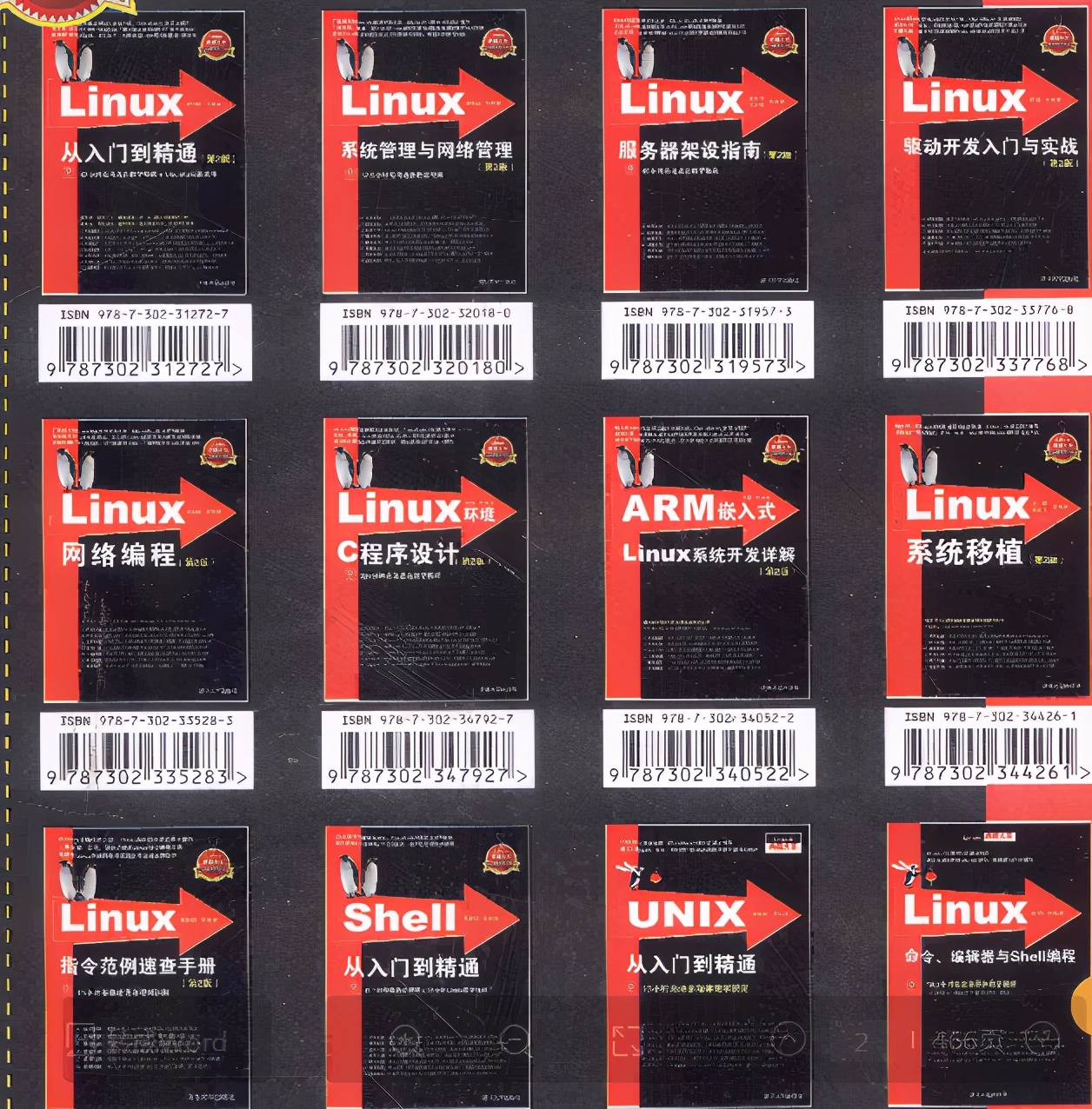

最全的Linux教程,Linux从入门到精通

======================

-

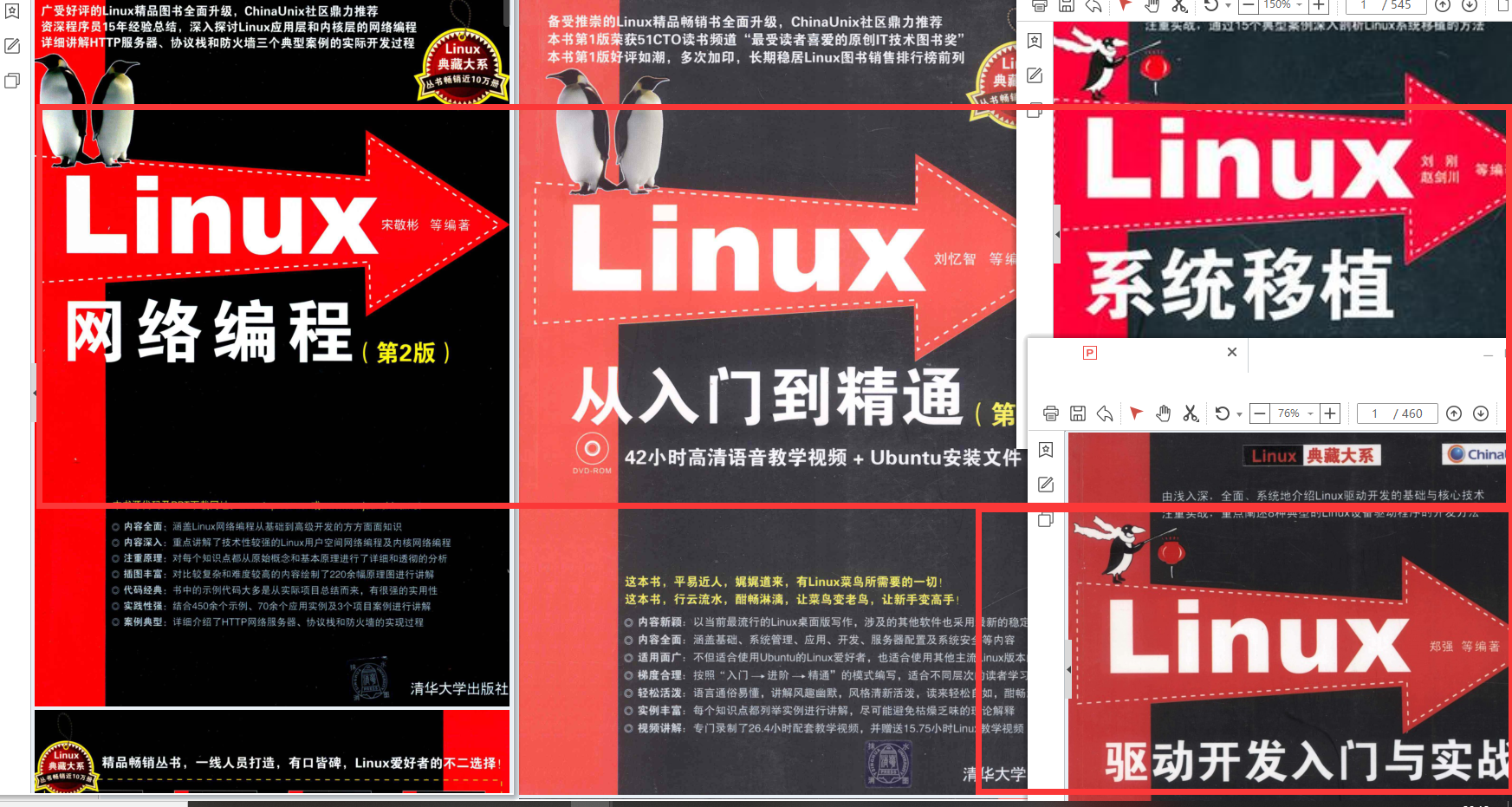

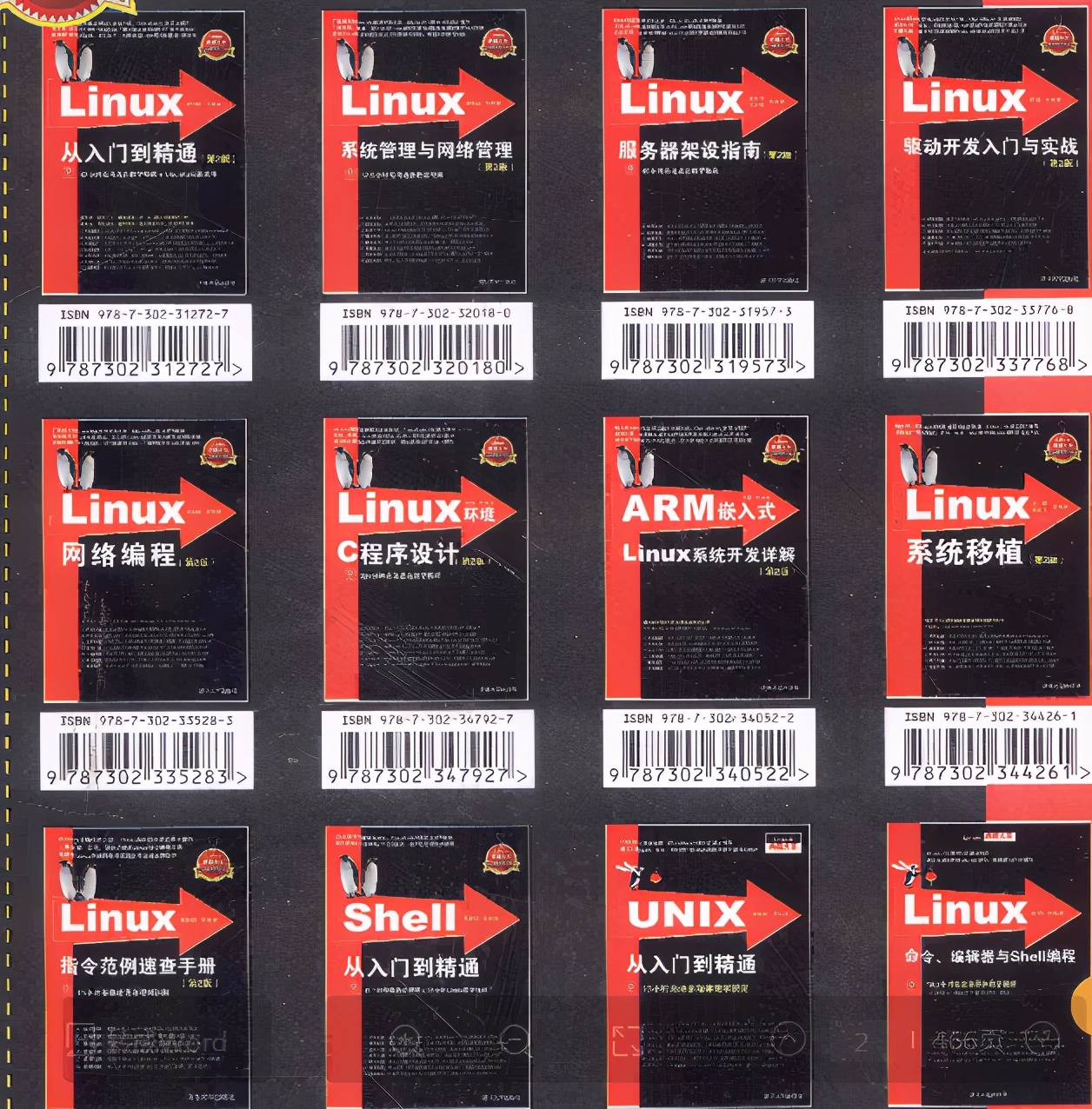

linux从入门到精通(第2版)

-

Linux系统移植

-

Linux驱动开发入门与实战

-

LINUX 系统移植 第2版

-

Linux开源网络全栈详解 从DPDK到OpenFlow

第一份《Linux从入门到精通》466页

====================

内容简介

====

本书是获得了很多读者好评的Linux经典畅销书**《Linux从入门到精通》的第2版**。本书第1版出版后曾经多次印刷,并被51CTO读书频道评为“最受读者喜爱的原创IT技术图书奖”。本书第﹖版以最新的Ubuntu 12.04为版本,循序渐进地向读者介绍了Linux 的基础应用、系统管理、网络应用、娱乐和办公、程序开发、服务器配置、系统安全等。本书附带1张光盘,内容为本书配套多媒体教学视频。另外,本书还为读者提供了大量的Linux学习资料和Ubuntu安装镜像文件,供读者免费下载。

本书适合广大Linux初中级用户、开源软件爱好者和大专院校的学生阅读,同时也非常适合准备从事Linux平台开发的各类人员。

需要《Linux入门到精通》、《linux系统移植》、《Linux驱动开发入门实战》、《Linux开源网络全栈》电子书籍及教程的工程师朋友们劳烦您转发+评论

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

            # Body of the bottleneck block's __init__: 1x1 -> 3x3 -> 1x1 convolutions.
            # The 1x1 convs use stride 1 and no padding so that the main path keeps
            # the same spatial size as the shortcut; only the 3x3 conv carries the stride.
            self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=out_channel,
                                   kernel_size=1, stride=1, bias=False)
            self.bn1 = nn.BatchNorm2d(out_channel)
            self.conv2 = nn.Conv2d(in_channels=out_channel, out_channels=out_channel,
                                   kernel_size=3, stride=stride, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(out_channel)
            self.conv3 = nn.Conv2d(in_channels=out_channel,
                                   out_channels=out_channel * self.expansion,
                                   kernel_size=1, stride=1, bias=False)
            self.bn3 = nn.BatchNorm2d(out_channel * self.expansion)
            self.relu = nn.ReLU(inplace=True)
            self.downsample = downsample

        def forward(self, x):
            identity = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)

            out = self.conv3(out)
            out = self.bn3(out)

            # Project the shortcut when the shape changes, then add it back.
            if self.downsample is not None:
                identity = self.downsample(x)
            out += identity
            out = self.relu(out)
            return out

    import torch
    import torch.nn as nn


    class ResNet(nn.Module):

        def __init__(self,
                     num_outputs=None,  # number of output classes
                     backbone=None,
                     pretrained=False,
                     curriculum_steps=None,
                     extra_outputs=0,
                     share_top_y=True,
                     pred_category=False,
                     block=BasicBlock,
                     block_num=(2, 2, 2, 2),
                     include_top=True,
                     groups=1,
                     width_per_group=64):
            # block_num: number of residual blocks stacked in each stage (layer1..layer4)
            super(ResNet, self).__init__()
            self.include_top = include_top
            self.in_channel = 64  # channels after the first convolution
            self.groups = groups
            self.width_per_group = width_per_group

            self.conv1 = nn.Conv2d(3, self.in_channel, kernel_size=7, stride=2, padding=3,
                                   bias=False)
            self.bn1 = nn.BatchNorm2d(self.in_channel)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            self.layer1 = self._make_layer(block, 64, block_num[0])
            self.layer2 = self._make_layer(block, 128, block_num[1], stride=2)
            self.layer3 = self._make_layer(block, 256, block_num[2], stride=2)
            self.layer4 = self._make_layer(block, 512, block_num[3], stride=2)
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))  # output size = (1, 1)
            self.fc = nn.Linear(512 * block.expansion, 1000)

            # Settings that are not used by the layers defined above.
            image_size = 12
            patch_size = 3  # try 2 later
            dim = 128
            depth = 2
            num_classes = 35
            expansion_factor = 4
            num_patches = (image_size // patch_size) ** 2

            self.curriculum_steps = [0, 0, 0, 0] if curriculum_steps is None else curriculum_steps
            self.share_top_y = share_top_y
            self.extra_outputs = extra_outputs
            self.pred_category = pred_category
            self.sigmoid = nn.Sigmoid()

        def _make_layer(self, block, channel, block_num, stride=1):
            # The first block of a stage needs a projection shortcut whenever the
            # stride or the channel count changes.
            downsample = None
            if stride != 1 or self.in_channel != channel * block.expansion:
                downsample = nn.Sequential(
                    nn.Conv2d(self.in_channel, channel * block.expansion,
                              kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(channel * block.expansion))

            layers = []
            layers.append(block(self.in_channel,
                                channel,
                                downsample=downsample,
                                stride=stride,
                                groups=self.groups,
                                width_per_group=self.width_per_group))
            self.in_channel = channel * block.expansion

            for _ in range(1, block_num):
                layers.append(block(self.in_channel,
                                    channel,
                                    groups=self.groups,
                                    width_per_group=self.width_per_group))

            return nn.Sequential(*layers)

        def forward(self, x, epoch=None, **kwargs):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)  # torch.Size[B 128 12 20]

            x = self.avgpool(x)
            x = x.view(x.size(0), -1)  # flatten to (B, C)
            x = self.fc(x)
            return x
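As a quick check on what `block_num` controls, the nominal depth in a ResNet's name can be recovered from the per-stage block counts. The following is a minimal sketch, not part of the original code: `resnet_depth` and `convs_per_block` are illustrative names, and it assumes the standard layout of 2 convolutions per basic block, 3 per bottleneck block, plus the stem convolution and the final fully connected layer.

```python
def resnet_depth(block_num, convs_per_block):
    """Count weighted layers: stem conv + convs in all residual blocks + final fc."""
    return 1 + convs_per_block * sum(block_num) + 1


print(resnet_depth([2, 2, 2, 2], convs_per_block=2))  # 18
print(resnet_depth([3, 4, 6, 3], convs_per_block=2))  # 34
print(resnet_depth([3, 4, 6, 3], convs_per_block=3))  # 50
```

With the default `block_num` of two blocks per stage and a two-convolution block, this gives the 18 layers that the name resnet18 refers to.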

    if __name__ == "__main__":
        # device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
        device = 'cpu'
        print("-----device:{}".format(device))
        print("-----Pytorch version:{}".format(torch.__version__))

        input_tensor = torch.zeros(1, 3, 100, 100)
        print('input_tensor:', input_tensor.shape)

        # Load the pretrained weights into the unmodified architecture.
        pretrained_file = "./model_resnet18.pt"
        model = ResNet()
        model.load_state_dict(torch.load(pretrained_file))
        model.eval()

        out = model(input_tensor)
        print("out:", out.shape, out[0, 0:10])

The output is:

    -----device:cpu
    -----Pytorch version:1.5.0
    input_tensor: torch.Size([1, 3, 100, 100])
    out: torch.Size([1, 1000]) tensor([ 0.4010, 0.8436, 0.3071, 0.0627, 0.4446, 0.8470, 0.1882, 0.7012,
            0.2988, -0.7574], grad_fn=<SliceBackward>)
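To see why a 100x100 input still ends up as a `[1, 1000]` output, it helps to trace the spatial size through the network. The sketch below is an illustration under stated assumptions: `conv_out_size` and `trace_sizes` are hypothetical helper names, the formula is the usual floor-mode output size for a convolution or pooling layer with dilation 1, and each stage is assumed to apply its stride in a 3x3 convolution with padding 1, as in the basic-block resnet18.

```python
def conv_out_size(size, kernel, stride, padding):
    """Output length of a conv/pool layer along one dimension (floor mode, dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_sizes(size):
    """Return the spatial size after conv1, maxpool, and layer1..layer4."""
    sizes = []
    size = conv_out_size(size, kernel=7, stride=2, padding=3)  # conv1
    sizes.append(size)
    size = conv_out_size(size, kernel=3, stride=2, padding=1)  # maxpool
    sizes.append(size)
    for stride in (1, 2, 2, 2):  # first 3x3 conv of layer1..layer4
        size = conv_out_size(size, kernel=3, stride=stride, padding=1)
        sizes.append(size)
    return sizes


print(trace_sizes(100))  # [50, 25, 25, 13, 7, 4]
```

The final 4x4 feature map is reduced to 1x1 by `nn.AdaptiveAvgPool2d((1, 1))`, so the fully connected layer always receives `512 * block.expansion` features regardless of the input resolution.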

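3. How to load previously trained parameters after modifying the resnet18 architecture

For example:

    # In __init__, rename the layer4 attribute (line 114 of the original file):
    self.layer44 = self._make_layer(block, 512, block_num[3], stride=2)

    # In forward, call the renamed layer (line 166 of the original file):
    x = self.layer44(x)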

Loading the checkpoint directly now fails:

    RuntimeError: Error(s) in loading state_dict for ResNet:
    Missing key(s) in state_dict: "layer44.0.conv1.weight", "layer44.0.bn1.weight", "layer44.0.bn1.bias", "layer44.0.bn1.running_mean", "layer44.0.bn1.running_var", "layer44.0.conv2.weight", "layer44.0.bn2.weight", "layer44.0.bn2.bias", "layer44.0.bn2.running_mean", "layer44.0.bn2.running_var", "layer44.0.downsample.0.weight", "layer44.0.downsample.1.weight", "layer44.0.downsample.1.bias", "layer44.0.downsample.1.running_mean", "layer44.0.downsample.1.running_var", "layer44.1.conv1.weight", "layer44.1.bn1.weight", "layer44.1.bn1.bias", "layer44.1.bn1.running_mean", "layer44.1.bn1.running_var", "layer44.1.conv2.weight", "layer44.1.bn2.weight", "layer44.1.bn2.bias", "layer44.1.bn2.running_mean", "layer44.1.bn2.running_var".
    Unexpected key(s) in state_dict: "layer4.0.conv1.weight", "layer4.0.bn1.weight", "layer4.0.bn1.bias", "layer4.0.bn1.running_mean", "layer4.0.bn1.running_var", "layer4.0.bn1.num_batches_tracked", "layer4.0.conv2.weight", "layer4.0.bn2.weight", "layer4.0.bn2.bias", "layer4.0.bn2.running_mean", "layer4.0.bn2.running_var", "layer4.0.bn2.num_batches_tracked", "layer4.0.downsample.0.weight", "layer4.0.downsample.1.weight", "layer4.0.downsample.1.bias", "layer4.0.downsample.1.running_mean", "layer4.0.downsample.1.running_var", "layer4.0.downsample.1.num_batches_tracked", "layer4.1.conv1.weight", "layer4.1.bn1.weight", "layer4.1.bn1.bias", "layer4.1.bn1.running_mean", "layer4.1.bn1.running_var", "layer4.1.bn1.num_batches_tracked", "layer4.1.conv2.weight", "layer4.1.bn2.weight", "layer4.1.bn2.bias", "layer4.1.bn2.running_mean", "layer4.1.bn2.running_var", "layer4.1.bn2.num_batches_tracked".
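The two lists in this error are just set differences between the parameter names the model expects and the names stored in the checkpoint. A minimal sketch of that comparison, using plain lists of key names so it runs without PyTorch (`diff_keys` is an illustrative helper, not a library function, and the three keys are a shortened stand-in for the full state dict):

```python
def diff_keys(model_keys, checkpoint_keys):
    """Return (missing, unexpected) key lists, mirroring the error message above."""
    model_keys, checkpoint_keys = set(model_keys), set(checkpoint_keys)
    missing = sorted(model_keys - checkpoint_keys)      # expected by the model, absent from the file
    unexpected = sorted(checkpoint_keys - model_keys)   # present in the file, unknown to the model
    return missing, unexpected


model_keys = ["conv1.weight", "layer44.0.conv1.weight", "fc.weight"]
checkpoint_keys = ["conv1.weight", "layer4.0.conv1.weight", "fc.weight"]

missing, unexpected = diff_keys(model_keys, checkpoint_keys)
print("missing:", missing)        # ['layer44.0.conv1.weight']
print("unexpected:", unexpected)  # ['layer4.0.conv1.weight']
```

With a real model, the inputs would be `model.state_dict().keys()` and the keys of the dict returned by `torch.load(pretrained_file)`.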

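Method 1: transfer the pretrained parameters into the new resnet18 architecture. Only parameters whose names exist in both the checkpoint and the new model are transferred; all other parameters keep their random initialization.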

    def transfer_model(pretrained_file, model):
        pretrained_dict = torch.load(pretrained_file)  # get pretrained dict
        model_dict = model.state_dict()  # get model dict
        # Before merging (update), drop the entries of pretrained_dict that the model does not have.
        pretrained_dict = transfer_state_dict(pretrained_dict, model_dict)
        model_dict.update(pretrained_dict)  # merge the pretrained values into the model's parameters
        model.load_state_dict(model_dict)
        return model


    def transfer_state_dict(pretrained_dict, model_dict):
        # state_dict2 = {k: v for k, v in save_model.items() if k in model_dict.keys()}
        state_dict = {}
        for k, v in pretrained_dict.items():
            if k in model_dict.keys():
                # state_dict.setdefault(k, v)
                state_dict[k] = v
            else:
                # The checkpoint has this key but the new model does not, so skip it.
                print("Key not in model, skipped: {}".format(k))
        return state_dict


    if __name__ == "__main__":
        input_tensor = torch.zeros(1, 3, 100, 100)
        print('input_tensor:', input_tensor.shape)
        pretrained_file = "./model_resnet18.pt"
        # model = resnet18()
        # model.load_state_dict(torch.load(pretrained_file))
        # model.eval()
        # out = model(input_tensor)
        # print("out:", out.shape, out[0, 0:10])

        model1 = ResNet()
        model1 = transfer_model(pretrained_file, model1)
        out1 = model1(input_tensor)
        print("out1:", out1.shape, out1[0, 0:10])
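Filtering by name alone still fails if a layer keeps its name but changes shape, for example when the final `fc` layer is resized for a different number of classes. One possible extension, sketched here as an assumption rather than as part of the original method, also compares shapes before copying. `filter_compatible` is a hypothetical helper, and `FakeTensor` is a stand-in with only a `.shape` attribute so the example runs without PyTorch; real tensors expose the same attribute.

```python
from collections import namedtuple

# Stand-in for a tensor: only the .shape attribute is used in this sketch.
FakeTensor = namedtuple("FakeTensor", ["shape"])


def filter_compatible(pretrained_dict, model_dict):
    """Keep entries whose name AND shape match the model; report the rest."""
    kept, skipped = {}, []
    for key, value in pretrained_dict.items():
        if key in model_dict and tuple(value.shape) == tuple(model_dict[key].shape):
            kept[key] = value
        else:
            skipped.append(key)
    return kept, skipped


pretrained = {
    "conv1.weight": FakeTensor((64, 3, 7, 7)),
    "layer4.0.conv1.weight": FakeTensor((512, 256, 3, 3)),
    "fc.weight": FakeTensor((1000, 512)),
}
model = {
    "conv1.weight": FakeTensor((64, 3, 7, 7)),
    "layer44.0.conv1.weight": FakeTensor((512, 256, 3, 3)),  # renamed layer
    "fc.weight": FakeTensor((35, 512)),                      # resized classifier
}

kept, skipped = filter_compatible(pretrained, model)
print(sorted(kept))     # ['conv1.weight']
print(sorted(skipped))  # ['fc.weight', 'layer4.0.conv1.weight']
```

The kept entries can then be merged with `model_dict.update(...)` exactly as `transfer_model` does.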

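Method 2: rename the layers, then transfer the weights

All we did was rename `self.layer4` in the official resnet18 to `self.layer44`, and that single name change is enough to break parameter loading. So how can the pretrained state dict be modified to match the new architecture?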

    def string_rename(old_string, new_string, start, end):
        # Replace old_string[start:end] with new_string.
        new_string = old_string[:start] + new_string + old_string[end:]
        return new_string


    def modify_model(pretrained_file, model, old_prefix, new_prefix):
        '''
        :param pretrained_file: path to the pretrained checkpoint
        :param model: model with the new architecture
        :param old_prefix: list of key prefixes used in the checkpoint
        :param new_prefix: list of replacement prefixes, paired with old_prefix
        :return: the model with the renamed weights loaded
        '''
        pretrained_dict = torch.load(pretrained_file)
        model_dict = model.state_dict()
        state_dict = modify_state_dict(pretrained_dict, model_dict, old_prefix, new_prefix)
        model.load_state_dict(state_dict)
        return model


    def modify_state_dict(pretrained_dict, model_dict, old_prefix, new_prefix):
        '''
        Rename keys of the pretrained state dict so they match the model.
        :param pretrained_dict: state dict loaded from the checkpoint
        :param model_dict: state dict of the new model
        :param old_prefix: list of key prefixes used in the checkpoint
        :param new_prefix: list of replacement prefixes, paired with old_prefix
        :return: state dict whose keys follow the new model's naming
        '''
        state_dict = {}
        for k, v in pretrained_dict.items():
            if k in model_dict.keys():
                # state_dict.setdefault(k, v)
                state_dict[k] = v
            else:
                # The key is unknown to the model: try each old -> new prefix pair.
                for o, n in zip(old_prefix, new_prefix):
                    prefix = k[:len(o)]
                    if prefix == o:
                        kk = string_rename(old_string=k, new_string=n, start=0, end=len(o))
                        print("rename layer modules:{}-->{}".format(k, kk))
                        state_dict[kk] = v
        return state_dict


    if __name__ == "__main__":
        input_tensor = torch.zeros(1, 3, 100, 100)
        print('input_tensor:', input_tensor.shape)
        pretrained_file = "./model_resnet18.pt"
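The prefix renaming that `modify_state_dict` performs can be tried on a plain dict, without PyTorch or a checkpoint file. This is a minimal sketch under assumptions: `rename_prefix` is an illustrative helper, the integer values stand in for tensors, and the prefixes include the trailing dot (`"layer4."`) so that a name such as `layer44.` or `layer40.` is not matched by accident.

```python
def rename_prefix(state_dict, old_prefix, new_prefix):
    """Return a copy of state_dict with old_prefix replaced by new_prefix in the keys."""
    renamed = {}
    for key, value in state_dict.items():
        if key.startswith(old_prefix):
            key = new_prefix + key[len(old_prefix):]
        renamed[key] = value
    return renamed


# Integer values stand in for the real tensors.
checkpoint = {
    "layer3.1.bn2.bias": 0,
    "layer4.0.conv1.weight": 1,
    "layer4.1.bn2.bias": 2,
}

renamed = rename_prefix(checkpoint, "layer4.", "layer44.")
print(list(renamed))
# ['layer3.1.bn2.bias', 'layer44.0.conv1.weight', 'layer44.1.bn2.bias']
```

The values are carried over untouched, so the renamed dict holds the same weights under the names the new architecture expects.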

