先自我介绍一下,小编浙江大学毕业,去过华为、字节跳动等大厂,目前阿里P7

深知大多数程序员,想要提升技能,往往是自己摸索成长,但自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

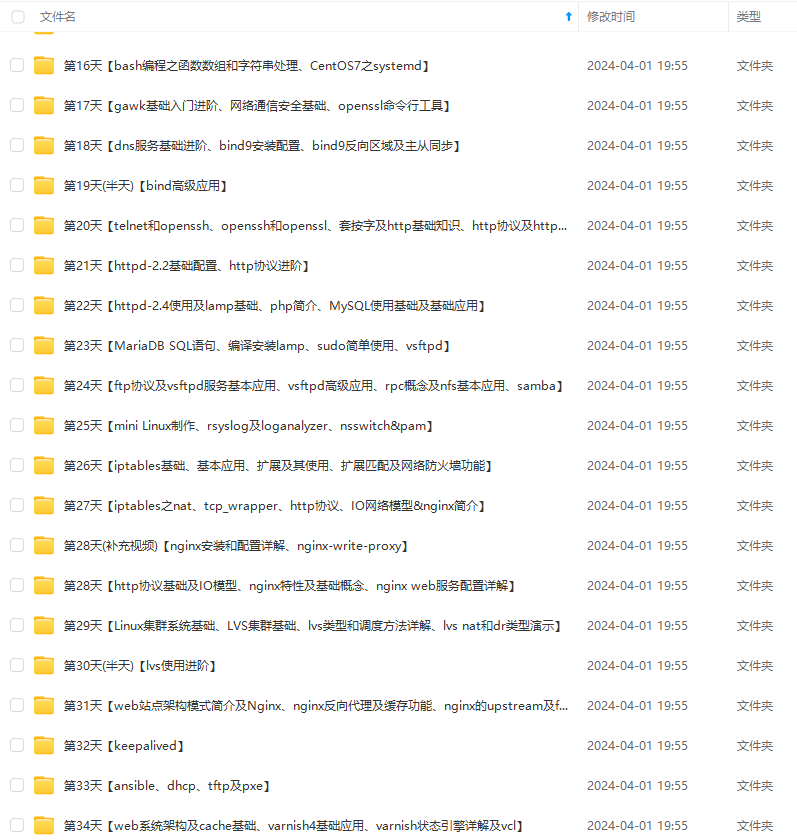

因此收集整理了一份《2024年最新Linux运维全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上运维知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

如果你需要这些资料,可以添加V获取:vip1024b (备注运维)

正文

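(The environment file hadoop-env.sh needs to be edited to declare the Java installation path and the Hadoop configuration directory.)

Edit the configuration file: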

[root@hadoop hadoop]# vim hadoop-env.sh
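The two settings mentioned above usually end up as a pair of export lines in hadoop-env.sh. The excerpt below is only a sketch: the JDK path is an assumption (it depends on how Java was installed), and the configuration directory assumes Hadoop is unpacked under /usr/local/hadoop, the path used in the later steps.

```shell
# hadoop-env.sh (excerpt)
# JAVA_HOME: assumed JDK location; replace it with the path on your machine.
export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk
# HADOOP_CONF_DIR: assumes Hadoop is installed under /usr/local/hadoop.
export HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop
```

If the JDK location is unknown, `readlink -f "$(command -v java)"` may help to find the real directory behind the java command.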

Verify:

[root@hadoop hadoop]# ./bin/hadoop version

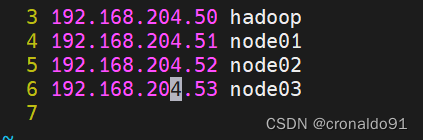

(6) Edit the node list file

[root@hadoop hadoop]# vim slaves

After the edit, the file lists the DataNode hosts, one per line:

node01

node02

node03
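The same file can be produced without an editor. The sketch below writes the host list to a scratch directory so that it is safe to try anywhere; the variable name conf_dir is made up for this example, and on the real cluster the target would be the slaves file edited above.

```shell
# Write the DataNode host list, one hostname per line.
# A scratch directory is used so the sketch does not touch a real installation.
conf_dir="$(mktemp -d)"
printf '%s\n' node01 node02 node03 > "${conf_dir}/slaves"
cat "${conf_dir}/slaves"
```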

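(7) Consult the official documentation

https://hadoop.apache.org/docs/

Documentation for a specific version:

https://hadoop.apache.org/docs/r2.7.7/

Default values of the core configuration file:

https://hadoop.apache.org/docs/r2.7.7/hadoop-project-dist/hadoop-common/core-default.xml

File system parameter: fs.defaultFS

Data directory parameter: hadoop.tmp.dir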

(8) Edit the core configuration file

[root@hadoop hadoop]# vim core-site.xml

After the edit:

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop:9000</value>

<description>hdfs file system</description>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/var/hadoop</value>

</property>

</configuration>

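(9) Consult the HDFS configuration defaults

https://hadoop.apache.org/docs/r2.7.7/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml

NameNode parameters: dfs.namenode.http-address, dfs.namenode.secondary.http-address

Replica count parameter: dfs.replication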

(10) Edit the HDFS configuration file

[root@hadoop hadoop]# vim hdfs-site.xml

After the edit:

<configuration>

<property>

<name>dfs.namenode.http-address</name>

<value>hadoop:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop:50090</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

</configuration>
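Each property element in a Hadoop configuration file is expected to carry exactly one name and one value. A rough sanity check, sketched here under the assumption that every tag sits on its own line as in the listings in this article, is to compare the number of property tags with the number of name tags. The function name check_props is made up for this example.

```shell
# Compare the number of <property> and <name> tags in a Hadoop XML config file.
# Assumes one tag per line; succeeds only when the two counts match.
check_props() {
  cfg_file="$1"
  prop_count=$(grep -c '<property>' "$cfg_file" || true)
  name_count=$(grep -c '<name>' "$cfg_file" || true)
  echo "properties=${prop_count} names=${name_count}"
  [ "$prop_count" -eq "$name_count" ]
}

# Demo on a throwaway file with two well-formed properties.
demo="$(mktemp)"
cat > "$demo" <<'EOF'
<configuration>
<property>
<name>dfs.replication</name>
<value>2</value>
</property>
<property>
<name>dfs.namenode.http-address</name>
<value>hadoop:50070</value>
</property>
</configuration>
EOF
check_props "$demo"
```

On a machine with Hadoop installed, `./bin/hdfs getconf -confKey dfs.replication` prints the value Hadoop actually reads, which is a more direct check than counting tags.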

(11) Check that rsync is installed

[root@hadoop ~]# rpm -q rsync

Synchronize the Hadoop directory to every node:

[root@hadoop ~]# rsync -aXSH --delete /usr/local/hadoop node01:/usr/local/

[root@hadoop ~]# rsync -aXSH --delete /usr/local/hadoop node02:/usr/local/

[root@hadoop ~]# rsync -aXSH --delete /usr/local/hadoop node03:/usr/local/
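The three commands above differ only in the host name, so they can be folded into a loop. The sketch below only prints the commands (a dry run); the function name sync_cmds is made up for this example, and removing the echo would run the transfers for real.

```shell
# Print the rsync command for each host given as an argument.
# Remove "echo" to execute the transfers instead of printing them.
sync_cmds() {
  for host in "$@"; do
    echo rsync -aXSH --delete /usr/local/hadoop "${host}:/usr/local/"
  done
}

sync_cmds node01 node02 node03
```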

(12) Initialize HDFS: create the data directory

[root@hadoop ~]# mkdir /var/hadoop

(13) List the hdfs subcommands

[root@hadoop hadoop]# ./bin/hdfs

Usage: hdfs [--config confdir] [--loglevel loglevel] COMMAND

where COMMAND is one of:

dfs run a filesystem command on the file systems supported in Hadoop.

classpath prints the classpath

namenode -format format the DFS filesystem

secondarynamenode run the DFS secondary namenode

namenode run the DFS namenode

journalnode run the DFS journalnode

zkfc run the ZK Failover Controller daemon

datanode run a DFS datanode

dfsadmin run a DFS admin client

haadmin run a DFS HA admin client

fsck run a DFS filesystem checking utility

balancer run a cluster balancing utility

jmxget get JMX exported values from NameNode or DataNode.

mover run a utility to move block replicas across

storage types

oiv apply the offline fsimage viewer to an fsimage

oiv_legacy apply the offline fsimage viewer to an legacy fsimage

oev apply the offline edits viewer to an edits file

fetchdt fetch a delegation token from the NameNode

getconf get config values from configuration

groups get the groups which users belong to

snapshotDiff diff two snapshots of a directory or diff the

current directory contents with a snapshot

lsSnapshottableDir list all snapshottable dirs owned by the current user

Use -help to see options

portmap run a portmap service

nfs3 run an NFS version 3 gateway

cacheadmin configure the HDFS cache

crypto configure HDFS encryption zones

storagepolicies list/get/set block storage policies

version print the version

Most commands print help when invoked w/o parameters.

(14) Format HDFS

[root@hadoop hadoop]# ./bin/hdfs namenode -format

Inspect the directory:

[root@hadoop hadoop]# cd /var/hadoop/

[root@hadoop hadoop]# tree .

.
└── dfs
    └── name
        └── current
            ├── fsimage_0000000000000000000
            ├── fsimage_0000000000000000000.md5
            ├── seen_txid
            └── VERSION

3 directories, 4 files
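Formatting a NameNode that already holds metadata discards the existing namespace, so it can be worth checking for the VERSION file shown in the tree above before running the format command again. The following is a minimal sketch; the function name namenode_formatted is made up for this example, and the path /var/hadoop is the hadoop.tmp.dir value used in this article.

```shell
# Succeed if NameNode metadata already exists under the given hadoop.tmp.dir.
# The layout (dfs/name/current/VERSION) is taken from the tree output above.
namenode_formatted() {
  [ -f "$1/dfs/name/current/VERSION" ]
}

if namenode_formatted /var/hadoop; then
  echo "NameNode metadata found; skip 'hdfs namenode -format'"
else
  echo "No NameNode metadata found; formatting is needed"
fi
```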

(15) Start the cluster

Change to the installation directory:

[root@hadoop hadoop]# cd ~

[root@hadoop ~]# cd /usr/local/hadoop/

[root@hadoop hadoop]# ls

Start HDFS:

[root@hadoop hadoop]# ./sbin/start-dfs.sh

Check the logs (a logs directory is newly created):

[root@hadoop hadoop]# cd logs/ ; ll

Check the Java processes with jps:

[root@hadoop hadoop]# jps

Run jps on each DataNode host as well (node01, node02, node03); each of them should show a DataNode process.
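The jps check can be scripted. jps prints one "pid name" pair per line, so a small filter can tell whether a given daemon is present. The function name has_daemon is made up for this example, and the sample input uses invented PIDs purely for illustration.

```shell
# Succeed if the jps output on stdin lists a process with the given name.
has_daemon() {
  awk '{print $2}' | grep -qx "$1"
}

# Illustrative input only: the PIDs below are made up.
printf '2301 DataNode\n2417 Jps\n' | has_daemon DataNode && echo "DataNode is running"
```

Against a real node this could be used as `ssh node01 jps | has_daemon DataNode`, assuming passwordless SSH is set up and jps is on the PATH of non-interactive shells.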

(16) List the dfsadmin subcommands

[root@hadoop hadoop]# ./bin/hdfs dfsadmin

Usage: hdfs dfsadmin

Note: Administrative commands can only be run as the HDFS superuser.

[-report [-live] [-dead] [-decommissioning]]

[-safemode <enter | leave | get | wait>]

[-saveNamespace]

[-rollEdits]

[-restoreFailedStorage true|false|check]

[-refreshNodes]

[-setQuota <quota> <dirname>...<dirname>]

[-clrQuota <dirname>...<dirname>]

[-setSpaceQuota <quota> [-storageType <storagetype>] <dirname>...<dirname>]

[-clrSpaceQuota [-storageType <storagetype>] <dirname>...<dirname>]

[-finalizeUpgrade]

[-rollingUpgrade [<query|prepare|finalize>]]

[-refreshServiceAcl]

[-refreshUserToGroupsMappings]

[-refreshSuperUserGroupsConfiguration]

[-refreshCallQueue]

[-refresh <host:ipc_port> <key> [arg1..argn]

[-reconfig <datanode|...> <host:ipc_port> <start|status>]

[-printTopology]

[-refreshNamenodes datanode_host:ipc_port]

[-deleteBlockPool datanode_host:ipc_port blockpoolId [force]]

[-setBalancerBandwidth <bandwidth in bytes per second>]

[-fetchImage <local directory>]

[-allowSnapshot <snapshotDir>]

[-disallowSnapshot <snapshotDir>]

[-shutdownDatanode <datanode_host:ipc_port> [upgrade]]

[-getDatanodeInfo <datanode_host:ipc_port>]

[-metasave filename]

[-triggerBlockReport [-incremental] <datanode_host:ipc_port>]

[-help [cmd]]

Generic options supported are

-conf <configuration file> specify an application configuration file

-D <property=value> use value for given property

-fs <local|namenode:port> specify a namenode

-jt <local|resourcemanager:port> specify a ResourceManager

-files <comma separated list of files> specify comma separated files to be copied to the map reduce cluster

-libjars <comma separated list of jars> specify comma separated jar files to include in the classpath.

-archives <comma separated list of archives> specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is

bin/hadoop command [genericOptions] [commandOptions]

(17) Verify the cluster

View the report; it shows three live DataNodes:

[root@hadoop hadoop]# ./bin/hdfs dfsadmin -report

Configured Capacity: 616594919424 (574.25 GB)

Present Capacity: 598915952640 (557.78 GB)

DFS Remaining: 598915915776 (557.78 GB)

DFS Used: 36864 (36 KB)

DFS Used%: 0.00%

Under replicated blocks: 0

Blocks with corrupt replicas: 0

Missing blocks: 0

Missing blocks (with replication factor 1): 0

-------------------------------------------------

Live datanodes (3):

Name: 192.168.204.53:50010 (node03)

Hostname: node03

Decommission Status : Normal

Configured Capacity: 205531639808 (191.42 GB)

DFS Used: 12288 (12 KB)

Non DFS Used: 5620584448 (5.23 GB)

DFS Remaining: 199911043072 (186.18 GB)

DFS Used%: 0.00%

DFS Remaining%: 97.27%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Thu Mar 14 10:30:18 CST 2024

Name: 192.168.204.51:50010 (node01)

Hostname: node01

Decommission Status : Normal

Configured Capacity: 205531639808 (191.42 GB)

DFS Used: 12288 (12 KB)

Non DFS Used: 6028849152 (5.61 GB)

DFS Remaining: 199502778368 (185.80 GB)

DFS Used%: 0.00%

DFS Remaining%: 97.07%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Thu Mar 14 10:30:18 CST 2024

Name: 192.168.204.52:50010 (node02)

Hostname: node02

Decommission Status : Normal

Configured Capacity: 205531639808 (191.42 GB)

DFS Used: 12288 (12 KB)

Non DFS Used: 6029533184 (5.62 GB)

DFS Remaining: 199502094336 (185.80 GB)

DFS Used%: 0.00%

DFS Remaining%: 97.07%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Thu Mar 14 10:30:18 CST 2024

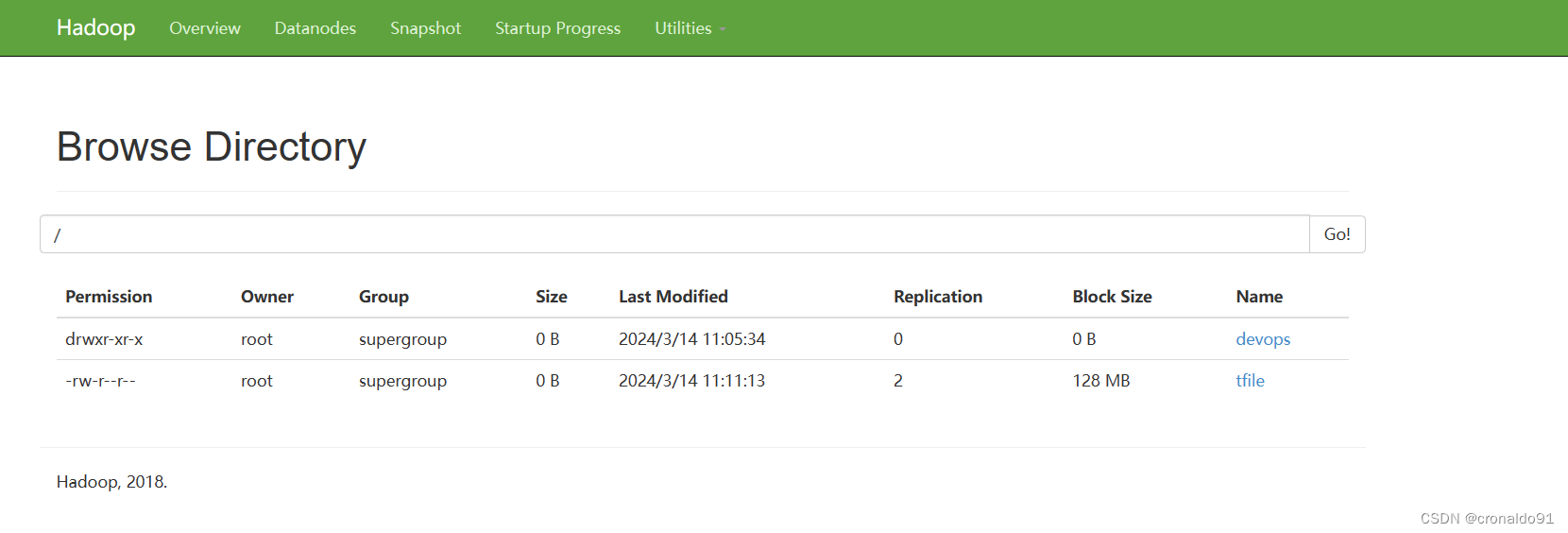
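For a scripted check, the number of live DataNodes can be pulled out of the report header. This is a small sketch; the function name live_datanodes is made up for this example, and the sample line is copied from the report above.

```shell
# Extract the number of live DataNodes from "hdfs dfsadmin -report" output on stdin.
live_datanodes() {
  sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p'
}

# Sample header line taken from the report above.
printf 'Live datanodes (3):\n' | live_datanodes
```

On the cluster itself the same filter would be fed by the real command: `./bin/hdfs dfsadmin -report | live_datanodes`.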

(18) Verify through the web pages

NameNode: http://192.168.204.50:50070/

SecondaryNameNode: http://192.168.204.50:50090/

DataNode (node01): http://192.168.204.51:50075/

(19) Browse the file system

It is currently empty.

3. Using the HDFS file system from Linux

(1) List the available commands

[root@hadoop hadoop]# ./bin/hadoop

Usage: hadoop [--config confdir] [COMMAND | CLASSNAME]

CLASSNAME run the class named CLASSNAME

or

where COMMAND is one of:

fs run a generic filesystem user client

version print the version

jar <jar> run a jar file

note: please use "yarn jar" to launch

YARN applications, not this command.

checknative [-a|-h] check native hadoop and compression libraries availability

distcp <srcurl> <desturl> copy file or directories recursively

archive -archiveName NAME -p <parent path> <src>* <dest> create a hadoop archive

classpath prints the class path needed to get the

credential interact with credential providers

Hadoop jar and the required libraries

daemonlog get/set the log level for each daemon

trace view and modify Hadoop tracing settings

Most commands print help when invoked w/o parameters.

[root@hadoop hadoop]# ./bin/hadoop fs

Usage: hadoop fs [generic options]

[-appendToFile <localsrc> ... <dst>]

[-cat [-ignoreCrc] <src> ...]

[-checksum <src> ...]

[-chgrp [-R] GROUP PATH...]

[-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]

[-chown [-R] [OWNER][:[GROUP]] PATH...]

[-copyFromLocal [-f] [-p] [-l] <localsrc> ... <dst>]

[-copyToLocal [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-count [-q] [-h] <path> ...]

[-cp [-f] [-p | -p[topax]] <src> ... <dst>]

[-createSnapshot <snapshotDir> [<snapshotName>]]

[-deleteSnapshot <snapshotDir> <snapshotName>]

[-df [-h] [<path> ...]]

[-du [-s] [-h] <path> ...]

[-expunge]

[-find <path> ... <expression> ...]

[-get [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-getfacl [-R] <path>]

[-getfattr [-R] {-n name | -d} [-e en] <path>]

[-getmerge [-nl] <src> <localdst>]

[-help [cmd ...]]

[-ls [-d] [-h] [-R] [<path> ...]]

[-mkdir [-p] <path> ...]

[-moveFromLocal <localsrc> ... <dst>]

[-moveToLocal <src> <localdst>]

[-mv <src> ... <dst>]

[-put [-f] [-p] [-l] <localsrc> ... <dst>]

[-renameSnapshot <snapshotDir> <oldName> <newName>]

[-rm [-f] [-r|-R] [-skipTrash] <src> ...]

[-rmdir [--ignore-fail-on-non-empty] <dir> ...]

[-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]

[-setfattr {-n name [-v value] | -x name} <path>]

[-setrep [-R] [-w] <rep> <path> ...]

[-stat [format] <path> ...]

[-tail [-f] <file>]

[-test -[defsz] <path>]

[-text [-ignoreCrc] <src> ...]

[-touchz <path> ...]

[-truncate [-w] <length> <path> ...]

[-usage [cmd ...]]

Generic options supported are

-conf <configuration file> specify an application configuration file

-D <property=value> use value for given property

-fs <local|namenode:port> specify a namenode

-jt <local|resourcemanager:port> specify a ResourceManager

-files <comma separated list of files> specify comma separated files to be copied to the map reduce cluster

-libjars <comma separated list of jars> specify comma separated jar files to include in the classpath.

-archives <comma separated list of archives> specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is

bin/hadoop command [genericOptions] [commandOptions]

(2) List the root directory

[root@hadoop hadoop]# ./bin/hadoop fs -ls /

(3) Create a directory

[root@hadoop hadoop]# ./bin/hadoop fs -mkdir /devops

Check the result in the web UI:

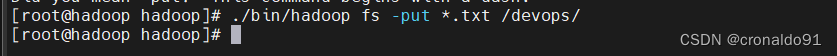

(4) Upload files

[root@hadoop hadoop]# ./bin/hadoop fs -put *.txt /devops/

Check:

[root@hadoop hadoop]# ./bin/hadoop fs -ls /devops/

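Check in the web UI, where the files can also be downloaded:

| Permission | Owner | Group | Size | Last Modified | Replication | Block Size | Name |
| --- | --- | --- | --- | --- | --- | --- | --- |
| -rw-r--r-- | root | supergroup | 84.4 KB | 2024/3/14 11:05:33 | 2 | 128 MB | LICENSE.txt |
| -rw-r--r-- | root | supergroup | 14.63 KB | 2024/3/14 11:05:34 | 2 | 128 MB | NOTICE.txt |
| -rw-r--r-- | root | supergroup | 1.33 KB | 2024/3/14 11:05:34 | 2 | 128 MB | README.txt |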

(5) Create an empty file

[root@hadoop hadoop]# ./bin/hadoop fs -touchz /tfile

Check:

[root@hadoop hadoop]# ./bin/hadoop fs -ls /

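(6) Download a file

[root@hadoop hadoop]# ./bin/hadoop fs -get /tfile /tmp/

Check:

[root@hadoop hadoop]# ls -l /tmp/ | grep tfile

It can also be checked in the web UI.

(7) Compare the listing commands

Because fs.defaultFS was set to hdfs://hadoop:9000 in core-site.xml earlier, the following two commands list the same directory:

[root@hadoop hadoop]# ./bin/hadoop fs -ls /

[root@hadoop hadoop]# ./bin/hadoop fs -ls hdfs://hadoop:9000/

By contrast, the default value in the official documentation is file:///, which refers to the local file system: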

[root@hadoop hadoop]# ./bin/hadoop fs -ls file:///
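The rule behind these three commands is that a path without a scheme is resolved against fs.defaultFS, while a path that already carries a scheme is used as given. The snippet below only imitates that rule for illustration; it is not Hadoop's own code, and the names default_fs and qualify are made up for this example.

```shell
# Illustration only: imitate how a path is resolved against fs.defaultFS.
default_fs="hdfs://hadoop:9000"   # value set in core-site.xml in this article

qualify() {
  case "$1" in
    *://*) echo "$1" ;;                  # already has a scheme, use as-is
    *)     echo "${default_fs%/}$1" ;;   # prepend the default file system
  esac
}

qualify /
qualify /devops
qualify file:///
```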

II. Problems

1. ssh-copy-id error

(1) Error message

/usr/bin/ssh-copy-id: ERROR: ssh: connect to host hadoop port 22: Connection refused

(2) Cause analysis

Host name resolution error: the name hadoop did not resolve to the intended machine.

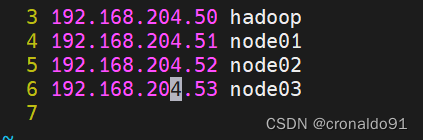

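(3) Solution

Correct the name resolution for the host hadoop so that it points to the intended machine, then run ssh-copy-id again. After the correction the key is copied successfully.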
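A common place to fix such a resolution problem is /etc/hosts. The mapping below is an assumption pieced together from the addresses that appear in this article (192.168.204.50 in the web URLs, 192.168.204.51 to 192.168.204.53 in the dfsadmin report); it may not match the author's actual file. The sketch writes to a scratch file rather than to /etc/hosts, and the function name lookup is made up for this example.

```shell
# Assumed host mapping, derived from addresses shown in this article;
# adjust it to your own network before using it in /etc/hosts.
hosts_file="$(mktemp)"
cat > "$hosts_file" <<'EOF'
192.168.204.50 hadoop
192.168.204.51 node01
192.168.204.52 node02
192.168.204.53 node03
EOF

# Look up the address recorded for a host name in a hosts-format file.
lookup() {
  awk -v name="$2" '$2 == name {print $1}' "$1"
}

lookup "$hosts_file" hadoop
```

On a live system, `getent hosts hadoop` shows what the resolver actually returns for the name, which makes it easy to confirm the fix before retrying ssh-copy-id.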
