
        urls = []
        for link in links:
            # keep only pagination links of the form <fillUrl>pn<number>/
            if StringUtil.filtString(self.fillUrl + r"pn\d+?/", link):
                urls.append(link)
        return urls

    def parseItems(self, html, url):
        # each listing is a div.list-info inside ul.house-list-wrap
        houselist = html.xpath(".//ul[@class='house-list-wrap']//div[@class='list-info']")
        items = []
        for houseinfo in houselist:
            detailurl = houseinfo.xpath(".//h2[1]/a/@href")
            title = "".join(houseinfo.xpath(".//h2[1]/a/text()"))
            # split() + join strips all whitespace from the extracted text
            roomNum = "".join(houseinfo.xpath(".//p[1]/span[1]/text()")[0].split())
            size = "".join(houseinfo.xpath(".//p[1]/span[2]/text()"))
            orient = "".join(houseinfo.xpath(".//p[1]/span[3]/text()"))
            floor = "".join(houseinfo.xpath(".//p[1]/span[4]/text()"))
            address = "".join(("".join(houseinfo.xpath(".//p[2]/span[1]//a/text()"))).split())
            # the price block is a sibling div of div.list-info
            sumprice = "".join(houseinfo.xpath("./following-sibling::div[1]//p[@class='sum']/b/text()"))
            unitprice = "".join(houseinfo.xpath("./following-sibling::div[@class='price']//p[@class='unit']/text()"))
            items.append(HouseItem(
                _id="".join(detailurl),  # the detail-page URL doubles as the MongoDB _id
                title=title,
                roomNum=roomNum,
                size=NumberUtil.fromString(size),
                orient=orient,
                floor=floor,
                address=address,
                sumPrice=NumberUtil.fromString(sumprice),
                unitPrice=NumberUtil.fromString(unitprice),
                city=self.city,
                fromUrl=url,
                nowTime=time.time(),
                status="SUBSPENDING"))
        return items

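    def parse(self, response):
        # response.body is bytes, so compare against bytes; also skip empty bodies
        if not response.body or response.body == b"None":
            return
        doc = html.fromstring(response.body.decode("utf-8"))
        urls = self.parseUrls(doc)
        items = self.parseItems(doc, response.url)
        # follow the pagination links, then emit the items parsed from this page
        for url in urls:
            yield scrapy.Request(url, callback=self.parse)
        for item in items:
            yield item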
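The spider calls two helpers, `StringUtil.filtString` and `NumberUtil.fromString`, whose source is not shown in this post. The sketch below is one guess at how they might behave, using only the standard `re` module: the first reports whether a link matches a pagination pattern, the second pulls the first number out of a string such as a size or a price. The function names, their bodies, the base URL, and the sample strings are all assumptions for illustration, not the author's code.

```python
import re


def filt_string(pattern, text):
    """Hypothetical stand-in for StringUtil.filtString: True if pattern occurs in text."""
    return re.search(pattern, text) is not None


def from_string(text):
    """Hypothetical stand-in for NumberUtil.fromString: first number in text, or None."""
    match = re.search(r"\d+(?:\.\d+)?", text)
    return float(match.group()) if match else None


# Assumed base URL, for illustration only
fill_url = "http://example.com/ershoufang/"
links = [
    "http://example.com/ershoufang/pn2/",
    "http://example.com/ershoufang/12345x.shtml",
]

# re.escape keeps the dots in the base URL from acting as regex wildcards
page_pattern = re.escape(fill_url) + r"pn\d+?/"
page_links = [link for link in links if filt_string(page_pattern, link)]

print(page_links)
print(from_string("89.5㎡"), from_string("no digits"))
```

Returning `None` for strings without digits lets the pipeline tell a missing value apart from a real zero; whether the original helper does the same is not known.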

2.items

import scrapy


class HouseItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    title = scrapy.Field()
    roomNum = scrapy.Field()
    size = scrapy.Field()
    orient = scrapy.Field()
    floor = scrapy.Field()
    address = scrapy.Field()
    sumPrice = scrapy.Field()
    unitPrice = scrapy.Field()
    _id = scrapy.Field()
    imageurl = scrapy.Field()
    fromUrl = scrapy.Field()
    city = scrapy.Field()
    nowTime = scrapy.Field()
    status = scrapy.Field()

3.pipelines

# coding: utf-8
import codecs
import json

import pymongo
from scrapy.utils.project import get_project_settings

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html
from ershoufang.items import ProxyItem

class ErshoufangPipeline(object):
    def __init__(self):
        self.settings = get_project_settings()
        self.client = pymongo.MongoClient(
            host=self.settings['MONGO_IP'],
            port=self.settings['MONGO_PORT'])
        self.db = self.client[self.settings['MONGO_DB']]
        # collection that stores crawled proxies
        self.proxyclient = self.proxy = self.client[self.settings['PROXY_DB']][self.settings['POOL_NAME']]
        self.itemNumber = 0

    def process_proxy(self, item):
        # insert_one replaces Collection.insert, which PyMongo 4 removed
        self.proxyclient.insert_one(dict(item))

    def process_item(self, item, spider):
        if isinstance(item, ProxyItem):
            self.process_proxy(item)
            return item
        try:
            if not item['address']:
                # a listing without an address means the page did not parse correctly
                print(item["fromUrl"] + " page error")
                return item
            '''
            if self.db.ershoufang.count({"_id":item["_id"],"city":item['city']})<= 0:
                print("delete")
                self.db.ershoufang.remove({"_id":item["_id"]})
            '''
            coll = self.db[self.settings['ALL']]
            coll.insert_one(dict(item))
            self.itemNumber += 1
        except Exception as e:
            # assumption: the original listing is cut off after the insert;
            # this minimal handler only reports the error
            print(e)
        return item
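The pipeline reads its MongoDB connection details from the project settings, and Scrapy only runs it if it is registered in `ITEM_PIPELINES`. A minimal `settings.py` fragment might look like the following. The setting names are the ones the pipeline code looks up; every value (host, port, database and collection names, the priority 300) and the module path `ershoufang.pipelines.ErshoufangPipeline` are assumptions to adapt to your own project.

```python
# settings.py (sketch; all values below are placeholders)

# Register the pipeline. Lower numbers run earlier; values are
# conventionally chosen in the 0-1000 range.
ITEM_PIPELINES = {
    "ershoufang.pipelines.ErshoufangPipeline": 300,  # assumed module path
}

# MongoDB connection used by ErshoufangPipeline.__init__
MONGO_IP = "127.0.0.1"
MONGO_PORT = 27017          # must be an int for pymongo.MongoClient
MONGO_DB = "house"          # assumed database name

# Collection that receives the house items (looked up as settings['ALL'])
ALL = "ershoufang"

# Database and collection that receive ProxyItem objects
PROXY_DB = "proxy"
POOL_NAME = "proxy_pool"
```

Because the spider uses the detail-page URL as `_id`, crawling the same listing twice makes the insert fail with a duplicate-key error; the commented-out block in `process_item` suggests the author was experimenting with removing the old record first.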

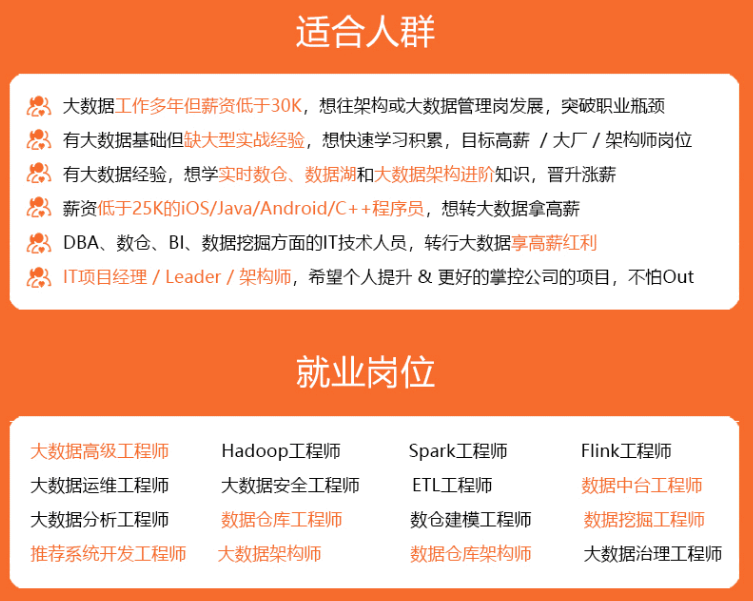

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

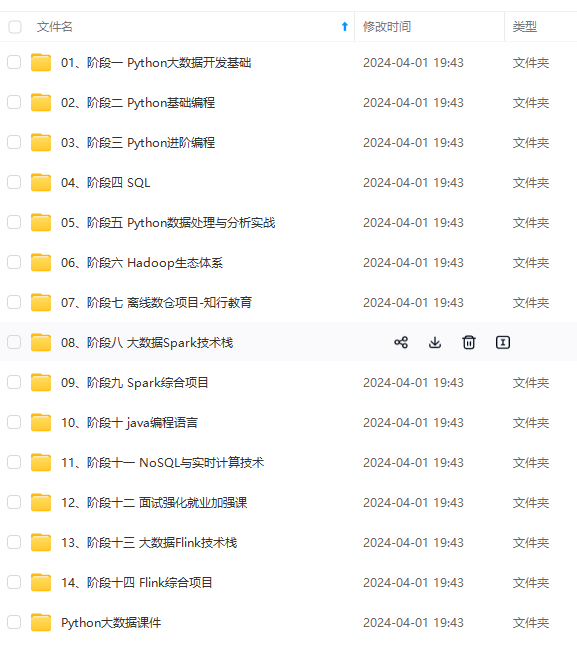

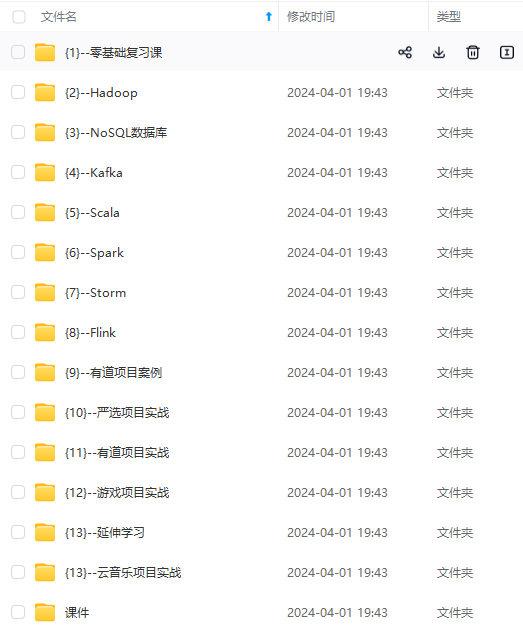

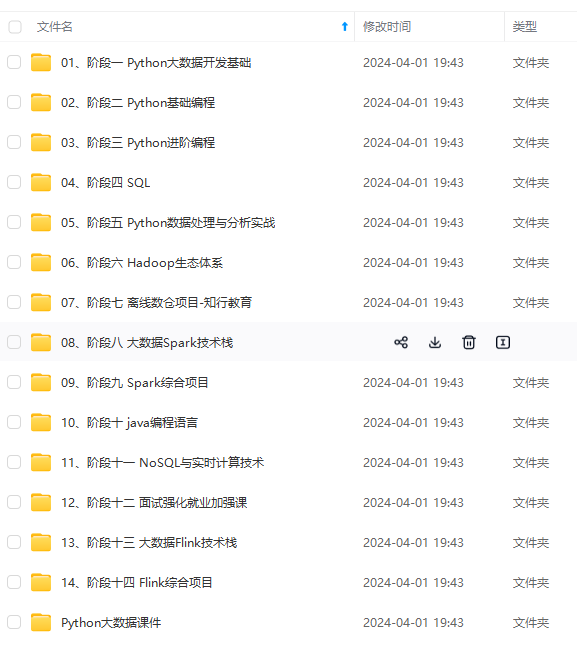

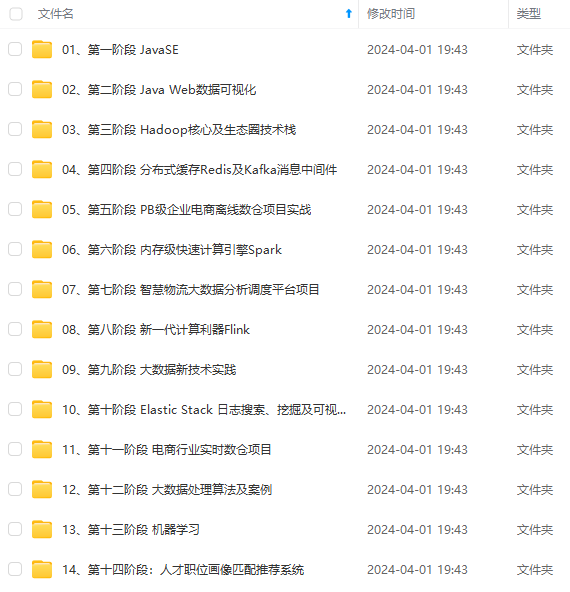

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

…(img-2BmxvutB-1715657511583)]

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

685

685

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?