class Block(nn.Module):

def __init__(self, input_channel=64, output_channel=64, kernel_size=3, stride=1, padding=1):

super().__init__()

self.layer = nn.Sequential(

nn.Conv2d(input_channel, output_channel, kernel_size, stride, bias=False, padding=padding),

nn.BatchNorm2d(output_channel),

nn.PReLU(),

nn.Conv2d(output_channel, output_channel, kernel_size, stride, bias=False, padding=padding),

nn.BatchNorm2d(output_channel)

)

def forward(self, x0):

x1 = self.layer(x0)

return x0 + x1

class Generator(nn.Module):

def __init__(self, scale=2):

super().__init__()

self.conv1 = nn.Sequential(

nn.Conv2d(3, 64, 9, stride=1, padding=4),

nn.PReLU()

)

self.residual_block = nn.Sequential(

Block(),

Block(),

Block(),

Block(),

Block(),

)

self.conv2 = nn.Sequential(

nn.Conv2d(64, 64, 3, stride=1, padding=1),

nn.BatchNorm2d(64),

)

self.conv3 = nn.Sequential(

nn.Conv2d(64, 256, 3, stride=1, padding=1),

nn.PixelShuffle(scale),

nn.PReLU(),

nn.Conv2d(64, 256, 3, stride=1, padding=1),

nn.PixelShuffle(scale),

nn.PReLU(),

)

self.conv4 = nn.Conv2d(64, 3, 9, stride=1, padding=4)

def forward(self, x):

x0 = self.conv1(x)

x = self.residual_block(x0)

x = self.conv2(x)

x = self.conv3(x + x0)

x = self.conv4(x)

return x

class DownSalmpe(nn.Module):

def __init__(self, input_channel, output_channel, stride, kernel_size=3, padding=1):

super().__init__()

self.layer = nn.Sequential(

nn.Conv2d(input_channel, output_channel, kernel_size, stride, padding),

nn.BatchNorm2d(output_channel),

nn.LeakyReLU(inplace=True)

)

def forward(self, x):

x = self.layer(x)

return x

class Discriminator(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = nn.Sequential(

nn.Conv2d(3, 64, 3, stride=1, padding=1),

nn.LeakyReLU(inplace=True),

)

self.down = nn.Sequential(

DownSalmpe(64, 64, stride=2, padding=1),

DownSalmpe(64, 128, stride=1, padding=1),

DownSalmpe(128, 128, stride=2, padding=1),

DownSalmpe(128, 256, stride=1, padding=1),

DownSalmpe(256, 256, stride=2, padding=1),

DownSalmpe(256, 512, stride=1, padding=1),

DownSalmpe(512, 512, stride=2, padding=1),

)

self.dense = nn.Sequential(

nn.AdaptiveAvgPool2d(1),

nn.Conv2d(512, 1024, 1),

nn.LeakyReLU(inplace=True),

nn.Conv2d(1024, 1, 1),

nn.Sigmoid()

)

def forward(self, x):

x = self.conv1(x)

x = self.down(x)

x = self.dense(x)

return x
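The generator's upsampling stage relies on `nn.PixelShuffle`, which trades channels for spatial resolution: a tensor with `C*r*r` channels becomes one with `C` channels and `r` times the height and width. The framework-free sketch below mirrors that rearrangement on nested lists so the index mapping is visible. `pixel_shuffle_shape` and `pixel_shuffle` are illustrative helper names, not part of the original code, and the 24x24 input size is an arbitrary assumption.

```python
def pixel_shuffle_shape(channels, height, width, r):
    """Shape of a (C*r*r, H, W) tensor after a pixel shuffle with factor r."""
    if channels % (r * r) != 0:
        raise ValueError("channels must be divisible by r*r")
    return channels // (r * r), height * r, width * r


def pixel_shuffle(x, r):
    """x: nested lists shaped [C*r*r][H][W]; returns [C][H*r][W*r]."""
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    c_out = pixel_shuffle_shape(c_in, h, w, r)[0]
    out = [[[0] * (w * r) for _ in range(h * r)] for _ in range(c_out)]
    for c in range(c_out):
        for i in range(r):          # row offset inside each r x r output cell
            for j in range(r):      # column offset inside each r x r output cell
                src = x[c * r * r + i * r + j]
                for row in range(h):
                    for col in range(w):
                        out[c][row * r + i][col * r + j] = src[row][col]
    return out


# Each Conv2d(64, 256) + PixelShuffle(2) pair in conv3 doubles the resolution.
print(pixel_shuffle_shape(256, 24, 24, 2))             # (64, 48, 48)
print(pixel_shuffle([[[0]], [[1]], [[2]], [[3]]], 2))  # [[[0, 1], [2, 3]]]
```

Because `conv3` hard-codes 256 output channels, the result only matches the following `Conv2d(64, ...)` when `scale=2`; with `scale=3` the shuffle is not even defined, since 256 is not divisible by 9.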

#### ESRGAN

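**Paper**: <https://arxiv.org/abs/1809.00219>

**Code**:

PyTorch <https://github.com/xinntao/ESRGAN>

1. **Contributions**: (1) proposes the Residual-in-Residual Dense Block (RRDB) and removes the BatchNorm layers; (2) borrows the idea of the Relativistic GAN, so the discriminator predicts how realistic an image is relative to others rather than whether an image is "fake"; (3) computes the perceptual loss on features taken before activation.

2. **Benefits**: (1) dense connections fuse features better and speed up training, further improve the recovered textures (a deep model has strong representational capacity for capturing semantic information), and help suppress noise; removing BatchNorm also gives better results; (2) the reconstructed images come closer to real images; (3) features before activation yield sharper edges and more visually pleasing results.

3. **Core code**: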

import functools

import torch

import torch.nn as nn

import torch.nn.functional as F

def make_layer(block, n_layers):

layers = []

for _ in range(n_layers):

layers.append(block())

return nn.Sequential(*layers)

class ResidualDenseBlock_5C(nn.Module):

def __init__(self, nf=64, gc=32, bias=True):

super(ResidualDenseBlock_5C, self).__init__()

# gc: growth channel, i.e. intermediate channels

self.conv1 = nn.Conv2d(nf, gc, 3, 1, 1, bias=bias)

self.conv2 = nn.Conv2d(nf + gc, gc, 3, 1, 1, bias=bias)

self.conv3 = nn.Conv2d(nf + 2 * gc, gc, 3, 1, 1, bias=bias)

self.conv4 = nn.Conv2d(nf + 3 * gc, gc, 3, 1, 1, bias=bias)

self.conv5 = nn.Conv2d(nf + 4 * gc, nf, 3, 1, 1, bias=bias)

self.lrelu = nn.LeakyReLU(negative_slope=0.2, inplace=True)

# initialization

# mutil.initialize_weights([self.conv1, self.conv2, self.conv3, self.conv4, self.conv5], 0.1)

def forward(self, x):

x1 = self.lrelu(self.conv1(x))

x2 = self.lrelu(self.conv2(torch.cat((x, x1), 1)))

x3 = self.lrelu(self.conv3(torch.cat((x, x1, x2), 1)))

x4 = self.lrelu(self.conv4(torch.cat((x, x1, x2, x3), 1)))

x5 = self.conv5(torch.cat((x, x1, x2, x3, x4), 1))

return x5 * 0.2 + x

class RRDB(nn.Module):

'''Residual in Residual Dense Block'''

def __init__(self, nf, gc=32):

super(RRDB, self).__init__()

self.RDB1 = ResidualDenseBlock_5C(nf, gc)

self.RDB2 = ResidualDenseBlock_5C(nf, gc)

self.RDB3 = ResidualDenseBlock_5C(nf, gc)

def forward(self, x):

out = self.RDB1(x)

out = self.RDB2(out)

out = self.RDB3(out)

return out * 0.2 + x

class RRDBNet(nn.Module):

def __init__(self, in_nc, out_nc, nf, nb, gc=32):

super(RRDBNet, self).__init__()

RRDB_block_f = functools.partial(RRDB, nf=nf, gc=gc)

self.conv_first = nn.Conv2d(in_nc, nf, 3, 1, 1, bias=True)

self.RRDB_trunk = make_layer(RRDB_block_f, nb)

self.trunk_conv = nn.Conv2d(nf, nf, 3, 1, 1, bias=True)

# upsampling

self.upconv1 = nn.Conv2d(nf, nf, 3, 1, 1, bias=True)

self.upconv2 = nn.Conv2d(nf, nf, 3, 1, 1, bias=True)

self.HRconv = nn.Conv2d(nf, nf, 3, 1, 1, bias=True)

self.conv_last = nn.Conv2d(nf, out_nc, 3, 1, 1, bias=True)

self.lrelu = nn.LeakyReLU(negative_slope=0.2, inplace=True)

def forward(self, x):

fea = self.conv_first(x)

trunk = self.trunk_conv(self.RRDB_trunk(fea))

fea = fea + trunk

fea = self.lrelu(self.upconv1(F.interpolate(fea, scale_factor=2, mode='nearest')))

fea = self.lrelu(self.upconv2(F.interpolate(fea, scale_factor=2, mode='nearest')))

out = self.conv_last(self.lrelu(self.HRconv(fea)))

return out

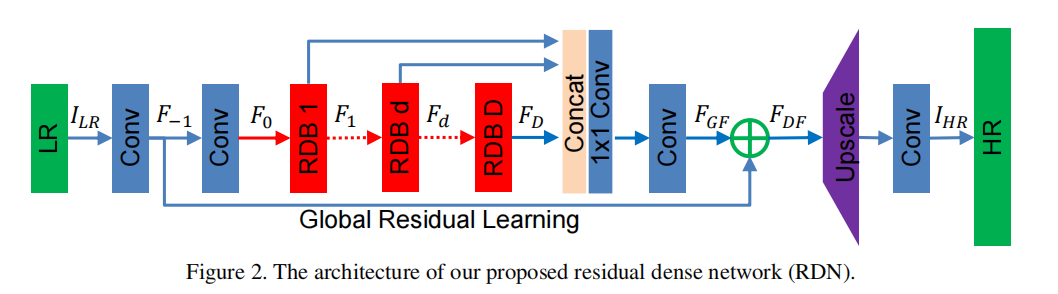
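The listing above covers only the generator, so the relativistic discriminator from contribution (2) is not shown. As a rough, framework-free sketch of the relativistic average formulation, where D_Ra(x_r, x_f) = sigmoid(C(x_r) - E[C(x_f)]) and C is the raw discriminator output, the two losses could be written as follows. The function names and the sample logits are illustrative assumptions, not code from the ESRGAN repository.

```python
import math


def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    return -(max(-z, 0.0) + math.log1p(math.exp(-abs(z))))


def mean(values):
    return sum(values) / len(values)


def relativistic_average_losses(real_logits, fake_logits):
    """Return (discriminator_loss, generator_loss) from raw critic outputs C(x)."""
    real_mean, fake_mean = mean(real_logits), mean(fake_logits)
    # Each logit is judged relative to the average logit of the other class.
    real_rel = [c - fake_mean for c in real_logits]
    fake_rel = [c - real_mean for c in fake_logits]
    # log(1 - sigmoid(z)) equals log_sigmoid(-z).
    d_loss = (-mean([log_sigmoid(z) for z in real_rel])
              - mean([log_sigmoid(-z) for z in fake_rel]))
    # The generator loss swaps the roles of real and fake.
    g_loss = (-mean([log_sigmoid(-z) for z in real_rel])
              - mean([log_sigmoid(z) for z in fake_rel]))
    return d_loss, g_loss


# A confident discriminator gets a small loss, while the generator loss is large.
print(relativistic_average_losses([2.0, 2.0], [-2.0, -2.0]))
```

Note that the generator loss depends on the real images as well as the generated ones, so gradients reach the generator through both terms, unlike in the standard GAN loss.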

#### RDN

**论文**:<https://arxiv.org/abs/1802.08797>

**代码**:

TensorFlow <https://github.com/hengchuan/RDN-TensorFlow>

Pytorch <https://github.com/lizhengwei1992/ResidualDenseNetwork-Pytorch>

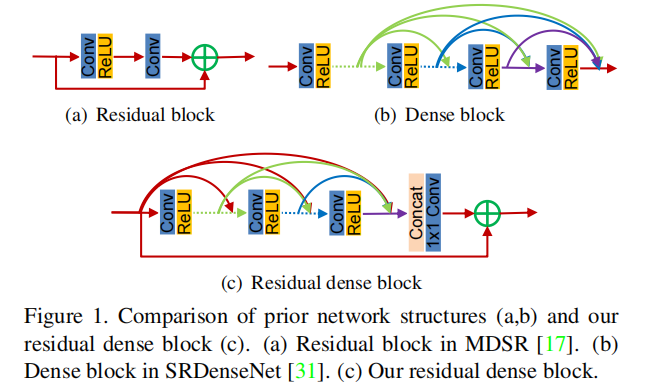

1. **创新点**:提出Residual Dense Block (RDB) 结构;

2. **好处**:残差学习和密集连接有效缓解网络深度增加引发的梯度消失的现象,其中密集连接加强特征传播, 鼓励特征复用。

3. **核心代码**:

import torch

import torch.nn as nn

import torch.nn.functional as F

class sub_pixel(nn.Module):

def __init__(self, scale, act=False):

super(sub_pixel, self).__init__()

modules = []

modules.append(nn.PixelShuffle(scale))

self.body = nn.Sequential(*modules)

def forward(self, x):

x = self.body(x)

return x

class make_dense(nn.Module):

def __init__(self, nChannels, growthRate, kernel_size=3):

super(make_dense, self).__init__()

self.conv = nn.Conv2d(nChannels, growthRate, kernel_size=kernel_size, padding=(kernel_size-1)//2, bias=False)

def forward(self, x):

out = F.relu(self.conv(x))

out = torch.cat((x, out), 1)

return out

# Residual dense block (RDB) architecture

class RDB(nn.Module):

def __init__(self, nChannels, nDenselayer, growthRate):

super(RDB, self).__init__()

nChannels_ = nChannels

modules = []

for i in range(nDenselayer):

modules.append(make_dense(nChannels_, growthRate))

nChannels_ += growthRate

self.dense_layers = nn.Sequential(*modules)

self.conv_1x1 = nn.Conv2d(nChannels_, nChannels, kernel_size=1, padding=0, bias=False)

def forward(self, x):

out = self.dense_layers(x)

out = self.conv_1x1(out)

out = out + x

return out

# Residual Dense Network

class RDN(nn.Module):

def __init__(self, args):

super(RDN, self).__init__()

nChannel = args.nChannel

nDenselayer = args.nDenselayer

nFeat = args.nFeat

scale = args.scale

growthRate = args.growthRate

self.args = args

# F-1

self.conv1 = nn.Conv2d(nChannel, nFeat, kernel_size=3, padding=1, bias=True)

# F0

self.conv2 = nn.Conv2d(nFeat, nFeat, kernel_size=3, padding=1, bias=True)

# RDBs 3

self.RDB1 = RDB(nFeat, nDenselayer, growthRate)

self.RDB2 = RDB(nFeat, nDenselayer, growthRate)

self.RDB3 = RDB(nFeat, nDenselayer, growthRate)

# global feature fusion (GFF)

self.GFF_1x1 = nn.Conv2d(nFeat*3, nFeat, kernel_size=1, padding=0, bias=True)

self.GFF_3x3 = nn.Conv2d(nFeat, nFeat, kernel_size=3, padding=1, bias=True)

# Upsampler

self.conv_up = nn.Conv2d(nFeat, nFeat*scale*scale, kernel_size=3, padding=1, bias=True)

self.upsample = sub_pixel(scale)

# conv

self.conv3 = nn.Conv2d(nFeat, nChannel, kernel_size=3, padding=1, bias=True)

def forward(self, x):

F_ = self.conv1(x)

F_0 = self.conv2(F_)

F_1 = self.RDB1(F_0)

F_2 = self.RDB2(F_1)

F_3 = self.RDB3(F_2)

FF = torch.cat((F_1, F_2, F_3), 1)

FdLF = self.GFF_1x1(FF)

FGF = self.GFF_3x3(FdLF)

FDF = FGF + F_

us = self.conv_up(FDF)

us = self.upsample(us)

output = self.conv3(us)

return output

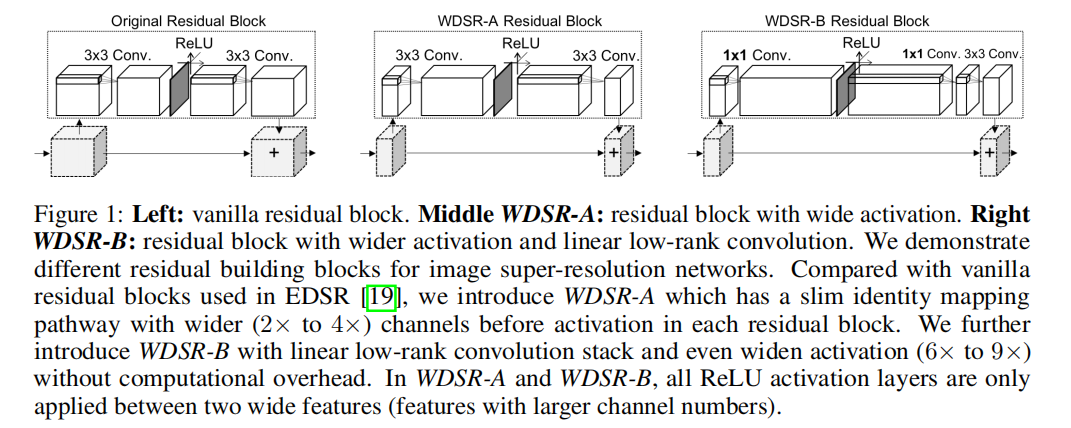
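Inside an RDB, every `make_dense` layer concatenates its output onto its input, so the channel count grows by `growthRate` per layer until the 1x1 convolution fuses everything back to `nChannels`. The small sketch below traces those widths; `rdb_channel_plan` is a hypothetical helper, and the values 64, 6 and 32 are assumed example settings rather than the defaults of the repository.

```python
def rdb_channel_plan(n_channels, n_dense_layers, growth_rate):
    """Input width of each dense layer, plus the width fed to the 1x1 fusion conv."""
    widths = [n_channels + i * growth_rate for i in range(n_dense_layers)]
    fused_in = n_channels + n_dense_layers * growth_rate
    return widths, fused_in


widths, fused_in = rdb_channel_plan(64, 6, 32)
print(widths)    # [64, 96, 128, 160, 192, 224]
print(fused_in)  # 256
```

The same bookkeeping explains `GFF_1x1`: concatenating the outputs of the three RDBs gives `nFeat*3` input channels.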

#### WDSR

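**Paper**: <https://arxiv.org/abs/1808.08718>

**Code**:

TensorFlow <https://github.com/ychfan/tf_estimator_barebone>

PyTorch <https://github.com/JiahuiYu/wdsr_ntire2018>

Keras <https://github.com/krasserm/super-resolution>

1. **Contributions**: (1) increases the number of feature-map channels before the activation function, i.e. wide activation; (2) uses Weight Normalization; (3) two branches perform the same upsampling operation and their outputs are added directly to produce the high-resolution image.

2. **Benefits**: (1) activation functions impede the flow of information, and widening the feature maps reduces that effect; (2) both training speed and accuracy improve, and training can use a larger learning rate; (3) splitting a large convolution kernel into two small ones saves parameters.

3. **Core code**: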

import torch

import torch.nn as nn

class Block(nn.Module):

def __init__(

self, n_feats, kernel_size, wn, act=nn.ReLU(True), res_scale=1):

super(Block, self).__init__()

self.res_scale = res_scale

body = []

expand = 6

linear = 0.8

body.append(

wn(nn.Conv2d(n_feats, n_feats*expand, 1, padding=1//2)))

body.append(act)

body.append(

wn(nn.Conv2d(n_feats*expand, int(n_feats*linear), 1, padding=1//2)))

body.append(

wn(nn.Conv2d(int(n_feats*linear), n_feats, kernel_size, padding=kernel_size//2)))

self.body = nn.Sequential(*body)

def forward(self, x):

res = self.body(x) * self.res_scale

res += x

return res

class MODEL(nn.Module):

def __init__(self, args):

super(MODEL, self).__init__()

# hyper-params

self.args = args

scale = args.scale[0]

n_resblocks = args.n_resblocks

n_feats = args.n_feats

kernel_size = 3

act = nn.ReLU(True)

# wn = lambda x: x

wn = lambda x: torch.nn.utils.weight_norm(x)

self.rgb_mean = torch.autograd.Variable(torch.FloatTensor(

[args.r_mean, args.g_mean, args.b_mean])).view([1, 3, 1, 1])

# define head module

head = []

head.append(

wn(nn.Conv2d(args.n_colors, n_feats, 3, padding=3//2)))

# define body module

body = []

for i in range(n_resblocks):

body.append(

Block(n_feats, kernel_size, act=act, res_scale=args.res_scale, wn=wn))

# define tail module

tail = []

out_feats = scale*scale*args.n_colors

tail.append(

wn(nn.Conv2d(n_feats, out_feats, 3, padding=3//2)))

tail.append(nn.PixelShuffle(scale))

skip = []

skip.append(

wn(nn.Conv2d(args.n_colors, out_feats, 5, padding=5//2))

)

skip.append(nn.PixelShuffle(scale))

# make object members

self.head = nn.Sequential(*head)

self.body = nn.Sequential(*body)

self.tail = nn.Sequential(*tail)

self.skip = nn.Sequential(*skip)

def forward(self, x):

x = (x - self.rgb_mean.cuda()*255)/127.5

s = self.skip(x)

x = self.head(x)

x = self.body(x)

x = self.tail(x)

x += s

x = x*127.5 + self.rgb_mean.cuda()*255

return x
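To make the "wide activation" idea concrete, the sketch below counts the convolution weights of the `Block` above (1x1 expansion by `expand=6`, 1x1 reduction to `linear=0.8`, then a 3x3 convolution) and compares them with a plain residual block built from two 3x3 convolutions. The plain block is an assumed baseline for comparison, biases and weight-normalization gains are ignored, and the helper names are illustrative.

```python
def conv_params(c_in, c_out, kernel_size):
    """Weight count of a 2D convolution, ignoring the bias."""
    return c_in * c_out * kernel_size * kernel_size


def wdsr_block_params(n_feats, kernel_size=3, expand=6, linear=0.8):
    """Weights of the three convolutions in the wide-activation Block."""
    wide = n_feats * expand            # channels seen by the ReLU
    low_rank = int(n_feats * linear)   # narrow linear bottleneck
    return (conv_params(n_feats, wide, 1)
            + conv_params(wide, low_rank, 1)
            + conv_params(low_rank, n_feats, kernel_size))


def plain_block_params(n_feats, kernel_size=3):
    """Weights of a baseline residual block with two k x k convolutions."""
    return 2 * conv_params(n_feats, n_feats, kernel_size)


print(wdsr_block_params(64))   # 73536
print(plain_block_params(64))  # 73728
```

With 64 features the two blocks cost about the same, yet the ReLU in the wide block operates on 384 channels instead of 64, which is the point of benefit (1).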

#### LapSRN

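**Paper**: <https://arxiv.org/abs/1704.03915>

**Code**:

MATLAB <https://github.com/phoenix104104/LapSRN>

TensorFlow <https://github.com/zjuela/LapSRN-tensorflow>

PyTorch <https://github.com/twtygqyy/pytorch-LapSRN>

1. **Contributions**: (1) proposes a cascaded pyramid structure; (2) proposes a new loss function (the Charbonnier loss).

2. **Benefits**: (1) lowers computational complexity, while combining low-level and high-level features increases the network's non-linearity so that it learns and maps fine details better. The pyramid structure also lets the algorithm produce several scales in a single pass; (2) the MSE loss makes the details of reconstructed high-resolution images blurry and over-smooth, which the new loss function mitigates.

3. **Laplacian image pyramid**: <https://www.jianshu.com/p/e3570a9216a6>

4. **Core code**: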

import torch

import torch.nn as nn

import numpy as np

import math

def get_upsample_filter(size):

"""Make a 2D bilinear kernel suitable for upsampling"""

factor = (size + 1) // 2

if size % 2 == 1:

center = factor - 1

else:

center = factor - 0.5

og = np.ogrid[:size, :size]

filter = ((1 - abs(og[0] - center) / factor) *

(1 - abs(og[1] - center) / factor))

return torch.from_numpy(filter).float()

class _Conv_Block(nn.Module):

def __init__(self):

super(_Conv_Block, self).__init__()

self.cov_block = nn.Sequential(

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

nn.ConvTranspose2d(in_channels=64, out_channels=64, kernel_size=4, stride=2, padding=1, bias=False),

nn.LeakyReLU(0.2, inplace=True),

)

def forward(self, x):

output = self.cov_block(x)

return output

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv_input = nn.Conv2d(in_channels=1, out_channels=64, kernel_size=3, stride=1, padding=1, bias=False)

self.relu = nn.LeakyReLU(0.2, inplace=True)

self.convt_I1 = nn.ConvTranspose2d(in_channels=1, out_channels=1, kernel_size=4, stride=2, padding=1, bias=False)

self.convt_R1 = nn.Conv2d(in_channels=64, out_channels=1, kernel_size=3, stride=1, padding=1, bias=False)

self.convt_F1 = self.make_layer(_Conv_Block)

self.convt_I2 = nn.ConvTranspose2d(in_channels=1, out_channels=1, kernel_size=4, stride=2, padding=1, bias=False)

self.convt_R2 = nn.Conv2d(in_channels=64, out_channels=1, kernel_size=3, stride=1, padding=1, bias=False)

self.convt_F2 = self.make_layer(_Conv_Block)

for m in self.modules():

if isinstance(m, nn.Conv2d):

n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels

m.weight.data.normal_(0, math.sqrt(2. / n))

if m.bias is not None:

m.bias.data.zero_()

if isinstance(m, nn.ConvTranspose2d):

c1, c2, h, w = m.weight.data.size()

weight = get_upsample_filter(h)

m.weight.data = weight.view(1, 1, h, w).repeat(c1, c2, 1, 1)

if m.bias is not None:

m.bias.data.zero_()

def make_layer(self, block):

layers = []

layers.append(block())

return nn.Sequential(*layers)

def forward(self, x):

out = self.relu(self.conv_input(x))

convt_F1 = self.convt_F1(out)

convt_I1 = self.convt_I1(x)

convt_R1 = self.convt_R1(convt_F1)

HR_2x = convt_I1 + convt_R1

convt_F2 = self.convt_F2(convt_F1)

convt_I2 = self.convt_I2(HR_2x)

convt_R2 = self.convt_R2(convt_F2)

HR_4x = convt_I2 + convt_R2

return HR_2x, HR_4x
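Each pyramid level upsamples with `nn.ConvTranspose2d(kernel_size=4, stride=2, padding=1)`. A quick way to see why that exactly doubles the resolution is to evaluate the standard transposed-convolution size formula; `conv_transpose_out` is an illustrative helper and the input size 32 is an arbitrary assumption.

```python
def conv_transpose_out(size, kernel_size, stride, padding,
                       output_padding=0, dilation=1):
    """Output length of a transposed convolution along one spatial dimension."""
    return ((size - 1) * stride - 2 * padding
            + dilation * (kernel_size - 1) + output_padding + 1)


level1 = conv_transpose_out(32, kernel_size=4, stride=2, padding=1)
level2 = conv_transpose_out(level1, kernel_size=4, stride=2, padding=1)
print(level1, level2)  # 64 128
```

Two levels therefore give the 2x and 4x outputs returned by `forward`.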

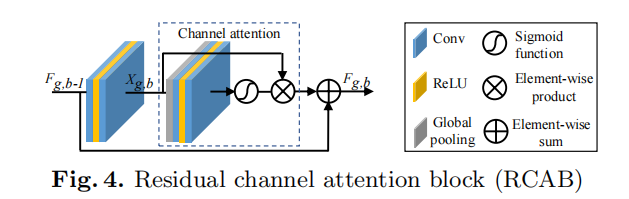

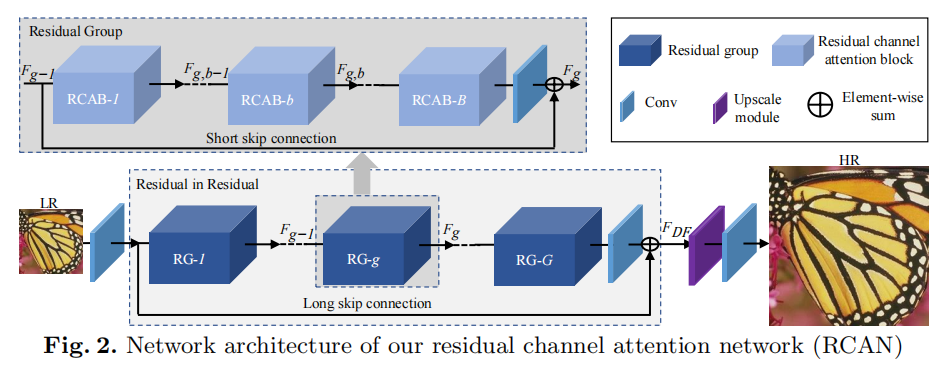
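The loss itself does not appear in the listing. A minimal, framework-free sketch of the Charbonnier penalty, sqrt(x^2 + eps^2), summed over the pyramid levels might look like this. The function names are illustrative, `eps=1e-3` is assumed as the usual setting, and images are flattened to plain lists for simplicity.

```python
import math


def charbonnier(pred, target, eps=1e-3):
    """Mean Charbonnier penalty sqrt(diff^2 + eps^2) over two equal-length sequences."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    total = sum(math.sqrt((p - t) ** 2 + eps ** 2) for p, t in zip(pred, target))
    return total / len(pred)


def pyramid_loss(preds, targets, eps=1e-3):
    """Sum the penalty over all pyramid levels, e.g. the 2x and 4x outputs."""
    return sum(charbonnier(p, t, eps) for p, t in zip(preds, targets))


# Hypothetical flattened outputs for the 2x and 4x levels.
print(pyramid_loss([[0.1, 0.2], [0.1, 0.2, 0.3, 0.4]],
                   [[0.0, 0.2], [0.1, 0.1, 0.3, 0.5]]))
```

For large errors the penalty behaves like an L1 loss, and near zero it stays smooth, which makes it less sensitive to outliers than MSE.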

#### RCAN

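**Paper**: <https://arxiv.org/abs/1807.02758>

**Code**:

TensorFlow (1) <https://github.com/dongheehand/RCAN-tf> (2) <https://github.com/keerthan2/Residual-Channel-Attention-Network>

PyTorch <https://github.com/yulunzhang/RCAN>

1. **Contributions**: (1) uses channel attention to strengthen feature learning; (2) proposes the Residual in Residual (RIR) structure.

2. **Benefits**: (1) rescales the weight of each channel based on statistics gathered across the feature channels; (2) several residual groups with a long skip connection form coarse-grained residual learning, and inside each group several simplified residual blocks are stacked with short skip connections (small residuals nested inside a large one), so high- and low-frequency information is fused thoroughly while training becomes faster and more stable.

3. **Core code**: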

from model import common

import torch.nn as nn

# Channel Attention (CA) Layer

class CALayer(nn.Module):

def __init__(self, channel, reduction=16):

super(CALayer, self).__init__()

# global average pooling: feature --> point

self.avg_pool = nn.AdaptiveAvgPool2d(1)

# feature channel downscale and upscale --> channel weight

self.conv_du = nn.Sequential(

nn.Conv2d(channel, channel // reduction, 1, padding=0, bias=True),

nn.ReLU(inplace=True),

nn.Conv2d(channel // reduction, channel, 1, padding=0, bias=True),

nn.Sigmoid()

)

def forward(self, x):

y = self.avg_pool(x)

y = self.conv_du(y)

return x * y

# Residual Channel Attention Block (RCAB)

class RCAB(nn.Module):

def __init__(

self, conv, n_feat, kernel_size, reduction,

bias=True, bn=False, act=nn.ReLU(True), res_scale=1):

super(RCAB, self).__init__()

modules_body = []

for i in range(2):

modules_body.append(conv(n_feat, n_feat, kernel_size, bias=bias))

if bn: modules_body.append(nn.BatchNorm2d(n_feat))

if i == 0: modules_body.append(act)

modules_body.append(CALayer(n_feat, reduction))

self.body = nn.Sequential(*modules_body)

self.res_scale = res_scale

def forward(self, x):

res = self.body(x)

res += x

return res

# Residual Group (RG)

class ResidualGroup(nn.Module):

def __init__(self, conv, n_feat, kernel_size, reduction, act, res_scale, n_resblocks):

super(ResidualGroup, self).__init__()

modules_body = []

modules_body = [

RCAB(

conv, n_feat, kernel_size, reduction, bias=True, bn=False, act=nn.ReLU(True), res_scale=1)

for _ in range(n_resblocks)]

modules_body.append(conv(n_feat, n_feat, kernel_size))

self.body = nn.Sequential(*modules_body)

def forward(self, x):

res = self.body(x)

res += x

return res

# Residual Channel Attention Network (RCAN)

class RCAN(nn.Module):

def __init__(self, args, conv=common.default_conv):

super(RCAN, self).__init__()

n_resgroups = args.n_resgroups

n_resblocks = args.n_resblocks

n_feats = args.n_feats

kernel_size = 3

reduction = args.reduction

scale = args.scale[0]

act = nn.ReLU(True)

# RGB mean for DIV2K

rgb_mean = (0.4488, 0.4371, 0.4040)

rgb_std = (1.0, 1.0, 1.0)

self.sub_mean = common.MeanShift(args.rgb_range, rgb_mean, rgb_std)

# define head module

modules_head = [conv(args.n_colors, n_feats, kernel_size)]

# define body module

modules_body = [

ResidualGroup(

conv, n_feats, kernel_size, reduction, act=act, res_scale=args.res_scale, n_resblocks=n_resblocks) \

for _ in range(n_resgroups)]

modules_body.append(conv(n_feats, n_feats, kernel_size))

# define tail module

modules_tail = [

common.Upsampler(conv, scale, n_feats, act=False),

conv(n_feats, args.n_colors, kernel_size)]

self.add_mean = common.MeanShift(args.rgb_range, rgb_mean, rgb_std, 1)

self.head = nn.Sequential(*modules_head)

self.body = nn.Sequential(*modules_body)

self.tail = nn.Sequential(*modules_tail)

def forward(self, x):

x = self.sub_mean(x)

x = self.head(x)

res = self.body(x)

res += x

x = self.tail(res)

x = self.add_mean(x)

return x
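The `CALayer` above follows a squeeze, excite, scale pattern. The framework-free sketch below replays those three steps on nested lists, with the two 1x1 convolutions written as small dense layers (on a 1x1 map they are equivalent). The function name, argument layout and the toy weights are assumptions made for illustration only.

```python
import math


def channel_attention(x, w_down, b_down, w_up, b_up):
    """x: [C][H][W] nested lists; w_down: [C//r][C]; w_up: [C][C//r]."""
    # Squeeze: global average pooling turns each channel into one number.
    pooled = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in x]
    # Excite: channel downscale -> ReLU -> channel upscale -> sigmoid.
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled)) + b)
              for row, b in zip(w_down, b_down)]
    gates = [1.0 / (1.0 + math.exp(-(sum(w * h for w, h in zip(row, hidden)) + b)))
             for row, b in zip(w_up, b_up)]
    # Scale: every channel is multiplied by its own gate in (0, 1).
    scaled = [[[g * v for v in line] for line in ch] for ch, g in zip(x, gates)]
    return scaled, gates


features = [[[1.0, 2.0], [3.0, 4.0]],
            [[0.0, 0.0], [0.0, 0.0]]]
# All-zero toy weights make every gate sigmoid(0) = 0.5.
scaled, gates = channel_attention(features, [[0.0, 0.0]], [0.0],
                                  [[0.0], [0.0]], [0.0, 0.0])
print(gates)      # [0.5, 0.5]
print(scaled[0])  # [[0.5, 1.0], [1.5, 2.0]]
```

With trained weights the gates differ per channel, which is how the layer emphasises informative channels and suppresses the rest.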

#### SAN

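**Paper**: <https://csjcai.github.io/papers/SAN.pdf>

**Code**:

PyTorch <https://github.com/daitao/SAN>

1. **Contributions**: (1) proposes a second-order attention mechanism, Second-order Channel Attention (SOCA); (2) proposes the Non-Locally Enhanced Residual Group (NLRG) structure.

2. **Benefits**: (1) adaptively learns the interdependencies between features from their second-order statistics, so the network focuses on more informative features and improves its discriminative learning ability; (2) non-local operations aggregate contextual information, while the residual structure makes the deep network trainable and speeds up and stabilizes training.

3. **Core code**: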

from model import common

import torch

import torch.nn as nn

import torch.nn.functional as F

from model.MPNCOV.python import MPNCOV

class NONLocalBlock2D(_NonLocalBlockND):

def __init__(self, in_channels, inter_channels=None, mode='embedded_gaussian', sub_sample=True, bn_layer=True):

super(NONLocalBlock2D, self).__init__(in_channels,

inter_channels=inter_channels,

dimension=2, mode=mode,

sub_sample=sub_sample,

bn_layer=bn_layer)

# Channel Attention (CA) Layer

class CALayer(nn.Module):

def __init__(self, channel, reduction=8):

super(CALayer, self).__init__()

# global average pooling: feature --> point

self.avg_pool = nn.AdaptiveAvgPool2d(1)

self.max_pool = nn.AdaptiveMaxPool2d(1)

# feature channel downscale and upscale --> channel weight

self.conv_du = nn.Sequential(

nn.Conv2d(channel, channel // reduction, 1, padding=0, bias=True),

nn.ReLU(inplace=True),

nn.Conv2d(channel // reduction, channel, 1, padding=0, bias=True),

)

def forward(self, x):

_,_,h,w = x.shape

y_ave = self.avg_pool(x)

y_ave = self.conv_du(y_ave)

return y_ave

# Second-order Channel Attention (SOCA)

class SOCA(nn.Module):

def __init__(self, channel, reduction=8):

super(SOCA, self).__init__()

self.max_pool = nn.MaxPool2d(kernel_size=2)

# feature channel downscale and upscale --> channel weight

self.conv_du = nn.Sequential(

nn.Conv2d(channel, channel // reduction, 1, padding=0, bias=True),

nn.ReLU(inplace=True),

nn.Conv2d(channel // reduction, channel, 1, padding=0, bias=True),

nn.Sigmoid()

)

def forward(self, x):

batch_size, C, h, w = x.shape # x: NxCxHxW

N = int(h * w)

min_h = min(h, w)

h1 = 1000

w1 = 1000

if h < h1 and w < w1:

x_sub = x

elif h < h1 and w > w1:

# H = (h - h1) // 2

W = (w - w1) // 2

x_sub = x[:, :, :, W:(W + w1)]

elif w < w1 and h > h1:

H = (h - h1) // 2

# W = (w - w1) // 2

x_sub = x[:, :, H:H + h1, :]

else:

H = (h - h1) // 2

W = (w - w1) // 2

x_sub = x[:, :, H:(H + h1), W:(W + w1)]

## MPN-COV

cov_mat = MPNCOV.CovpoolLayer(x_sub) # Global Covariance pooling layer

cov_mat_sqrt = MPNCOV.SqrtmLayer(cov_mat,5) # Matrix square root layer( including pre-norm,Newton-Schulz iter. and post-com. with 5 iteration)

cov_mat_sum = torch.mean(cov_mat_sqrt,1)

cov_mat_sum = cov_mat_sum.view(batch_size,C,1,1)

y_cov = self.conv_du(cov_mat_sum)

return y_cov * x

# self-attention + channel attention module

class Nonlocal_CA(nn.Module):

def __init__(self, in_feat=64, inter_feat=32, reduction=8,sub_sample=False, bn_layer=True):

super(Nonlocal_CA, self).__init__()

# second-order channel attention

self.soca=SOCA(in_feat, reduction=reduction)

# nonlocal module

self.non_local = (NONLocalBlock2D(in_channels=in_feat,inter_channels=inter_feat, sub_sample=sub_sample,bn_layer=bn_layer))

self.sigmoid = nn.Sigmoid()

def forward(self,x):

## divide feature map into 4 part

batch_size,C,H,W = x.shape

H1 = int(H / 2)

W1 = int(W / 2)

nonlocal_feat = torch.zeros_like(x)

feat_sub_lu = x[:, :, :H1, :W1]

feat_sub_ld = x[:, :, H1:, :W1]

feat_sub_ru = x[:, :, :H1, W1:]

feat_sub_rd = x[:, :, H1:, W1:]

nonlocal_lu = self.non_local(feat_sub_lu)

nonlocal_ld = self.non_local(feat_sub_ld)

nonlocal_ru = self.non_local(feat_sub_ru)

nonlocal_rd = self.non_local(feat_sub_rd)

nonlocal_feat[:, :, :H1, :W1] = nonlocal_lu

nonlocal_feat[:, :, H1:, :W1] = nonlocal_ld

nonlocal_feat[:, :, :H1, W1:] = nonlocal_ru

nonlocal_feat[:, :, H1:, W1:] = nonlocal_rd

return nonlocal_feat

# Residual Block (RB)

class RB(nn.Module):

def __init__(self, conv, n_feat, kernel_size, reduction, bias=True, bn=False, act=nn.ReLU(inplace=True), res_scale=1, dilation=2):

super(RB, self).__init__()

modules_body = []

self.gamma1 = 1.0

self.conv_first = nn.Sequential(conv(n_feat, n_feat, kernel_size, bias=bias),

act,

conv(n_feat, n_feat, kernel_size, bias=bias))

self.res_scale = res_scale

def forward(self, x):

y = self.conv_first(x)

y = y + x

return y

# Local-Source Residual Attention Group (LSRAG)

class LSRAG(nn.Module):

def __init__(self, conv, n_feat, kernel_size, reduction, act, res_scale, n_resblocks):

super(LSRAG, self).__init__()

##

self.rcab= nn.ModuleList([RB(conv, n_feat, kernel_size, reduction,

bias=True, bn=False, act=nn.ReLU(inplace=True), res_scale=1) for _ in range(n_resblocks)])

self.soca = (SOCA(n_feat,reduction=reduction))

self.conv_last = (conv(n_feat, n_feat, kernel_size))

self.n_resblocks = n_resblocks

self.gamma = nn.Parameter(torch.zeros(1))

def make_layer(self, block, num_of_layer):

layers = []

for _ in range(num_of_layer):

layers.append(block)

return nn.ModuleList(layers)

def forward(self, x):

residual = x

for i,l in enumerate(self.rcab):

x = l(x)

x = self.soca(x)

x = self.conv_last(x)

x = x + residual

return x

# Second-order Channel Attention Network (SAN)

class SAN(nn.Module):

def __init__(self, args, conv=common.default_conv):

super(SAN, self).__init__()

n_resgroups = args.n_resgroups

n_resblocks = args.n_resblocks

n_feats = args.n_feats

kernel_size = 3

reduction = args.reduction

scale = args.scale[0]

act = nn.ReLU(inplace=True)

# RGB mean for DIV2K

rgb_mean = (0.4488, 0.4371, 0.4040)

rgb_std = (1.0, 1.0, 1.0)

self.sub_mean = common.MeanShift(args.rgb_range, rgb_mean, rgb_std)

# define head module

modules_head = [conv(args.n_colors, n_feats, kernel_size)]

# define body module

## share-source skip connection

self.gamma = nn.Parameter(torch.zeros(1))

self.n_resgroups = n_resgroups

self.RG = nn.ModuleList([LSRAG(conv, n_feats, kernel_size, reduction, \

act=act, res_scale=args.res_scale, n_resblocks=n_resblocks) for _ in range(n_resgroups)])

self.conv_last = conv(n_feats, n_feats, kernel_size)

# define tail module

modules_tail = [

common.Upsampler(conv, scale, n_feats, act=False),

conv(n_feats, args.n_colors, kernel_size)]

self.add_mean = common.MeanShift(args.rgb_range, rgb_mean, rgb_std, 1)

self.non_local = Nonlocal_CA(in_feat=n_feats, inter_feat=n_feats//8, reduction=8,sub_sample=False, bn_layer=False)

self.head = nn.Sequential(*modules_head)

self.tail = nn.Sequential(*modules_tail)

def make_layer(self, block, num_of_layer):

layers = []

for _ in range(num_of_layer):

layers.append(block)

return nn.ModuleList(layers)

def forward(self, x):

x = self.sub_mean(x)

x = self.head(x)

## add nonlocal

xx = self.non_local(x)

# share-source skip connection

residual = xx

# share-source residual group

for i,l in enumerate(self.RG):

xx = l(xx) + self.gamma*residual

## add nonlocal

res = self.non_local(xx)

res = res + x

x = self.tail(res)

x = self.add_mean(x)

return x
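SOCA replaces the global average pooling of ordinary channel attention with covariance pooling. The sketch below shows only that second-order statistic in a framework-free form: it builds the C x C channel covariance and averages it into one descriptor per channel. It deliberately skips the matrix square root (the Newton-Schulz iteration performed by `MPNCOV.SqrtmLayer` in the listing), and the helper names and toy input are assumptions.

```python
def covariance_pool(x):
    """x: [C][N] flattened channels (N = H*W). Returns the C x C covariance matrix."""
    n = len(x[0])
    means = [sum(ch) / n for ch in x]
    centered = [[v - m for v in ch] for ch, m in zip(x, means)]
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in centered]
            for ci in centered]


def channel_descriptor(cov):
    """Mean over one axis of the (symmetric) matrix: one statistic per channel."""
    return [sum(row) / len(row) for row in cov]


# Two channels with four spatial positions each; the second is twice the first.
cov = covariance_pool([[1.0, 2.0, 3.0, 4.0],
                       [2.0, 4.0, 6.0, 8.0]])
print(cov)                      # [[1.25, 2.5], [2.5, 5.0]]
print(channel_descriptor(cov))  # [1.875, 3.75]
```

Unlike a per-channel average, each descriptor here depends on how a channel co-varies with every other channel, which is what "second-order" refers to.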

#### IGNN

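**Paper**: <https://proceedings.neurips.cc/paper/2020/file/8b5c8441a8ff8e151b191c53c1842a38-Paper.pdf>

**Code**:

PyTorch <https://github.com/sczhou/IGNN>

1. **Contributions**: (1) proposes a non-local graph convolution aggregation module (GraphAgg) and, building on it, the Internal Graph Neural Network (IGNN).

2. **Benefits**: (1) for each low-resolution patch it finds several neighboring high-resolution patches, builds a graph connecting low-resolution to high-resolution patches, and aggregates the texture information of those high-resolution patches onto the low-resolution image to perform super-resolution reconstruction.

3. **Core code**: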

from models.submodules import *

from models.VGG19 import VGG19

from config import cfg

class IGNN(nn.Module):

def __init__(self):

super(IGNN, self).__init__()

kernel_size = 3

n_resblocks = cfg.NETWORK.N_RESBLOCK

n_feats = cfg.NETWORK.N_FEATURE

n_neighbors = cfg.NETWORK.N_REIGHBOR

scale = cfg.CONST.SCALE

if cfg.CONST.SCALE == 4:

scale = 2

window = cfg.NETWORK.WINDOW_SIZE

gcn_stride = 2

patch_size = 3
