
// TODO 1. Get the execution environment
StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
env.setParallelism(1);
env.disableOperatorChaining();

// TODO Kafka consumer
// Configure the Kafka input stream
Properties consumerprops = new Properties();
consumerprops.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "10.0.2.67:9092");
consumerprops.put(ConsumerConfig.GROUP_ID_CONFIG, "group1");
consumerprops.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, "false");
consumerprops.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "latest");
consumerprops.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");
consumerprops.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");

// Add the Kafka source
DataStreamSource<String> dataStreamSource = env.addSource(new FlinkKafkaConsumer<>("atlas-source-topic", new SimpleStringSchema(), consumerprops));

// Configure the Kafka output stream
Properties producerprops = new Properties();
producerprops.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "10.0.2.67:9092");

// Certificate (security) settings, if needed, go into producerprops; then add the Kafka sink
dataStreamSource.addSink(new FlinkKafkaProducer<String>("atlas-sink-topic", new KeyedSerializationSchemaWrapper<>(new SerializationSchema<String>() {
    @Override
    public byte[] serialize(String element) {
        // Encode explicitly as UTF-8 instead of relying on the platform default charset
        return element.getBytes(java.nio.charset.StandardCharsets.UTF_8);
    }
}), producerprops));

env.execute("AtlasTest");

}

}
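The `ConsumerConfig`/`ProducerConfig` constants used above resolve to Kafka's standard property names. The following stdlib-only sketch writes the same settings with the literal keys, which helps when the values come from a properties file or command-line arguments rather than code. The class and method names (`KafkaProps`, `consumerProps`, `producerProps`) are hypothetical.

```java
import java.util.Properties;

public class KafkaProps {
    // Same consumer settings as the job above, keyed by the literal property names
    static Properties consumerProps(String bootstrapServers, String groupId) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);   // ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG
        p.setProperty("group.id", groupId);                      // ConsumerConfig.GROUP_ID_CONFIG
        p.setProperty("enable.auto.commit", "false");            // ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG
        p.setProperty("auto.offset.reset", "latest");            // ConsumerConfig.AUTO_OFFSET_RESET_CONFIG
        p.setProperty("key.deserializer",                        // ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG
                "org.apache.kafka.common.serialization.StringDeserializer");
        p.setProperty("value.deserializer",                      // ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG
                "org.apache.kafka.common.serialization.StringDeserializer");
        return p;
    }

    // Producer side: the job above only sets the broker list
    static Properties producerProps(String bootstrapServers) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);    // ProducerConfig.BOOTSTRAP_SERVERS_CONFIG
        return p;
    }

    public static void main(String[] args) {
        consumerProps("10.0.2.67:9092", "group1")
                .forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```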

###### Flink on YARN log output

###### Change how JSON is serialized

`org.apache.atlas.utils.AtlasJson#toJson`

public static String toJson(Object obj) {
    String ret;
    if (obj instanceof JsonNode && ((JsonNode) obj).isTextual()) {
        ret = ((JsonNode) obj).textValue();
    } else {
        // Switched JSON serialization to fastjson: the original ObjectMapper.writeValueAsString()
        // at one point hung and never returned
        // ret = mapper.writeValueAsString(obj);
        ret = JSONObject.toJSONString(JSONObject.toJSON(obj));
        LOG.info(ret);
    }
    return ret;
}

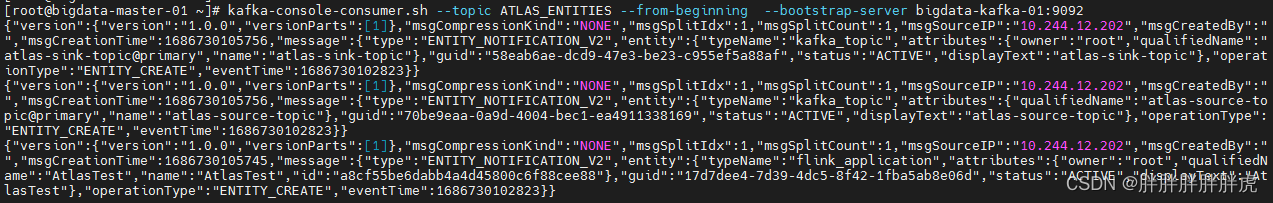
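The comment above only says that `ObjectMapper.writeValueAsString()` hung. One way to confirm a hang like this without blocking the calling thread indefinitely is to run the serializer on a separate thread with a deadline. The following is a minimal stdlib sketch. `SerializationTimeout` and `callWithTimeout` are hypothetical names, and the serializer is passed in as a `Supplier<String>` so that either Jackson or fastjson could be plugged in.

```java
import java.util.Optional;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Supplier;

public class SerializationTimeout {
    // Daemon threads, so a stuck serializer cannot keep the JVM alive
    private static final ExecutorService POOL = Executors.newCachedThreadPool(r -> {
        Thread t = new Thread(r, "json-serializer");
        t.setDaemon(true);
        return t;
    });

    // Hypothetical helper: returns the serialized string, or empty if it takes longer than timeoutMs
    static Optional<String> callWithTimeout(Supplier<String> serializer, long timeoutMs) {
        Callable<String> task = serializer::get;
        Future<String> future = POOL.submit(task);
        try {
            return Optional.of(future.get(timeoutMs, TimeUnit.MILLISECONDS));
        } catch (TimeoutException e) {
            // Only requests interruption; a CPU-bound serializer may ignore it and keep running
            future.cancel(true);
            return Optional.empty();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return Optional.empty();
        } catch (ExecutionException e) {
            // The serializer itself threw: surface the original cause
            throw new RuntimeException(e.getCause());
        }
    }

    public static void main(String[] args) {
        // A fast "serializer" finishes within the deadline
        System.out.println(callWithTimeout(() -> "{\"name\":\"AtlasTest\"}", 1000));
        // A simulated hang exceeds the deadline and yields an empty result
        System.out.println(callWithTimeout(() -> {
            try {
                Thread.sleep(5_000);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            return "too late";
        }, 100));
    }
}
```

A timed-out task is only interrupted, not killed. A serializer stuck in a CPU-bound loop keeps running on its daemon thread, so this is a diagnostic aid rather than a fix. To find the root cause, take a thread dump (for example with `jstack`) while the job hangs; it shows where the serializer is stuck.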

###### Check the corresponding topic in the target Kafka

###### Flink SQL

Caused by: java.lang.ClassCastException: org.apache.flink.table.runtime.operators.sink.SinkOperator cannot be cast to org.apache.flink.streaming.api.operators.StreamSink

`org.apache.atlas.flink.hook.FlinkAtlasHook#addSinkEntities`

private void addSinkEntities(List<StreamNode> sinks, AtlasEntity flinkApp, AtlasEntity.AtlasEntityExtInfo entityExtInfo) {
    List<AtlasEntity> outputs = new ArrayList<>();
    for (StreamNode sink : sinks) {
        // Original code: casts every sink operator to StreamSink, which throws the
        // ClassCastException above for Flink SQL sinks (SinkOperator)
        // StreamSink<?> sinkOperator = (StreamSink<?>) sink.getOperator();
        // SinkFunction<?> sinkFunction = sinkOperator.getUserFunction();
        // List<AtlasEntity> dsEntities = createSinkEntity(sinkFunction, entityExtInfo);
        // outputs.addAll(dsEntities);

        // Fix: check the operator's runtime class before casting
        if (sink.getOperator().getClass().getName().equals("org.apache.flink.streaming.api.operators.StreamSink")) {
            // DataStream API sink
            StreamSink<?> sinkOperator = (StreamSink<?>) sink.getOperator();
            SinkFunction<?> sinkFunction = sinkOperator.getUserFunction();
            List<AtlasEntity> dsEntities = createSinkEntity(sinkFunction, entityExtInfo);
            outputs.addAll(dsEntities);
        } else if (sink.getOperator().getClass().getName().equals("org.apache.flink.table.runtime.operators.sink.SinkOperator")) {
            // Flink SQL / Table API sink
            SinkOperator sinkOperator = (SinkOperator) sink.getOperator();
            SinkFunction<?> sinkFunction = sinkOperator.getUserFunction();
            List<AtlasEntity> dsEntities = createSinkEntity(sinkFunction, entityExtInfo);
            outputs.addAll(dsEntities);
        }
    }
    flinkApp.setRelationshipAttribute("outputs", AtlasTypeUtil.getAtlasRelatedObjectIds(outputs, RELATIONSHIP_PROCESS_DATASET_OUTPUTS));
    LOG.info("-------------- flinkApp: -------------" + flinkApp);
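The hook fix checks the sink operator's runtime type before casting it. The stdlib-only sketch below reproduces both the failure and the guarded dispatch using hypothetical stand-in classes: `Operator`, `StreamSink`, `SinkOperator` and `OtherOperator` are mock types, not Flink's. It uses `instanceof` instead of comparing `getClass().getName()` strings. The two are not equivalent: `instanceof` also matches subclasses, while the string comparison in the hook matches only the exact class.

```java
import java.util.ArrayList;
import java.util.List;

public class SinkDispatch {
    // Hypothetical stand-ins for Flink's StreamSink and the Flink SQL SinkOperator.
    // They are NOT the real Flink classes; each just wraps a "user function" name.
    public interface Operator {}

    public static final class StreamSink implements Operator {
        private final String userFunction;
        public StreamSink(String userFunction) { this.userFunction = userFunction; }
        public String getUserFunction() { return userFunction; }
    }

    public static final class SinkOperator implements Operator {
        private final String userFunction;
        public SinkOperator(String userFunction) { this.userFunction = userFunction; }
        public String getUserFunction() { return userFunction; }
    }

    // An operator type the dispatch does not know how to handle
    public static final class OtherOperator implements Operator {}

    // Check the runtime type before casting, so unknown operators are skipped
    // instead of raising ClassCastException
    public static List<String> collectSinkFunctions(List<? extends Operator> sinks) {
        List<String> outputs = new ArrayList<>();
        for (Operator op : sinks) {
            if (op instanceof StreamSink) {
                outputs.add(((StreamSink) op).getUserFunction());
            } else if (op instanceof SinkOperator) {
                outputs.add(((SinkOperator) op).getUserFunction());
            }
        }
        return outputs;
    }

    public static void main(String[] args) {
        Operator tableSink = new SinkOperator("tableSink");
        try {
            // What the original hook effectively did: an unconditional cast
            StreamSink cast = (StreamSink) tableSink;
            System.out.println("unexpected: " + cast.getUserFunction());
        } catch (ClassCastException e) {
            System.out.println("unconditional cast failed: " + e.getClass().getSimpleName());
        }

        List<Operator> sinks = List.of(new StreamSink("kafkaSink"), tableSink, new OtherOperator());
        System.out.println(collectSinkFunctions(sinks));
    }
}
```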
