    Parameters:
    optimizer: class
        The weight optimizer that will be used to tune the weights in order to minimize
        the loss.
    loss: class
        Loss function used to measure the model's performance. SquareLoss or CrossEntropy.
    validation_data: tuple
        A tuple containing validation data and labels (X, y)
    """

    def __init__(self, optimizer, loss, validation_data=None):
        self.optimizer = optimizer
        self.layers = []
        self.errors = {"training": [], "validation": []}
        # `loss` is passed in as a class and instantiated here
        self.loss_function = loss()
        self.progressbar = progressbar.ProgressBar(widgets=bar_widgets)
        self.val_set = None
        if validation_data:
            X, y = validation_data
            self.val_set = {"X": X, "y": y}
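The constructor receives `loss` as a class and calls `loss()` itself, and the later methods use three things on the resulting object: `loss(y, y_pred)`, `gradient(y, y_pred)` and `acc(y, y_pred)`. The sketch below shows one way such a class might look for squared error. The name `SquareLossSketch`, the 0.5 scaling factor and the constant `acc` return value are assumptions made for illustration, not the code of the `SquareLoss` class mentioned in the docstring.

```python
import numpy as np


class SquareLossSketch:
    """Hypothetical stand-in for a loss class; not the library's own code."""

    def loss(self, y, y_pred):
        # Element-wise squared error, halved so the gradient has no factor of 2
        return 0.5 * np.power(y - y_pred, 2)

    def gradient(self, y, y_pred):
        # Derivative of the loss with respect to y_pred
        return -(y - y_pred)

    def acc(self, y, y_pred):
        # Assumption: accuracy is not meaningful for regression, so return 0
        return 0


loss_fn = SquareLossSketch()
y = np.array([[1.0], [2.0]])
y_pred = np.array([[1.5], [1.0]])

# Same reduction that train_on_batch and test_on_batch apply
mean_loss = np.mean(loss_fn.loss(y, y_pred))
grad = loss_fn.gradient(y, y_pred)
```

Passing the class rather than an instance means the network owns its loss object, at the cost of not being able to configure the loss through constructor arguments.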

    def set_trainable(self, trainable):
        """ Method which enables freezing of the weights of the network's layers. """
        for layer in self.layers:
            layer.trainable = trainable

    def add(self, layer):
        """ Method which adds a layer to the neural network """
        # If this is not the first layer added then set the input shape
        # to the output shape of the last added layer
        if self.layers:
            layer.set_input_shape(shape=self.layers[-1].output_shape())

        # If the layer has weights that need to be initialized
        if hasattr(layer, "initialize"):
            layer.initialize(optimizer=self.optimizer)

        # Add layer to the network
        self.layers.append(layer)
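To make the shape chaining in `add` concrete, here is a minimal sketch with a hypothetical `DenseSketch` layer that exposes only the three methods `add` relies on (`set_input_shape`, `output_shape`, `initialize`). The layer class, the `add_layer` helper and the placeholder optimizer string are assumptions for illustration; real layers would allocate weights inside `initialize`.

```python
class DenseSketch:
    """Hypothetical layer exposing the methods that add() relies on."""

    def __init__(self, n_units, input_shape=None):
        self.n_units = n_units
        self.input_shape = input_shape
        self.initialized_with = None

    def set_input_shape(self, shape):
        self.input_shape = shape

    def output_shape(self):
        return (self.n_units,)

    def initialize(self, optimizer):
        # A real layer would allocate weights of shape (input_dim, n_units) here
        self.initialized_with = optimizer


def add_layer(layers, layer, optimizer):
    """Mirrors the logic of add() on a plain list of layers."""
    if layers:
        layer.set_input_shape(shape=layers[-1].output_shape())
    if hasattr(layer, "initialize"):
        layer.initialize(optimizer=optimizer)
    layers.append(layer)


layers = []
add_layer(layers, DenseSketch(16, input_shape=(8,)), optimizer="sgd-placeholder")
add_layer(layers, DenseSketch(4), optimizer="sgd-placeholder")

# Each entry pairs a layer's input shape with its output shape
shapes = [(layer.input_shape, layer.output_shape()) for layer in layers]
```

Only the first layer needs an explicit `input_shape`; every later layer inherits its input shape from the output of the one before it.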

    def test_on_batch(self, X, y):
        """ Evaluates the model over a single batch of samples """
        y_pred = self._forward_pass(X, training=False)
        loss = np.mean(self.loss_function.loss(y, y_pred))
        acc = self.loss_function.acc(y, y_pred)

        return loss, acc

    def train_on_batch(self, X, y):
        """ Single gradient update over one batch of samples """
        y_pred = self._forward_pass(X)
        loss = np.mean(self.loss_function.loss(y, y_pred))
        acc = self.loss_function.acc(y, y_pred)
        # Calculate the gradient of the loss function wrt y_pred
        loss_grad = self.loss_function.gradient(y, y_pred)
        # Backpropagate. Update weights
        self._backward_pass(loss_grad=loss_grad)

        return loss, acc
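`train_on_batch` calls `_forward_pass` and `_backward_pass`, which are not shown in this excerpt. A plausible reading is that the forward pass feeds the input through the layers in order, and the backward pass hands the loss gradient through the layers in reverse, letting each layer update its own weights. The sketch below works under that assumption; `LinearSketch`, its `forward_pass`/`backward_pass` method names and the plain gradient-descent update are hypothetical and stand in for whatever the real layers and optimizer do.

```python
import numpy as np


class LinearSketch:
    """Hypothetical layer: y = X @ W, updated with plain gradient descent."""

    def __init__(self, n_in, n_out, learning_rate=0.1):
        self.W = np.zeros((n_in, n_out))
        self.learning_rate = learning_rate
        self.trainable = True
        self.layer_input = None

    def forward_pass(self, X, training=True):
        self.layer_input = X
        return X @ self.W

    def backward_pass(self, accum_grad):
        # Gradient to hand to the previous layer, computed before W changes
        grad_input = accum_grad @ self.W.T
        if self.trainable:
            self.W -= self.learning_rate * (self.layer_input.T @ accum_grad)
        return grad_input


def forward_pass(layers, X, training=True):
    out = X
    for layer in layers:
        out = layer.forward_pass(out, training)
    return out


def backward_pass(layers, loss_grad):
    for layer in reversed(layers):
        loss_grad = layer.backward_pass(loss_grad)


X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
y = X @ np.array([[2.0], [-1.0]])
layers = [LinearSketch(2, 1)]

losses = []
for _ in range(50):
    y_pred = forward_pass(layers, X)
    losses.append(np.mean(0.5 * (y - y_pred) ** 2))
    # Gradient of the halved squared error with respect to y_pred
    backward_pass(layers, loss_grad=-(y - y_pred))
```

The `trainable` flag checked in `backward_pass` is where a method like `set_trainable` would take effect: a frozen layer still propagates gradients but skips its own weight update.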

    def fit(self, X, y, n_epochs, batch_size):
        """ Trains the model for a fixed number of epochs """
        for _ in self.progressbar(range(n_epochs)):

            batch_error = []
            for X_batch, y_batch in batch_iterator(X, y, batch_size=batch_size):
                loss, _ = self.train_on_batch(X_batch, y_batch)
                batch_error.append(loss)

            self.errors["training"].append(np.mean(batch_error))

            if self.val_set is not None:
                val_loss, _ = self.test_on_batch(self.val_set["X"], self.val_set["y"])
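The `fit` loop depends on a `batch_iterator` helper whose definition is not part of this excerpt. The following is a minimal sketch of how such a generator could work, assuming it slices `X` and `y` into consecutive chunks without shuffling. The name `batch_iterator_sketch` and the default `batch_size` are assumptions.

```python
import numpy as np


def batch_iterator_sketch(X, y=None, batch_size=64):
    """Yield consecutive mini-batches; the last one may be smaller."""
    n_samples = X.shape[0]
    for start in range(0, n_samples, batch_size):
        end = min(start + batch_size, n_samples)
        if y is not None:
            yield X[start:end], y[start:end]
        else:
            yield X[start:end]


X = np.arange(10).reshape(5, 2)
y = np.arange(5)

# Five samples with batch_size=2 give batches of 2, 2 and 1 samples
batch_sizes = [len(X_batch) for X_batch, _ in batch_iterator_sketch(X, y, batch_size=2)]
```

Because the final batch can be smaller than the others, averaging per-batch losses as `fit` does weights that last batch slightly more per sample than the rest.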

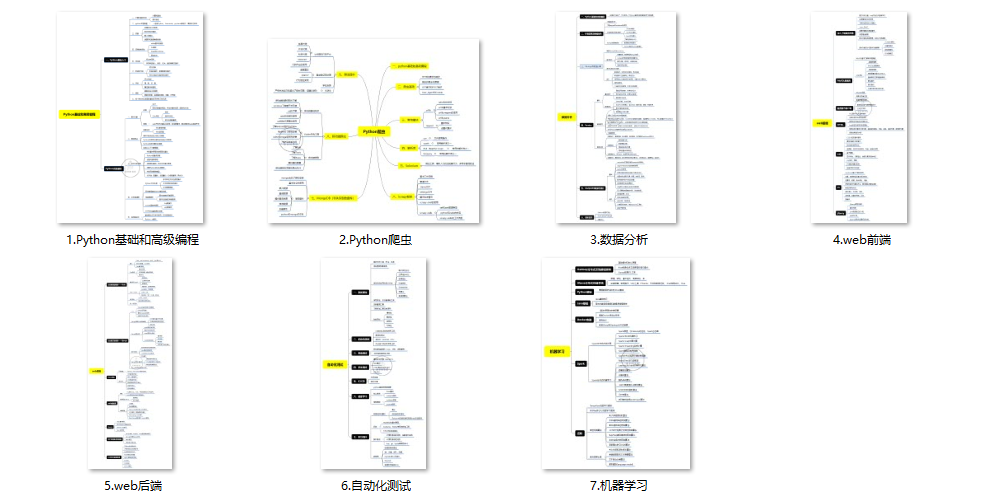

(1)Python所有方向的学习路线(新版)

这是我花了几天的时间去把Python所有方向的技术点做的整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

最近我才对这些路线做了一下新的更新,知识体系更全面了。

(2)Python学习视频

包含了Python入门、爬虫、数据分析和web开发的学习视频,总共100多个,虽然没有那么全面,但是对于入门来说是没问题的,学完这些之后,你可以按照我上面的学习路线去网上找其他的知识资源进行进阶。

(3)100多个练手项目

我们在看视频学习的时候,不能光动眼动脑不动手,比较科学的学习方法是在理解之后运用它们,这时候练手项目就很适合了,只是里面的项目比较多,水平也是参差不齐,大家可以挑自己能做的项目去练练。

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

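This article introduces the key components used to train a neural network: the optimizer (for example SGD), the loss function (for example mean squared error or cross-entropy), the validation data, and the training and testing procedures. It also covers how to mark layers as trainable, how to add new layers, and how to train in batches.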
