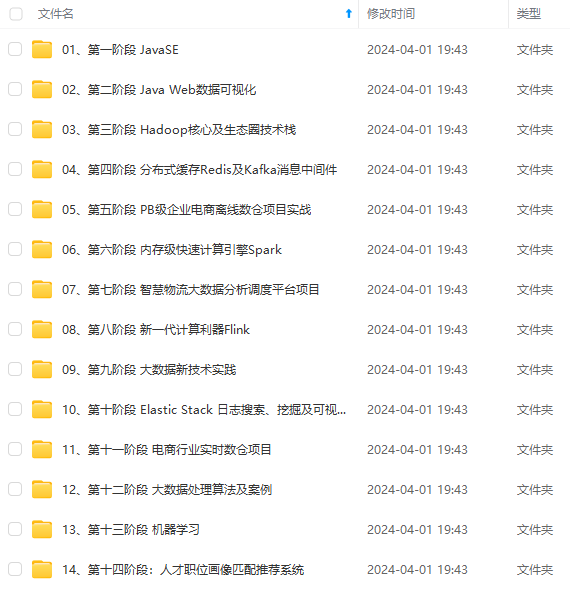

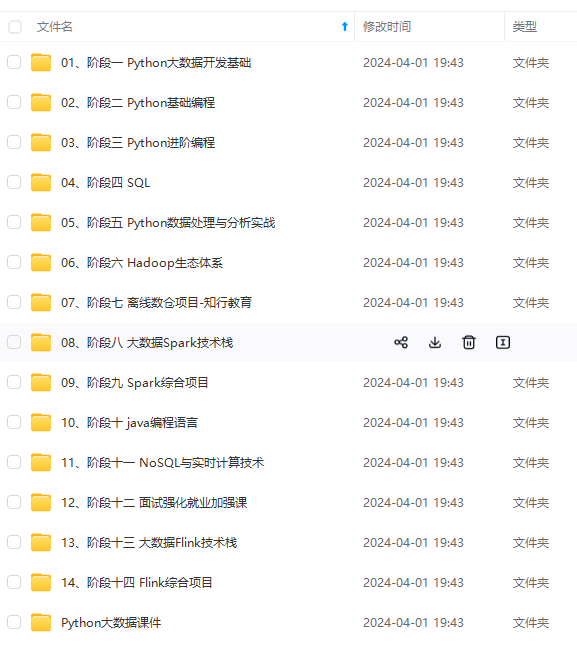

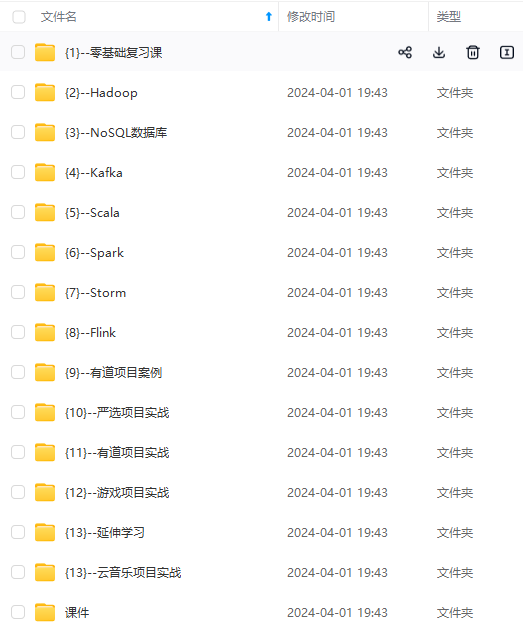

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

<!-- json -->

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>${fastjson.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-java -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.12</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-clients -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- ================ External dependencies ================ -->

<!-- Logging framework: start -->

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-slf4j-impl</artifactId>

<version>${log4j.version}</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-api</artifactId>

<version>${log4j.version}</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>${log4j.version}</version>

</dependency>

<!-- Logging framework: end -->

<!-- Kafka dependency: start -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka</artifactId>

<version>3.0.2-1.18</version>

</dependency>

<!-- Kafka dependency: end -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-base</artifactId>

<version>1.18.0</version>

</dependency>

</dependencies>

<!-- Build and packaging -->

<build>

<finalName>${project.name}</finalName>

<!-- Package resource files -->

<resources>

<resource>

<directory>src/main/resources</directory>

</resource>

<resource>

<directory>src/main/java</directory>

<includes>

<include>**/*.xml</include>

</includes>

</resource>

</resources>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>3.1.1</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<artifactSet>

<excludes>

<exclude>org.apache.flink:force-shading</exclude>

<exclude>com.google.code.findbugs:jsr305</exclude>

<exclude>org.slf4j:*</exclude>

<exclude>org.apache.logging.log4j:*</exclude>

</excludes>

</artifactSet>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass>com.aurora.KafkaStreamingJob</mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

<!-- Centralized plugin management -->

<pluginManagement>

<plugins>

<!-- Maven packaging plugin -->

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

<version>${spring.boot.version}</version>

<configuration>

<fork>true</fork>

<finalName>${project.build.finalName}</finalName>

</configuration>

<executions>

<execution>

<goals>

<goal>repackage</goal>

</goals>

</execution>

</executions>

</plugin>

<!-- Compiler plugin -->

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<version>${maven.plugin.version}</version>

<configuration>

<source>${java.version}</source>

<target>${java.version}</target>

<encoding>UTF-8</encoding>

<compilerArgs>

<arg>-parameters</arg>

</compilerArgs>

</configuration>

</plugin>

</plugins>

</pluginManagement>

</build>

<!-- Remote repositories used by this Maven project -->

<repositories>

<repository>

<id>aliyun-repos</id>

<url>https://maven.aliyun.com/nexus/content/groups/public/</url>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

</repositories>

<!-- Remote repositories for Maven plugins -->

<pluginRepositories>

<pluginRepository>

<id>aliyun-plugin</id>

<url>https://maven.aliyun.com/nexus/content/groups/public/</url>

<snapshots>

<enabled>false</enabled>

</snapshots>

</pluginRepository>

</pluginRepositories>

### 6.3 Configuration files

(1) application.properties

#Kafka cluster address

kafka.bootstrapServers=localhost:9092

#Kafka topic

kafka.topic=topic_a

#Kafka consumer group

kafka.group=aurora_group
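As a rough illustration of how these three keys might be read and validated before the job starts, here is a minimal sketch that uses only `java.util.Properties`. The class `KafkaJobConfig` and its `parse` method are hypothetical names introduced for this example; they are not part of the project or of Flink.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

/** Hypothetical holder for the three Kafka settings in application.properties. */
final class KafkaJobConfig {
    final String bootstrapServers;
    final String topic;
    final String group;

    private KafkaJobConfig(String bootstrapServers, String topic, String group) {
        this.bootstrapServers = bootstrapServers;
        this.topic = topic;
        this.group = group;
    }

    /** Parses properties-format text and fails fast if a required key is missing. */
    static KafkaJobConfig parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            // A StringReader does not fail in practice; wrap to keep the API unchecked.
            throw new UncheckedIOException(e);
        }
        return new KafkaJobConfig(
                require(props, "kafka.bootstrapServers"),
                require(props, "kafka.topic"),
                require(props, "kafka.group"));
    }

    /** Returns the trimmed value for the key, or throws if it is absent or blank. */
    private static String require(Properties props, String key) {
        String value = props.getProperty(key);
        if (value == null || value.trim().isEmpty()) {
            throw new IllegalArgumentException("Missing required property: " + key);
        }
        return value.trim();
    }

    public static void main(String[] args) {
        String text = String.join("\n",
                "#Kafka cluster address",
                "kafka.bootstrapServers=localhost:9092",
                "kafka.topic=topic_a",
                "kafka.group=aurora_group");
        KafkaJobConfig config = parse(text);
        System.out.println(config.bootstrapServers + " " + config.topic + " " + config.group);
    }
}
```

Failing fast on a missing key surfaces a configuration mistake at startup instead of as a null value later inside the connector builder.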

(2) log4j2.properties

rootLogger.level=INFO

rootLogger.appenderRef.console.ref=ConsoleAppender

appender.console.name=ConsoleAppender

appender.console.type=CONSOLE

appender.console.layout.type=PatternLayout

appender.console.layout.pattern=%d{HH:mm:ss,SSS} %-5p %-60c %x - %m%n

log.file=D:\\tmp
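In a `.properties` file the backslash is an escape character, so a Windows path needs either a doubled backslash or forward slashes. The sketch below uses only `java.util.Properties` to show how each spelling is read back; the class name `PropertiesEscapeDemo` and its `read` helper are hypothetical and exist only for this illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

/** Hypothetical demo of how backslashes in a properties value are interpreted. */
final class PropertiesEscapeDemo {

    /** Loads one line of properties text and returns the value of log.file. */
    static String read(String line) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(line));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty("log.file");
    }

    public static void main(String[] args) {
        // File content: log.file=D:\tmp  (the \t is read as a tab character)
        String tabbed = read("log.file=D:\\tmp");
        // File content: log.file=D:\\tmp (read as a literal backslash)
        String escaped = read("log.file=D:\\\\tmp");
        // File content: log.file=D:/tmp  (no escaping involved)
        String slashes = read("log.file=D:/tmp");

        System.out.println("single backslash contains a tab: " + tabbed.contains("\t"));
        System.out.println("doubled backslash: " + escaped);
        System.out.println("forward slash: " + slashes);
    }
}
```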

### 6.4 Creating the sink job

package com.aurora;

import org.apache.flink.api.common.eventtime.WatermarkStrategy;
import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.serialization.SimpleStringSchema;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.connector.base.DeliveryGuarantee;
import org.apache.flink.connector.kafka.sink.KafkaRecordSerializationSchema;
import org.apache.flink.connector.kafka.sink.KafkaSink;
import org.apache.flink.connector.kafka.source.KafkaSource;
import org.apache.flink.connector.kafka.source.KafkaSourceBuilder;
import org.apache.flink.connector.kafka.source.enumerator.initializer.OffsetsInitializer;
import org.apache.flink.runtime.state.StateBackend;
import org.apache.flink.runtime.state.filesystem.FsStateBackend;
import org.apache.flink.streaming.api.CheckpointingMode;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.CheckpointConfig;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.util.Collector;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import java.util.ArrayList;

/**
 * Demo job showing how to use the Kafka connector as a sink.
 *
 * @author 浅夏的猫
 * @datetime 22:21 2024/2/1
 */
public class KafkaSinkStreamingJob {

    private static final Logger logger = LoggerFactory.getLogger(KafkaSinkStreamingJob.class);

    public static void main(String[] args) throws Exception {

        //=============== 1. Load parameters ===============
        // Path to the configuration file
        String propertiesFilePath = "E:\\project\\aurora_dev\\aurora_flink_connector_kafka\\src\\main\\resources\\application.properties";
        // Option 1: use Flink's built-in ParameterTool
        ParameterTool paramsMap = ParameterTool.fromPropertiesFile(propertiesFilePath);

        //=============== 2. Initialize Kafka parameters ===============
        String bootstrapServers = paramsMap.get("kafka.bootstrapServers");
        String topic = paramsMap.get("kafka.topic");

        KafkaSink<String> sink = KafkaSink.<String>builder()
                // Kafka broker address
                .setBootstrapServers(bootstrapServers)
                // How records are serialized
                .setRecordSerializer(KafkaRecordSerializationSchema.builder()
                        .setTopic(topic)
                        .setValueSerializationSchema(new SimpleStringSchema())
                        .build()
                )
                // At-least-once delivery
                .setDeliveryGuarantee(DeliveryGuarantee.AT_LEAST_ONCE)
                .build();

        //=============== 4. Create the Flink execution environment ===============
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        ArrayList<String> listData = new ArrayList<>();
        listData.add("test");
        listData.add("java");
        listData.add("c++");
        DataStreamSource<String> dataStreamSource = env.fromCollection(listData);

        //=============== 5. Simple processing ===============
        SingleOutputStreamOperator<String> flatMap = dataStreamSource.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public void flatMap(String record, Collector<String> collector) throws Exception {
                logger.info("Processing Kafka record: {}", record);
                collector.collect(record);
            }
        });

        // Sink operator
        flatMap.sinkTo(sink);

        //=============== 6. Start the job ===============
        // Enable checkpointing: trigger a checkpoint every 1000 ms (the checkpoint interval)
        env.enableCheckpointing(1000);
        // Advanced checkpoint options
        // Use exactly-once checkpointing mode (this is also the default)
        env.getCheckpointConfig().setCheckpointingMode(CheckpointingMode.EXACTLY_ONCE);
        // Leave at least 500 ms between checkpoints (the minimum pause)
        env.getCheckpointConfig().setMinPauseBetweenCheckpoints(500);
        // A checkpoint must finish within 1 minute or it is discarded (the checkpoint timeout)
        env.getCheckpointConfig().setCheckpointTimeout(60000);
        // Allow only one checkpoint in progress at a time
        env.getCheckpointConfig().setMaxConcurrentCheckpoints(1);

        // The source listing is cut off at this point; the call below is an assumed
        // minimal completion so that the job actually runs.
        env.execute();
    }
}
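To make the interplay of the checkpoint interval, minimum pause, and timeout more concrete, here is a small arithmetic sketch. It is a simplified model under stated assumptions (one checkpoint in flight at a time, and the next checkpoint starting no earlier than both the interval and the minimum pause allow), not a reproduction of Flink's actual scheduler. The class and method names are hypothetical.

```java
/** Hypothetical, simplified model of the checkpoint timing settings used in the job. */
final class CheckpointTimingSketch {

    /**
     * Earliest start time (ms) of the next checkpoint, assuming it must respect
     * both the interval since the previous start and the minimum pause since
     * the previous checkpoint finished.
     */
    static long nextStart(long prevStart, long prevDuration, long interval, long minPause) {
        long byInterval = prevStart + interval;
        long byMinPause = prevStart + prevDuration + minPause;
        return Math.max(byInterval, byMinPause);
    }

    /** A checkpoint that runs longer than the timeout is treated as expired. */
    static boolean expires(long duration, long timeout) {
        return duration > timeout;
    }

    public static void main(String[] args) {
        long interval = 1000; // enableCheckpointing(1000)
        long minPause = 500;  // setMinPauseBetweenCheckpoints(500)
        long timeout = 60000; // setCheckpointTimeout(60000)

        // A fast checkpoint (200 ms): the interval decides the next start.
        System.out.println(nextStart(0, 200, interval, minPause));
        // A slow checkpoint (700 ms): the minimum pause pushes the next start back.
        System.out.println(nextStart(0, 700, interval, minPause));
        // A checkpoint that takes 61 s exceeds the 60 s timeout.
        System.out.println(expires(61000, timeout));
    }
}
```

With the values from the job, a checkpoint that finishes quickly leaves the 1000 ms interval in control, while one that takes longer than 500 ms causes the 500 ms minimum pause to delay the following checkpoint.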
