Homework Week 5, Coursera Machine Learning (Andrew Ng): Neural Networks: Learning

- 1 You are training a three layer neural network and would like to use backpropagation to compute the gradient of the cost function. In the backpropagation algorithm, one of the steps is to update $\Delta^{(2)}_{ij} := \Delta^{(2)}_{ij} + \delta^{(3)}_i \, (a^{(2)})_j$ for every $i, j$. Which of the following is a correct vectorization of this step?

- 2 Suppose Theta1 is a 5x3 matrix, and Theta2 is a 4x6 matrix. You set `thetaVec = [Theta1(:); Theta2(:)]`. Which of the following correctly recovers Theta2?

- 3 What value do you get?

- 4 Which of the following statements are true? Check all that apply.

- 5 Which of the following statements are true? Check all that apply.

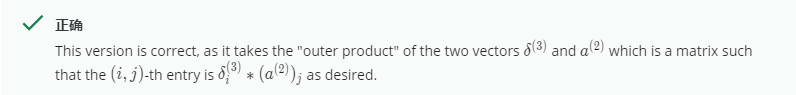

1 You are training a three layer neural network and would like to use backpropagation to compute the gradient of the cost function. In the backpropagation algorithm, one of the steps is to update $\Delta^{(2)}_{ij} := \Delta^{(2)}_{ij} + \delta^{(3)}_i \, (a^{(2)})_j$ for every $i, j$. Which of the following is a correct vectorization of this step?

Answer: C
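The answer options live in the image and are not reproduced here, so the following is only a minimal sketch of what "vectorizing" this kind of step means. It assumes the step is the usual accumulation $\Delta_{ij} := \Delta_{ij} + \delta_i a_j$, which in matrix form is $\Delta := \Delta + \delta a^T$ (an outer product). The shapes and numbers are made up for illustration.

```python
# Loop form vs. outer-product form of the accumulator update.
# Shapes are illustrative assumptions: 2 units in the next layer, 3 activations.

def accumulate_loop(Delta, delta, a):
    """Update Delta[i][j] += delta[i] * a[j] one entry at a time."""
    out = [row[:] for row in Delta]
    for i in range(len(delta)):
        for j in range(len(a)):
            out[i][j] += delta[i] * a[j]
    return out


def outer(u, v):
    """Outer product u * v^T as a list of rows."""
    return [[ui * vj for vj in v] for ui in u]


def accumulate_vectorized(Delta, delta, a):
    """Same update written as Delta + delta * a^T."""
    prod = outer(delta, a)
    return [[d + p for d, p in zip(d_row, p_row)]
            for d_row, p_row in zip(Delta, prod)]


Delta = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]
delta = [0.5, -2.0]
a = [1.0, 2.0, 3.0]

loop_result = accumulate_loop(Delta, delta, a)
vec_result = accumulate_vectorized(Delta, delta, a)
print(loop_result == vec_result)
```

Both functions produce the same matrix; the vectorized form just replaces the double loop with a single outer product.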

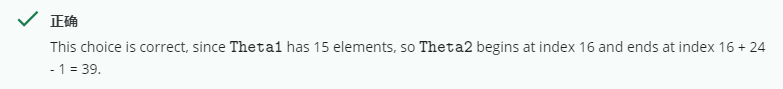

2 Suppose Theta1 is a 5x3 matrix, and Theta2 is a 4x6 matrix. You set `thetaVec = [Theta1(:); Theta2(:)]`. Which of the following correctly recovers Theta2?

Answer: A
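The index arithmetic behind this question can be checked by hand: Theta1 contributes 5 × 3 = 15 elements and Theta2 contributes 4 × 6 = 24, so in Octave's 1-based indexing Theta2 occupies elements 16 through 39 of the unrolled vector. The sketch below mimics Octave's column-major `(:)` and `reshape` in plain Python; the helper names `unroll` and `reshape` and the matrix contents are illustrative assumptions, not part of the quiz.

```python
def unroll(M):
    """Column-major flatten, mimicking Octave's M(:)."""
    rows, cols = len(M), len(M[0])
    return [M[r][c] for c in range(cols) for r in range(rows)]


def reshape(vec, rows, cols):
    """Column-major reshape, mimicking Octave's reshape(vec, rows, cols)."""
    if len(vec) != rows * cols:
        raise ValueError("element count does not match the requested shape")
    return [[vec[c * rows + r] for c in range(cols)] for r in range(rows)]


# Made-up contents; only the shapes (5x3 and 4x6) come from the question.
Theta1 = [[10 * r + c for c in range(3)] for r in range(5)]
Theta2 = [[100 + 10 * r + c for c in range(6)] for r in range(4)]

theta_vec = unroll(Theta1) + unroll(Theta2)

n1 = 5 * 3  # elements belonging to Theta1
n2 = 4 * 6  # elements belonging to Theta2

# Python slices are 0-based and end-exclusive, so [15:39] here
# corresponds to Octave's 1-based, inclusive range 16:39.
recovered_theta2 = reshape(theta_vec[n1:n1 + n2], 4, 6)
print(recovered_theta2 == Theta2)
```

The shape arguments matter as much as the index range: reshaping the same 24 elements as 6x4 would give a different matrix.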

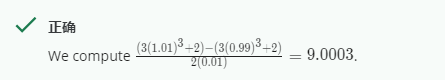

3 What value do you get?

Answer: D

4 Which of the following statements are true? Check all that apply.

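Explanation

A. Using gradient checking can help verify whether your implementation of backpropagation is bug-free. Correct.

B. Using a large value of λ cannot hurt the performance of your neural network; the only reason not to set λ too large is to avoid numerical problems. Incorrect: if λ is too large, the hypothesis flattens toward a straight line and the network underfits.

C. Gradient checking is useful if we are using gradient descent as our optimization algorithm, but it serves little purpose if we are using some other optimization method. Incorrect: gradient checking only verifies that the gradients computed for the network are correct. It can be used with any optimization algorithm, and there is no sense in which it works less well with more advanced ones.

D. If the network is overfitting, one reasonable step is to increase the regularization parameter λ. Correct: the purpose of regularization is to address overfitting.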

Answer: A, D
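To make statements A and C concrete, here is a minimal sketch of gradient checking with the two-sided difference $\frac{J(\theta + \epsilon) - J(\theta - \epsilon)}{2\epsilon}$. The cost function `J`, both gradient functions, and the value of `eps` are hypothetical stand-ins chosen for illustration; they are not taken from the quiz. Nothing in the check depends on which optimizer is used afterwards.

```python
def numerical_gradient(J, theta, eps=1e-4):
    """Two-sided finite-difference estimate of dJ/dtheta_i for each i."""
    grad = []
    for i in range(len(theta)):
        plus = theta[:]
        minus = theta[:]
        plus[i] += eps
        minus[i] -= eps
        grad.append((J(plus) - J(minus)) / (2 * eps))
    return grad


def J(theta):
    """Hypothetical cost: theta0^2 + theta1^2 + theta0 * theta1."""
    return theta[0] ** 2 + theta[1] ** 2 + theta[0] * theta[1]


def analytic_gradient(theta):
    """Hand-derived gradient of J (plays the role of backpropagation)."""
    return [2 * theta[0] + theta[1], 2 * theta[1] + theta[0]]


def buggy_gradient(theta):
    """Deliberately wrong gradient: the cross term is forgotten."""
    return [2 * theta[0], 2 * theta[1]]


def max_abs_diff(u, v):
    return max(abs(a - b) for a, b in zip(u, v))


theta = [1.0, -2.0]
numeric = numerical_gradient(J, theta)

diff_correct = max_abs_diff(numeric, analytic_gradient(theta))
diff_buggy = max_abs_diff(numeric, buggy_gradient(theta))
print(diff_correct < 1e-6, diff_buggy < 1e-6)
```

The correct gradient agrees with the numerical estimate to within rounding error, while the buggy one is off by a large margin, which is the kind of discrepancy the check is meant to expose.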

5 Which of the following statements are true? Check all that apply.

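Explanation

A. Suppose we have a three layer network with parameters Θ1 (controlling the mapping from the inputs to the hidden units) and Θ2 (controlling the mapping from the hidden units to the output layer). If we set all elements of Θ1 to 0 and all elements of Θ2 to 1, then symmetry is broken, since the neurons no longer all compute the same function of the input. Incorrect: every hidden unit still has identical incoming and outgoing weights, so they all compute the same function.

B. Suppose you are training a neural network using gradient descent. Depending on your random initialization, the algorithm may converge to a local optimum. Correct.

C. If you are training a neural network using gradient descent, one reasonable debugging step is to make sure that J(Θ) decreases on every iteration. Correct.

D. If we initialize all the parameters of a neural network to ones instead of zeros, this suffices to break symmetry because the parameters are no longer symmetrically equal to zero. Incorrect: any constant initialization leaves the hidden units identical to one another.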

Answer: B, C
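The symmetry argument behind statements A and D can be seen in a small forward pass. The sketch below is only an illustration under assumed shapes (2 inputs, 3 hidden units), an assumed sigmoid activation, and a made-up initialization range `eps_init`; it shows only the forward activations, not the gradient updates, which stay identical for the same reason.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def hidden_activations(Theta1, x):
    """Activation of each hidden unit for input x (bias 1.0 prepended)."""
    x_b = [1.0] + x
    return [sigmoid(sum(w * xi for w, xi in zip(row, x_b))) for row in Theta1]


x = [0.3, -1.2]          # made-up input
n_hidden, n_in = 3, 2    # assumed layer sizes

# Constant initialization: every hidden unit gets the same weights.
ones_init = [[1.0] * (n_in + 1) for _ in range(n_hidden)]

# Random initialization in a small range around zero.
rng = random.Random(0)
eps_init = 0.12  # illustrative choice, not prescribed by the quiz
random_init = [[rng.uniform(-eps_init, eps_init) for _ in range(n_in + 1)]
               for _ in range(n_hidden)]

a_ones = hidden_activations(ones_init, x)
a_rand = hidden_activations(random_init, x)

# Number of distinct activation values among the hidden units.
print(len(set(a_ones)), len(set(a_rand)))
```

With constant weights all hidden units output the same value, so the layer behaves like a single unit; with random weights each unit starts from a different function of the input.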
