OpenCV4Android Development Notes (2): Face Detection with the OpenCV 3.3.0 Library

Please credit the source when reposting: http://write.blog.csdn.net/postedit/78992490

The OpenCV4Android series:

1. OpenCV4Android Development Notes (1): Porting the OpenCV 3.3.0 Library to Android Studio

2. OpenCV4Android Development Notes (2): Face Detection with the OpenCV 3.3.0 Library

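The previous article, OpenCV4Android Development Notes (1): Porting the OpenCV 3.3.0 Library to Android Studio, gave an overview of the OpenCV library and walked through the steps for porting it into an Android Studio project. Building on that article, this one introduces the face detection module of the OpenCV framework and then uses it to implement face detection.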

I. Porting the Face Detection Module

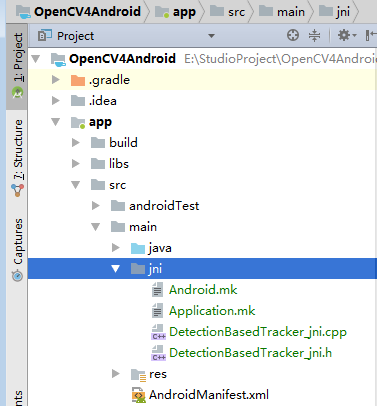

1. Copy the opencv-3.3.0-android-sdk\OpenCV-android-sdk\samples\face-detection\jni directory into the main directory of the project's app module (app/src/main).

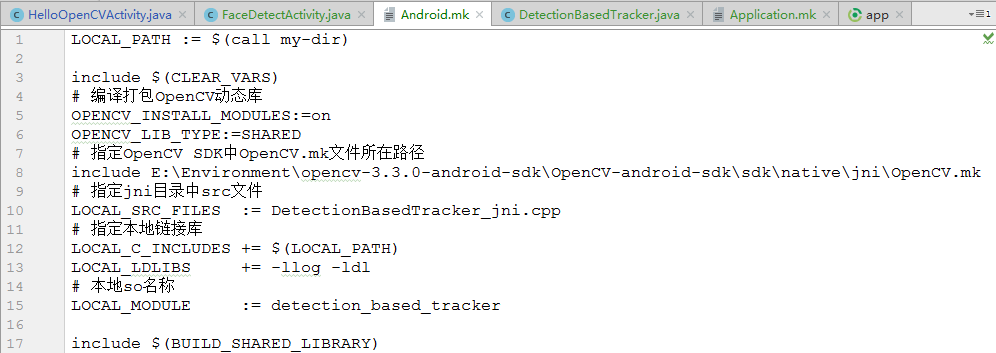

2. Modify Android.mk in the jni directory.

(1) Change

    #OPENCV_INSTALL_MODULES:=off
    #OPENCV_LIB_TYPE:=SHARED

to

    OPENCV_INSTALL_MODULES:=on
    OPENCV_LIB_TYPE:=SHARED

(2) Change

    ifdef OPENCV_ANDROID_SDK
      ifneq ("","$(wildcard $(OPENCV_ANDROID_SDK)/OpenCV.mk)")
        include ${OPENCV_ANDROID_SDK}/OpenCV.mk
      else
        include ${OPENCV_ANDROID_SDK}/sdk/native/jni/OpenCV.mk
      endif
    else
      include ../../sdk/native/jni/OpenCV.mk
    endif

to

    include E:\Environment\opencv-3.3.0-android-sdk\OpenCV-android-sdk\sdk\native\jni\OpenCV.mk

Here, the path after include is the absolute path of the OpenCV.mk file inside the OpenCV SDK on your machine. The final Android.mk looks like this:

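3. Modify Application.mk in the jni directory. Because only armeabi, armeabi-v7a and arm64-v8a were copied when importing the OpenCV libs, the target ABIs here are limited to those same three. Also set APP_PLATFORM to android-16 (adjust this to your own needs), as follows:

    APP_STL := gnustl_static
    APP_CPPFLAGS := -frtti -fexceptions
    # Target ABIs
    APP_ABI := armeabi armeabi-v7a arm64-v8a
    # Target Android platform level
    APP_PLATFORM := android-16

4. Modify DetectionBasedTracker_jni.h and DetectionBasedTracker_jni.cpp, replacing every occurrence of the prefix "Java_org_opencv_samples_facedetect_" with "Java_com_jiangdg_opencv4android_natives_". Here com.jiangdg.opencv4android.natives is the package of the Java class DetectionBasedTracker.java, which declares the face detection native methods. Without this change, calling into the .so library you built fails with an error saying the native function cannot be found (an UnsatisfiedLinkError). Taking DetectionBasedTracker_jni.h as an example: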

    /* DO NOT EDIT THIS FILE - it is machine generated */
    #include <jni.h>
    /* Header for class org_opencv_samples_fd_DetectionBasedTracker */

    #ifndef _Included_org_opencv_samples_fd_DetectionBasedTracker
    #define _Included_org_opencv_samples_fd_DetectionBasedTracker
    #ifdef __cplusplus
    extern "C" {
    #endif
    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeCreateObject
     * Signature: (Ljava/lang/String;I)J
     */
    JNIEXPORT jlong JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeCreateObject
      (JNIEnv *, jclass, jstring, jint);

    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeDestroyObject
     * Signature: (J)V
     */
    JNIEXPORT void JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeDestroyObject
      (JNIEnv *, jclass, jlong);

    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeStart
     * Signature: (J)V
     */
    JNIEXPORT void JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeStart
      (JNIEnv *, jclass, jlong);

    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeStop
     * Signature: (J)V
     */
    JNIEXPORT void JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeStop
      (JNIEnv *, jclass, jlong);

    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeSetFaceSize
     * Signature: (JI)V
     */
    JNIEXPORT void JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeSetFaceSize
      (JNIEnv *, jclass, jlong, jint);

    /*
     * Class: org_opencv_samples_fd_DetectionBasedTracker
     * Method: nativeDetect
     * Signature: (JJJ)V
     */
    JNIEXPORT void JNICALL Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeDetect
      (JNIEnv *, jclass, jlong, jlong, jlong);

    #ifdef __cplusplus
    }
    #endif
    #endif

5. Open the Terminal window in Android Studio, cd into the project's jni directory, and run ndk-build. This automatically creates libs and obj directories under the project's app/src/main directory; libs holds the target shared library libdetection_based_tracker.so, with one copy in each ABI subdirectory (libs/<abi>/).

Building the .so library:

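Note: if ndk-build is reported as an unknown command, your NDK environment variable is not set up correctly (the NDK root directory must be on your PATH).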
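The renaming in step 4 matters because, for native methods that are not registered explicitly through RegisterNatives, the VM (Android's runtime included) binds each Java native method to the exported C symbol whose name is derived from the fully qualified class name and the method name. The C++ sketch below reproduces that "short name" rule from the JNI specification, assuming ASCII identifiers only; overloaded methods (which append `__` plus the mangled argument descriptor) are not handled, and `jniEscape` and `jniSymbol` are hypothetical helpers written for illustration, not part of the NDK.

```cpp
#include <cctype>
#include <cstdio>
#include <string>

// Hypothetical helper: escapes one identifier per the JNI short-name rules.
// Assumes ASCII input; real JNI escapes non-ASCII characters as UTF-16 units (_0xxxx).
std::string jniEscape(const std::string& name) {
    std::string out;
    for (unsigned char c : name) {
        if (std::isalnum(c)) {
            out += static_cast<char>(c);          // letters and digits are kept as-is
        } else if (c == '.' || c == '/') {
            out += '_';                           // package separators become '_'
        } else if (c == '_') {
            out += "_1";                          // a literal underscore is escaped
        } else if (c == ';') {
            out += "_2";
        } else if (c == '[') {
            out += "_3";
        } else {
            char buf[8];
            std::snprintf(buf, sizeof buf, "_0%04x", static_cast<unsigned>(c));
            out += buf;                           // e.g. '$' becomes "_00024"
        }
    }
    return out;
}

// Hypothetical helper: the symbol looked up for a non-overloaded native method.
std::string jniSymbol(const std::string& qualifiedClass, const std::string& method) {
    return "Java_" + jniEscape(qualifiedClass) + "_" + jniEscape(method);
}
```

For example, `jniSymbol("com.jiangdg.opencv4android.natives.DetectionBasedTracker", "nativeDetect")` yields `Java_com_jiangdg_opencv4android_natives_DetectionBasedTracker_nativeDetect`, the name declared in the header above; a library that still exports the `Java_org_opencv_samples_facedetect_` names offers symbols the VM never looks up for this package.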
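Steps 3 and 5 are linked: ndk-build builds the module once for every ABI listed in APP_ABI, and each of those ABIs also needs a matching copy of the OpenCV native libraries in the project. The C++ sketch below models that relationship under stated assumptions: the helper names are hypothetical, the module is assumed to be named detection_based_tracker (consistent with libdetection_based_tracker.so), and outputs are assumed to land in ndk-build's default libs/<abi>/ directory.

```cpp
#include <algorithm>
#include <sstream>
#include <string>
#include <vector>

// Splits a whitespace-separated APP_ABI value such as "armeabi armeabi-v7a arm64-v8a".
std::vector<std::string> splitAbis(const std::string& appAbi) {
    std::istringstream in(appAbi);
    std::vector<std::string> abis;
    for (std::string abi; in >> abi;) {
        abis.push_back(abi);
    }
    return abis;
}

// Expected default ndk-build outputs: libs/<abi>/lib<module>.so
// (assumes the module name does not already start with "lib").
std::vector<std::string> expectedOutputs(const std::string& appAbi, const std::string& module) {
    std::vector<std::string> paths;
    for (const auto& abi : splitAbis(appAbi)) {
        paths.push_back("libs/" + abi + "/lib" + module + ".so");
    }
    return paths;
}

// ABIs requested in APP_ABI for which no OpenCV native libraries were copied.
std::vector<std::string> abisWithoutOpenCv(const std::string& appAbi,
                                           const std::vector<std::string>& copied) {
    std::vector<std::string> missing;
    for (const auto& abi : splitAbis(appAbi)) {
        if (std::find(copied.begin(), copied.end(), abi) == copied.end()) {
            missing.push_back(abi);
        }
    }
    return missing;
}
```

With `APP_ABI := armeabi armeabi-v7a arm64-v8a`, `expectedOutputs` lists one libdetection_based_tracker.so per ABI directory, and `abisWithoutOpenCv` returns an empty list for the three ABIs copied in the previous article; adding x86 to APP_ABI without also copying the x86 OpenCV libs would make it report x86.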

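6. In the app module's build.gradle, modify the sourceSets field to stop Gradle from invoking ndk-build automatically and to set the directory where the target .so files are stored. The code is as follows:

    android {
        compileSdkVersion 25
        defaultConfig {
            applicationId "com.jiangdg.opencv4android"
            minSdkVersion 15
            targetSdkVersion 25
            versionCode 1
            versionName "1.0"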

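This article records in detail the steps for implementing face detection with the OpenCV 3.3.0 library in an OpenCV4Android environment, including porting the library and modifying Android.mk and Application.mk. It then describes how to upgrade to OpenCV 3.4.1 and build with CMake, which removes the need to compile the .so by hand and fixes the missing code completion. A source code download link is provided.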
