Summary: This article shows how to build a WordCount example with Storm.

1. Directory structure

2. POM file

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.lin</groupId>
    <artifactId>Storm-Demo</artifactId>
    <version>0.0.1-SNAPSHOT</version>

    <dependencies>
        <dependency>
            <groupId>org.apache.storm</groupId>
            <artifactId>storm-core</artifactId>
            <version>0.9.5</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>commons-io</groupId>
            <artifactId>commons-io</artifactId>
            <version>2.4</version>
        </dependency>
    </dependencies>

    <build>
        <finalName>${project.artifactId}</finalName>
        <resources>
            <resource>
                <directory>src/main/resources</directory>
                <filtering>true</filtering>
            </resource>
        </resources>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>2.5.1</version>
                <configuration>
                    <source>1.7</source>
                    <target>1.7</target>
                    <encoding>utf-8</encoding>
                </configuration>
            </plugin>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-shade-plugin</artifactId>
                <version>2.3</version>
                <executions>
                    <execution>
                        <phase>package</phase>
                        <goals>
                            <goal>shade</goal>
                        </goals>
                        <configuration>
                            <transformers>
                                <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                                    <resource>META-INF/spring.handlers</resource>
                                </transformer>
                                <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                                    <resource>META-INF/spring.schemas</resource>
                                </transformer>
                                <transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                                    <mainClass>com.lin.topology.WordCountTopology</mainClass>
                                </transformer>
                            </transformers>
                        </configuration>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>
```

3. Spout

```java
package com.lin.spout;

import java.util.Map;
import java.util.Random;

import backtype.storm.spout.SpoutOutputCollector;
import backtype.storm.task.TopologyContext;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseRichSpout;
import backtype.storm.tuple.Fields;
import backtype.storm.tuple.Values;
import backtype.storm.utils.Utils;

/**
 * Emits a randomly chosen sentence roughly every 100 ms on the "line" field.
 */
public class WordReaderSpout extends BaseRichSpout {

    private static final long serialVersionUID = 2197521792014017918L;

    // Fixed sample sentences, created once instead of on every nextTuple() call.
    private static final String[] SENTENCES = new String[] {
            "the cow jumped over the moon",
            "an apple a day keeps the doctor away",
            "four score and seven years ago",
            "snow white and the seven dwarfs",
            "i am at two with nature" };

    private SpoutOutputCollector collector;
    private Random rand;

    @SuppressWarnings("rawtypes")
    public void open(Map conf, TopologyContext context, SpoutOutputCollector collector) {
        this.collector = collector;
        this.rand = new Random(); // one generator per spout instance, created on the worker
    }

    public void nextTuple() {
        Utils.sleep(100); // throttle emission
        String line = SENTENCES[rand.nextInt(SENTENCES.length)];
        collector.emit(new Values(line));
    }

    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        declarer.declare(new Fields("line"));
    }
}
```

4. Bolt

Word splitting:

```java
package com.lin.bolt;

import backtype.storm.topology.BasicOutputCollector;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseBasicBolt;
import backtype.storm.tuple.Fields;
import backtype.storm.tuple.Tuple;
import backtype.storm.tuple.Values;

/**
 * Splits each incoming line into lower-case words and emits one tuple per word.
 */
public class WordSpliterBolt extends BaseBasicBolt {

    private static final long serialVersionUID = -5653803832498574866L;

    public void execute(Tuple input, BasicOutputCollector collector) {
        String line = input.getString(0);
        for (String word : line.split("\\s+")) {
            word = word.trim();
            // Plain isEmpty() check instead of commons-lang StringUtils.isNotBlank:
            // the POM declares commons-io, not commons-lang.
            if (!word.isEmpty()) {
                collector.emit(new Values(word.toLowerCase()));
            }
        }
    }

    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        declarer.declare(new Fields("word"));
    }
}
```
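The following plain-Java sketch has no Storm dependency and shows what the spout → splitter → counter chain computes for a single sentence. It applies the splitter's rule (split on `\\s+`, skip empty tokens, lowercase) and then tallies words the same way the counter bolt does. The class and method names are hypothetical and exist only for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class WordSplitSketch {

    // Same rule as WordSpliterBolt: split on whitespace, drop empty tokens, lowercase.
    public static List<String> split(String line) {
        List<String> words = new ArrayList<String>();
        for (String token : line.split("\\s+")) {
            String word = token.trim();
            if (!word.isEmpty()) {
                words.add(word.toLowerCase());
            }
        }
        return words;
    }

    // Same rule as WordCounterBolt: increment a per-word counter (sorted here for readability).
    public static Map<String, Integer> count(List<String> words) {
        Map<String, Integer> counters = new TreeMap<String, Integer>();
        for (String word : words) {
            Integer c = counters.get(word);
            counters.put(word, c == null ? 1 : c + 1);
        }
        return counters;
    }

    public static void main(String[] args) {
        List<String> words = split("the cow jumped over the moon");
        System.out.println(words);
        System.out.println(count(words));
    }
}
```

Running `main` prints `[the, cow, jumped, over, the, moon]` followed by `{cow=1, jumped=1, moon=1, over=1, the=2}`. In the real topology the same work is spread across tuples and components instead of happening in a single method call.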

Word counting:

```java
package com.lin.bolt;

import java.util.Map;
import java.util.Map.Entry;
import java.util.concurrent.ConcurrentHashMap;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import backtype.storm.task.TopologyContext;
import backtype.storm.topology.BasicOutputCollector;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseBasicBolt;
import backtype.storm.tuple.Tuple;

/**
 * Counts words in memory. A background thread logs all counts, checking once per second
 * whether they have changed.
 */
public class WordCounterBolt extends BaseBasicBolt {

    private static final Logger logger = LoggerFactory.getLogger(WordCounterBolt.class);

    private static final long serialVersionUID = 5683648523524179434L;

    // The reporter thread iterates this map while execute() writes to it. A plain HashMap
    // can throw ConcurrentModificationException in that situation, so use ConcurrentHashMap.
    private ConcurrentHashMap<String, Integer> counters = new ConcurrentHashMap<String, Integer>();

    private volatile boolean edit = false;

    @Override
    @SuppressWarnings("rawtypes")
    public void prepare(Map stormConf, TopologyContext context) {
        Thread reporter = new Thread(new Runnable() {
            public void run() {
                while (!Thread.currentThread().isInterrupted()) {
                    if (edit) {
                        edit = false; // clear before printing so updates made during printing are not lost
                        logger.info("<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<WordCounter result>>>>>>>>>>>>>>>>>>>>>>>>>>>>");
                        for (Entry<String, Integer> entry : counters.entrySet()) {
                            logger.info("<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<Word:{},Count:{}>>>>>>>>>>>>>>>>>>>>>>>>>>>>", entry.getKey(), entry.getValue());
                        }
                    }
                    try {
                        Thread.sleep(1000);
                    } catch (InterruptedException e) {
                        return; // stop reporting when the thread is interrupted
                    }
                }
            }
        });
        reporter.setDaemon(true); // do not keep the worker JVM alive just for logging
        reporter.start();
    }

    public void execute(Tuple input, BasicOutputCollector collector) {
        String str = input.getString(0);
        // Only execute() writes to the map, so this get-then-put is not racing another writer.
        Integer c = counters.get(str);
        counters.put(str, c == null ? 1 : c + 1);
        edit = true;
        logger.info("<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<WordCounter to add string ={} >>>>>>>>>>>>>>>>>>>>>>>>>>>>", str);
    }

    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        // Terminal bolt: declares no output fields.
    }
}
```
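The counter bolt updates its map in `execute()` while the thread started in `prepare()` iterates over the same map. That map therefore has to tolerate concurrent access. The Storm-free sketch below isolates this pattern: one side adds words, and a background reporter periodically prints a snapshot. It is a minimal sketch under stated assumptions. `ReportingCounter` and its methods are hypothetical names. It uses Java 8 features (lambdas, `computeIfAbsent`), unlike the Java 1.7 target in the POM. It also prints on every tick, whereas the bolt prints only when `edit` is set.

```java
import java.util.Map;
import java.util.TreeMap;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

public class ReportingCounter {

    // ConcurrentHashMap may be iterated safely while other threads insert keys.
    private final ConcurrentHashMap<String, AtomicInteger> counters = new ConcurrentHashMap<>();

    // Single background thread for reporting; daemon so it never keeps the JVM alive.
    private final ScheduledExecutorService reporter = Executors.newSingleThreadScheduledExecutor(r -> {
        Thread t = new Thread(r, "word-count-reporter");
        t.setDaemon(true);
        return t;
    });

    // Counterpart of execute(): one call per incoming word; safe from any number of threads.
    public void add(String word) {
        counters.computeIfAbsent(word, k -> new AtomicInteger()).incrementAndGet();
    }

    // Sorted point-in-time copy of the counts.
    public Map<String, Integer> snapshot() {
        Map<String, Integer> copy = new TreeMap<>();
        for (Map.Entry<String, AtomicInteger> e : counters.entrySet()) {
            copy.put(e.getKey(), e.getValue().get());
        }
        return copy;
    }

    // Counterpart of the thread started in prepare(): print counts every periodMillis ms.
    public void startReporting(long periodMillis) {
        reporter.scheduleAtFixedRate(() -> System.out.println("WordCounter result: " + snapshot()),
                periodMillis, periodMillis, TimeUnit.MILLISECONDS);
    }

    public void stop() {
        reporter.shutdownNow();
    }

    public static void main(String[] args) throws InterruptedException {
        ReportingCounter counter = new ReportingCounter();
        counter.startReporting(100);
        for (String w : "the cow jumped over the moon".split(" ")) {
            counter.add(w);
        }
        Thread.sleep(250); // give the reporter time to print at least once
        counter.stop();
        System.out.println(counter.snapshot());
    }
}
```

Using `AtomicInteger` values makes increments safe even with several writer threads. In the bolt itself only the executor thread writes, so a `ConcurrentHashMap<String, Integer>` is enough.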

5. Topology

```java
package com.lin.topology;

import backtype.storm.Config;
import backtype.storm.LocalCluster;
import backtype.storm.StormSubmitter;
import backtype.storm.generated.AlreadyAliveException;
import backtype.storm.generated.InvalidTopologyException;
import backtype.storm.topology.TopologyBuilder;

import com.lin.bolt.WordCounterBolt;
import com.lin.bolt.WordSpliterBolt;
import com.lin.spout.WordReaderSpout;

/**
 * Summary: wires spout -> splitter -> counter and runs the topology locally,
 * or on a cluster when "cluster" is passed as the first argument.
 *
 * @author linbingwen
 * @since 2016-08-28
 */
public class WordCountTopology {

    public static void main(String[] args) throws AlreadyAliveException, InvalidTopologyException {
        TopologyBuilder builder = new TopologyBuilder();
        builder.setSpout("word-reader", new WordReaderSpout());
        builder.setBolt("word-spilter", new WordSpliterBolt()).shuffleGrouping("word-reader");
        // The default parallelism of 1 gives a single counter task, so shuffle grouping
        // still produces complete counts here.
        builder.setBolt("word-counter", new WordCounterBolt()).shuffleGrouping("word-spilter");

        Config conf = new Config();
        conf.setDebug(true);

        if (args.length > 0 && "cluster".equals(args[0])) {
            // Cluster mode: launch through the "jar" command shown in section 6.
            StormSubmitter.submitTopology("Cluster-Storm-Demo", conf, builder.createTopology());
        } else {
            // Local mode: runs in-process and keeps running until the JVM is stopped.
            LocalCluster cluster = new LocalCluster();
            cluster.submitTopology("Local-Storm-Demo", conf, builder.createTopology());
        }
    }
}
```
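The counter is attached with `shuffleGrouping`. That is only correct while there is a single counter task. If the counter were given a parallelism hint greater than 1, shuffle grouping would spread copies of the same word across tasks, and each task would hold only a partial count. In Storm the usual remedy is fields grouping on the word, for example `builder.setBolt("word-counter", new WordCounterBolt(), 2).fieldsGrouping("word-spilter", new Fields("word"))`. The parallelism of 2 is a hypothetical value, and this line needs `backtype.storm.tuple.Fields`. The Storm-free sketch below simulates both routing strategies. It is a simplified model: round-robin stands in for shuffle, and `String.hashCode()` modulo the task count stands in for Storm's real field hash. All names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class GroupingSketch {

    // Simplified fields grouping: the same word always maps to the same task index.
    public static int fieldsTask(String word, int numTasks) {
        return Math.floorMod(word.hashCode(), numTasks);
    }

    // Simplified shuffle grouping: tuples are spread round-robin, ignoring their content.
    public static int shuffleTask(int tupleIndex, int numTasks) {
        return tupleIndex % numTasks;
    }

    // Routes each word to a task and counts per task; returns one count map per task.
    public static List<Map<String, Integer>> route(List<String> words, int numTasks, boolean byField) {
        List<Map<String, Integer>> tasks = new ArrayList<Map<String, Integer>>();
        for (int i = 0; i < numTasks; i++) {
            tasks.add(new HashMap<String, Integer>());
        }
        for (int i = 0; i < words.size(); i++) {
            String w = words.get(i);
            int t = byField ? fieldsTask(w, numTasks) : shuffleTask(i, numTasks);
            Map<String, Integer> m = tasks.get(t);
            Integer c = m.get(w);
            m.put(w, c == null ? 1 : c + 1);
        }
        return tasks;
    }

    // Number of tasks that hold a (possibly partial) count for the given word.
    public static int tasksHolding(List<Map<String, Integer>> tasks, String word) {
        int n = 0;
        for (Map<String, Integer> m : tasks) {
            if (m.containsKey(word)) {
                n++;
            }
        }
        return n;
    }

    public static void main(String[] args) {
        List<String> words = Arrays.asList("the", "cow", "the", "moon", "the", "the");
        System.out.println("shuffle: " + route(words, 2, false));
        System.out.println("fields:  " + route(words, 2, true));
    }
}
```

With two tasks, round-robin routing splits the four occurrences of "the" between both tasks (3 and 1). Hash routing keeps all four in one task, which is what a correct per-word total requires.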

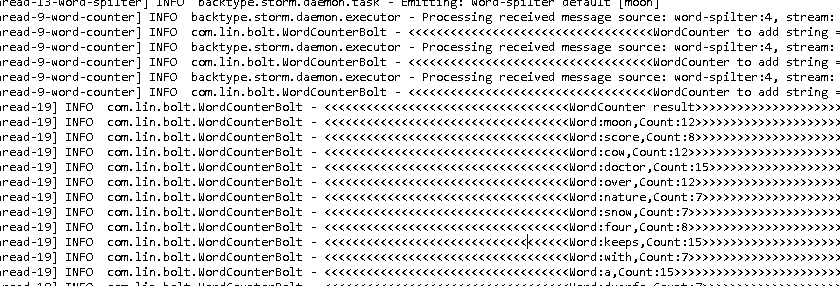

Output when running in local mode:

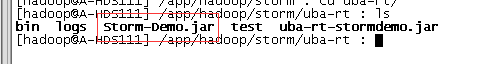

6. Package and submit to a Storm cluster

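Package the project with `mvn clean package`. Because `finalName` is `${project.artifactId}`, this produces Storm-Demo.jar, with the non-provided dependencies bundled by the shade plugin. Upload the jar to the Linux server.

Start the topology with:

```shell
jstorm jar /app/hadoop/storm/uba-rt/Storm-Demo.jar com.lin.topology.WordCountTopology cluster
```

The trailing `cluster` is passed to `main` as `args[0]` and selects cluster mode instead of local mode. On a stock Apache Storm installation, use `storm jar` instead of `jstorm jar`.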

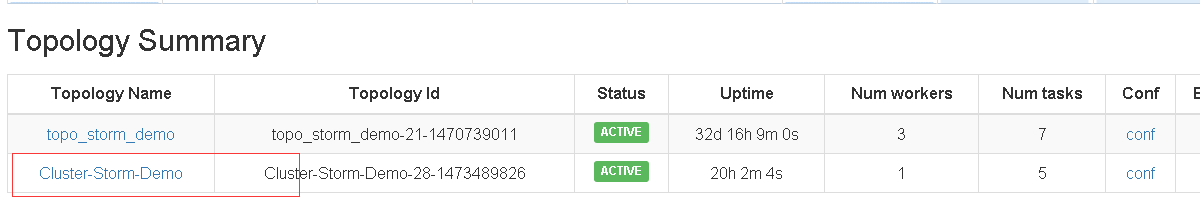

Open the Storm UI:

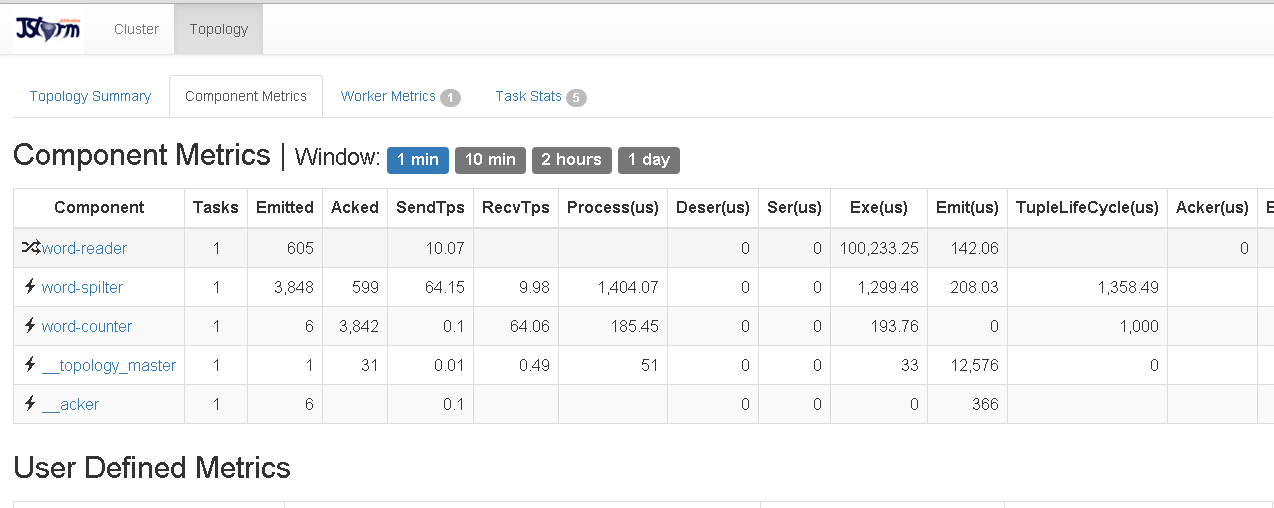

The logs can also be viewed from the UI.

The topology keeps running until it is killed. To stop it, run:

```shell
jstorm kill Cluster-Storm-Demo
```
