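This semester I studied Spark as part of a big data course, and the final project was to build a real-time processing pipeline with Flume + Kafka + Spark Streaming. I ran into plenty of odd problems along the way, but eventually got the experiment working. If you happen to be doing the same experiment, I hope this helps. If it does, a like, favorite, and share would be much appreciated. I'll keep learning and keep updating.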

Spark final project: Flume + Kafka + Spark Streaming real-time processing, with logs generated in real time through log4j

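Preface

Development environment: IntelliJ IDEA

Real-time task: for each day and each city, count how many times users view each page (keyed by page ID), and write the top 20 to a MySQL database.

Implementation approach

A Java program continuously generates log4j logs. Flume listens for and collects the data, then sinks it to a Kafka topic. Spark Streaming consumes the topic and computes the statistics.

If you want to implement your own functionality, you only need to make small changes to the log generator and the processing program.

Processing flow analysis

Static analysis:

Real-time analysis:

Implementation steps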

1. Create a Maven project with two Maven modules

2. Import the dependencies

- Add the Flume log4j appender dependency to the pom.xml of the LogProduct module

<dependency>

<groupId>org.apache.flume.flume-ng-clients</groupId>

<artifactId>flume-ng-log4jappender</artifactId>

<version>1.8.0</version>

</dependency>

- The Spark dependencies for data processing can go directly into the pom.xml of the parent Maven project

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-core_2.11</artifactId> <version>2.1.1</version> </dependency>

<dependency> <groupId>mysql</groupId> <artifactId>mysql-connector-java</artifactId> <version>5.1.27</version> </dependency>

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-sql_2.11</artifactId> <version>2.1.1</version> </dependency>

<!-- Hive support for Spark -->

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-hive_2.11</artifactId> <version>2.1.1</version> </dependency>

<!-- Spark Streaming real-time processing -->

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-streaming-kafka-0-10_2.11</artifactId> <version>2.1.1</version> </dependency>

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-streaming_2.11</artifactId> <version>2.1.1</version> </dependency>

<!-- Compile and package with Scala 2.11.8 -->

<dependency> <groupId>org.scala-lang</groupId> <artifactId>scala-library</artifactId> <version>2.11.8</version> </dependency>

<!-- Flume dependency -->

<dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-streaming-flume_2.11</artifactId> <version>2.1.1</version> </dependency>

3. Configure log4j.properties

This applies mainly to the LogProduct module.

log4j.rootLogger=INFO,stdout,flume

log4j.appender.stdout = org.apache.log4j.ConsoleAppender

log4j.appender.stdout.target = System.out

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss,SSS} [%t] [%c] [%p] - %m%n

log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender

# Change these to the hostname and port that your own Flume agent listens on

log4j.appender.flume.Hostname = ethan002

log4j.appender.flume.Port = 41414

log4j.appender.flume.UnsafeMode = true

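3. Configure the Flume agent

Create a directory named jobs under the Flume installation directory:

mkdir jobs

In the jobs directory, create a file named a3.conf. The name is up to you as long as it ends in **.conf**, but it must match the file passed with -f when Flume is started. The start command later in this post uses jobs/flume-kafka-sparkstreaming.conf, so either use that file name or adjust the command.

Put the following content in the file: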

a1.sources=r1

a1.sinks=k1

a1.channels=c1

#Describe/configure the source

a1.sources.r1.type=avro

a1.sources.r1.bind=ethan002

a1.sources.r1.port=41414

#define sink

a1.sinks.k1.type=org.apache.flume.sink.kafka.KafkaSink

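# A topic that exists in your Kafka cluster; create it if it does not

a1.sinks.k1.kafka.topic=test

# Your Kafka broker host and port

a1.sinks.k1.kafka.bootstrap.servers=ethan002:9092

a1.sinks.k1.requiredAcks = 1

a1.sinks.k1.batchSize = 100

# Maximum size of a Kafka producer request, in bytes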

a1.sinks.k1.kafka.producer.max.request.size=51200000

# Use a channel which buffers events in memory

a1.channels.c1.type=memory

a1.channels.c1.capacity=1000

a1.channels.c1.transactionCapacity=100

# Bind the source and sink to the channel

a1.sources.r1.channels=c1

a1.sinks.k1.channel=c1

4. Programs

4.1 Log generation

The code is as follows:

package com.ethan.finalWork;

import org.apache.log4j.Logger;

public class LogProduct {

private static Logger logger = Logger.getLogger(LogProduct.class.getName());

public static void main(String[] args) throws InterruptedException {

while(true) {

Thread.sleep(5000);

for (int i = 0; i < 1000; i++) {

StringBuilder sb = new StringBuilder();

String date = timeGen();

sb.append(date)

.append("_")

.append(userIdGen()) // user ID

.append("_")

.append(sessionIdGen()) // session ID

.append("_")

.append(date + " " + timeStampGen())

.append("_")

.append(pageId()) // page ID

.append("_")

.append(keyWorld()) // search keyword

.append("_")

.append(cIdGen()) // clicked category ID

.append("_")

.append(productIdGen()) // product ID

.append("_")

.append(orderIdsGen()) // ordered category IDs

.append("_")

.append(cIdGen()) // ordered product ID

.append("_")

.append(orderIdsGen()) // paid category IDs

.append("_")

.append(cIdGen()) // product IDs

.append("_")

.append(cityIdsGen()); // city (name)

System.out.println(sb.toString());

logger.info(sb);

}

}

}

public static String userIdGen() {

return (String)((int)(Math.random() * (1000000 - 1) + 1)+"");

}

public static String sessionIdGen(){

int n = (int)(Math.random() * (1000000 - 1) + 1);

String str = n+"-"+(int)(Math.random()*123*Math.random()*1000);

return str;

}

public static String pageId(){

return (String) ((int)(Math.random() * (1200 - 1000) + 1000)+"");

}

private static String timeGen() {

// Always returns the same date, so every record belongs to one day.

// To randomize it, build the string from random year/month/day values instead.

return "2021-12-12";

}

private static String timeStampGen(){

// hour in 0..23, minute and second in 0..59

int hour = (int)(Math.random() * 24);

int minute = (int)(Math.random() * 60);

int second = (int)(Math.random() * 60);

return (hour + "-" + minute + "-" + second);

}

public static String keyWorld(){

String[] kw ={"手机","数码","服装","篮球","文具","食品","学习资料","工具","餐饮用具"};

int value = (int)(Math.random() * 9);

String str = kw[value];

return str;

}

public static String cIdGen() {

return (String)((int)(Math.random() * (10000 - 1) + 1)+"");

}

public static String productIdGen(){

return (String)((int)(Math.random() * (100000 - 100) + 100)+"");

}

public static String orderIdsGen(){

int r = (int)(Math.random() * (3 - 1) + 2);

String ret = "";

for (int i =1;i<r;i++) {

if (i < r - 1) {

ret = ret + productIdGen() + ",";

}else if(i == r - 1){

ret += productIdGen();

}

}

return ret;

}

public static String cityIdsGen(){

String[] city= {

"北京市",

"天津市",

"石家庄市",

"唐山市",

"秦皇岛市",

"邯郸市",

"邢台市",

"保定市",

"张家口市",

"承德市",

"沧州市",

"廊坊市",

"衡水市",

"太原市",

"大同市",

"阳泉市",

"长治市",

"晋城市",

"朔州市",

"晋中市",

"运城市",

"忻州市",

"临汾市",

"吕梁市",

"呼和浩特市",

"包头市",

"乌海市",

"赤峰市",

"通辽市",

"鄂尔多斯市",

"呼伦贝尔市",

"巴彦淖尔市",

"乌兰察布市",

"兴安盟",

"锡林郭勒盟",

"阿拉善盟",

"沈阳市",

"大连市",

"鞍山市",

"抚顺市",

"本溪市",

"丹东市",

"锦州市",

"营口市",

"阜新市",

"辽阳市",

"盘锦市",

"铁岭市",

"朝阳市",

"葫芦岛市",

"长春市",

"吉林市",

"四平市",

"辽源市",

"通化市",

"白山市",

"松原市",

"白城市",

"延边朝鲜族自治州",

"哈尔滨市",

"齐齐哈尔市",

"鸡西市",

"鹤岗市",

"双鸭山市",

"大庆市",

"伊春市",

"佳木斯市",

"七台河市",

"牡丹江市",

"黑河市",

"绥化市",

"大兴安岭地区",

"上海市",

"南京市",

"无锡市",

"徐州市",

"常州市",

"苏州市",

"南通市",

"连云港市",

"淮安市",

"盐城市",

"扬州市",

"镇江市",

"泰州市",

"宿迁市",

"杭州市",

"宁波市",

"温州市",

"嘉兴市",

"湖州市",

"绍兴市",

"金华市",

"衢州市",

"舟山市",

"台州市",

"丽水市",

"合肥市",

"芜湖市",

"蚌埠市",

"淮南市",

"马鞍山市",

"淮北市",

"铜陵市",

"安庆市",

"黄山市",

"滁州市",

"阜阳市",

"宿州市",

"六安市",

"亳州市",

"池州市",

"宣城市",

"福州市",

"厦门市",

"莆田市",

"三明市",

"泉州市",

"漳州市",

"南平市",

"龙岩市",

"宁德市",

"南昌市",

"景德镇市",

"萍乡市",

"九江市",

"新余市",

"鹰潭市",

"赣州市",

"吉安市",

"宜春市",

"抚州市",

"上饶市",

"济南市",

"青岛市",

"淄博市",

"枣庄市",

"东营市",

"烟台市",

"潍坊市",

"济宁市",

"泰安市",

"威海市",

"日照市",

"莱芜市",

"临沂市",

"德州市",

"聊城市",

"滨州市",

"菏泽市",

"郑州市",

"开封市",

"洛阳市",

"平顶山市",

"安阳市",

"鹤壁市",

"新乡市",

"焦作市",

"濮阳市",

"许昌市",

"漯河市",

"三门峡市",

"南阳市",

"商丘市",

"信阳市",

"周口市",

"驻马店市",

"省直辖县",

"武汉市",

"黄石市",

"十堰市",

"宜昌市",

"襄阳市",

"鄂州市",

"荆门市",

"孝感市",

"荆州市",

"黄冈市",

"咸宁市",

"随州市",

"恩施土家族苗族自治州",

"省直辖县",

"长沙市",

"株洲市",

"湘潭市",

"衡阳市",

"邵阳市",

"岳阳市",

"常德市",

"张家界市",

"益阳市",

"郴州市",

"永州市",

"怀化市",

"娄底市",

"湘西土家族苗族自治州",

"广州市",

"韶关市",

"深圳市",

"珠海市",

"汕头市",

"佛山市",

"江门市",

"湛江市",

"茂名市",

"肇庆市",

"惠州市",

"梅州市",

"汕尾市",

"河源市",

"阳江市",

"清远市",

"东莞市",

"中山市",

"潮州市",

"揭阳市",

"云浮市",

"南宁市",

"柳州市",

"桂林市",

"梧州市",

"北海市",

"防城港市",

"钦州市",

"贵港市",

"玉林市",

"百色市",

"贺州市",

"河池市",

"来宾市",

"崇左市",

"海口市",

"三亚市",

"三沙市",

"儋州市",

"重庆市",

"成都市",

"自贡市",

"攀枝花市",

"泸州市",

"德阳市",

"绵阳市",

"广元市",

"遂宁市",

"内江市",

"乐山市",

"南充市",

"眉山市",

"宜宾市",

"广安市",

"达州市",

"雅安市",

"巴中市",

"资阳市",

"阿坝藏族羌族自治州",

"甘孜藏族自治州",

"凉山彝族自治州",

"贵阳市",

"六盘水市",

"遵义市",

"安顺市",

"毕节市",

"铜仁市",

"黔西南布依族苗族自治州",

"黔东南苗族侗族自治州",

"黔南布依族苗族自治州",

"昆明市",

"曲靖市",

"玉溪市",

"保山市",

"昭通市",

"丽江市",

"普洱市",

"临沧市",

"楚雄彝族自治州",

"红河哈尼族彝族自治州",

"文山壮族苗族自治州",

"西双版纳傣族自治州",

"大理白族自治州",

"德宏傣族景颇族自治州",

"怒江傈僳族自治州",

"迪庆藏族自治州",

"拉萨市",

"日喀则市",

"昌都市",

"林芝市",

"山南市",

"那曲市",

"阿里地区",

"西安市",

"铜川市",

"宝鸡市",

"咸阳市",

"渭南市",

"延安市",

"汉中市",

"榆林市",

"安康市",

"商洛市",

"兰州市",

"嘉峪关市",

"金昌市",

"白银市",

"天水市",

"武威市",

"张掖市",

"平凉市",

"酒泉市",

"庆阳市",

"定西市",

"陇南市",

"临夏回族自治州",

"甘南藏族自治州",

"西宁市",

"海东市",

"海北藏族自治州",

"黄南藏族自治州",

"海南藏族自治州",

"果洛藏族自治州",

"玉树藏族自治州",

"海西蒙古族藏族自治州",

"银川市",

"石嘴山市",

"吴忠市",

"固原市",

"中卫市",

"乌鲁木齐市",

"克拉玛依市",

"吐鲁番市",

"哈密市",

"昌吉回族自治州",

"博尔塔拉蒙古自治州",

"巴音郭楞蒙古自治州",

"阿克苏地区",

"克孜勒苏柯尔克孜自治州",

"喀什地区",

"和田地区",

"伊犁哈萨克自治州",

"塔城地区",

"阿勒泰地区",

"自治区直辖县级行政区划",

"台北市",

"高雄市",

"台南市",

"台中市",

"金门县",

"南投县",

"基隆市",

"新竹市",

"嘉义市",

"新北市",

"宜兰县",

"新竹县",

"桃园县",

"苗栗县",

"彰化县",

"嘉义县",

"云林县",

"屏东县",

"台东县",

"花莲县",

"澎湖县",

"连江县",

"香港岛",

"九龙",

"新界",

"澳门半岛",

"离岛"

};

int num = (int)(Math.random() * city.length); // any index from 0 to city.length - 1, so the first entry can be picked too

return city[num];

}

}
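To make the record layout concrete, here is a minimal sketch of how one generated line maps to the key that the processing program counts. The class `RecordKey` and its method are hypothetical helpers, not part of the original project; the field positions (0 for the date, 4 for the page ID, 12 for the city) come from the generator above. The sample line uses ASCII placeholder values, whereas real records carry Chinese keywords and city names.

```java
import java.util.Optional;

// Hypothetical helper for illustration only; not part of the original project.
class RecordKey {
    // Field positions in the underscore-separated record written by LogProduct.
    static final int DATE = 0;
    static final int PAGE_ID = 4;
    static final int CITY = 12;
    static final int FIELD_COUNT = 13;

    // Returns "date_city_pageId", or empty if the line does not have exactly 13 fields.
    static Optional<String> toKey(String line) {
        String[] fields = line.split("_", -1);
        if (fields.length != FIELD_COUNT) {
            return Optional.empty();
        }
        return Optional.of(
                fields[DATE].trim() + "_" + fields[CITY].trim() + "_" + fields[PAGE_ID].trim());
    }

    public static void main(String[] args) {
        // Placeholder values; a real record has a Chinese keyword and city name.
        String line = "2021-12-12_1001_77-5_2021-12-12 8-30-15_1042_phone_12_345_678,910_13_1112_14_Beijing";
        System.out.println(toKey(line).orElse("malformed"));
        System.out.println(toKey("not a record").orElse("malformed"));
    }
}
```

Checking the field count before indexing is a defensive choice: a line with fewer than 13 fields would otherwise fail when field 12 is read.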

4.2 Data analysis and processing

The code is as follows:

package com.ethan.online

import java.sql.{Connection, DriverManager, PreparedStatement}

import org.apache.kafka.common.serialization.StringDeserializer

import org.apache.spark.SparkConf

import org.apache.spark.streaming.dstream.DStream

import org.apache.spark.streaming.kafka010.ConsumerStrategies.Subscribe

import org.apache.spark.streaming.kafka010.KafkaUtils

import org.apache.spark.streaming.kafka010.LocationStrategies.PreferConsistent

import org.apache.spark.streaming.{Seconds, StreamingContext}

object PageTop {

def main(args: Array[String]): Unit = {

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("PageTop")

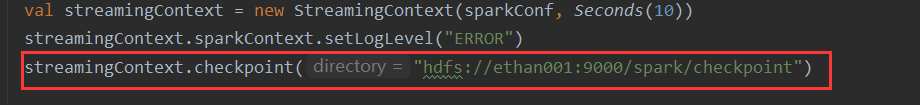

val streamingContext = new StreamingContext(sparkConf, Seconds(10))

streamingContext.sparkContext.setLogLevel("ERROR")

streamingContext.checkpoint("hdfs://ethan001:9000/spark/checkpoint")

val kafkaParams = Map[String,Object](

"bootstrap.servers" -> "ethan002:9092",

"key.deserializer" -> classOf[StringDeserializer],

"value.deserializer" -> classOf[StringDeserializer],

"group.id" -> "use_a_separate_group_id_for_each_stream",

"auto.offset.reset" -> "latest",

"enable.auto.commit" -> (false: java.lang.Boolean)

)

val topics = Array("test", "knf")

val stream = KafkaUtils.createDirectStream[String, String](

streamingContext,

PreferConsistent,

Subscribe[String, String](topics, kafkaParams) // subscribe to the topics

)

val mapDStream: DStream[(String, String)] = stream.map(record => (record.key, record.value)) // keep only key and value

val resultDS:DStream[(String,Int)] = mapDStream.map(record => {

val fields = record._2.split("_")

// date

val str = fields(0).trim

// city

val city: String = fields(12).trim

// page ID

val page: String = fields(4).trim

// wrap as a tuple: (date_city_pageId, 1)

(str + "_" + city + "_" + page, 1)

})

// Aggregate page views per day and per city: (date_city_pageId, sum)

val updateDS:DStream[(String,Int)]=resultDS.updateStateByKey(

(seq:Seq[Int],buffer:Option[Int])=>{

Option(seq.sum+buffer.getOrElse(0))

})

updateDS.foreachRDD(rdd=>{

// take(20) brings the top 20 rows back to the driver, so the JDBC code below runs on the driver

val temp = rdd.sortBy(_._2,false).take(20)

val url = "jdbc:mysql://localhost:3306/finalwork?useUnicode=true&characterEncoding=UTF-8"

val user = "root"

val password = "123456"

Class.forName("com.mysql.jdbc.Driver")

// One connection per batch: clear the previous top 20, then insert the new rows

val conn: Connection = DriverManager.getConnection(url,user,password)

try {

val truncateStmt: PreparedStatement = conn.prepareStatement("truncate table online")

truncateStmt.executeUpdate()

truncateStmt.close()

val insertStmt: PreparedStatement = conn.prepareStatement("insert into online(date,cityName,pageId,sum) values(?,?,?,?)")

temp.foreach(data=>{

// the key has the form date_city_pageId

val fields = data._1.split("_")

insertStmt.setString(1,fields(0))

insertStmt.setString(2,fields(1))

insertStmt.setString(3,fields(2))

insertStmt.setInt(4,data._2)

insertStmt.executeUpdate()

})

insertStmt.close()

} finally {

// close the connection even if a statement fails

conn.close()

}

})

updateDS.foreachRDD(rdd=>{

rdd.sortBy(_._2,false).take(20).foreach(println)

})

streamingContext.start()

streamingContext.awaitTermination()

}

}
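The stateful part of the job can be hard to picture, so here is a plain Java sketch of the same idea with no Spark involved: keep a running total per key across batches, then take the largest totals. The class `RunningTopN` and the sample keys are assumptions made up for illustration; in the real job, `updateStateByKey` holds the totals and `sortBy(_._2, false).take(20)` picks the top rows.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, Spark-free illustration of the running count and top-N steps.
class RunningTopN {
    // Running total per key, carried over from one batch to the next.
    private final Map<String, Integer> totals = new HashMap<>();

    // Mirrors the update function: new total = occurrences in this batch + previous total (0 if absent).
    void addBatch(List<String> keys) {
        for (String key : keys) {
            totals.merge(key, 1, Integer::sum);
        }
    }

    // Mirrors sortBy(_._2, false).take(n): the entries with the largest totals come first.
    List<Map.Entry<String, Integer>> top(int n) {
        List<Map.Entry<String, Integer>> entries = new ArrayList<>(totals.entrySet());
        entries.sort(Map.Entry.<String, Integer>comparingByValue().reversed());
        return new ArrayList<>(entries.subList(0, Math.min(n, entries.size())));
    }

    public static void main(String[] args) {
        RunningTopN state = new RunningTopN();
        // Two batches of made-up keys in the date_city_pageId form.
        state.addBatch(Arrays.asList("2021-12-12_A_1001", "2021-12-12_A_1001", "2021-12-12_B_1002"));
        state.addBatch(Arrays.asList("2021-12-12_A_1001", "2021-12-12_C_1003", "2021-12-12_C_1003"));
        for (Map.Entry<String, Integer> entry : state.top(2)) {
            System.out.println(entry.getKey() + " -> " + entry.getValue());
        }
    }
}
```

Because the totals must survive from batch to batch, Spark needs somewhere to persist them, which is why the job sets a checkpoint directory before calling `updateStateByKey`.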

5. Run the programs

1. Start Hadoop

The streaming job needs a checkpoint directory, which is usually placed on HDFS.

2. Start ZooKeeper

bin/zkServer.sh start

Note: on a cluster, ZooKeeper must be started on every host.

3. Start Kafka

bin/kafka-server-start.sh -daemon config/server.properties

Note: on a cluster, Kafka must be started on every host.

4. Start Flume

bin/flume-ng agent -n a1 -c conf/ -f jobs/flume-kafka-sparkstreaming.conf -Dflume.root.logger=INFO,console

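5. Start the log generator

6. Start the Spark Streaming program

Results

Log generation

Data processing

Summary

Things to check:

1. Whether Flume receives the logs

2. Whether Flume can sink the data to the Kafka topic; starting a consumer and checking that data arrives verifies this

3. Whether Spark Streaming can connect to Kafka and consume the data in the topic

4. Whether the data is stored in MySQL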
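Before digging into any of the checks above, it can help to confirm that each service is even accepting connections. The sketch below is only a rough first test, assuming the host names and ports used in this post (ethan002:41414 for the Flume Avro source, ethan002:9092 for Kafka, localhost:3306 for MySQL); the class `PortCheck` is a hypothetical helper. An open port does not prove that data is flowing, but a closed one explains a silent pipeline quickly, especially since the log4j appender is configured with UnsafeMode = true and will not raise an error when it fails to send events.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper for illustration only; not part of the original project.
class PortCheck {
    // Returns true if a TCP connection to host:port succeeds within the timeout.
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts, and host names that do not resolve.
            return false;
        }
    }

    public static void main(String[] args) {
        // Hosts and ports as used in this post; change them to match your own setup.
        System.out.println("Flume Avro source: " + isReachable("ethan002", 41414, 2000));
        System.out.println("Kafka broker:      " + isReachable("ethan002", 9092, 2000));
        System.out.println("MySQL:             " + isReachable("localhost", 3306, 2000));
    }
}
```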
