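Preface

No system stays secure forever, so responding quickly and tracing an attack back to its source once it happens is an important topic. To work out what the attacker did, how they got in, and who else they hit, logs are indispensable. We therefore need to collect as many logs as possible to analyze the attacker's actions, and ideally to block the attacker as soon as they gain a foothold.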

Installing Elasticsearch + Kibana

Docker installation

Following the official documentation (https://www.elastic.co/guide/en/elasticsearch/reference/current/docker.html), I installed Elasticsearch (es) and Kibana with Docker:

# Install es

docker network create elastic

docker pull docker.elastic.co/elasticsearch/elasticsearch:8.12.1

docker run --name es01 --net elastic -p 9200:9200 -it -m 1GB docker.elastic.co/elasticsearch/elasticsearch:8.12.1

# Record the es password and the enrollment token needed to register Kibana

# Check that es is running correctly

export ELASTIC_PASSWORD="es_your_password"

docker cp es01:/usr/share/elasticsearch/config/certs/http_ca.crt .

curl --cacert http_ca.crt -u elastic:$ELASTIC_PASSWORD https://localhost:9200

# Install Kibana

docker pull docker.elastic.co/kibana/kibana:8.12.1

docker run --name kib01 --net elastic -p 5601:5601 docker.elastic.co/kibana/kibana:8.12.1

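Kibana prints a setup URL; open it and paste in the token recorded above.

I am not sure whether the cause was the machine's performance or the Docker setup, but the es container crashed frequently.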
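Two things are worth ruling out when the container keeps dying. This is a guess, not a diagnosis of the setup above: Elastic's Docker guide asks for the kernel setting vm.max_map_count to be at least 262144, and the -m 1GB limit leaves little headroom, so the container may be getting OOM-killed. The bash sketch below checks both. The helper names map_count_ok and diagnose_es01 are hypothetical; es01 is the container name used above.

```bash
#!/usr/bin/env bash
# Minimum vm.max_map_count that Elastic's Docker guide asks for.
REQUIRED_MAP_COUNT=262144

# map_count_ok VALUE: succeed if VALUE meets the documented minimum.
# (Hypothetical helper name, for illustration only.)
map_count_ok() {
  [ "$1" -ge "$REQUIRED_MAP_COUNT" ]
}

# diagnose_es01: print the two facts most likely to explain a dying container.
diagnose_es01() {
  local current
  current=$(cat /proc/sys/vm/max_map_count)
  if map_count_ok "$current"; then
    echo "vm.max_map_count=$current (ok)"
  else
    echo "vm.max_map_count=$current (too low; try: sudo sysctl -w vm.max_map_count=$REQUIRED_MAP_COUNT)"
  fi
  # OOMKilled is true when the container was killed for exceeding its memory limit.
  docker inspect -f 'OOMKilled={{.State.OOMKilled}} ExitCode={{.State.ExitCode}}' es01
}
```

Run diagnose_es01 on the Docker host after a crash. If OOMKilled is true, raising the -m value is the first thing to try.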

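RPM installation

According to the official documentation (https://www.elastic.co/guide/en/elasticsearch/reference/current/rpm.html and https://www.elastic.co/guide/en/kibana/current/rpm.html), both packages can be installed with yum install. Access to the package repository is blocked on my company network, so I downloaded the RPMs as described in those documents and installed them manually:

# Install es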

wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-8.12.1-x86_64.rpm

wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-8.12.1-x86_64.rpm.sha512

shasum -a 512 -c elasticsearch-8.12.1-x86_64.rpm.sha512

sudo rpm --install elasticsearch-8.12.1-x86_64.rpm

# Install Kibana

wget https://artifacts.elastic.co/downloads/kibana/kibana-8.12.1-x86_64.rpm

wget https://artifacts.elastic.co/downloads/kibana/kibana-8.12.1-x86_64.rpm.sha512

shasum -a 512 -c kibana-8.12.1-x86_64.rpm.sha512

sudo rpm --install kibana-8.12.1-x86_64.rpm

As with Docker, installing the es RPM prints the password of the elastic superuser, which is also used to log in to Kibana:

The generated password for the elastic built-in superuser is : Fq*S7jxCjFfPu6nN8NG8

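The es service is not started automatically, but the installer prints the commands to enable and start it:

sudo systemctl daemon-reload

sudo systemctl enable elasticsearch.service

sudo systemctl start elasticsearch.service

Check that es started correctly: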

export ELASTIC_PASSWORD="Fq*S7jxCjFfPu6nN8NG8"

curl --cacert /etc/elasticsearch/certs/http_ca.crt -u elastic:$ELASTIC_PASSWORD https://localhost:9200
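Elasticsearch can take a while to answer after the service comes up, so a single curl may fail with "connection refused" even though nothing is wrong. A minimal retry wrapper, assuming bash, is sketched below. The helper name retry is hypothetical, and the attempt count and delay in the example are arbitrary.

```bash
#!/usr/bin/env bash
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS runs have failed.
retry() {
  local attempts=$1 delay=$2 i
  shift 2
  for ((i = 1; i <= attempts; i++)); do
    if "$@"; then
      return 0
    fi
    # Do not sleep after the final failed attempt.
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}

# Example: poll for up to about a minute before giving up.
# retry 30 2 curl --silent --fail --cacert /etc/elasticsearch/certs/http_ca.crt \
#   -u "elastic:$ELASTIC_PASSWORD" https://localhost:9200
```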

Remember to edit the configuration file so that es listens on 0.0.0.0:

# /etc/elasticsearch/elasticsearch.yml

# Allow HTTP API connections from anywhere

# Connections are encrypted and require user authentication

http.host: 0.0.0.0

# Allow other nodes to join the cluster from anywhere

# Connections are encrypted and mutually authenticated

transport.host: 0.0.0.0

Likewise for Kibana, edit its configuration file so that it listens on 0.0.0.0:

# /etc/kibana/kibana.yml

server.host: "0.0.0.0"
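After editing both files and restarting the services, it helps to confirm that the setting is actually active and not still commented out. The sketch below is rough and assumes flat, top-level "key: value" lines like the snippets above. The helper yaml_setting is hypothetical and is not a real YAML parser.

```bash
#!/usr/bin/env bash
# yaml_setting FILE KEY
# Prints the value of an uncommented top-level "KEY: value" line, with
# surrounding double quotes removed. Fails if the key is absent or commented out.
yaml_setting() {
  local file=$1 key=$2
  awk -v key="$key" '
    index($0, key ":") == 1 {   # line starts with KEY: so a commented-out line is skipped
      value = substr($0, length(key) + 2)
      gsub(/^[ \t]+|[ \t\r]+$/, "", value)
      gsub(/^"|"$/, "", value)
      print value
      found = 1
      exit
    }
    END { exit found ? 0 : 1 }
  ' "$file"
}

# Example (reading these files usually requires root):
# sudo systemctl restart elasticsearch.service kibana.service
# yaml_setting /etc/elasticsearch/elasticsearch.yml http.host
# yaml_setting /etc/kibana/kibana.yml server.host
```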

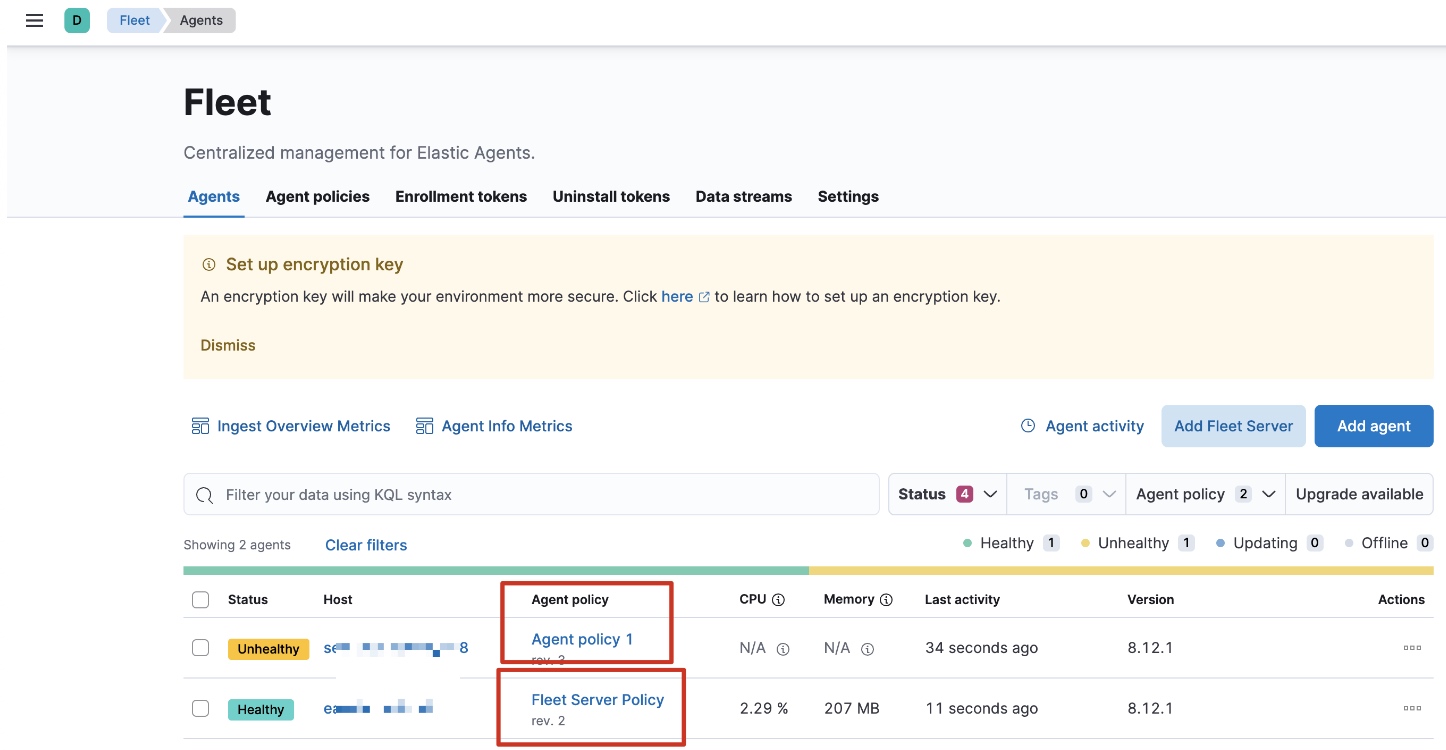

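Installing Fleet and Elastic Agent

In Kibana, go to Management->Fleet->Add Fleet Server and install Fleet Server first. Use the https scheme in the URL; the port can stay at the default, 8220.

Install Elastic Agent once Fleet Server is in place. Fleet Server is itself an agent, so Fleet Server and Elastic Agent cannot both be installed on the same machine.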

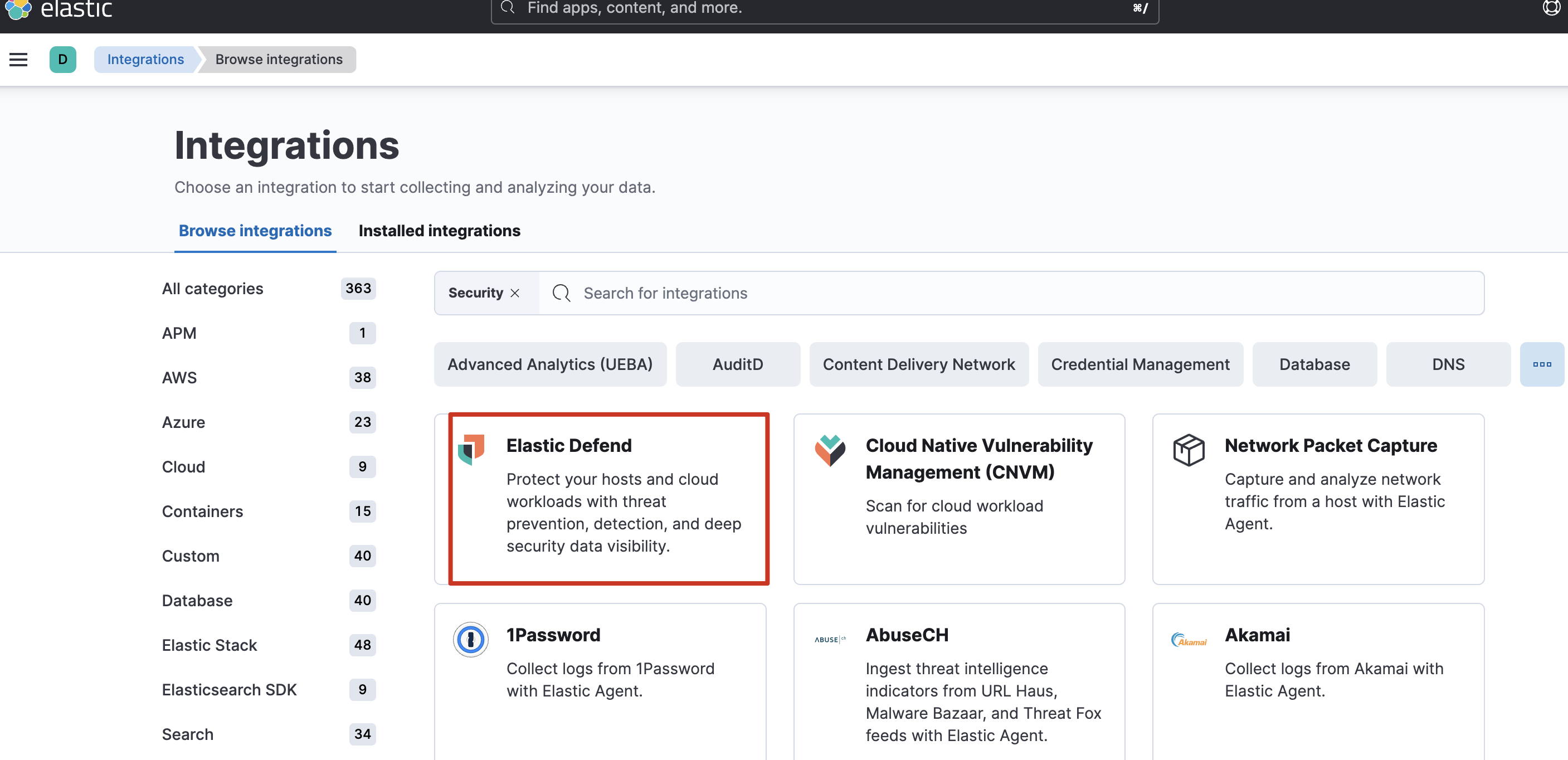

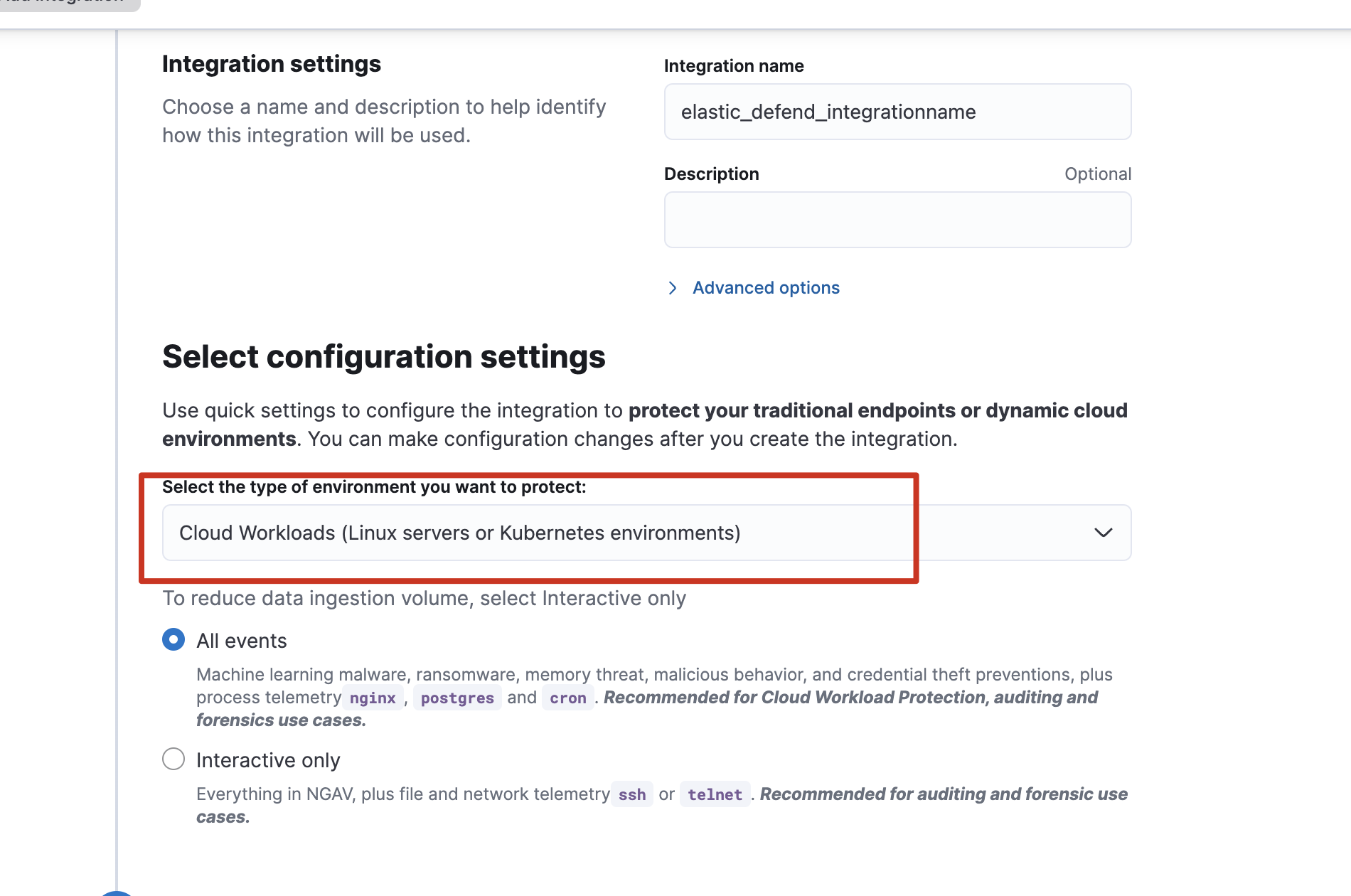

To give Elastic Agent security capabilities, you can add various integrations. For example, go to Security->Manage->Get started->Add security integrations to install the security integrations:

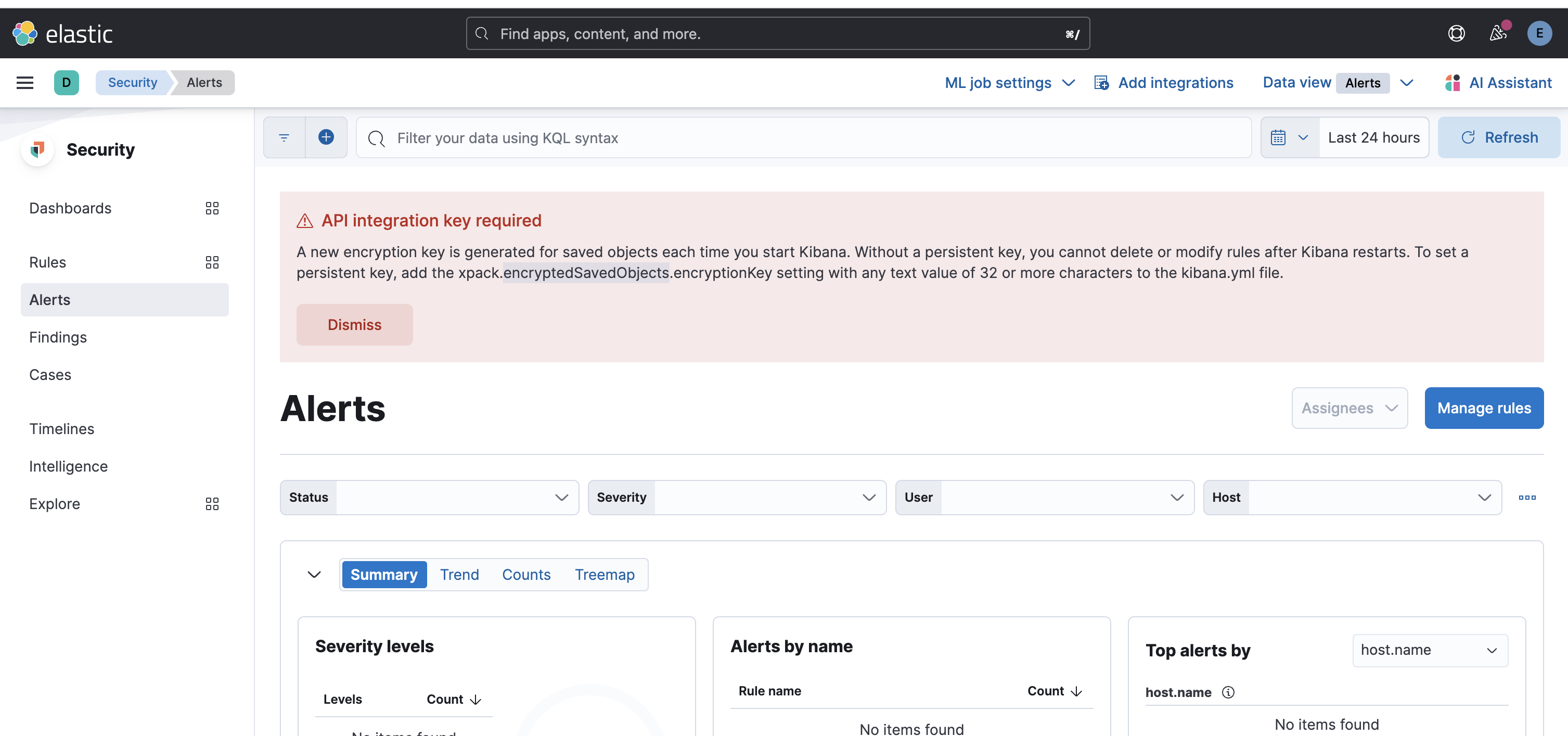

Testing alerts

Note: if you see the error below, it must be fixed first, otherwise the rules cannot be viewed:


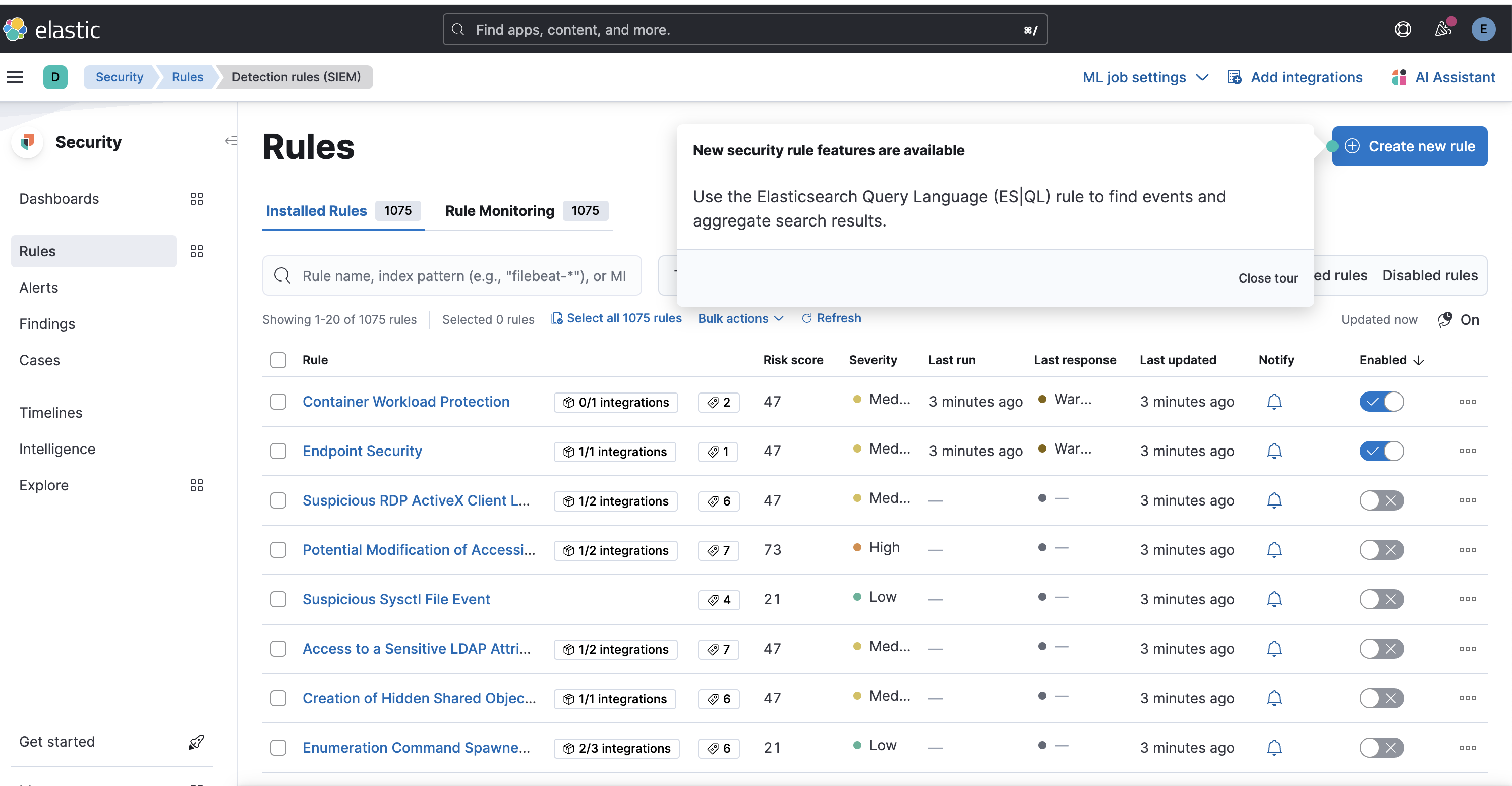

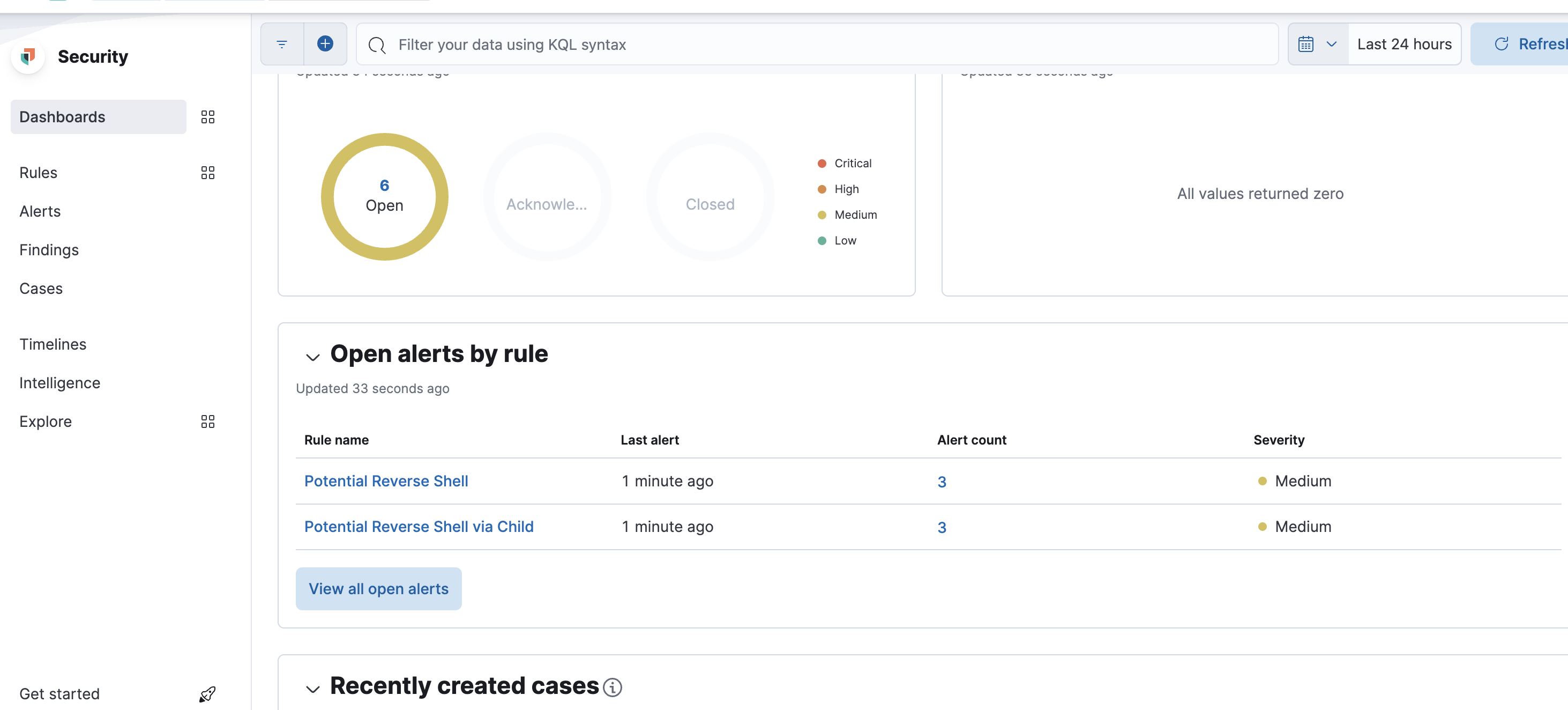

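Some rules are not enabled by default, so remember to turn them on. For example, after I enabled the reverse shell rule:

Security->Dashboards->Detection & Response shows an overview:

Collecting Windows Event Log data

Installation

Windows event logs are not plain text, so a tool is needed to collect them. Here I use Winlogbeat (https://www.elastic.co/cn/beats/winlogbeat).

The official documentation (<https://www.elastic.co/guide/en/beats/winlogbeat/current/winlogbeat-installation-configuration.html>) covers installation in detail. The core of it is configuring which logs to collect and where to send them. My choices were:

- Log types: I left the defaults unchanged; they already include Security, Application, and even Sysmon

- Output: I send events directly to Elasticsearch

Once winlogbeat.yml is configured, you can check that the file is valid: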

.\winlogbeat.exe test config -c .\winlogbeat.yml -e

My configuration file is shown below:

###################### Winlogbeat Configuration Example ########################

# This file is an example configuration file highlighting only the most common

# options. The winlogbeat.reference.yml file from the same directory contains

# all the supported options with more comments. You can use it as a reference.

#

# You can find the full configuration reference here:

# https://www.elastic.co/guide/en/beats/winlogbeat/index.html

# ======================== Winlogbeat specific options =========================

# event_logs specifies a list of event logs to monitor as well as any

# accompanying options. The YAML data type of event_logs is a list of

# dictionaries.

#

# The supported keys are name, id, xml_query, tags, fields, fields_under_root,

# forwarded, ignore_older, level, event_id, provider, and include_xml.

# The xml_query key requires an id and must not be used with the name,

# ignore_older, level, event_id, or provider keys. Please visit the

# documentation for the complete details of each option.

# https://go.es.io/WinlogbeatConfig

winlogbeat.event_logs:
  - name: Application
    ignore_older: 72h

  - name: System

  - name: Security

  - name: Microsoft-Windows-Sysmon/Operational

  - name: Windows PowerShell
    event_id: 400, 403, 600, 800

  - name: Microsoft-Windows-PowerShell/Operational
    event_id: 4103, 4104, 4105, 4106

  - name: ForwardedEvents
    tags: [forwarded]

# ====================== Elasticsearch template settings =======================

setup.template.settings:
  index.number_of_shards: 1
  #index.codec: best_compression
  #_source.enabled: false

# ================================== General ===================================

# The name of the shipper that publishes the network data. It can be used to group

# all the transactions sent by a single shipper in the web interface.

#name:

# The tags of the shipper are included in their field with each

# transaction published.

#tags: ["service-X", "web-tier"]

# Optional fields that you can specify to add additional information to the

# output.

#fields:

# env: staging

# ================================= Dashboards =================================

# These settings control loading the sample dashboards to the Kibana index. Loading

# the dashboards is disabled by default and can be enabled either by setting the

# options here or by using the `setup` command.

#setup.dashboards.enabled: false

# The URL from where to download the dashboard archive. By default, this URL

# has a value that is computed based on the Beat name and version. For released

# versions, this URL points to the dashboard archive on the artifacts.elastic.co

# website.

#setup.dashboards.url:

# =================================== Kibana ===================================

# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.

# This requires a Kibana endpoint configuration.

setup.kibana:

# Kibana Host

# Scheme and port can be left out and will be set to the default (http and 5601)

# In case you specify and additional path, the scheme is required: http://localhost:5601/path

# IPv6 addresses should always be defined as: https://[2001:db8::1]:5601

#host: "localhost:5601"

# Kibana Space ID

# ID of the Kibana Space into which the dashboards should be loaded. By default,

# the Default Space will be used.

#space.id:

# =============================== Elastic Cloud ================================

# These settings simplify using Winlogbeat with the Elastic Cloud (https://cloud.elastic.co/).

# The cloud.id setting overwrites the `output.elasticsearch.hosts` and

# `setup.kibana.host` options.

# You can find the `cloud.id` in the Elastic Cloud web UI.

#cloud.id:

# The cloud.auth setting overwrites the `output.elasticsearch.username` and

# `output.elasticsearch.password` settings. The format is `<user>:<pass>`.

#cloud.auth:

# ================================== Outputs ===================================

# Configure what output to use when sending the data collected by the beat.

# ---------------------------- Elasticsearch Output ----------------------------

output.elasticsearch:
  # Array of hosts to connect to.
  hosts: ["your_ip:9200"]

  # Protocol - either `http` (default) or `https`.
  #protocol: "https"

  # Authentication credentials - either API key or username/password.
  #api_key: "id:api_key"
  username: "elastic"
  password: "passwords"

  # Pipeline to route events to security, sysmon, or powershell pipelines.
  pipeline: "winlogbeat-%{[agent.version]}-routing"

# ------------------------------ Logstash Output -------------------------------

#output.logstash:

# The Logstash hosts

#hosts: ["localhost:5044"]

# Optional SSL. By default is off.

# List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

# Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/cert.pem"

# Client Certificate Key

#ssl.key: "/etc/pki/client/cert.key"

# ================================= Processors =================================

processors:
  - add_host_metadata:
      when.not.contains.tags: forwarded
  - add_cloud_metadata: ~

# ================================== Logging ===================================

# Sets log level. The default log level is info.

# Available log levels are: error, warning, info, debug

#logging.level: debug

# At debug level, you can selectively enable logging only for some components.

# To enable all selectors, use ["*"]. Examples of other selectors are "beat",

# "publisher", "service".

#logging.selectors: ["*"]

# ============================= X-Pack Monitoring ==============================

# Winlogbeat can export internal metrics to a central Elasticsearch monitoring

# cluster. This requires xpack monitoring to be enabled in Elasticsearch. The

# reporting is disabled by default.

# Set to true to enable the monitoring reporter.

#monitoring.enabled: false

# Sets the UUID of the Elasticsearch cluster under which monitoring data for this

# Winlogbeat instance will appear in the Stack Monitoring UI. If output.elasticsearch

# is enabled, the UUID is derived from the Elasticsearch cluster referenced by output.elasticsearch.

#monitoring.cluster_uuid:

# Uncomment to send the metrics to Elasticsearch. Most settings from the

# Elasticsearch outputs are accepted here as well.

# Note that the settings should point to your Elasticsearch *monitoring* cluster.

# Any setting that is not set is automatically inherited from the Elasticsearch

# output configuration, so if you have the Elasticsearch output configured such

# that it is pointing to your Elasticsearch monitoring cluster, you can simply

# uncomment the following line.

#monitoring.elasticsearch:

# ============================== Instrumentation ===============================

# Instrumentation support for the winlogbeat.

#instrumentation:

# Set to true to enable instrumentation of winlogbeat.

#enabled: false

# Environment in which winlogbeat is running on (eg: staging, production, etc.)

#environment: ""

# APM Server hosts to report instrumentation results to.

#hosts:

# - http://localhost:8200

# API Key for the APM Server(s).

# If api_key is set then secret_token will be ignored.

#api_key:

# Secret token for the APM Server(s).

#secret_token:

# ================================= Migration ==================================

# This allows to enable 6.7 migration aliases

#migration.6_to_7.enabled: true

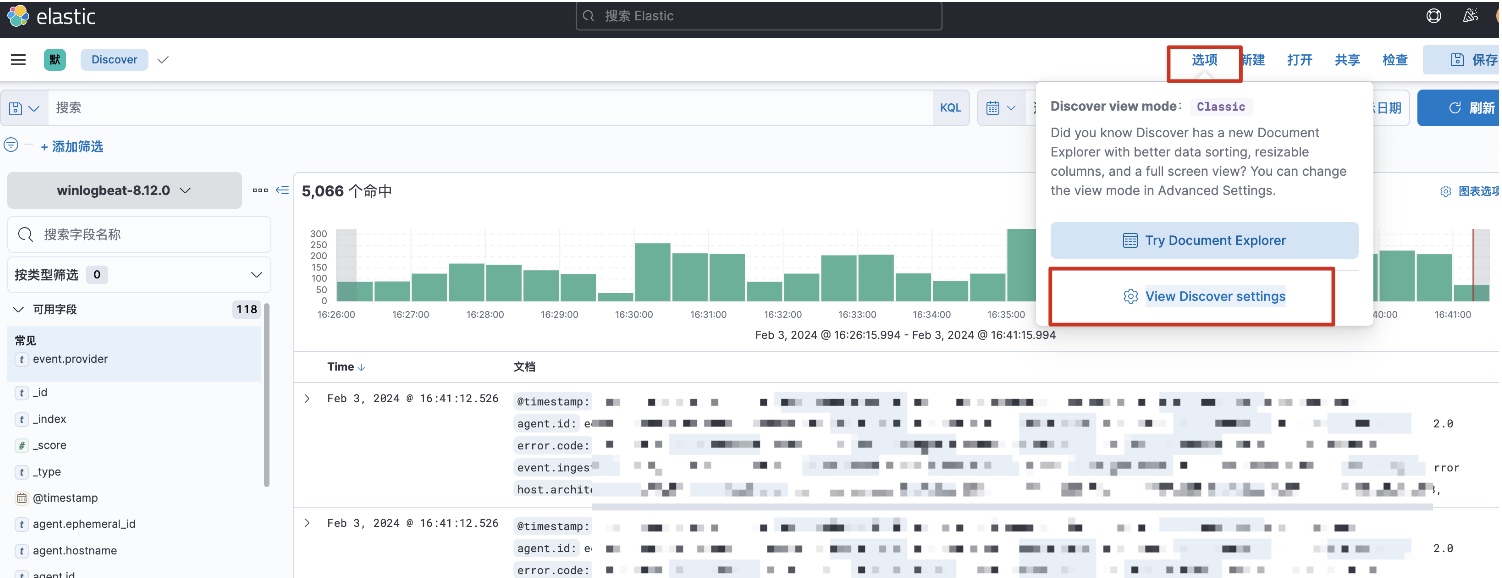

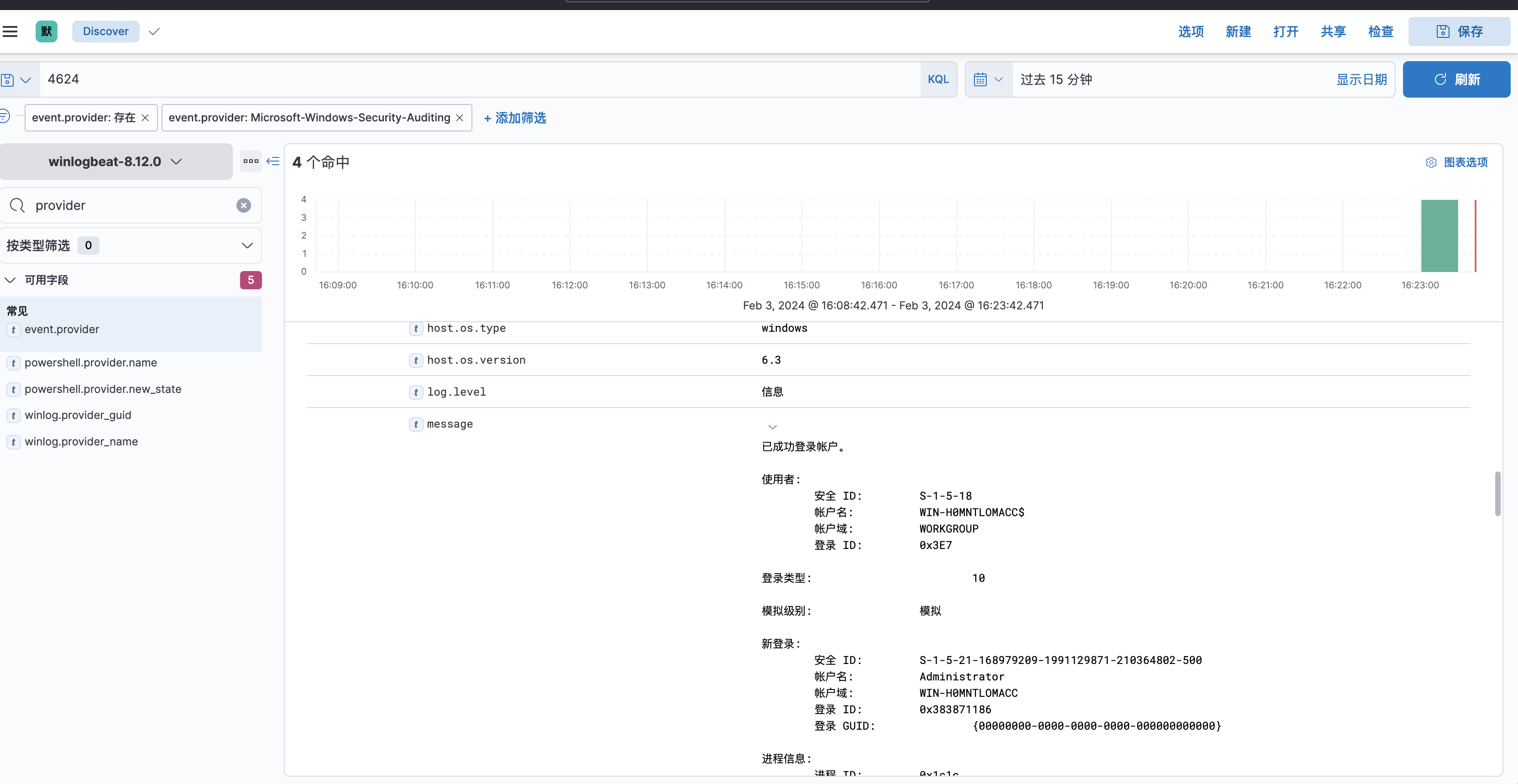

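Searching the data

At this point you may not find any data in Kibana, because Winlogbeat writes to its own index, named winlogbeat-<version> by default. In my test the index never showed up in Kibana, and I suspected the data had not been shipped at all. To troubleshoot, I first listed the indices on es:

http://your_ip:9200/_cat/indices?v

The listing did contain a winlogbeat index: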

yellow open .ds-winlogbeat-8.12.0-2024.02.03-000001 UpWhWCpdR-2WgMVe_kiH9A 1 1 192705 0 131.1mb 131.1mb
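To pick the Winlogbeat backing indices out of a long _cat/indices listing, the output can be filtered by index name. The sketch below assumes the default column order shown above (health, status, index, uuid, pri, rep, docs.count, and so on). The helper name winlogbeat_indices is hypothetical.

```bash
#!/usr/bin/env bash
# winlogbeat_indices: read `_cat/indices?v` output on stdin and print
# "<index> <docs.count>" for every index whose name contains "winlogbeat".
winlogbeat_indices() {
  awk '$3 ~ /winlogbeat/ { print $3, $7 }'
}

# Example, using the same URL as above (add credentials if your cluster requires them):
# curl -s "http://your_ip:9200/_cat/indices?v" | winlogbeat_indices
```

A non-zero docs.count (192705 in the line above) shows that events are reaching Elasticsearch, which points to Kibana's index pattern as the missing piece rather than the data transfer.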

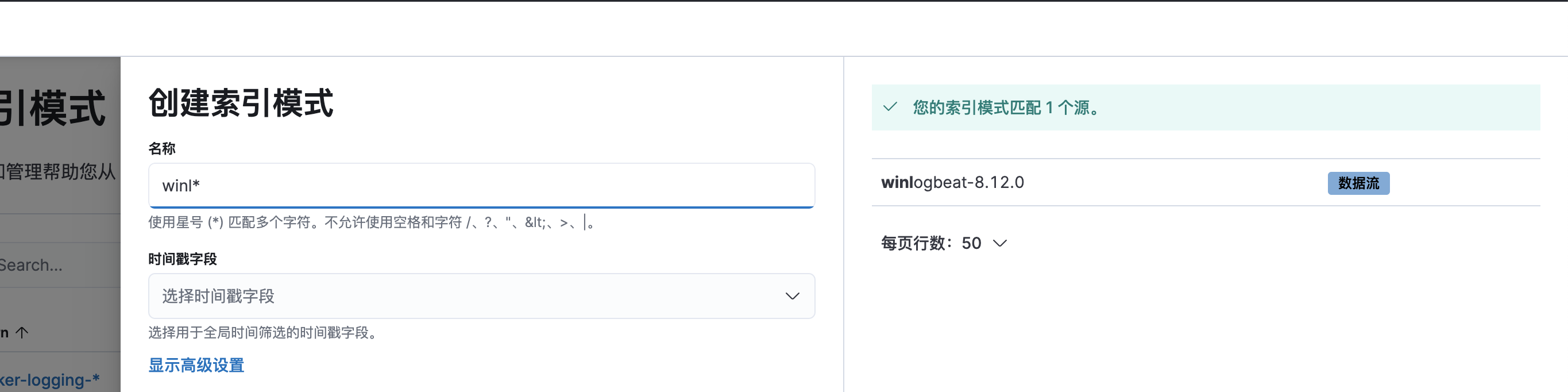

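After that, creating a new index pattern in Kibana was enough. In Kibana v7.17.12 the steps are:

Discover->Options->View Discover settings

Find "Index patterns" and create a new one:

The Windows event logs can now be searched: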

