软件工程第四次实验:CNN实战

- 猫狗大战挑战赛

1 环境准备

import numpy as np

import matplotlib.pyplot as plt

import os

import torch

import torch.nn as nn

import torchvision

from torchvision import models,transforms,datasets

import time

import json

# 判断是否存在GPU设备

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print('Using gpu: %s ' % torch.cuda.is_available())

1.1 下载图片

- 因为这个代码需要在colab上跑,速度会相对较慢。因此重新整理了数据,制作了新的数据集,训练集包含1800张图(猫的图片900张,狗的图片900张),测试集包含2000张图。

1.2 数据处理

- 在使用CNN处理图像时,需要进行预处理。图片将被整理成 224x224x3的大小,同时还将进行归一化处理。

normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

vgg_format = transforms.Compose([

transforms.CenterCrop(224),

transforms.ToTensor(),

normalize,

])

data_dir = './dogscats'

dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)

for x in ['train', 'valid']}

dset_sizes = {x: len(dsets[x]) for x in ['train', 'valid']}

dset_classes = dsets['train'].classes

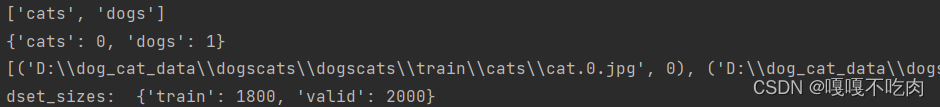

- 查看训练集的属性

print(dsets['train'].classes)

print(dsets['train'].class_to_idx)

print(dsets['train'].imgs[:5])

print('dset_sizes: ', dset_sizes)

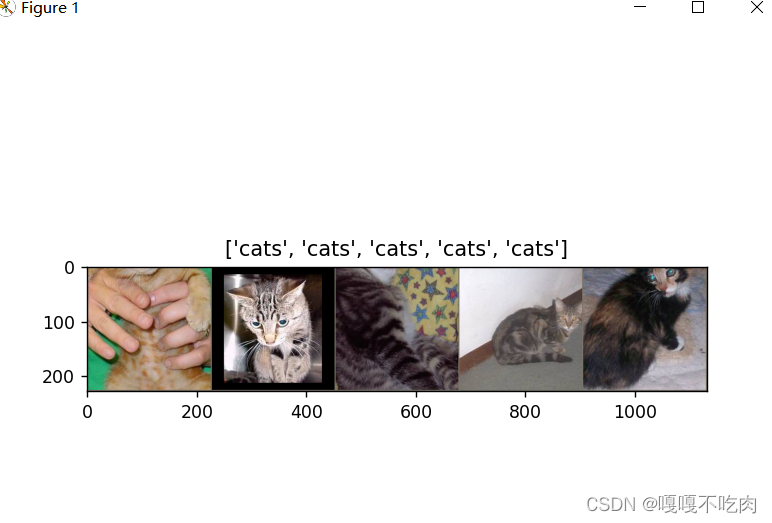

- 可视化地查看加载后的图片,可以看到第一个batch的5张图片

loader_train = torch.utils.data.DataLoader(dsets['train'], batch_size=64, shuffle=True, num_workers=6)

loader_valid = torch.utils.data.DataLoader(dsets['valid'], batch_size=5, shuffle=False, num_workers=6)

out = torchvision.utils.make_grid(inputs_try)

imshow(out, title=[dset_classes[x] for x in labels_try])

2 创建VGG模型

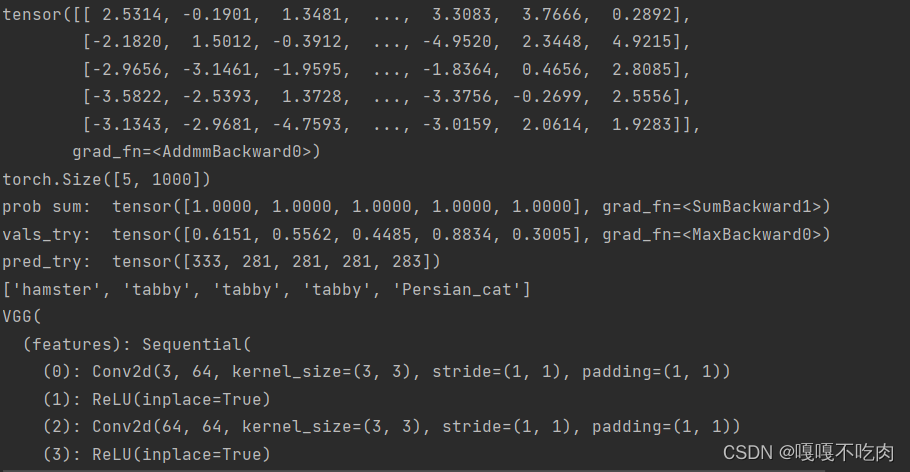

- 在这部分代码中,对输入的5个图片利用VGG模型进行预测,同时,使用softmax对结果进行处理,随后展示了识别结果。

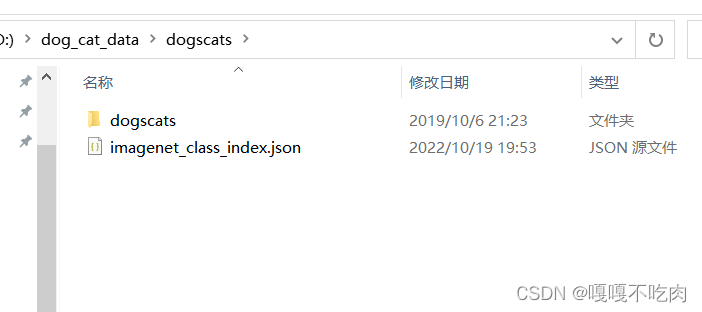

2.1 下载json文件

- 下载地址

- https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json

2.2 创建VGG模型

model_vgg = models.vgg16(pretrained=True)

with open('./imagenet_class_index.json') as f:

class_dict = json.load(f)

dic_imagenet = [class_dict[str(i)][1] for i in range(len(class_dict))]

inputs_try , labels_try = inputs_try.to(device), labels_try.to(device)

model_vgg = model_vgg.to(device)

outputs_try = model_vgg(inputs_try)

print(outputs_try)

print(outputs_try.shape)

'''

可以看到结果为5行,1000列的数据,每一列代表对每一种目标识别的结果。

但是我也可以观察到,结果非常奇葩,有负数,有正数,

为了将VGG网络输出的结果转化为对每一类的预测概率,我们把结果输入到 Softmax 函数

'''

m_softm = nn.Softmax(dim=1)

probs = m_softm(outputs_try)

vals_try,pred_try = torch.max(probs,dim=1)

print( 'prob sum: ', torch.sum(probs,1))

print( 'vals_try: ', vals_try)

print( 'pred_try: ', pred_try)

print([dic_imagenet[i] for i in pred_try.data])

imshow(torchvision.utils.make_grid(inputs_try.data.cpu()),

title=[dset_classes[x] for x in labels_try.data.cpu()])

- 输出如下

3 修改最后一层,冻结前面层的参数

- 为了在训练中冻结前面层的参数,需要设置 required_grad=False。这样,反向传播训练梯度时,前面层的权重就不会自动更新了。训练中,只会更新最后一层的参数。

print(model_vgg)

model_vgg_new = model_vgg;

for param in model_vgg_new.parameters():

param.requires_grad = False

model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 2)

model_vgg_new.classifier._modules['7'] = torch.nn.LogSoftmax(dim = 1)

model_vgg_new = model_vgg_new.to(device)

print(model_vgg_new.classifier)

4 训练并测试全连接层

- 创建损失函数和优化器及模型训练

criterion = nn.NLLLoss()

# 学习率

lr = 0.001

optimizer_vgg = torch.optim.SGD(model_vgg_new.classifier[6].parameters(),lr = lr)

def train_model(model,dataloader,size,epochs=1,optimizer=None):

model.train()

for epoch in range(epochs):

running_loss = 0.0

running_corrects = 0

count = 0

for inputs,classes in dataloader:

inputs = inputs.to(device)

classes = classes.to(device)

outputs = model(inputs)

loss = criterion(outputs,classes)

optimizer = optimizer

optimizer.zero_grad()

loss.backward()

optimizer.step()

_,preds = torch.max(outputs.data,1)

# statistics

running_loss += loss.data.item()

running_corrects += torch.sum(preds == classes.data)

count += len(inputs)

print('Training: No. ', count, ' process ... total: ', size)

epoch_loss = running_loss / size

epoch_acc = running_corrects.data.item() / size

print('Loss: {:.4f} Acc: {:.4f}'.format(

epoch_loss, epoch_acc))

train_model(model_vgg_new,loader_train,size=dset_sizes['train'], epochs=1,

optimizer=optimizer_vgg)

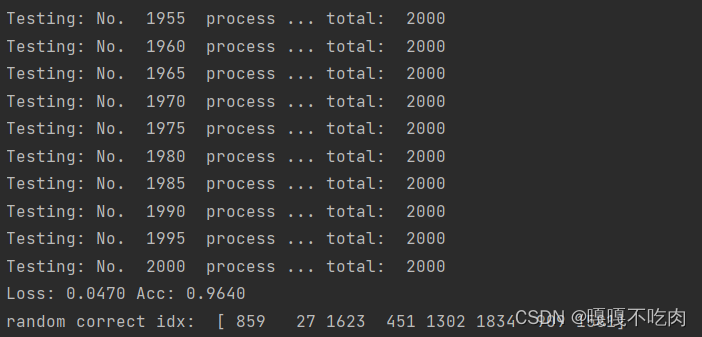

- 测试模型

def test_model(model,dataloader,size):

model.eval()

predictions = np.zeros(size)

all_classes = np.zeros(size)

all_proba = np.zeros((size,2))

i = 0

running_loss = 0.0

running_corrects = 0

for inputs,classes in dataloader:

inputs = inputs.to(device)

classes = classes.to(device)

outputs = model(inputs)

loss = criterion(outputs,classes)

_,preds = torch.max(outputs.data,1)

# statistics

running_loss += loss.data.item()

running_corrects += torch.sum(preds == classes.data)

predictions[i:i+len(classes)] = preds.to('cpu').numpy()

all_classes[i:i+len(classes)] = classes.to('cpu').numpy()

all_proba[i:i+len(classes),:] = outputs.data.to('cpu').numpy()

i += len(classes)

print('Testing: No. ', i, ' process ... total: ', size)

epoch_loss = running_loss / size

epoch_acc = running_corrects.data.item() / size

print('Loss: {:.4f} Acc: {:.4f}'.format(

epoch_loss, epoch_acc))

return predictions, all_proba, all_classes

predictions, all_proba, all_classes = test_model(model_vgg_new,loader_valid,size=dset_sizes['valid'])

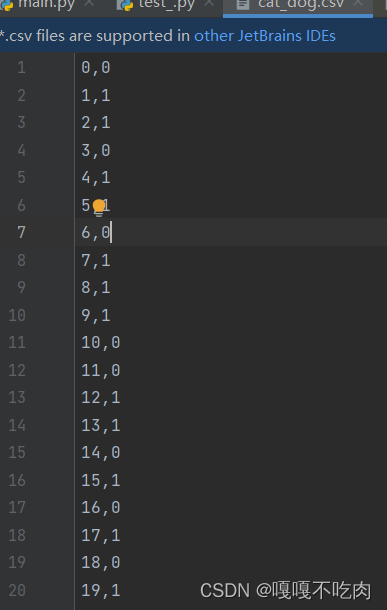

5 用训练好的模型提交csv到比赛页面

5.1 测试代码

dsets = datasets.ImageFolder('D:/dog_cat_data/dogscats/test', vgg_format)

final = {} # 结果数组

loader_test = torch.utils.data.DataLoader(dsets, batch_size=1, shuffle=False, num_workers=0)

model_vgg_new = torch.load("./model/md.pth")

def test(model, dataloader, size):

model.eval() # 参数固定

cnt = 0 # count

for inputs, _ in dataloader:

if cnt < size:

inputs = inputs.to(device)

outputs = model(inputs)

_, preds = torch.max(outputs.data, 1) # 预测值最大化

key = dsets.imgs[cnt][0].split("/")[-1].split('.')[0] # 对目录项进行分割

final[key] = preds[0]

cnt += 1

else:

break;

test(model_vgg_new, loader_test, size=2000)

with open("./cat_dog.csv", 'a+') as f:

for key in range(2000):

f.write("{},{}\n".format(key, final[str(key)]))

5.2 保存在csv中,提交文件

6 心得体会

通过本次实验,完整地体会到了从模型训练到预测数据的全过程,深刻了解cnn模型的构建和使用。卷积神经网络和多层感知机比较类似,不过他相对于多层感知机加入了一些层。这些层由卷积成和池化组成。所以,在CNN中神经网络的层次和结构都是不固定的可用调整的。

580

580

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?